The AI Landscape in March 2026: Everything That Changed in Six Weeks

Three frontier models shipped in six weeks: Claude Opus 4.6 with 80.8% on SWE-Bench Verified and 1M context, Gemini 3.1 Pro at 77.1% on ARC-AGI-2, and GPT-5.4 with native computer use and a 1.05M context window. Microsoft chose Anthropic over OpenAI for Copilot Cowork, agentic AI hit 48% enterprise adoption in telecom, and Cursor background agents ran autonomously for over 25 hours to produce 151,000-line pull requests.

This guide maps every major development, explains what it means for developers and businesses, and gives you concrete actions to take right now. Whether you build AI products, use AI tools, or make purchasing decisions about AI platforms, this is the landscape you need to understand.

Model Velocity: Three Frontier Models in Six Weeks

The pace of frontier model releases in early 2026 has been unprecedented. Three major models launched in rapid succession:

Claude Opus 4.6 (February 5, 2026)

Anthropic fired first with Opus 4.6, its most capable model to date. Key specs:

- 1M token context window (GA as of March 13, 2026) — enough to process entire codebases

- 128K max output tokens: roughly double Gemini 3.1 Pro's 65K

- Agent Teams: multi-agent orchestration that splits complex tasks across specialized agents working in parallel. A multi-agent Claude Code setup built a working C compiler from scratch: 100,000 lines of code that can compile a bootable Linux kernel on three CPU architectures.

- Context compaction: automatic server-side summarization of earlier turns, so conversations can run far longer than the raw context window allows

- Adaptive thinking — the model dynamically decides when and how deeply to reason

- #1 overall on Chatbot Arena, which ranks models by head-to-head user preference votes

- 80.8% on SWE-Bench Verified: the leading score on the standard coding benchmark
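This section doesn't describe how context compaction works internally. As a rough illustration of the general idea only (not Anthropic's implementation), the sketch below folds older turns into a single summary message once a conversation exceeds a token budget. It assumes a ~4-characters-per-token heuristic, and `summarize` is a hypothetical placeholder for a model call.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def estimate_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token for English text.
    return max(1, len(text) // 4)


def summarize(messages: list[Message]) -> str:
    # Hypothetical placeholder; a real system would ask a model for a summary.
    return f"Summary of {len(messages)} earlier messages."


def compact(history: list[Message], budget: int, keep_recent: int = 4) -> list[Message]:
    """Fold older turns into one summary message when history exceeds the token budget."""
    total = sum(estimate_tokens(m.text) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history  # nothing to do: under budget or too short to compact
    older, recent = history[:-keep_recent], history[-keep_recent:]
    return [Message(role="system", text=summarize(older))] + recent
```

A single pass can still leave the history over budget if the recent turns are large; a production loop would repeat the step or shrink `keep_recent`.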

Gemini 3.1 Pro (February 19, 2026)

Google DeepMind released Gemini 3.1 Pro just two weeks after Opus 4.6:

- 77.1% on ARC-AGI-2 — the highest abstract reasoning score, beating Opus (68.8%) and GPT-5.4 (73.3%)

- 1M token context window with streaming function calling

- Thinking level parameter — control how much internal reasoning the model performs

- Multimodal function responses — function returns can include images and PDFs

- More than double the reasoning performance of its predecessor, Gemini 3 Pro, on ARC-AGI-2

GPT-5.4 (March 5, 2026)

OpenAI completed the trifecta with GPT-5.4:

- 1.05M token context window — the largest of the three

- Native computer use — first general-purpose model with state-of-the-art ability to operate desktops, browsers, and applications

- Tool Search — a novel system that reduces token costs for tool-heavy workflows by loading tool definitions on-demand

- 57.7% on SWE-Bench Pro — leading on the harder coding benchmark variant

- Native image generation and full-resolution image processing

- Excel/Google Sheets plugins for direct spreadsheet manipulation via API

- Three variants: standard, Pro (for subscribers), and Thinking (extended reasoning)
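The list above doesn't detail how Tool Search decides which definitions to load, so what follows is only a conceptual sketch of on-demand tool loading, not OpenAI's API. It assumes a hypothetical in-process registry and simple keyword overlap; a real system would more likely use embeddings or let the model query the registry itself. The point is that only matching tool definitions travel with a request, so prompts stay small even when hundreds of tools exist.

```python
from dataclasses import dataclass, field


@dataclass
class ToolDef:
    name: str
    description: str
    keywords: set[str] = field(default_factory=set)


# Hypothetical registry; in practice this could hold hundreds of definitions.
REGISTRY = [
    ToolDef("get_weather", "Look up a weather forecast", {"weather", "forecast"}),
    ToolDef("query_sales_db", "Run a read-only SQL query on sales data", {"sales", "revenue", "sql"}),
    ToolDef("send_email", "Send an email to a contact", {"email", "send"}),
]


def select_tools(request: str, registry: list[ToolDef], limit: int = 2) -> list[ToolDef]:
    """Return only the tool definitions whose keywords overlap the request."""
    words = set(request.lower().split())
    scored = [(len(tool.keywords & words), tool) for tool in registry]
    # Stable sort by descending score; ties keep registry order.
    ranked = sorted(scored, key=lambda pair: -pair[0])
    return [tool for score, tool in ranked if score > 0][:limit]
```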

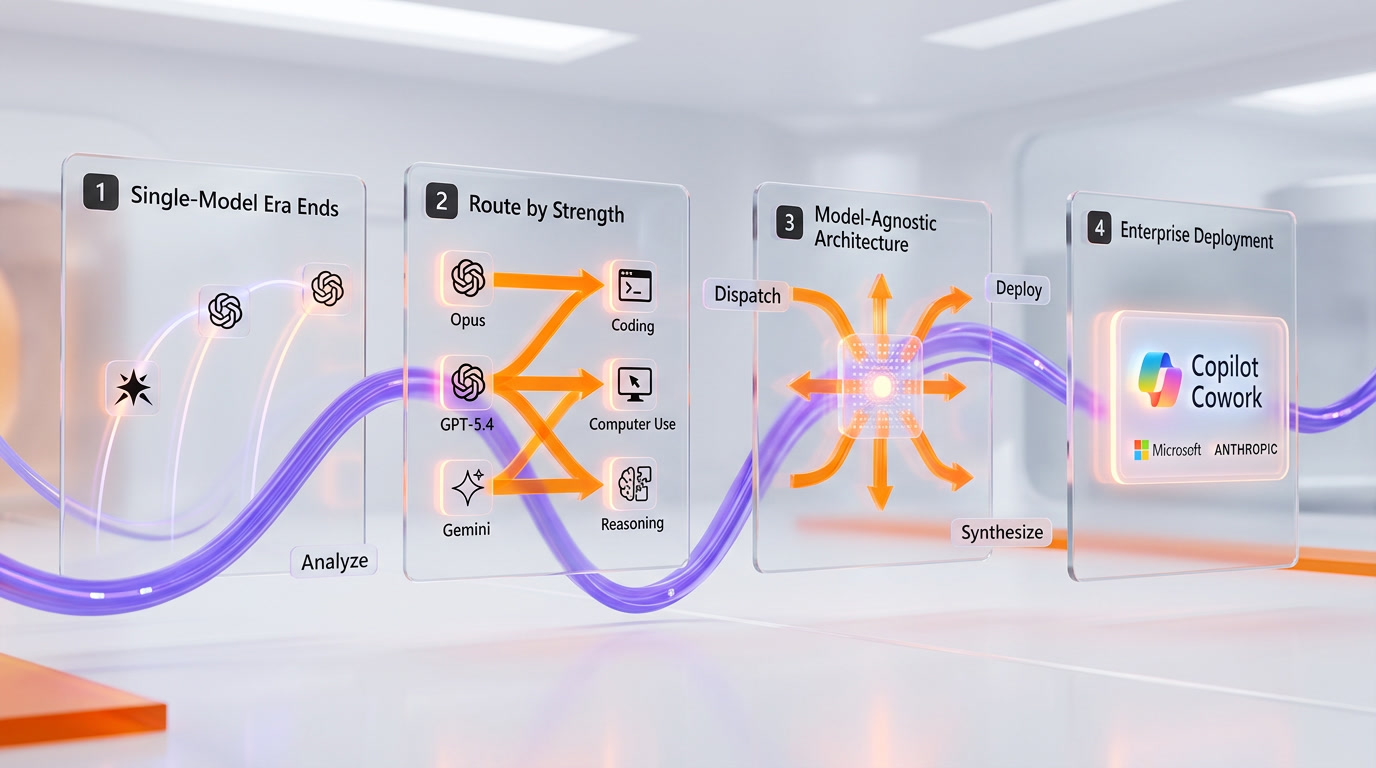

What this means: The "one best model" era is over. Each frontier model has distinct strengths — Opus for coding and agents, Gemini for reasoning, GPT-5.4 for breadth and computer use. Developers and businesses now need multi-model strategies, not single-model bets.

Multi-Model Strategies Go Mainstream

The most striking validation of the multi-model approach came on March 9, 2026, when Microsoft announced Copilot Cowork, powered by Anthropic's Claude rather than OpenAI's GPT. This is Microsoft, the company that has invested over $13 billion in OpenAI, choosing a competitor's model for its most ambitious AI product.

Copilot Cowork uses Anthropic's Claude model as its reasoning engine, built on the same "agentic harness" as Anthropic's Claude Cowork technology. It handles long-running, multi-step workflows: preparing for a customer meeting by building the presentation, assembling financials, emailing the team, and coordinating prep time — all while keeping the user informed and in control.

This isn't an anomaly. It's a pattern:

- Microsoft now uses both OpenAI and Anthropic models across Copilot products

- Amazon (AWS) offers Claude, Llama, Mistral, and others through Bedrock alongside its own models

- Google Cloud provides Gemini natively but also hosts Claude and others on Vertex AI

- Enterprise customers increasingly route different task types to different models based on cost and capability

The takeaway: Building on a single AI provider is now a strategic risk. The winning approach is model-agnostic architecture with routing logic that sends each task to the best model for that job. Anthropic for complex coding. OpenAI for multimodal and computer use. Google for reasoning. Open-source for cost-sensitive, high-volume tasks.
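A minimal sketch of that routing logic, assuming tasks arrive already labeled with a type. The model identifiers are hypothetical placeholders, not official model IDs; check each provider's documentation for the real strings. Unknown task types fall back to a cheaper default, matching the open-source tier above.

```python
# Hypothetical model identifiers; substitute the exact IDs from each provider's docs.
ROUTES: dict[str, str] = {
    "coding": "anthropic/claude-opus-4.6",
    "multimodal": "openai/gpt-5.4",
    "computer_use": "openai/gpt-5.4",
    "reasoning": "google/gemini-3.1-pro",
}

# Cheap default for high-volume or unclassified work (assumed open-source model).
DEFAULT_MODEL = "open-source/default"


def route(task_type: str) -> str:
    """Pick a model for a task type, falling back to the cheap default."""
    return ROUTES.get(task_type, DEFAULT_MODEL)
```

Keeping the routing table as data rather than branching code means adopting a new model release is a one-line change.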

Agentic AI Goes Mainstream

2025 was the year of "AI agents" as a buzzword. 2026 is the year they actually work in production.

Enterprise Agent Adoption

According to industry data, agentic AI adoption is highest in telecommunications at 48%, followed by retail and CPG at 47%. Enterprises have moved from experimentation to full-fledged deployments in early 2026. Google's AI Agent Trends 2026 report confirms that agents are no longer pilot projects — they are production systems handling real business workflows.

Developer Tool Agents

The AI IDE space has been transformed by agentic capabilities:

- Cursor's Background Agents run on cloud servers for 25-52+ hours, producing pull requests with 151,000+ lines of code. Developers can spin up an agent, close their laptop, and return to a completed PR.

- Windsurf's Cascade acts autonomously: reading files, making changes, and asking for confirmation only on ambiguous cases. Windsurf's proprietary SWE-1.5 model runs at up to 950 tokens per second (13x faster than Claude Sonnet 4.5, by the company's own figures).

- Claude's Agent Teams split large tasks across multiple specialized agents working in parallel, so projects that would take a single agent days can be finished in hours.

Microsoft's Agentic Office Suite

Microsoft 365 Copilot is now "agentic" in Word, Excel, PowerPoint, and Outlook. These aren't just AI suggestions — the agents take multi-step, app-native actions directly within documents: modeling scenarios in Excel with native formulas and charts, restructuring reports in Word, building presentations in PowerPoint. The new E7 license ($99 per user/month) bundles all of these capabilities with Agent 365 for governance.

Apple's Siri Reboot

Apple announced a completely reimagined Siri, but the rollout has faced significant delays. Key features have been pushed to iOS 26.5 (expected May 2026) and iOS 27 (expected September 2026), rather than the originally planned March 2026 launch with iOS 26.4. The new Siri will eventually feature "on-screen awareness" and seamless cross-app integration: a context-aware agent that understands what you're looking at and what you're trying to do. If Apple executes on the revised timeline, this puts an AI agent on every supported iPhone.

The Vibe Coding Revolution

The term "vibe coding" — building applications by describing what you want in natural language rather than writing code — went from niche to mainstream in early 2026. The numbers tell the story:

- Lovable reached $400M+ ARR as of March 2026, having hit $100M in its first 8 months — potentially the fastest-growing startup in history. It generates complete full-stack applications (frontend, backend, database, auth, deployment) from plain English descriptions.

- After launching its Agent, which builds and deploys apps from natural language, Replit saw revenue jump from $10M to $100M in just 5-6 months, and it is now approaching $250M ARR.

- Bolt launched v2 in early 2026, declaring "vibe coding goes pro" with enterprise-grade infrastructure directly in the browser.

- Vercel v0 added Git integration, a full VS Code-style editor, and three AI model tiers (Mini, Pro, Max) in a February 2026 platform overhaul.

What's different in 2026: Vibe coding tools have evolved past the "generate a landing page" phase. Lovable now includes Agent Mode with autonomous debugging and web search. Bolt v2 targets enterprise use cases. Replit's Agent can build, test, and deploy complete applications. These are no longer toys — they're production tools.

The impact on developers: Code is no longer the unit of value — clarity is. The ability to precisely describe what you want is becoming more important than the ability to type it in Python. This doesn't eliminate developer jobs (complex systems still need human engineering), but it fundamentally changes the skill mix. Prompt engineering, system architecture, and quality assurance are becoming more valuable relative to raw coding speed.

AI Video: Seedance 2.0 vs Hollywood

ByteDance launched Seedance 2.0 in early February 2026, and it sent shockwaves through the entertainment industry. This isn't just another AI video generator: it is designed to treat video as a complete audiovisual medium from the start, generating sound and picture together.

What Makes Seedance 2.0 Different

- Dual-Branch Diffusion Transformer architecture generates audio and video simultaneously — no separate audio step needed

- Cinema-grade video with synchronized audio, multi-shot storytelling, and phoneme-level lip-sync in 8+ languages

- Early beta users described it as matching or exceeding OpenAI's Sora 2 and Kuaishou's Kling 3.0 on motion realism

- Better accessibility and potentially lower cost than Western competitors

The Copyright Storm

Disney accused ByteDance of illegally using its IP to train Seedance 2.0. The irony: Disney had already struck a deal with OpenAI to give Sora access to trademarked characters like Mickey and Minnie Mouse. Hollywood is simultaneously fighting AI video and partnering with it, leaving the industry in a state of strategic contradiction.

CNN described Seedance 2.0 as so good it "spooked Hollywood" — raising questions about whether China's AI sector will accelerate or self-regulate given the geopolitical implications.

AI Infrastructure: The $10 Billion Cerebras-OpenAI Deal

On January 14, 2026, OpenAI signed a multiyear deal with Cerebras Systems for over $10 billion in computing infrastructure. The details:

- 750 megawatts of computing power — enough to power a small city

- Infrastructure built in multiple stages through 2028, with capacity rolling out starting 2026

- Focused on inference speed — getting faster responses for OpenAI's 900+ million weekly users

- Part of OpenAI's strategy to reduce dependence on NVIDIA while securing sufficient compute

For Cerebras, the deal is transformative — the chip designer was previously dependent on UAE-based G42 for 87% of its revenue. For the AI industry, it signals that the infrastructure race is as important as the model race. The companies with the cheapest, fastest inference infrastructure will win on margins as AI becomes commoditized at the model level.

Why This Matters for Developers

Inference costs drive API pricing. If OpenAI dramatically reduces inference costs through Cerebras's wafer-scale chips, API prices could drop, making AI-powered features cheaper to build and run. Even before that capacity comes online, GPT-5.4's API ($2.50/$15.00 per million input/output tokens, standard context) already costs half as much as Claude Opus 4.6 ($5/$25) on input and 40% less on output. Cheaper inference could widen that gap or push Anthropic toward similar infrastructure partnerships.
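To see what the quoted prices mean for a single request, here is a small calculator that uses only the standard-context, per-million-token prices given in this section. It ignores long-context surcharges, prompt-caching discounts, and batch pricing, any of which can change the comparison.

```python
# USD per million tokens (input, output), standard context, as quoted in this section.
PRICES: dict[str, tuple[float, float]] = {
    "gpt-5.4": (2.50, 15.00),
    "claude-opus-4.6": (5.00, 25.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one request at per-million-token prices."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000
```

For a request with 10,000 input and 2,000 output tokens, this gives $0.055 on GPT-5.4 versus $0.10 on Claude Opus 4.6, roughly 1.8x.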

The Workforce Shift: AI Replacing Middle Management

The human impact of AI acceleration became concrete when Amazon announced layoffs of approximately 16,000 corporate employees in January 2026. Amazon's CEO noted the cuts were "not entirely AI-driven," though the restructuring aligned with broader AI integration efforts. The cuts primarily targeted middle management and administrative roles that have become redundant as the company integrates more sophisticated AI systems.

This is the pattern emerging across industries:

- AI doesn't replace frontline workers or senior executives — it replaces the coordination and information-routing layer in between

- Four major advertising agencies are using Anthropic's Claude to automate SEO audits, creative briefs, and campaign analysis — work previously done by junior to mid-level staff

- Google expanded "AI Mode" in Search (March 6), allowing users to draft documents, generate code, and build tools directly in the search interface, which could reduce the need for some specialized knowledge work

Enterprises are restructuring around automation. Agentic AI doesn't just assist workers — it replaces entire workflows. The professionals who thrive will be those who manage AI agents, not those who do the work AI agents can handle.

Edge AI: The Quiet Revolution

While frontier models get the headlines, edge AI — running AI models directly on devices — is increasingly important. Processing happens locally, reducing latency, improving privacy, and allowing instant decisions without cloud connectivity. Apple's new Siri, on-device translation, and local photo analysis are all edge AI applications that will reach billions of users.

What Developers Should Do RIGHT NOW

Based on everything that happened in the first quarter of 2026, here are concrete actions:

1. Build Model-Agnostic Architectures

If Microsoft is using both OpenAI and Anthropic, you should too. Abstract your AI layer so you can swap models without rewriting your application. Use routing logic to send coding tasks to Claude, multimodal tasks to GPT-5.4, and reasoning tasks to Gemini. The tools exist: LiteLLM, OpenRouter, and most cloud providers support multiple models.
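One way to abstract the AI layer is a small interface that every provider adapter implements, plus a fallback chain so an outage at one vendor doesn't take the feature down. This is a sketch under stated assumptions: `ChatModel`, `EchoModel`, and `ProviderError` are hypothetical names, and `EchoModel` stands in for a real adapter that would wrap a vendor SDK or a gateway such as LiteLLM or OpenRouter.

```python
from typing import Protocol


class ProviderError(Exception):
    """Raised by an adapter when its provider call fails."""


class ChatModel(Protocol):
    name: str

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    # Hypothetical stand-in adapter; a real one would call a provider's API here.
    def __init__(self, name: str, fail: bool = False) -> None:
        self.name = name
        self.fail = fail

    def complete(self, prompt: str) -> str:
        if self.fail:
            raise ProviderError(f"{self.name} unavailable")
        return f"[{self.name}] {prompt}"


def complete_with_fallback(models: list[ChatModel], prompt: str) -> str:
    """Try each model in order and return the first successful completion."""
    errors: list[str] = []
    for model in models:
        try:
            return model.complete(prompt)
        except ProviderError as exc:
            errors.append(str(exc))
    raise ProviderError("all providers failed: " + "; ".join(errors))
```

Application code depends only on `ChatModel`, so swapping or reordering providers changes configuration, not call sites.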

2. Adopt an Agentic IDE

If you're still using vanilla VS Code or JetBrains without AI agent capabilities, you may be leaving 30-50% productivity on the table. Cursor and Windsurf are the two leading agentic IDEs: Cursor ($20 per month) for complex enterprise work, Windsurf ($15 per month) for speed-first workflows. Claude Code covers terminal-native development. Pick one and commit to learning its agent system deeply.

3. Experiment with Vibe Coding for Prototyping

Even if you're a senior developer, Lovable or Bolt can generate a working prototype in minutes that would take days to build manually. Use them for MVPs, internal tools, and proof-of-concepts. When the prototype validates the idea, rebuild the critical parts with proper engineering. This is not replacing your skills — it's accelerating your most repetitive work.

4. Invest in Prompt Engineering and System Design

As vibe coding tools mature, the ability to clearly describe what you want becomes more valuable than the ability to implement it. Practice writing detailed specifications, breaking complex systems into components, and reviewing AI-generated code for correctness. The developers who ship fastest will be the ones who communicate most clearly with AI.

5. Prepare for AI Agent Governance

Microsoft's Agent 365 and enterprise compliance tools like Purview integration signal that AI agent governance is becoming a real business requirement. If you're building products that use AI agents, start thinking about audit trails, approval workflows, and compliance now — before regulations require it.
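As a starting point for audit trails and approval workflows, the sketch below gates sensitive agent actions behind a human approver and records every decision in a log that serializes to JSON Lines. The action names, the `REQUIRES_APPROVAL` policy, and the `approver` callback are hypothetical; this is not modeled on Agent 365 or Purview, whose APIs aren't covered here.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Callable

# Hypothetical policy: actions that need human sign-off before an agent may run them.
REQUIRES_APPROVAL = {"send_email", "delete_file", "make_payment"}


@dataclass
class AuditEntry:
    agent: str
    action: str
    args: dict
    approved: bool
    timestamp: str


@dataclass
class AuditLog:
    entries: list[AuditEntry] = field(default_factory=list)

    def record(self, entry: AuditEntry) -> None:
        # The log only appends; nothing here edits or deletes entries.
        self.entries.append(entry)

    def to_jsonl(self) -> str:
        return "\n".join(json.dumps(asdict(e)) for e in self.entries)


def authorize_action(
    agent: str,
    action: str,
    args: dict,
    approver: Callable[[str, dict], bool],
    log: AuditLog,
) -> bool:
    """Decide whether an agent action may proceed, and log the decision either way."""
    approved = action not in REQUIRES_APPROVAL or approver(action, args)
    timestamp = datetime.now(timezone.utc).isoformat()
    log.record(AuditEntry(agent, action, args, approved, timestamp))
    return approved
```

Logging denials as well as approvals matters for compliance: reviewers usually want to see what an agent tried to do, not just what it did.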

6. Watch the Infrastructure Layer

The Cerebras-OpenAI deal suggests inference costs could drop significantly by 2027-2028. If you're building AI-powered products, design for a possible future where API calls are 5-10x cheaper than today. Features that are too expensive to run at scale today may become viable within 18 months.
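A quick way to apply this advice is to ask how much cheaper calls would need to get before a shelved feature fits its budget. The sketch assumes a flat per-call cost and treats the 5-10x figure as a speculative scenario, not a forecast.

```python
def monthly_cost(cost_per_call: float, calls_per_month: int, reduction: float = 1.0) -> float:
    """Monthly spend if per-call prices fall by `reduction` (1.0 means today's prices)."""
    return cost_per_call * calls_per_month / reduction


def min_reduction_needed(cost_per_call: float, calls_per_month: int, budget: float) -> float:
    """Smallest price-reduction factor that brings monthly spend within budget."""
    return max(1.0, monthly_cost(cost_per_call, calls_per_month) / budget)
```

For example, a feature costing $0.05 per call at 1 million calls per month ($50,000) against an $8,000 budget needs a 6.25x reduction, inside the 5-10x range.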

7. Learn Multi-Agent Orchestration

Claude's Agent Teams, Cursor's subagent systems, and Copilot Cowork all point in the same direction: the future is not one AI doing everything, but multiple specialized AI agents coordinating on complex tasks. Learn how to design agent architectures, define agent responsibilities, and handle inter-agent communication.
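The shared pattern behind these products can be sketched as a coordinator that splits a goal into role-specific subtasks, runs specialist agents concurrently, and collects their results by role. This is a generic fan-out/fan-in sketch with `asyncio`, not how Agent Teams, Cursor, or Copilot Cowork are actually implemented; `plan` and `specialist` are hypothetical stand-ins for a planner agent and model calls.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Subtask:
    role: str  # which specialist handles it, e.g. "backend" or "tests"
    spec: str


def plan(goal: str) -> list[Subtask]:
    # Hypothetical fixed decomposition; a planner agent could produce this instead.
    return [
        Subtask("backend", f"API for {goal}"),
        Subtask("frontend", f"UI for {goal}"),
        Subtask("tests", f"test suite for {goal}"),
    ]


async def specialist(subtask: Subtask) -> str:
    # Hypothetical agent; a real one would call a model with a role-specific prompt.
    await asyncio.sleep(0)  # stand-in for a network call
    return f"{subtask.role}: done ({subtask.spec})"


async def orchestrate(goal: str) -> dict[str, str]:
    """Fan subtasks out to specialists concurrently, then gather results by role."""
    subtasks = plan(goal)
    results = await asyncio.gather(*(specialist(s) for s in subtasks))
    return {s.role: r for s, r in zip(subtasks, results)}
```

`asyncio.gather` returns results in the same order as its inputs, which is what lets the coordinator zip them back onto their roles. Real systems also need a review or merge step when specialists' outputs conflict.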

Frequently Asked Questions

What is the best AI model in March 2026?

There is no single best model. Claude Opus 4.6 leads on coding (SWE-Bench Verified: 80.8%) and user preference (Chatbot Arena #1). GPT-5.4 leads on breadth, computer use, and the harder SWE-Bench Pro coding benchmark (57.7%). Gemini 3.1 Pro leads on abstract reasoning (ARC-AGI-2: 77.1%). The best model depends on your specific use case.

Is AI replacing software developers?

No, but it's changing what developers do. Routine coding is being automated by tools like Cursor, Windsurf, and Claude Code. Developers are shifting toward architecture, system design, AI agent management, code review, and prompt engineering. Total developer demand may keep growing as AI makes it possible to build more software, but the skill requirements are changing.

What is vibe coding?

Vibe coding is building applications by describing what you want in natural language rather than writing code manually. Platforms like Lovable, Bolt, and Replit Agent generate complete applications — frontend, backend, database, and deployment — from plain English descriptions. Lovable reached $400M+ ARR as of March 2026, hitting $100M in its first 8 months.

Should I use ChatGPT or Claude?

Both, ideally. ChatGPT (GPT-5.4) excels at multimodal tasks, computer use, and breadth. Claude (Opus 4.6) excels at coding, long-form output, agent orchestration, and writing quality. Use ChatGPT as your general-purpose assistant and Claude for coding and complex analytical work. Both cost $20 per month for standard subscriptions.

What is Microsoft Copilot Cowork?

Copilot Cowork is Microsoft's new agentic AI product, announced March 9, 2026, built in partnership with Anthropic (not OpenAI). It handles multi-step business workflows — preparing meetings, building presentations, coordinating teams — using Claude's reasoning capabilities within the Microsoft 365 ecosystem. It's available as a research preview through the Frontier program.

How much does AI cost for businesses?

Consumer AI subscriptions range from $0-$200 per month. Microsoft 365 E7 (including Copilot, Agent 365, and Entra Suite) costs $99 per user/month. AI IDEs cost $15-60/user/month. API costs vary: GPT-5.4 at $2.50/$15.00 per million tokens (standard context), Claude Opus 4.6 at $5/$25. For a 50-person team using Microsoft 365 E7 plus Cursor, budget approximately $6,000-7,000/month.
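The 50-person estimate can be reproduced with simple seat arithmetic. The sketch uses the $99 E7 price and Cursor's $20 tier from this article; the $40 figure in the usage note is an assumed higher team tier, within the $15-60 IDE range quoted above.

```python
E7_PER_USER = 99.0  # Microsoft 365 E7, USD per user/month, as quoted above


def team_budget(seats: int, ide_per_user: float, e7_per_user: float = E7_PER_USER) -> float:
    """Monthly seat cost: one E7 license plus one AI IDE seat per person."""
    return seats * (e7_per_user + ide_per_user)
```

`team_budget(50, 20)` gives $5,950 and `team_budget(50, 40)` gives $6,950, which brackets the approximately $6,000-7,000/month estimate. API usage is billed on top of this.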

What is the Cerebras-OpenAI deal?

OpenAI signed a $10+ billion multiyear deal with Cerebras Systems for 750 megawatts of computing power, rolling out through 2028. It's focused on inference speed for OpenAI's 900+ million weekly users and reduces OpenAI's dependence on NVIDIA chips. This deal will likely lead to lower API costs for developers by 2027-2028.

What should I learn in 2026 to stay relevant?

Multi-model architecture design, prompt engineering, AI agent orchestration, system architecture, and code review for AI-generated output. The most valuable developers in 2026 are not the fastest coders — they're the clearest thinkers who can break complex problems into agent-solvable components and verify the results.