Here's a question that would have sounded absurd three years ago: when someone asks Claude or ChatGPT "what's the best project management tool for remote teams," does your product show up in the answer?

If you don't know (or worse, if you know the answer is no), you have a problem. In 2026, a significant and growing share of product research, technical queries, and buying decisions bypasses Google entirely. They happen inside AI assistants, and the rules for showing up there are fundamentally different from the rules you learned in SEO 101.

Traditional SEO isn't dead, but it has evolved. Two new disciplines, GEO (Generative Engine Optimization) and AEO (Answer Engine Optimization), have emerged alongside it. Understanding all three, and how they interact, is now table stakes for anyone serious about organic visibility.

We rebuilt ThePlanetTools.ai's entire content strategy around these principles. This guide is everything we learned — the frameworks, the tools, the technical implementation, and the real results.

Traditional SEO Is Not Dead — It Evolved

SEO in 2026 demands three disciplines: traditional search optimization, Generative Engine Optimization for AI citation via structured 40-word answer blocks, and Answer Engine Optimization prioritizing factual density over keyword density. Visibility now depends on llms.txt, prompt-matched FAQ schema, and E-E-A-T signals across both Google and AI answer engines like ChatGPT, Claude, and Perplexity.

What has changed is the nature of what Google rewards. The algorithm updates of 2024 and 2025 aggressively targeted AI-generated content farms, thin affiliate sites, and SEO-optimized-but-unhelpful content. Google's Helpful Content system got teeth, and a lot of sites that had been gaming the system got decimated.

What Google rewards in 2026 is actually simpler than what it rewarded five years ago: genuinely useful content from people with real experience. The keyword-stuffing, backlink-farming, content-spinning playbook is dead. What works is being genuinely good at something and communicating that expertise clearly.

That said, the share of searches that result in a click to a website has declined. Google's own AI Overviews, featured snippets, and knowledge panels answer many queries directly in the search results. And a growing number of users never even reach Google — they ask ChatGPT, Claude, or Perplexity instead.

This is where GEO and AEO come in.

GEO: Generative Engine Optimization

Generative Engine Optimization is the practice of structuring your digital content and managing your online presence to improve visibility in responses generated by AI systems. When someone asks ChatGPT, Claude, Perplexity, or Google Gemini a question and the AI cites your brand, product, or content in its answer — that's a GEO win.

GEO is broader than just "get mentioned by ChatGPT." It encompasses:

- AI visibility monitoring — tracking when and how AI models mention your brand across different platforms

- Cross-platform citation management — ensuring consistent, accurate information about your brand across all sources that LLMs crawl

- Brand entity optimization — making sure your brand is understood as a distinct entity with clear attributes, products, and relationships

- Content structuring for RAG — formatting your content so that retrieval-augmented generation (RAG) systems can effectively extract and cite it

How LLMs Find and Use Your Content

Understanding the technical pipeline is crucial. Most AI assistants use some form of RAG (Retrieval-Augmented Generation) when answering factual questions. The process works like this:

1. The user asks a question

2. The system searches its knowledge base and/or the web for relevant documents

3. Retrieved documents are ranked by relevance

4. The LLM synthesizes an answer using the retrieved context

5. The answer may include citations to source material

Your goal with GEO is to be in the documents that get retrieved in step 2, ranked highly in step 3, and cited in step 5.
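The retrieve, rank, and cite steps can be sketched as a toy pipeline. This is a minimal illustration, not how any particular assistant works: scoring is plain word overlap (production systems typically rely on a search index or embeddings), the synthesis step is stubbed out, and the names `Doc`, `retrieve`, and `answer_with_citations`, along with the example.com URLs, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    url: str
    text: str


def tokenize(text: str) -> set[str]:
    # Lowercase and strip basic punctuation; real systems use far richer matching.
    return {w.strip(".,?!:;\"'()").lower() for w in text.split()} - {""}


def retrieve(question: str, corpus: list[Doc], top_k: int = 2) -> list[Doc]:
    # Search and rank: score every document against the question, keep the best ones.
    q = tokenize(question)
    scored = [(len(q & tokenize(d.text)), d) for d in corpus]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [d for _, d in ranked[:top_k]]


def answer_with_citations(question: str, corpus: list[Doc]) -> dict:
    # Synthesize and cite: a real system would hand the retrieved text to an LLM here.
    # This stub only reports which sources would be available to cite.
    docs = retrieve(question, corpus)
    return {"question": question, "citations": [d.url for d in docs]}


corpus = [
    Doc("https://example.com/pm-tools",
        "The best project management tool for remote teams depends on team size."),
    Doc("https://example.com/crm", "A CRM helps sales teams track deals."),
    Doc("https://example.com/recipes", "Slow-cooked stew with root vegetables."),
]
result = answer_with_citations(
    "What is the best project management tool for remote teams?", corpus
)
print(result["citations"])
```

In this toy run the project management page ranks first because it shares the most words with the question, the CRM page trails on a single shared word, and the recipe page is never retrieved. Content that never makes it into the retrieved set cannot be cited, however good it is.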

Practical GEO Strategies

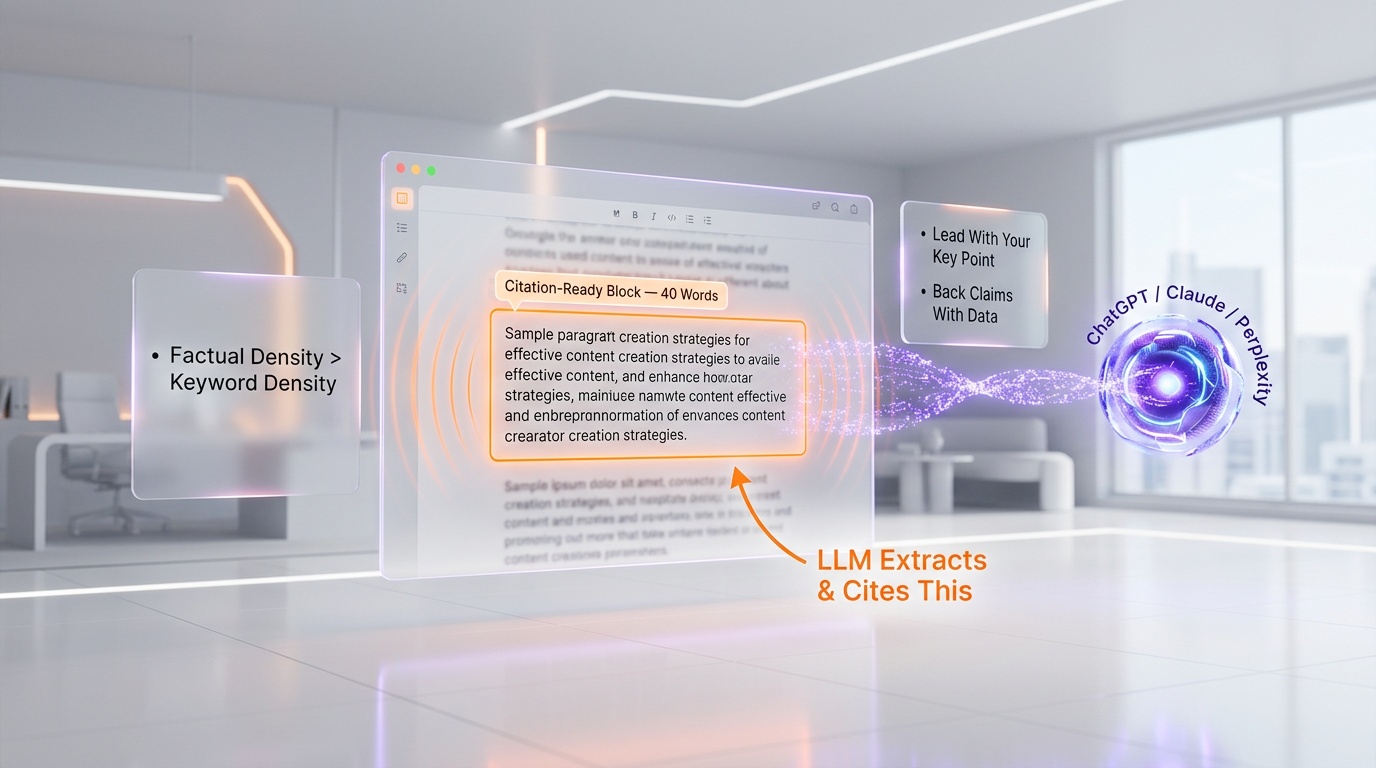

1. Structure content around clear, answerable claims. LLMs extract information most effectively from content that makes clear, factual statements. Instead of burying your key point in paragraph 7 of a 3,000-word post, lead with it. Use the "inverted pyramid" structure from journalism: most important information first.

2. Include data-backed claims with source attribution. LLMs are trained to favor factual, verifiable information. When you cite statistics, link to sources. When you make claims, back them up with evidence. "Our tool reduced deployment time by 40% across 50 enterprise clients" is more likely to be cited than "our tool is really fast."

3. Use the 40-word answer block technique. Research shows that concise answer blocks of approximately 40 words are optimal for AI extraction. After your detailed explanation, include a clear, concise summary that an LLM can directly quote. Think of these as "citation-ready" paragraphs.

4. Maintain cross-platform consistency. If your product description differs between your website, your GitHub README, your Product Hunt listing, and your LinkedIn page, LLMs get confused. Ensure your core messaging is consistent across all platforms that AI systems crawl.

5. Build a strong entity presence. LLMs understand entities — brands, products, people. Make sure your brand has a clear entity presence: a Wikipedia page (if notable enough), Wikidata entry, Crunchbase profile, consistent structured data across your web properties. The more clearly defined your entity is, the more confidently an LLM can reference it.
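Strategy 3 is easy to check mechanically. Here is a minimal sketch of a word-count check for answer blocks. The 30 to 50 word window around the 40-word target is an assumption of this sketch, not a published standard, and `is_citation_ready` is a hypothetical helper name.

```python
def word_count(text: str) -> int:
    # Whitespace-separated tokens are a good enough proxy for words here.
    return len(text.split())


def is_citation_ready(block: str, low: int = 30, high: int = 50) -> bool:
    # Assumed window around the ~40-word target; tune it to your own editorial rule.
    return low <= word_count(block) <= high


block = (
    "Generative Engine Optimization is the practice of structuring your digital content "
    "and managing your online presence to improve visibility in responses generated by AI systems. "
    "When an assistant such as ChatGPT or Perplexity cites your brand in its answer, that is a GEO win."
)
print(word_count(block), is_citation_ready(block))
```

A check like this could run in a content linter or CMS hook so that every key section ships with at least one block in range.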

AEO: Answer Engine Optimization

Answer Engine Optimization is a subset of GEO focused specifically on optimizing for AI-powered answer engines — ChatGPT, Google Gemini, Microsoft Copilot, Perplexity, and Google's AI Overviews. While GEO is the broader discipline, AEO is the tactical execution.

The key insight: AEO values factual density over keyword density, structured answer blocks over page-level relevance, and content freshness over historical domain signals. This is a fundamentally different optimization philosophy from traditional SEO.

The Most Effective AEO Techniques in 2026

Question-first content structure. Align your content with real AI prompts, not just search keywords. People don't ask ChatGPT "best CRM software" — they ask "I'm running a 15-person sales team selling B2B SaaS. What CRM should I use and why?" Structure your content to answer these specific, context-rich questions.

FAQ schema deployment with prompt-matched questions. Deploy FAQ structured data where the questions match actual prompts people use with AI assistants. Research these prompts using tools like Perplexity (which shows related questions) and analyze "People Also Ask" patterns in Google.

Concise answer blocks following the 40-word rule. For every key question your content addresses, include a clean 30-50 word answer block that an AI can extract directly. Place these near the top of the relevant section.

Entity-centric knowledge graphs. Use JSON-LD structured data to explicitly define entities, their properties, and their relationships. This helps LLMs understand what your content is about and how different concepts relate to each other.

Multi-format answer coverage. Cover the same information in multiple formats: text, tables, lists, and structured data. Different AI systems prefer different formats, and multi-format coverage maximizes your chances of being extracted.

Data-backed claims with source attribution. Every significant claim should include a specific data point and a source. "According to [source], [specific metric] changed by [specific amount] in [specific timeframe]" is the pattern that gets cited.

Answer freshness protocols. AI systems favor fresh content. Establish a regular update cadence for your key content. Date your articles prominently. Update statistics and examples regularly. Stale content gets dropped from AI responses.

Cross-platform answer consistency. Ensure your answers are consistent across every platform where your brand appears. Inconsistency confuses RAG systems and reduces citation confidence.
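As a rough illustration of the FAQ schema technique, the sketch below builds schema.org `FAQPage` markup from question-and-answer pairs. The helper name `faq_jsonld` is hypothetical, and the sample pair is condensed from this guide's own FAQ. In practice the questions would come from your prompt research.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    # Build schema.org FAQPage markup from (question, answer) pairs.
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    (
        "What is llms.txt and should I implement it?",
        "llms.txt is a proposed standard for a Markdown file at your site's root "
        "that helps LLMs navigate your content.",
    ),
])
print(markup)
```

Each answer text should be able to stand alone, since an answer engine may lift it without the surrounding page.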

The llms.txt Specification

Proposed in 2024 by Jeremy Howard of Answer.AI, llms.txt is an emerging standard that serves as a machine-readable map of your site's most important content. Think of it as a sitemap, but designed for LLMs instead of search engine crawlers.

How It Works

You place a plain-text file at /llms.txt in your website's root directory. Unlike sitemaps (which use XML), llms.txt uses Markdown formatting. The file contains:

- An H1 heading with your project or site name (required)

- A blockquote with a short summary containing key information

- Organized links to your most important pages with descriptions

- Key context that an LLM would need to accurately represent your brand

Should You Implement It?

As of early 2026, adoption is growing steadily. Several major documentation platforms have added llms.txt support, and static site generators are beginning to include it as a build option. However, none of the major LLM companies (OpenAI, Google, or Anthropic) has officially confirmed that its crawlers follow these files.

Our recommendation: implement it anyway. It takes 30 minutes to create, costs nothing to maintain, and if LLM crawlers do start respecting it (which is likely as the standard matures), you'll be ahead of the curve. At ThePlanetTools.ai, we maintain an llms.txt that describes our tool categories, our editorial approach, and our most important content pages.

Implementation Example

Your llms.txt should clearly state what your site does, what your primary content categories are, and link to your most important pages with brief, factual descriptions. Keep it focused and accurate — this is information that will be fed directly to AI models as context.
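To make this concrete, here is a minimal sketch that renders an llms.txt file in the layout described above: an H1 with the site name, a blockquote summary, then sections of links with descriptions. The site name, URLs, and descriptions are placeholders, and `build_llms_txt` is a hypothetical helper, not part of any official tooling.

```python
def build_llms_txt(
    name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    # H1 with the site name (required), then a blockquote summary.
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        # Each H2 section holds a Markdown list of links with short descriptions.
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, description in links:
            lines.append(f"- [{title}]({url}): {description}")
        lines.append("")
    return "\n".join(lines)


llms_txt = build_llms_txt(
    name="Example Tools",
    summary="Independent, hands-on reviews of developer and AI tools.",
    sections={
        "Reviews": [
            ("Reviews", "https://example.com/reviews", "Index of all tool reviews"),
        ],
        "Guides": [
            ("SEO guide", "https://example.com/guides/seo", "SEO, GEO, and AEO explained"),
        ],
    },
)
print(llms_txt)
```

Generating the file from the same data that drives your navigation or sitemap helps keep it from drifting out of date.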

JSON-LD Structured Data for AI Discovery

Structured data has always been important for SEO, but in the age of AI, it's critical. JSON-LD (JavaScript Object Notation for Linked Data) is the format Google recommends, and it's also what LLMs can most effectively parse.

Essential Schema Types for 2026

Organization schema: Establishes your brand as a distinct entity with name, URL, logo, social profiles, and founding date.

Article schema: Marks up your content with author, publish date, modified date, and headline. The dateModified field is particularly important — it signals content freshness to both Google and AI systems.

FAQ schema (the schema.org FAQPage type): Structures your FAQ sections so they can be directly extracted. Each question-answer pair should be a self-contained, cite-worthy answer.

HowTo schema: For tutorials and guides, this schema helps both Google and AI systems understand the step-by-step structure.

Product schema: If you review or list products, detailed product schema with ratings, prices, and features helps AI systems generate accurate product comparisons.

SoftwareApplication schema: Particularly relevant for ThePlanetTools.ai — this schema type lets you define software tools with their features, pricing, operating system compatibility, and categories.
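As one example of these types, the sketch below assembles Article markup with both `datePublished` and `dateModified` and wraps it in the script tag that carries JSON-LD on a page. The function names, author, and dates are illustrative assumptions. The property names are standard schema.org vocabulary.

```python
import json
from datetime import date


def article_jsonld(headline: str, author: str, published: date, modified: date) -> dict:
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }


def as_script_tag(data: dict) -> str:
    # Escape "<" so text inside the JSON can never close the script element early.
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


tag = as_script_tag(
    article_jsonld(
        headline="SEO, GEO, and AEO in 2026",
        author="Jane Doe",  # placeholder author
        published=date(2026, 1, 10),
        modified=date(2026, 2, 1),
    )
)
print(tag)
```

The same pattern extends to Organization, Product, or SoftwareApplication by swapping the `@type` and its properties.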

Best Practices

- Use JSON-LD (not Microdata or RDFa) — it's cleaner, easier to maintain, and Google's preferred format

- Validate with Google's Rich Results Test and Schema Markup Validator

- Keep structured data in sync with visible content — mismatches are penalized

- Include as many specific properties as possible — the more detailed your schema, the more useful it is to AI systems

- Update dateModified whenever you update content
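The "keep structured data in sync with visible content" rule can be spot-checked automatically. Below is a minimal sketch using only the standard library: it collects the first h1 and every JSON-LD block on a page, then reports any JSON-LD headline that differs from the visible one. `PageScan` and `headline_mismatches` are hypothetical names, and the sketch assumes each JSON-LD block is a single JSON object.

```python
import json
from html.parser import HTMLParser


class PageScan(HTMLParser):
    """Collects the first <h1> text and every JSON-LD block on a page."""

    def __init__(self) -> None:
        super().__init__()
        self.h1 = ""
        self.jsonld: list[dict] = []
        self._in_h1 = False
        self._in_jsonld = False
        self._buffer = ""

    def handle_starttag(self, tag, attrs):
        if tag == "h1" and not self.h1:
            self._in_h1 = True
        elif tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = ""

    def handle_data(self, data):
        if self._in_h1:
            self.h1 += data
        elif self._in_jsonld:
            self._buffer += data

    def handle_endtag(self, tag):
        if tag == "h1":
            self._in_h1 = False
        elif tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.jsonld.append(json.loads(self._buffer))


def headline_mismatches(html: str) -> list[str]:
    # Return JSON-LD headlines that differ from the visible <h1>.
    scan = PageScan()
    scan.feed(html)
    visible = scan.h1.strip()
    return [
        block["headline"]
        for block in scan.jsonld
        if "headline" in block and block["headline"].strip() != visible
    ]


page = (
    "<h1>SEO in 2026</h1>"
    '<script type="application/ld+json">'
    '{"@type": "Article", "headline": "SEO in 2025"}'
    "</script>"
)
print(headline_mismatches(page))
```

The same scan could be extended to compare dateModified against the date shown on the page.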

E-E-A-T Signals That Matter in 2026

E-E-A-T — Experience, Expertise, Authoritativeness, and Trustworthiness — is Google's framework for evaluating content quality. In 2026, with the internet flooded with AI-generated content, E-E-A-T signals matter more than ever because they're what separates genuine expertise from generated text.

Experience

The first "E" was added in December 2022, and it's become the most important differentiator in the AI age. Google wants to see content from creators with direct, first-hand involvement in the topic. A review of a project management tool from someone who has used it for six months is categorically more valuable than a review generated from the product's feature page.

How to signal experience: share specific anecdotes, reference real projects, include detailed visuals from actual use, mention specific limitations you discovered through use, and describe your workflow in concrete detail.

Expertise

Formal knowledge and demonstrated competence. For technical topics (which is our focus at ThePlanetTools.ai), this means detailed, accurate technical content that demonstrates deep understanding. Code examples that actually work. Architectural explanations that show real-world trade-offs. Performance benchmarks from actual testing.

Authoritativeness

Recognition from the broader community. This is built through backlinks from reputable sites, mentions in industry publications, speaking engagements, open-source contributions, and a consistent track record of quality content. It can't be faked — it has to be earned over time.

Trustworthiness

Google's Quality Rater Guidelines explicitly state that Trust is the most important component — "untrustworthy pages have low E-E-A-T no matter how Experienced, Expert, or Authoritative they may seem." Trust signals include: clear author identification, contact information, transparent business details, security (HTTPS), accurate claims, correction of errors, and clear distinction between editorial content and advertising.

Technical SEO Checklist for 2026

Core Web Vitals

Google introduced Core Web Vitals 2.0 in early 2026, bringing a more dynamic and predictive measurement system. The three core metrics remain but with enhanced sophistication:

- LCP (Largest Contentful Paint): Must be under 2.5 seconds. This measures how fast your main content loads. For Next.js sites, use server components, optimize images with the next/image component, and leverage ISR for dynamic content.

- INP (Interaction to Next Paint): Must be under 200 milliseconds. This replaced FID (First Input Delay) and measures responsiveness across ALL user interactions, not just the first one. Optimize by minimizing JavaScript execution time, breaking up long tasks, and using web workers for heavy computation.

- CLS (Cumulative Layout Shift): Must be under 0.1. This measures visual stability. Reserve space for images and ads, use CSS containment, and avoid inserting content above existing content.

Google assesses each metric at the 75th percentile of page loads, so at least 75% of visits to a page must meet the "good" threshold for that page to pass.
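The 75th-percentile rule is easier to reason about with numbers. The sketch below takes a list of field measurements, computes the 75th percentile with the nearest-rank method, and rates it against the published "good" and "poor" boundaries. The sample values are invented for illustration, and nearest-rank is an assumption of this sketch: Google's own CrUX aggregation may compute percentiles differently.

```python
import math

# "Good" and "poor" boundaries per metric (LCP and INP in milliseconds, CLS unitless).
THRESHOLDS = {"LCP": (2500, 4000), "INP": (200, 500), "CLS": (0.1, 0.25)}


def p75(samples: list[float]) -> float:
    # Nearest-rank percentile: the smallest sample with at least 75% of samples at or below it.
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric: str, samples: list[float]) -> str:
    good, poor = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return "good"
    return "needs improvement" if value <= poor else "poor"


lcp_ms = [1200, 1800, 2100, 2400, 2600, 3900, 1500, 2000]  # invented sample data
inp_ms = [90, 120, 150, 260, 310, 480, 140, 110]           # invented sample data
print(p75(lcp_ms), rate("LCP", lcp_ms))
print(p75(inp_ms), rate("INP", inp_ms))
```

In the LCP sample, two of eight loads are slower than 2.5 seconds, yet the page still rates "good" because the 75th percentile is 2400 ms. In the INP sample, three slow interactions are enough to push the 75th percentile to 260 ms and the rating to "needs improvement".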

Schema Implementation

- JSON-LD for all content types (articles, FAQs, products, organization)

- BreadcrumbList schema for navigation structure

- SiteNavigationElement for main navigation

- SearchAction schema if your site has search functionality

Sitemaps and Indexing

- XML sitemap with lastmod dates (keep them accurate)

- Separate sitemaps for different content types if your site is large

- robots.txt properly configured (don't accidentally block CSS/JS)

- llms.txt file at root (as discussed above)

- Canonical tags on every page to prevent duplicate content issues
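Two items on this list lend themselves to small scripts: emitting a sitemap with accurate lastmod dates, and checking that robots.txt does not block CSS or JavaScript. The sketch below uses only the Python standard library. The URLs, dates, and robots.txt rules are placeholders, and `build_sitemap` and `blocked_assets` are hypothetical helper names.

```python
import xml.etree.ElementTree as ET
from datetime import date
from urllib.robotparser import RobotFileParser

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, date]]) -> str:
    # One <url> entry per page, each with its real last-modified date.
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


def blocked_assets(robots_txt: str, asset_urls: list[str], agent: str = "Googlebot") -> list[str]:
    # Return the asset URLs that the given crawler is not allowed to fetch.
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in asset_urls if not parser.can_fetch(agent, u)]


sitemap = build_sitemap([
    ("https://example.com/", date(2026, 1, 15)),
    ("https://example.com/guides/seo", date(2026, 2, 1)),
])
print(sitemap)

robots = "User-agent: *\nDisallow: /static/js/\n"
blocked = blocked_assets(robots, [
    "https://example.com/static/js/app.js",
    "https://example.com/styles/site.css",
])
print(blocked)
```

Here the script bundle is reported as blocked while the stylesheet is not, which is exactly the kind of accidental rule the checklist warns about.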

Page Speed and Performance

- Serve images in WebP/AVIF format with proper sizing

- Implement lazy loading for below-the-fold images

- Use a CDN (Vercel's Edge Network handles this automatically for Next.js)

- Minimize and tree-shake JavaScript bundles

- Use server components to reduce client-side JavaScript where possible

- Implement preloading for critical resources

Mobile Optimization

- Mobile-first design (Google uses mobile-first indexing)

- Touch targets at least 44x44 pixels

- No horizontal scrolling

- Readable font sizes without zooming (minimum 16px body text)

Tools We Use for SEO, GEO, and AEO

Here's our actual toolstack — no affiliate links, just honest assessments:

- Google Search Console: Still the most important free SEO tool. Performance reports, index coverage, Core Web Vitals data, and manual action notifications. Check it weekly at minimum.

- Bing Webmaster Tools: Underrated. Since Bing's data feeds into ChatGPT's web browsing, getting indexed and ranking well in Bing directly affects your visibility in ChatGPT. Submit your sitemap here too.

- Google's Rich Results Test: Validates your structured data implementation. Run every page template through this before deploying.

- PageSpeed Insights: Uses real-world Chrome User Experience Report (CrUX) data to measure Core Web Vitals. The lab data is useful for debugging, but the field data is what Google actually uses for ranking.

- Schema Markup Validator: Catches structured data errors that the Rich Results Test might miss.

- Perplexity: Use it as a research tool to understand how AI systems handle queries in your niche. Ask it questions your customers would ask and see if (and how) you're cited.
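If you test queries by hand, a small script can turn the saved answers into a rough visibility tally. This sketch assumes you have pasted each assistant's answer text into a dictionary keyed by platform. The brand name and answers below are invented, and `mention_counts` is a hypothetical helper. It only detects literal brand mentions, not links or paraphrases.

```python
import re
from collections import Counter


def mention_counts(answers: dict[str, list[str]], brand: str) -> Counter:
    # answers maps a platform name to the answer texts you collected by hand.
    pattern = re.compile(re.escape(brand), re.IGNORECASE)
    counts: Counter = Counter()
    for platform, texts in answers.items():
        counts[platform] = sum(1 for text in texts if pattern.search(text))
    return counts


collected = {  # invented sample answers
    "Perplexity": [
        "Popular options include ExampleBrand and two others.",
        "Most teams start with a spreadsheet.",
    ],
    "ChatGPT": [
        "It depends on team size and budget.",
    ],
}
counts = mention_counts(collected, "examplebrand")
print(dict(counts))
```

Tracking these counts for the same set of queries over time gives a simple trend line, even before you adopt a dedicated monitoring tool.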

The Strategy We Applied to ThePlanetTools.ai

Here's what we actually did — the specific, tactical steps:

- Content restructuring: Every tool review and guide was restructured with question-first headings, 40-word answer blocks, and FAQ sections with 8+ questions. Each FAQ answer is self-contained and cite-worthy.

- Comprehensive JSON-LD: Every page has Organization, Article (or SoftwareApplication), FAQPage, and BreadcrumbList schema. We use Next.js server components to generate this dynamically from our database.

- llms.txt implementation: We maintain a root-level llms.txt that describes ThePlanetTools.ai, our content categories, and links to our most important pages with accurate descriptions.

- E-E-A-T signals: Every review includes specific details about our actual experience using the tool — insights from our own setup, performance data from our own testing, specific use cases from building our SaaS products. This isn't generic content; it's content from builders for builders.

- Technical performance: Our Next.js + Vercel stack gives us excellent Core Web Vitals out of the box. Server components reduce client-side JavaScript. Vercel's Edge Network handles CDN and caching. Images are optimized and served in modern formats.

- Cross-platform consistency: Our messaging is consistent across our website, GitHub, social media profiles, and third-party listings. We maintain the same core description and value proposition everywhere.

- Regular freshness updates: We update published content monthly with new data, refreshed visuals, and revised recommendations. Every update includes an accurate dateModified in our schema.

Frequently Asked Questions

What is Generative Engine Optimization (GEO)?

Generative Engine Optimization is the practice of structuring digital content and managing online presence to improve visibility in responses generated by AI systems like ChatGPT, Claude, Perplexity, and Google Gemini. It encompasses content optimization for AI extraction, brand entity management, cross-platform citation consistency, and AI visibility monitoring. GEO is the broader discipline that includes AEO as a subset.

What is Answer Engine Optimization (AEO)?

Answer Engine Optimization is the tactical practice of optimizing content so that AI-powered answer engines extract, cite, and recommend your brand in their generated responses. Unlike traditional SEO which optimizes for link-based search rankings, AEO optimizes for RAG (Retrieval-Augmented Generation) pipelines used by LLMs. Key techniques include question-first content structure, concise 40-word answer blocks, FAQ schema deployment, and data-backed claims.

Is traditional SEO still worth doing in 2026?

Absolutely. Google still processes billions of searches daily and drives the majority of organic web traffic for most businesses. Traditional SEO and GEO/AEO are complementary — good traditional SEO practices (quality content, technical excellence, strong E-E-A-T) also improve your AI visibility. The sites that perform best in 2026 are optimized for both traditional search and AI answer engines simultaneously.

What is llms.txt and should I implement it?

llms.txt is a proposed standard (created by Jeremy Howard of Answer.AI in 2024) for a plain-text Markdown file placed at your website's root directory that helps LLMs navigate your content. While no major LLM company has officially confirmed they follow these files, implementation takes minimal effort and positions you ahead of the curve as the standard matures. We recommend implementing it for any content-heavy website.

How do I optimize for ChatGPT and Claude specifically?

Focus on three areas: (1) Ensure your content is well-indexed by Bing, since ChatGPT's web browsing relies on Bing data. (2) Structure content with clear, factual claims backed by data and sources — LLMs prefer information they can verify. (3) Build a strong brand entity presence across Wikipedia, Wikidata, Crunchbase, and consistent structured data on your site. For Claude specifically, Anthropic has not disclosed crawling details, but the same principles of clear, authoritative, well-structured content apply.

What are the most important Core Web Vitals metrics in 2026?

The three Core Web Vitals are LCP (Largest Contentful Paint, target under 2.5s), INP (Interaction to Next Paint, target under 200ms), and CLS (Cumulative Layout Shift, target under 0.1). INP replaced FID in March 2024 and is the most challenging metric because it measures responsiveness across ALL user interactions, not just the first. Google evaluates the 75th percentile of page loads, so at least 75% of visits must meet the "good" threshold.

How does E-E-A-T affect AI visibility?

E-E-A-T signals — particularly Experience and Expertise — help differentiate your content from AI-generated noise. LLMs trained on web data learn to associate certain signals (author credentials, specific anecdotes, data-backed claims, consistent brand mentions) with authority. Content that demonstrates real first-hand experience is more likely to be included in training data and cited by RAG systems than generic content that lacks these signals.

What JSON-LD schema types are most important for SEO in 2026?

The essential types are: Organization (establishes your brand entity), Article with dateModified (signals content freshness), FAQPage (enables direct AI extraction of Q&A pairs), BreadcrumbList (clarifies site structure), and either Product or SoftwareApplication depending on your content. Use the HowTo schema for tutorials. All schemas should use JSON-LD format, be validated with Google's Rich Results Test, and stay in sync with visible content.

How often should I update content for SEO freshness?

For key pages (product reviews, guides, comparison articles), update at least monthly with new data, refreshed visuals, and revised recommendations. For evergreen content, quarterly updates are sufficient. Always update the dateModified field in your schema markup when you make substantive changes. AI systems favor fresh content, so a regular update cadence directly improves both traditional and AI search visibility.

What tools do you recommend for monitoring AI visibility?

Start with free tools: use Perplexity to test how AI handles queries in your niche, Google Search Console for traditional performance, and Bing Webmaster Tools (critical since Bing data feeds ChatGPT). For dedicated GEO/AEO monitoring, tools like Scrunch, GenOptima, and Profound track your brand mentions across multiple AI platforms. At minimum, manually test 10-20 key queries across ChatGPT, Claude, Perplexity, and Gemini monthly to understand your current AI visibility.