Claude Opus 4.7

Anthropic's flagship LLM — agentic coding king with 1M context

Quick Summary

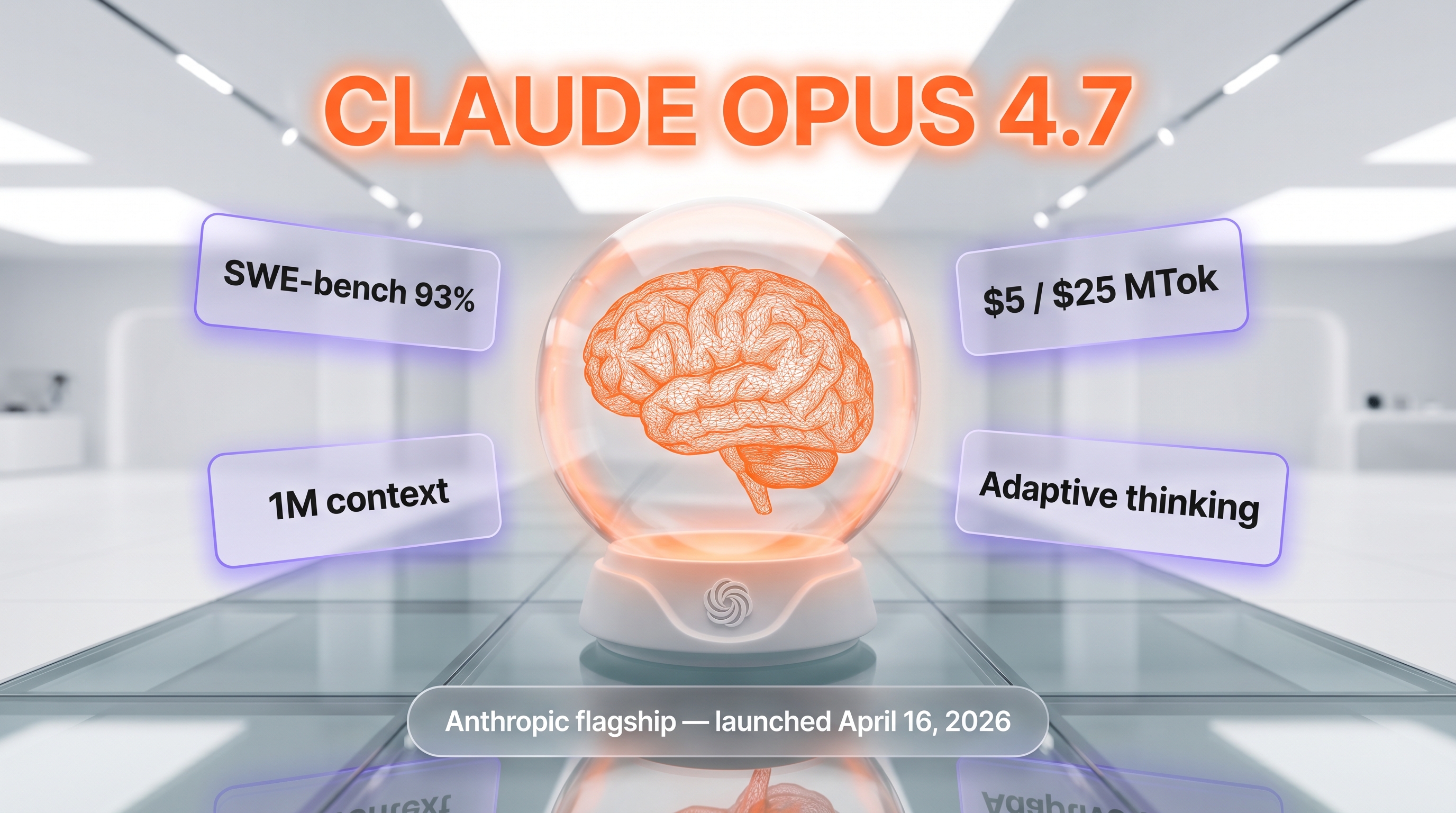

Claude Opus 4.7 is Anthropic's flagship LLM, launched April 16, 2026. It hits 93% on SWE-bench Verified (+13 points over Opus 4.6), ships a 1M-token context window with 128K output, costs $5 / $25 per million input/output tokens, and uses a new tokenizer (up to 35% more tokens for the same text). Score: 9.4/10. We use it daily on Planet Cockpit for agentic coding production work.

Claude Opus 4.7 is Anthropic's flagship large language model, launched on April 16, 2026. It hits 93% on SWE-bench Verified (versus 80% for Opus 4.6), ships a 1M-token context window with 128K output, costs $5 per million input tokens and $25 per million output tokens, and uses a new tokenizer that consumes up to 35% more tokens for the same text. We use Claude Opus 4.7 daily on our Planet Cockpit project (eleven straight days of agentic coding production work), and the upgrade from Opus 4.6 is real, but the headline price understates the real cost-per-task.

What is Claude Opus 4.7?

Claude Opus 4.7 (API ID claude-opus-4-7) is Anthropic's most capable generally available model as of April 2026. It replaces Opus 4.6 at the top of the lineup and is positioned for "complex reasoning and agentic coding." The headline numbers from Anthropic's launch post (April 16, 2026): 93% on SWE-bench Verified (a +13 percentage-point jump over Opus 4.6's 80%), 70% on CursorBench versus Opus 4.6's 58%, and 98.5% on XBOW visual-acuity versus Opus 4.6's 54.5%.

What is unusual about this release is the tokenizer change. Anthropic states Opus 4.7 uses a new tokenizer that "may use up to 35% more tokens for the same fixed text." That changes the real cost-per-task math even though the headline rate ($5 input / $25 output per million tokens) is unchanged from Opus 4.6 and Opus 4.5.
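To make that cost shift concrete, here is a minimal sketch of the arithmetic. It assumes the worst case Anthropic quotes (the full 35% inflation); `effective_rate` and the constant names are ours, and real prompts may inflate by less.

```python
# Illustrative arithmetic only. TOKEN_INFLATION is the worst case quoted
# above ("up to 35%"), not a measured value for any particular prompt.
INPUT_PER_MTOK = 5.00    # USD per million input tokens (list price)
OUTPUT_PER_MTOK = 25.00  # USD per million output tokens (list price)
TOKEN_INFLATION = 0.35


def effective_rate(list_price_per_mtok: float, inflation: float = TOKEN_INFLATION) -> float:
    """Price of the text that used to fit in one million old-tokenizer tokens."""
    return list_price_per_mtok * (1.0 + inflation)


print(f"input:  ${effective_rate(INPUT_PER_MTOK):.2f} per old-tokenizer MTok of text")
print(f"output: ${effective_rate(OUTPUT_PER_MTOK):.2f} per old-tokenizer MTok of text")
```

Under that assumption, the same text that cost $5 / $25 per million tokens on Opus 4.6 costs up to $6.75 / $33.75 on Opus 4.7.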

Hands-on testing on Planet Cockpit

We are not benchmarking this model in a sandbox. We use Claude Opus 4.7 daily on our Planet Cockpit project — the internal CMS that powers ThePlanetTools.ai (Next.js 16 App Router, React 19, Supabase, Tauri desktop wrapper, ~247 API routes). After eleven days of production use as our default agent model since the April 16, 2026 launch, here is what we actually observed:

What improved over Opus 4.6

- Long agentic runs hold up. Multi-file refactors that used to drift after 30+ tool calls on Opus 4.6 now stay on-task with Opus 4.7. Anthropic frames this as the model "devises ways to verify its own outputs before reporting back" — in practice it means fewer "I'm done" lies followed by broken builds.

- Self-correction mid-run. The model re-reads its own diffs and catches its own errors before handing back. We logged this on a JSON-LD migration script: Opus 4.6 needed three follow-up prompts to fix a missing `contentUrl` field; Opus 4.7 caught it on the first run.

- The 1M context window is usable. Same as Opus 4.6 on paper (1M tokens), but recall on tokens 700k–900k is noticeably tighter. We threw the entire `src/` directory of Planet Cockpit (~340k tokens) at Opus 4.7 with a question about a deeply nested type and got the right answer first try.

- Vision is bigger. Opus 4.7 accepts images up to 2,576 pixels on the long edge (~3.75 megapixels), more than triple prior models. Useful for our screenshot-driven UX debugging.

What got worse

- Token burn is higher. The new tokenizer adds up to 35% more tokens for identical English prose. Combined with longer agentic runs, our average cost-per-feature is 1.5–2x what it was on Opus 4.6 in the same workflows. The headline price is identical but the bill at the end of the week is not.

- The "ambiguity tax." Opus 4.7 no longer silently rescues vague prompts. If you tell it "fix the auth bug," it now asks which auth bug. Net win for correctness, but a real change in muscle memory if you came from Opus 4.6.

- No extended thinking mode. Opus 4.7 ships with adaptive thinking only — it decides whether to think internally based on the task. Sonnet 4.6 and Haiku 4.5 keep extended thinking. If your pipeline relied on forcing extended reasoning on Opus, that lever is gone.
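The 1.5–2x cost-per-feature figure in the first bullet is larger than the tokenizer alone explains. A rough decomposition, assuming cost scales as (tokenizer factor) x (run-length factor) and that the tokenizer hits its full 35% worst case, suggests how much of the increase comes from longer runs. The function name is ours, and the model is a simplification rather than a measurement.

```python
def implied_run_length_factor(observed_cost_multiplier: float,
                              tokenizer_factor: float = 1.35) -> float:
    """Share of a cost increase not explained by the tokenizer.

    Assumes cost = tokenizer_factor * run_length_factor, which ignores
    caching and any change in the input/output mix.
    """
    return observed_cost_multiplier / tokenizer_factor


for observed in (1.5, 2.0):
    factor = implied_run_length_factor(observed)
    print(f"{observed}x total cost implies runs about {factor:.2f}x longer")
```

On those assumptions, our runs consume roughly 1.11x to 1.48x more tokens' worth of work than they did on Opus 4.6, before the tokenizer is counted.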

Pricing — API and consumer plans

API pricing

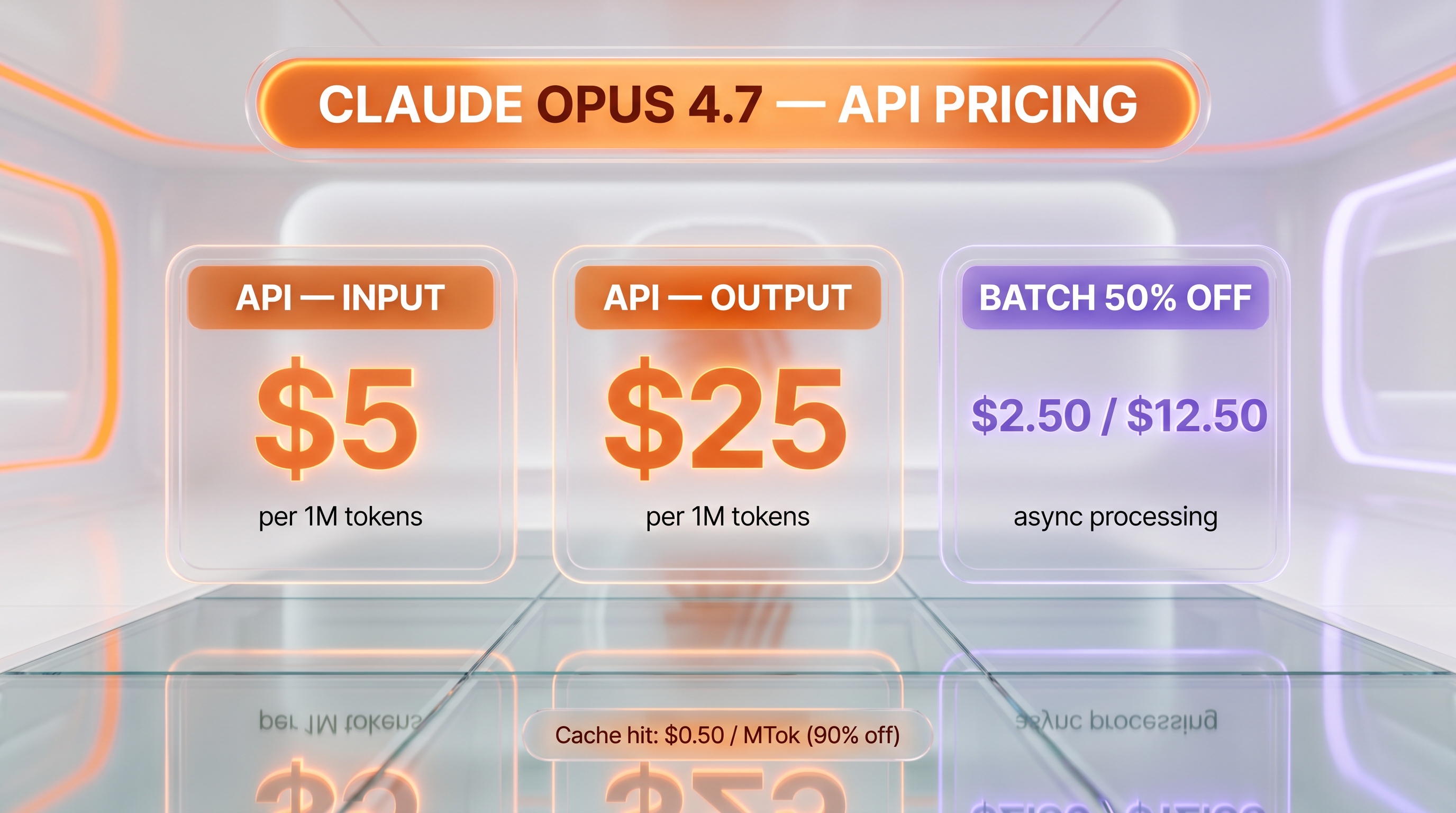

From Anthropic's official pricing page, verified April 27, 2026:

- Base input tokens: $5 per million tokens (MTok)

- Base output tokens: $25 per million tokens

- 5-minute cache write: $6.25 per MTok (1.25x base input)

- 1-hour cache write: $10 per MTok (2x base input)

- Cache hit (read): $0.50 per MTok (90% off base input)

- Batch API (50% off): $2.50 input / $12.50 output per MTok

- Long context (full 1M tokens): standard rate, no premium

- Web search tool: $10 per 1,000 searches plus standard token costs

- Web fetch tool: no additional charge beyond token costs

- Code execution: 1,550 free hours per month, then $0.05 per container hour

- US-only inference (`inference_geo`): 1.1x multiplier on all token categories

The headline rate is the same as Opus 4.6 and Opus 4.5, but the new tokenizer (up to 35% more tokens for the same text) means real-world cost-per-task is up.
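The rates listed above can be folded into a small estimator. This is a sketch, not a billing tool: the `Usage` class and `estimate_cost` function are hypothetical names of ours, and we assume the batch discount applies only to base input and output tokens, since those are the only batch rates the list gives.

```python
from dataclasses import dataclass

# USD per million tokens, copied from the pricing list above.
RATES_PER_MTOK = {
    "input_tokens": 5.00,
    "output_tokens": 25.00,
    "cache_write_5m_tokens": 6.25,
    "cache_write_1h_tokens": 10.00,
    "cache_read_tokens": 0.50,
}
BATCH_DISCOUNT = 0.5       # Batch API: 50% off input and output
US_ONLY_MULTIPLIER = 1.1   # US-only inference: 1.1x on all token categories


@dataclass
class Usage:
    """Token counts for one job (hypothetical container, not an SDK type)."""
    input_tokens: int = 0
    output_tokens: int = 0
    cache_write_5m_tokens: int = 0
    cache_write_1h_tokens: int = 0
    cache_read_tokens: int = 0


def estimate_cost(usage: Usage, batch: bool = False, us_only: bool = False) -> float:
    """Estimated token cost in USD; tool charges (web search etc.) are excluded."""
    total = 0.0
    for category, rate in RATES_PER_MTOK.items():
        cost = getattr(usage, category) * rate / 1_000_000
        # Assumption: only base input/output are discounted under batch.
        if batch and category in ("input_tokens", "output_tokens"):
            cost *= BATCH_DISCOUNT
        total += cost
    if us_only:
        total *= US_ONLY_MULTIPLIER
    return total


job = Usage(input_tokens=200_000, output_tokens=50_000, cache_read_tokens=1_000_000)
print(f"standard: ${estimate_cost(job):.2f}")
print(f"batch:    ${estimate_cost(job, batch=True):.3f}")
```

Token counts fed into this should be measured with the Opus 4.7 tokenizer; counts carried over from Opus 4.6 will understate the bill.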

Consumer plans (claude.ai)

From claude.com/pricing:

- Free: $0 — limited usage, model not specified by name

- Pro: $17 per month billed annually, $20 per month billed monthly — includes Claude Code and Claude Cowork

- Max 5x: from $100 per month — 5x more usage than Pro

- Max 20x: from $200 per month, with 20x more usage than Pro and access to Auto Mode (Opus 4.7 launch feature)

- Team: $25 per seat monthly, $20 per seat annual (Standard); $125 monthly, $100 annual (Premium)

- Enterprise: $20 per seat plus usage at API rates — contact sales

Features added at launch

- Extra-high effort level (`xhigh`): a new reasoning effort tier between `high` and `max`, for a better latency-quality tradeoff on long agentic runs.

- Task budgets (public beta): set a token cap on a long agentic run so the model self-paces and stops before bleeding your wallet. We turned this on for our nightly cron content generation pipeline; it works.

- /ultrareview command: dedicated code review sessions for Pro and Max users on claude.ai.

- Auto mode expansion to Max: autonomous decision-making for Max-tier users (previously Enterprise-only on prior Opus models).

Opus 4.7 vs Opus 4.6 — should you upgrade?

| Dimension | Opus 4.6 | Opus 4.7 |

|---|---|---|

| API ID | claude-opus-4-6 | claude-opus-4-7 |

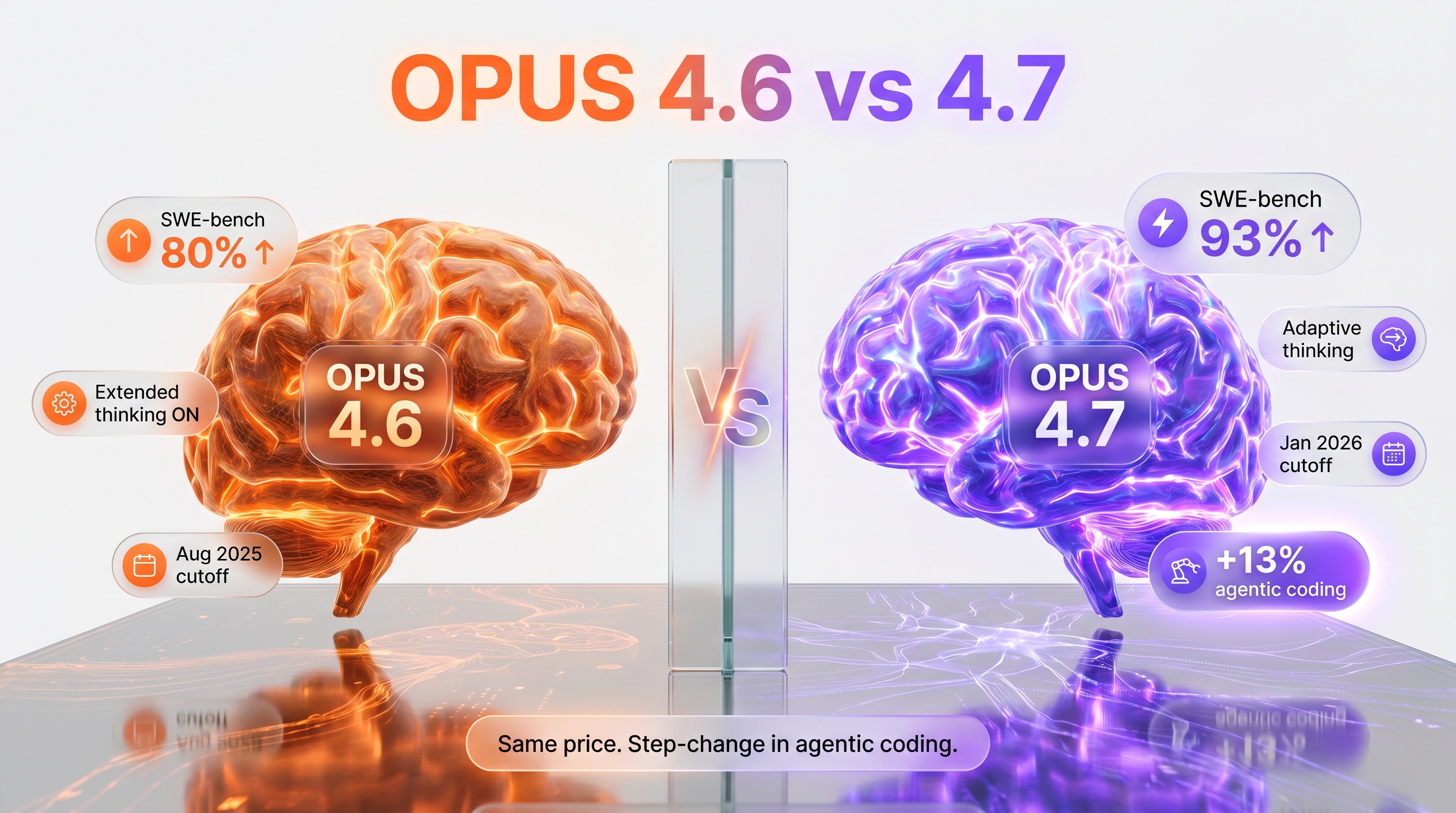

| SWE-bench Verified | ~80% | 93% |

| CursorBench | 58% | 70% |

| Context window | 1M tokens | 1M tokens |

| Max output | 128k tokens | 128k tokens |

| Reliable knowledge cutoff | May 2025 | Jan 2026 |

| Extended thinking | Yes | No |

| Adaptive thinking | — | Yes |

| Tokenizer | Standard Claude 4.x | New (+35% tokens) |

| Vision input max edge | Standard | 2,576 px |

| Input price | $5 / MTok | $5 / MTok |

| Output price | $25 / MTok | $25 / MTok |

Upgrade if: agentic coding, long-running multi-file refactors, anything where you previously hit 30+ tool calls per task. The +13 points on SWE-bench is real and the self-verification behavior is the headline win.

Stay on Opus 4.6 if: your workflow relies on forcing extended thinking, you have prompt budgets locked to 4.6 token counts, or you are running cost-sensitive batch jobs where the new tokenizer's +35% bump erases the value gain.

Who should use Claude Opus 4.7?

- Solo founders and small teams running production agents. The self-verification on long runs is the single biggest unlock since Opus 4.5. Worth it if your bottleneck is unattended autonomy.

- Teams doing big monorepo refactors. The 1M context plus the +12 points on CursorBench is exactly what large-codebase agentic editing needs.

- Anyone running Claude Code, Cursor, or Windsurf as their daily driver. Switch the model selector to `claude-opus-4-7` and feel the difference within an afternoon.

Who should skip Opus 4.7

- Cost-sensitive production loads. The +35% tokenizer bump on top of the same per-token price hits batch jobs hard. Sonnet 4.6 at $3 input / $15 output is often the better answer.

- Fast iteration loops. Opus 4.7 latency is "moderate" per Anthropic. For short, snappy turns Haiku 4.5 or Sonnet 4.6 are cleaner.

- Anything that depends on extended thinking. Opus 4.7 dropped it. Sonnet 4.6 still has it.
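To put a number on the cost-sensitive case, the sketch below compares the same workload on both models. Two assumptions are ours: Sonnet 4.6 still uses the older tokenizer, and Opus 4.7 hits the full 35% token inflation. The workload size is a made-up example.

```python
def cost_usd(input_tokens: int, output_tokens: int,
             input_rate: float, output_rate: float,
             token_factor: float = 1.0) -> float:
    """Cost of a workload whose size is given in old-tokenizer tokens.

    token_factor scales the token count for models on the new tokenizer.
    """
    raw = input_tokens * input_rate + output_tokens * output_rate
    return raw * token_factor / 1_000_000


# Hypothetical monthly batch workload, measured in old-tokenizer tokens.
workload_in, workload_out = 2_000_000, 400_000

opus = cost_usd(workload_in, workload_out, 5.0, 25.0, token_factor=1.35)
sonnet = cost_usd(workload_in, workload_out, 3.0, 15.0)

print(f"Opus 4.7:   ${opus:.2f}")
print(f"Sonnet 4.6: ${sonnet:.2f}")
print(f"ratio:      {opus / sonnet:.2f}x")
```

Because both the input and output rates differ by the same 5:3 ratio, the result is about 2.25x whatever the input/output mix is, as long as those two assumptions hold.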

Verdict

Claude Opus 4.7 is the best agentic coding model on the market in April 2026. The +13 points on SWE-bench Verified is not marketing fluff — we feel it on every multi-file refactor we hand off. The self-verification behavior alone is worth the migration. The catch is the new tokenizer: same headline price, real cost-per-task is up. If your workflow is "long agentic runs that need to actually finish," upgrade. If your workflow is "thousands of cheap batch calls," look at Sonnet 4.6.

We score it 9.4 / 10. We rate it the best agentic LLM available today, with the caveat that the cost shift is non-trivial and you should re-budget your monthly Anthropic spend before flipping the switch.

Frequently asked questions

What is Claude Opus 4.7?

Claude Opus 4.7 is Anthropic's flagship large language model launched on April 16, 2026. API ID claude-opus-4-7. It is positioned as Anthropic's "most capable generally available model for complex reasoning and agentic coding." Hits 93% on SWE-bench Verified (versus 80% for Opus 4.6), 70% on CursorBench (versus 58%), and 98.5% on XBOW visual-acuity (versus 54.5%). Ships with a 1M-token context window, 128K max output tokens, adaptive thinking, and a January 2026 reliable knowledge cutoff.

How much does Claude Opus 4.7 cost?

API pricing is $5 per million input tokens and $25 per million output tokens — identical to Opus 4.6. Cache hits drop to $0.50 per million tokens (90% off). Batch API gets a 50% discount: $2.50 input / $12.50 output per million tokens. On consumer plans, Opus 4.7 access is included with Pro ($17 per month annual / $20 monthly), Max 5x (from $100 per month), and Max 20x (from $200 per month). The catch: Opus 4.7 uses a new tokenizer that consumes up to 35% more tokens for the same English text, so the real cost-per-task is higher than the headline rate suggests.

Is Opus 4.7 better than Opus 4.6 for coding?

Yes, by a clear margin. SWE-bench Verified jumps from 80% to 93% (+13 percentage points). CursorBench jumps from 58% to 70%. The qualitative win we observed across eleven days of daily use on our Planet Cockpit project: Opus 4.7 self-verifies its own diffs before reporting back, so long agentic runs (30+ tool calls) hold up where Opus 4.6 used to drift. If your workflow is "long autonomous coding tasks that need to actually finish," upgrade.

How big is Opus 4.7's context window?

1 million tokens of input context, 128,000 tokens of max output (with a beta header allowing up to 300,000 output tokens via the Message Batches API). Anthropic notes that with Opus 4.7's new tokenizer, 1M tokens is equivalent to about 555,000 words (versus ~750,000 words for Opus 4.6's tokenizer). In practice we throw entire mid-size codebases at it without compression and recall holds up well past the 700k-token mark.
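Those word-equivalence figures give a quick way to estimate whether a document fits. The sketch below is a rough heuristic of ours: the ratios describe English prose rather than code, and we assume the reserved output tokens count against the same 1M window.

```python
WORDS_PER_MTOK_OPUS_47 = 555_000  # from the figure quoted above
WORDS_PER_MTOK_OPUS_46 = 750_000  # from the figure quoted above
CONTEXT_WINDOW_TOKENS = 1_000_000


def estimate_tokens(word_count: int,
                    words_per_mtok: int = WORDS_PER_MTOK_OPUS_47) -> int:
    """Approximate token count for English prose of the given length."""
    return round(word_count * 1_000_000 / words_per_mtok)


def fits_in_context(word_count: int, reserve_for_output: int = 128_000) -> bool:
    """True if the text plus an output reserve fits in the 1M window.

    Assumption: output tokens share the context window with the input.
    """
    return estimate_tokens(word_count) + reserve_for_output <= CONTEXT_WINDOW_TOKENS


for words in (400_000, 500_000):
    print(words, estimate_tokens(words), fits_in_context(words))
```

For anything close to the limit, count tokens with the provider's tooling instead of relying on a word ratio.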

Does Opus 4.7 support extended thinking?

No. Opus 4.7 dropped extended thinking in favor of adaptive thinking — the model decides internally whether to reason for longer based on task complexity. Sonnet 4.6 and Haiku 4.5 still support extended thinking explicitly. If your pipeline relied on forcing extended reasoning on Opus, you will need to switch that lever to Sonnet 4.6 or accept Opus 4.7's adaptive default.

What new features launched with Opus 4.7?

Four headline additions on April 16, 2026: (1) extra-high effort level xhigh between high and max for finer reasoning-latency tradeoffs; (2) task budgets in public beta — set a token cap on a long agentic run; (3) the /ultrareview slash command for dedicated code review sessions on Pro and Max plans; (4) Auto Mode expanded to Max-tier users (previously Enterprise-only). Vision input also bumped — accepts images up to 2,576 pixels on the long edge, more than triple prior Claude models.

How do I migrate from Opus 4.6 to Opus 4.7?

Change the model ID in your API calls from claude-opus-4-6 to claude-opus-4-7. That is the entire migration on the API side. Caveat: the new tokenizer changes token counts for identical input text, so any hard-coded token budgets need re-tuning. Anthropic publishes a dedicated migration guide at platform.claude.com/docs/en/about-claude/models/migration-guide. Opus 4.6 stays available — Anthropic has not announced a deprecation date.
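A minimal sketch of that migration as a pure function over request parameters follows. The `model` and `max_tokens` keys match the Messages API; `migrate_request` and the 1.35 headroom factor are our own conservative assumptions, not something Anthropic's migration guide prescribes.

```python
import math

OLD_MODEL = "claude-opus-4-6"
NEW_MODEL = "claude-opus-4-7"
MAX_OUTPUT_TOKENS = 128_000   # max output quoted in this review
TOKENIZER_HEADROOM = 1.35     # assumption: budget for the worst-case inflation


def migrate_request(params: dict) -> dict:
    """Return a copy of the request params retargeted at Opus 4.7.

    Requests for other models are returned unchanged. The copy is shallow,
    so nested values such as the messages list are shared with the input.
    """
    migrated = dict(params)
    if migrated.get("model") != OLD_MODEL:
        return migrated
    migrated["model"] = NEW_MODEL
    if "max_tokens" in migrated:
        # Same text needs more tokens, so scale the output budget up,
        # but never past the model's output limit.
        scaled = math.ceil(migrated["max_tokens"] * TOKENIZER_HEADROOM)
        migrated["max_tokens"] = min(scaled, MAX_OUTPUT_TOKENS)
    return migrated


old = {
    "model": OLD_MODEL,
    "max_tokens": 4096,
    "messages": [{"role": "user", "content": "Summarize this diff."}],
}
print(migrate_request(old)["model"], migrate_request(old)["max_tokens"])
```

Scaling by the full 35% is deliberately generous; measuring real token counts on a sample of your own prompts will give a tighter budget.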

Is Opus 4.7 available on AWS Bedrock and Google Vertex AI?

Yes. AWS Bedrock ID is anthropic.claude-opus-4-7 via the Messages-API Bedrock endpoint. Google Vertex AI ID is claude-opus-4-7. Microsoft Foundry also carries Opus 4.7. Note that regional and multi-region endpoints carry a 10% premium over global endpoints on AWS Bedrock and Vertex AI for Sonnet 4.5 and newer models — Opus 4.7 follows that pricing structure.

How does Claude Opus 4.7 compare to GPT-5.5 for agentic coding?

On SWE-bench Verified, Opus 4.7 scores 93% versus around 75% for GPT-5.5. In our daily Planet Cockpit usage, Opus 4.7 handles long multi-file refactors more reliably (1M context vs GPT-5.5's 400K) and is better at self-correcting when migration scripts fail. GPT-5.5 wins on raw text generation speed and on tool ecosystem maturity (function calling, structured outputs, vision). For pure agentic coding workflows, Opus 4.7 is our pick. For multi-modal reasoning and native audio, GPT-5.5 wins.

Should I migrate from Claude Opus 4.6 to Opus 4.7?

Yes, but plan for the new tokenizer. Opus 4.7 uses a different tokenizer that consumes up to 35% more tokens for the same prompt, so real cost-per-task is up despite identical sticker pricing. Benefits: +13 SWE-bench Verified points (80% to 93%), CursorBench 70% vs 58%, XBOW 98.5% vs 54.5%, plus the xhigh effort level, task budgets, and Auto Mode for the Max tier. We migrated all production agents at Planet Cockpit on launch day (April 16, 2026). Re-tune your token budgets and drop explicit extended-thinking calls (4.7 dropped this lever in favor of adaptive thinking).

Pros & Cons

Pros

- SWE-bench Verified 93% — best in class agentic coding

- Self-verification on long agentic runs

- 1M-token context window

- Adaptive thinking decides reasoning depth automatically

- Vision input up to 2,576 px on long edge

- Available on AWS Bedrock, Vertex AI, Microsoft Foundry

- Task budgets and xhigh effort level for fine control

Cons

- New tokenizer uses up to 35% more tokens for the same text

- No extended thinking mode (only adaptive)

- Real cost-per-task is up versus Opus 4.6 despite same headline price

- Latency is moderate, not best-in-class for short turns

- No silent rescue of vague prompts (correctness win, friction cost)

We're developers and SaaS builders who use these tools daily in production. Every review comes from hands-on experience building real products — DealPropFirm, ThePlanetIndicator, PropFirmsCodes, and many more. We don't just review tools — we build and ship with them every day.

Written and tested by developers who build with these tools daily.

Frequently Asked Questions

What is Claude Opus 4.7?

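Claude Opus 4.7 is Anthropic's flagship large language model, launched on April 16, 2026 and positioned for complex reasoning and agentic coding, with a 1M-token context window.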

How much does Claude Opus 4.7 cost?

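Claude Opus 4.7 API pricing is $5 per million input tokens and $25 per million output tokens. On claude.ai, paid plans start with Pro at $17 per month billed annually or $20 per month billed monthly.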

Is Claude Opus 4.7 free?

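No. API usage is billed at $5 per million input tokens and $25 per million output tokens. The claude.ai Free plan costs $0 with limited usage, but it does not specify which model it serves.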

What are the best alternatives to Claude Opus 4.7?

Top-rated alternatives to Claude Opus 4.7 can be found in our WebApplication category on ThePlanetTools.ai.

Is Claude Opus 4.7 good for beginners?

Claude Opus 4.7 is rated 9/10 for ease of use.

What platforms does Claude Opus 4.7 support?

Claude Opus 4.7 is available on api, web, aws-bedrock, gcp-vertex-ai, microsoft-foundry.

Does Claude Opus 4.7 offer a free trial?

No, Claude Opus 4.7 does not offer a free trial.

Is Claude Opus 4.7 worth the price?

Claude Opus 4.7 scores 8.5/10 for value. We consider it excellent value.

Who should use Claude Opus 4.7?

Claude Opus 4.7 is ideal for: Multi-file agentic refactors on large codebases, Long autonomous coding tasks (30+ tool calls), Complex reasoning and step-by-step analysis, Long-document analysis (entire codebases or 700+ page documents), Production AI agents and automation pipelines, Code review with /ultrareview command.

What are the main limitations of Claude Opus 4.7?

Some limitations of Claude Opus 4.7 include: New tokenizer uses up to 35% more tokens for the same text; No extended thinking mode (only adaptive); Real cost-per-task is up versus Opus 4.6 despite same headline price; Latency is moderate, not best-in-class for short turns; No silent rescue of vague prompts (correctness win, friction cost).
