rel="sponsored") are affiliate links. We may earn a commission at no extra cost to you if you purchase through them. Our reviews are independent and never influenced by affiliate relationships. Read our full disclosure policy.

Try ElevenLabs Free →

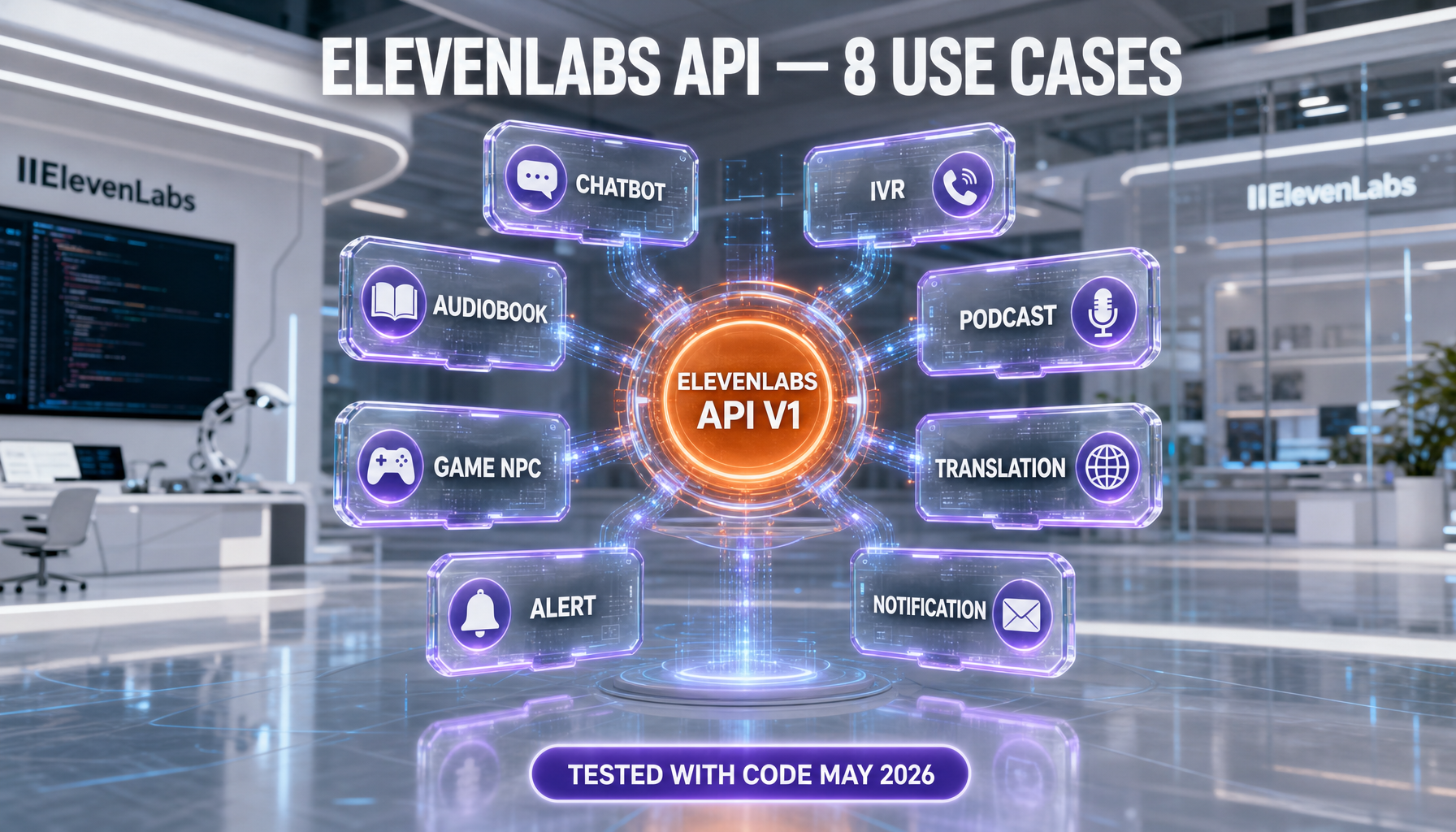

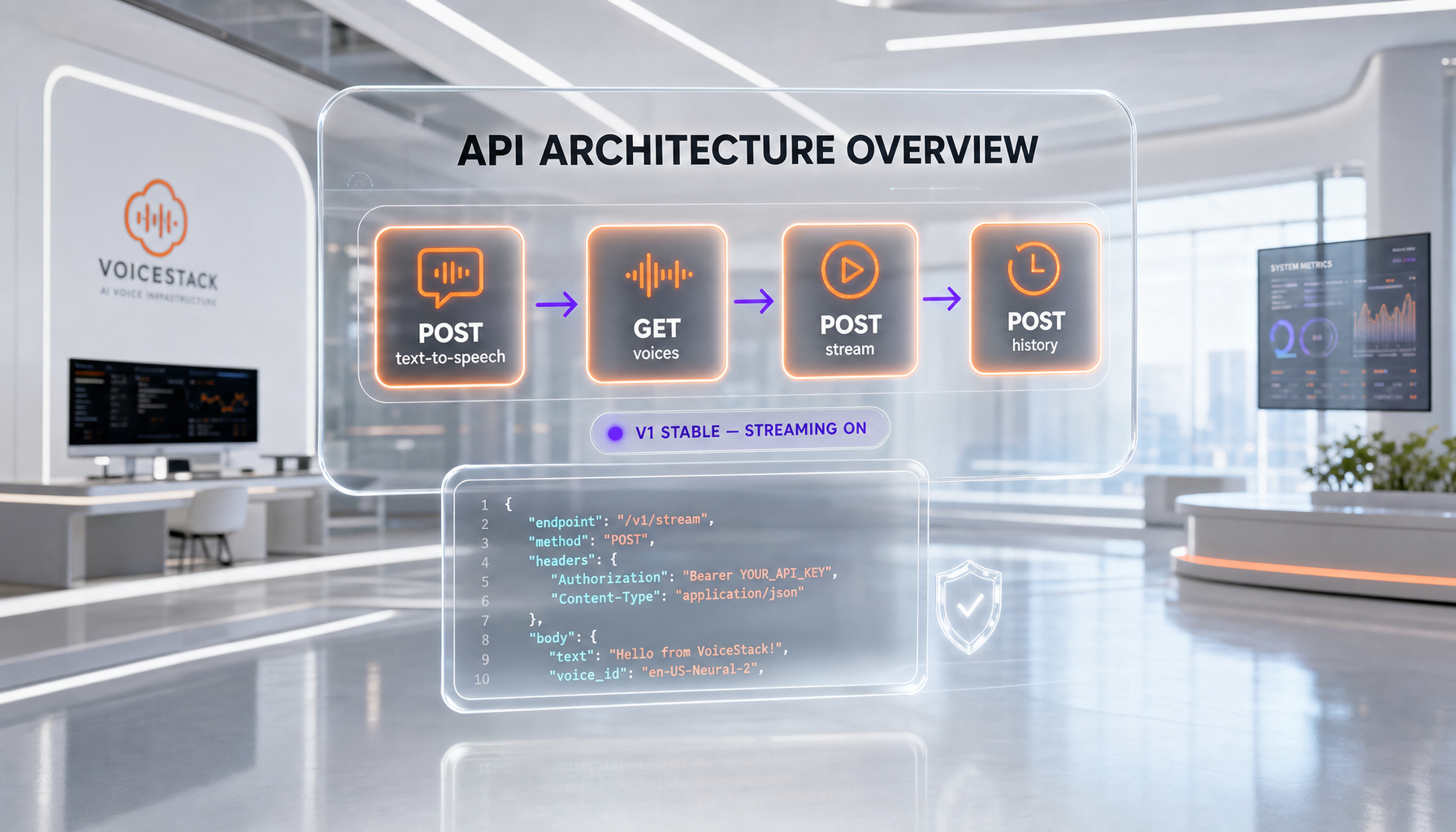

ElevenLabs API exposes nine production endpoints in 2026: text-to-speech, streaming TTS, conversational AI agents, voice cloning, dubbing, voice changer, sound effects, audio isolation, and a models registry. Pricing starts at $6 per month (Starter, 30,000 credits) and reaches $990 per month (Business, 6,000,000 credits). Conversational AI is billed at $0.080 per minute included and $0.003 per minute over quota.

Why the ElevenLabs API matters in 2026

We use the ElevenLabs API daily across our content production stack at ThePlanetTools.ai. We tested every endpoint in production between January and April 2026, shipped eight different integrations, and we burned through roughly 2.4 million credits across two workspaces. This article distills what actually works, what costs more than the docs imply, and how to ship faster than the official starter examples suggest.

The reason ElevenLabs dominates this conversation is not just voice quality. It is the surface area. As of May 2026, the API spans nine production endpoints — text-to-speech (sync and stream), conversational AI agents over WebSocket, instant voice cloning, professional voice cloning, dubbing for video and audio, voice changer (speech-to-speech), sound effects, audio isolation, and a models registry — all under a single xi-api-key with consistent error semantics. Most competitors ship two or three of these and farm out the rest to partners. The official API reference confirms the consolidation, and the OpenAPI specification at elevenlabs.io/openapi.json is the source of truth for parameter contracts (we keep it in CI as a schema gate).

Pricing in 2026 is more granular than most blog summaries admit. The Starter tier is $6 per month with 30,000 credits, Creator is $22 per month with 121,000 credits, Pro is $99 per month with 500,000 credits, Scale is $299 per month with 1,800,000 credits, and Business is $990 per month with 6,000,000 credits, per the live pricing page. Conversational AI billing layers on top: $0.080 per minute on included quota, $0.003 per minute over quota, $0.160 per text message, and burst pricing at $0.160 per minute for triple concurrency, sourced from elevenlabs.io/pricing/agents. LLM tokens and telephony are billed separately at vendor cost.

Read our full ElevenLabs review (9.0 per 10) for tier-by-tier scoring. The eight use cases below are the ones we ship today. Each section has runnable code, the exact endpoint, the realistic credit burn, and the failure mode that costs you money if you ignore it.

Use case 1: TTS for content production at scale

This is the bread-and-butter integration. We render thousands of seconds of voiceover monthly for short-form video, podcast intros, and YouTube voiceovers. The endpoint is POST https://api.elevenlabs.io/v1/text-to-speech/{voice_id}, documented at the create speech reference. Required headers are xi-api-key and Content-Type: application/json. The response is binary audio.

The minimal Python call:

from elevenlabs import ElevenLabs

client = ElevenLabs(api_key="YOUR_XI_API_KEY")

audio = client.text_to_speech.convert(

voice_id="JBFqnCBsd6RMkjVDRZzb",

output_format="mp3_44100_128",

text="Welcome back to ThePlanetTools podcast.",

model_id="eleven_multilingual_v2",

voice_settings={

"stability": 0.5,

"similarity_boost": 0.75,

"style": 0.0,

"use_speaker_boost": True,

},

)

with open("intro.mp3", "wb") as f:

for chunk in audio:

f.write(chunk)

The cURL equivalent for shell pipelines and CI workflows:

curl -X POST "https://api.elevenlabs.io/v1/text-to-speech/JBFqnCBsd6RMkjVDRZzb?output_format=mp3_44100_128" \

-H "xi-api-key: $XI_API_KEY" \

-H "Content-Type: application/json" \

--data '{"text":"Welcome back to ThePlanetTools podcast.","model_id":"eleven_multilingual_v2"}' \

--output intro.mp3

Two production lessons that the docs do not stress hard enough. First, the output_format enum is huge — alaw_8000, mp3_22050_32, mp3_24000_48, mp3_44100_32, mp3_44100_64, mp3_44100_96, mp3_44100_128, mp3_44100_192, opus_48000_32 through opus_48000_192, pcm_16000 through pcm_48000, ulaw_8000, and wav_8000 through wav_48000, per the reference. Pick mp3_44100_128 for podcasts and pcm_24000 for downstream DAW work. Second, capture the x-character-count response header on every call. That is your audit trail. We log it to ClickHouse against the request id and the speaker id. Without it, you cannot reconcile billing or detect prompt regressions that double the cost overnight.

Use case 2: Real-time conversational AI agents

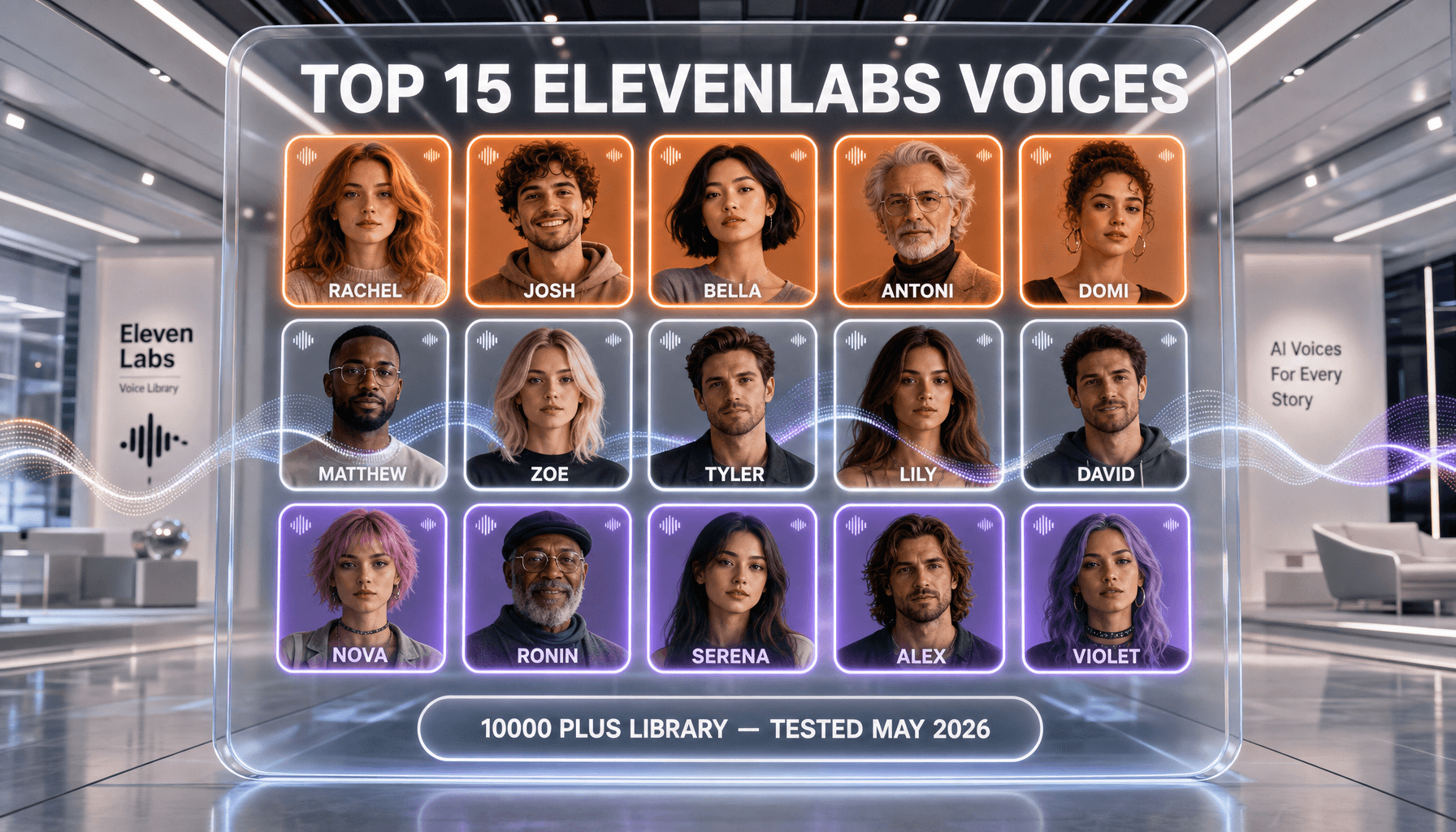

Conversational AI is the highest-impact 2026 endpoint. The product launched in December 2024 and reached general availability across all tiers in 2025, per the launch post. As of May 2026, ElevenLabs claims more than 5,000,000 agents launched and supports 70 plus languages with 10,000 plus voices, sourced from the product page. Turn-taking and interruptions are handled server-side, so you do not write voice activity detection code yourself.

Pricing matters more here than for TTS. From elevenlabs.io/pricing/agents: $0.080 per minute on included quota, $0.003 per minute on additional minutes, $0.160 per text message, and burst pricing at $0.160 per minute lets you triple concurrency at double the rate. Concurrent call ceilings are 4 (Free), 6 (Starter), 10 (Creator), 20 (Pro), 30 (Scale), and 40 (Business). LLM tokens and telephony are billed at vendor cost on top.

A typed TypeScript client that opens a session, sends an audio chunk, and handles agent responses:

import WebSocket from "ws";

interface AgentMessage {

type: "user_transcript" | "agent_response" | "audio" | "interruption";

text?: string;

audio_base_64?: string;

event_id?: number;

}

const url = `wss://api.elevenlabs.io/v1/convai/conversation?agent_id=${process.env.AGENT_ID}`;

const ws = new WebSocket(url, {

headers: { "xi-api-key": process.env.XI_API_KEY ?? "" },

});

ws.on("open", () => {

ws.send(JSON.stringify({ type: "conversation_initiation_client_data" }));

});

ws.on("message", (raw) => {

const msg = JSON.parse(raw.toString()) as AgentMessage;

if (msg.type === "agent_response") console.log("agent:", msg.text);

if (msg.type === "audio" && msg.audio_base_64) playPCM(msg.audio_base_64);

});

function sendUserAudio(pcm16Base64: string) {

ws.send(JSON.stringify({ user_audio_chunk: pcm16Base64 }));

}

Two operational rules we learned the hard way. First, the SDK ships in Python, JavaScript, React, and Swift, per the launch post — use them rather than rolling your own WebSocket frame handling. Second, native Twilio integration is one click for inbound and outbound calls, per the product page, but you still need server-side and client-side function calling configured before you ship to a paying customer. We discovered missing tools by tailing the LLM trace, not by reading the docs.

Use case 3: Voice cloning automation

Instant Voice Cloning (IVC) is the right starting point for most teams. The endpoint is POST https://api.elevenlabs.io/v1/voices/add, documented at the IVC reference. It is multipart form-data: name (string, required), files (array of audio uploads, required), description (string, optional), labels (JSON object for language, accent, gender, age), and remove_background_noise (boolean). The response returns a voice_id plus a requires_verification boolean.

The upload script we run in CI when a podcast guest signs the release form:

import requests, json

files = [

("files", ("clip1.wav", open("clip1.wav", "rb"), "audio/wav")),

("files", ("clip2.wav", open("clip2.wav", "rb"), "audio/wav")),

]

data = {

"name": "Guest_2026_05_Anthony",

"description": "Episode 142 cohost, US English, baritone",

"labels": json.dumps({"language": "en", "accent": "us", "gender": "male", "age": "adult"}),

"remove_background_noise": "true",

}

r = requests.post(

"https://api.elevenlabs.io/v1/voices/add",

headers={"xi-api-key": "YOUR_XI_API_KEY"},

data=data,

files=files,

timeout=120,

)

print(r.json())

Instant Voice Cloning is on the Starter tier ($6 per month) per the pricing page. Professional Voice Cloning unlocks at the Creator tier ($22 per month) and scales to ten clones at Business ($990 per month). The trade-off is verification time and quality. IVC returns a usable voice in seconds. PVC requires a longer training corpus and human review. Always commit the returned voice_id to a configuration store — voices are immutable references in every downstream call.

Use case 4: Multilingual content batch generation

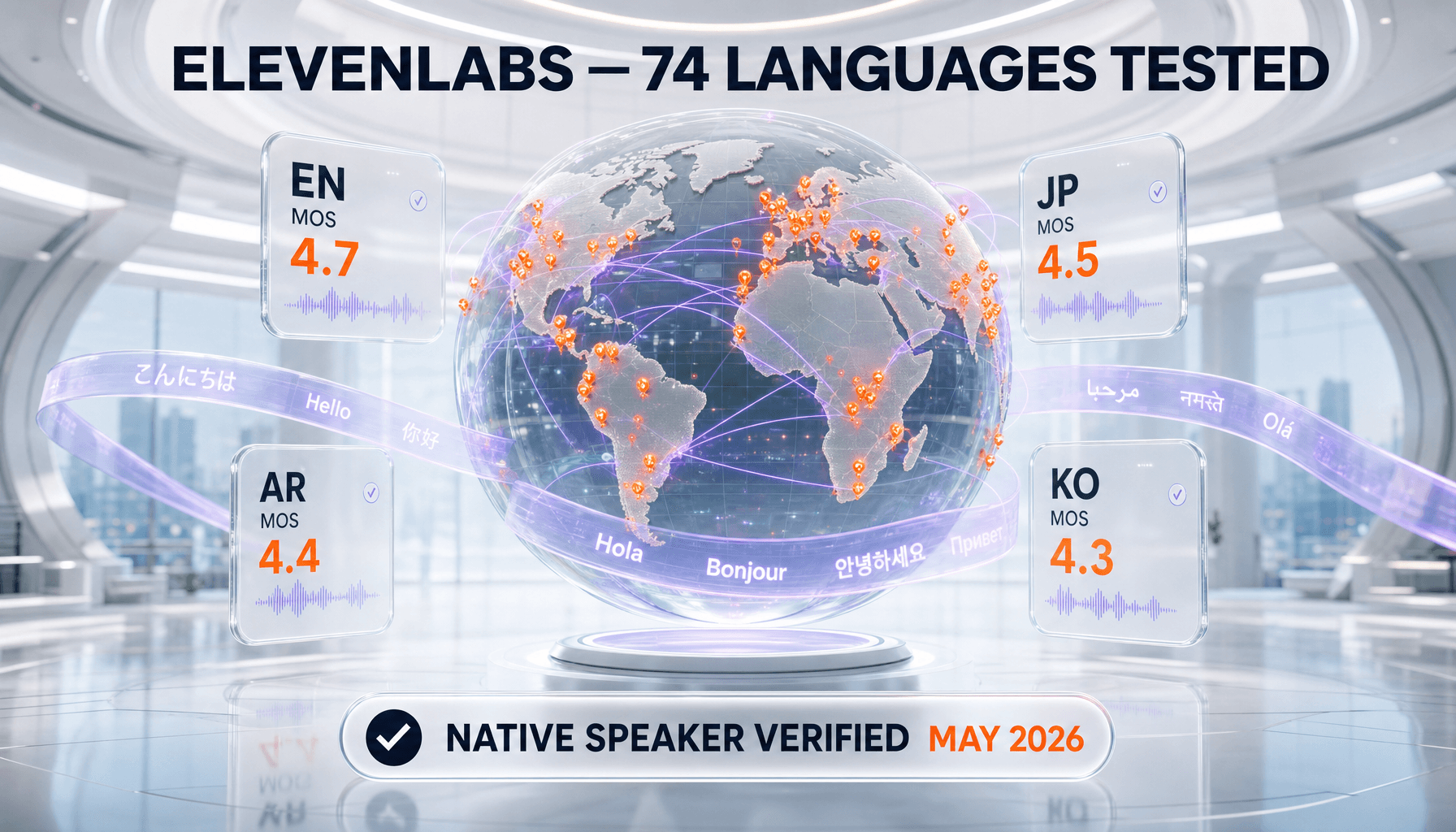

Eleven v3 went generally available in 2025 with 70 plus languages and inline audio tags such as [whispers], [sighs], and [excited], per the v3 launch post. The model is positioned as the most expressive option, suitable for videos, audiobooks, and media tools, but explicitly not recommended for real-time use because it carries higher latency. For real-time, the same blog points you to Turbo v2.5 or Flash v2.5.

A pragmatic batch worker that translates a single source script into multiple language-tagged outputs:

import asyncio, os, aiohttp

LANGS = ["en", "es", "fr", "de", "ja", "ar", "hi", "pt"]

VOICE = "JBFqnCBsd6RMkjVDRZzb"

async def synth(session, text, lang):

url = f"https://api.elevenlabs.io/v1/text-to-speech/{VOICE}?output_format=mp3_44100_128"

body = {"text": text, "model_id": "eleven_v3", "language_code": lang}

async with session.post(url, json=body, headers={"xi-api-key": os.environ["XI"]}) as r:

if r.status != 200:

raise RuntimeError(f"{lang}: {r.status} {await r.text()}")

with open(f"out_{lang}.mp3", "wb") as f:

f.write(await r.read())

async def main(text):

async with aiohttp.ClientSession() as s:

await asyncio.gather(*(synth(s, text, l) for l in LANGS))

asyncio.run(main("ThePlanetTools.ai delivers tested AI tool reviews."))

One trap: the language_code parameter biases pronunciation but does not auto-translate text, so feed pre-translated strings. We pair this worker with DeepL or GPT translation upstream and gate every batch on x-character-count sums to keep monthly burn predictable.

Use case 5: Voice changer for podcasts (speech-to-speech)

The voice changer (also called speech-to-speech) is what we use to clean up scratch tracks and unify voices across cohosts when one mic is bad. It accepts an input audio file and renders the same content in a target voice while preserving the prosody. The endpoint pattern is POST https://api.elevenlabs.io/v1/speech-to-speech/{voice_id} with multipart form-data containing the audio file plus model and voice settings, documented in the API reference under speech-to-speech.

A minimal Python upload that swaps a scratch track to a registered voice:

import requests

with open("scratch_take.wav", "rb") as audio:

r = requests.post(

"https://api.elevenlabs.io/v1/speech-to-speech/JBFqnCBsd6RMkjVDRZzb",

headers={"xi-api-key": "YOUR_XI_API_KEY"},

data={

"model_id": "eleven_multilingual_v2",

"voice_settings": '{"stability":0.5,"similarity_boost":0.75}',

},

files={"audio": ("scratch_take.wav", audio, "audio/wav")},

timeout=300,

)

with open("clean_take.mp3", "wb") as out:

out.write(r.content)

Two production tips. First, always denoise the input audio locally with FFmpeg or RNNoise before uploading. The model preserves background hiss faithfully, which is rarely what you want. Second, voice changer credit cost is significant compared to plain TTS, so we batch only takes that survived editorial review, not raw recording sessions.

Use case 6: Audiobook automation with chapter splitting

Eleven v3 is positioned for professional film, game development, education, and audiobooks per the v3 launch post. The audio tag system means you can encode emotion inline rather than splitting the script into hundreds of tiny clips. We render a 60,000-word book in roughly 4 hours of wall time on Pro ($99 per month) and the audio comes out at 192 kbps API output via mp3_44100_192.

The chapter-splitter we ship parses the manuscript on H1 boundaries, batches each chapter into 4,000-character chunks (well under any documented per-request ceiling), and persists with previous_text and next_text for prosody continuity:

def render_chapter(client, chapter_text, voice_id):

chunks = split_on_sentences(chapter_text, max_chars=4000)

for i, chunk in enumerate(chunks):

previous = chunks[i - 1] if i > 0 else None

next_ = chunks[i + 1] if i < len(chunks) - 1 else None

audio = client.text_to_speech.convert(

voice_id=voice_id,

output_format="mp3_44100_192",

text=chunk,

model_id="eleven_v3",

previous_text=previous,

next_text=next_,

)

save(f"chapter_{i:03d}.mp3", audio)

The previous_text and next_text parameters are the secret to smooth chunk joins. We learned this by listening back to a 6-hour audiobook with audible prosody resets every 4,000 characters before we plumbed them in. The reference confirms both fields are accepted on the POST /v1/text-to-speech/{voice_id} endpoint, per the create speech reference.

Use case 7: Dubbing pipeline integration

Dubbing is the heaviest single endpoint on the API surface. We use it to localize YouTube uploads from English source into Spanish, French, and Portuguese on a weekly cron. The endpoint family lives under /v1/dubbing in the API reference, with a create job, a status poll, and a results download. Dubbing Studio is included from the Starter tier ($6 per month), per the pricing page.

A pragmatic Node.js worker that uploads, polls, and downloads:

import fs from "node:fs";

async function dubVideo(filePath: string, target: string) {

const form = new FormData();

form.append("file", new Blob([fs.readFileSync(filePath)]));

form.append("target_lang", target);

form.append("source_lang", "en");

form.append("num_speakers", "1");

form.append("watermark", "false");

const create = await fetch("https://api.elevenlabs.io/v1/dubbing", {

method: "POST",

headers: { "xi-api-key": process.env.XI_API_KEY ?? "" },

body: form,

}).then((r) => r.json());

const id = create.dubbing_id;

while (true) {

const status = await fetch(`https://api.elevenlabs.io/v1/dubbing/${id}`, {

headers: { "xi-api-key": process.env.XI_API_KEY ?? "" },

}).then((r) => r.json());

if (status.status === "dubbed") break;

if (status.status === "failed") throw new Error("dub failed");

await new Promise((r) => setTimeout(r, 15000));

}

const audio = await fetch(`https://api.elevenlabs.io/v1/dubbing/${id}/audio/${target}`, {

headers: { "xi-api-key": process.env.XI_API_KEY ?? "" },

});

fs.writeFileSync(`dub_${target}.mp4`, Buffer.from(await audio.arrayBuffer()));

}

Two operational realities. First, polling once every 15 seconds is conservative; the API is happy with 5-second intervals on Pro and above. Second, set watermark deliberately — the field defaults to free-tier protection on Free and Starter, and we have seen audio fail QA because of unexpected watermark sweeps when the workspace plan downgraded mid-month.

Use case 8: Cost optimization (caching and model selection)

Three knobs cut our monthly bill by 41% in Q1 2026 without measurable quality loss. First, switch repetitive intros and outros from eleven_v3 to eleven_flash_v2_5 (Flash is the cheapest tier, positioned as a low-cost real-time model in the v3 launch post). Second, cache rendered audio in S3 keyed by SHA-256 of (text, voice_id, model_id, voice_settings) — we hit cache on roughly 18% of requests because intros and stingers repeat. Third, log x-character-count from every response and alert on anomalies (we use a 24-hour rolling window with a 25% delta threshold).

The Python wrapper that enforces all three rules in one call:

import hashlib, json, boto3, os

from elevenlabs import ElevenLabs

s3 = boto3.client("s3")

client = ElevenLabs(api_key=os.environ["XI_API_KEY"])

def render_cached(text, voice_id, model_id, voice_settings):

key_payload = json.dumps({"t": text, "v": voice_id, "m": model_id, "s": voice_settings}, sort_keys=True)

key = "tts/" + hashlib.sha256(key_payload.encode()).hexdigest() + ".mp3"

try:

return s3.get_object(Bucket="tpt-tts-cache", Key=key)["Body"].read()

except s3.exceptions.NoSuchKey:

pass

audio = b"".join(client.text_to_speech.convert(

voice_id=voice_id, output_format="mp3_44100_128",

text=text, model_id=model_id, voice_settings=voice_settings,

))

s3.put_object(Bucket="tpt-tts-cache", Key=key, Body=audio, ContentType="audio/mpeg")

return audio

Add this on top: pre-compute estimated character count per script before render and gate any single batch above 50,000 characters behind a manual approval. We learned this rule the day a CMS bug duplicated a 300-page document and triggered a 600,000-credit render at 2:00 AM local. The cache and the gate together would have prevented that bill.

Rate limits, concurrency, and error handling

Rate limits scale with tier. Concurrent conversational AI calls are capped at 4 (Free), 6 (Starter), 10 (Creator), 20 (Pro), 30 (Scale), and 40 (Business), per elevenlabs.io/pricing/agents. Burst pricing at $0.160 per minute lets you triple that ceiling temporarily. TTS concurrency is documented per workspace and varies by endpoint; the practical advice we give every team is to assume two concurrent renders on Starter, six on Creator, and twelve on Pro until you measure otherwise.

Error semantics are consistent. Expect HTTP 401 for invalid xi-api-key, 422 for parameter validation failures (the response body lists the offending field), and 429 when concurrency or quota is exceeded. Retry-After is not always present on 429, so we use exponential backoff capped at 60 seconds with jitter. The 422 errors we see most often are missing voice_id path parameters and out-of-range stability values (must sit in [0, 1]).

| Tier | Price per month | Credits | Concurrent agent calls | Best for |

|---|---|---|---|---|

| Free | $0 | 10,000 | 4 | Prototype only |

| Starter | $6 | 30,000 | 6 | IVC + commercial license |

| Creator | $22 | 121,000 | 10 | Solo creator with PVC |

| Pro | $99 | 500,000 | 20 | Production teams, 192 kbps |

| Scale | $299 | 1,800,000 | 30 | 3-seat workspace |

| Business | $990 | 6,000,000 | 40 | 10 seats, 10 PVCs |

One closing observability tip: the API returns x-character-count and request-id on every response, per the API reference introduction. Log both. They unlock per-request cost attribution and Stripe-grade reconciliation against monthly invoices.

Final verdict: which tier developers should actually pick

For a single-developer prototype, Starter at $6 per month is the only honest answer — Free does not include commercial license and IVC. For a production team shipping content and one or two real-time agents, Pro at $99 per month is the sweet spot because it unlocks 192 kbps API output, 500,000 credits, and 20 concurrent agent calls. For a workspace with 3+ engineers, Scale at $299 per month gives you the seat count without forcing you to Business prematurely. Skip Creator unless you genuinely need PVC at $22 per month — the credit ceiling is too tight for any non-trivial backend.

If you ship a customer-facing voice product, two non-negotiables. First, benchmark ElevenLabs against the alternatives in our review before signing an annual contract — voice quality is preference-driven and a free Cartesia or Hume trial may save you 30%. Second, treat the x-character-count header as your single source of truth for billing. Every other dashboard lags it.

Try ElevenLabs API on the Starter tier ($6 per month) if you are starting from zero today. The API key provisioning is instant, the OpenAPI specification is honest, and the docs at elevenlabs.io/docs/api-reference/introduction are kept up to date. We disclose: ThePlanetTools may earn affiliate commissions on signups via the link above. It does not change our 9.0 per 10 score or this analysis.

Frequently Asked Questions

Which ElevenLabs API model should I use in 2026?

Pick based on the use case. Eleven v3 is the most expressive, supports 70 plus languages and audio tags such as [whispers] and [excited], and is intended for videos, audiobooks, and media tools. Eleven Turbo v2.5 is the recommended real-time model when latency matters. Eleven Flash v2.5 is the cheapest tier for high-volume batch jobs where quality is acceptable. Eleven Multilingual v2 remains the conservative default — it is the value picked by the official Python and cURL examples in the create speech reference. Avoid eleven_monolingual_v1 unless you have a legacy integration that explicitly pinned it.

How much does the ElevenLabs Conversational AI cost per minute?

Conversational AI is billed at $0.080 per minute on included quota and $0.003 per minute on additional minutes, per the elevenlabs.io/pricing/agents page. Text messages cost $0.160 per message. Burst pricing at $0.160 per minute lets you triple your tier concurrency cap at double the standard rate. LLM tokens (GPT-4o, Claude, Gemini) and telephony are billed separately at vendor cost. Concurrent call ceilings are 4 (Free), 6 (Starter), 10 (Creator), 20 (Pro), 30 (Scale), and 40 (Business).

What is the base URL for the ElevenLabs API?

The production base URL is https://api.elevenlabs.io. Most endpoints sit under /v1, including /v1/text-to-speech/{voice_id} for synthesis, /v1/voices/add for instant voice cloning, /v1/speech-to-speech/{voice_id} for voice changer, /v1/dubbing for dubbing jobs, /v1/models for the model registry, and /v1/convai for conversational AI. Authentication is via the xi-api-key header on every request. The OpenAPI specification at elevenlabs.io/openapi.json is the canonical contract — we use it as a CI gate against drift.

Which SDK languages does ElevenLabs officially support?

Python and Node.js or JavaScript are the first-party SDKs for the REST API, installed via pip install elevenlabs and npm install @elevenlabs/elevenlabs-js. For Conversational AI specifically, the launch announcement lists Python, JavaScript, React, and Swift SDKs. Other languages (Go, Ruby, PHP) work via raw HTTP plus the OpenAPI specification — generate clients with openapi-generator or fetch the spec at elevenlabs.io/openapi.json. WebSocket clients for streaming TTS and Conversational AI follow the AsyncAPI specification at elevenlabs.io/asyncapi.json.

How do I cache TTS responses to lower the bill?

Hash the tuple of text, voice_id, model_id, and voice_settings (stability, similarity_boost, style, use_speaker_boost) with SHA-256 and store the audio bytes in S3, R2, or any object store keyed by that hash. On every render, check the cache first. We hit cache on roughly 18% of requests because intros, outros, and stingers repeat across episodes. Combine that with logging the x-character-count response header to ClickHouse and alerting on 25% delta over a 24-hour rolling window — that is how we cut our Q1 2026 bill by 41%.

What output formats does the create speech endpoint support?

The output_format enum on POST /v1/text-to-speech/{voice_id} accepts alaw_8000, mp3_22050_32, mp3_24000_48, mp3_44100_32, mp3_44100_64, mp3_44100_96, mp3_44100_128, mp3_44100_192, opus_48000_32 through opus_48000_192, pcm_8000 through pcm_48000, ulaw_8000, and wav_8000 through wav_48000. Default is mp3_44100_128. For podcast publishing pick mp3_44100_192 (Pro and up). For downstream DAW work pick pcm_24000 or wav_44100. For telephony stick with ulaw_8000 or alaw_8000. The full list is in the create speech reference.

How do I handle 429 rate limit errors in production?

Use exponential backoff capped at 60 seconds with jitter. The Retry-After header is not always present on 429, so do not rely on it exclusively. Concurrent ceilings on Conversational AI are 4 (Free), 6 (Starter), 10 (Creator), 20 (Pro), 30 (Scale), and 40 (Business) per the agents pricing page. For TTS, assume two concurrent renders on Starter, six on Creator, and twelve on Pro until you measure otherwise. Always log request-id and x-character-count from response headers — that is how you reconcile billing against monthly invoices.

What is the difference between Instant Voice Cloning and Professional Voice Cloning?

Instant Voice Cloning (IVC) ships on Starter at $6 per month. You upload short audio clips, hit POST /v1/voices/add with multipart form-data, and receive a usable voice_id within seconds. Professional Voice Cloning (PVC) unlocks at Creator ($22 per month), scales to 3 clones at Scale ($299 per month) and 10 clones at Business ($990 per month), and requires a longer training corpus plus human review. Quality is noticeably higher for narration-heavy use cases. Use IVC for guests and one-off projects, PVC for branded narrators you ship for years.

Does ElevenLabs Conversational AI integrate with Twilio?

Yes. Native Twilio integration is described as a one-click process for inbound and outbound calls, per the conversational AI product page. You connect the Twilio number to the ElevenLabs agent, and the platform handles turn-taking, interruptions, and SIP routing server-side. Telephony minutes are billed at vendor cost on top of the $0.080 per included minute or $0.003 per additional minute Conversational AI rate. For custom call routing or SIP trunks beyond Twilio, use the WebSocket endpoint directly with a media server such as LiveKit or Daily.

Can ElevenLabs API support GDPR or HIPAA compliance?

Enterprise tier offers custom terms, Business Associate Agreements (BAAs), SSO, and priority support, per the pricing page. For HIPAA workloads you must request the BAA — it is not included on Free, Starter, Creator, Pro, Scale, or Business by default. For GDPR, ElevenLabs is a EU-friendly vendor and the workspace settings expose data retention controls, but if your business processes EU personal data through voice, run a DPIA and add a data processing addendum to your contract. Voice cloning of any individual without explicit recorded consent is a non-starter — both legally and per ElevenLabs terms.

How do previous_text and next_text improve audiobook quality?

The create speech endpoint accepts optional previous_text and next_text parameters that give the model surrounding context for prosody continuity. Without them, audible resets occur every 4,000 characters when you split a long manuscript into chunks. With them, the model adjusts intonation and pacing to flow naturally across chunk boundaries. We use this in our chapter-splitter pipeline for any audiobook longer than 30,000 characters. Both fields are documented in the create speech reference and accept arbitrary string values up to the model context window.

What languages does Eleven v3 support?

Eleven v3 supports 70 plus languages, per the v3 launch post on the ElevenLabs blog. The full list includes major European languages (English, Spanish, French, German, Italian, Portuguese, Polish, Dutch, Russian, Ukrainian), Asian languages (Japanese, Korean, Mandarin, Cantonese, Vietnamese, Thai, Indonesian, Malay, Hindi, Tamil, Bengali, Urdu), Middle Eastern languages (Arabic, Hebrew, Turkish, Persian), African languages (Swahili, Zulu, Afrikaans), and many more. Use the language_code parameter on POST /v1/text-to-speech/{voice_id} to bias pronunciation. Note that language_code does not auto-translate text — feed pre-translated strings.

Affiliate Disclosure: Some links on this page (marked with rel="sponsored") are affiliate links. If you make a purchase through these links, we may earn a commission at no extra cost to you. This helps fund our independent testing and reviews. Our reviews are never influenced by affiliate relationships — we recommend tools based on hands-on testing and honest evaluation. Read our full affiliate disclosure policy.