AMI Labs closed a $1.03 billion seed round on March 10, 2026 — the largest seed funding in European startup history — at a $3.5 billion pre-money valuation. Founded by Turing Award winner Yann LeCun after leaving Meta, AMI Labs builds JEPA-based world models that learn 3D physics and spatial reasoning instead of predicting text tokens. The round was backed by NVIDIA, Jeff Bezos, Samsung, and Toyota, with headquarters in Paris and offices in New York, Montreal, and Singapore.

The Record-Breaking Seed Round

On March 10, 2026, AMI Labs officially closed a $1.03 billion seed round at a $3.5 billion pre-money valuation. This is not just a large funding round — it is the single largest seed investment ever recorded in Europe, and one of the largest globally. To put this in perspective, most AI seed rounds in 2024-2025 ranged from $5 million to $50 million. AMI Labs raised more than 20 times the upper end of that range before shipping a single product.
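The arithmetic behind these comparisons is easy to check. The sketch below uses only figures quoted in this article. It assumes, for illustration, that the entire $1.03 billion is new primary capital priced at the stated $3.5 billion pre-money valuation, with no secondary sales or option-pool adjustments; the announcement does not give those details. Under that assumption, post-money valuation is pre-money plus the raise, and the new investors' combined stake is the raise divided by post-money.

```python
# Figures taken from the article; amounts in millions of US dollars.
AMI_RAISE = 1_030
AMI_PRE_MONEY = 3_500
TYPICAL_SEED_UPPER = 50  # upper end of the 2024-2025 seed range cited above


def multiple(amount: float, reference: float) -> float:
    """How many times larger `amount` is than `reference`."""
    return amount / reference


def post_money(pre_money: float, raise_amount: float) -> float:
    """Post-money valuation = pre-money valuation + new capital raised."""
    return pre_money + raise_amount


def investor_stake(pre_money: float, raise_amount: float) -> float:
    """Fraction of the company bought by the round (assumes primary shares only)."""
    return raise_amount / post_money(pre_money, raise_amount)


if __name__ == "__main__":
    print(f"Multiple of a $50M seed: {multiple(AMI_RAISE, TYPICAL_SEED_UPPER):.1f}x")
    print(f"Post-money valuation: ${post_money(AMI_PRE_MONEY, AMI_RAISE):,}M")
    print(f"Implied new-investor stake: {investor_stake(AMI_PRE_MONEY, AMI_RAISE):.1%}")
```

Run as a script, it reports a 20.6x multiple, a $4,530M post-money valuation, and a combined stake of about 22.7%. The real cap table may differ if any part of the round was structured differently.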

The round attracted a roster of investors that reads like a who's who of the global tech and industrial elite. NVIDIA led the round, with participation from Jeff Bezos (through Bezos Expeditions), Samsung Ventures, and Toyota AI Ventures. This investor profile signals something critical: AMI Labs is not building another chatbot. The presence of Toyota and Samsung, companies that operate in the physical world through robotics, autonomous vehicles, and consumer electronics, suggests these backers see world models as foundational to their future product lines.

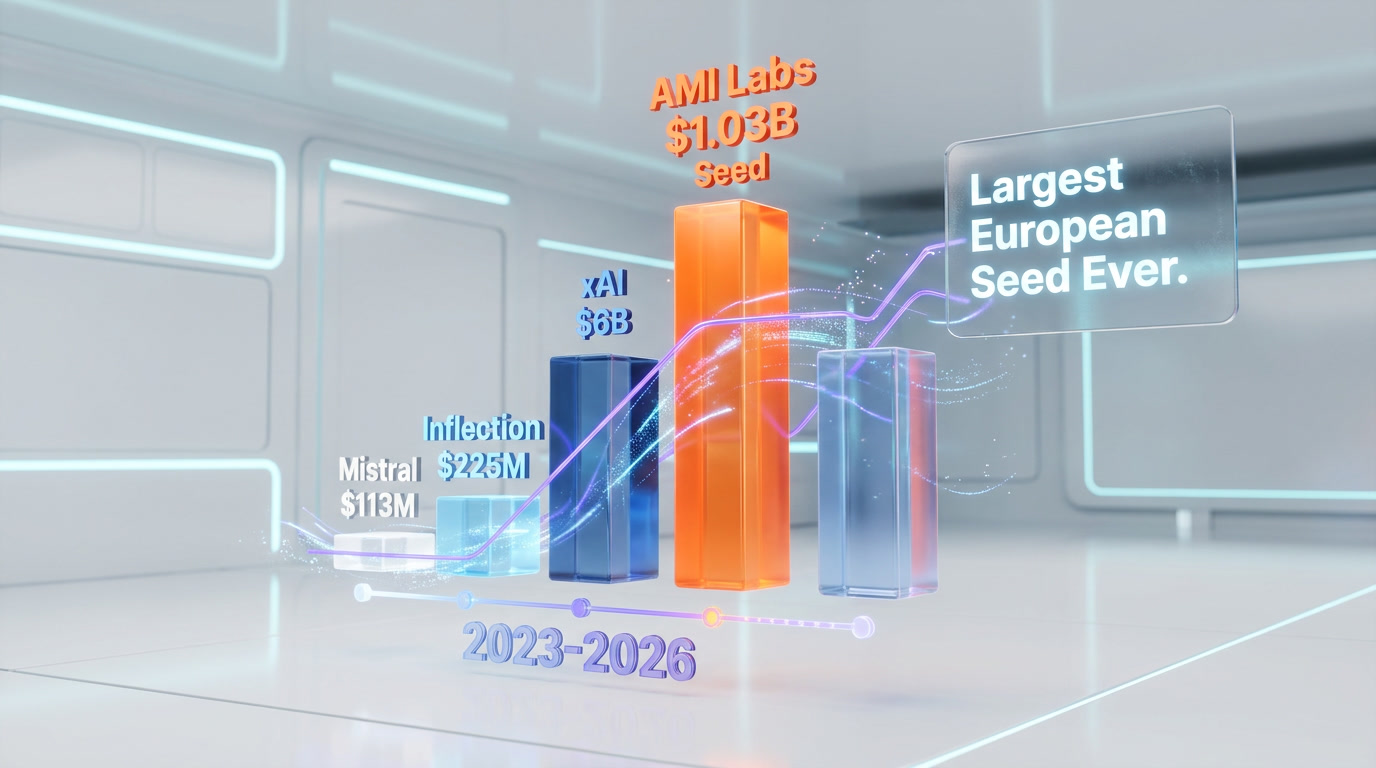

How AMI Labs Compares to Other Record Seed Rounds

| Company | Seed Amount | Valuation | Year | Focus |
|---|---|---|---|---|
| AMI Labs | $1.03B | $3.5B | 2026 | JEPA world models |
| Mistral AI | $113M | $260M | 2023 | Open-source LLMs |
| Inflection AI | $225M | $1B | 2022 | Personal AI assistant |
| Cohere | $40M | $170M | 2022 | Enterprise LLMs |
| Safe Superintelligence (SSI) | $1B | $5B | 2024 | Safe superintelligence |

The only comparable raise is Safe Superintelligence (SSI), co-founded by former OpenAI chief scientist Ilya Sutskever, which secured $1 billion in September 2024. However, SSI was classified by some as a Series A given the founders' track records. AMI Labs' round is explicitly labeled a seed — making the $1.03 billion figure even more remarkable for that stage.

What Are World Models?

World models are AI systems that build internal representations of how the physical world works. Instead of learning patterns in text sequences — as large language models (LLMs) do — world models learn the underlying physics, spatial relationships, and causal structure of three-dimensional environments. A world model can predict what happens when an object falls, how a robot arm should move to grasp a cup, or how a car should navigate an intersection — not by memorizing descriptions of these events, but by understanding the physical laws that govern them.

The concept draws heavily from cognitive science. Human infants develop world models before they learn language. A six-month-old baby understands that a ball hidden behind a screen still exists (object permanence), that unsupported objects fall (gravity), and that two solid objects cannot occupy the same space. These intuitions form a "physics engine" in the brain that is fundamentally different from language processing. LeCun's core thesis is that AI must develop this same kind of intuitive physics understanding to achieve anything resembling general intelligence.

In practical terms, world models could enable AI systems to plan multi-step actions in the real world, simulate outcomes before executing them, and generalize to entirely new situations they have never been explicitly trained on. This is the capability gap that separates today's AI — which excels at language and image generation — from AI that can reliably operate in unpredictable physical environments.

JEPA Explained: The Architecture Behind AMI Labs

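JEPA (Joint Embedding Predictive Architecture) is the technical foundation of AMI Labs' approach. Developed by Yann LeCun and colleagues during his time at Meta's FAIR lab, JEPA represents a fundamentally different training paradigm from the autoregressive, generative objective that powers GPT-4, Claude, Gemini, and other leading LLMs. The difference lies in what the model predicts, not in the network itself: published JEPA models such as I-JEPA and V-JEPA still use transformer (Vision Transformer) encoders.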

How JEPA Works

Traditional LLMs learn by predicting the next token in a sequence. Given "The cat sat on the", they predict "mat." This works remarkably well for language but has fundamental limitations when it comes to understanding the physical world. You cannot learn 3D physics from text descriptions any more than you can learn to ride a bicycle by reading about it.
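To make the next-token objective concrete, here is a deliberately tiny stand-in: a bigram counter that predicts the most frequent word following the last word of a prompt. Real LLMs replace the counts with a transformer conditioned on thousands of preceding tokens and train it with a cross-entropy loss, but the prediction target is the same kind of thing: the next symbol in a text sequence. The three-sentence corpus is made up for this example.

```python
from collections import Counter, defaultdict


def train_bigram(corpus: list[str]) -> dict[str, Counter]:
    """Count which token follows each token across a tiny corpus."""
    follows: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.lower().split()
        for prev, nxt in zip(tokens, tokens[1:]):
            follows[prev][nxt] += 1
    return follows


def predict_next(model: dict[str, Counter], prompt: str) -> str:
    """Return the most frequent continuation of the prompt's last token."""
    last = prompt.lower().split()[-1]
    if last not in model:
        raise KeyError(f"no statistics for token {last!r}")
    return model[last].most_common(1)[0][0]


corpus = [
    "the cat sat on the mat",
    "the dog sat on the mat",
    "the bird sat on the branch",
]
model = train_bigram(corpus)
print(predict_next(model, "The cat sat on the"))  # mat
```

The counts record which words co-occur, and nothing else: no object, surface, or force is represented anywhere in the model.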

JEPA takes a different approach. Instead of predicting raw data (pixels, tokens, audio samples), JEPA predicts abstract representations of data in a learned embedding space. Here is the key distinction:

- LLMs (Generative): Input → Predict next token → Generate output in data space

- JEPA (Predictive): Input → Encode to abstract representation → Predict future representations in embedding space

This means JEPA does not waste computational resources on predicting every pixel in a future video frame or every word in a paragraph. Instead, it learns to predict the essential structure — the abstract features that matter for understanding what is happening and what will happen next. This mirrors how the human brain processes sensory information: we do not consciously track every photon hitting our retinas, but we maintain a rich, abstract model of our surroundings.
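A minimal sketch of the JEPA objective follows, assuming plain linear maps in place of the neural encoders (published JEPA models such as I-JEPA use Vision Transformers) and hypothetical sizes of 64 input values and 8 embedding dimensions. It computes a forward-pass loss only; the optimizer step is omitted. A context encoder embeds the visible input, a target encoder embeds the hidden or future input, and a predictor maps the first embedding to a guess at the second. The error is measured between embeddings, so its size depends on the embedding width rather than on the number of pixels.

```python
import random


def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b)) / len(a)


def random_matrix(rows, cols, rng, scale=0.1):
    """Small random weights standing in for a trained network (hypothetical)."""
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def ema_update(target, online, decay=0.99):
    """Move target-encoder weights slightly toward the context encoder."""
    return [[decay * t + (1 - decay) * o for t, o in zip(t_row, o_row)]
            for t_row, o_row in zip(target, online)]


def jepa_loss(context_x, target_x, enc_ctx, enc_tgt, predictor):
    """Predict the target's embedding from the context's; compare in latent space."""
    z_ctx = matvec(enc_ctx, context_x)  # embed the visible input
    z_tgt = matvec(enc_tgt, target_x)   # embed the hidden input; a real framework wraps this in stop-gradient
    z_pred = matvec(predictor, z_ctx)   # guess z_tgt from z_ctx
    return mse(z_pred, z_tgt)           # error over EMBED values, not PIXELS values


PIXELS, EMBED = 64, 8  # hypothetical sizes
rng = random.Random(0)
enc_ctx = random_matrix(EMBED, PIXELS, rng)
enc_tgt = [row[:] for row in enc_ctx]  # target encoder starts as a copy
predictor = random_matrix(EMBED, EMBED, rng)

context_x = [rng.random() for _ in range(PIXELS)]  # e.g. visible patches of a frame
target_x = [rng.random() for _ in range(PIXELS)]   # e.g. masked or future patches

loss = jepa_loss(context_x, target_x, enc_ctx, enc_tgt, predictor)
print(f"latent loss over {EMBED} dims: {loss:.4f}")

# After each gradient step on enc_ctx and predictor (not shown), refresh the target encoder.
enc_tgt = ema_update(enc_tgt, enc_ctx)
```

In I-JEPA-style training the target encoder is not trained by gradient descent; it tracks the context encoder through an exponential moving average, as `ema_update` does here. That asymmetry, together with the stop-gradient, helps keep the embeddings from collapsing to a constant that would make the loss trivially zero.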

Why This Matters for Robotics and Autonomous Systems

JEPA's abstract prediction capability is precisely what is needed for robotic planning. A robot using a JEPA-based world model could:

- Simulate actions before executing them — predict the consequences of reaching for an object, opening a door, or navigating through a crowd

- Plan multi-step sequences — chain together predictions to accomplish complex goals ("go to the kitchen, open the fridge, pick up the milk, bring it to the table")

- Transfer learning across domains — because the representations are abstract, knowledge about physics in one context transfers naturally to another

- Learn efficiently from video — JEPA can learn world dynamics from unlabeled video footage, potentially requiring far less curated training data than current approaches
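The last point, learning from unlabeled video, works because the clip supplies its own training signal: part of it is hidden, and the model must predict the hidden part's representation from what remains. The sketch below builds such a split in the spirit of the spatiotemporal "tube" masking used by Meta's V-JEPA, where the same spatial block is hidden in every frame so it cannot simply be copied from a neighboring frame. The 4-frame, 8x8 patch grid and the single 4x4 block are hypothetical; real pipelines sample several blocks per clip and pass the index lists to the encoders and predictor.

```python
import random


def tube_mask(frames, height, width, block_h, block_w, rng):
    """Hide the same spatial block of patches in every frame of a clip.

    Returns a frames x height x width grid of booleans (True = hidden).
    """
    top = rng.randrange(height - block_h + 1)
    left = rng.randrange(width - block_w + 1)
    return [[[top <= r < top + block_h and left <= c < left + block_w
              for c in range(width)]
             for r in range(height)]
            for _ in range(frames)]


def split_tokens(mask):
    """Indices (t, r, c) of visible context patches and hidden target patches."""
    context, targets = [], []
    for t, frame in enumerate(mask):
        for r, row in enumerate(frame):
            for c, hidden in enumerate(row):
                (targets if hidden else context).append((t, r, c))
    return context, targets


rng = random.Random(0)
mask = tube_mask(frames=4, height=8, width=8, block_h=4, block_w=4, rng=rng)
context, targets = split_tokens(mask)
print(len(context), len(targets))  # 192 64
```

Any video works as input; no human annotation is involved in choosing `context` or `targets`.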

Why LLMs Will Not Lead to AGI: LeCun's Four Arguments

Yann LeCun has been the most prominent critic of the "scale LLMs to AGI" narrative that dominates much of Silicon Valley. His arguments are not merely philosophical — they are grounded in concrete technical limitations. Here are the four core arguments he has made publicly and that underpin AMI Labs' research direction.

1. LLMs Cannot Learn Physics From Text

Language is a lossy compression of reality. When we describe a glass falling off a table, the text captures the event but not the physics — the parabolic trajectory, the acceleration due to gravity, the way the glass shatters based on its material composition and the surface it hits. No amount of text data contains the information necessary to reconstruct these physical dynamics. LeCun argues that an AI system trained exclusively on text will never develop a genuine understanding of the physical world, regardless of scale.

2. Next-Token Prediction Is the Wrong Objective

Autoregressive generation — predicting the next token — optimizes for statistical coherence, not truth or understanding. LLMs can produce text that reads perfectly but is factually wrong, logically inconsistent, or physically impossible. This is not a bug that will be fixed with more data or larger models; it is an inherent limitation of the training objective. Predicting what word comes next is not the same as understanding what is true or what will happen.

3. LLMs Lack Persistent World State

When you ask an LLM about a scenario, it processes the prompt from scratch each time. There is no persistent internal model of the world that updates as new information arrives. Humans maintain a continuously updated world model — we know where our keys are, what time our meeting starts, and whether it rained yesterday — without needing to re-process all of our life experiences. LLMs have context windows that simulate this, but they do not truly maintain state. LeCun argues this architectural limitation is fundamental, not solvable with longer context windows.
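The distinction can be illustrated with an ordinary data-structure analogy, which is not how LLMs or AMI Labs' models are built: a stateless answerer re-reads the entire event log for every question, while a stateful one folds each observation into a persistent record once and answers from that record. LeCun's argument concerns learned neural states rather than dictionaries; the names `answer_from_scratch` and `WorldState` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

Event = tuple[str, str]  # (object, place where it was last seen)


def answer_from_scratch(history: list[Event], obj: str) -> Optional[str]:
    """Stateless: scan the whole history on every query (cost grows with history)."""
    location = None
    for item, place in history:
        if item == obj:
            location = place
    return location


@dataclass
class WorldState:
    """Stateful: update a persistent record once per observation."""
    locations: dict[str, str] = field(default_factory=dict)

    def observe(self, item: str, place: str) -> None:
        self.locations[item] = place  # newer observations overwrite older ones

    def where(self, item: str) -> Optional[str]:
        return self.locations.get(item)  # lookup without re-reading the history


events = [("keys", "hallway"), ("phone", "desk"), ("keys", "kitchen")]
state = WorldState()
for item, place in events:
    state.observe(item, place)

print(answer_from_scratch(events, "keys"), state.where("keys"))  # kitchen kitchen
```

Both give the same answer; the difference is that the stateless version pays for the full history on every question, which is the cost a longer context window scales up rather than removes.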

4. Planning Requires Simulation, Not Generation

To plan complex actions in the real world, you need to simulate potential futures and evaluate their outcomes. "If I turn the steering wheel left, will the car avoid the obstacle?" This requires a model that can forward-simulate physical dynamics. LLMs generate plausible-sounding action descriptions, but they cannot run internal simulations of physical outcomes. LeCun's JEPA architecture is specifically designed to enable this kind of mental simulation — predicting abstract future states rather than generating text about them.
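Planning by simulation can be sketched as a search over imagined futures: roll each candidate action sequence through a predictor, score the predicted end state, and execute only the best sequence. In the sketch below, a hand-written 2-D motion rule stands in for a learned JEPA predictor (an assumption; a real system would predict embeddings, not coordinates), and exhaustive enumeration stands in for the sampling-based or gradient-based optimizers used in practice, which is only feasible here because there are four actions and a short horizon.

```python
import itertools
import math

# Hypothetical discrete actions and their effect on a 2-D state.
ACTIONS = {"left": (-1.0, 0.0), "right": (1.0, 0.0), "up": (0.0, 1.0), "down": (0.0, -1.0)}


def predict(z, action):
    """Stand-in for a learned predictor: the next state after one action."""
    dx, dy = ACTIONS[action]
    return (z[0] + dx, z[1] + dy)


def simulate(z0, actions):
    """Imagine the full trajectory of an action sequence without acting."""
    trajectory = [z0]
    for a in actions:
        trajectory.append(predict(trajectory[-1], a))
    return trajectory


def score(trajectory, goal, obstacle):
    """Lower is better: final distance to goal, plus a penalty for hitting the obstacle."""
    collision = any(z == obstacle for z in trajectory)
    return math.dist(trajectory[-1], goal) + (100.0 if collision else 0.0)


def plan(z0, goal, obstacle, horizon=4):
    """Simulate every action sequence of length `horizon` and return the best one."""
    candidates = itertools.product(ACTIONS, repeat=horizon)
    return min(candidates, key=lambda seq: score(simulate(z0, seq), goal, obstacle))


best = plan(z0=(0.0, 0.0), goal=(2.0, 0.0), obstacle=(1.0, 0.0))
print(best)  # ('up', 'right', 'right', 'down'): a detour around the obstacle
```

The straight path ("right", "right") reaches the goal faster but is rejected in simulation because it passes through the obstacle; no action is taken until the imagined outcomes have been compared.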

The Team: Who Is Building AMI Labs

AMI Labs has assembled one of the most accomplished founding teams in AI research history. Its research leads have published extensively in top-tier venues, and most have held senior positions at major AI labs or universities.

| Name | Role | Background | Notable Achievement |
|---|---|---|---|
| Yann LeCun | Founder & Chief Scientist | Former VP & Chief AI Scientist at Meta (FAIR) | 2018 Turing Award winner (with Hinton & Bengio). Pioneer of convolutional neural networks (CNNs) trained with backpropagation. |
| LeBrun | CEO | Operational leadership & company building | Manages AMI Labs' global operations, investor relations, and go-to-market strategy across 4 offices. |
| Saining Xie | Chief Science Officer (CSO) | Former researcher at Meta FAIR, NYU faculty | Co-created ResNeXt and ConvNeXt architectures. Leading the JEPA research direction at AMI Labs. |
| Pascale Fung | Senior Research Lead | Professor at HKUST, NLP & multilingual AI expert | Pioneer in multilingual AI, cross-lingual transfer learning. Founding member of multiple AI ethics initiatives. |
| Mike Rabbat | Senior Research Lead | Former research scientist at Meta FAIR | Expert in distributed optimization and decentralized learning. Key contributor to JEPA development at Meta FAIR. |

The team's pedigree is not just academic. LeCun spent over a decade at Meta building FAIR into one of the world's most prolific open-source AI research labs. His decision to leave Meta and start AMI Labs — rather than retiring on his Turing Award laurels — signals deep conviction that the LLM paradigm has hit a ceiling, and that world models represent the next fundamental leap.

The Competition: World Labs and the Emerging World Model Race

AMI Labs is not the only company betting on world models. The most direct competitor is World Labs, founded by Fei-Fei Li — another titan of the AI research world. Li, known as the "godmother of AI" for creating ImageNet (the dataset that ignited the deep learning revolution), launched World Labs in 2024 with $230 million in funding at a $1 billion valuation.

AMI Labs vs World Labs

| Dimension | AMI Labs | World Labs |
|---|---|---|
| Founded | 2025 (launched publicly 2026) | 2024 |
| Funding | $1.03B seed | $230M Series A |
| Valuation | $3.5B pre-money | $1B |
| Founder | Yann LeCun (Turing Award) | Fei-Fei Li (ImageNet creator) |
| Core Architecture | JEPA (joint embedding predictive) | Spatial intelligence / 3D scene understanding |
| Focus | Universal world models (physics, robotics, planning) | 3D spatial AI for mixed reality and robotics |
| Open Source Commitment | Yes: will open-source research | Partial: selective open-source |
| Key Backers | NVIDIA, Bezos, Samsung, Toyota | a16z, Radical Ventures, NEA |
| Offices | Paris, New York, Montreal, Singapore | San Francisco |

The two companies approach world models from different angles. World Labs focuses heavily on 3D spatial intelligence — understanding and generating three-dimensional scenes from images and videos. AMI Labs aims broader, targeting universal world models that learn physics, causality, and planning across domains. Both approaches are valid, and the field is likely large enough for multiple paradigms to coexist. However, AMI Labs' $1.03 billion war chest and LeCun's JEPA framework give it a significant head start in the race to build general-purpose world models.

Beyond World Labs, several other players are active in adjacent spaces. Google DeepMind has published research on world models for game environments (Genie). Meta's FAIR lab — LeCun's former team — continues to work on V-JEPA and related architectures. And several robotics startups (Physical Intelligence, Covariant, Figure AI) are building embodied AI systems that implicitly require world models for manipulation and navigation.

The Open-Source Commitment

AMI Labs has publicly committed to open-sourcing its research. This is significant for several reasons. LeCun was one of the most vocal advocates for open AI research during his time at Meta, where FAIR publicly released LLaMA, Segment Anything, and dozens of other models and papers, under licenses ranging from research-only to fully open. His track record suggests this is not an empty promise.

Open-sourcing world model research could accelerate the entire field in the same way that the release of the transformer architecture paper in 2017 ("Attention Is All You Need") catalyzed the LLM revolution. If AMI Labs publishes its JEPA implementations, training methodologies, and (critically) pre-trained world model weights, it could enable thousands of researchers and companies to build on top of this work.

However, the open-source commitment comes with strategic calculus. By open-sourcing the foundational models, AMI Labs can:

- Build the ecosystem: If JEPA becomes the standard architecture for world models the way transformers became standard for LLMs, AMI Labs is positioned as the de facto leader

- Attract talent: Top researchers want to publish papers and contribute to open science — an open-source commitment is a powerful recruiting tool

- Commoditize the complement: By making the base models free, AMI Labs can monetize higher-level applications (enterprise world model APIs, robotics platforms, autonomous system licensing) while maintaining the research advantage

- Validate through replication: Open-source allows the global research community to verify claims and build confidence in the approach

The first year is dedicated entirely to R&D with no commercial product expected. This is the luxury that $1.03 billion in seed funding buys — the freedom to focus on fundamental research without the pressure of quarterly revenue targets.

Industry Impact: What This Means for AI in 2026 and Beyond

AMI Labs' $1.03 billion seed round is not just a funding headline — it represents a potential inflection point in the AI industry's direction. Here is why it matters beyond the number.

Signal #1: The Post-LLM Era Is Beginning

When a Turing Award winner raises a billion dollars to build something that is explicitly not an LLM, it sends a clear message to the industry. The "just scale the transformer" thesis that dominated 2023-2025 is being challenged at the highest levels. This does not mean LLMs are going away — they will continue to be enormously useful for language tasks. But the assumption that scaling LLMs alone leads to AGI is losing its grip on the field's most respected researchers.

Signal #2: Industrial AI Is the Next Frontier

The investor profile tells the story. NVIDIA provides the compute. Toyota needs world models for autonomous driving. Samsung needs them for consumer robotics. Bezos is betting on the physical world (Amazon robotics, Blue Origin). This is not a consumer chatbot play — it is an infrastructure bet on the next generation of AI that operates in physical reality. If world models work, the applications span manufacturing, logistics, autonomous vehicles, surgical robotics, and space exploration.

Signal #3: Europe's AI Ambitions Are Real

AMI Labs chose Paris as its headquarters, not San Francisco. Together with Mistral AI (also Paris-based, which raised a $113M seed in 2023 and a $640M Series B in 2024), AMI Labs gives Europe at least two multibillion-dollar AI labs founded by world-class researchers, strengthening its claim to be a credible hub for frontier AI research. This challenges the narrative that all cutting-edge AI development happens in the Bay Area.

Signal #4: Compute Allocation Is Shifting

If AMI Labs' approach proves viable, the allocation of GPU compute in the AI industry could shift significantly. Training world models requires different computational patterns than training LLMs: more emphasis on video and 3D data processing, different parallelization strategies, and potentially new hardware architectures. This may be part of why NVIDIA invested, since it has a strong interest in understanding and shaping the compute requirements of whatever comes after LLMs.

Our Take

We have been following LeCun's JEPA research since his original position papers at Meta FAIR. The technical arguments against LLMs as a path to AGI are compelling — and they are not new. What is new is that someone with LeCun's stature has now put $1.03 billion behind the alternative vision.

The biggest risk is timeline. World models are a harder problem than language models. LLMs had the advantage of massive text datasets and a clear training objective (predict the next token). World models need to learn from video, 3D environments, and physical interactions — data that is harder to collect, label, and process at scale. AMI Labs has committed to a full year of pure R&D before any product, which is honest but also means we will not have concrete benchmarks to evaluate until at least 2027.

The biggest opportunity is in the investor lineup. Toyota and Samsung are not speculative venture funds — they are companies with immediate, concrete needs for AI that understands the physical world. If JEPA-based world models can deliver even a fraction of their theoretical promise, the applications in autonomous driving, industrial robotics, and consumer electronics are worth orders of magnitude more than the $1.03 billion invested.

We are cautiously bullish. LeCun is not prone to hype — his public critiques of LLMs have been consistent for years, even when it was unpopular to voice them. The fact that he is now building a company rather than just writing papers suggests he believes the technology is close enough to practical viability to justify commercial development. That signal, more than the dollar figure, is what makes AMI Labs worth watching closely.

What's Next for AMI Labs

The roadmap is deliberately sparse. Year one is pure research — building and training JEPA-based world models, establishing the Paris research lab, and growing the team across four global offices. No product launches, no APIs, no revenue targets.

Key milestones to watch:

- Open-source releases: When AMI Labs publishes its first pre-trained world model weights, the research community will be able to independently evaluate the approach

- Benchmark results: World models do not yet have standardized benchmarks comparable to MMLU or HumanEval for LLMs. How AMI Labs proposes to measure progress will itself be influential

- Industrial partnerships: Toyota and Samsung are investors, but when they announce integration pilots, that signals real-world viability

- Hiring patterns: Watch for hires from robotics labs (Boston Dynamics, Physical Intelligence) and autonomous vehicle teams (Waymo, former Cruise staff); this would confirm the embodied AI direction

- Follow-on funding: If AMI Labs raises a Series A at a significantly higher valuation within 18 months, it means early results are exceeding expectations

Frequently Asked Questions

What is AMI Labs?

AMI Labs is an AI research company founded by Turing Award winner Yann LeCun after leaving Meta. The company builds JEPA-based world models — AI systems that learn 3D physics and spatial reasoning rather than predicting text tokens. Headquartered in Paris with offices in New York, Montreal, and Singapore, AMI Labs raised $1.03 billion in seed funding at a $3.5 billion valuation in March 2026.

What are JEPA world models?

JEPA (Joint Embedding Predictive Architecture) is an AI architecture that learns by predicting abstract representations in an embedding space, rather than generating raw data like pixels or tokens. Unlike LLMs that predict the next word, JEPA is designed to learn the underlying physics and causal structure of three-dimensional environments. LeCun argues this is what physical simulation, robotic planning, and spatial reasoning require, and what LLMs lack.

Why does Yann LeCun say LLMs will not lead to AGI?

LeCun argues four key limitations: (1) LLMs cannot learn physics from text alone, (2) next-token prediction optimizes for statistical coherence rather than truth, (3) LLMs lack persistent world state that updates continuously, and (4) real-world planning requires physical simulation, not text generation. He believes world models trained on video and 3D data are necessary for artificial general intelligence.

Who are AMI Labs' investors?

AMI Labs' $1.03 billion seed round was backed by NVIDIA, Jeff Bezos (Bezos Expeditions), Samsung Ventures, and Toyota AI Ventures. The industrial investor profile — spanning compute (NVIDIA), e-commerce/logistics (Bezos), consumer electronics (Samsung), and automotive (Toyota) — reflects the physical-world applications that world models could enable.

How does AMI Labs compare to World Labs?

Both companies build world models but from different angles. AMI Labs (Yann LeCun, $1.03B, $3.5B valuation) focuses on universal JEPA-based world models for physics and planning. World Labs (Fei-Fei Li, $230M, $1B valuation) focuses on 3D spatial intelligence for mixed reality and robotics. AMI Labs has committed to open-source, while World Labs takes a selective approach. Both companies are well-positioned, and the field is large enough for multiple paradigms.


Is AMI Labs' JEPA architecture better than OpenAI's GPT approach for physical AI?

For physical AI and robotics, LeCun argues that JEPA has a structural advantage over OpenAI's GPT architecture. GPT is trained to predict the next text token, and in his view language alone cannot encode 3D physics. JEPA predicts abstract representations in a learned embedding space, an objective designed to capture spatial relationships, object permanence, and causal physics. For language generation, GPT-4 and its successors remain the standard. For embodied AI (robots, autonomous vehicles, industrial automation), LeCun argues JEPA is the correct architecture, and investors including NVIDIA and Toyota appear to agree.

How does AMI Labs' $1.03B seed compare to Safe Superintelligence (SSI)?

Safe Superintelligence (SSI), co-founded by former OpenAI chief scientist Ilya Sutskever, raised $1 billion in September 2024 at a reported $5 billion valuation, higher than AMI Labs' $3.5 billion pre-money valuation (about $4.53 billion post-money, if the full $1.03 billion is new capital). However, AMI Labs raised slightly more, $1.03 billion, and its round is explicitly labeled a seed, making it the largest true seed round in European history. SSI's round was classified by some as a de facto Series A given the founders' prior track records. Both companies had shipped zero products at the time of their raises.

Who should follow AMI Labs' world model research?

AMI Labs' research is most directly relevant to robotics engineers building manipulation and navigation systems, autonomous vehicle teams at companies like Toyota, AI researchers working on embodied intelligence and physical simulation, and enterprise AI strategists evaluating post-LLM infrastructure bets. Hardware companies — particularly those in consumer electronics (Samsung), industrial automation, and logistics — should track JEPA developments closely. Investors active in deep-tech AI, particularly in Europe and Asia, should also monitor AMI Labs given its $3.5 billion pre-money valuation at seed stage.

What are AMI Labs' current limitations?

As of March 2026, AMI Labs has not shipped any public product, API, or benchmark. The $3.5 billion valuation is entirely based on research promise and Yann LeCun's pedigree — there is no revenue, no open-source release, and no third-party evaluation of JEPA at scale. By contrast, Mistral AI shipped open-source LLMs within months of its $113 million seed, and Cohere had enterprise APIs live at its $40 million seed stage. JEPA-based world models are also computationally intensive and require novel training infrastructure that has not been publicly described. The risk profile is high even by AI startup standards.

Does AMI Labs' JEPA integrate with existing LLM frameworks like Mistral AI or Cohere?

JEPA operates in an abstract embedding space rather than the token prediction space used by Mistral AI, Cohere, GPT-4, and other transformer LLMs, making direct integration non-trivial. The two paradigms solve fundamentally different problems: LLMs process language sequences; JEPA models physical world dynamics. Hybrid architectures — where a JEPA world model handles spatial reasoning and an LLM handles natural language output — are theoretically plausible and likely part of AMI Labs' long-term roadmap, but no public API, SDK, or framework compatibility has been announced as of the seed close.

Why did Yann LeCun leave Meta to found AMI Labs?

Yann LeCun, Turing Award winner and longtime Chief AI Scientist at Meta's FAIR lab, founded AMI Labs to pursue his thesis that LLMs are a dead end for general intelligence. He has publicly argued four core positions: (1) LLMs cannot learn physics from text regardless of scale; (2) next-token prediction is the wrong training objective for understanding reality; (3) LLMs lack a persistent world state that updates as new information arrives; (4) real-world planning requires simulating outcomes, not generating text. AMI Labs is his attempt to build the alternative: JEPA-based world models grounded in cognitive science and intuitive physics.

How does AMI Labs compare to Mistral AI and Inflection AI in funding strategy?

AMI Labs' $1.03 billion seed dwarfs both Mistral AI's $113 million seed (2023, $260 million valuation) and Inflection AI's $225 million seed (2022, $1 billion valuation). AMI Labs raised over 4x Inflection's amount and roughly 9x Mistral's at seed stage, before shipping a single product. Mistral AI pursued an open-source strategy to build developer adoption quickly; Inflection AI targeted consumer personal AI. AMI Labs' capital-intensive strategy reflects a conviction that world model research requires deep-tech, hardware-style investment, not the lean software startup approach that worked for Mistral and Cohere.