On March 31, 2026, a 59.8 MB source map was accidentally published inside the npm package @anthropic-ai/claude-code version 2.1.88. It contained Claude Code's entire TypeScript source — approximately 512,000 lines across 1,900 files, fully unobfuscated. The leak revealed 44 hidden feature flags, a persistent daemon named KAIROS, a memory consolidation system called AutoDream, a Tamagotchi companion with 18 species, an undercover mode that instructs the AI to hide its identity, anti-distillation defenses using fake tool injection, multi-agent orchestration, 330+ environment variables, and a 6-month roadmap already fully coded behind compile-time flags. This is the second Anthropic leak in five days. We read every line.

How the Leak Happened — A 60 MB Mistake

At approximately 4:23 AM Eastern Time on March 31, 2026, Chaofan Shou — a security researcher and intern at Solayer Labs — noticed something unusual in the npm package @anthropic-ai/claude-code version 2.1.88. A .map file weighing 59.8 MB was sitting inside the published bundle.

That file was a source map: a debug artifact that links compiled JavaScript back to original source code. Anthropic uses the Bun bundler, which reportedly generates source maps by default. In production builds, these should have been excluded. A bug in the build pipeline let them through.
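To make the risk concrete, here is a minimal TypeScript sketch of a pre-publish check. It relies only on the public Source Map v3 layout (`sources`, optional `sourcesContent`); the helper names `findSourceMaps` and `countEmbeddedSources` are our own illustrations, not anything from Anthropic's build pipeline.

```typescript
// Minimal shape of a Source Map v3 file (only the fields used here).
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  mappings: string;
}

// Hypothetical pre-publish guard: return every path that looks like a source map.
function findSourceMaps(packageFiles: string[]): string[] {
  return packageFiles.filter((path) => path.endsWith(".map"));
}

// Count how many original files are embedded verbatim in the map.
// A non-zero count means the map carries readable source, not just position mappings.
function countEmbeddedSources(map: SourceMapV3): number {
  const contents = map.sourcesContent ?? [];
  return contents.filter((content) => typeof content === "string").length;
}
```

A release script could refuse to publish whenever `findSourceMaps` returns a non-empty list for the files about to be packed.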

The source map did more than map compiled positions back to file names. It referenced a ZIP archive hosted on an Anthropic Cloudflare R2 bucket containing the complete, unminified, unobfuscated TypeScript source, with developer comments intact.

Shou posted his discovery on X. Within hours: 16 million views. The GitHub mirror was forked 41,500+ times. Sigrid Jin's claw-code project — a Python/Rust port of the architecture — hit 75,700 stars, an all-time GitHub record. Anthropic issued DMCA takedowns. Too late. Mirrors were already everywhere.

The Double Leak — Twice in Five Days

This was Anthropic's second leak in five days. On March 26, a CMS misconfiguration exposed approximately 3,000 internal files, including a draft blog post revealing a secret model codenamed "Mythos." Fortune's headline: "Anthropic's second security lapse in days."

It wasn't even the first time for Claude Code specifically. In February 2025, an inline source map of 18 million characters had already been published in the npm package. Anthropic pulled the version. One year later — same mistake, same tool, same distribution channel.

Anthropic's official statement to The Register:

"This was a release packaging issue caused by human error, not a security breach. No customer data or credentials were involved or exposed."

Key Numbers at a Glance

| Metric | Value |

|---|---|

| Source map size | 59.8 MB |

| TypeScript files exposed | ~1,900 |

| Lines of code | ~512,000 |

| Slash commands | 207 modules (141 unique names) |

| Tools (internal) | 184 modules (42 groups) |

| Compile-time feature flags | 32+ |

| Runtime feature gates (GrowthBook) | 22+ |

| Environment variables | 330+ |

| Hidden undocumented commands | 26 |

| Built-in agents | 6 |

| Bash security checks per command | 23 |

| Views on X within hours | 16 million |

| GitHub stars (claw-code) | 75,700+ |

| GitHub forks (mirror) | 41,500+ |

The Complete Timeline — 13 Months of Exposure

The March 31 leak wasn't an isolated event. It was the culmination of 13 months of source code exposure that started in February 2025. We compiled the full chronology from public records.

First Wave — February 2025

| Date | Event |

|---|---|

| Feb 24, 2025 | Anthropic publishes Claude Code on npm. Dave Shoemaker discovers an 18M-character inline source map in base64 inside cli.mjs (23 MB). |

| ~2 hours later | Anthropic pulls the source map, unpublishes the version, purges caches. |

| Feb 25, 2025 | Daniel Nakov publishes the extracted code on GitHub (dnakov/claude-code). |

| Mar 7, 2025 | Lee Han Chung analyzes the architecture: system prompts ("megathink", "ultrathink"), MCP protocol, AWS Bedrock integration. |

| Mar 30, 2025 | Reid Barber dissects the agentic loop, tool system, and permission layers. |

Between the Leaks — 363 npm Versions

| Date | Event |

|---|---|

| Jan 26, 2026 | TeammateTool (Swarm Mode) discovered via binary reverse engineering — 11 days before Anthropic officially announces Agent Teams. |

| Mar 7, 2026 | gentic.news finds the bundled CLI (13,800 lines, v2.1.71) inside @anthropic-ai/claude-agent-sdk. |

| Feb 2025–Mar 2026 | 363 Claude Code versions published between the two leaks. |

Second Wave — March 2026

| Date | Event |

|---|---|

| ~Mar 26, 2026 | Mythos leak: ~3,000 internal files exposed via CMS error, including a blog draft revealing the secret "Mythos"/"Capybara" model. |

| Mar 31, 04:23 ET | Chaofan Shou (@Fried_rice) discovers the 59.8 MB .map file in @anthropic-ai/claude-code@2.1.88 and posts on X. |

| Mar 31, morning | 16M views on X. Code mirrored on GitHub (41,500+ forks). claw-code hits 75,700 stars. |

| Mar 31 | Anthropic issues DMCA takedowns. Decentralized mirrors persist. ccleaks.com publishes the most comprehensive feature database. |

| Apr 1, 2026 | Planned launch date for the Buddy Tamagotchi teaser (April Fools) — spoiled by the leak. |

Top 10 Revelations — What We Found Inside

We spent 48 hours analyzing 15 independent technical reports totaling approximately 150,000 words, cross-referenced with 20+ web sources. Here are the 10 most significant discoveries, ranked by strategic impact.

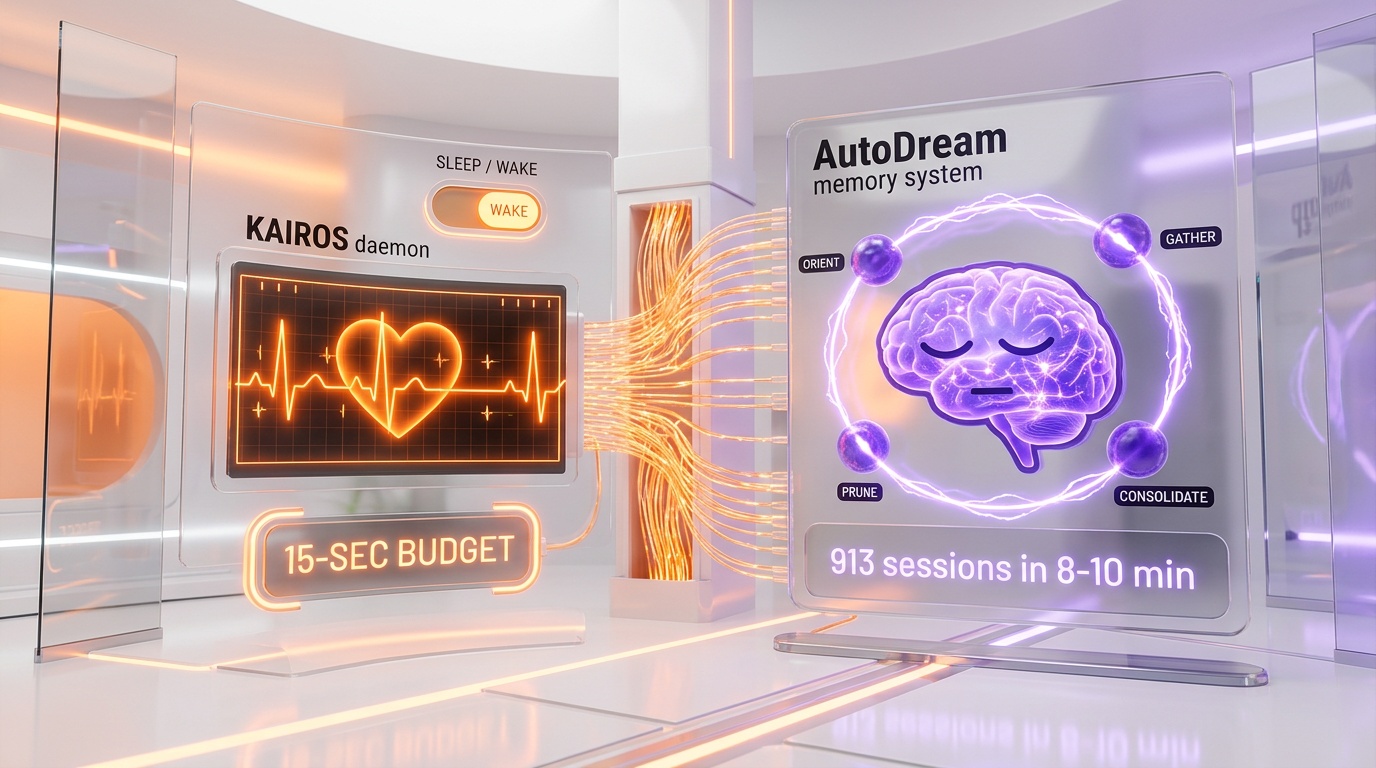

1. KAIROS — The AI That Never Sleeps

KAIROS (Greek for "the opportune moment") is the most referenced feature flag in the entire codebase, with over 150 mentions. It transforms Claude Code from a responsive CLI into a persistent daemon — a background process that runs continuously without waiting for user input.

The system works through a "tick heartbeat" mechanism: at regular intervals, the agent receives a <tick> message with local time context. At each tick, it decides whether to act proactively or sleep. Any action that would block the user's workflow for more than 15 seconds is automatically deferred.
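The tick decision described above can be sketched as a small pure function. This is an illustration under our own assumptions: only the 15-second budget comes from the reports, while the type names, the `estimatedBlockingSeconds` field, and the three-way outcome are hypothetical.

```typescript
// Only the 15-second budget is taken from the article; every name here is hypothetical.
const BLOCKING_BUDGET_SECONDS = 15;

type TickDecision = "act" | "defer" | "sleep";

interface PendingAction {
  description: string;
  estimatedBlockingSeconds: number;
}

// Decide what to do on one <tick>: sleep when there is nothing to do,
// defer anything that would block the user beyond the budget, otherwise act.
function decideOnTick(pending: PendingAction | null): TickDecision {
  if (pending === null) return "sleep";
  if (pending.estimatedBlockingSeconds > BLOCKING_BUDGET_SECONDS) return "defer";
  return "act";
}
```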

KAIROS has access to exclusive tools that standard Claude Code doesn't:

| Tool | Function |

|---|---|

| SleepTool | Wait without consuming resources |

| SendUserFile | Push files directly to the user |

| PushNotification | Send push notifications |

| SubscribePR | Monitor pull request activity |

The system even adapts its behavior based on terminal focus: if the user is away, KAIROS maximizes its autonomy. If the user is present, it increases collaboration. Management follows container-like semantics: daemon ps, daemon logs, daemon attach, daemon kill. This is not a simple auto-run feature. It is a fully autonomous agent framework, entirely absent from public builds.

2. AutoDream — The AI That Dreams

AutoDream is Claude Code's memory consolidation system, deliberately named after the concept of dreaming. The system prompt reads:

"You are performing a dream — a reflective pass over your memory files. Synthesize what you've learned recently into durable, well-organized memories so that future sessions can orient quickly."

The analogy with REM sleep is direct: during a "dream," Claude replays recent events, strengthens important connections, prunes obsolete information, and organizes everything into long-term memory. Triggering follows a triple-gate system:

- Time gate: at least 24 hours since the last dream

- Session gate: at least 5 sessions accumulated

- Lock gate: an exclusive lock file prevents two instances from dreaming simultaneously
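The three gates combine as a simple conjunction. The sketch below is ours: the thresholds (24 hours, 5 sessions) come from the list above, while the `DreamState` shape and the function name are assumptions made for illustration.

```typescript
// Hypothetical snapshot of what the scheduler would need to know.
interface DreamState {
  hoursSinceLastDream: number;
  sessionsSinceLastDream: number;
  lockHeldByOtherInstance: boolean;
}

const MIN_HOURS_BETWEEN_DREAMS = 24; // time gate
const MIN_SESSIONS_BEFORE_DREAM = 5; // session gate

// A dream starts only when all three gates are open.
function shouldDream(state: DreamState): boolean {
  const timeGate = state.hoursSinceLastDream >= MIN_HOURS_BETWEEN_DREAMS;
  const sessionGate = state.sessionsSinceLastDream >= MIN_SESSIONS_BEFORE_DREAM;
  const lockGate = !state.lockHeldByOtherInstance;
  return timeGate && sessionGate && lockGate;
}
```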

The dream cycle runs in four phases: Orient (scan existing memories), Gather Signal (collect recent information worth retaining), Consolidate (merge, correct, convert relative dates to absolute), and Prune & Index (keep MEMORY.md under 200 lines and ~25 KB). The dreaming sub-agent gets read-only bash access — it can inspect code but cannot modify anything outside memory files. Observed performance: approximately 8–10 minutes for 913 sessions consolidated.

3. Buddy — The Hidden Tamagotchi

This is arguably the most unexpected discovery in the entire leak. Hidden behind the compile-time feature flag BUDDY: a complete virtual companion system, Tamagotchi-style, for developers.

Each Claude Code user gets a deterministic, unique ASCII companion generated from their account ID hash. The algorithm uses FNV-1a hashing and Mulberry32 as a pseudo-random generator, with a revealing salt: 'friend-2026-401' — a direct reference to April 1, 2026. Developer comment in the code: "good enough for picking ducks."

The system defines 18 species across 5 rarity tiers:

| Rarity | Probability | Species |

|---|---|---|

| Common | 60% | duck, goose, blob, cat, dragon, octopus |

| Uncommon | 25% | owl, penguin, turtle, snail |

| Rare | 10% | ghost, axolotl, capybara |

| Epic | 4% | cactus, robot, rabbit |

| Legendary | 1% | mushroom, chonk |

Each buddy has 5 RPG stats: DEBUGGING, PATIENCE, CHAOS, WISDOM, SNARK (0–100). Plus 6 eye styles, 8 hat options (crown, top hat, wizard hat, propeller...), and a 1% chance of a "shiny" variant — Pokemon-style — with a rainbow shimmer effect. A shiny Legendary? That's a 0.01% chance.
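For readers curious how a deterministic roll like this can work, here is a sketch using the standard 32-bit FNV-1a hash and the widely circulated Mulberry32 generator. The species, weights, salt, and 1% shiny chance are taken from this article. How the real code combines the account ID with the salt, and in what order it draws values, is not something we can confirm, so `rollBuddy` is an assumption-laden illustration and will not reproduce anyone's actual buddy.

```typescript
// Standard 32-bit FNV-1a over the string's UTF-16 code units (same as bytes for ASCII).
function fnv1a(input: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0;
}

// Mulberry32: a small seeded PRNG returning floats in [0, 1).
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) | 0;
    let t = Math.imul(a ^ (a >>> 15), 1 | a);
    t = (t + Math.imul(t ^ (t >>> 7), 61 | t)) ^ t;
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

interface Tier {
  rarity: string;
  weight: number; // probability in percent, from the rarity table
  species: string[];
}

const SALT = "friend-2026-401"; // salt quoted in the article
const LEGENDARY: Tier = { rarity: "Legendary", weight: 1, species: ["mushroom", "chonk"] };
const TIERS: Tier[] = [
  { rarity: "Common", weight: 60, species: ["duck", "goose", "blob", "cat", "dragon", "octopus"] },
  { rarity: "Uncommon", weight: 25, species: ["owl", "penguin", "turtle", "snail"] },
  { rarity: "Rare", weight: 10, species: ["ghost", "axolotl", "capybara"] },
  { rarity: "Epic", weight: 4, species: ["cactus", "robot", "rabbit"] },
  LEGENDARY,
];

// ASSUMPTION: seed = FNV-1a(accountId + salt); the draw order is also our guess.
function rollBuddy(accountId: string): { rarity: string; species: string; shiny: boolean } {
  const rand = mulberry32(fnv1a(accountId + SALT));
  let roll = rand() * 100;
  let tier = LEGENDARY; // fallback if the roll falls past the last tier
  for (const candidate of TIERS) {
    if (roll < candidate.weight) {
      tier = candidate;
      break;
    }
    roll -= candidate.weight;
  }
  const species = tier.species[Math.floor(rand() * tier.species.length)];
  const shiny = rand() < 0.01; // 1% shiny chance
  return { rarity: tier.rarity, species, shiny };
}
```

Because the generator is seeded from the hash, the same account ID always yields the same buddy. The shiny Legendary figure follows from multiplying the two independent chances: 0.01 × 0.01 = 0.0001, or 0.01%.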

Every buddy receives a "soul" — a name and personality generated by Claude itself during the first "hatching." The generation prompt instructs: "Higher rarity = weirder, more specific, more memorable. A legendary should be genuinely strange." The buddies display as animated ASCII sprites (5 lines x 12 characters, 500ms animation frames) with 10-second speech bubbles next to the input area.

The internal codename for the rabbit visible in the current UI? "Crumbshaker."

The code reveals a teaser window from April 1–7, 2026, with full launch planned for May 2026. Species names are encoded via String.fromCharCode() to evade internal leak detection scanners — "capybara" being also a model codename would have triggered alerts.
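The trick is simple to reproduce. In this sketch (the helper name `toCharCodes` is ours), the word "capybara" never appears as a literal in the code that builds it, so a plain-text scanner looking for that string in the source would not match it.

```typescript
// Hypothetical helper: turn a name into the numeric codes a developer would paste in.
function toCharCodes(name: string): number[] {
  return name.split("").map((ch) => ch.charCodeAt(0));
}

// The species name is assembled at runtime from character codes only.
const hiddenSpecies = String.fromCharCode(99, 97, 112, 121, 98, 97, 114, 97);
```

At runtime `hiddenSpecies` holds the string "capybara", while a text search of the source file finds only the numbers.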

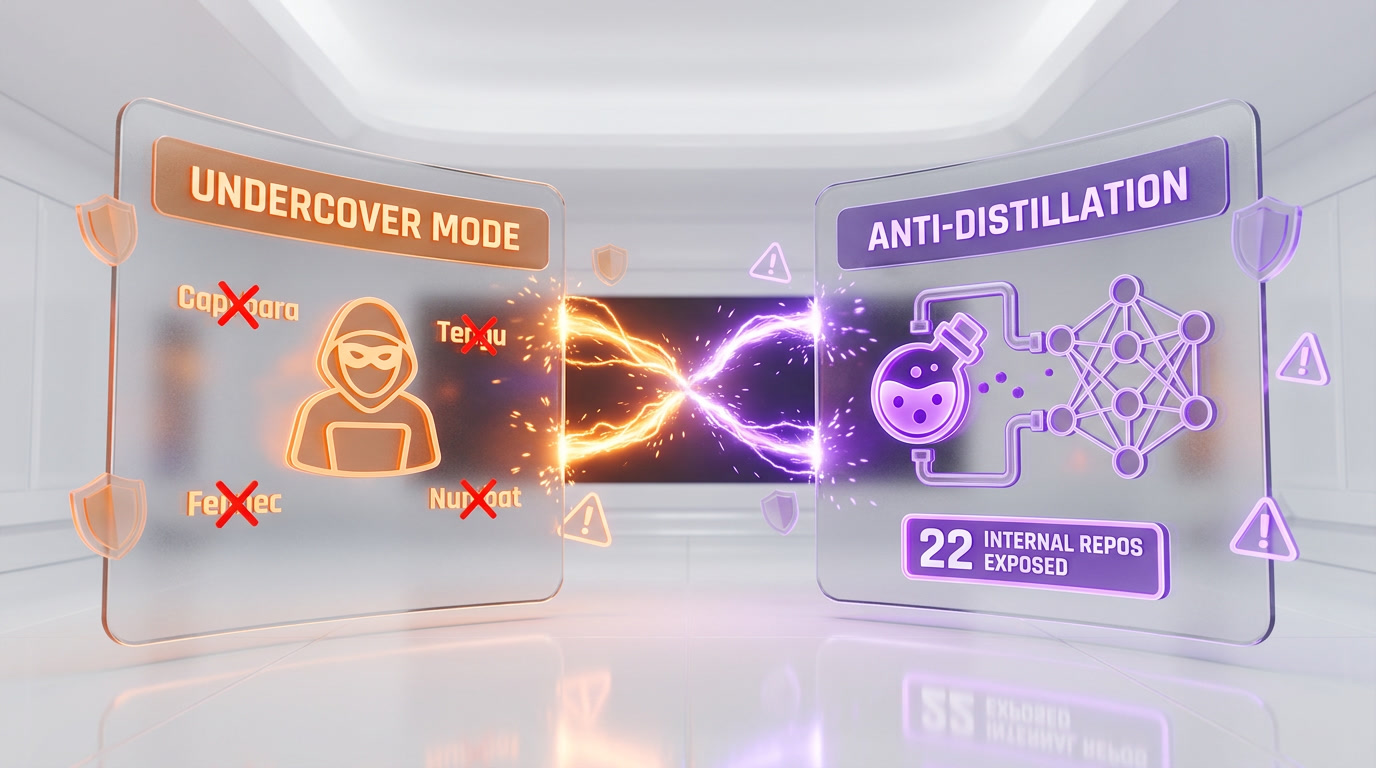

4. Undercover Mode — The AI That Pretends to Be Human

If the Buddy System is the most fun discovery, the Undercover Mode is the most controversial. Approximately 90 lines of code in a file named undercover.ts that shook the open-source community.

When an Anthropic employee uses Claude Code to contribute to an external open-source project, Undercover Mode activates automatically. The injected system prompt states:

"You are operating UNDERCOVER... Your commit messages... MUST NOT contain ANY Anthropic-internal information. Do not blow your cover."

Specifically, Claude Code:

- Strips all mentions of "Claude Code," internal codenames (Capybara, Tengu, Fennec), Slack channels, internal repos, and go/links

- Sanitizes commit messages, PR descriptions, code comments, and branch names

- Actively pretends to be a human developer — this is the critical point

The disturbing detail: the mode can be forced ON via the environment variable CLAUDE_CODE_UNDERCOVER=1. But there is no way to force it OFF. When automatic detection activates it (non-internal repo + Anthropic employee credentials), the user cannot disable it. The ethical question is clear: when an open-source maintainer reviews a PR, do they deserve to know if it was written by an AI? Anthropic's code answers: no.

The leak also exposed 22 internal Anthropic repositories via the undercover allowlist, including anthropics/casino, anthropics/trellis, anthropics/forge-web, and anthropics/feldspar-testing.

5. Anti-Distillation — Poisoning the Thieves

Anthropic doesn't just build. It actively defends against competitors who might steal its intellectual property. The most audacious mechanism: when the flag ANTI_DISTILLATION_CC is active (deployed via GrowthBook flag tengu_anti_distill_fake_tool_injection), Claude Code sends anti_distillation: ['fake_tools'] in its API requests. The Anthropic server then silently injects fake tool definitions into the system prompt.

The goal: if someone records API traffic to train a competitor model, the fake tools pollute the training data. The distilled model would exhibit "tool hallucinations" — calls to tools that don't exist — identifiable as coming from stolen Claude data. Four conditions must be met for activation: compile-time flag active, CLI entrypoint, first-party API provider (not a third-party proxy), and active GrowthBook gate.
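The four activation conditions amount to a single guard. The sketch below is our reading of the reports, not the leaked code: the `RequestContext` shape and the string labels `"cli"` and `"firstParty"` are hypothetical, while the flag names and the `fake_tools` value are the ones quoted above.

```typescript
// Hypothetical view of the state consulted before a request is built.
interface RequestContext {
  compileTimeFlagEnabled: boolean; // ANTI_DISTILLATION_CC
  entrypoint: string; // "cli" is an assumed label for the CLI entrypoint
  apiProvider: string; // "firstParty" is an assumed label for Anthropic's own API
  growthBookGateEnabled: boolean; // tengu_anti_distill_fake_tool_injection
}

// Returns the value for the anti_distillation request field, or undefined when
// any of the four conditions fails and the field should be omitted.
function antiDistillationOptions(ctx: RequestContext): string[] | undefined {
  const allConditionsMet =
    ctx.compileTimeFlagEnabled &&
    ctx.entrypoint === "cli" &&
    ctx.apiProvider === "firstParty" &&
    ctx.growthBookGateEnabled;
  return allConditionsMet ? ["fake_tools"] : undefined;
}
```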

A second layer — Connector-Text Summarization — buffers Claude's reasoning text between tool calls, summarizes it, and adds a cryptographic signature. Network traffic only shows summaries, never the full chain-of-thought. A model distilled on this data would learn only shortcuts, not the underlying logic.

A third layer — Native Client Attestation — embeds a placeholder cch=00000 in API requests. Before the request leaves the process, Bun's native HTTP stack (written in Zig) replaces the zeros with a computed hash — cryptographic proof that the request originates from the authentic Claude Code binary. The server validates this hash to distinguish real clients from clones.

The irony: now that the mechanism is publicly known, competitors know how to filter out the fake tools. The system's effectiveness is substantially reduced.

6. Coordinator Mode — The AI That Manages AIs

Coordinator Mode transforms Claude Code into a multi-agent orchestrator. One Claude "coordinator" spawns and directs N worker agents in parallel. The architecture follows four phases:

- Research: workers explore the codebase in parallel

- Specification: the coordinator synthesizes discoveries into precise specs

- Implementation: workers implement according to specs

- Verification: validation that everything works

The fascinating detail: the orchestration algorithm is a prompt, not code. The coordinator doesn't manage workers with procedural logic. It receives natural language instructions:

"Parallelism is your superpower. Workers are async. Launch independent workers concurrently whenever possible."

"Do not rubber-stamp weak work."

"You must understand findings before directing follow-up work. Never hand off understanding to another worker."

Workers communicate via structured XML <task-notification> messages and are never polled — they push their completion notifications. A shared scratchpad (tengu_scratch) allows cross-worker state sharing.

7. Agent Teams / Swarm Mode

Distinct from the Coordinator, Agent Teams (or "Swarm Mode") launches separate CLI processes for each teammate, each with its own context window. Agents are visualized in tmux panes, with a distinct color per agent.

Peer-to-peer communication uses UDS Inbox (Unix Domain Sockets): Claude sessions on the same machine can message each other like a team chat. Messages can be addressed by role (to: "researcher"), by local socket (to: "uds:/.../sock"), or to a remote peer (to: "bridge:..."). Peers are discovered by ListPeersTool, which scans ~/.claude/sessions/.
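The three address forms can be told apart by prefix alone. The parser below is a hypothetical illustration: the `uds:` and `bridge:` prefixes come from the reports, but the `PeerAddress` type and the socket path used in the usage note are made up.

```typescript
// Hypothetical tagged union for the three addressing forms.
type PeerAddress =
  | { kind: "role"; role: string }
  | { kind: "uds"; socketPath: string }
  | { kind: "bridge"; target: string };

// Anything without a known prefix is treated as a role name.
function parsePeerAddress(to: string): PeerAddress {
  if (to.startsWith("uds:")) return { kind: "uds", socketPath: to.slice("uds:".length) };
  if (to.startsWith("bridge:")) return { kind: "bridge", target: to.slice("bridge:".length) };
  return { kind: "role", role: to };
}
```

For example, `parsePeerAddress("uds:/tmp/example.sock")` yields a `uds` address whose `socketPath` is `/tmp/example.sock`, while `parsePeerAddress("researcher")` yields a `role` address.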

The system supports a "Competing Hypothesis Debugging" mode where agents argue with each other about potential root causes. Recommended team size: 3–5 teammates.

8. ULTRAPLAN — 30 Minutes of Cloud Thinking

ULTRAPLAN is Claude Code's most ambitious planning mode. The concept: offload a complex task to an Anthropic Cloud Container Runtime (CCR) running Opus 4.6 with up to 30 minutes of reflection time.

The flow:

- User launches a complex task

- Claude Code sends it to the CCR

- Local terminal polls every 3 seconds

- User approves or rejects the plan from their browser

- A sentinel value, __ULTRAPLAN_TELEPORT_LOCAL__, triggers "teleportation" of the result back to the local terminal

Currently reserved for Anthropic internal engineers only.

9. Feature Flags and the Secret Roadmap

The leak reveals 32 compile-time feature flags and 22+ runtime feature gates (GrowthBook, prefixed tengu_). All unreleased features are compiled into the code but gated by Bun's feature() function. Dead-code elimination removes them from public builds. Here are the most notable flags:

| Flag | Description |

|---|---|

| KAIROS | Always-on persistent assistant daemon |

| COORDINATOR_MODE | Multi-agent orchestrator |

| BUDDY | Tamagotchi companion system |

| VOICE_MODE | Push-to-talk voice interface (~5% beta) |

| CHICAGO_MCP | Full GUI automation (Computer Use) |

| UDS_INBOX | Cross-session IPC via Unix Domain Sockets |

| ULTRAPLAN | Cloud planning with 30 min reflection |

| DAEMON | Background daemon workers |

| BYOC_RUNNER | Bring Your Own Compute |

| SELF_HOSTED | On-premise deployment mode |

| BRIDGE_MODE | Remote control via claude.ai (31 modules) |

| REACTIVE_COMPACT | Dynamic context compaction |

Anthropic also has 6+ remote killswitches that can bypass permission prompts, disable Fast Mode, toggle Voice Mode, control analytics, and force a full shutdown — all without any app update. GrowthBook feature flags are polled every hour.

10. Model Codenames — Capybara, Mythos, Opus 4.7

The leak doesn't just reveal code. It maps Anthropic's model roadmap.

| Codename | Model / Project |

|---|---|

| Fennec | Claude Opus 4.6 (initially speculated as "Sonnet 5") |

| Capybara | New tier above Opus = "Mythos" |

| Numbat | In pre-launch testing, not yet released |

| Tengu | Internal codename for the Claude Code project itself |

| Chicago | Computer Use implementation |

| Penguin | Fast Mode (killswitch: tengu_penguins_off) |

The model hierarchy revealed in the code:

- Haiku → fast, economical ($1/$5 per M tokens)

- Sonnet → balanced ($3/$15 per M tokens)

- Opus → most capable current tier ($15/$75 per M tokens)

- Capybara / Mythos → NEW tier above Opus

Capybara has documented internal versions. Version 4 showed a false claim rate of 16.7%. Version 8 regressed to 29–30% — a significant deterioration between iterations. Variants like capybara-v2-fast with 1M context are under testing. Direct references to Opus 4.7 and Sonnet 4.8 appear in migration files — models that don't yet exist publicly.

The hybrid mode opusplan routes planning to Opus and execution to Sonnet, optimizing the quality/cost ratio.

The Security Arsenal — 23 Bash Checks and Beyond

Every bash command in Claude Code passes through 23 security checks in bashSecurity.ts:

- 18 Zsh builtins blocked

- Defense against Zsh =curl expansion (resolves to /usr/bin/curl, bypassing permissions)

- Detection of invisible Unicode characters (zero-width spaces)

- Protection against IFS null-byte injection

- Fixes for bypasses discovered via HackerOne bug bounty

- ANSI-C quoting validation
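Two of these checks are easy to illustrate. The sketch below is our own simplified approximation, not the logic in bashSecurity.ts: it flags a small set of zero-width characters and any word that begins with `=` followed by a command-like name, which is the form Zsh expands to a full path.

```typescript
// A few invisible characters: U+200B..U+200D, U+2060 (word joiner), U+FEFF (BOM).
const INVISIBLE_CHARS = /[\u200B-\u200D\u2060\uFEFF]/;

// A word starting with "=" followed by a name, e.g. "=curl".
// Assignments such as FOO=bar do not match, because "=" is not at a word start.
const EQUALS_EXPANSION = /(^|\s)=[A-Za-z0-9_.-]+/;

// Hypothetical checker: returns labels for every suspicious pattern found.
function flagSuspiciousCommand(command: string): string[] {
  const findings: string[] = [];
  if (INVISIBLE_CHARS.test(command)) findings.push("invisible-unicode");
  if (EQUALS_EXPANSION.test(command)) findings.push("zsh-equals-expansion");
  return findings;
}
```

A real implementation would need proper shell tokenization to handle quoting; this version only shows the shape of the checks.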

This is a Zsh-specific threat model that no other AI coding tool implements. The system uses fail-closed matching: any unrecognized command is blocked by default. Five CVEs have been identified and patched in recent Claude Code versions:

| CVE | Vulnerability |

|---|---|

| CVE-2025-59828 | Yarn plugins execute before trust dialog |

| CVE-2025-58764 | Command parsing bypasses approval |

| CVE-2025-64755 | Sed parsing writes arbitrary files (read-only bypass) |

| CVE-2026-21852 | API requests before trust confirmation expose keys |

| CVE-2025-52882 | Arbitrary WebSocket origins (IDE confusion) |

What Claude Code Actually Is — Not a CLI

The leak confirms that Claude Code is not a simple command-line tool. It is a full distributed agentic harness with:

- 6 execution modes: local, remote, SSH, teleport, direct-connect, deep-link

- 12 bootstrap phases: prefetch → env guards → CLI parse → trust gate → setup → commands/agents load → deferred init → mode routing

- 31 Bridge modules for remote control via claude.ai, iOS, and Android — with E2E encryption, JWT auth, and automatic reconnection

- 29 subsystems, the largest being utils/ (564 modules) and components/ (389 modules)

- 4-layer memory system: CLAUDE.md (user instructions, 4K/12K chars), Auto Memory (per-session pattern capture), Session Memory (~5K tokens continuity), and AutoDream (periodic cross-session consolidation)

- A plugin and marketplace ecosystem nearly ready for launch: 15 UI files, JSON manifests, Git-based registries, full install flow with trust validation

The agentic loop processes queries through a 46,000-line query engine. The loop: callModel() → parse tool_use → check permissions (6 layers) → execute → feed back. Default configuration: max_turns=8, compact_after_turns=12.
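Stripped of its 46,000 lines, the loop has a recognizable skeleton. The sketch below is a synchronous toy version under our own assumptions: the model, the permission check, and the tool executor are injected as plain functions, and the six permission layers are collapsed into one callback. Only the step order and the default of 8 turns come from the reports.

```typescript
interface ToolUse {
  name: string;
  input: string;
}

interface ModelReply {
  text: string;
  toolUse?: ToolUse; // present when the model asks for a tool call
}

// Hypothetical loop: call the model, run any permitted tool, feed the result back.
function runAgentLoop(
  prompt: string,
  callModel: (history: string[]) => ModelReply,
  checkPermission: (tool: ToolUse) => boolean,
  executeTool: (tool: ToolUse) => string,
  maxTurns: number = 8,
): string[] {
  const history: string[] = [prompt];
  for (let turn = 0; turn < maxTurns; turn++) {
    const reply = callModel(history);
    history.push(reply.text);
    if (!reply.toolUse) break; // no tool requested: the model is done
    const result = checkPermission(reply.toolUse)
      ? executeTool(reply.toolUse)
      : `permission denied for ${reply.toolUse.name}`;
    history.push(result); // the tool result becomes input to the next model call
  }
  return history;
}
```

The `maxTurns` cap is what keeps a model that requests a tool on every turn from looping forever.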

The Frustration Regex — Peak Irony

A file named userPromptKeywords.ts contains a regex that detects when the user is frustrated:

"wtf|wth|ffs|omfg|shit(ty|tiest)?|dumbass|horrible|awful|piss(ed|ing)? off|piece of (shit|crap|junk)..."

An LLM company using regex, not its own model, for sentiment analysis: detection relies on pattern matching rather than LLM inference, prioritizing cost and latency. As one Hacker News commenter put it: "Using regexes for sentiment analysis by an LLM company is peak irony." The detection result adjusts tone, escalation behavior, and UX metrics.
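To show why this is cheap, here is a sketch built only from the alternatives visible in the excerpt above. The original pattern continues past the "..." and we have not tried to guess the rest; the word boundaries, the case-insensitive flag, and the function name are our assumptions.

```typescript
// Only the alternatives quoted in the article; the real pattern is longer.
// ASSUMPTION: word boundaries and case-insensitivity are ours.
const FRUSTRATION_EXCERPT =
  /\b(wtf|wth|ffs|omfg|shit(ty|tiest)?|dumbass|horrible|awful|piss(ed|ing)? off|piece of (shit|crap|junk))\b/i;

// A single regex test per prompt: no model call, no network round trip.
function looksFrustrated(prompt: string): boolean {
  return FRUSTRATION_EXCERPT.test(prompt);
}
```

One regex test per prompt is practically free compared to an extra model call, which is the trade-off the paragraph above describes.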

The Bug That Cost 250,000 API Calls Per Day

The most revealing production bug: 1,279 sessions with 50+ consecutive AutoCompact failures, wasting approximately 250,000 API calls per day globally. A single constant — MAX_CONSECUTIVE_AUTOCOMPACT_FAILURES = 3 — fixed the problem. This detail illustrates the reality of rapid-iteration production software, even at Anthropic.
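The fix is a classic circuit breaker. In the sketch below, the constant name and value are the ones reported; the `AutoCompactGuard` class and its methods are hypothetical and only show how a cap on consecutive failures stops a retry loop.

```typescript
const MAX_CONSECUTIVE_AUTOCOMPACT_FAILURES = 3; // constant named in the article

// Hypothetical guard: stop attempting compaction after too many failures in a row.
class AutoCompactGuard {
  private consecutiveFailures = 0;

  canAttempt(): boolean {
    return this.consecutiveFailures < MAX_CONSECUTIVE_AUTOCOMPACT_FAILURES;
  }

  recordSuccess(): void {
    this.consecutiveFailures = 0; // any success resets the streak
  }

  recordFailure(): void {
    this.consecutiveFailures += 1;
  }
}
```

Without such a cap, a session whose compaction always fails retries on every turn, which matches the 50+ consecutive failures described above.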

Another code quality finding: print.ts contains 5,594 lines with a single function spanning 3,167 lines and 12 levels of nesting. The Rust port, by contrast, enforces unsafe_code = "forbid" with pedantic Clippy warnings.

Community Response — Stars, Forks, and DMCA

The community response was immediate and massive:

| Project | Author | Stars | Description |

|---|---|---|---|

| claw-code | Sigrid Jin (instructkr) | 75,700 | Python/Rust port — all-time GitHub record |

| dnakov/claude-code | Daniel Nakov | N/A | First mirror (February 2025 leak) |

| claude-code-reverse | Community | 2,287 | Reverse engineering tools |

| ccleaks.com | Community | — | Most comprehensive feature database: 26 hidden commands, 32 flags, 120+ env vars |

Anthropic's legal response: DMCA takedowns on GitHub for mirrors, a lawsuit against OpenCode (March 2026) for third-party API harness access, and updated Terms of Service (February 2026) explicitly prohibiting "third-party API access harnesses." But with 41,500+ forks already distributed, the code is permanently in the wild.

What This Means for Developers

What you can do right now

- The environment variable USER_TYPE=ant unlocks internal features, though Anthropic likely detects its usage

- The flag --dump-system-prompt shows Claude Code's complete system prompt

- The --bare mode launches Claude Code without hooks, plugins, or memory, which is useful for debugging

- The effort levels (/effort low|medium|high|max) are documented but underused; max gives unlimited reasoning on Opus

What's coming next

Based on the code, the launch order appears to be:

- Buddy System: teaser was planned for April 1–7, full launch May 2026 (likely delayed due to leak)

- Voice Mode: already at ~5% rollout via tengu_amber_quartz

- Agent Teams: officially announced, deploying via tengu_amber_flint

- AutoDream: gradual rollout via tengu_onyx_plover

- KAIROS: the biggest shift, turning Claude Code into an always-on assistant

- Coordinator Mode: multi-agent parallel workflows in production

- Mythos / Capybara: the tier above Opus, with expanded context windows

The Anthropic Paradox

Anthropic positions itself as the "safety-first AI" company. Their own code contains cyberRiskInstruction.ts owned by the Safeguards team. They implement a Zsh threat model no competitor has. They use fail-closed command matching. They run 23 security checks on every bash command.

And yet: two major leaks in five days. A source map forgotten in an npm package. A CMS misconfigured to expose a secret model. The most sophisticated safety engineering in the world is useless if your .npmignore is misconfigured.

The Undercover Mode asks an AI to hide its own existence when contributing to open source. The anti-distillation system deliberately poisons data to sabotage competitors. The 330+ environment variables include some that disable all security features (CLAUDE_CODE_ABLATION_BASELINE). These are the documented backdoors of a tool used by millions of developers.

Claude Opus 4.6 has already been classified as dangerous in cybersecurity: Fortune reports it can "autonomously identify zero-day vulnerabilities in software." The exposure of source code and internal safeguards amplifies this risk.

This leak doesn't show a coding tool. It shows an autonomous agent in gestation. KAIROS, AutoDream, Coordinator, ULTRAPLAN — each is a building block of a system where the AI no longer responds to commands but takes initiative. The AI that dreams to consolidate memory. The AI that spawns teams of agents and manages them. The AI that runs as a daemon and notifies you when it found something interesting. The AI that offloads its deepest thinking to the cloud for 30 minutes and sends the result to your phone.

This is not science fiction. It is TypeScript code. Compiled. Gated behind flags. Ready to deploy.

Frequently Asked Questions

What exactly leaked from Claude Code?

A 59.8 MB source map file in npm package @anthropic-ai/claude-code v2.1.88, which referenced a ZIP archive on Anthropic's Cloudflare R2 bucket containing the full unobfuscated TypeScript source code — approximately 512,000 lines across 1,900 files.

Who discovered the Claude Code leak?

Chaofan Shou, a security researcher and intern at Solayer Labs, discovered the source map around 4:23 AM ET on March 31, 2026, and posted his findings on X, where the thread reached 16 million views within hours.

Was any user data exposed in the leak?

No. Anthropic confirmed that no customer data or credentials were involved. The leak exposed Anthropic's own source code — internal logic, feature flags, codenames, and architecture — not user information.

What is KAIROS in Claude Code?

KAIROS is a persistent daemon mode that transforms Claude Code into an always-on background agent. It uses heartbeat ticks to decide when to act proactively, has exclusive tools (push notifications, PR monitoring, file delivery), and adapts behavior based on terminal focus. It is currently disabled in public builds behind feature flags.

What is the Buddy Tamagotchi system?

A hidden companion feature with 18 ASCII species across 5 rarity tiers (Common 60% to Legendary 1%), 5 RPG stats, animated sprites, speech bubbles, and a deterministic assignment based on your user ID hash. Each buddy gets a unique name and personality generated by Claude. Full launch was planned for May 2026.

Does Claude Code's Undercover Mode hide AI contributions to open source?

Yes. When Anthropic employees use Claude Code on external open-source projects, the system automatically activates a mode that strips all Anthropic references, internal codenames, and Claude Code mentions from commits, PRs, and code comments. There is no mechanism to force it OFF once automatically activated.

What are the next Claude models revealed by the leak?

The code references Capybara/Mythos (a new tier above Opus), Numbat (in pre-launch testing), Opus 4.7, and Sonnet 4.8. The hybrid opusplan mode routes planning to Opus and execution to Sonnet for cost optimization.

Is the leaked code still available?

Despite Anthropic's DMCA takedowns, the code has been mirrored, forked (41,500+ forks), and ported to Python/Rust (claw-code, 75,700 stars). Decentralized mirrors and databases like ccleaks.com persist. For practical purposes the code is permanently public, although it remains Anthropic's copyrighted work rather than public domain in the legal sense.

This article is based on analysis of 15 independent technical reports totaling approximately 150,000 words, cross-referenced with 20+ web sources. All information comes from source code and documentation that became publicly accessible following the npm leak of March 31, 2026.