Microsoft released three in-house AI foundation models on April 2, 2026: MAI-Transcribe-1 (speech-to-text, 25 languages, 3.8% average WER, ahead of OpenAI Whisper-large-v3 on all 25 languages), MAI-Voice-1 (text-to-speech, 60 seconds of audio in under 1 second), and MAI-Image-2 (top 3 on Arena.ai). All three were built by Mustafa Suleyman's MAI Superintelligence team. Transcription pricing starts at $0.36 per hour of audio.

Why This Matters: Microsoft Finally Ships Its Own AI Stack

On April 2, 2026, Microsoft AI dropped three foundation models that signal a seismic shift in the AI industry. After investing over $13 billion in OpenAI and spending years as the company's primary distribution channel, Microsoft is now building competing models — under the same roof. The three models, MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2, are available immediately through Microsoft Foundry and the new MAI Playground.

We have been tracking this story since Mustafa Suleyman formed the MAI Superintelligence team in November 2025. Roughly five months later, the team has shipped production-ready models that directly compete with OpenAI Whisper, ElevenLabs, and DALL-E. Here is everything you need to know.

The Three MAI Models: Specs, Benchmarks, and Pricing

| Model | Type | Key Benchmark | Pricing | Availability |
|---|---|---|---|---|
| MAI-Transcribe-1 | Speech-to-Text | 3.8% avg WER (FLEURS), #1 across 25 languages | $0.36 per hour of audio | Public preview, Foundry |
| MAI-Voice-1 | Text-to-Speech | 60s audio in <1s on single GPU | $22 per million characters | Public preview, Foundry |
| MAI-Image-2 | Text-to-Image | Top 3 on Arena.ai leaderboard | $5 per million input tokens, $33 per million output tokens | Public preview, Foundry + Bing + PowerPoint |

Best for: Enterprise developers building multimodal AI applications, companies looking for Azure-native alternatives to OpenAI Whisper/DALL-E/ElevenLabs, and organizations that need production-grade speech and image generation at competitive pricing.
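
As a rough budgeting aid, the list prices in the table above can be turned into simple estimates. This is a minimal sketch, assuming usage is billed linearly at the quoted preview rates with no minimums, rounding, or volume discounts (the article does not state billing granularity). The function names are illustrative and not part of any Microsoft SDK.

```python
# Preview list prices as quoted in this article; they may change.
TRANSCRIBE_USD_PER_AUDIO_HOUR = 0.36
VOICE_USD_PER_MILLION_CHARS = 22.0
IMAGE_USD_PER_MILLION_INPUT_TOKENS = 5.0
IMAGE_USD_PER_MILLION_OUTPUT_TOKENS = 33.0


def transcription_cost(audio_hours: float) -> float:
    """Estimated MAI-Transcribe-1 cost in USD (assumes linear billing)."""
    return audio_hours * TRANSCRIBE_USD_PER_AUDIO_HOUR


def voice_cost(characters: int) -> float:
    """Estimated MAI-Voice-1 cost in USD for a given character count."""
    return characters / 1_000_000 * VOICE_USD_PER_MILLION_CHARS


def image_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated MAI-Image-2 cost in USD for given token counts."""
    return (
        input_tokens * IMAGE_USD_PER_MILLION_INPUT_TOKENS
        + output_tokens * IMAGE_USD_PER_MILLION_OUTPUT_TOKENS
    ) / 1_000_000


if __name__ == "__main__":
    print(f"1,000 hours of audio: ${transcription_cost(1_000):,.2f}")
    print(f"500,000 characters of speech: ${voice_cost(500_000):,.2f}")
```

Under those assumptions, 1,000 hours of call recordings comes to about $360 and a 500,000-character script to about $11.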

MAI-Transcribe-1: The OpenAI Whisper Killer

MAI-Transcribe-1 is the headline act. This speech-to-text model claims the lowest Word Error Rate (WER) across 25 languages on the FLEURS benchmark — the industry-standard multilingual test — averaging just 3.8%. According to Microsoft's published benchmarks:

- Beats OpenAI Whisper-large-v3 on all 25 languages

- Beats Google Gemini 3.1 Flash on 22 of 25 languages

- Beats ElevenLabs Scribe v2 on 15 of 25 languages

- Beats OpenAI GPT-Transcribe on 15 of 25 languages
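
For readers unfamiliar with the metric behind these comparisons, Word Error Rate is the number of word substitutions, deletions, and insertions needed to turn the model's transcript into the reference transcript, divided by the reference word count. The sketch below is a generic textbook implementation, not Microsoft's evaluation code. It assumes whitespace tokenization and no text normalization, whereas published benchmarks typically normalize case and punctuation and aggregate errors over a whole corpus.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # One dropped word out of four reference words.
    print(word_error_rate("the quick brown fox", "the brown fox"))
```

A 3.8% average WER therefore corresponds to roughly 4 word-level errors per 100 reference words, averaged over the tested languages.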

The speed gains are also notable. Microsoft says MAI-Transcribe-1 runs batch transcription 2.5x faster than its existing Azure Fast offering. It accepts MP3, WAV, and FLAC files up to 200MB, and Microsoft positions it as reliable across accents and real-world audio conditions.
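
Because the service only accepts certain formats and sizes, a batch pipeline can reject unsuitable files before uploading them. The following is a hypothetical client-side pre-check, not part of any Microsoft SDK. It assumes "200MB" means 200,000,000 bytes (the stricter reading) and checks only the file extension, not the actual encoding.

```python
from pathlib import Path

# Limits as quoted in the article. Interpreting "200MB" as decimal
# megabytes is an assumption chosen to err on the strict side.
ALLOWED_EXTENSIONS = {".mp3", ".wav", ".flac"}
MAX_BYTES = 200 * 1000 * 1000


def check_audio_file(name: str, size_bytes: int) -> list[str]:
    """Return a list of problems; an empty list means both checks pass."""
    problems = []
    ext = Path(name).suffix.lower()
    if ext not in ALLOWED_EXTENSIONS:
        problems.append(f"unsupported format: {ext or '(none)'}")
    if size_bytes > MAX_BYTES:
        problems.append(f"file too large: {size_bytes} bytes > {MAX_BYTES}")
    return problems


if __name__ == "__main__":
    for name, size in [("call.WAV", 1_000), ("memo.ogg", 1_000), ("big.mp3", 250_000_000)]:
        print(name, check_audio_file(name, size) or "ok")
```

Files that fail the size check would need to be split or re-encoded before submission.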

At $0.36 per hour of audio, Microsoft claims it delivers competitive accuracy at nearly half the GPU cost compared to leading alternatives. For enterprise customers already embedded in the Azure ecosystem, this is a no-brainer migration path from third-party transcription services.

Enterprise Use Cases

- IVR systems and call-center transcription at scale

- Real-time live captioning for events and meetings

- Video subtitling and media archiving workflows

- Educational platform transcription

- Business intelligence extraction from spoken data

MAI-Voice-1: 60 Seconds of Audio in One Second

MAI-Voice-1 is a high-fidelity speech generation model that Microsoft describes as "one of the most efficient speech systems available today." The headline number: it generates 60 seconds of expressive audio in under one second on a single GPU. That is at least 60 times faster than real time (a real-time factor below roughly 0.017), and it opens the door to truly real-time conversational AI.
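
The arithmetic behind that headline number is simple to check. In the sketch below, the only input from the article is "60 seconds of audio in at most 1 second". The function names are illustrative, and the latency estimate assumes generation time scales linearly with audio length, which the article does not confirm.

```python
def speedup_over_real_time(audio_seconds: float, wall_seconds: float) -> float:
    """How many seconds of audio are produced per second of compute."""
    if audio_seconds <= 0 or wall_seconds <= 0:
        raise ValueError("durations must be positive")
    return audio_seconds / wall_seconds


def real_time_factor(audio_seconds: float, wall_seconds: float) -> float:
    """Conventional RTF: compute time divided by audio duration (lower is faster)."""
    return 1.0 / speedup_over_real_time(audio_seconds, wall_seconds)


def estimated_latency(reply_audio_seconds: float, rtf: float) -> float:
    """Time to synthesize a reply, assuming linear scaling (an assumption)."""
    return reply_audio_seconds * rtf


if __name__ == "__main__":
    rtf = real_time_factor(60, 1)
    print(f"speedup: {speedup_over_real_time(60, 1):.0f}x, RTF: {rtf:.4f}")
    print(f"5-second reply: about {estimated_latency(5, rtf) * 1000:.0f} ms")
```

Since the one-second figure is an upper bound, these numbers are worst-case values for the quoted benchmark.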

What makes MAI-Voice-1 particularly interesting for developers is the Personal Voice capability. Users can clone a custom voice from just 10 seconds of audio. Custom voice creation does require Microsoft's responsible AI approval process, which adds friction but also signals that Microsoft is serious about preventing deepfake abuse.

At $22 per million characters, MAI-Voice-1 is priced competitively against ElevenLabs and other text-to-speech providers. It already powers Copilot's Audio Expressions and podcast features internally, and developers get access to a gallery of over 700 pre-built voices through Azure Speech.

Key Applications

- Conversational AI agents with natural, emotional speech

- Podcast and audiobook production at scale

- Customer interaction systems with brand-specific voices

- Training platform narration and e-learning content

- Real-time caption and voiceover generation

MAI-Image-2: Top 3 on Arena.ai, Now in Bing and PowerPoint

MAI-Image-2 is not new — it has been powering Microsoft products internally. But this release marks its first broad commercial availability through Foundry's API. The model debuted as a top-three model family on the Arena.ai image generation leaderboard, putting it in direct competition with Midjourney, DALL-E 3, and Stable Diffusion XL.

Microsoft highlights three core strengths: photorealistic generation, accurate in-image text rendering (historically a weakness for image models), and complex layouts with cinematic visuals. It delivers at least 2x faster generation times on Foundry and Copilot compared to its predecessor.

WPP, the global marketing conglomerate, is already building production workflows on MAI-Image-2. Rob Reilly, WPP's Global Chief Creative Officer, called it "a genuine game-changer" that "deeply respects the craft involved in generating" production-ready visuals.

| Feature | MAI-Image-2 | DALL-E 3 (OpenAI) | Midjourney v6 |
|---|---|---|---|
| Arena.ai Ranking | Top 3 | Top 10 | Top 5 |
| Text-in-Image | Strong | Moderate | Weak |
| API Access | Foundry | OpenAI API | Discord / Web |
| Enterprise Integration | Azure, Copilot, Bing, PowerPoint | ChatGPT, Azure (via OpenAI) | None native |
| Pricing (Output) | $33 per million output tokens | $0.04-0.12/image | $10-60/mo subscription |
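
The pricing row mixes units (per token versus per image), so a direct comparison depends on how many output tokens one MAI-Image-2 image consumes, a figure the article does not give. As a hedged illustration, the sketch below computes the break-even token count at which the quoted token price would equal a flat per-image price. It ignores input-token charges, and the function name is made up for this example.

```python
MAI_IMAGE_USD_PER_MILLION_OUTPUT_TOKENS = 33.0  # from the table above


def break_even_output_tokens(per_image_price_usd: float) -> float:
    """Output tokens per image at which token pricing matches a per-image price."""
    return per_image_price_usd / MAI_IMAGE_USD_PER_MILLION_OUTPUT_TOKENS * 1_000_000


if __name__ == "__main__":
    for price in (0.04, 0.12):  # the per-image range quoted for DALL-E 3
        tokens = break_even_output_tokens(price)
        print(f"${price:.2f}/image matches about {tokens:,.0f} output tokens")
```

In other words, MAI-Image-2 would be cheaper per image than the quoted DALL-E 3 range only if an image uses fewer than roughly 1,200 to 3,600 output tokens, depending on which end of the range applies.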

The MAI Superintelligence Team: Who Built This

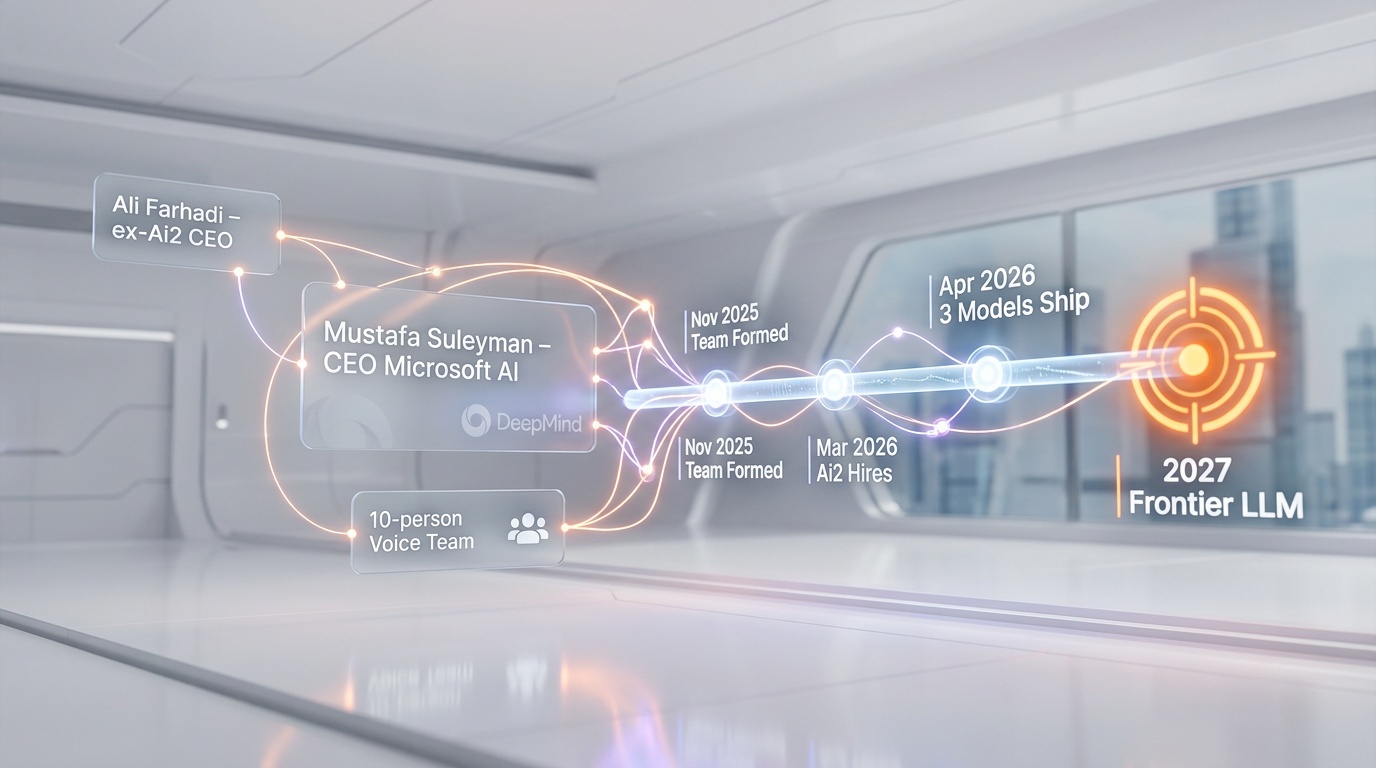

These models come from the MAI Superintelligence team, an AI research group formed in November 2025 under the leadership of Mustafa Suleyman, CEO of Microsoft AI and co-founder of DeepMind. In a March 2026 internal memo first reported by Business Insider, Suleyman wrote that he intended to "focus all of his energy on superintelligence" and deliver world-class models for Microsoft over the next five years.

The team operates with a lean philosophy. The audio model (MAI-Voice-1) was built by just 10 people — a deliberate choice reflecting Suleyman's belief in smaller, more empowered engineering teams over large headcounts.

In March 2026, Microsoft made a significant talent acquisition: Ali Farhadi, the former CEO of the Allen Institute for AI (Ai2), joined the MAI Superintelligence team along with researchers Hanna Hajishirzi, Ranjay Krishna, and Sophie Lebrecht (Ai2's former COO). All retain faculty positions at the University of Washington's Allen School of Computer Science.

Suleyman himself acknowledges these initial models are what he calls "mid-class" — optimized for cost and speed rather than raw frontier capability. The team's stated goal is to produce frontier-class language models by 2027, which would put Microsoft in direct competition with OpenAI's GPT line, Google's Gemini, and Anthropic's Claude on the most demanding AI benchmarks.

The $13 Billion Elephant in the Room: Microsoft vs. OpenAI

This launch cannot be understood without the broader context of Microsoft's complex relationship with OpenAI. Here are the key numbers:

- $13 billion+ invested by Microsoft in OpenAI since 2019

- 27% equity stake in OpenAI Group PBC (valued at ~$135 billion)

- $250 billion in Azure cloud commitments from OpenAI

- $7.6 billion in revenue Microsoft earned from OpenAI in Q2 FY2026 alone

- 45% of Microsoft's $625 billion revenue backlog tied to OpenAI

The relationship is symbiotic but increasingly strained. In March 2026, OpenAI circulated an investor document (ahead of a potential IPO at a $730 billion valuation) that explicitly flagged its dependence on Microsoft as a material business risk. OpenAI wrote: "If Microsoft modifies or terminates its commercial partnership with us, or if we are unable to successfully diversify our business partners, our business, prospects, operating results and financial condition could be adversely affected."

Meanwhile, Microsoft's September 2025 renegotiation of the partnership agreement fundamentally changed the calculus. The revised memorandum of understanding gave Microsoft three critical things:

- Licensing rights to everything OpenAI builds through 2032

- $250 billion in new Azure cloud commitments

- The freedom to build competing models — a right that was contractually restricted before

The MAI models are the first tangible result of that third clause. Microsoft can now simultaneously profit from distributing OpenAI's models through Azure AND build its own alternatives. It is hedging the biggest bet in AI history.

Our Analysis: What This Really Means

We see four critical implications from this launch:

1. Microsoft Started With Multimodal, Not Language

The deliberate choice to ship speech and image models first — rather than a GPT competitor — is strategic. These models fill immediate product needs (Copilot, Bing, PowerPoint, Azure Speech) without directly threatening the OpenAI partnership on the most visible front: large language models. It is a flanking maneuver, not a frontal assault.

2. Pricing Pressure on the Entire Market

At $0.36 per hour for transcription, Microsoft is undercutting multiple competitors while leveraging Azure infrastructure at scale. When you own the cloud, the economics of AI inference change dramatically. Expect ElevenLabs, AssemblyAI, and Deepgram to feel the squeeze.

3. The Talent War Is Heating Up

Hiring Ali Farhadi and three other Ai2 researchers in a single move is aggressive. The MAI Superintelligence team is clearly building toward frontier LLMs, and the talent acquisitions signal that Suleyman is not waiting. The 2027 timeline for frontier language models is ambitious but credible given the caliber of researchers now on the team.

4. OpenAI's IPO Just Got More Complicated

OpenAI is preparing for an IPO at a $730 billion valuation while its largest partner and investor is building competing models. The investor document that flagged Microsoft dependency as a risk was prescient — these MAI models prove the risk is not hypothetical. If Microsoft's frontier LLMs arrive in 2027, OpenAI's Azure exclusivity becomes less valuable, and the partnership dynamics shift from interdependence to competition.

How to Access the MAI Models Today

All three models are available in public preview through:

- Microsoft Foundry — Full API access for enterprise developers

- MAI Playground (playground.microsoft.ai) — Interactive testing environment (US only at launch)

- Azure Speech — MAI-Transcribe-1 and MAI-Voice-1 integrated into existing Azure Speech services

- Product integration — MAI-Image-2 rolling out to Bing Image Creator and PowerPoint

Developers already using Azure Speech can access MAI-Transcribe-1 through the existing SDK. The voice gallery includes 700+ pre-built voices alongside the new custom voice cloning feature.

Timeline: Microsoft's Path to AI Independence

| Date | Event |
|---|---|
| 2019-2023 | Microsoft invests $13B+ in OpenAI |
| September 2025 | Partnership renegotiation: Microsoft gains right to build competing models |
| October 2025 | OpenAI restructures as PBC; Microsoft holds 27% ($135B) stake |
| November 2025 | Mustafa Suleyman forms MAI Superintelligence team |
| March 2026 | Ali Farhadi and Ai2 researchers hired; OpenAI flags Microsoft dependency as IPO risk |
| April 2, 2026 | MAI-Transcribe-1, MAI-Voice-1, MAI-Image-2 released on Foundry |
| 2027 (projected) | Frontier-class language models from MAI Superintelligence team |

The Bottom Line

Microsoft is playing both sides of the AI revolution, and doing it in plain sight. The MAI models are technically solid (beating Whisper on all 25 languages is no small feat), strategically timed (right as OpenAI prepares for IPO), and priced to win enterprise adoption. We will be watching the 2027 frontier LLM deadline closely. If Suleyman's team delivers, the Microsoft-OpenAI dynamic will be fundamentally reshaped.

For developers and enterprises currently building on Azure, the immediate takeaway is clear: you now have first-party Microsoft alternatives for speech-to-text, text-to-speech, and image generation that are competitive with — or better than — the OpenAI equivalents you have been using.

Frequently Asked Questions

What are the three new Microsoft MAI models released in April 2026?

Microsoft released MAI-Transcribe-1 (speech-to-text supporting 25 languages), MAI-Voice-1 (text-to-speech generating 60 seconds of audio in under 1 second), and MAI-Image-2 (text-to-image ranked top 3 on Arena.ai). All three are available through Microsoft Foundry in public preview.

How does MAI-Transcribe-1 compare to OpenAI Whisper?

According to Microsoft's FLEURS benchmark results, MAI-Transcribe-1 beats OpenAI's Whisper-large-v3 on all 25 tested languages with an average Word Error Rate of 3.8%. It also runs 2.5x faster than Microsoft's previous Azure Fast transcription service and is priced at $0.36 per hour of audio.

Who built the Microsoft MAI models?

The models were developed by the MAI Superintelligence team, formed in November 2025 under the leadership of Mustafa Suleyman, CEO of Microsoft AI and co-founder of DeepMind. The team recently hired Ali Farhadi, former CEO of the Allen Institute for AI (Ai2). Notably, MAI-Voice-1 was built by a team of just 10 engineers.

Does this mean Microsoft is leaving OpenAI?

No. Microsoft still holds a 27% equity stake in OpenAI valued at approximately $135 billion, and OpenAI has committed $250 billion in Azure cloud purchases. However, the September 2025 renegotiation gave Microsoft the contractual freedom to build competing models. The MAI release is the first exercise of that right — Microsoft is hedging, not breaking up.

How much do the Microsoft MAI models cost?

MAI-Transcribe-1 costs $0.36 per hour of audio. MAI-Voice-1 starts at $22 per million characters. MAI-Image-2 costs $5 per million input tokens (text) and $33 per million output tokens (image). All models are available through Microsoft Foundry.

When will Microsoft release its own large language model?

Mustafa Suleyman has stated it will take "another year or two" before the MAI Superintelligence team produces frontier-class language models. The projected timeline is 2027, which would put Microsoft in direct competition with OpenAI's GPT series, Google's Gemini, and Anthropic's Claude.

Can I try the MAI models right now?

Yes. All three models are in public preview on Microsoft Foundry. The MAI Playground at playground.microsoft.ai provides an interactive testing environment, currently available in the US. Developers using Azure Speech can access MAI-Transcribe-1 and MAI-Voice-1 through the existing Azure SDK.

What products already use the MAI models?

MAI-Transcribe-1 powers Copilot's Voice Mode transcriptions and dictation features. MAI-Voice-1 powers Copilot's Audio Expressions and podcast features. MAI-Image-2 is rolling out in Bing Image Creator and PowerPoint. The two speech models are also integrated into Azure Speech.

Is MAI-Transcribe-1 better than OpenAI Whisper-large-v3?

On Microsoft's published FLEURS results, yes. MAI-Transcribe-1 beats OpenAI Whisper-large-v3 on all 25 languages tested, with a 3.8% average Word Error Rate. It also runs batch transcription 2.5x faster than Microsoft's existing Azure Fast offering and is priced at $0.36 per hour of audio, which is competitive against Whisper-based third-party providers.

How does MAI-Voice-1 compare to ElevenLabs?

MAI-Voice-1 generates 60 seconds of expressive audio in under 1 second on a single GPU (at least 60 times faster than real time) and is priced at $22 per million characters, directly competitive with ElevenLabs pricing. It also offers voice cloning from just 10 seconds of audio and access to a gallery of 700+ pre-built voices on Azure Speech, with a responsible AI approval gate for custom voice creation.

Does MAI-Image-2 beat DALL-E 3 and Midjourney v6?

MAI-Image-2 ranks in the top 3 on the Arena.ai image generation leaderboard, placing it above DALL-E 3 (top 10) and alongside Midjourney v6 (top 5). It delivers at least 2x faster generation times than its predecessor and excels at photorealistic output, accurate in-image text rendering, and complex cinematic layouts. It is available via the Foundry API at $5 per million input tokens and $33 per million output tokens.

Who should use Microsoft's MAI models?

Microsoft's MAI models are best suited for enterprise developers building multimodal AI applications, companies already embedded in the Azure ecosystem seeking native alternatives to OpenAI Whisper, DALL-E, or ElevenLabs, and organizations needing production-grade speech and image generation at competitive pricing. Use cases include IVR transcription, live captioning, podcast production, and image generation for marketing pipelines.

Does MAI-Transcribe-1 integrate with Azure and Microsoft Foundry?

Yes. MAI-Transcribe-1 is available in public preview directly through Microsoft Foundry and the MAI Playground. It accepts MP3, WAV, and FLAC files up to 200MB, integrates natively with Azure's enterprise infrastructure, and supports IVR systems, real-time captioning, video subtitling, and business intelligence extraction from spoken data.

What are Microsoft MAI models' current limitations?

Mustafa Suleyman himself describes the current MAI models as 'mid-class' — optimized for speed and cost efficiency rather than frontier capability. The MAI Superintelligence team targets frontier-class large language models only by 2027, meaning MAI currently cannot challenge GPT-4o, Google Gemini Ultra, or Anthropic Claude 3.5 on the most demanding reasoning benchmarks. Custom voice cloning also requires Microsoft's responsible AI approval, adding friction for developers.

How do Microsoft's MAI models affect its $13B OpenAI partnership?

Microsoft has invested over $13 billion in OpenAI since 2019 and holds a 27% equity stake in OpenAI Group PBC. Despite earning $7.6 billion from OpenAI in Q2 FY2026 alone and having 45% of its $625 billion revenue backlog tied to OpenAI, Microsoft is now shipping models (MAI-Transcribe-1, MAI-Voice-1, MAI-Image-2) that directly compete with OpenAI Whisper and DALL-E 3, signaling a strategic hedge toward in-house AI independence.