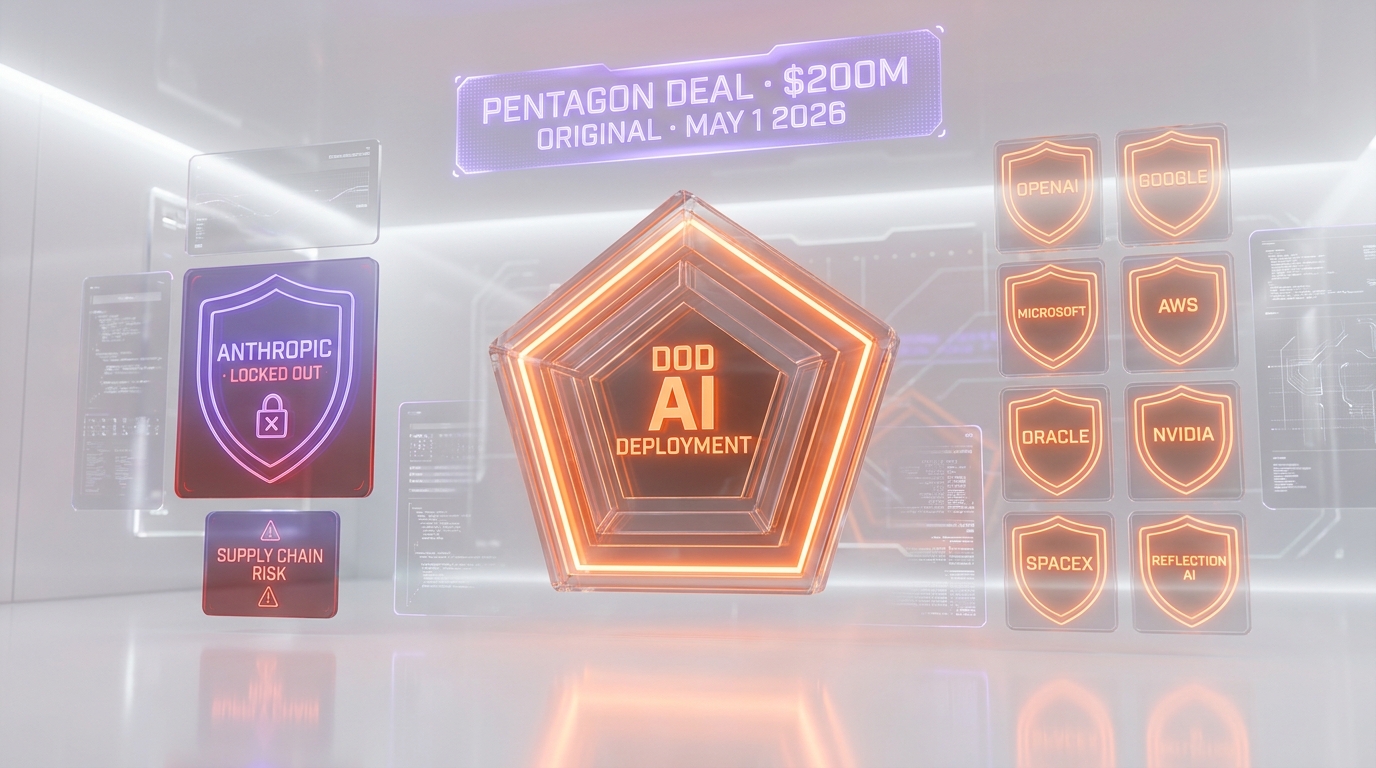

The Department of Defense announced on May 1, 2026 that it had finalized AI deployment agreements with eight technology companies for use on its classified networks — OpenAI, Google, Microsoft, Amazon Web Services, Oracle, Nvidia, SpaceX and Reflection AI. Anthropic was explicitly excluded and the Pentagon labeled the company a “supply chain risk,” a designation typically reserved for foreign adversaries. Defense Secretary Pete Hegseth had publicly called Anthropic CEO Dario Amodei an “ideological lunatic” in a Bloomberg-reported statement on April 30, 2026. The exclusion follows from the original $200 million-per-company frontier AI contracts the Pentagon awarded in summer 2025 to OpenAI, Anthropic, Google and xAI — with Anthropic now blocked from the next phase. Anthropic has sued the DoD over the supply-chain-risk designation in federal court.

TL;DR — what just happened

- May 1, 2026: Pentagon finalizes AI deployment agreements on classified networks with eight companies: OpenAI, Google, Microsoft, Amazon Web Services, Oracle, Nvidia, SpaceX and Reflection AI — per the Department of War official release.

- Anthropic excluded and labeled a “supply chain risk,” a designation typically reserved for foreign adversaries per DefenseScoop.

- Original $200M contracts: in summer 2025 the Pentagon awarded individual contracts of up to $200 million each to OpenAI, Anthropic, Google and xAI for “frontier AI projects.” Anthropic is now blocked from the next phase of that work.

- Hegseth quote: Defense Secretary Pete Hegseth called Dario Amodei an “ideological lunatic” in a statement reported by Bloomberg on April 30, 2026, defending Pentagon AI use and saying the US does not let AI make lethal targeting decisions.

- The dispute: Anthropic refuses to allow Claude to be used for mass surveillance of Americans or in fully autonomous weapons operations. The Pentagon treats this position as incompatible with its “all lawful use” access requirements.

- Anthropic counter-strategy: three days after the Pentagon exclusion, on May 4, 2026, Anthropic announced a $1.5 billion enterprise AI services joint venture with Blackstone, Goldman Sachs, Hellman & Friedman and five other Wall Street firms — redirecting its enterprise growth from federal contracts to private-sector deployment.

- Litigation status: Anthropic has filed suit in federal court contesting the supply-chain-risk designation. White House meetings with Amodei have continued in parallel.

What happened: 8 companies in, Anthropic locked out

On May 1, 2026, the Pentagon — recently renamed the Department of War in the executive branch's nomenclature change — announced through its official release that eight US technology companies had signed formal agreements to deploy frontier AI capabilities on Defense Department classified networks “for lawful operational use.” The companies named in the release are OpenAI, Google, NVIDIA, Reflection AI, Microsoft, Amazon Web Services, Oracle and SpaceX.

Per the official release wording, these agreements authorize each company to deploy “their frontier AI capabilities” on DoD classified networks. The release does not specify dollar amounts for each agreement. The earlier contracts, from summer 2025, awarded individual contract values of up to $200 million each to OpenAI, Anthropic, Google and xAI for “frontier AI projects.” The May 1, 2026 agreements operationalize the deployment phase of that earlier round, with Anthropic explicitly removed from the list and SpaceX (bringing Starlink and xAI's Grok) and Reflection AI added as new entrants.

The eight cleared companies vs Anthropic exclusion

| Company | Cleared on May 1, 2026? | Originally in 2025 $200M tranche? | Notes |
|---|---|---|---|
| OpenAI | Yes | Yes | Department of War deal announced separately |
| Google | Yes | Yes | Continued from 2025 |
| Microsoft | Yes | No | Joins via Azure Government / Copilot |
| Amazon Web Services | Yes | No | AWS GovCloud + Bedrock |
| Oracle | Yes | No | Federal cloud contracts |
| Nvidia | Yes | No | Hardware + AI software stack |
| SpaceX | Yes | No | New entrant; Starlink + xAI Grok integration |
| Reflection AI | Yes | No | New entrant; agentic systems specialist |
| Anthropic | No | Yes | Labeled “supply chain risk” by DoD |

The most striking detail in this list is that xAI is also missing from the May 1, 2026 announcement under its own name — despite being one of the original four labs in the 2025 $200M tranche. Coverage suggests xAI's Grok arrives at the Pentagon via the SpaceX entry, with Elon Musk's two companies operating effectively as a combined federal vendor. That structural choice will become its own governance question over time. For now, the headline is that of the four AI labs that won the original $200M tranche, two (OpenAI and Google) are in cleanly under their own names, one (xAI) is in via SpaceX, and one (Anthropic) is fully excluded.

Why it matters: the “supply chain risk” designation is unprecedented

The single piece of language that elevates this story above a routine contracting decision is the Pentagon's labeling of Anthropic as a “supply chain risk.” That designation has a specific operational meaning in DoD acquisition law. It is most often invoked against foreign companies operating in adversarial jurisdictions — notably Chinese telecommunications and surveillance vendors like Huawei, ZTE, Hikvision and Dahua — or against US firms with material foreign ownership exposing them to FOCI (Foreign Ownership, Control or Influence) concerns.

Applying it to a US-headquartered company with a US CEO, US investors and no foreign ownership is unusual to the point of being unprecedented in the AI industry. The DoD's reasoning, per DefenseScoop reporting, is that Anthropic's contractual usage policies — specifically its prohibition on using Claude for mass surveillance of Americans and on fully autonomous weapons targeting — are themselves the “risk.” Anthropic's posture, in this framing, is incompatible with the Pentagon's requirement of unrestricted access for “all lawful use,” and that incompatibility is treated as the equivalent of a supply chain vulnerability.

This is a meaningful precedent. If the Pentagon can designate any US company as a supply chain risk because its acceptable use policies do not match DoD requirements, the framework can in principle extend to any commercial vendor that maintains content policies, ethics restrictions or use-case prohibitions. The legal and constitutional questions raised by this designation are at the heart of the federal court case Anthropic has now filed.

Hegseth's “ideological lunatic” quote in context

The single most quoted line in mainstream coverage of this dispute is Defense Secretary Pete Hegseth calling Anthropic CEO Dario Amodei an “ideological lunatic.” Per Bloomberg's reporting on April 30, 2026, Hegseth used the phrase while defending the Pentagon's use of AI and saying the US does not let artificial intelligence make lethal targeting decisions. The full Bloomberg headline frames the comment as a defense of Pentagon AI use, not just as an attack on Amodei.

Three contextual layers matter for reading the quote.

First, the policy context. Hegseth's comment came in response to Anthropic's public refusal to allow Claude to be used in two specific Pentagon use cases: mass surveillance of US citizens and fully autonomous weapons systems. Hegseth's framing is that these refusals reflect “ideological” preferences imposed on a tool the military needs — rather than legitimate ethical guardrails. Anthropic's framing, consistent with its public safety positioning since founding in 2021, is that these are non-negotiable constraints baked into the company's mission.

Second, the timing context. The Hegseth statement landed on April 30, 2026, one day before the May 1, 2026 announcement of the eight-company classified-networks deal. The sequence is not coincidental. The Pentagon deliberately put the public statement first and the formal exclusion second, signaling its position to the broader contracting world before publishing the new approved-vendor list.

Third, the constitutional context. Hegseth's framing of an AI safety policy as “ideological lunacy” from a sitting cabinet official is itself a notable constitutional moment. It implies that a US-based commercial AI vendor's First Amendment-protected right to set its own product use policies can be punitively re-cast as a national security threat by the executive branch when those policies inconvenience federal procurement. That precedent is what Anthropic's federal lawsuit is designed to test.

The earlier February 23, 2026 Axios scoop previewed all of this: Hegseth and Amodei met in February, the Pentagon was already threatening “banishment,” and the underlying dispute had been live for months before the public April 30 statement.

The original $200M tranche: what was lost

The dollar amount in this story is $200 million per company — not $200 billion. The figure originated in summer 2025 when Pentagon leaders unveiled individual contracts with OpenAI, Anthropic, Google and xAI, each worth up to $200 million, for “frontier AI projects.” That was the prior tranche, and Anthropic was originally inside the contracting envelope.

The May 1, 2026 announcement is the deployment phase of that earlier round, scaled to add Microsoft, Amazon, Oracle, Nvidia, SpaceX and Reflection AI alongside the three surviving labs from the original four (OpenAI and Google directly, with xAI riding on SpaceX). Anthropic's exclusion means it loses access to the future expansion of that contracting framework, not the original $200M itself, which had already been awarded.

The strategic loss is not the $200M one-time contract value. It is the loss of access to:

- The follow-on classified-networks revenue, which over a 5-7 year buildout could be multiples of the original $200M.

- The intelligence community sub-contracting opportunities that flow from a primary DoD relationship.

- The strategic credibility of being a Pentagon-cleared frontier AI vendor — a credential that influences enterprise buying signals across regulated industries.

- The optionality on future export-controlled federal AI work as the geopolitical AI cold war intensifies.

What Anthropic appears to have decided, per the May 4 Wall Street JV announcement, is that all four of those losses are tolerable in exchange for maintaining the company's safety policies intact. That is a high-conviction stance and a high-cost one.

Anthropic's counter-strategy: from Pentagon out to Wall Street in

The single most revealing detail of this whole episode is the timing of Anthropic's response. On May 1, 2026 the Pentagon announced the exclusion. On May 4, 2026 — three days later — Anthropic announced a $1.5 billion enterprise AI services joint venture with Blackstone, Goldman Sachs, Hellman & Friedman, Apollo, General Atlantic, GIC, Leonard Green and Sequoia Capital. Per TechCrunch reporting, the JV will deploy Applied AI engineers from Anthropic into mid-sized customer companies across community banks, regional manufacturers, regional health systems and healthcare services networks.

The substitution is structural. Federal contracting is substituted with private-sector deployment. DoD-mediated revenue is substituted with Wall Street-allocator-mediated revenue. Pentagon-controlled gatekeeping is substituted with private-equity-portfolio gatekeeping. The same week the Pentagon classified Anthropic as a supply chain risk, Wall Street classified Anthropic as a $1.5 billion enterprise channel. The market vote is unambiguous.

| Vector | Pentagon (May 1, 2026) | Anthropic JV (May 4, 2026) |
|---|---|---|
| Status | Excluded | $1.5B raised |
| Designation | “Supply chain risk” | “Founding partner” |
| Capital direction | Federal contracts denied | Wall Street capital deployed |
| Customer access | Classified networks blocked | Mid-market portfolio access opened |
| Strategic counterparty | DoD, Hegseth | Blackstone, Goldman, Hellman & Friedman |
| Public statement direction | “Ideological lunatic” | “Founding partner of choice” |

For Anthropic, the strategic logic is sound: the company's enterprise revenue base in private-sector commercial customers is already estimated at $8B+ run rate as of April 2026, roughly 40 times the $200M ceiling on each of the 2025 frontier AI contracts (dollar values for the May 1 classified-networks agreements have not been disclosed). Choosing the safety-policy stance over Pentagon revenue costs Anthropic the federal channel but leaves intact the much larger commercial channel and the brand that drives it. Wall Street's $1.5B vote three days later is the market validation that the math works.

White House meetings and the federal lawsuit

One of the under-reported wrinkles of this story is that the White House has continued to meet with Dario Amodei in parallel with the Pentagon's exclusion. Per Bloomberg's coverage, Amodei has visited the White House to meet with top administration officials “in recognition of the company's powerful AI models” even as the Pentagon banned Anthropic products. This is the kind of cross-cutting executive-branch dynamic that signals the dispute is not settled at the political level.

The federal lawsuit Anthropic has filed contests the “supply chain risk” designation directly. The relief sought, based on initial filings, includes both injunctive relief (lifting the designation and reinstating access) and declaratory relief (establishing that a US commercial vendor's acceptable use policy cannot be re-cast as a national security supply chain vulnerability). The case is still in early phases as of May 2026.

Three procedural milestones to watch:

- Preliminary injunction motion: if Anthropic moves for a PI to lift the supply-chain-risk designation pending the merits trial, the briefing and ruling timeline could deliver a court order within 60-90 days.

- White House intervention: continued Amodei-White House meetings could produce an executive-branch resolution that moots the lawsuit before a court ruling.

- Congressional attention: at least the Armed Services and Intelligence committees in both the House and the Senate have authority to hold hearings on Pentagon AI vendor selection. Whether they exercise it will signal congressional appetite for the question.

Market context: who actually fills the Anthropic gap?

The eight cleared companies divide cleanly into three categories of who they serve and what gap they fill in the post-Anthropic Pentagon stack.

Frontier AI labs: OpenAI and Reflection AI are the two pure-play frontier AI labs in the eight. OpenAI delivers through ChatGPT Enterprise, Azure OpenAI and its direct API. Reflection AI is the agentic systems specialist, serving use cases Anthropic's Claude was originally positioned for. Reflection's inclusion is a strategic upgrade for the Pentagon: it gets a frontier-grade agentic vendor without the policy friction.

Hyperscale infrastructure: Google, Microsoft, Amazon Web Services, Oracle and Nvidia are the cloud and chip layer. Each delivers AI capability either through its own model stack (Google Gemini, Microsoft Copilot, AWS Bedrock + Amazon Q) or as the inference infrastructure for the labs above (Nvidia GPUs, Oracle dedicated cloud).

Defense-adjacent: SpaceX is the unusual entrant. Operationally it brings Starlink for connectivity and xAI's Grok via the Musk corporate envelope. SpaceX's inclusion is in part a recognition that Starlink is now de facto national security infrastructure.

What is not in the eight is just as informative as what is. No Mistral. No Cohere. No specialized vertical AI defense vendors (Palantir is notably absent from the AI-deployment list, though it has separate DoD relationships). No Chinese-affiliated companies. No firms with significant exposure to safety-policy-driven use restrictions other than Anthropic. The Pentagon has chosen vendors by the alignment-with-procurement-requirements axis, not by underlying technical capability.

What to watch next

Three threads will determine how this dispute resolves.

First, the federal court ruling. Anthropic's lawsuit on the supply-chain-risk designation is the legal centerpiece. A preliminary injunction granting partial relief in Anthropic's favor would be a major political moment and could re-open the contract pipeline. A denial would entrench the exclusion.

Second, the White House move. The Amodei-White House meetings in parallel with Pentagon hostility suggest an executive-branch-internal resolution is possible. If President Trump or a senior White House official intervenes to override the supply-chain-risk designation, it would be a sharp political reversal of Hegseth's posture and a structural win for Anthropic.

Third, the JV rollout. The May 4 Wall Street joint venture is Anthropic's most visible response to the Pentagon exclusion. The next 12 months of named customer wins from Blackstone, Goldman, Hellman & Friedman portfolio companies will determine whether the private-sector substitution actually delivers the revenue Anthropic implicitly forecast when it accepted the Pentagon ban.

Our take

This is the cleanest test yet of whether a US AI lab can be punished at the federal contracting level for maintaining product use restrictions. The Pentagon's “supply chain risk” designation, applied to a US-headquartered company over its acceptable use policies, is a precedent with implications far beyond Anthropic. If the designation stands, every commercial AI vendor with a content policy is now a potential federal-contract risk. If the designation falls, the executive branch's leverage over commercial AI policy weakens materially.

For Anthropic specifically, the strategic call has been made. The company has chosen safety policy over Pentagon revenue and bet that Wall Street capital plus the existing $8B+ commercial revenue base can absorb the loss. The May 4 JV is the public signature on that bet. Whether the bet pays off depends on whether the JV's Applied AI engineering model actually scales into the enterprise revenue Anthropic needs, and whether the federal lawsuit reverses the precedent before it hardens.

For the broader AI industry, the lesson is structural: federal contracting is no longer a stable revenue channel for AI labs that maintain ethical product policies. The Wall Street alternative is now visibly larger and faster than the federal alternative. Anthropic has just stress-tested that proposition publicly, and the early scoreboard reads: a federal framework that began at up to $200M per lab closed off, $1.5B gained, three days apart.

Frequently asked questions

How much is the Pentagon AI deal worth that Anthropic was excluded from?

The original Pentagon contracts from summer 2025 were worth up to $200 million each per company, awarded to OpenAI, Anthropic, Google and xAI for frontier AI projects. The May 1, 2026 announcement is the deployment phase of that earlier framework, expanded to eight companies. The figure is $200 million per company, not $200 billion. Specific dollar amounts for the May 1, 2026 classified-networks agreements have not been disclosed in the official Department of War release.

Which 8 companies did the Pentagon clear for AI work on classified networks?

The eight companies cleared in the May 1, 2026 announcement are OpenAI, Google, Microsoft, Amazon Web Services, Oracle, Nvidia, SpaceX and Reflection AI. The agreements authorize each company to deploy frontier AI capabilities on Defense Department classified networks for “lawful operational use.” Notably xAI is not named separately, though Grok arrives via the SpaceX entry given Elon Musk's overlapping ownership. Anthropic is excluded entirely.

Why did the Pentagon exclude Anthropic?

The Pentagon labeled Anthropic a “supply chain risk,” a designation typically reserved for foreign adversary vendors, after Anthropic publicly refused to allow Claude to be used for mass surveillance of US citizens or in fully autonomous weapons systems. The DoD treats these acceptable-use restrictions as incompatible with its requirement of unrestricted access for “all lawful use.” Anthropic argues the restrictions are non-negotiable constraints baked into the company's mission since founding.

What did Defense Secretary Hegseth say about Dario Amodei?

Defense Secretary Pete Hegseth called Anthropic CEO Dario Amodei an “ideological lunatic” in a statement reported by Bloomberg on April 30, 2026, while defending the Pentagon's use of AI and saying the United States does not let artificial intelligence make lethal targeting decisions. The comment landed one day before the May 1, 2026 formal exclusion of Anthropic from the eight-company classified-networks announcement.

Has Anthropic sued the Pentagon?

Yes. Anthropic has filed suit in federal court contesting the Department of Defense's “supply chain risk” designation. The lawsuit is in active litigation as of May 2026. Relief sought includes both injunctive relief to lift the designation and declaratory relief to establish that a US commercial vendor's acceptable use policy cannot be re-cast as a national security supply chain vulnerability. The case is one of the most consequential AI policy lawsuits of 2026.

Has the White House met with Anthropic during the Pentagon dispute?

Yes. Per Bloomberg reporting, Dario Amodei has visited the White House to meet with top administration officials in parallel with the Pentagon's hostility, in recognition of Anthropic's frontier AI capabilities. This cross-cutting executive-branch dynamic suggests the dispute is not settled at the political level and that an executive-branch-internal resolution overriding the Pentagon's posture remains possible.

What is the “supply chain risk” designation and why is it unprecedented?

The “supply chain risk” designation under DoD acquisition law is most often invoked against foreign companies operating in adversarial jurisdictions, such as Chinese telecommunications and surveillance vendors like Huawei, ZTE, Hikvision and Dahua, or against US firms with significant foreign ownership exposure. Applying the designation to a US-headquartered AI company with a US CEO and US investors over its product acceptable-use policies is unprecedented in the AI industry and is at the center of Anthropic's federal lawsuit.

What is Anthropic's counter-strategy after the Pentagon exclusion?

Three days after the Pentagon exclusion, on May 4, 2026, Anthropic announced a $1.5 billion enterprise AI services joint venture with Blackstone, Goldman Sachs, Hellman & Friedman, Apollo Global Management, General Atlantic, GIC, Leonard Green & Partners and Sequoia Capital. The joint venture deploys Applied AI engineers from Anthropic into mid-sized customer companies across regulated industries. The substitution is structural — federal contracting replaced by private-sector deployment with Wall Street capital backing.

Did xAI also get excluded from the Pentagon deal?

xAI is not named separately in the May 1, 2026 announcement of the eight cleared companies. However, SpaceX is named, and given Elon Musk's overlapping ownership of both companies, xAI's Grok model effectively arrives at the Pentagon through the SpaceX entry. This means three of the four labs from the original 2025 $200 million tranche (OpenAI, Google directly; xAI via SpaceX) survived the new round. Anthropic is the only original lab that was fully excluded.

What does this mean for AI safety policies at other labs?

The Pentagon's designation of Anthropic as a supply chain risk over its acceptable-use policies sets a precedent that any commercial AI vendor with content policies, ethics restrictions or use-case prohibitions could potentially face similar federal exclusion. If the designation stands after court review, every AI lab maintaining safety guardrails will need to weigh those guardrails against federal contracting access. If the designation falls, the executive branch's leverage to demand unrestricted access from commercial vendors weakens materially. The federal lawsuit Anthropic has filed is the test case.