Starcloud closed a $170M Series A on March 30, 2026, at a $1.1B valuation — making it the fastest Y Combinator unicorn in history at just 17 months from founding. Led by Benchmark and EQT with participation from NVIDIA, the round brings total funding to $200M. The company launched its first satellite carrying an NVIDIA H100 GPU in November 2025 and has since achieved three world firsts: AI model training in space, Gemini inference in orbit, and model fine-tuning off-planet. Its roadmap targets an 88,000-satellite constellation delivering GPU compute at $0.05 per kWh via solar power — while SpaceX (post-xAI merger) has filed for 1 million compute satellites of its own.

Funding Breakdown: $200M Total, Unicorn in 17 Months

Starcloud's funding trajectory is unlike anything the space-tech or AI industries have seen. The company reached unicorn status faster than any Y Combinator graduate in the accelerator's roughly 20-year history. For context, Stripe took roughly four years to hit a $1B valuation. Coinbase took about five. Starcloud did it in 17 months.

| Round | Date | Amount | Valuation | Investors |
|---|---|---|---|---|
| Pre-Seed / Seed | 2024 | $30M | Undisclosed | Y Combinator, early angels |
| Series A | March 30, 2026 | $170M | $1.1B | Benchmark and EQT (leads), NVIDIA |
| Total Raised | n/a | $200M | $1.1B | n/a |

The investor roster tells a story on its own. Benchmark — the firm behind eBay, Uber, Instagram, and Discord — doesn't lead rounds casually. They take one board seat per partner and bet on category-defining companies. EQT, the Swedish investment firm managing over $130B in assets, brings deep infrastructure expertise. And NVIDIA's participation isn't just a financial endorsement — it signals hardware-level collaboration. When NVIDIA invests in a company deploying H100s in orbit, that's a strategic alignment, not a passive check.

The speed of this raise reflects a simple reality: GPU compute demand is growing faster than terrestrial data center capacity can expand. Every major AI lab — OpenAI, Anthropic, Google DeepMind, xAI — is constrained by power, cooling, and land. Starcloud's thesis is that space solves all three constraints simultaneously.

What Starcloud Actually Does

At its core, Starcloud is building orbital data centers — satellites equipped with high-performance GPUs that deliver AI compute from low Earth orbit. The concept sounds like science fiction, but the company has already shipped working hardware to space and run real AI workloads on it.

The value proposition rests on three fundamental advantages over terrestrial data centers:

- Near-continuous solar power: In a dawn-dusk sun-synchronous orbit, solar panels receive almost uninterrupted sunlight with no atmosphere, no weather, and very little eclipse time. Starcloud's target is $0.05 per kWh, roughly 50-75% below the $0.10-0.20/kWh that power costs in many terrestrial markets, and cheaper still than the premium rates AI data centers pay in power-constrained markets.

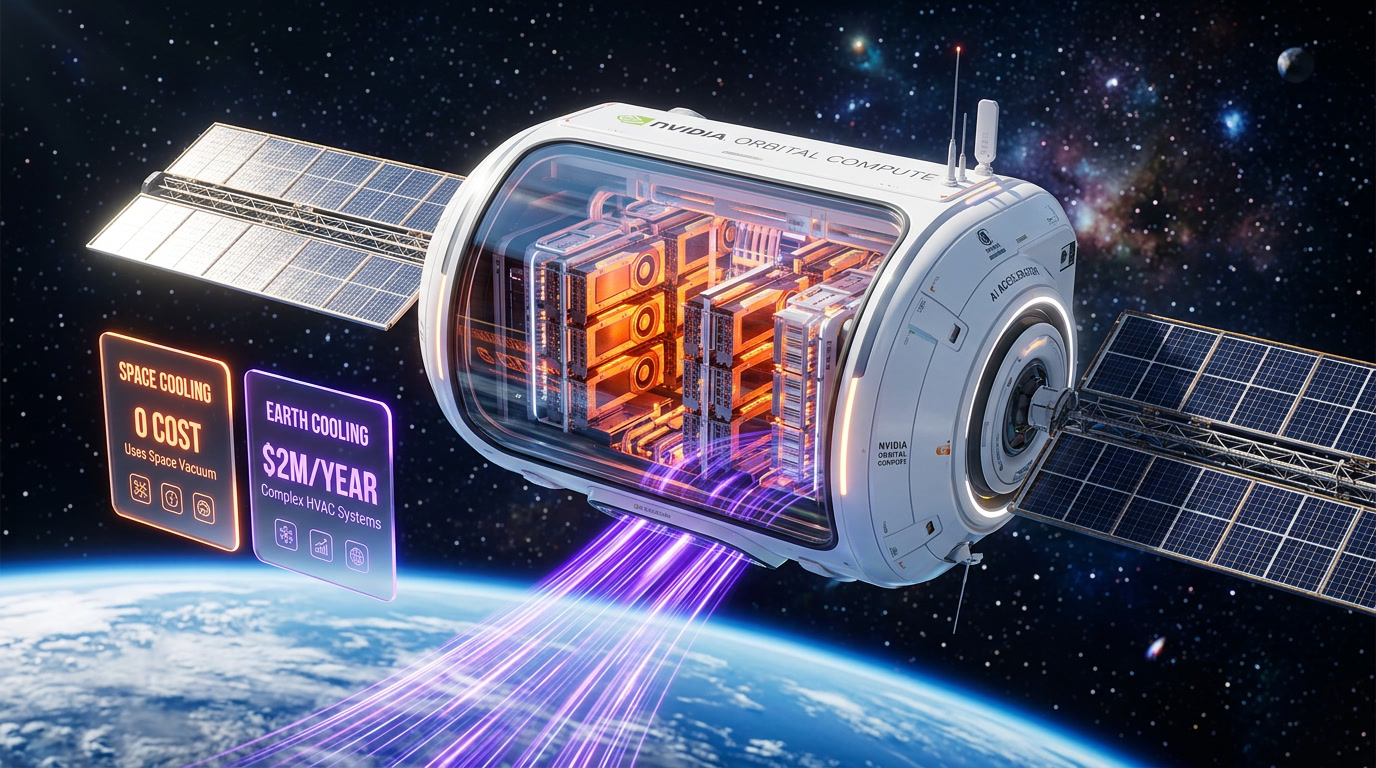

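- Near-zero cooling energy: Vacuum rules out convection, so an orbital data center rejects waste heat by radiating it to deep space through radiator panels, with no chillers or cooling water. Terrestrial data centers can spend as much as 30-40% of their total energy on cooling. In orbit, that recurring energy cost drops to near zero, although the radiators themselves add mass and surface area that must be launched.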

- No land constraints: Building a 500MW terrestrial data center requires years of permitting, grid connections, water rights, and community negotiations. Low Earth orbit has no zoning boards and no NIMBY opposition, though constellations still require spectrum and orbital licensing.
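The cooling point can be made concrete with the Stefan-Boltzmann law, which sets how much heat a surface can radiate in vacuum. The sketch below is a back-of-the-envelope estimate, not Starcloud's thermal design: the emissivity (0.9), the radiator temperature (300 K), and the decision to ignore absorbed sunlight and Earth infrared are all assumptions made here, and `radiator_area_m2` is a hypothetical helper name.

```python
# Back-of-the-envelope radiator sizing with the Stefan-Boltzmann law.
# Assumptions (not from Starcloud): emissivity, radiator temperature, and
# no absorbed sunlight or Earth infrared, so real radiators would be larger.

STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 * K^4)


def radiator_area_m2(heat_watts, temp_kelvin=300.0, emissivity=0.9, sides=1):
    """Ideal radiating area needed to reject heat_watts to deep space."""
    if heat_watts < 0 or temp_kelvin <= 0 or not 0 < emissivity <= 1 or sides < 1:
        raise ValueError("invalid thermal parameters")
    # Radiated power per square meter of one panel face.
    flux = emissivity * STEFAN_BOLTZMANN * temp_kelvin ** 4
    return heat_watts / (flux * sides)


if __name__ == "__main__":
    for kilowatts in (1, 20, 200):
        area = radiator_area_m2(kilowatts * 1_000)
        print(f"{kilowatts:>4} kW -> {area:,.1f} m^2 of single-sided radiator")
```

Under these assumptions a 200kW payload needs on the order of 500 square meters of single-sided radiator. Because radiated power scales with the fourth power of temperature, running the radiator hotter shrinks that area quickly, which is one reason thermal design is a central engineering trade-off for orbital compute.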

The trade-off, of course, is the extreme engineering challenge of launching, operating, and maintaining compute hardware in space. Radiation hardening, thermal cycling, latency management, satellite-to-ground data transfer, and orbital debris mitigation are all non-trivial problems. But Starcloud's November 2025 launch demonstrated that the core concept works — a GPU can operate in orbit and perform real AI computations.

Three World Firsts That Prove the Concept

Between November 2025 and March 2026, Starcloud achieved three firsts that no other company — public or private — has accomplished:

1. First AI Model Training in Space

Starcloud successfully trained an AI model entirely in orbit using its H100-equipped satellite. While the model was small by terrestrial standards, the milestone proved that the full training loop — forward pass, loss computation, backpropagation, weight updates — can execute reliably in the space environment. Radiation-induced bit flips, thermal cycling, and power fluctuations were all managed within acceptable error margins.
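The four stages of that training loop can be sketched in a few lines of plain Python. This is an illustration of the loop structure only, assuming a toy linear model, synthetic data, and hand-picked hyperparameters; it says nothing about the model or framework Starcloud actually ran in orbit.

```python
# Minimal training loop in pure Python: fit y = w*x + b by gradient descent.
# Illustrative only; the model, data, and hyperparameters are assumptions.

def train_linear_model(xs, ys, learning_rate=0.05, epochs=2000):
    """Return (w, b, last_loss); last_loss is measured in the final epoch
    before that epoch's weight update is applied."""
    w, b = 0.0, 0.0
    n = len(xs)
    loss = float("inf")
    for _ in range(epochs):
        # 1. Forward pass: compute predictions with the current weights.
        preds = [w * x + b for x in xs]
        # 2. Loss computation: mean squared error.
        errors = [p - y for p, y in zip(preds, ys)]
        loss = sum(e * e for e in errors) / n
        # 3. Backpropagation: gradients of the loss with respect to w and b.
        grad_w = 2.0 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2.0 * sum(errors) / n
        # 4. Weight update: step against the gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b, loss


if __name__ == "__main__":
    xs = [0.0, 1.0, 2.0, 3.0, 4.0]
    ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # generated from y = 2x + 1
    w, b, loss = train_linear_model(xs, ys)
    print(f"w={w:.3f} b={b:.3f} loss={loss:.6f}")
```

A radiation-induced bit flip in any of these four stages would corrupt either the gradients or the weights, which is why running the full loop reliably in orbit is a meaningful milestone.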

2. First Gemini Inference in Orbit

In collaboration with Google, Starcloud ran inference on a Gemini model variant from orbit. This demonstrated that cloud AI services can be extended to orbital compute nodes — a satellite acting as an edge inference server. The implications for latency-tolerant AI workloads (batch processing, background training, offline inference) are significant.

3. First Model Fine-Tuning Off-Planet

Taking the training milestone further, Starcloud fine-tuned a pre-trained model in orbit — adjusting weights on a foundation model using domain-specific data uploaded to the satellite. Fine-tuning is the most commercially relevant ML workflow for enterprises, so demonstrating it in space moves the technology from "interesting experiment" to "viable commercial service."

Each of these firsts on its own would be a notable technical achievement. Together, they demonstrate that orbital compute isn't a distant concept — it's an operational reality that's already executing the three core ML workflows: training, inference, and fine-tuning.

The Roadmap: From One Satellite to 88,000

Starcloud's roadmap is aggressive and structured in three clear phases:

| Phase | Hardware | Power | Target |
|---|---|---|---|
| Starcloud-1 | NVIDIA H100 GPU | Standard satellite power | Proof of concept (achieved Nov 2025) |
| Starcloud-2 | NVIDIA Blackwell chip | Enhanced power systems | Production-grade compute per satellite |
| Starcloud-3 | Next-gen GPU cluster | 200kW (Starship-class) | Full-scale orbital data center, 88K constellation |

Starcloud-2 is the next major milestone. By upgrading from H100 to NVIDIA's Blackwell architecture, each satellite will deliver significantly more compute per watt — a critical metric when your power budget comes from solar panels. Blackwell's improved energy efficiency (roughly 4x inference performance per watt over H100 for many workloads) makes it the ideal chip for power-constrained orbital deployment.

Starcloud-3 is the moonshot phase. Designed for launch on SpaceX's Starship, each Starcloud-3 unit will carry 200kW of compute power. For reference, a single rack in a modern terrestrial data center typically draws 20-40kW, so a Starcloud-3 satellite would carry the equivalent of 5-10 high-density racks in a single orbital platform. Multiply that by 88,000 satellites and you get roughly 17.6GW, a compute constellation that rivals the total capacity of the world's largest terrestrial hyperscalers.
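The rack and constellation comparison is simple arithmetic, sketched here so the inputs are easy to vary. The figures are the ones quoted above; treating every one of the 88,000 satellites as a 200kW Starcloud-3 unit is a simplifying assumption, and the function names are hypothetical.

```python
# Arithmetic behind the rack and constellation comparison in the text.
# Inputs are the figures quoted above; treating all 88,000 satellites as
# 200 kW Starcloud-3 units is a simplifying assumption.

SATELLITE_POWER_KW = 200
RACK_POWER_KW_RANGE = (20, 40)  # typical high-density rack draw
CONSTELLATION_SIZE = 88_000


def racks_per_satellite(sat_kw, rack_kw_range):
    """Return (fewest, most) rack equivalents carried by one satellite."""
    low_kw, high_kw = rack_kw_range
    return sat_kw / high_kw, sat_kw / low_kw


def constellation_power_gw(sat_kw, satellites):
    """Total constellation power in gigawatts."""
    return sat_kw * satellites / 1_000_000  # kW -> GW


if __name__ == "__main__":
    fewest, most = racks_per_satellite(SATELLITE_POWER_KW, RACK_POWER_KW_RANGE)
    total_gw = constellation_power_gw(SATELLITE_POWER_KW, CONSTELLATION_SIZE)
    print(f"Rack equivalents per satellite: {fewest:.0f}-{most:.0f}")
    print(f"Full constellation: {total_gw:.1f} GW")
```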

The $0.05 per kWh target is the economic linchpin. If Starcloud can deliver compute at that energy cost, and the solar physics suggests it is achievable, the unit economics of orbital compute become dramatically competitive with terrestrial alternatives, especially in regions where power costs $0.10-0.20/kWh or higher.
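To see what that price gap means per satellite, the sketch below compares the yearly electricity bill for a constant 200kW load at the target price and at the terrestrial prices quoted above. It is a rough illustration under stated assumptions: constant full utilization, a flat price, and no launch, hardware, or operations costs, all of which would matter in a real comparison. The function names are hypothetical.

```python
# Yearly energy cost for a constant load at a flat electricity price.
# Assumptions: full utilization by default and energy cost only; launch,
# hardware, and operations costs are deliberately left out.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_cost_usd(power_kw, price_per_kwh, utilization=1.0):
    """Electricity cost in USD for one year of operation."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return power_kw * HOURS_PER_YEAR * utilization * price_per_kwh


def savings_fraction(orbital_price, terrestrial_price):
    """Fraction saved by paying orbital_price instead of terrestrial_price."""
    return 1.0 - orbital_price / terrestrial_price


if __name__ == "__main__":
    for price in (0.05, 0.10, 0.20):
        cost = annual_energy_cost_usd(200, price)
        print(f"${price:.2f}/kWh -> ${cost:,.0f} per year for 200 kW")
    for terrestrial in (0.10, 0.20):
        saved = savings_fraction(0.05, terrestrial)
        print(f"vs ${terrestrial:.2f}/kWh: {saved:.0%} lower energy cost")
```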

The Elephant in Orbit: SpaceX and the xAI Merger

Starcloud isn't operating in a vacuum — figuratively speaking. The most significant competitive development is SpaceX's application to deploy 1 million compute satellites, filed after Elon Musk's xAI merged with SpaceX's operations.

This sets up what may become the defining infrastructure competition of the next decade. Here's how the two players compare:

| Factor | Starcloud | SpaceX / xAI |
|---|---|---|
| Founded | ~2024 (YC batch) | 2002 (SpaceX) / 2023 (xAI) |
| Funding | $200M | $10B+ combined |
| Valuation | $1.1B | $350B+ (SpaceX) |
| Launch Capability | Third-party (buys launches) | Owns Falcon 9, Starship |
| Satellite Target | 88,000 | 1,000,000 |
| AI Models | Model-agnostic (partners: Google, etc.) | Grok (proprietary) |
| GPU Partner | NVIDIA (H100, Blackwell) | Custom AMD + NVIDIA mix |
| Key Advantage | First mover, proven orbital AI | Vertical integration (launches + AI + satellites) |
| Key Risk | Dependent on third-party launches | Regulatory scrutiny, antitrust |

The David-vs-Goliath framing is obvious but misleading. Starcloud has a genuine first-mover advantage: it has already put a GPU in orbit and run real AI workloads on it. SpaceX has filed applications but hasn't yet deployed compute satellites. In technology races, being first to demonstrate working technology matters enormously — it attracts talent, investors, and customers in a way that plans on paper cannot.

However, SpaceX's structural advantages are formidable. It owns its own launch vehicles, which means it can deploy satellites at marginal cost rather than paying market rates. Falcon 9 launches cost SpaceX roughly $15-20M internally versus $60-70M at market rates. Starship, once operational at scale, will reduce that further. Starcloud, by contrast, must purchase every launch from a provider — potentially even from SpaceX itself, which creates an awkward dependency.

The xAI merger adds another dimension. SpaceX isn't just building orbital compute infrastructure — it's building it for a specific AI company (xAI, maker of Grok) that has massive compute needs. This vertical integration — launch provider + satellite manufacturer + AI model developer — mirrors the AWS playbook of building infrastructure to serve your own needs first, then selling excess capacity to the market.

Starcloud's counter-strategy is platform neutrality. By partnering with NVIDIA on hardware and Google on model inference, Starcloud positions itself as the open orbital compute platform — a space-based cloud where any AI company can run any workload. If the history of cloud computing is any guide, open platforms tend to win over vertically integrated ones in the long run. AWS beat IBM. Azure and GCP grew by being model-agnostic. Starcloud is betting the same dynamic plays out in orbit.

Why This Matters: The Bigger Picture

The significance of Starcloud — and orbital compute in general — extends far beyond one startup's funding round. Three structural forces are converging to make space-based compute not just viable but potentially necessary:

1. The Terrestrial Power Crisis Is Real

AI data centers are consuming electricity at rates that are straining power grids worldwide. The International Energy Agency projects that global data center electricity consumption will roughly double by 2030. In the US, utilities in Virginia (the world's data center capital) are struggling to meet demand. New nuclear plants take 10-15 years to build. Solar and wind farms require land and grid connections. Orbital solar bypasses all of these constraints: near-continuous power with no grid dependency.

2. GPU Demand Far Outstrips Supply

Despite NVIDIA shipping record volumes of H100 and Blackwell GPUs, demand continues to outpace supply. Every major AI lab has a GPU waitlist. Cloud providers ration GPU access. The companies that can find new ways to deploy and power GPUs — even in unconventional locations like orbit — will capture enormous value.

3. Latency-Tolerant Workloads Are the Majority

Not every AI workload needs sub-100ms latency. Model training runs for hours or days. Batch inference processes millions of requests overnight. Fine-tuning jobs run on schedules. Research experiments iterate over weeks. For these workloads — which represent the majority of total AI compute consumption — the additional latency of orbital compute (roughly 20-50ms for LEO satellites) is irrelevant. Starcloud doesn't need to replace terrestrial data centers for real-time inference. It needs to absorb the overflow of batch and training workloads that terrestrial facilities can't power.
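The latency argument can be checked with two small calculations: the physical lower bound on a ground-to-satellite round trip, and the share of a long-running job that one round trip represents. The 550 km altitude is an assumption chosen as a typical LEO figure, not a published Starcloud orbit, and both function names are hypothetical.

```python
# Rough latency arithmetic for latency-tolerant workloads.
# Assumption: a 550 km altitude with the satellite directly overhead;
# the source does not state Starcloud's actual orbit.

SPEED_OF_LIGHT_KM_S = 299_792.458


def min_round_trip_ms(altitude_km):
    """Lower bound on ground-satellite-ground light travel time."""
    return 2 * altitude_km / SPEED_OF_LIGHT_KM_S * 1_000


def latency_overhead_fraction(latency_ms, job_seconds):
    """Share of a job's wall-clock time taken by one network round trip."""
    if job_seconds <= 0:
        raise ValueError("job_seconds must be positive")
    return (latency_ms / 1_000) / job_seconds


if __name__ == "__main__":
    print(f"Light-time floor at 550 km: {min_round_trip_ms(550):.2f} ms")
    one_hour = 3_600
    share = latency_overhead_fraction(50, one_hour)
    print(f"50 ms on a one-hour job: {share:.6%} of wall-clock time")
```

The light-time floor is only a few milliseconds, so most of the 20-50ms quoted above would presumably come from slant range, routing, and processing rather than distance alone. Either way, a single round trip is a vanishing fraction of a job that runs for hours.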

If even 10-20% of global AI compute demand shifts to orbital infrastructure over the next decade, that's a market worth hundreds of billions of dollars annually. Starcloud is positioning to be the AWS of that market.

Frequently Asked Questions

What is Starcloud and what does it do?

Starcloud builds orbital AI data centers — satellites equipped with high-performance GPUs (currently NVIDIA H100s) that deliver AI compute from low Earth orbit. The company launched its first GPU satellite in November 2025 and has demonstrated AI training, inference, and fine-tuning in space. Its target is an 88,000-satellite constellation delivering compute at $0.05 per kWh using solar power.

How much funding has Starcloud raised?

Starcloud has raised $200M in total. The latest round — a $170M Series A announced March 30, 2026 — valued the company at $1.1B. It was led by Benchmark and EQT, with participation from NVIDIA. This makes Starcloud the fastest Y Combinator company to reach unicorn status, achieving it in approximately 17 months.

How does orbital AI compute compare to terrestrial data centers?

Orbital compute offers three main advantages: near-continuous solar power (target $0.05 per kWh vs. $0.10-0.20/kWh on Earth), near-zero cooling energy (waste heat is radiated to deep space rather than removed by chillers), and no land or permitting constraints. The trade-offs include higher latency (20-50ms for LEO), the engineering complexity of operating hardware in space, including the radiator mass needed to shed heat, and dependence on launch providers for deployment.

Is SpaceX building orbital data centers too?

Yes. Following the xAI-SpaceX merger, SpaceX applied for authorization to deploy 1 million compute satellites. While SpaceX hasn't yet launched compute-specific satellites, its vertical integration — owning launch vehicles, satellite manufacturing, and an AI company (xAI/Grok) — gives it significant structural advantages. However, Starcloud has the first-mover advantage with proven orbital AI workloads.

When will orbital AI data centers be commercially available?

Starcloud's roadmap includes three phases: Starcloud-1 (H100 GPU, proof of concept — completed November 2025), Starcloud-2 (NVIDIA Blackwell chip, production-grade compute — next milestone), and Starcloud-3 (200kW Starship-class satellites, full constellation of 88,000 units). Commercial availability at meaningful scale is likely in the 2028-2030 timeframe, though early-access partnerships (like the Google Gemini inference demonstration) are already operational.

How does Starcloud compare to SpaceX and xAI's orbital compute plans?

Starcloud targets an 88,000-satellite constellation at $0.05 per kWh. SpaceX, following its xAI merger, filed to deploy 1 million compute satellites — 11x more. SpaceX owns its own launch capability (Falcon 9, Starship) while Starcloud buys launches from third parties, giving SpaceX a significant cost and speed advantage at scale. However, Starcloud has already launched operational hardware and proven AI training, Gemini inference, and model fine-tuning in orbit — milestones SpaceX has not yet matched.

Is Starcloud's $1.1B valuation justified compared to other AI infrastructure startups like CoreWeave?

Starcloud reached $1.1B in just 17 months from founding, faster than any Y Combinator company in the accelerator's roughly 20-year history, including Stripe (about 4 years) and Coinbase (about 5 years). While CoreWeave and other terrestrial GPU cloud providers face land, power, and cooling constraints, Starcloud's orbital model is designed to sidestep all three. Benchmark, EQT, and NVIDIA's participation in the $170M Series A suggests institutional confidence in the orbital compute thesis as genuinely differentiated infrastructure.

What AI workloads has Starcloud actually run in space?

Between November 2025 and March 2026, Starcloud completed three world firsts: (1) the first AI model training in orbit using an NVIDIA H100 GPU, (2) the first Gemini model inference run from orbit in collaboration with Google, and (3) the first model fine-tuning off-planet — adjusting weights on a pre-trained foundation model using data uploaded to the satellite. These cover the three core ML workflows: training, inference, and fine-tuning.

How does Starcloud's $0.05 per kWh energy cost compare to terrestrial data centers used by OpenAI or Anthropic?

Starcloud's target of $0.05 per kWh is roughly 50-75% below the $0.10-0.20/kWh that power costs in many terrestrial markets, and lower still than the premium rates AI labs like OpenAI and Anthropic pay in power-constrained markets. Additionally, terrestrial data centers can spend as much as 30-40% of total energy on cooling, a recurring cost that drops to near zero in orbit, where waste heat is radiated to deep space. If the $0.05 per kWh target is met at scale, orbital compute could undercut terrestrial alternatives on unit economics.

What NVIDIA hardware does Starcloud deploy, and what's the upgrade roadmap?

Starcloud-1 launched in November 2025 with an NVIDIA H100 GPU as a proof of concept. Starcloud-2 upgrades to NVIDIA's Blackwell architecture, delivering approximately 4x inference performance per watt — critical for power-constrained solar-powered satellites. Starcloud-3, designed for Starship-class launch vehicles, will carry 200kW of compute per satellite — the equivalent of 5–10 high-density terrestrial racks — across an 88,000-satellite constellation.

Who should pay attention to Starcloud's orbital AI data center development?

AI labs and enterprises constrained by data center power, land, or cooling capacity should monitor Starcloud closely. Organizations running batch inference, background training, or latency-tolerant workloads are the most immediate commercial targets. Investors in terrestrial GPU cloud providers (CoreWeave, Lambda Labs, etc.) and hyperscalers (AWS, Google Cloud, Azure) should track Starcloud as a potential structural disruptor to data center economics, especially as the $0.05 per kWh cost target approaches commercial viability.

What are Starcloud's current limitations and risks vs. established cloud providers?

Starcloud faces significant engineering challenges: radiation hardening for GPUs, thermal cycling stress, satellite-to-ground data transfer latency, orbital debris mitigation, and dependence on Starship for the 200kW Starcloud-3 phase. With $200M raised and a $1.1B valuation, against SpaceX's $350B+ valuation, and with no proprietary launch capability, Starcloud is entirely dependent on third-party launch providers. The 88,000-satellite constellation remains a roadmap target; currently, only one operational satellite exists.

Does Starcloud's orbital compute integrate with existing cloud AI platforms like Google Cloud or AWS?

Starcloud has already demonstrated integration with Google's AI infrastructure by running Gemini model inference from orbit in collaboration with Google. The satellite acted as an edge inference node, suggesting orbital compute can extend existing cloud AI services. Full API-level integration with Google Cloud, AWS, or Azure for production workloads has not been publicly announced as of March 2026, but the Gemini collaboration signals the technical pathway for cloud-to-orbit compute bridging.