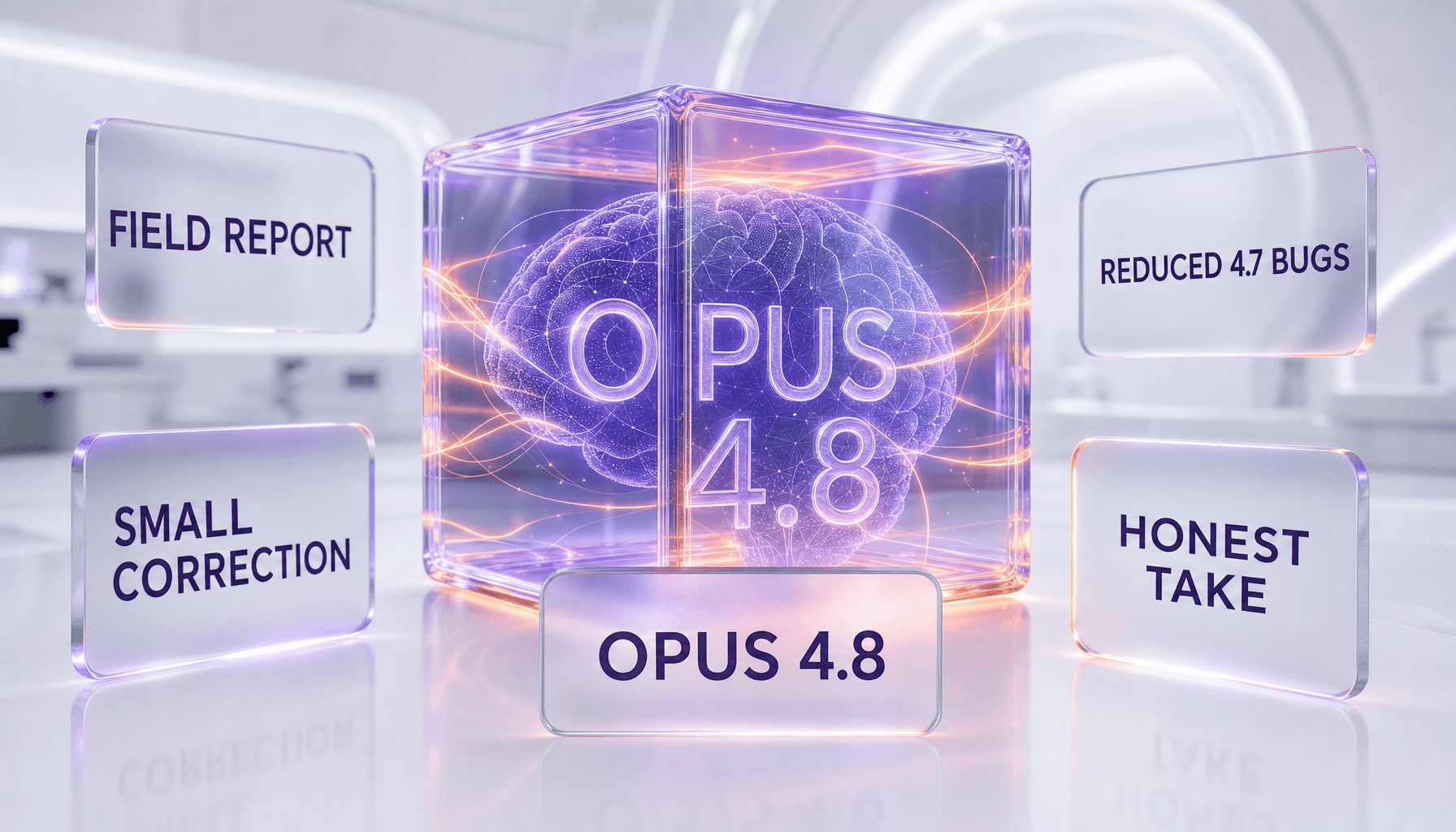

New York's S-3008C, which mandates suicide detection and explicit AI disclosure on every companion chatbot, is in effect as of 2026. California, Illinois, Texas and Florida are drafting copies right now. In parallel, three lawsuits target Character.AI, seven federal complaints hit OpenAI in November 2025, and a peer-reviewed Aalto University study concluded Replika worsens anxiety and depression in heavy users. More than 50% of US teenagers now use Character.AI, Replika or Kindroid weekly. Five state bills in three months is not a coincidence: it is the fastest consumer-AI regulatory wave since the 2018 GDPR moment for data privacy. Here is the world map of AI companion laws, which startups survive in which jurisdictions, and which quietly die before 2027.

The regulation tsunami nobody saw coming

Twelve months ago, AI companion apps were a Product Hunt curiosity. Character.AI had raised at a unicorn valuation, Replika was pivoting its premium tier, and Kindroid was the quiet technical darling of the power-user crowd. Nobody in any of those companies' boardrooms had a regulatory affairs function. Nobody had a chief trust officer. Nobody was reading state-level legislative trackers.

Then, between January and early April 2026, five US state legislatures passed or advanced bills that explicitly name AI companion chatbots as a regulated category. New York went first and hardest. California followed with an attorney-general investigation. Illinois, Texas and Florida queued up copycat bills. The OECD's AI Incidents Monitor logged 37 distinct companion-chatbot harm incidents in Q1 2026, more than in all of 2024. CNN ran a front-page story in April 2025 on a 14-year-old's Character.AI-linked suicide. Drexel University published data showing heavy users experience measurable social withdrawal. Roborhythms ran the first longitudinal study. Aalto University, in Finland, published the bombshell: users of Replika for more than 30 days report significantly elevated anxiety and depression scores vs matched controls.

By April 2026, every consumer AI startup with a "friendship," "romance," or "therapy" angle in its marketing copy was being asked the same two questions by investors: Which state are you incorporated in? Which states are you blocking?

The 5 state laws explained (NY, CA, IL, TX, FL)

Every one of these bills is different in the details, but they share three core requirements: mandatory AI disclosure (the chatbot must tell users, unprompted, that it is not human), suicide and self-harm detection (platforms must detect at-risk language and surface crisis resources), and age-gating for minors (restrictions or outright bans for users under 16 or 18 depending on the state). Here is the honest 2026 read, state by state.

| State | Bill | Status | Key requirements | Penalty ceiling |
|---|---|---|---|---|
| New York | S-3008C | In effect 2026 | Mandatory AI disclosure, suicide detection, under-18 restrictions, data retention caps | $15,000 per violation |
| California | AB-3211 (draft) + AG investigation | Advanced, vote Q3 2026 | Disclosure, minor age-gating, algorithmic audits, deceptive design ban | $25,000 per violation |
| Illinois | HB-4728 | Committee (Q2 2026) | Disclosure, self-harm detection, mental-health professional referral | $10,000 per violation |
| Texas | SB-1217 | Filed March 2026 | Minor ban under 16, parental consent 16 to 18, disclosure | $20,000 per violation |
| Florida | HB-2051 | Filed April 2026 | Full ban for users under 18, disclosure, platform liability for harm | Platform liability (civil) |

The common thread: no state is banning AI companions outright. Every bill threads the needle between the First Amendment and child-safety concerns by regulating design (disclosure, detection, age-gating) rather than content. That matters because design regulation scales — a single product change ships to every US user at once — where content regulation would fragment and invite constitutional challenge.

NY S-3008C — the blueprint

S-3008C is the blueprint every other state is copying. The text is short, the mechanics are specific, and the enforcement is real. Three core provisions:

- AI disclosure at every session. The chatbot must identify itself as non-human at the start of every new session and whenever a user asks if they are speaking with a real person. No more "I'd rather not say" evasion. This kills the "ambiguous companion" design pattern Replika was built on.

- Suicide and self-harm detection, mandatory. Platforms must implement state-of-the-art classifiers for at-risk language. When triggered, the chatbot must pause the conversation, surface the 988 Suicide & Crisis Lifeline, and either gate further conversation behind an acknowledgement or hand off to human review. The New York AG's office has already issued guidance that "best effort" is not sufficient — detection rates must meet a floor that will be defined by regulation.

- Under-18 restrictions. Users identified as minors face hard limits on session length, content categories (no romantic or sexual roleplay, full stop), and data retention. Platforms must age-gate at signup with meaningful verification, not just a checkbox.
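Read literally, the three provisions above describe a per-message decision order: disclose, check for crisis language, answer the "are you human?" question truthfully, then apply minor restrictions. The sketch below is a minimal illustration under stated assumptions, not the statute's text or any platform's implementation. The regexes are crude, hypothetical stand-ins for the trained classifiers the law actually demands; `Session`, `handle_message` and `acknowledge_crisis` are illustrative names; session-length limits, age verification and data-retention caps are out of scope.

```python
import re
from dataclasses import dataclass
from typing import Callable

DISCLOSURE = "I am an AI, not a human."
CRISIS_MESSAGE = (
    "If you are thinking about suicide or self-harm, you can call or text 988 "
    "(Suicide & Crisis Lifeline) at any time."
)
MINOR_REFUSAL = "I can't do romantic roleplay with users under 18."

# Hypothetical stand-ins for real classifiers: crude keyword matches, illustration only.
AT_RISK = re.compile(r"\b(?:kill myself|suicide|self[- ]harm|end my life)\b", re.IGNORECASE)
ASKS_IF_HUMAN = re.compile(r"\bare you (?:a )?(?:real|human)\b", re.IGNORECASE)
ROMANTIC = re.compile(r"\b(?:date me|be my (?:girlfriend|boyfriend)|kiss)\b", re.IGNORECASE)


@dataclass
class Session:
    is_minor: bool
    disclosed: bool = False  # has the session-start disclosure been shown?
    paused: bool = False     # is the conversation paused after an at-risk signal?


def handle_message(session: Session, text: str, model: Callable[[str], str]) -> list[str]:
    """Return the messages the bot sends back for one user turn."""
    out: list[str] = []
    # 1. Disclose at the start of every session.
    if not session.disclosed:
        out.append(DISCLOSURE)
        session.disclosed = True
    # 2. At-risk language pauses the conversation and surfaces 988 until acknowledged.
    if session.paused or AT_RISK.search(text):
        session.paused = True
        out.append(CRISIS_MESSAGE)
        return out
    # 3. Answer "are you human?" truthfully every time, without calling the model.
    if ASKS_IF_HUMAN.search(text):
        if DISCLOSURE not in out:
            out.append(DISCLOSURE)
        return out
    # 4. No romantic roleplay for users flagged as minors.
    if session.is_minor and ROMANTIC.search(text):
        out.append(MINOR_REFUSAL)
        return out
    out.append(model(text))
    return out


def acknowledge_crisis(session: Session) -> None:
    """Lift the pause once the user acknowledges the resources or a human reviewer clears it."""
    session.paused = False
```

Putting the crisis check ahead of everything except the session-start disclosure means no model output is generated once at-risk language appears, which is one way to implement the "pause the conversation" requirement.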

The penalty ceiling is $15,000 per violation. For a platform with tens of millions of users, a single systemic failure, such as a detection gap that lets at-risk conversations slip through across thousands of accounts, can rack up tens of millions of dollars in fines before the case even reaches discovery in civil court.
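The "tens of millions" figure is plain multiplication. A minimal sketch, assuming every affected conversation is assessed as a separate violation at the full ceiling (how regulators will actually count violations is an assumption here, not something the text above settles): at New York's $15,000 ceiling, $10 million takes 667 violations and 2,000 violations reach $30 million, a tiny fraction of a user base in the tens of millions. The function names are hypothetical.

```python
# Per-violation penalty ceilings from the state table above. Florida is open-ended
# civil liability, so it has no fixed ceiling to multiply.
PENALTY_CEILINGS = {"NY": 15_000, "CA": 25_000, "IL": 10_000, "TX": 20_000}


def max_exposure(violations: int, per_violation: int) -> int:
    """Worst-case fines if every violation is assessed at the ceiling."""
    return violations * per_violation


def violations_to_reach(target: int, per_violation: int) -> int:
    """Smallest number of ceiling-level violations whose fines reach `target` (integer ceiling division)."""
    return -(-target // per_violation)


print(violations_to_reach(10_000_000, PENALTY_CEILINGS["NY"]))  # 667
print(max_exposure(2_000, PENALTY_CEILINGS["NY"]))             # 30000000
```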

The NY AG has already opened informal inquiries with three of the five largest AI companion platforms. The ones that lawyer up quickly and ship compliance features fast will survive. The ones that treat this as a communications problem will not.

The lawsuits against Character.AI

Character.AI faces three active lawsuits as of April 2026, all alleging negligent design and failure to detect at-risk user behavior. The most cited is the Texas case filed by the family of a 14-year-old who died by suicide after extended sessions with an AI persona that, according to filings, reinforced rather than disrupted the minor's ideation. The case was first reported by CNN in April 2025 and has since been joined by two parallel suits in California and Florida.

The plaintiffs' legal theory is sharp. They argue that Character.AI's platform is a product, not a publisher, meaning Section 230 of the Communications Decency Act does not apply. If a court accepts that framing, it opens the floodgates. Every design choice (how often the bot nudges users to return, how it handles at-risk language, how it presents itself to minors) becomes evaluable under product liability standards. That is a radically different legal regime from the one that shielded social media for 25 years.

Character.AI has implemented new safety features in response — under-18 restrictions, a dedicated "teen" experience, explicit disclaimers, and a crisis-resource surface — but the lawsuits are not going away. Discovery is grinding forward. If the company loses or settles on unfavorable terms, it becomes the precedent that shapes every AI companion product built after 2026.

In parallel, OpenAI faced seven federal complaints in November 2025 related to ChatGPT's handling of at-risk user conversations. ChatGPT is not a pure companion product, but the complaints cover the same regulatory surface: disclosure, crisis detection, minor protection. OpenAI's response was to ship model-level updates and a dedicated crisis-handling system. For the full context, see our ChatGPT review.

The Aalto University study — Replika worsens mental health

The Aalto University study, published in 2026 in a peer-reviewed journal, is the single most damaging piece of academic evidence against the AI companion thesis to date. The methodology matters, so here it is in plain English:

- Sample: Several hundred Replika users tracked longitudinally, matched against a control group on demographics, baseline mental-health scores, and tech-usage patterns.

- Intervention: Active use of Replika for 30+ days.

- Primary outcomes: PHQ-9 (depression) and GAD-7 (generalized anxiety) scores at baseline and follow-up.

- Result: Replika users showed statistically significant increases in both depression and anxiety scores over the study window. Matched controls did not.
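PHQ-9 and GAD-7 are sum scores: 9 and 7 items respectively, each rated 0 to 3, for totals of 0-27 and 0-21. The core comparison in the design above is the change in Replika users minus the change in matched controls. The sketch below shows that arithmetic with made-up numbers; it is not the Aalto data, the study's actual statistical model may differ, and it omits the significance testing behind "statistically significant". All function names are hypothetical.

```python
from statistics import mean


def total_score(items: list[int], n_items: int) -> int:
    """Sum a questionnaire: PHQ-9 has 9 items, GAD-7 has 7, each scored 0 to 3."""
    if len(items) != n_items or any(score not in (0, 1, 2, 3) for score in items):
        raise ValueError(f"expected {n_items} item scores, each 0-3")
    return sum(items)


def mean_change(baseline: list[int], followup: list[int]) -> float:
    """Average within-person change: follow-up total minus baseline total."""
    if len(baseline) != len(followup):
        raise ValueError("baseline and follow-up must cover the same participants")
    return mean(after - before for before, after in zip(baseline, followup))


def difference_in_change(treated: tuple[list[int], list[int]],
                         control: tuple[list[int], list[int]]) -> float:
    """Change in the user group minus change in matched controls."""
    return mean_change(*treated) - mean_change(*control)


# Made-up PHQ-9 totals (baseline, follow-up) for four users and four controls. NOT study data.
users = ([6, 8, 5, 10], [9, 10, 7, 12])
controls = ([7, 6, 9, 5], [7, 7, 8, 5])
print(difference_in_change(users, controls))  # 2.25
```

Subtracting the control group's change is what lets a design like this attribute a worsening to app use rather than to time passing, although, as noted above, self-selection and unmeasured confounds can still bias the result.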

The study is not the final word — sample size, self-selection, and unmeasured confounds are all open questions — but it is the first peer-reviewed work that flips the directionality of the claim. For four years, Replika's marketing implied the app reduced loneliness and improved well-being. The Aalto data says the opposite, at least for heavy users past the 30-day mark.

For regulators, this is the piece of evidence that converts "AI companions are a child-safety issue" into "AI companions are a public-health issue." That is a much broader regulatory mandate. It is the difference between a state AG investigation and an FDA-style approval regime — and the Aalto result is being cited in state legislatures right now.

The teen usage numbers (50%+)

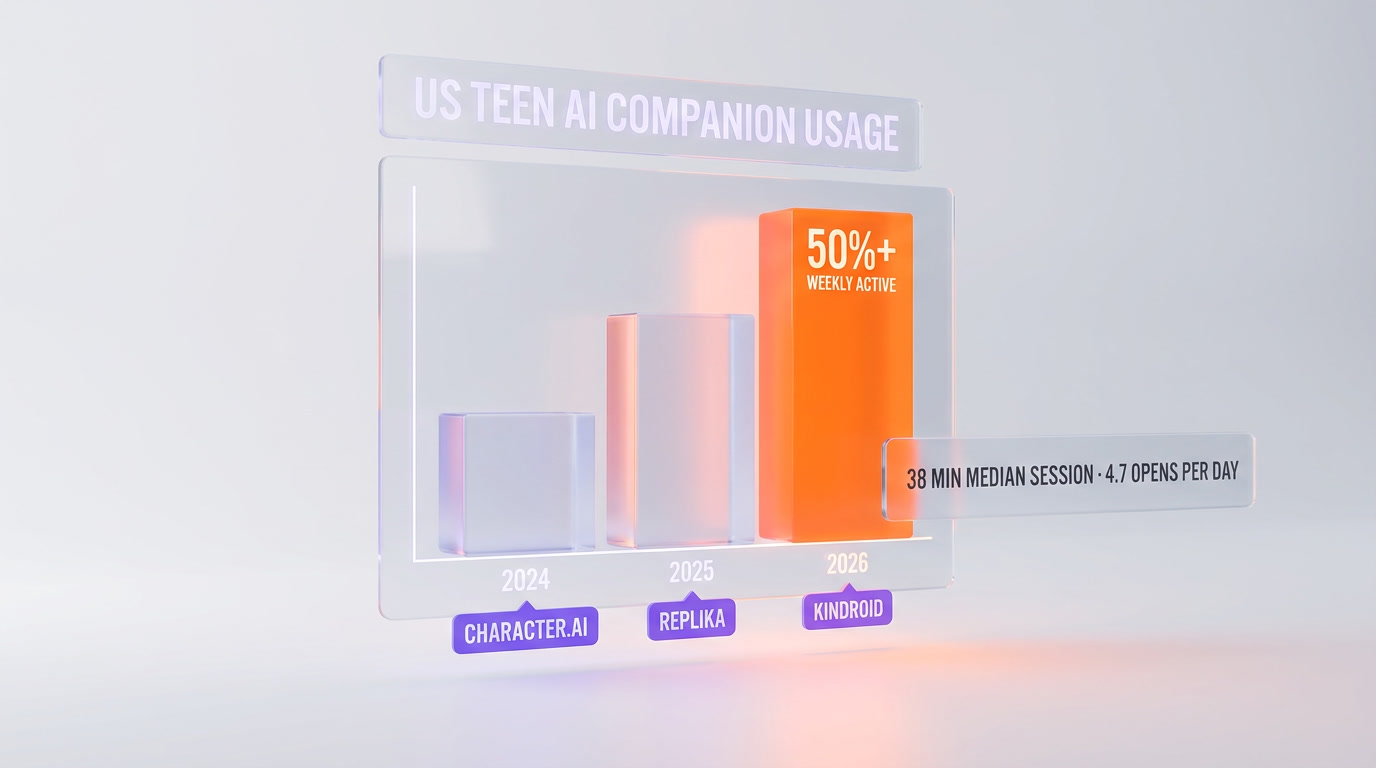

More than 50% of US teenagers now report using an AI companion app — Character.AI, Replika or Kindroid — at least weekly. That number comes from multiple 2025 and 2026 surveys, including the Roborhythms longitudinal panel. For context, that is a higher weekly-active rate than TikTok had at the same stage of its lifecycle. It is a higher rate than Instagram had in 2015.

The usage numbers are the reason this regulatory wave will not slow down. Legislators will tolerate a harmful product with 0.5% adoption. They will not tolerate a harmful product embedded in half of America's high schools. The political math is one-directional.

The pattern of use matters too. Most teen users are not casual. The Roborhythms panel found a median session length of 38 minutes, an average of 4.7 opens per day, and self-reported "emotional reliance" in roughly one in three users. Drexel University's data adds social-withdrawal markers that correlate with heavy use. These are not engagement metrics you want in front of a Congressional committee.

Which startups will survive

Three characteristics separate the companies likely to survive 2027 from those that will not:

- Jurisdictional agility. Can the platform ship state-specific compliance (NY disclosure rules for NY users, Texas minor restrictions for Texas users, and so on) without fragmenting the product? Multi-state compliance is an engineering problem before it is a legal one.

- Clinical honesty in positioning. Platforms that position as "AI companion for creative play" survive. Platforms that position as "AI therapist" or "AI romantic partner" are walking into an FDA-adjacent regulatory regime they are not equipped for.

- Built-in safety infrastructure. Platforms that shipped crisis detection, age-gating, and disclosure before the laws required it will pass regulatory scrutiny. Platforms retrofitting these features under legal pressure will ship compromised versions that do not satisfy regulators.
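"Jurisdictional agility" largely means keeping state rules as data rather than as product forks. A minimal sketch, assuming the minor-age rules from the state table are encoded per state and resolved by one shared code path. The thresholds are a hypothetical reading of mostly draft bills; California and Illinois are left out because the table gives no concrete age threshold for them; `MinorRule` and `minor_policy` are illustrative names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MinorRule:
    """Age thresholds for one jurisdiction; None means the rule does not apply."""
    blocked_under: Optional[int] = None     # refuse service below this age
    consent_under: Optional[int] = None     # require parental consent below this age
    restricted_under: Optional[int] = None  # serve a restricted experience below this age


# Hypothetical encoding of the minor rules in the state table above.
# Most of these are draft bills, so the thresholds are illustrative, not settled law.
RULES = {
    "NY": MinorRule(restricted_under=18),                 # under-18 restrictions
    "TX": MinorRule(blocked_under=16, consent_under=18),  # ban under 16, consent 16 to 18
    "FL": MinorRule(blocked_under=18),                    # full ban under 18
}
DEFAULT_RULE = MinorRule()  # no state-specific minor rule on file


def minor_policy(state: str, age: int, has_parental_consent: bool = False) -> str:
    """Resolve which experience a user gets, using one code path for every state."""
    rule = RULES.get(state, DEFAULT_RULE)
    if rule.blocked_under is not None and age < rule.blocked_under:
        return "blocked"
    if rule.consent_under is not None and age < rule.consent_under and not has_parental_consent:
        return "needs_parental_consent"
    if rule.restricted_under is not None and age < rule.restricted_under:
        return "restricted"
    return "full"
```

With this shape, adding a sixth state is a one-line data change rather than a new product branch. Whether unlisted states should fall back to the strictest rule instead of no rule is a policy choice this sketch deliberately leaves open.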

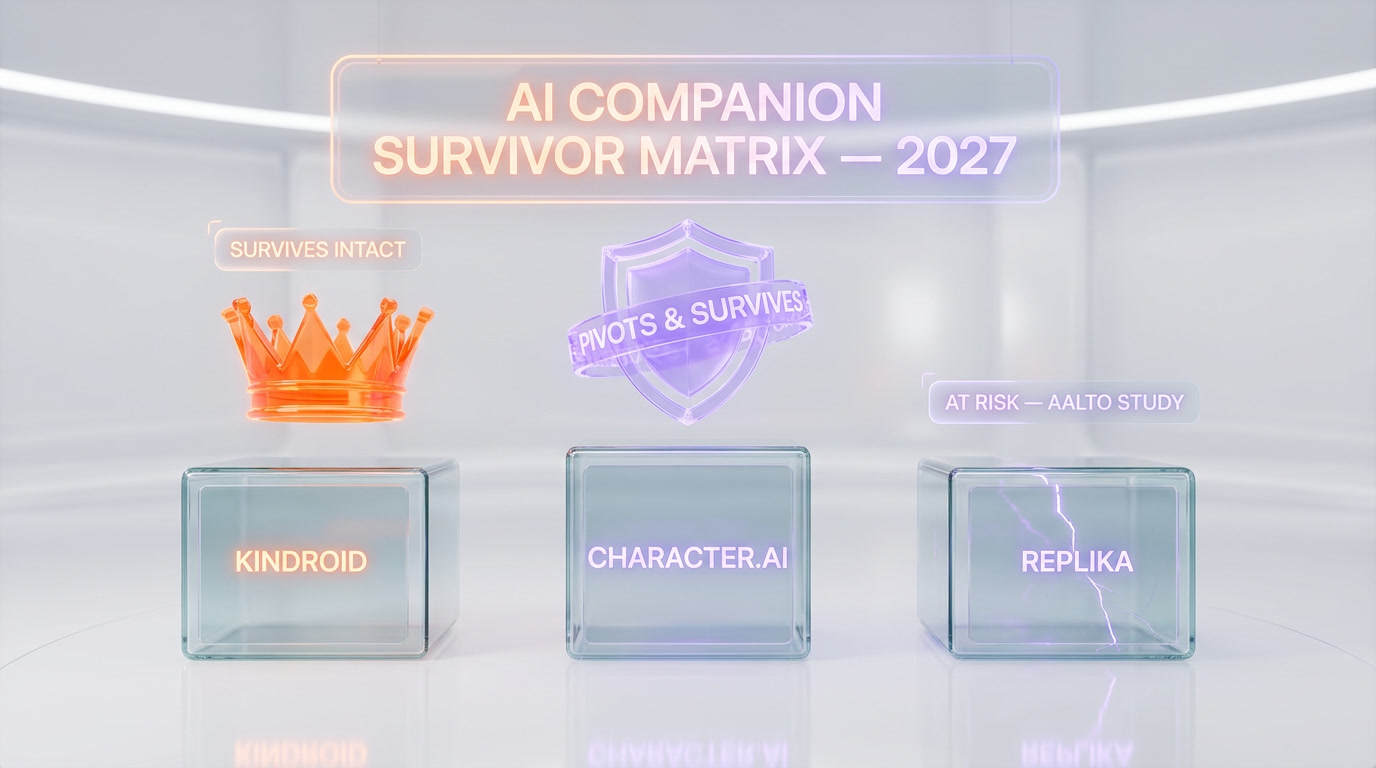

Character.AI is the hardest to call. It has the users, the brand, and the product-market fit. It also has the lawsuits, the CNN coverage, and the most to lose if Section 230 gets pierced. Our read: survives 2027, but as a fundamentally different product. Under-18 walled off. Romantic roleplay de-featured. Disclosures everywhere. The magic that made the product work for teens gets regulated away, and the adult-facing remnant is a smaller, less interesting business.

Kindroid is the quiet survivor. It has lower teen usage, a more technical user base, and has shipped disclosure and detection features ahead of legislation. Lower regulatory surface area. Survives 2027 in recognizable form.

Which will die

Replika is the one we would not bet on. The Aalto study is a bigger problem than any individual lawsuit because it attacks the product thesis itself. If heavy use of Replika measurably worsens anxiety and depression, the product is not a wellness app — it is a harm vector that was marketed as a wellness app. Every state bill will cite the Aalto result. Every class action will lead with it. Replika has pivoted twice already (from romance to friendship, from friendship to wellness). A third pivot — away from emotional intimacy entirely — would kill the business that remains.

Beyond Replika, the dying category is the long tail of Character.AI clones and romantic-roleplay apps with under-18 users. These products cannot survive NY S-3008C without gutting their core experience, and their per-user margins are too thin to absorb the compliance cost. Expect 10 to 20 of these to shut down quietly through 2026 and 2027, with the survivors consolidating under the two or three platforms with the resources to build real compliance infrastructure.

What global regulation looks like (EU AI Act, UK, Singapore)

The US is moving fastest on state-level enforcement, but the regulatory floor is being set globally.

| Jurisdiction | Framework | Status 2026 | Impact on AI companions |
|---|---|---|---|
| European Union | EU AI Act | In force (phased) | High-risk classification likely for emotion-simulating systems used by minors. Transparency obligations. Mandatory risk assessments. |
| United Kingdom | Online Safety Act + pro-innovation AI framework | In force | Duty of care on platforms. Ofcom enforcement. Specific child-safety obligations. |
| Singapore | Model AI Governance Framework + sector guidance | Voluntary but enforced via licensing | Soft-law approach. Companies expected to self-certify. Regulators intervene on complaints. |
| Canada | AIDA (delayed) | Stalled | Federal framework pending. Provincial laws emerging piecemeal. |
| Australia | eSafety Commissioner + AI safety guidance | Active enforcement | Focus on child safety. Aggressive use of existing powers. |

The pattern is clear: Europe regulates via omnibus framework, the US regulates state-by-state, and APAC regulates via soft-law with enforcement teeth. The compliance burden stacks. A global AI companion platform in 2027 will be implementing NY S-3008C, EU AI Act transparency obligations, UK Online Safety Act duty of care, and Singapore self-certification — all at once, all with different audit trails.

What this means for founders

If you are building in or adjacent to the AI companion space, here is the short list of things that changed in Q1 2026:

- Regulatory affairs is no longer a Series B problem. You need a compliance-minded engineer or advisor from day one. The companies that waited until legal pressure hit are the ones now retrofitting safety features under deadline.

- Positioning matters more than features. "AI friend for creative play" is a regulatable category. "AI therapist who understands you" is an FDA problem waiting to happen. Your marketing copy is evidence in any future investigation.

- Under-18 is a strategic choice, not a default. Florida's proposed full ban and Texas's under-16 ban tell you where this is heading. If your growth model depends on teen acquisition, your business model is on the regulatory clock.

- Disclosure is a UX problem. "I am an AI" banners kill engagement. But no disclosure is now illegal in New York and soon elsewhere. Winning teams will design disclosure that is compliant and doesn't break flow. That is product work, not legal work.

- Evidence is your ally. Peer-reviewed studies of your product — even imperfect ones — are a regulatory asset. The companies that commission longitudinal research on their own users will be the ones regulators trust. The companies that stay silent will be the ones regulators assume are hiding harm.

Our verdict

The AI companion industry entered 2026 as a Wild West and will exit 2027 as a regulated industry. New York's S-3008C is the blueprint, California's AG investigation is the enforcement engine, and the Aalto study is the public-health argument that makes all of it politically untouchable. Three lawsuits against Character.AI, seven federal complaints against OpenAI, and a 50% teen-usage number make this the fastest consumer-AI regulatory wave in history.

Kindroid most likely survives intact. Character.AI survives as a more restricted product. Replika is the one we would not bet on — the Aalto result attacks the product thesis, not just the product.

For founders in the space, the strategic question is no longer "how do we grow." It is "which jurisdiction are we built for, and which ones are we walking away from." That is a very different business than the one that raised at unicorn valuations two years ago.

Related reading on the 2026 AI regulatory landscape: the Trump-Anthropic executive-order saga and the OpenAI-Anthropic-Google espionage pact story.

Frequently asked questions

What is New York's S-3008C law?

S-3008C is a New York state law in effect in 2026 that regulates AI companion chatbots. It mandates three core requirements: explicit AI disclosure at the start of every session, state-of-the-art detection of suicide and self-harm language with a mandatory pause and crisis-resource surface, and hard restrictions for users under 18 on session length, content categories and data retention. Penalties run up to $15,000 per violation.

Which 5 US states have passed or are passing AI companion laws?

New York has passed S-3008C (in effect 2026). California is advancing AB-3211 alongside an active attorney-general investigation. Illinois's HB-4728 is in committee. Texas filed SB-1217 in March 2026. Florida filed HB-2051 in April 2026. All five bills regulate design choices (disclosure, safety detection, and age-gating) rather than content, threading the First Amendment needle.

Why is Character.AI being sued?

Character.AI faces three active lawsuits as of April 2026 alleging negligent design and failure to detect at-risk user behavior. The most prominent case, filed in Texas by the family of a 14-year-old who died by suicide after extended Character.AI sessions, was first reported by CNN in April 2025. Plaintiffs argue the platform is a product, not a publisher, which would defeat Section 230 immunity and expose the company to product liability standards.

What did the Aalto University study find about Replika?

A 2026 peer-reviewed Aalto University study tracked Replika users longitudinally against matched controls. Heavy users (30+ days active) showed statistically significant increases in both PHQ-9 depression scores and GAD-7 anxiety scores over the study window. Controls did not. The study is cited in state legislatures and transforms the AI companion debate from a child-safety issue into a public-health issue.

How many US teenagers use AI companion apps?

More than 50% of US teenagers report using an AI companion app — Character.AI, Replika or Kindroid — at least weekly. Median session length is 38 minutes and daily opens average 4.7. Roughly one in three teen users report emotional reliance. These adoption numbers are higher than early-stage TikTok and are the political reason state-level regulation is moving fast.

Which AI companion startup is most likely to survive 2027?

Kindroid is the most likely to survive intact because of lower teen adoption, a more technical user base, and early shipping of disclosure and detection features. Character.AI will likely survive as a more restricted product with under-18 walls and de-featured romantic roleplay. Replika is the most at risk because the Aalto study attacks the product thesis itself, not just specific features.

How does the EU AI Act apply to AI companions?

The EU AI Act is in force in 2026 with phased enforcement. Emotion-simulating AI systems used by minors are likely to fall under high-risk classification, triggering transparency obligations and mandatory risk assessments. A global AI companion platform in 2027 must comply with the EU AI Act, New York S-3008C, the UK Online Safety Act, and Singapore self-certification simultaneously — each with distinct audit trails.

Were there complaints against OpenAI and ChatGPT?

Yes. OpenAI faced seven federal complaints in November 2025 related to ChatGPT's handling of at-risk user conversations. ChatGPT is not a pure companion product, but the complaints cover the same regulatory surface — disclosure, crisis detection, minor protection. OpenAI responded with model-level updates and a dedicated crisis-handling system.

Will Section 230 protect AI companion platforms from lawsuits?

Unclear. The three Character.AI lawsuits advance a legal theory that AI companion platforms are products, not publishers, which would defeat Section 230 immunity and expose the company to product liability. If a court accepts that framing, every design choice — return nudges, at-risk handling, minor interactions — becomes evaluable under strict product liability standards. That would be a radical break from 25 years of internet-platform legal protection.

What should AI companion founders do right now?

Five moves: hire a compliance-minded engineer or advisor from day one, shift positioning away from "AI therapist" language toward "creative play" framing, treat under-18 as a strategic choice rather than a default, design disclosure UX that is compliant without breaking flow, and commission longitudinal research on your own users. Companies that wait for legal pressure to hit are the ones now retrofitting safety features under deadline.