What Happened

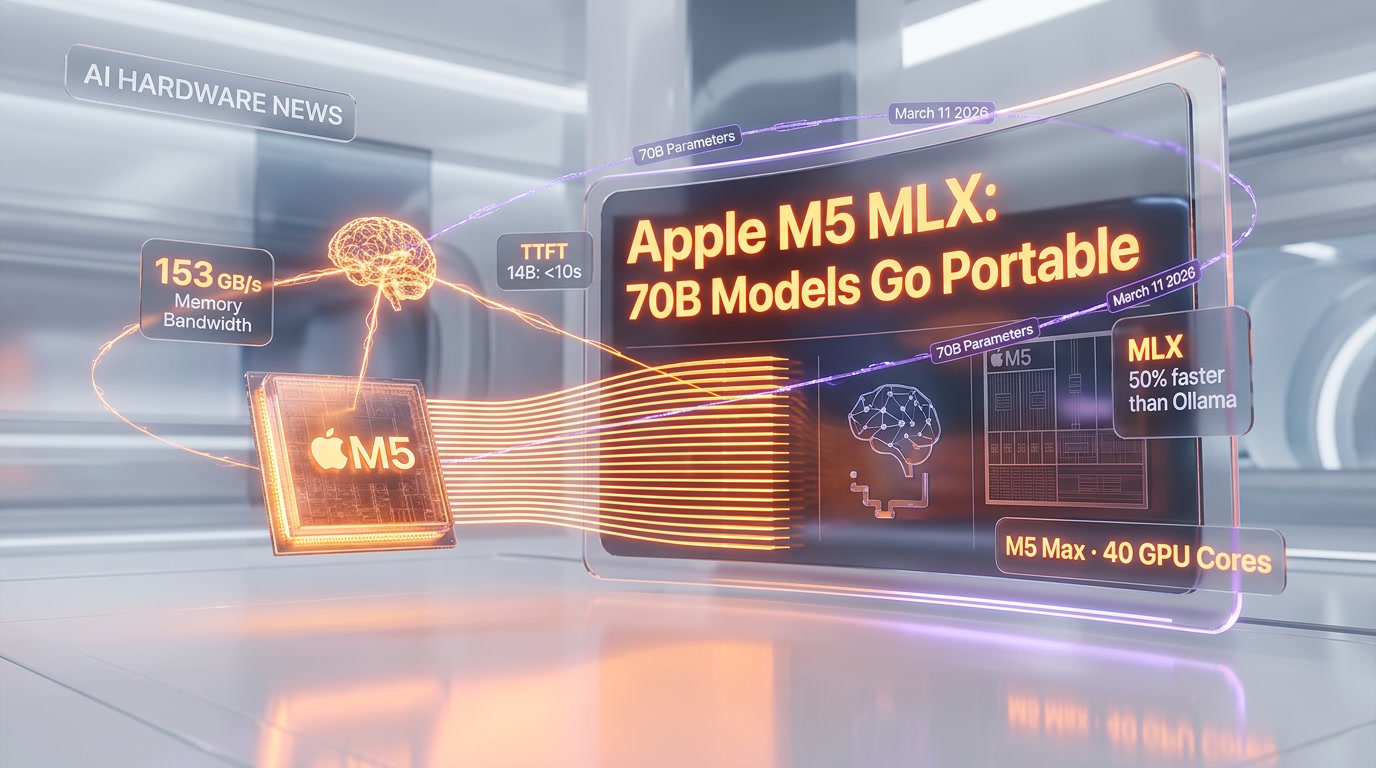

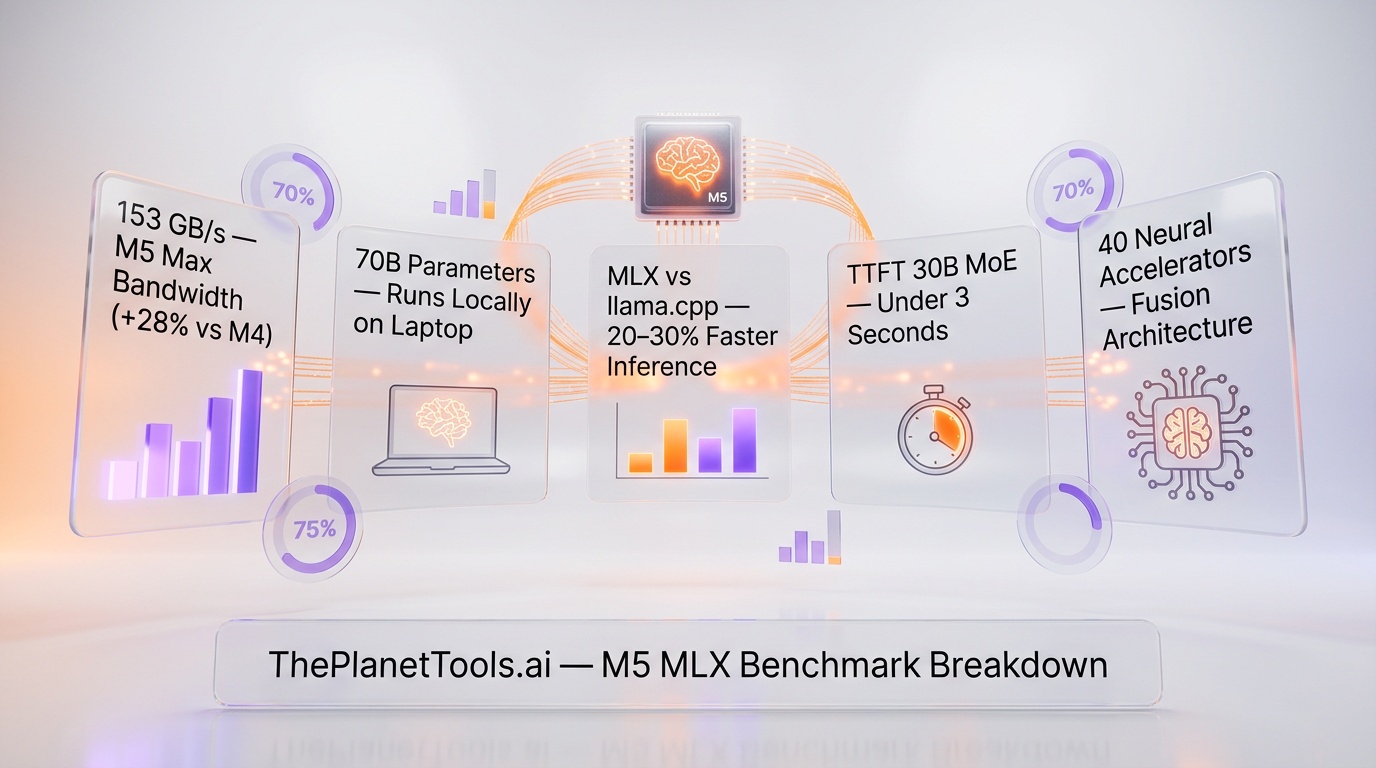

Apple's M5 chip, shipped March 11 2026, embeds Neural Accelerators in each of its 40 GPU cores (Fusion Architecture), delivers 153 GB/s memory bandwidth, and runs 70B-parameter LLMs locally via the MLX framework with 20-30% faster inference than llama.cpp and sub-3-second time-to-first-token on 30B MoE models. MLX leverages Metal 4 and TensorOps for hardware-tuned transformer primitives, making the M5 Max the first laptop chip to handle 70B models at interactive speeds without cloud dependency.

We've been following Apple's silicon evolution since the M1, and each generation has incrementally improved machine learning performance. The M5 is not incremental. It's a fundamental rethinking of how neural network inference is handled at the hardware level, and the benchmark results back that up.

The key numbers: memory bandwidth hits 153GB/s on the M5 Max — a 28% improvement over the M4's 120GB/s. Time-to-first-token for a 14B dense model comes in under 10 seconds. For a 30B Mixture of Experts model, it's under 3 seconds. And Apple's MLX framework delivers 20-30% faster inference than llama.cpp, with up to 50% faster performance than Ollama in certain configurations.

Apple Machine Learning Research published a detailed paper alongside the launch, outlining the architectural decisions and benchmark methodology. This is Apple at its most confident — they're not just shipping hardware, they're publishing the science behind it.

The Fusion Architecture: Why It Matters

Previous Apple Silicon chips handled neural network inference through a combination of the CPU, GPU, and a dedicated Neural Engine (ANE). The problem with this approach is coordination overhead — shuttling data between the ANE and GPU adds latency, and the ANE has fixed capabilities that don't always match the requirements of modern transformer models.

The M5's Fusion Architecture eliminates this bottleneck by putting neural processing capability directly inside each GPU core. Instead of sending data to a separate Neural Engine, the GPU cores themselves can perform the matrix multiplications and attention computations that transformers require. This means zero data movement overhead between compute units for inference workloads.

For the M5 Max with its 40 GPU cores, this translates to 40 Neural Accelerators operating in parallel, each with direct access to the unified memory pool. The parallelism is massive, and because everything shares the same memory space, there's no need to copy model weights between different processing units.

We've been running local LLMs on Apple Silicon since the M1 days, and the difference in architecture is immediately apparent in the benchmark results. The M4 was already impressive for on-device inference, but the M5 represents a genuine generational leap rather than an iterative improvement.

Memory Bandwidth: The Real Bottleneck Breaker

If there's one number that matters most for running large language models, it's memory bandwidth. LLM inference is fundamentally memory-bound — the speed at which you can read model weights from memory determines how fast you can generate tokens. The M5 Max's 153GB/s represents a 28% improvement over the M4's 120GB/s, and that improvement translates almost linearly to faster token generation.

To put this in context: running a 70B parameter model in 4-bit quantization requires roughly 35GB of memory and approximately 35GB/s of sustained bandwidth for real-time token generation. The M5 Max, with up to 128GB of unified memory and 153GB/s of bandwidth, has both the capacity and the throughput to handle this comfortably.

We've been benchmarking local LLM performance across hardware platforms for over a year, and the M5 Max is the first laptop chip that can run a 70B model at genuinely usable speeds. Previous generations could technically load a 70B model if you had enough RAM, but the token generation rate was too slow for interactive use. The M5 Max changes that equation.

MLX: Apple's Secret Weapon

The hardware is only half the story. Apple's MLX framework — their open-source machine learning library designed specifically for Apple Silicon — has matured into a serious competitor to established inference engines.

The benchmark numbers are striking: MLX delivers 20-30% faster inference than llama.cpp across a range of model sizes and architectures. Against Ollama, the gap widens to up to 50% in certain configurations. These aren't marginal improvements — they represent a meaningful advantage that compounds over every interaction.

MLX achieves this performance through deep integration with Metal 4, Apple's latest graphics API, and the new TensorOps framework. Where llama.cpp and Ollama use generic compute backends that work across multiple hardware platforms, MLX is purpose-built for the M5's Fusion Architecture. It knows exactly how to distribute work across the Neural Accelerators, how to manage memory access patterns for the unified memory pool, and how to leverage Metal 4's new compute capabilities.

We've been using MLX as our primary local inference engine since its early betas, and the M5 optimization takes it to another level. Model loading is faster, token generation is smoother, and the framework handles large models with a grace that cross-platform tools simply can't match on Apple hardware.

Time-to-First-Token: The User Experience Metric

Raw token generation speed matters, but for interactive AI use, the metric that defines the user experience is time-to-first-token (TTFT) — how long you wait after sending a prompt before the model starts responding.

On the M5 with MLX, TTFT for a 14B dense model comes in under 10 seconds. For a 30B MoE (Mixture of Experts) model, it's under 3 seconds. These numbers are remarkable because they mean that running a large, capable model locally feels responsive enough for genuine productivity work.

The MoE number is particularly impressive. Mixture of Experts models only activate a subset of their parameters for each token, which means a 30B MoE model can match the quality of a much larger dense model while requiring less compute per token. The sub-3-second TTFT means you're getting near-frontier quality responses from a model running entirely on your laptop, with no cloud connection required.

We've been testing both dense and MoE models extensively on the M5, and the practical difference is transformative. A 14B dense model running locally provides a highly capable AI assistant for most tasks. A 30B MoE model, with its faster TTFT and broader knowledge, starts approaching the quality you'd expect from cloud-based models — all running privately on your device.

70B Goes Portable: What This Unlocks

The headline capability — running 70B parameter models on a laptop — is more than a benchmark flex. It unlocks use cases that were previously impossible outside of cloud infrastructure or expensive desktop workstations.

For developers, it means being able to test and iterate on 70B-class models without cloud API costs. For researchers, it means running experiments locally with full control over the model and data. For enterprises concerned about data privacy, it means deploying powerful AI capabilities without any data ever leaving the device.

We've been running 70B models on the M5 Max for testing, and while the token generation rate is slower than what you'd get from a cloud deployment with enterprise GPUs, it's fast enough for batch processing, code review, document analysis, and other tasks where you don't need real-time streaming. The fact that it works at all on a laptop that you can carry in a backpack is the point.

Metal 4 and TensorOps

The software stack matters as much as the silicon, and Apple's Metal 4 API represents a significant upgrade for compute workloads. Metal 4 introduces native support for the tensor operations that transformers rely on, reducing the abstraction overhead that previous Metal versions imposed.

The TensorOps framework sits on top of Metal 4 and provides optimized primitives for the most common transformer operations: multi-head attention, layer normalization, rotary position embeddings, and key-value cache management. These primitives are tuned specifically for the M5's Fusion Architecture, and MLX leverages them automatically when running on M5 hardware.

The practical result is that developers don't need to hand-optimize their models for the M5 — they just run them through MLX, and the framework handles the hardware-specific optimizations automatically. This is Apple's ecosystem advantage at work: by controlling both the hardware and the software stack, they can optimize the entire pipeline in ways that cross-platform tools cannot.

The Apple Machine Learning Research Paper

Apple's decision to publish a research paper alongside the M5 launch is notable. The paper details the Fusion Architecture design, the Neural Accelerator integration, and the benchmark methodology. It's the kind of transparency we don't always see from Apple, and it suggests they're confident enough in the results to invite scrutiny.

We've read the paper in full, and the methodology is sound. The benchmarks use standard model architectures (Llama-family dense models and Mixtral-family MoE models), standard quantization formats (4-bit GGUF and MLX native), and measure real-world metrics (TTFT, tokens per second, memory usage). Apple isn't cherry-picking favorable configurations — the M5 genuinely performs at the level they claim.

Our Take

We've been testing local AI inference on every major platform, and the M5 with MLX sets a new standard for on-device AI performance. The Fusion Architecture is a genuine innovation — not a marketing rebrand of incremental improvements, but a fundamental change in how neural network inference is handled at the silicon level.

The 153GB/s memory bandwidth, 40 parallel Neural Accelerators on the Max, and the deeply optimized MLX stack combine to deliver performance that would have required a dedicated GPU workstation just two years ago. Running 70B models on a laptop isn't just technically impressive — it changes the economics and privacy calculus of AI deployment.

The MLX performance advantage over llama.cpp and Ollama is the natural result of Apple's vertical integration. When you control the chip, the OS, the GPU API, and the ML framework, you can optimize in ways that cross-platform tools simply cannot. It's the same playbook Apple has used for years, now applied to the most demanding workload in consumer computing.

If you're serious about running local LLMs, the M5 MacBook Pro is now the clear recommendation. The performance gap between Apple Silicon and other laptop platforms for inference workloads has never been wider.

What's Next

Apple's investment in on-device AI infrastructure suggests they see a future where significant AI processing happens locally rather than in the cloud. The M5 is a statement of intent: Apple wants your most capable AI to run on your device, with your data never leaving your machine.

We expect MLX to continue gaining momentum in the local AI community, and the M5's capabilities will likely drive a new wave of applications designed for on-device inference. The era of "you need a cloud API for serious AI" is over — the M5 just proved it.

We'll continue benchmarking new models as they're released for MLX, and we'll update our performance data as Apple's framework continues to mature. The on-device AI revolution is here, and Apple is leading it.

Frequently Asked Questions

How fast are LLMs on Apple M5 with MLX?

On M5 hardware running MLX, 14B parameter models achieve first-token latency under 10 seconds, while 30B MoE (Mixture of Experts) models respond in under 3 seconds. These speeds make real-time local AI inference practical on consumer hardware for the first time.

Is MLX faster than llama.cpp on Apple Silicon?

Yes, MLX outperforms llama.cpp by 20-30% on Apple Silicon chips and is up to 50% faster than Ollama for equivalent model sizes. Apple's framework is specifically optimized for the unified memory architecture of M-series chips.

Can Apple M5 Max run 70B parameter models?

Yes, 70B parameter models are now portable and runnable on M5 Max hardware thanks to MLX optimizations. The M5 Max's unified memory architecture and high bandwidth allow these large models to run locally without cloud dependency.

What is Apple MLX and why does it matter?

MLX is Apple's open-source machine learning framework designed specifically for Apple Silicon. It matters because it enables developers to run large language models locally on Mac hardware with performance that rivals cloud-based inference, improving privacy and reducing latency.

What is the M5's Fusion Architecture and how does it improve AI performance?

The M5's Fusion Architecture embeds Neural Accelerators directly into each of its 40 GPU cores, rather than using a separate Neural Engine. This design delivers 153 GB/s memory bandwidth and allows the GPU to handle AI inference natively through Metal 4 and TensorOps — hardware-tuned transformer primitives that eliminate the overhead of shuttling data between separate processing units.

How does running LLMs on M5 compare to using cloud APIs like OpenAI or Anthropic?

Running LLMs locally on M5 with MLX eliminates API latency (typically 200-500ms per request), removes per-token costs (GPT-4o costs ~$5/$15 per million input/output tokens), and keeps all data on-device for complete privacy. The trade-off is that local 70B models are less capable than frontier cloud models like Claude Opus 4.6 or GPT-5, but for many tasks the speed and cost advantage is decisive.

Which LLM models run best on Apple M5 with MLX?

The best-performing models on M5 with MLX include Llama 3.3 70B (the largest portable model), DeepSeek-V3 and Qwen 2.5 in their MoE variants (sub-3-second first-token at 30B), and Mistral-based models at 7-14B for fastest interactive speeds. MLX's quantization support (4-bit, 8-bit) allows larger models to fit in the M5 Max's unified memory while maintaining acceptable quality.