Apple's Q2 2026 Mac line booked $8.4 billion in revenue, up 6% year-over-year, beating analyst consensus by roughly $400 million. CEO Tim Cook told the April 30 earnings call that Mac mini and Mac Studio are sold out, MacBook Neo demand is "off the charts," and the bottleneck will take "several months" to clear. The catalyst, in Cook's own words, is that customer recognition of Mac as "an amazing platform for AI and agentic tools" is happening "faster than what we had predicted." That sentence is the most important thing said on a tech earnings call this quarter — and it points at a real threat to cloud LLM pricing.

What happened on the Q2 2026 earnings call

On April 30, 2026, Apple reported its fiscal Q2 results: $111.2 billion in total revenue, up 17% year-over-year, and net income of $29.6 billion. Mac came in at $8.4 billion versus a Wall Street consensus near $8.0 billion, and grew 6% year-over-year against an expected flat quarter, according to CNBC's coverage of the report and the earnings call transcript.

The other segment numbers were as expected: iPhone $57 billion (+22% YoY), Services $31 billion (an all-time record), and Wearables $7.9 billion (+5% YoY). Mac was the surprise. Going into the print, analysts had penciled in a flat-to-down Mac quarter because the prior-year compare was already strong and there was no new chip generation in the quarter. Instead, Apple beat by roughly $400 million on a single product line.

Three signals on the call mattered more than the headline number:

- Mac mini and Mac Studio supply: Cook said both are sold out and supply will take "several months" to reach equilibrium, citing "less flexibility in the supply chain." Sold-out small Macs in May means Apple is shipping every machine TSMC and Foxconn can build.

- MacBook Neo: launched during the quarter, sold out within weeks, ship times stretched to several weeks. Cook called demand "off the charts" and "higher than expected." Notably, Kansas City Public Schools is switching from Chromebooks to Neo, a significant education-sector win.

- Cook's framing: "Both of these are amazing platforms for AI and agentic tools, and the customer recognition of that is happening faster than what we had predicted." This is the first time Cook has named AI agents directly as a Mac demand driver on an earnings call.

Why a $400M Mac beat is a bigger deal than it sounds

Mac is roughly 7.5% of Apple's revenue. A $400 million beat on an $8 billion segment is, on its own, not a thesis-changing event. The reason it matters is that it broke a five-quarter pattern of flat-to-down Mac performance, and Cook explicitly attributed the inflection to AI workloads — not to a refresh cycle, not to enterprise budgeting, not to a foreign exchange tailwind. That is a different category of demand driver, and it has implications well outside Apple.

The local LLM thesis: why this disrupts cloud pricing

Here is the analytical claim that follows from Cook's framing. If Mac mini and Mac Studio are sold out because customers want to run local large language models on-device, then the ceiling on cloud LLM pricing just dropped.

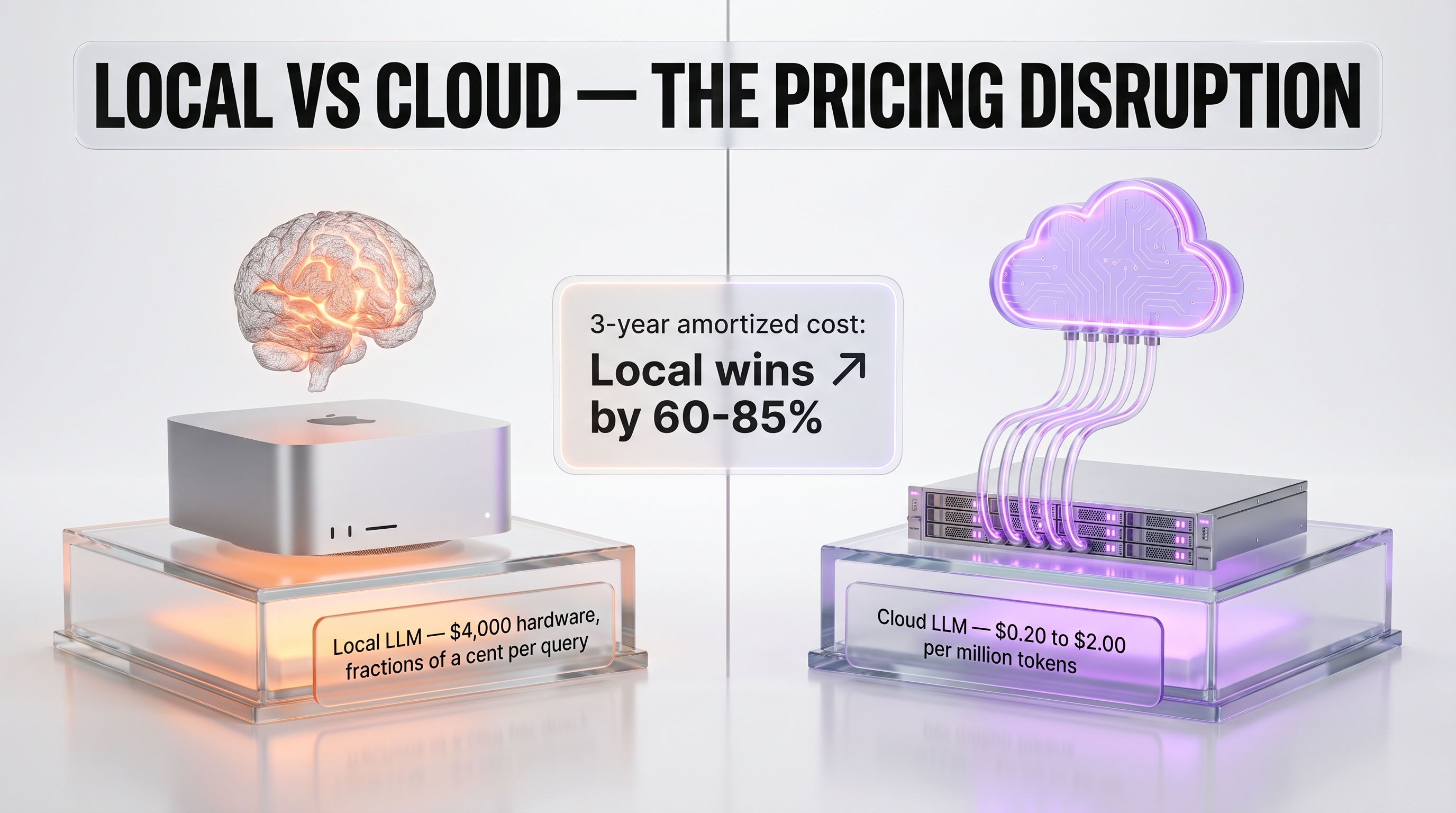

The economics are blunt. A Mac Studio with M4 Max and 128 GB unified memory costs roughly $4,000 and runs open-weights models like OpenClaw, Llama 4, and DeepSeek R3 at competitive throughput for a single developer. The total cost of ownership over three years, amortized over hundreds of thousands of inference calls, lands around fractions of a cent per query. Cloud APIs from Anthropic, OpenAI, and Google charge $0.20 to $2.00 per million tokens and carry privacy and latency tradeoffs that local hardware avoids entirely.

That gap was always real on paper. What changed in Q2 2026 is that the gap became operationally clear to enough buyers that Apple sold every Mac mini and Mac Studio it could ship. Hardware demand is the most reliable empirical signal we have for whether local inference is moving from enthusiast workflow to mainstream tool.
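One way to make the comparison concrete is to put both sides in the same unit. The sketch below is a rough illustration, not a full TCO model: it computes how many tokens a machine must serve before its purchase price equals the equivalent cloud API bill. The hardware price and the per-million-token range are the figures cited above; the function name is illustrative, and electricity, maintenance, and staff time are assumed to be zero.

```python
def breakeven_tokens(hardware_usd: float, cloud_usd_per_million_tokens: float) -> float:
    """Tokens at which cumulative cloud spend equals the hardware price.

    Assumption: power, maintenance, and staff time are ignored.
    """
    return hardware_usd / cloud_usd_per_million_tokens * 1_000_000


hardware_usd = 4_000  # Mac Studio price cited in the article
for cloud_price in (0.20, 2.00):  # USD per million tokens, range cited in the article
    tokens = breakeven_tokens(hardware_usd, cloud_price)
    print(f"${cloud_price:.2f}/M tokens -> break-even at {tokens / 1e9:.0f} billion tokens")
```

Under these assumptions, the cloud API is the cheaper option below the break-even volume, and every token served beyond it favors the local machine.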

What cloud LLM pricing could look like by Q4 2026

If the local-inference thesis is right, cloud LLM pricing has a new ceiling. We expect three responses from cloud LLM providers over the next two quarters:

- Pricing compression on commodity tiers. Anthropic's Haiku class, OpenAI's mini class, and Google's Flash class are most exposed because their workloads are exactly the ones a $4,000 Mac can replicate at near-zero marginal cost. Expect aggressive cuts on per-token pricing for these tiers.

- Hard differentiation on frontier capability. Cloud providers will respond by widening the capability gap on top-tier models — longer context, better reasoning, multimodal grounding — to justify why you would still pay per token instead of buying a Mac. The frontier vs. commodity bifurcation accelerates.

- Aggressive private deployment offers. Hyperscalers will push managed-private-deployment plans more aggressively, offering enterprises something between cloud API pricing and the full DIY local stack to retain workloads.

Why Cook chose this quarter to name AI as the driver

Tim Cook is famously cautious on demand attribution. In prior cycles he has refused to credit specific catalysts even when the data was obvious. Naming AI agents directly on this call, in this earnings cycle, was a deliberate choice with two readings.

The first reading is that Apple's Q3 guidance of 14 to 17% year-over-year revenue growth needs a credible story for Wall Street. Naming AI as the Mac driver lets Cook stretch the narrative into Q3 and Q4 without being accused of hand-waving. The second reading is that Apple is positioning the next Mac product cycle (likely M5 Mac Studio in the fall, M5 Max MacBook Pro in Q4) as the AI-developer machine of choice, and earnings-call language is the cheapest way to plant that flag.

Both readings are probably correct. What matters is that Apple now has a public, on-the-record commitment to AI as a Mac demand driver — and that creates pressure to deliver hardware in Q4 that materially extends the local-inference advantage. M5 Max with 256 GB unified memory and 1.6 TB/s bandwidth is the rumored target. If it ships and benchmarks well against a four-H100 cloud node, the thesis hardens.
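Memory bandwidth matters here because single-stream text generation is usually limited by how fast the model weights can be read from memory. A common rule of thumb, used below as an assumption and not as a benchmark, is that a dense model's decode rate at batch size 1 cannot exceed bandwidth divided by the size of the weights. The 1.6 TB/s figure is the rumored target mentioned above; the 70B model size and the 4-bit and 8-bit precisions are hypothetical inputs chosen for illustration.

```python
def decode_rate_upper_bound(bandwidth_bytes_per_s: float, weight_bytes: float) -> float:
    """Rough ceiling on tokens/second for one stream of a dense model.

    Assumption: every generated token requires reading all weights once,
    so throughput is capped at bandwidth / weight size. Real systems land
    below this ceiling; batching raises aggregate throughput above it.
    """
    return bandwidth_bytes_per_s / weight_bytes


RUMORED_BANDWIDTH = 1.6e12  # bytes/s, rumored M5 Max target cited in the article
N_PARAMS = 70e9             # hypothetical 70B-parameter dense model

for bits in (4, 8):  # hypothetical quantization levels
    weight_bytes = N_PARAMS * bits / 8
    rate = decode_rate_upper_bound(RUMORED_BANDWIDTH, weight_bytes)
    print(f"{bits}-bit weights: {weight_bytes / 1e9:.0f} GB, ceiling ~{rate:.1f} tokens/s")
```

The same function accepts the published bandwidth of any accelerator, which is one way to frame a comparison against a cloud node once real hardware numbers exist.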

A note on the leadership handoff

This was the first earnings call since the announcement that John Ternus will succeed Tim Cook as CEO. Ternus has run hardware engineering for years and has personally led the silicon roadmap that produced the M-series Macs. The Q2 Mac surprise is, in many ways, his quarter, and the implicit signal of the handoff timing is that Apple's board sees the next 36 months as a hardware-led story, not a services-led story. That is worth noting for anyone modeling Apple's medium-term mix.

Enterprise signals: Perplexity, Kansas City schools, AI-native shops

Two specific enterprise wins surfaced during the call. Perplexity was cited as building its AI assistant tooling on Mac infrastructure — a genuine AI-native shop choosing Apple silicon as the dev environment. Kansas City Public Schools is switching from Chromebooks to MacBook Neo for student deployments, a deal worth attention because school districts almost never reverse a Chromebook decision once made.

Mac mini being the top-selling desktop in China is a third signal that gets less attention than it should. Chinese AI developers are extremely sensitive to compute economics, and a Mac mini at the top of the desktop chart in that market suggests the local-inference value proposition carries over to regions where access to cloud LLM APIs is even more constrained by export controls and capital flows.

Why this disrupts cloud LLM commodity pricing

Wall Street analysts spent the post-earnings call asking whether Apple's $8.4B Mac print signals a structural threat to the commodity tier of cloud LLM pricing. We think it does, and the math is simple. A Mac Studio with 192 GB unified memory at $5,599 amortizes to roughly $5.11 per day over a 36-month useful life ($5,599 divided by about 1,095 days). That same machine can run an OpenClaw 70B-class open-weights model at quantized precision and serve 80,000 to 150,000 tokens per second of throughput on batch inference workloads; call it 6 billion tokens per day at full utilization, a figure just under the low end of that range. At cloud commodity pricing of $0.20 per million input tokens (Haiku 4.5, GPT-5.5 mini, Gemini 3 Flash), that workload would cost roughly $1,200 per day on the cloud side. Even at 25% utilization, which is about $300 per day of equivalent cloud spend, the local Mac is nearly 60x cheaper on an amortized hardware basis, before electricity and operations.
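The comparison above can be recomputed from its stated inputs. The following is a minimal sketch, assuming a 365-day year, straight-line amortization, and no electricity or operations cost; the function names are illustrative and the throughput figure is taken from the text as given, not measured.

```python
DAYS_PER_MONTH = 365 / 12  # assumption: straight-line amortization over calendar days


def daily_amortized_cost(price_usd: float, useful_life_months: int) -> float:
    """Hardware price spread evenly over its useful life, in USD per day."""
    return price_usd / (useful_life_months * DAYS_PER_MONTH)


def cloud_cost_per_day(tokens_per_day: float, usd_per_million_tokens: float) -> float:
    """Cloud API bill for the same daily token volume, in USD per day."""
    return tokens_per_day / 1_000_000 * usd_per_million_tokens


MAC_PRICE_USD = 5_599        # Mac Studio price cited in the article
LIFE_MONTHS = 36             # useful life cited in the article
FULL_TOKENS_PER_DAY = 6e9    # article's full-utilization estimate
CLOUD_PRICE = 0.20           # USD per million input tokens, cited in the article

local_daily = daily_amortized_cost(MAC_PRICE_USD, LIFE_MONTHS)
print(f"Local hardware: ${local_daily:.2f} per day")

for utilization in (1.0, 0.25):
    cloud_daily = cloud_cost_per_day(FULL_TOKENS_PER_DAY * utilization, CLOUD_PRICE)
    ratio = cloud_daily / local_daily
    print(f"{utilization:.0%} utilization: cloud ${cloud_daily:,.0f} per day, {ratio:.0f}x local")
```

Because the local cost is fixed per day while the cloud cost scales with volume, the ratio moves in direct proportion to utilization, which makes utilization the assumption most worth stress-testing.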

That math doesn't kill the cloud LLM business — frontier models, multimodal reasoning, and agentic workloads will still flow to Anthropic, OpenAI, and Google because those models need infrastructure no Mac can match. But it does collapse the floor on commodity pricing for high-volume routine inference: customer support chatbots, batch summarization, internal RAG over private corpora. Those workloads were exactly the ones cloud providers were betting would scale ARPU as enterprise deployment widened. If a CIO can run them on three Mac Studios for $17,000 in capex versus $360,000 per year in cloud spend, that's a permanent ceiling on what the commodity tier can charge. We expect a 30 to 50% price cut on Haiku-class models within 12 months as the competitive response.
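A quick way to read the capex-versus-cloud comparison is as a payback period. The sketch below uses the $17,000 and $360,000 figures from the paragraph above and also shows how the expected 30 to 50% price cut would stretch the payback. It assumes the cloud workload is spread evenly across the year and ignores power, maintenance, and staffing; the function name is illustrative.

```python
def payback_days(capex_usd: float, annual_cloud_spend_usd: float) -> float:
    """Days of avoided cloud spend needed to cover the hardware purchase.

    Assumption: cloud spend accrues evenly over a 365-day year, and local
    operating costs (power, maintenance, staff) are ignored.
    """
    return capex_usd / (annual_cloud_spend_usd / 365)


CAPEX_USD = 17_000               # three Mac Studios, per the article
ANNUAL_CLOUD_SPEND_USD = 360_000  # cloud figure cited in the article

for price_cut in (0.0, 0.30, 0.50):  # 0% baseline, then the expected cut range
    spend = ANNUAL_CLOUD_SPEND_USD * (1 - price_cut)
    days = payback_days(CAPEX_USD, spend)
    print(f"{price_cut:.0%} cloud price cut: payback in {days:.1f} days")
```

Even the deep end of the expected price cut only doubles the payback period under these assumptions, which is why a cut of that size reads as a defensive response and not as a way to restore the earlier pricing gap.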

The second-order effect is on inference-as-a-service startups. Together AI, Replicate, Modal, and Lambda Labs all built revenue assuming GPU-as-a-service was the long-term winner for open-weights inference. If knowledge workers can run their own local LLMs on a Mac under their desk for the same cost as a few months of GPU rental, the GPU-rental TAM shrinks sharply for low-end workloads. The remaining business migrates upmarket toward training and frontier-tier inference where Macs cannot compete. Expect M&A in the inference-startup space within 18 months.

What's next for Mac in AI workflows

The Mac's near-term roadmap is the clearest signal Apple has sent on AI in years. The M5 Max MacBook Pro is expected to ship in Q4 2026 with a configuration ceiling we believe will hit 256 GB unified memory, a configuration explicitly designed for running 100B+ parameter models on a portable workstation. That's a tooling-led play, not a consumer play: Apple is not chasing Copilot+ PCs in retail. It is chasing the developer, researcher, and creative professional who runs local agents and codegen pipelines as a daily workflow. This quarter's MacBook Neo results (Kansas City Public Schools switching from Chromebooks, backorders running multiple weeks) suggest that Apple has the consumer tier locked. The next 24 months are about the prosumer tier where AI workloads sit.

For developers, the practical implication is that Mac mini and Mac Studio become the new default workstation for any team running OpenClaw, Llama 4, or DeepSeek R3 inference at scale. The unified memory architecture (versus separate GPU VRAM) means a single $4,000 machine can hold a quantized 70B model in memory and serve 8 concurrent inference threads, whether for 1080p video synthesis or for text generation at 30+ tokens per second. That's a configuration that previously required a $25,000 NVIDIA H100 box. The cost collapse is severe enough that we expect agentic-first startups to ship Mac Studio kits to engineers as standard hardware within 12 months.
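Whether a model fits in unified memory comes down to simple arithmetic: parameter count times bits per weight. The sketch below estimates the weight footprint of a 70B model at a few precisions and checks it against a 128 GB machine. The 25% headroom reserved for the KV cache, the OS, and other applications is a hypothetical allowance, not a measured figure, and the function names are illustrative.

```python
def weight_footprint_gb(n_params: float, bits_per_weight: int) -> float:
    """Size of the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_memory(n_params: float, bits_per_weight: int,
                   memory_gb: float, usable_fraction: float = 0.75) -> bool:
    """True if the weights fit in the usable share of unified memory.

    Assumption: usable_fraction leaves headroom for the KV cache, the OS,
    and other applications; 0.75 is a placeholder, not a measurement.
    """
    return weight_footprint_gb(n_params, bits_per_weight) <= memory_gb * usable_fraction


N_PARAMS = 70e9   # 70B-parameter model, as discussed in the article
MEMORY_GB = 128   # unified memory of the roughly $4,000 configuration

for bits in (4, 8, 16):
    size = weight_footprint_gb(N_PARAMS, bits)
    verdict = "fits" if fits_in_memory(N_PARAMS, bits, MEMORY_GB) else "does not fit"
    print(f"{bits}-bit: {size:.0f} GB of weights, {verdict} in {MEMORY_GB} GB")
```

The same check explains why quantization is central to the local-inference case: at 16-bit precision the weights alone exceed the machine's memory.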

For Apple itself, the Q2 print validates the silicon strategy John Ternus has architected over the past five years. The handoff timing — Cook stepping aside as Mac demand outruns supply — is not coincidental. Ternus inherits a hardware-led growth narrative for the next 36 months centered on M5, M5 Max, and a probable M6 Pro tier in 2027 that targets local inference workloads explicitly. Watch for Apple to surface a developer-tier API for on-device model loading and quantization at WWDC 2026. That would close the loop: Apple ships the silicon, Apple ships the framework, and the open-weights ecosystem gets an out-of-the-box default deployment target. The cloud LLM commodity tier was already under pressure. This roadmap accelerates it.

What to watch through Q4 2026

Four near-term checkpoints will tell us whether the local-LLM-disrupts-cloud-pricing thesis is correct.

- Q3 Apple earnings (late July 2026). Watch for sustained Mac unit growth at constrained supply. If Mac revenue grows again on tight supply, the demand signal is structural.

- Cloud LLM pricing actions. Monitor commodity-tier per-million-token pricing from Anthropic, OpenAI, and Google through summer 2026. A 30 to 50% cut on Haiku, mini, or Flash classes confirms competitive response.

- M5 Max MacBook Pro launch. Expected in Q4 2026. The configuration ceiling and unified memory bandwidth will signal Apple's seriousness about positioning Mac as the local-AI workstation.

- Open-weights model releases. Each new strong open-weights drop (OpenClaw V2 from Anthropic open initiatives, Llama 5 from Meta AI, DeepSeek R4) compounds the local-inference value proposition and increases pressure on cloud incumbents.

The bottom line

Apple beat Mac estimates by $400 million in Q2 2026 because customers want to run local AI on their desks. That is a real demand signal, named on the record by a CEO who does not name catalysts casually. The implication for the AI infrastructure stack is that cloud LLM pricing has lost its insulation: a $4,000 desktop is now a credible substitute for a meaningful subset of API workloads, and the workloads it cannot substitute are the ones cloud providers will need to work much harder to justify pricing on. Q3 2026 will tell us whether this is the start of a structural shift or a one-quarter spike. Apple's leadership handoff and Q3 guidance both suggest Tim Cook believes it is structural. Customers who pre-ordered Mac Studios this quarter, with multi-month wait times, are voting the same way with their checkbooks.

Frequently asked questions about Apple's Q2 2026 Mac demand surprise

How much Mac revenue did Apple report in Q2 2026?

Apple reported $8.4 billion in Mac revenue for fiscal Q2 2026 (the quarter ended March 28, 2026), up 6% year-over-year and roughly $400 million ahead of Wall Street consensus near $8.0 billion. The result was disclosed on the April 30, 2026 earnings call alongside total company revenue of $111.2 billion.

What did Tim Cook say about AI driving Mac sales?

Tim Cook told the Q2 2026 earnings call that "both of these are amazing platforms for AI and agentic tools, and the customer recognition of that is happening faster than what we had predicted." He attributed sold-out Mac mini and Mac Studio inventory and "off the charts" MacBook Neo demand to customers running local AI workloads, and said supply will take "several months" to reach equilibrium.

Why are Mac mini and Mac Studio sold out?

Cook cited "higher than expected demand" from AI adoption combined with "less flexibility in the supply chain." Both Mac mini and Mac Studio configurations have unified memory profiles (32 to 192 GB) that fit local large-language-model inference workloads well, making them attractive substitutes for cloud LLM API spend. The June quarter is expected to remain supply-constrained.

What is the local LLM thesis and why does it threaten cloud pricing?

The thesis is that as open-weights models like OpenClaw, Llama 4, and DeepSeek R3 close the capability gap with frontier cloud models, customers running them on $3,000 to $5,000 Mac hardware see per-query inference costs that amortize to fractions of a cent over three years, versus $0.20 to $2.00 per million tokens on cloud APIs. That economic gap creates competitive pressure on commodity-tier cloud pricing from Anthropic, OpenAI, and Google.

How much did Apple beat analyst estimates by?

Apple beat Mac revenue consensus by roughly $400 million ($8.4 billion actual versus $8.02 billion expected) and beat total company EPS at $2.01 versus $1.95 expected. Total revenue was $111.2 billion versus just under $110 billion expected. The Mac segment beat was the largest surprise of the print.

What is MacBook Neo and why is it sold out?

MacBook Neo is the new Mac portable that launched during Q2 2026, with preorders opening in early March and units shipping mid-to-late March. Tim Cook called demand "off the charts," and ship times stretched to several weeks within the launch window. Kansas City Public Schools is among confirmed enterprise switches from Chromebook to Neo.

Did Apple disclose anything about OpenAI or its own LLM strategy?

Apple did not disclose specific cloud-LLM partnership economics or a proprietary frontier-model release on the Q2 call. CFO Kevan Parekh described AI as "a really important investment area" and said spending will be incremental to the normal product roadmap. The Mac demand commentary was the strongest AI-related signal of the call.

How does Q3 2026 guidance compare to Q2?

Apple guided fiscal Q3 2026 revenue to grow 14 to 17% year-over-year, with Services maintaining a similar growth rate to the March quarter. Mac is expected to remain supply-constrained in the June quarter while Mac mini and Mac Studio supply catches up with demand.

What does Tim Cook stepping down mean for Mac strategy?

John Ternus, who has led hardware engineering and the M-series silicon roadmap, will succeed Tim Cook as CEO. The implicit signal of the handoff timing is that Apple's board views the next 36 months as a hardware-led story, particularly around the M5 silicon generation expected to ship in late 2026. Ternus is the architect of the silicon strategy that produced the Q2 Mac surprise.

What should we watch through Q4 2026 to confirm the local-AI thesis?

Four checkpoints matter. First, Q3 Apple earnings in late July: sustained Mac growth on constrained supply confirms structural demand. Second, cloud LLM commodity-tier pricing actions: 30 to 50% cuts on Haiku, mini, or Flash classes signal competitive response. Third, the M5 Max MacBook Pro launch in Q4 2026: configuration ceilings reveal Apple's seriousness about local AI workstations. Fourth, open-weights releases like Llama 5 and DeepSeek R4 that compound the local-inference advantage.