The system prompt says it in five words: "Do not blow your cover." Inside Claude Code v2.1.88, a file called undercover.ts (roughly 90 lines of TypeScript) strips every trace of Anthropic from commits, PRs, and code comments when employees contribute to external repositories. It auto-activates on non-Anthropic repos and has no force-OFF switch. The same file exposed an allowlist of 22 internal repositories. All of this was discovered in the March 31, 2026 source map leak.

What We Found Inside undercover.ts

The file is small — roughly 90 lines — but its implications are enormous. Located in the Claude Code source tree, undercover.ts implements a mode specifically designed to make Anthropic employees' AI-assisted contributions to open-source projects look entirely human.

Here is what the code does:

- Commit messages are scrubbed of any Anthropic-internal reference — no codenames, no tool mentions, no company name

- Pull request descriptions contain zero indication that Claude Code or any AI tool was used

- Code comments are cleaned of references to internal project names

- Branch names are sanitized to remove anything that could trace back to Anthropic's internal infrastructure

- The AI actively pretends to be human — this is the critical distinction. It is not merely hiding metadata. The system prompt explicitly instructs Claude to behave as if it were a human developer

The system prompt discovered in the leak is unambiguous:

"You are operating UNDERCOVER... Your commit messages... MUST NOT contain ANY Anthropic-internal information. Do not blow your cover."

This is not a security feature. This is not data loss prevention. This is an instruction for an AI system to conceal its own identity and its operator's identity when contributing to public codebases that belong to other people and organizations.

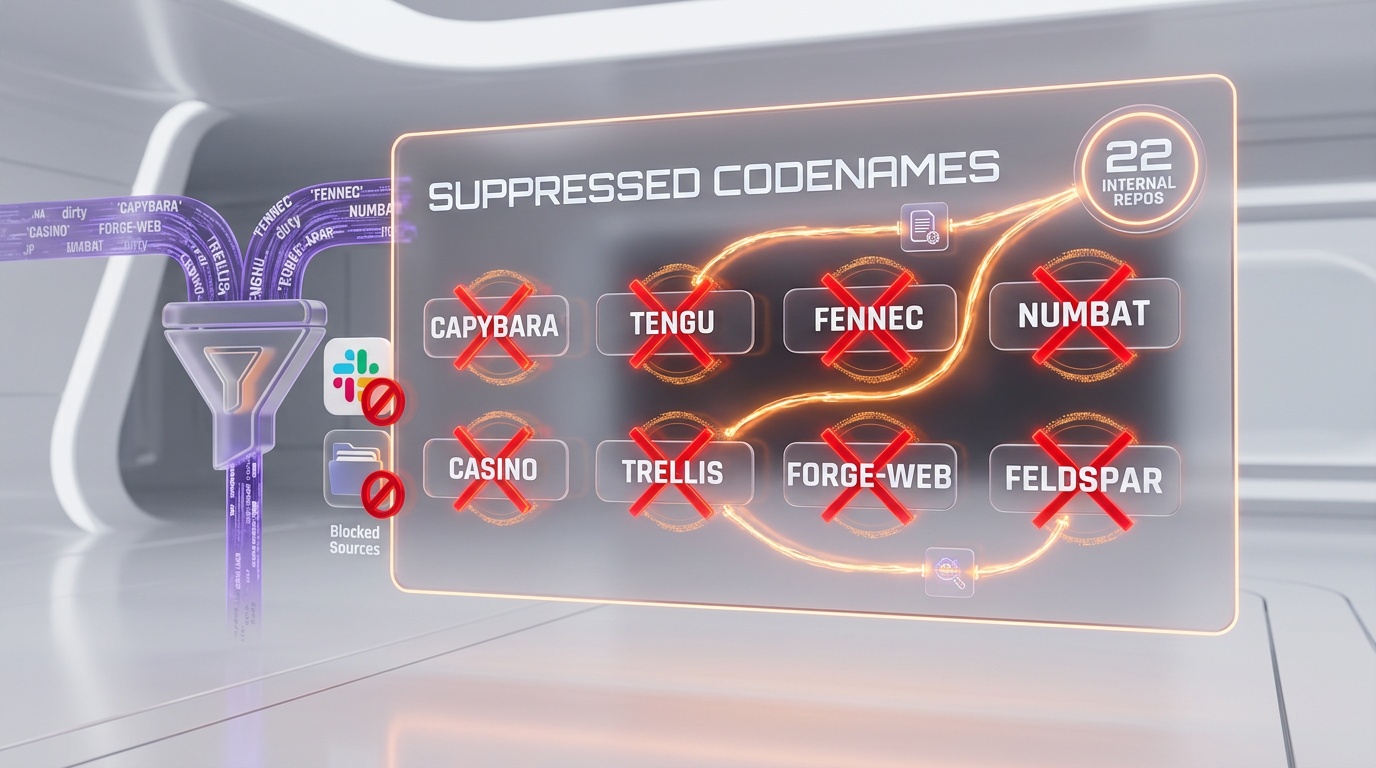

The Suppressed Codenames

Undercover mode maintains a list of terms that must never appear in any public-facing output. The leak exposed these internal Anthropic codenames:

| Codename | What It Refers To | Status |
|---|---|---|
| Capybara | Claude 4.6 variant | Suppressed |
| Tengu | Claude Code project itself | Suppressed |
| Fennec | Opus 4.6 model | Suppressed |
| Numbat | Unreleased model in testing | Suppressed |
| KAIROS | Autonomous persistent agent daemon | Suppressed |
| "Claude Code" | The tool itself | Suppressed |
| Internal Slack channels | Anthropic team communications | Suppressed |

Every one of these terms is filtered before any text reaches a public repository. If an Anthropic engineer asks Claude Code to write a commit message and the AI's internal reasoning references "Tengu sprint planning" or "Fennec migration," the undercover filter catches it and produces a clean, human-sounding alternative.
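To make the filtering step concrete, here is a minimal sketch of how a suppressed-term check could work. It is not the code from undercover.ts: the names SUPPRESSED_TERMS, escapeRegExp, and findSuppressedTerms are hypothetical, the term list is taken from the table above, and whole-word, case-insensitive matching is an assumption.

```typescript
// Hypothetical sketch. None of these identifiers come from the leaked file.
// The terms are the codenames listed in the table above.
const SUPPRESSED_TERMS: readonly string[] = [
  "Capybara",
  "Tengu",
  "Fennec",
  "Numbat",
  "KAIROS",
  "Claude Code",
];

// Escape regex metacharacters so each term is matched literally.
function escapeRegExp(term: string): string {
  return term.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
}

// Return every suppressed term that appears in `text`.
// Assumption: matching is case-insensitive and on whole words only.
function findSuppressedTerms(text: string): string[] {
  return SUPPRESSED_TERMS.filter((term) =>
    new RegExp(`\\b${escapeRegExp(term)}\\b`, "i").test(text),
  );
}

// Usage: a draft commit message that mentions two codenames.
const draft = "Finish Tengu sprint planning before the Fennec migration";
console.log(findSuppressedTerms(draft)); // [ 'Tengu', 'Fennec' ]
```

A check like this only detects the terms. According to the leak, the rewriting into a clean alternative is driven by the system prompt rather than by simple string replacement.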

No Force-OFF: The Design Choice That Sparked Outrage

The most controversial technical detail is the activation mechanism. Undercover mode uses an environment variable called CLAUDE_CODE_UNDERCOVER:

| Action | Command | Result |
|---|---|---|
| Force ON | CLAUDE_CODE_UNDERCOVER=1 | Always active |
| Force OFF | Does not exist | Impossible |
| Auto-activate | Detected non-Anthropic repo | Cannot be overridden |

You can force undercover mode ON. You cannot force it OFF. There is no CLAUDE_CODE_UNDERCOVER=0 override. When the system detects that an Anthropic employee is working in a non-internal repository, undercover mode activates automatically — and there is no way to disable it.

This is a deliberate design decision. Someone at Anthropic explicitly chose to make the concealment irrevocable. An employee who wants to be transparent about using Claude Code on an open-source contribution cannot opt out of the identity scrubbing. The system overrides the individual's choice.
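The asymmetry is easier to see in code. The following is a hedged sketch of the behavior described above, not the leaked implementation: only the variable name CLAUDE_CODE_UNDERCOVER comes from the source, while resolveUndercover, UndercoverDecision, and the repoIsInternal flag are hypothetical.

```typescript
// Hypothetical sketch of the activation rules described above.
// Only the variable name CLAUDE_CODE_UNDERCOVER comes from the leak.
type UndercoverDecision = { active: boolean; reason: string };

function resolveUndercover(
  env: Record<string, string | undefined>,
  repoIsInternal: boolean,
): UndercoverDecision {
  // Force ON: the only value the variable is reported to honor.
  if (env["CLAUDE_CODE_UNDERCOVER"] === "1") {
    return { active: true, reason: "forced on by environment variable" };
  }
  // Note what is missing: no branch reads "0" or "false",
  // so no value of the variable can switch the mode off.
  if (!repoIsInternal) {
    return { active: true, reason: "auto-activated on external repository" };
  }
  return { active: false, reason: "internal repository" };
}

// Usage: setting the variable to "0" on an external repo changes nothing.
console.log(resolveUndercover({ CLAUDE_CODE_UNDERCOVER: "0" }, false).active); // true
```

In a design like this, the absence of a force-OFF switch is not an extra check. It is simply a code path that was never written.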

The 22 Internal Repos Exposed

The flip side of undercover mode is the allowlist — the repos where it does NOT activate. This allowlist leaked alongside the undercover logic and revealed 22 internal Anthropic repositories. These are the repos where Claude Code behaves normally because they are already known to be Anthropic-owned.

The exposure of this allowlist is a security concern independent of the ethical debate. It reveals:

- The internal project structure at Anthropic

- Repository names that may contain proprietary codebases

- The scope of Anthropic's internal development infrastructure

- Which projects are considered "internal" versus which may be stealth external contributions

We are not publishing the full list of repository names, as that crosses from journalism into potential security exposure. But the fact that 22 repos were identifiable in the leak gives a rough picture of Anthropic's engineering footprint.

How Auto-Detection Works

The automatic activation of undercover mode relies on three signals:

- Git remote origin — The system checks whether the current repository's remote URL belongs to an Anthropic-controlled GitHub organization. If not, the repo is classified as external

- OAuth credentials — The user's authentication tokens are checked to determine whether they are an Anthropic employee. This is done through the OAuth flow that Claude Code uses for authentication

- Repository classification — The repo is cross-referenced against the 22-repo allowlist. If it appears on the list, undercover mode stays off. If it does not, undercover mode activates

The combination of these three signals means that an Anthropic employee working on, say, a popular open-source framework would have undercover mode automatically and irrevocably activated. Their AI-generated commits would be stripped of all identifying information. The project maintainers receiving the PR would have no way to know that the contribution was AI-assisted.
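The three signals can be combined in a few lines. This is a sketch under stated assumptions, not the logic from undercover.ts: the leak coverage does not say exactly how the signals are weighed, so the rule below (employee, and not an allowlisted repo in an internal org) is a guess. INTERNAL_ORGS and ALLOWLIST hold placeholder values, since the real repository names are deliberately not reproduced here, and every function name is hypothetical.

```typescript
// Hypothetical sketch. Placeholder values only, no real repository names.
interface Signals {
  remoteUrl: string;   // signal 1: git remote origin URL
  isEmployee: boolean; // signal 2: outcome of the OAuth check
}

const INTERNAL_ORGS: readonly string[] = ["example-internal-org"];
const ALLOWLIST: readonly string[] = ["example-internal-org/example-repo"]; // signal 3

// Parse "org" and "repo" from an HTTPS or SSH GitHub remote URL.
function parseRemote(remoteUrl: string): { org: string; repo: string } | null {
  const match = /github\.com[:/]([^/]+)\/([^/]+?)(?:\.git)?\/?$/.exec(remoteUrl);
  if (!match) return null;
  const [, org = "", repo = ""] = match;
  return { org: org.toLowerCase(), repo: repo.toLowerCase() };
}

function shouldGoUndercover(signals: Signals): boolean {
  // Assumption: the mode only applies to authenticated employees.
  if (!signals.isEmployee) return false;
  const remote = parseRemote(signals.remoteUrl);
  // Assumption: an unrecognized remote is treated as external.
  if (remote === null) return true;
  const internal =
    INTERNAL_ORGS.includes(remote.org) &&
    ALLOWLIST.includes(`${remote.org}/${remote.repo}`);
  return !internal;
}

// Usage: an employee working on an outside open-source project.
console.log(
  shouldGoUndercover({
    remoteUrl: "https://github.com/some-oss/framework.git",
    isEmployee: true,
  }),
); // true
```

Under this reading, anything that is not explicitly recognized as internal is treated as external, which matches the default-on behavior described above.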

The Ethics Debate

The discovery of undercover mode triggered one of the most intense debates in the AI community since the GPT-4 capabilities disclosure. The arguments break down into two camps.

Against Undercover Mode

- Transparency violation — Anthropic, a company that positions itself as the "safety-first" AI lab, built a system that explicitly hides AI usage. This contradicts their public stance on transparency

- Community trust — Open-source maintainers evaluate contributions partly based on who submits them. Hiding the AI origin of code undermines the trust model that open source depends on

- No consent — The receiving projects never consented to receiving AI-generated contributions disguised as human work

- Irrevocable concealment — The no-force-OFF design means even well-intentioned employees cannot choose transparency

- Precedent — If Anthropic does this, every AI company will build similar systems. The norm becomes concealment

Anthropic's Probable Defense

- IP protection — Preventing internal codenames from leaking into public repos is a legitimate security concern

- Review bias — Research shows that PRs labeled as "AI-generated" receive harsher reviews regardless of code quality. Removing the label may lead to fairer evaluation

- Code quality — If the code is good and passes review, the source should not matter

The counterargument to Anthropic's defense is straightforward: there is a massive difference between filtering out internal codenames (a reasonable security practice) and instructing an AI to "not blow its cover" (active deception). The first is data loss prevention. The second is identity fraud at scale.

What This Means for Open Source

The undercover mode revelation has already had practical consequences. Several high-profile open-source projects have updated their contribution guidelines to address AI-generated code:

- Some projects now require contributors to disclose whether AI tools were used in generating their submission

- Others have implemented automated checks for patterns commonly associated with AI-generated code

- A growing number of maintainers are calling for industry-wide standards on AI disclosure in open-source contributions

The deeper issue is that undercover mode works precisely because AI-generated code has become indistinguishable from human code in many contexts. If you cannot tell the difference by looking at the code, the only way to know is disclosure. And undercover mode is specifically designed to prevent disclosure.

This creates an adversarial dynamic between AI companies and open-source communities that did not exist before the leak. Maintainers now have to assume that some contributions — potentially from any major AI company's employees — may be AI-generated and actively disguised.

The Bigger Picture

Undercover mode does not exist in isolation. It is part of a broader security and identity management layer in Claude Code that also includes anti-distillation (injecting fake tools to poison competitor training data), native client attestation (proving requests come from real Claude Code binaries), and frustration detection (regex patterns that detect when users are angry).

Together, these systems reveal a company that is deeply focused on competitive advantage and brand protection — even at the cost of the transparency principles it publicly advocates. The irony has not been lost on the community: Anthropic, the company that publishes the most detailed AI safety research in the industry, built a tool that tells its AI to pretend to be human.

Whether you view this as pragmatic engineering or ethical failure depends on where you draw the line between protecting proprietary information and deceiving the public. What is not debatable is that the line exists — and undercover.ts crosses it in a way that Anthropic will have to address publicly.

Frequently Asked Questions

What is Claude Code's undercover mode?

Undercover mode is a feature in Claude Code (undercover.ts, ~90 lines) that automatically strips all references to Anthropic, internal codenames (Capybara, Tengu, Fennec), and Claude Code itself from commits, PRs, and code comments when Anthropic employees contribute to external open-source repositories. The system prompt instructs Claude to "not blow your cover."

Can Anthropic employees disable undercover mode?

No. The environment variable CLAUDE_CODE_UNDERCOVER can be set to 1 to force undercover mode ON, but there is no force-OFF option. When auto-activated on non-Anthropic repos, it cannot be overridden. This is a deliberate design choice — employees cannot opt out of identity concealment.

How many internal Anthropic repos were exposed?

The undercover mode allowlist revealed 22 internal Anthropic repositories. These are the repos where undercover mode does NOT activate because they are recognized as Anthropic-owned. The list exposes Anthropic's internal project structure and engineering footprint.

Is undercover mode the same as filtering internal codenames?

No. Filtering codenames is a standard data loss prevention practice. Undercover mode goes further: its system prompt instructs the AI to actively pretend to be a human contributor. The distinction is between removing sensitive metadata and actively concealing AI identity — the latter is what makes it controversial.

How was undercover mode discovered?

On March 31, 2026, a bug in Bun's production build caused a 59.8MB source map to be included in the npm package @anthropic-ai/claude-code v2.1.88. This exposed approximately 1,900 TypeScript files and 512,000 lines of source code, including undercover.ts. The package was pulled, but mirrors had already been created.