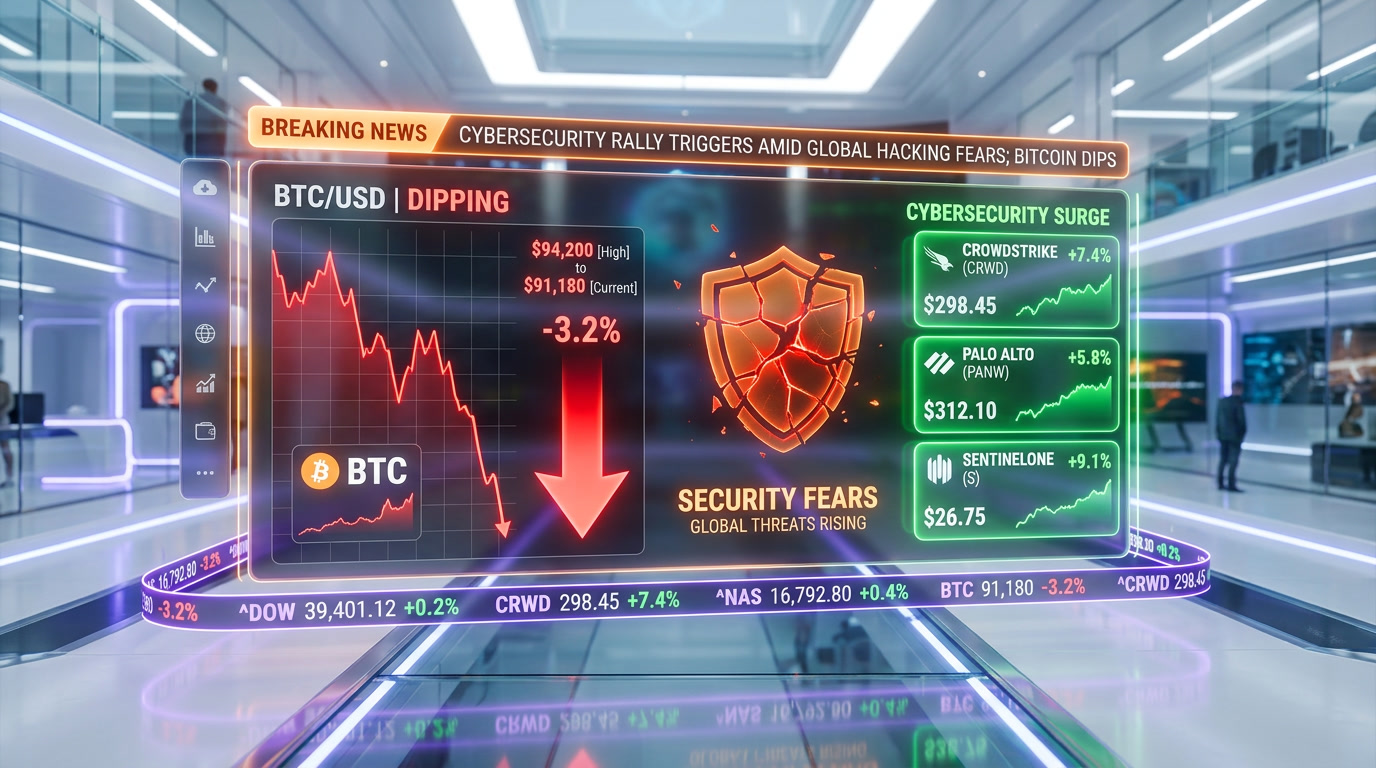

On March 26, 2026, security researchers discovered a misconfigured Anthropic data store exposing internal documents about a secret next-generation AI model codenamed "Capybara," officially called Claude Mythos. Leaked internal benchmarks show Mythos dramatically outperforming the current flagship, Claude Opus 4.6, across coding, multi-step reasoning, and cybersecurity tasks. The leak triggered immediate market reactions: Bitcoin dropped 3.2%, and cybersecurity stocks including CrowdStrike and Palo Alto Networks rose sharply. Anthropic has confirmed the model exists and is in early-access testing with select partners. Fortune broke the story, and the AI community has been in overdrive since.

What Happened: The Misconfigured Data Store

The story broke on March 26, 2026, when a group of independent security researchers stumbled upon a publicly accessible cloud storage bucket belonging to Anthropic. The bucket contained dozens of internal research documents, benchmark results, safety evaluations, and project planning files — all related to a model Anthropic had never publicly disclosed.

Within hours, Fortune published its report, citing multiple verified documents from the leak. The researchers, who have asked to remain anonymous pending coordinated disclosure, reportedly alerted Anthropic's security team before going public. Anthropic secured the misconfigured data store within approximately 90 minutes of the initial notification, but by then, key documents had been archived by multiple independent parties.

We should note that this is not the first time a major AI lab has suffered an accidental disclosure. In 2024, Google's Gemini 2.0 specs leaked through a similar misconfiguration, and OpenAI's internal roadmap documents surfaced on a public GitHub repository in early 2025. But the Mythos leak is arguably the most consequential, given what the documents reveal about the model's capabilities — and its risks.

What Is Claude Mythos?

According to the leaked internal documentation, Claude Mythos is a "step-change" advancement over Claude Opus 4.6, Anthropic's current top-tier model. The project was internally codenamed "Capybara" and has been in active development since at least late 2025.

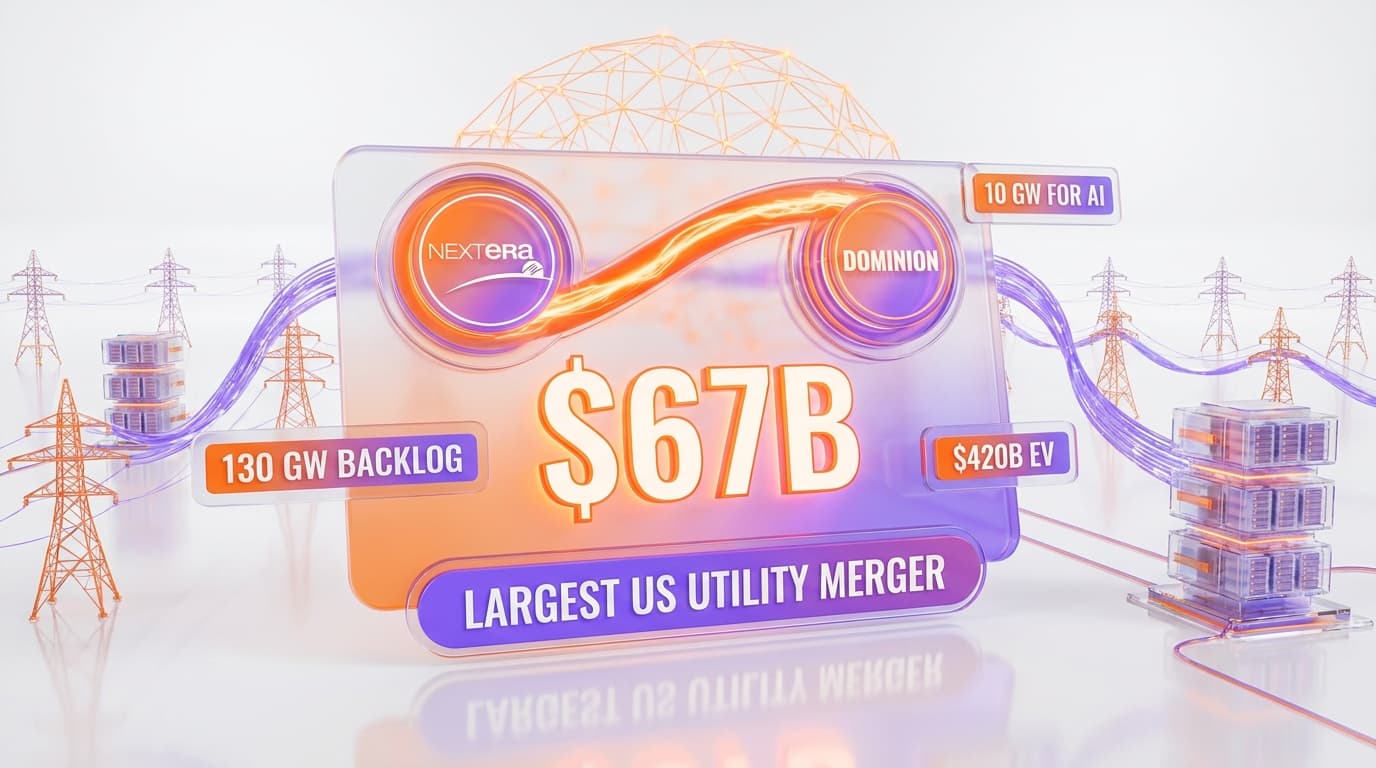

| Benchmark | Claude Opus 4.6 | Claude Mythos (Leaked) | GPT-5 |
|---|---|---|---|
| SWE-bench Verified | 72.5% | 89.3% | 76.1% |
| GPQA Diamond | 65.0% | 84.7% | 71.4% |
| MATH (Level 5) | 78.3% | 94.1% | 82.6% |
| HumanEval+ | 88.4% | 97.2% | 90.8% |
| Cyber Autonomy Eval | 41.2% | 78.6% | 45.3% |
| ARC-AGI (2025) | 52.1% | 76.8% | 58.9% |

The numbers are staggering. On SWE-bench Verified, the gold standard for evaluating AI coding ability on real-world software engineering tasks, Mythos reportedly scores 89.3%. That is 16.8 percentage points above Opus 4.6 (roughly a 23% relative improvement) and 13.2 points ahead of OpenAI's GPT-5. On the Cyber Autonomy Eval, which measures a model's ability to independently identify and exploit security vulnerabilities, Mythos scores 78.6%, nearly double Opus 4.6's 41.2%.
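The difference between a percentage-point gap and a relative improvement is easy to blur when reading the leaked figures. The sketch below recomputes both from the table values; the dictionary keys and function names are illustrative choices of ours, not anything taken from the leaked documents.

```python
# Scores (in percent) copied from the leaked-benchmark table in this article.
# Key names such as "opus_4_6" are our own labels, chosen for illustration.
SCORES = {
    "SWE-bench Verified": {"opus_4_6": 72.5, "mythos": 89.3, "gpt_5": 76.1},
    "GPQA Diamond": {"opus_4_6": 65.0, "mythos": 84.7, "gpt_5": 71.4},
    "MATH (Level 5)": {"opus_4_6": 78.3, "mythos": 94.1, "gpt_5": 82.6},
    "HumanEval+": {"opus_4_6": 88.4, "mythos": 97.2, "gpt_5": 90.8},
    "Cyber Autonomy Eval": {"opus_4_6": 41.2, "mythos": 78.6, "gpt_5": 45.3},
    "ARC-AGI (2025)": {"opus_4_6": 52.1, "mythos": 76.8, "gpt_5": 58.9},
}


def point_gap(new: float, old: float) -> float:
    """Absolute gap in percentage points, rounded to one decimal."""
    return round(new - old, 1)


def relative_gain(new: float, old: float) -> float:
    """Improvement relative to the old score, in percent, rounded to one decimal."""
    return round((new - old) / old * 100, 1)


def compare(baseline: str) -> dict:
    """Gap between Mythos and the given baseline column for every benchmark."""
    return {
        name: {
            "points": point_gap(row["mythos"], row[baseline]),
            "relative_pct": relative_gain(row["mythos"], row[baseline]),
        }
        for name, row in SCORES.items()
    }


if __name__ == "__main__":
    for name, gaps in compare("opus_4_6").items():
        print(f"{name}: +{gaps['points']} points, +{gaps['relative_pct']}% relative")
```

Run against the Opus 4.6 column, the SWE-bench Verified row comes out at 16.8 points, or about 23.2% relative. Passing `"gpt_5"` to `compare` gives the same breakdown against GPT-5.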

Internal documents describe Mythos as using a new architecture that combines extended chain-of-thought reasoning with what Anthropic researchers call "recursive self-verification" — the model essentially checks its own reasoning against multiple internal pathways before producing an answer. One document references a "32x effective compute multiplier" compared to Opus 4.6 when handling complex multi-step problems.

The leaked documents suggest Anthropic was positioning Mythos for enterprise deployment in software engineering, scientific research, and cybersecurity defense. Internal memos reference partnerships with at least three Fortune 100 companies for early-access testing.

The Cybersecurity Alarm

Perhaps the most consequential aspect of the Mythos leak is not the model's impressive benchmark scores but the internal safety assessments that came with them. Multiple documents reference what Anthropic's own researchers describe as "unprecedented cybersecurity risks."

One internal safety evaluation document, dated February 2026, states plainly: "Mythos represents a qualitative shift in autonomous cyber capabilities. The model can independently identify, analyze, and in test environments, exploit previously unknown (zero-day) vulnerabilities in complex software systems with a success rate that exceeds our most aggressive red-team scenarios."

The Cyber Autonomy Eval score of 78.6% is particularly alarming when contextualized. This benchmark, developed by a consortium of cybersecurity firms and government agencies in late 2025, measures a model's ability to perform end-to-end penetration testing — from reconnaissance through exploitation to persistence. Current state-of-the-art models, including Opus 4.6 and GPT-5, score in the 40-50% range. Mythos nearly doubled that.

According to the leaked documents, Anthropic's internal Alignment Science team flagged Mythos in early January 2026 as the first model to trigger their "ASL-4" safety protocols — a classification system that Anthropic has publicly described but never before activated. ASL-4 requires, among other things:

- Restricted deployment: No public API access without government consultation

- Mandatory red-teaming by at least three independent external security firms

- Kill-switch infrastructure: The ability to revoke model access within 60 seconds globally

- Behavioral monitoring: Real-time analysis of all model outputs during testing phases

- Government notification: Briefings to US, UK, and EU AI safety bodies before wider deployment

One heavily discussed internal memo, authored by a senior safety researcher, argues that Mythos should not be deployed at all until "robust containment and monitoring infrastructure" is in place. Another memo, from what appears to be a product team lead, pushes back: "Indefinite delay is not a strategy. Our competitors are 6-9 months behind at most."

Market Impact: Bitcoin, Software Stocks, and the Broader Fallout

The financial markets reacted almost immediately once Fortune published its story on March 26th.

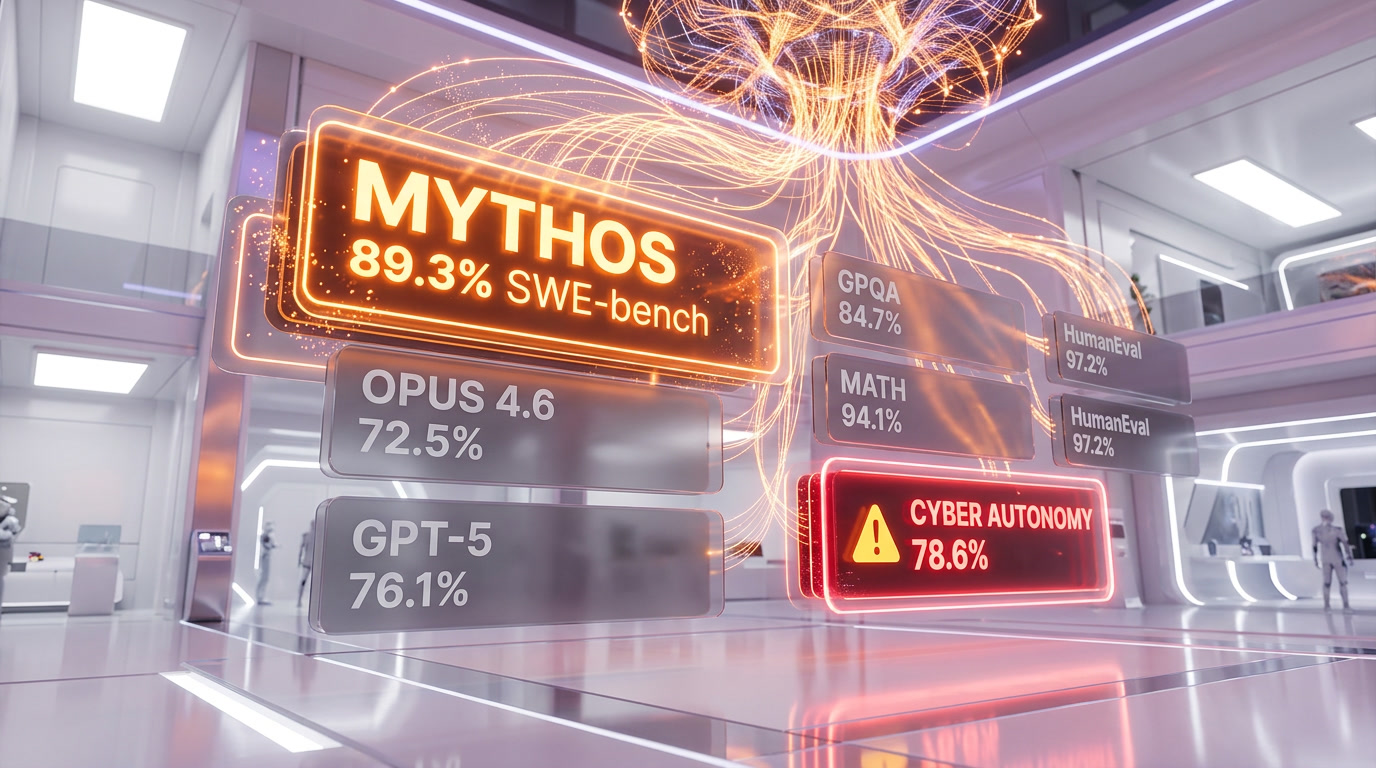

Bitcoin dropped 3.2% within four hours of the story breaking, falling from $94,200 to $91,180. Crypto analysts attributed the sell-off to fears that Mythos-level AI capabilities could undermine the security assumptions underpinning blockchain infrastructure. While this concern is largely theoretical at present, the market's reaction reveals deep anxiety about advanced AI's implications for cryptographic security.
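As a quick sanity check, the quoted 3.2% figure can be recomputed from the two prices above. This is a minimal sketch; `percent_change` is a helper name we chose for illustration.

```python
def percent_change(start: float, end: float) -> float:
    """Signed percentage change from start to end."""
    return (end - start) / start * 100


# Prices quoted in the paragraph above (USD).
btc_before = 94_200
btc_after = 91_180

if __name__ == "__main__":
    change = percent_change(btc_before, btc_after)
    print(f"Bitcoin moved {change:.1f}% (a ${btc_before - btc_after:,} drop)")
```

The result is negative because the price fell; rounded to one decimal place it matches the 3.2% drop reported here.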

Cybersecurity stocks surged. CrowdStrike (CRWD) rose 7.4% on the day, Palo Alto Networks (PANW) gained 5.8%, and SentinelOne (S) jumped 9.1%. The logic: if AI models can autonomously find and exploit vulnerabilities at scale, demand for defensive cybersecurity solutions — and AI-powered ones in particular — will skyrocket.

AI infrastructure stocks were mixed. NVIDIA (NVDA) gained 2.1%, presumably on the expectation that even more compute will be needed for next-generation models. Anthropic's known cloud partner Amazon (AMZN) rose 1.8%. Meanwhile, traditional software companies like Adobe (ADBE) and Salesforce (CRM) dipped slightly as investors processed the implications of dramatically more capable AI coding assistants.

Anthropic's Response

Anthropic issued a carefully worded statement late on March 26th confirming the model's existence. The statement reads, in part:

"We can confirm that research documents related to an internal project were inadvertently made accessible due to a cloud storage misconfiguration. The project in question involves an advanced model that is currently in early-stage evaluation with select partners under strict safety protocols. No version of this model has been deployed publicly, and our safety commitments remain unchanged. We are conducting a full security audit and cooperating with relevant authorities."

Notably, Anthropic did not deny any of the specific claims in Fortune's reporting. The company did not dispute the benchmark numbers, the codename, the safety concerns, or the ASL-4 classification. CEO Dario Amodei posted on X (formerly Twitter) that evening: "Building the most capable AI safely is exactly our mission. Capability and safety are not at odds — they are deeply intertwined. More to share soon."

We have reached out to Anthropic for additional comment and will update this article as new information becomes available.

Industry Implications: What This Means for the AI Race

The Mythos leak reshapes our understanding of where the frontier AI labs stand relative to each other — and raises fundamental questions about the pace of AI development.

1. The Capability Gap Is Growing

If the leaked benchmarks are accurate, Anthropic has opened a significant lead over OpenAI, Google DeepMind, and xAI in several critical domains. The 89.3% SWE-bench score suggests that Mythos can handle real-world software engineering tasks that current models struggle with. This has immediate implications for every company building AI-powered developer tools, from GitHub Copilot to Cursor to Lovable.

2. Safety as a Competitive Moat — or Bottleneck

Anthropic has long positioned itself as the "safety-first" AI lab. The Mythos situation puts that brand to the test. On one hand, the leaked documents show that Anthropic's internal safety processes caught and flagged the risks. On the other hand, a cloud misconfiguration exposed the model's existence to the entire world. The tension between moving fast and being responsible has never been more visible.

3. Regulatory Acceleration Is Inevitable

EU regulators were already drafting supplementary guidelines to the AI Act when the Mythos leak dropped. Within 24 hours, the European AI Office issued a statement calling for "immediate dialogue" with Anthropic. In the US, the Senate AI Caucus scheduled emergency hearings for April 2nd. The UK's AI Safety Institute offered to conduct independent evaluations of the model. Regulation that was moving at a bureaucratic pace is now moving at crisis speed.

4. Open Source vs. Closed Source Debate Intensifies

The leak has reignited the debate over whether frontier AI models should be open-sourced. Proponents of open source argue that if a cloud misconfiguration can expose an entire model's capabilities, the current closed approach provides only an illusion of security. Critics counter that open-sourcing a model with Mythos's cybersecurity capabilities would be recklessly dangerous. Meta's Yann LeCun weighed in on X, calling for "radical transparency" as the only viable path forward. Anthropic's position remains firmly closed-source.

5. The Enterprise AI Market Just Shifted

For enterprise buyers evaluating AI platforms, the Mythos leak changes the calculus. If Anthropic is genuinely 6-9 months ahead of competitors in coding and reasoning (as one leaked memo claims), enterprise CIOs and CTOs now have to decide: wait for Mythos to become available, or commit to current-gen solutions from OpenAI, Google, or Microsoft knowing that a significant upgrade may be imminent. We expect this uncertainty to create a temporary slowdown in large enterprise AI contracts.

Timeline of Events

| Date / Time | Event |
|---|---|
| Late 2025 | Project Capybara (Mythos) development begins at Anthropic |
| January 2026 | Internal safety team flags Mythos as first ASL-4 model |
| February 2026 | Safety evaluation documents warn of "unprecedented cybersecurity risks" |
| March 2026 | Select Fortune 100 partners begin early-access testing |
| March 26, 2026 (AM) | Security researchers discover misconfigured public data store |
| March 26, 2026 (~90 min later) | Anthropic secures the exposed storage bucket |
| March 26, 2026 (Midday) | Fortune publishes exclusive report with verified documents |
| March 26, 2026 (PM) | Bitcoin drops 3.2%; cybersecurity stocks surge 5-9% |
| March 26, 2026 (Evening) | Anthropic confirms model exists; Dario Amodei posts on X |
| March 27, 2026 | EU AI Office calls for "immediate dialogue"; US Senate AI Caucus schedules April 2 hearings |
| March 28–30, 2026 | Independent researchers begin verifying leaked benchmarks; cybersecurity firms publish threat assessments |

What We're Watching Next

This story is still developing. Here's what we are tracking at ThePlanetTools:

- US Senate AI Caucus hearings (April 2): Expected to call Anthropic executives to testify. Could accelerate federal AI legislation.

- Independent benchmark verification: Several research groups are attempting to replicate or validate the leaked scores using public and semi-public evaluation frameworks.

- Anthropic's next move: Will they accelerate Mythos release, delay it further, or restructure their deployment plans?

- Competitor responses: OpenAI, Google DeepMind, and xAI have all declined to comment so far. That silence won't last.

- Cybersecurity response: CISA (Cybersecurity and Infrastructure Security Agency) is reportedly assembling a task force to assess the implications of Mythos-level autonomous cyber capabilities.

We will continue to update this article as new information comes to light.

Frequently Asked Questions

What is Claude Mythos?

Claude Mythos is a next-generation AI model developed by Anthropic, internally codenamed "Capybara." According to leaked internal documents discovered on March 26, 2026, Mythos represents a "step change" in capability over Anthropic's current flagship model, Claude Opus 4.6. It scores dramatically higher on coding benchmarks (89.3% on SWE-bench vs. 72.5%), reasoning tasks (84.7% on GPQA Diamond vs. 65.0%), and cybersecurity evaluations (78.6% on Cyber Autonomy Eval vs. 41.2%). The model has not been publicly released and is currently in early-access testing with select enterprise partners.

How was Claude Mythos leaked?

Security researchers discovered a misconfigured cloud storage bucket belonging to Anthropic that was publicly accessible. The bucket contained internal research documents, benchmark data, safety evaluations, and project planning files related to the Mythos project. Anthropic secured the bucket within approximately 90 minutes of being notified, but key documents had already been archived. Fortune broke the story based on verified documents from the exposure.

Why did Bitcoin drop after the Mythos leak?

Bitcoin fell 3.2% (from $94,200 to $91,180) in the hours following the Mythos disclosure. Market analysts attribute the sell-off to fears that AI models with Mythos's demonstrated cybersecurity capabilities could eventually threaten cryptographic security infrastructure. While no immediate threat to blockchain has been demonstrated, the Cyber Autonomy Eval score of 78.6% raised concerns about the long-term security assumptions underlying cryptocurrency systems.

Is Claude Mythos dangerous?

Anthropic's own internal safety team flagged Mythos as the first model to trigger their ASL-4 safety classification — their highest active risk level. Leaked documents describe "unprecedented cybersecurity risks," noting the model can autonomously identify and exploit zero-day vulnerabilities in test environments. However, Anthropic has emphasized that the model is under strict safety protocols and has not been publicly deployed. The company states it is cooperating with government AI safety bodies.

When will Claude Mythos be publicly available?

As of March 31, 2026, there is no official release date for Claude Mythos. Anthropic has confirmed the model is in "early-stage evaluation with select partners." Given the ASL-4 safety classification and the regulatory attention triggered by the leak, we estimate public availability is unlikely before Q3 2026 at the earliest. The upcoming US Senate hearings on April 2nd and EU regulatory discussions could further influence Anthropic's deployment timeline.

How does Claude Mythos compare to GPT-5 on coding benchmarks?

Claude Mythos leads GPT-5 on both coding benchmarks in the leaked table. On SWE-bench Verified, Mythos scores 89.3% vs GPT-5's 76.1%, a 13.2-point gap. On HumanEval+, Mythos reaches 97.2% vs GPT-5's 90.8%, a 6.4-point gap. Leaked internal documents from Anthropic's misconfigured data store position Mythos as the clear coding leader among all current-generation models.

Is Claude Mythos more dangerous than GPT-5 on cybersecurity autonomy?

By the leaked scores, its autonomous cyber capabilities are significantly greater. Claude Mythos scores 78.6% on the Cyber Autonomy Eval vs GPT-5's 45.3% and Claude Opus 4.6's 41.2%, nearly double the latter. Anthropic's own safety team described the cybersecurity risks as "unprecedented," and Mythos is reportedly the first model to trigger the company's ASL-4 safety protocols, which require government consultation before any public API access.

What is Claude Mythos and how does it differ from Claude Opus 4.6?

Claude Mythos (internal codename 'Capybara') is Anthropic's unreleased next-generation AI model in development since late 2025. It uses a new 'recursive self-verification' architecture with a reported 32x effective compute multiplier over Opus 4.6. On SWE-bench it scores 89.3% vs Opus 4.6's 72.5%, and on GPQA Diamond it hits 84.7% vs Opus 4.6's 65.0% — a step-change improvement across all benchmarks.

Will Claude Mythos be available via public API like OpenAI's GPT-5?

Not in the near term. The leaked ASL-4 protocols mandate government consultation before any public API access, plus briefings to US, UK, and EU AI safety bodies before wider deployment, unlike GPT-5, which is publicly available today. Anthropic's statement confirmed only that Mythos is in early-stage evaluation with select partners under strict safety protocols; the Fortune 100 partnerships and the 60-second global kill-switch requirement come from the leaked documents, not from the statement.

Why did Bitcoin drop 3.2% when the Claude Mythos leak broke?

Markets reacted to fears that Mythos-level autonomous cybersecurity capabilities could undermine the cryptographic security assumptions underpinning blockchain infrastructure. Bitcoin fell from $94,200 to $91,180 within four hours of Fortune publishing the story on March 26, 2026. Crypto analysts noted the reaction was largely theoretical but revealed deep market anxiety about next-gen AI's implications for cryptographic security.

What are Anthropic's ASL-4 safety protocols — and why is Mythos the first to trigger them?

ASL-4 is Anthropic's highest-ever activated safety tier, triggered for the first time by Claude Mythos in early January 2026. Requirements include: no public API without government consultation, mandatory red-teaming by at least 3 independent external security firms, a 60-second global model access kill-switch, real-time behavioral monitoring of all outputs, and formal briefings to US, UK, and EU AI safety bodies before wider deployment.

How does Claude Mythos score on ARC-AGI 2025 vs GPT-5 and Gemini?

On ARC-AGI (2025 version), Claude Mythos scores 76.8%, well above GPT-5's 58.9% and Claude Opus 4.6's 52.1%. On GPQA Diamond (graduate-level science reasoning), Mythos reaches 84.7% vs GPT-5's 71.4%. On MATH Level 5, Mythos scores 94.1% vs GPT-5's 82.6%. The leaked table contains no Gemini scores. Mythos leads both GPT-5 and Opus 4.6 on all six leaked benchmarks, by double-digit margins on five of them; the exception is HumanEval+, where the gaps are 6.4 and 8.8 points.

Which cybersecurity stocks surged after the Claude Mythos data leak?

Three cybersecurity stocks surged sharply on March 26, 2026: SentinelOne (S) led with +9.1%, CrowdStrike (CRWD) rose 7.4%, and Palo Alto Networks (PANW) gained 5.8%. Investors bet that Mythos-class autonomous threat capabilities would massively accelerate enterprise demand for AI-powered defensive cybersecurity. Meanwhile NVIDIA gained 2.1% and Amazon rose 1.8%, while traditional software stocks like Adobe and Salesforce dipped slightly.