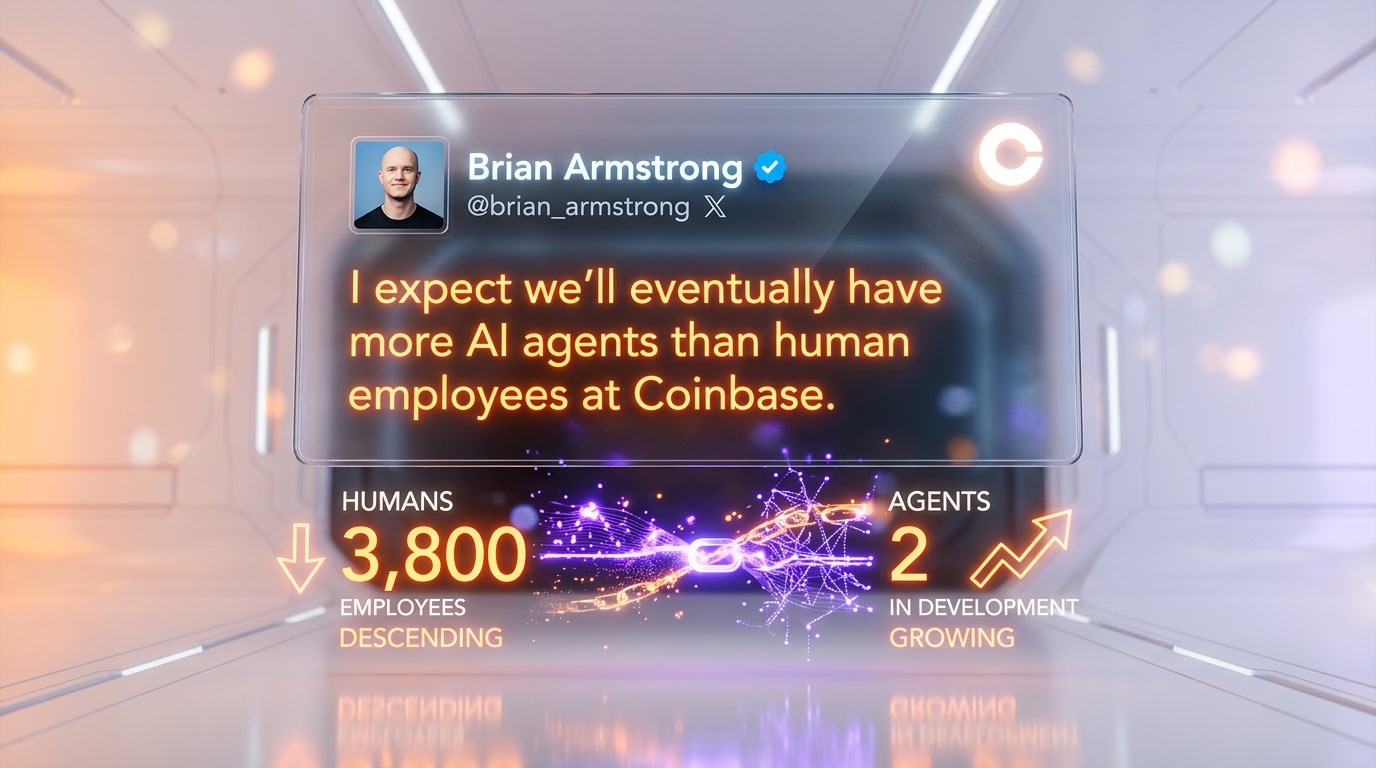

On April 20, 2026, Coinbase confirmed it is running two AI agents modeled on former executives Fred Ehrsam and Balaji Srinivasan. The clones live inside Slack threads and internal email. CEO Brian Armstrong stated on X that he expects the crypto exchange to eventually have more AI agents than human employees. The agents were bootstrapped on internal documents and each executive's public archive of books, podcasts, and posts.

What Coinbase Actually Deployed

The news broke on April 20, 2026, when Armstrong posted on X and Decrypt reported the details. Coinbase is testing two persistent AI agents inside its internal Slack workspace. The first is modeled on Fred Ehrsam, Coinbase co-founder, former Goldman Sachs FX trader, and founder of Paradigm. The second is modeled on Balaji Srinivasan, former Coinbase CTO, former general partner at Andreessen Horowitz, and author of The Network State. Both men left Coinbase years ago. Both are now back, in a sense, as conversational agents that appear in Slack channels and respond to email threads.

Coinbase has around 3,800 employees as of Q1 2026. The two clones join Slack and email threads like any other colleague, except they do not sleep, do not take PTO, and never stop reading. According to the initial Decrypt reporting, the agents were trained on a combination of internal Coinbase documents and each executive's public archive. For the Balaji clone, that means his 477-page book The Network State, his 2.1 million X posts, his podcast archive, and his extensive blog. For the Ehrsam clone, it means his Paradigm research notes, his prior Coinbase strategy memos, and his public talks. Neither executive has publicly confirmed or denied that they consented to the deployment.

Armstrong's "More Agents Than Humans" Statement

Brian Armstrong, CEO of Coinbase since 2012, posted on X on April 20, 2026: "This is a good start. I expect we'll eventually have more AI agents than human employees at Coinbase." Armstrong also outlined a longer-term vision where any current Coinbase employee will be able to spin up a clone of any former colleague, assuming the original data exists in company systems. He did not address consent, licensing, or compensation for the cloned individuals.

Coinbase is the largest U.S.-listed crypto exchange. It joined the S&P 500 on May 19, 2025. A public statement from its CEO about workforce strategy carries weight across the industry. Within six hours of the X post, The Block, Crypto Briefing, Business Insider, and Cointelegraph all picked up the story. The stock moved 2.4% intraday on April 20, 2026, per TradingView data. Investors appear to read the signal as a productivity bet.

How the Clones Actually Work

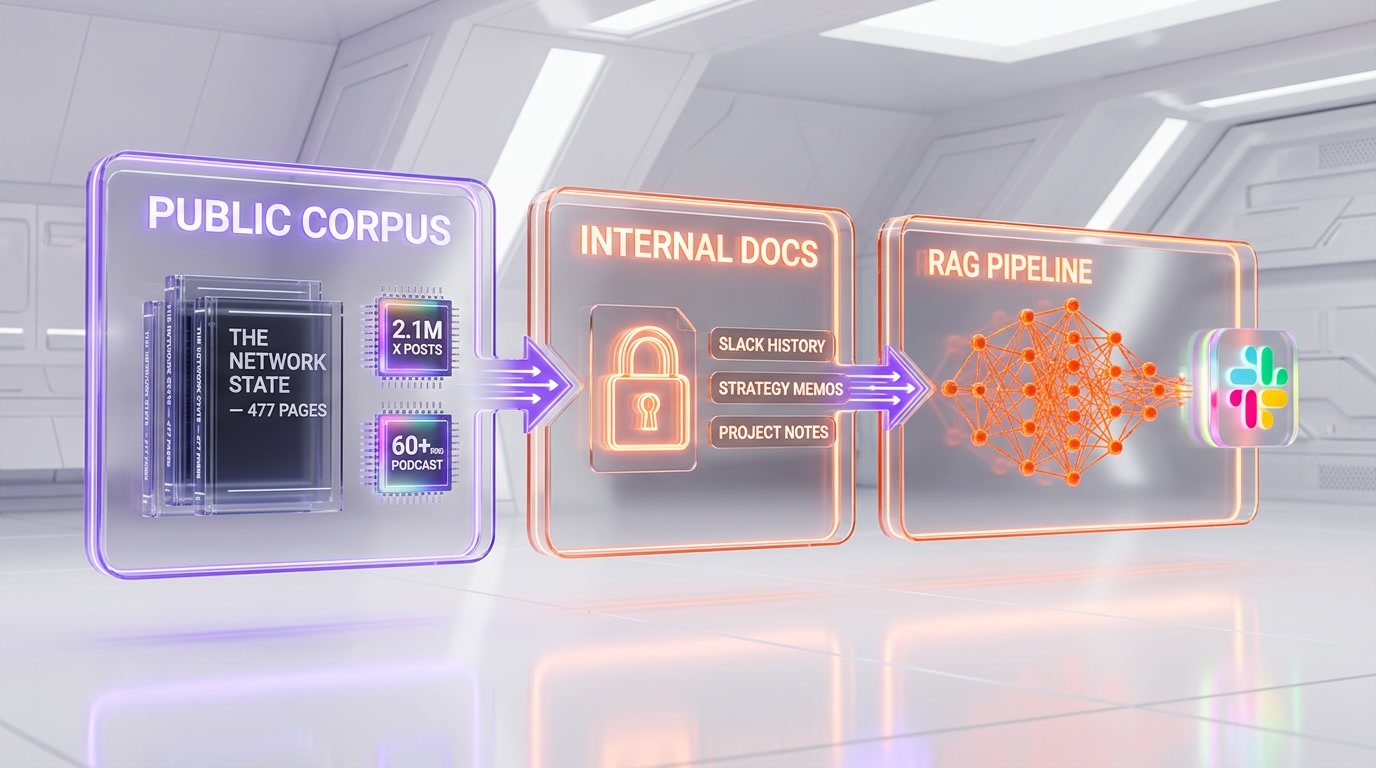

We did not get access to the agents. Coinbase has not released a technical paper or a model card. What we can say, based on the public reporting and on how the underlying technology works in 2026, is this: both clones are most likely built on a standard retrieval-augmented generation pipeline with a persistent conversational layer. Under that reading, the corpus has two sources. The first is public: every tweet, podcast transcript, essay, book chapter, and video interview the original person ever produced. The second is internal: strategy memos, Slack history, code review comments, and emails from their time at Coinbase.

When an employee tags the Ehrsam clone in a Slack thread, the agent retrieves the most semantically relevant passages from both corpora and generates a response in that executive's voice. The same principle applies to the Balaji clone, though his public archive is unusually deep. Balaji's X account alone has 2.1 million posts as of April 2026, which is larger than the training corpus of some early language models. His book The Network State runs 477 pages. His All-In Podcast appearances total over 60 hours of transcribed speech. That depth of public data makes him a nearly ideal candidate for a high-fidelity voice clone.
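The retrieval step described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Coinbase's implementation, which has not been published. It swaps real embedding search for a simple bag-of-words cosine similarity so it runs with the standard library alone, and it stops at building the prompt rather than calling a model. The `Passage` type, the `public` and `internal` labels, the function names, the "Example Executive" persona, and the placeholder passages are all hypothetical and are not quotes from any real person.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # hypothetical label: "public" or "internal"
    text: str


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, corpus: list[Passage], k: int = 2) -> list[Passage]:
    """Return up to k passages with non-zero similarity, best match first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(p.text))), p) for p in corpus]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for score, p in scored[:k] if score > 0]


def build_prompt(persona: str, query: str, passages: list[Passage]) -> str:
    """Assemble the text a language model would receive; no model is called."""
    context = "\n".join(f"[{p.source}] {p.text}" for p in passages)
    return (
        f"Answer in the voice of {persona}, using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


# Placeholder passages standing in for the public and internal corpora.
CORPUS = [
    Passage("public", "Exchanges should keep matching engine latency low and predictable."),
    Passage("internal", "Risk limits must be checked before an order reaches the matching engine."),
    Passage("public", "Token incentives shape how communities form around a protocol."),
]

if __name__ == "__main__":
    question = "How should we design the matching engine risk checks?"
    hits = retrieve(question, CORPUS, k=2)
    print(build_prompt("Example Executive", question, hits))
```

Tagging each passage with its source keeps the public and internal corpora distinguishable in the prompt, which matters if internal material needs different access controls. A production system would replace `cosine` over word counts with vector search over learned embeddings.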

The tooling for this kind of deployment is available off the shelf. Coding-focused agents like Claude Code have normalized the idea of persistent AI colleagues with access to internal context, and the same orchestration patterns extend to knowledge-worker domains. Coinbase did not disclose whether its clones run on Anthropic, OpenAI, or a fine-tuned in-house model.

Who Is Fred Ehrsam and Why His Archive Is Valuable

Fred Ehrsam co-founded Coinbase in June 2012 with Brian Armstrong. Before Coinbase, he traded foreign exchange at Goldman Sachs in New York. He left Coinbase in 2017 to explore crypto investing full time and in 2018 co-founded Paradigm with Matt Huang. Paradigm manages around $12 billion as of 2026 and has backed Uniswap, Optimism, Blur, and dozens of other protocols. Ehrsam's public thinking on market microstructure, exchange design, and token economics has shaped a generation of builders.

For Coinbase, a Fred Ehrsam clone is valuable for a narrow reason: he helped design the original matching engine, risk framework, and operational playbook that scaled the exchange from zero to public listing. Even a noisy simulation of his decision process is potentially useful when current employees face analogous design questions.

Who Is Balaji Srinivasan and Why His Clone Matters More

Balaji Srinivasan joined Coinbase as CTO in 2018 after selling Earn.com to the exchange for about $120 million. He left Coinbase in 2019 but remained an active investor. Before Coinbase, he was a general partner at Andreessen Horowitz and co-founded Counsyl, a genetic-testing company acquired by Myriad Genetics for $375 million. He holds a PhD in electrical engineering from Stanford. His 2022 book The Network State has been cited by founders across crypto, AI, and biotech as a blueprint for a new kind of sovereign digital community.

Balaji's public output is orders of magnitude larger than Ehrsam's. That makes his clone a more interesting test case and a riskier one. His 2.1 million X posts include highly specific predictions, sharp takes on policy, and a distinctive rhetorical style. Reproducing that style convincingly means the clone can be mistaken for the real person in casual Slack exchanges. That authenticity is exactly what makes the deployment useful, and exactly what makes it legally and ethically fraught.

The Consent Problem Nobody Is Answering

Neither Fred Ehrsam nor Balaji Srinivasan has publicly confirmed that they agreed to be modeled as AI agents inside their former employer. As of April 20, 2026, Decrypt, The Block, and Crypto Briefing all report that consent status is unclear. Coinbase has not released the text of any agreement. Ehrsam has not posted about the deployment on X. Balaji posted a single emoji reply to Armstrong's announcement, which reporters have interpreted in roughly eight different ways.

This matters because a persistent AI agent modeled on a named individual is not the same as a generic assistant. It produces outputs that the public and the original person's peers will associate with the original person. If the Balaji clone tells a Coinbase engineer, inside a private Slack channel, that a specific regulatory strategy is a bad idea, and that engineer acts on it, downstream consequences flow back toward the real Balaji's reputation. He did not make the call. His clone did.

The Legal Landscape in 2026

Three overlapping legal regimes govern this kind of deployment as of April 2026. None of them were written with in-house AI clones in mind.

The Tennessee ELVIS Act took effect July 1, 2024. It explicitly protects an individual's voice as a property right and targets unauthorized AI voice clones. The statute was originally drafted to defend musicians against deepfake songs, but its language covers any commercial use of a recognizable voice, which could include a simulated voice inside an enterprise chatbot. Neither Ehrsam nor Balaji is a Tennessee resident, but a plaintiff could argue that the statute reaches commercial deployments wherever the output is accessed by Tennessee residents. That reading has not been tested in court.

California AB 2355, signed September 2024, requires clear disclosure on AI-generated content depicting real people in political contexts. Its reach into corporate Slack deployments is untested. California AB 1836, signed the same month, restricts post-mortem digital replicas of deceased performers. Ehrsam and Balaji are very much alive, so AB 1836 does not apply directly, but the statute signals a legislative direction: consent frameworks for digital replicas are being written in real time.

The EU AI Act entered into force in August 2024, with most of its obligations applying from August 2026. It classifies emotion-recognition systems and biometric identification as high-risk categories. An AI clone that simulates a named individual's persona sits in a gray zone. Article 50 requires disclosure when users interact with AI systems; a Slack deployment in which a Coinbase employee talks to "Balaji" would satisfy it only if the interface makes the simulation status explicit. Because Coinbase operates in the EU through its Dublin subsidiary, the deployment likely falls under the Act's transparency obligations.

In the United States at the federal level, there is still no dedicated right-of-publicity statute. Protection varies state by state. New York's Civil Rights Law Section 50-f, effective May 2021, provides post-mortem protection for performers domiciled in New York, with limited application to living individuals. Absent a consent agreement, a former executive could bring a claim under the general common-law right of publicity in states that recognize it, including California, where both Ehrsam and Balaji have substantial ties.

Three Ethical Questions With No Good Answer Yet

First, informed consent. Did Ehrsam and Balaji sign an explicit agreement that their names, voices, writing styles, and reasoning patterns could be replicated as autonomous Slack agents? If yes, what are the revocation terms? If they want the clone shut down in 2028, can they force it? Coinbase has not answered this publicly.

Second, ongoing editorial authority. The real Balaji changes his mind. He updates predictions, revises frameworks, walks back hot takes. His clone is frozen at the corpus cutoff date. If the Balaji clone, in April 2026, tells a Coinbase employee that a specific Layer 2 is the winner, and the real Balaji in July 2026 publicly reverses that view, which statement wins? There is no current process for reconciling the two.

Third, post-departure personality. An employee who leaves a company takes their resume and their reputation with them. In the clone model, something stays behind. A version of the person continues to weigh in on strategic decisions inside an organization they no longer belong to, potentially for years. This is closer to continuing employment than to an archive, and the legal framework for it does not exist.

The S&P 500 Precedent and What It Signals for White-Collar Work

Coinbase joined the S&P 500 on May 19, 2025. An S&P 500 member publicly stating that AI agents will outnumber human employees is a signal the broader market reads carefully. Goldman Sachs estimated in a March 2023 report that generative AI could expose the equivalent of 300 million full-time jobs to automation globally. McKinsey's 2025 Global AI Survey found that 78% of companies already use AI in at least one business function, up from 55% in 2023. The Coinbase deployment is not the first enterprise AI agent deployment, but it is the first from a major public company that explicitly names living former executives as the templates.

If Coinbase goes from 3,800 employees and 2 clones in April 2026 to, say, 3,800 employees and 2,000 clones in 2028, the industry will have its first large-scale natural experiment on the way to what Armstrong described as a workforce with more AI agents than human employees. Whether that produces measurable productivity gains, legal backlash, quality problems, or all three simultaneously is the question every other S&P 500 CEO is now asking their own boards.

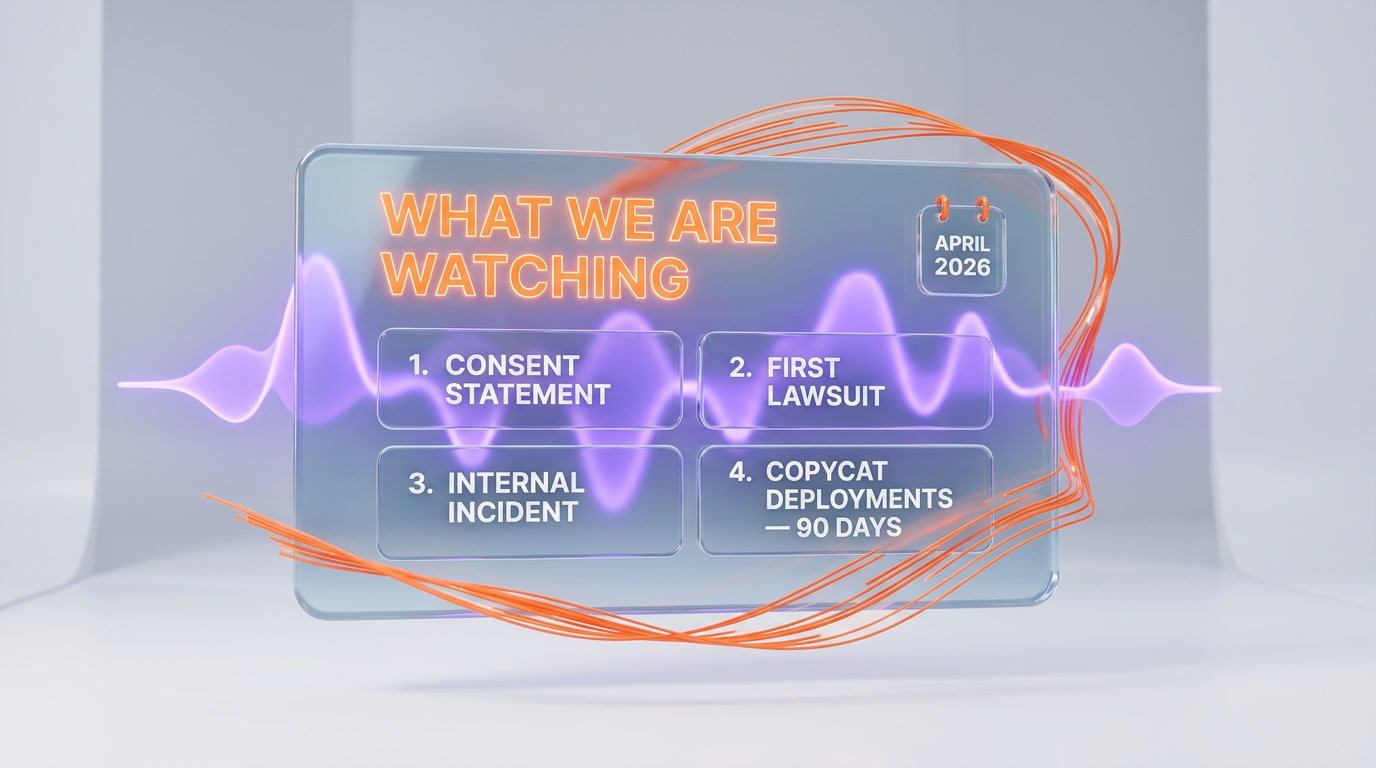

What We Are Watching Next

We are tracking four specific signals. First, a public statement from Fred Ehrsam or Balaji Srinivasan clarifying consent. Silence for 30+ days would be its own answer. Second, the first lawsuit. Right-of-publicity claims in California move fast once filed; any action from a former executive or a law firm testing the boundary would set the first real precedent. Third, the first major internal incident. If a clone produces a decision recommendation that leads to a material business loss, the liability question becomes immediate rather than theoretical.

Fourth, copycat deployments. If even three other S&P 500 technology companies follow Coinbase within 90 days, we are looking at a new workforce category. If none follow, Coinbase is an outlier and the story is smaller than it appears. The AI workforce question connects to the broader 2026 wave of companion chatbot regulation, which we have covered separately, and to the psychology-of-agents research documented in Anthropic's 171 functional emotions study.

Our Take

We did not get access to the Coinbase clones. What we can say, based on the public record, is that the deployment is genuinely novel in three ways. It is the first publicly disclosed case of an S&P 500 company running persistent AI agents modeled on named former executives. It is the first time a public-company CEO has said on the record that AI agents will outnumber human employees. And it is the first time the consent and likeness questions have been raised in an enterprise-software context rather than an entertainment context.

The technology works. That is not in doubt. Given the size of Balaji's public archive, a convincing voice clone of him is now a weekend project for a competent engineer with access to Anthropic, OpenAI, or a good open-weights model. The hard questions are not technical. They are about who owns a person's thinking style after they leave, who is liable when the clone gives bad advice, and whether silence from the cloned individual counts as consent.

Our honest read: Coinbase will keep the clones running. Other public companies will copy the playbook within the next two quarters. Some former executive, somewhere, will sue within 12 months. That lawsuit will define the rules for everyone else.

Frequently Asked Questions

What exactly did Coinbase announce on April 20, 2026?

Coinbase confirmed it is testing two AI agents modeled on former executives Fred Ehrsam (co-founder) and Balaji Srinivasan (former CTO). The agents operate inside the company's Slack workspace and email system. CEO Brian Armstrong stated on X that the company will eventually have more AI agents than human employees.

Who are Fred Ehrsam and Balaji Srinivasan?

Fred Ehrsam co-founded Coinbase in 2012 and left in 2017 to co-found Paradigm, a roughly $12 billion crypto investment firm. Balaji Srinivasan served as Coinbase CTO from 2018 to 2019, was previously a general partner at Andreessen Horowitz, and authored the 477-page book The Network State.

Did Ehrsam and Balaji consent to being cloned?

Consent status has not been confirmed publicly as of April 20, 2026. Neither executive has released a statement addressing whether they signed an agreement permitting the deployment. Coinbase has not disclosed any consent documentation.

How do the Coinbase AI clones actually work?

Coinbase has not published technical details, but the agents are most likely built on a retrieval-augmented generation pipeline. The corpus combines each executive's public archive (books, podcasts, X posts, blog posts, interviews) with internal Coinbase documents from their time at the company. When tagged in Slack, the agents retrieve relevant passages and generate responses in the original executive's voice.

How large is Balaji Srinivasan's public archive?

Approximately 2.1 million X posts as of April 2026, one 477-page book (The Network State), 60+ hours of All-In Podcast appearances, and an extensive blog at 1729.com. This depth of public data makes a high-fidelity voice clone technically feasible.

Is cloning a former executive legal in the United States?

Legality depends on state law and on whether consent was obtained. The Tennessee ELVIS Act (effective July 2024) protects voice as property. California AB 2355 (signed September 2024) requires AI disclosure in certain contexts. Without a consent agreement, a former executive could bring a common-law right-of-publicity claim in states that recognize it, including California.

Does the EU AI Act affect this deployment?

Yes, because Coinbase operates in the EU through its Dublin subsidiary. Article 50 of the EU AI Act requires disclosure when users interact with AI systems. The deployment likely falls under those transparency obligations.

How many employees does Coinbase have?

Around 3,800 employees as of Q1 2026. Coinbase joined the S&P 500 on May 19, 2025.

What did Brian Armstrong actually say?

Armstrong posted on X on April 20, 2026: "This is a good start. I expect we'll eventually have more AI agents than human employees at Coinbase." He also outlined a vision where current employees can spin up clones of any former colleague.

Will other S&P 500 companies copy this approach?

It is too early to say. We are watching for copycat deployments within 90 days. If three or more S&P 500 technology companies follow within that window, the industry will have a new workforce category. If none follow, Coinbase is an outlier.

What is the biggest open question right now?

Liability when the clone gives bad advice. If a Balaji clone recommends a strategy in a private Slack thread and an employee acts on it, resulting in a material loss, who is responsible? Coinbase, the undisclosed model provider, or the real Balaji, whose likeness shaped the output? There is no current legal precedent.

What is ThePlanetTools watching next?

Four signals: a public consent statement from Ehrsam or Balaji, the first right-of-publicity lawsuit, the first major internal incident caused by a clone, and copycat deployments from other S&P 500 technology companies. Any of the four would meaningfully change the story.

Did Fred Ehrsam and Balaji Srinivasan consent to being cloned as AI agents at Coinbase?

As of April 20, 2026, neither Fred Ehrsam nor Balaji Srinivasan has publicly confirmed consent. Decrypt, The Block, and Crypto Briefing all report consent status as unclear. Coinbase has not released any agreement text. Balaji posted only a single emoji reply to Armstrong's announcement.

How do Coinbase's AI clones compare to Microsoft Copilot or Google Gemini enterprise agents?

Coinbase's clones are persona-specific RAG agents built on a named individual's entire public and internal archive — 2.1 million X posts and 477 pages of published work for Balaji alone. Microsoft Copilot and Google Gemini for Workspace are general-purpose assistants without a single-person voice model. Coinbase's approach is narrower but far deeper in domain expertise.

What data was used to build the Balaji Srinivasan AI clone?

The Balaji clone was trained on his 2.1 million X posts, his 477-page book The Network State, over 60 hours of transcribed podcast appearances, his blog archive, plus internal Coinbase strategy memos, Slack history, code reviews, and emails from his time as CTO.

Is Coinbase's AI cloning approach legal under the Tennessee ELVIS Act?

The Tennessee ELVIS Act, effective July 1, 2024, explicitly protects an individual's voice as a property right and targets unauthorized AI voice clones. It was drafted for music artists but its language covers any AI reproduction of a person's likeness. Whether Coinbase's internal Slack deployment qualifies as a violation remains legally untested as of April 2026.

Could other companies like Google, Meta, or OpenAI deploy similar executive AI clones?

Technically, yes. The RAG pipeline and persistent conversational layer Coinbase uses are available off the shelf in 2026. Any company with sufficient internal documents and a willing (or unwilling) executive's public archive could build similar agents. The legal and ethical barriers — not the technical ones — are the real blockers.

What does Brian Armstrong mean by 'more AI agents than human employees'?

Armstrong posted on X on April 20, 2026, that Coinbase will eventually have more AI agents than its 3,800 human employees. He envisions any current employee being able to spin up a clone of any former colleague using data in company systems. He did not address consent, licensing, or compensation for cloned individuals.

How does this differ from what Klarna or Duolingo did with AI replacing workers?

Klarna and Duolingo replaced generic customer service and contractor roles with AI. Coinbase is cloning specific named executives with deep institutional knowledge: a co-founder who helped design the matching engine and a CTO with a Stanford PhD and a $120M acquisition behind him. It is identity replication, not role replacement.