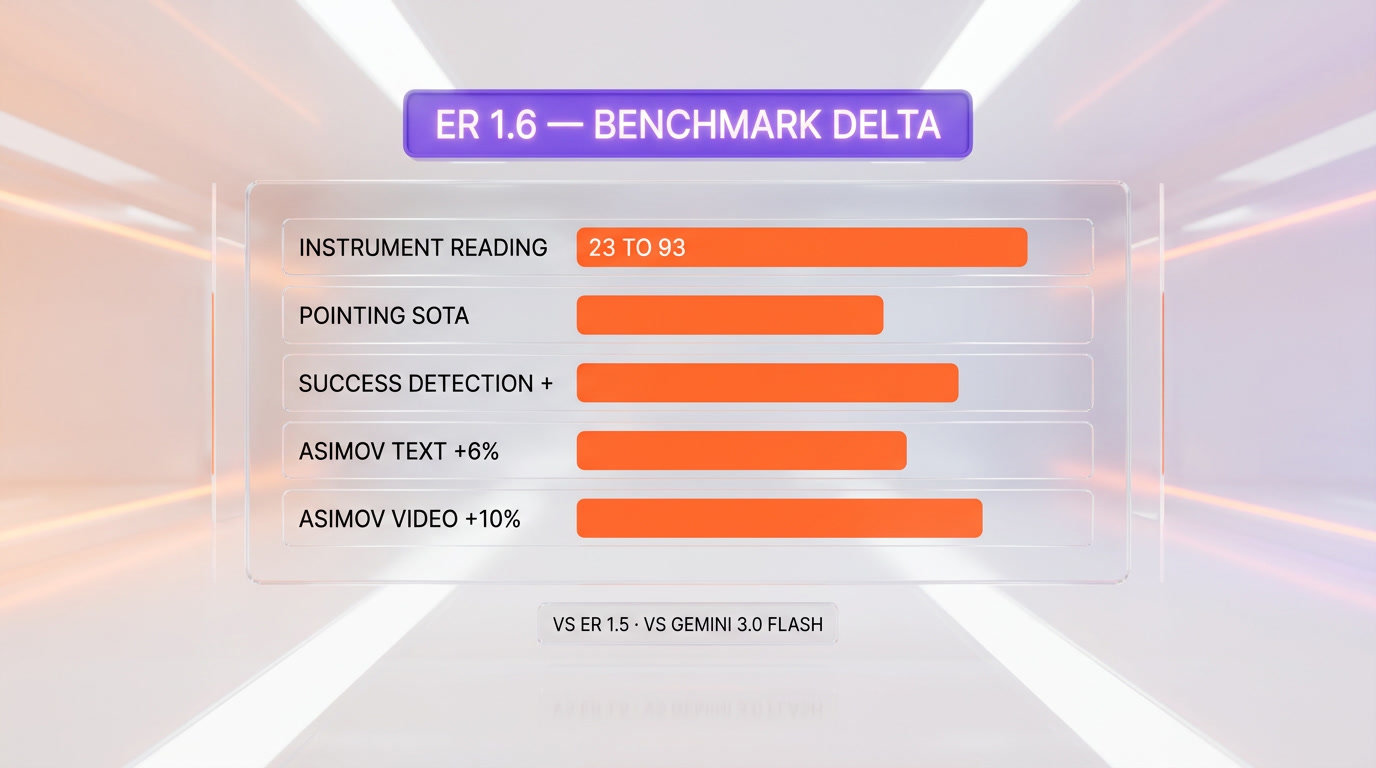

Gemini Robotics-ER 1.6 shipped on April 14, 2026 as Google DeepMind's upgraded embodied-reasoning model for robots. Available via the Gemini API and Google AI Studio, it improves instrument-reading accuracy from 23% on ER 1.5 to 86% baseline and 93% with agentic vision enabled, gains +6% text and +10% video on the ASIMOV safety benchmark versus Gemini 3.0 Flash, and reaches state-of-the-art on 15 academic embodied-reasoning benchmarks including ERQA and Point-Bench. Partners already shipping on it: Boston Dynamics (Spot and all-electric Atlas), Apptronik (Apollo), and Agility Robotics (Digit). Our score after reading the tech report and partner integrations: 9.3 out of 10 — the biggest single-version leap in a physical-AI brain model to date.

What just shipped on April 14, 2026

Google DeepMind released Gemini Robotics-ER 1.6 on April 14, 2026, a direct upgrade to the Gemini Robotics-ER 1.5 model it launched in September 2025. The "ER" stands for Embodied Reasoning: this is the high-level brain that runs perception, spatial reasoning, task planning and success detection for robots, not the motor-control model (that is Gemini Robotics 1.5, a separate vision-language-action, or VLA, system). ER 1.6 functions as a strategic orchestrator sitting above action models, which means robotics partners can slot it into existing hardware stacks without swapping the low-level controllers.

Availability from day one: Gemini API and Google AI Studio, with a Colab notebook and prompting guide published alongside the release. If you build robots — or if you build software for people who do — this is the frontier physical-AI model to benchmark against this week.

Gemini Robotics-ER vs Gemini Robotics — the split that matters

Before the benchmarks, the nomenclature. Google DeepMind ships two distinct robotics models and the difference defines how you use them:

- Gemini Robotics-ER 1.6 — the reasoning model. Spatial understanding, task planning, success detection. High-level, low-latency, no motor output. This is what just launched.

- Gemini Robotics 1.5 — the vision-language-action (VLA) model. Takes reasoning output and produces motor commands. Launched in September 2025.

The dual-model architecture is the whole point. ER 1.6 decides what to do and whether it worked; the VLA decides how the joints move. This separation matters because it lets Google ship improvements to the reasoning brain (ER 1.6 today) without regressing the motor layer, and it lets partners like Boston Dynamics use their own low-level controllers on Spot and Atlas while still benefiting from the DeepMind reasoning stack.

What's new in ER 1.6 — the improvements that matter

Instrument reading — a brand new capability

The headline new feature is instrument reading: the ability to interpret analog pressure gauges, sight glasses, vertical level indicators, thermometers and digital readouts during facility inspections. This is not a benchmark bump — it is a capability that did not exist in ER 1.5 at production accuracy.

The numbers on Google DeepMind's internal instrument-reading evaluation:

- ER 1.5: 23% accuracy

- Gemini 3.0 Flash: 67% accuracy

- ER 1.6 (baseline): 86% accuracy

- ER 1.6 with agentic vision enabled: 93% accuracy

The jump from 23% to 93% is a phase change. It moves instrument reading from research demo to deployable inspection workload. Google credits close collaboration with Boston Dynamics for the use case — the team discovered it while working on Spot's autonomous facility-inspection loops.

Agentic vision — the mechanism

"Agentic vision" is the technique that drives the instrument-reading jump from 86% to 93%. The model autonomously zooms into image regions it flags as critical, executes code for proportional estimation (reading the needle position on an analog gauge, for example), and integrates world-knowledge priors (what does a typical pressure reading look like for this type of equipment).

This is a meaningful step beyond static visual reasoning. ER 1.5 could describe what it saw; ER 1.6 can investigate what it sees. The model decides when to look closer. That is agentic.
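To make "proportional estimation" concrete, here is a minimal sketch of the arithmetic involved in reading a needle. The release does not say what code the model actually writes, so this is our illustration only: it assumes a linear dial scale and that the needle angle and the two scale endpoints have already been extracted from the zoomed image. The function name and parameters are hypothetical.

```python
def gauge_reading(needle_deg, start_deg, end_deg, start_value, end_value):
    """Map a needle angle to a value on a dial, assuming a linear scale.

    All angles are measured the same way (for example, clockwise sweep in
    degrees from the first tick mark). Every name here is illustrative.
    """
    sweep = end_deg - start_deg
    if sweep == 0:
        raise ValueError("start_deg and end_deg must differ")
    # Fraction of the way along the scale, clamped to the printed range.
    fraction = (needle_deg - start_deg) / sweep
    fraction = min(max(fraction, 0.0), 1.0)
    return start_value + fraction * (end_value - start_value)


# A dial that sweeps 270 degrees from 0 bar to 10 bar, needle at mid-sweep.
print(gauge_reading(135.0, 0.0, 270.0, 0.0, 10.0))  # 5.0
```

The hard part in practice is the perception step that produces the angles, which is where zooming into the gauge region helps; the interpolation itself is trivial once those are known.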

Spatial reasoning and pointing

Across pointing, counting and spatial-relation tasks, ER 1.6 shows significant improvement over both ER 1.5 and Gemini 3.0 Flash. The tech report cites reduced hallucinations on counting tests: ER 1.6 correctly identifies the number of hammers, scissors and paintbrushes in multi-object scenes where ER 1.5 produced false positives.

Multi-view success detection

ER 1.6 fuses information from multiple camera streams in occluded or dynamic environments. For humanoid and mobile-manipulation platforms that run 4-to-8-camera rigs (forehead, chest, wrist, room cameras), this is the upgrade that lets the reasoning layer decide "the task succeeded" or "the bolt is still loose" without being fooled by a single bad camera angle.
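The intuition behind multi-view fusion can be sketched on the client side. To be clear, this is not how ER 1.6 fuses camera streams internally (the release does not describe that); it is a toy confidence-weighted vote that shows why one occluded or low-confidence view should not decide the outcome alone. The `ViewVerdict` type, the per-view confidence score and the threshold are all our assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ViewVerdict:
    camera: str
    success: Optional[bool]  # None means the view was occluded or inconclusive
    confidence: float        # hypothetical per-view score in [0.0, 1.0]


def fuse_verdicts(verdicts: List[ViewVerdict], threshold: float = 0.5) -> Optional[bool]:
    """Confidence-weighted vote over the views that could see the task."""
    usable = [v for v in verdicts if v.success is not None and v.confidence > 0]
    if not usable:
        return None  # no camera had a usable view, so do not guess
    total = sum(v.confidence for v in usable)
    support = sum(v.confidence for v in usable if v.success)
    return support / total >= threshold


views = [
    ViewVerdict("wrist", False, 0.2),  # bad angle, weak evidence of failure
    ViewVerdict("chest", True, 0.9),   # clear view of the finished task
    ViewVerdict("room", None, 0.0),    # occluded
]
print(fuse_verdicts(views))  # True
```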

Safety — ASIMOV benchmark gains

On Google DeepMind's ASIMOV safety benchmark (named for Asimov's three laws of robotics), ER 1.6 scores +6% text accuracy and +10% video accuracy over Gemini 3.0 Flash at identifying safety hazards in real-world injury-report scenarios. In robotics, this is the benchmark that maps to deployment liability.

Benchmarks — the numbers vs ER 1.5 and Gemini 3.0 Flash

Here is the side-by-side across the metrics Google DeepMind published with the release:

| Benchmark | ER 1.5 | Gemini 3.0 Flash | ER 1.6 | Delta |
|---|---|---|---|---|
| Instrument reading | 23% | 67% | 86% (93% with agentic vision) | +63 to +70 pts vs ER 1.5 |
| Pointing accuracy | baseline | below ER 1.5 | SOTA | significant |
| Success detection (multi-view) | baseline | below ER 1.5 | significantly improved | major |
| Counting (hallucination test) | false-positives | baseline | correct identification | fixed |
| ASIMOV safety (text) | baseline | baseline | +6% | +6 pts vs 3.0 Flash |
| ASIMOV safety (video) | baseline | baseline | +10% | +10 pts vs 3.0 Flash |
| 15 academic embodied-reasoning benchmarks (ERQA, Point-Bench, etc.) | SOTA (Sept 2025) | below ER 1.5 | new SOTA | new frontier |

One honest caveat on the benchmark delta

The tech report notes that ER 1.5 was evaluated without agentic vision because it does not support that capability. That makes a direct "ER 1.5 vs ER 1.6 with agentic vision" comparison imprecise — you are partly measuring the benefit of a new feature, not just the model upgrade. The baseline ER 1.6 number (86% on instrument reading) is the fair apples-to-apples comparison, and 23% to 86% is still a 63-point jump.
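The split between model gain and feature gain is simple arithmetic on the three published instrument-reading scores. A short sketch follows; the variable and function names are ours.

```python
# Published instrument-reading accuracy, in percent.
ER15 = 23
ER16_BASELINE = 86
ER16_AGENTIC = 93


def decompose_gain(old, new_baseline, new_with_feature):
    """Split the headline gain into a model part and a feature part (points)."""
    model_gain = new_baseline - old                  # like-for-like comparison
    feature_gain = new_with_feature - new_baseline   # added by agentic vision
    return model_gain, feature_gain, model_gain + feature_gain


model_gain, feature_gain, headline = decompose_gain(ER15, ER16_BASELINE, ER16_AGENTIC)
print(model_gain, feature_gain, headline)  # 63 7 70
```

So 63 of the 70 headline points come from the like-for-like model comparison, and the remaining 7 come from switching agentic vision on.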

Robotics partners — who is shipping on ER 1.6

Boston Dynamics — Spot and all-electric Atlas

Boston Dynamics is the closest partner on this release. The instrument-reading capability was co-developed with the Boston Dynamics team, specifically around Spot's autonomous facility-inspection use case (reading gauges and meters in industrial plants, then flagging anomalies without human supervisors in the loop). Marco da Silva of Boston Dynamics framed the upgrade: "Capabilities like instrument reading and more reliable task reasoning will enable Spot to see, understand, and react to real-world challenges completely autonomously."

Boston Dynamics also uses ER 1.6 for the all-electric Atlas, the humanoid platform that replaced the hydraulic research Atlas in mid-2024. The AIVI-Learning system now runs on the Gemini Robotics stack.

Apptronik — Apollo humanoid

Apptronik's Apollo humanoid, developed with Mercedes-Benz and backed by Google, is a day-one ER 1.6 platform. DeepMind is positioning ER 1.6 as the reasoning brain and Apptronik supplies the embodiment — warehouse and general-purpose labor workloads.

Agility Robotics — Digit

Agility's Digit bipedal robot, the one already on warehouse floors at Amazon and GXO, runs on the Gemini Robotics stack for task planning and success detection. The Agile ONE humanoid from Agile Robots, a separate company from Agility Robotics, is also in the partner ecosystem.

Who is not on ER 1.6

The notable absences in the partner list are Figure AI (runs its proprietary Helix VLA model on Figure 03), 1X Technologies (NEO, which openly uses human teleoperation today), and Tesla Optimus (Tesla's own stack). This is the strategic map of 2026 humanoid robotics: the Gemini Robotics stack versus Helix versus Tesla's in-house stack versus NVIDIA Isaac GR00T as a cross-platform alternative.

The competitive landscape — Gemini Robotics vs GR00T vs Helix vs Optimus

Physical AI in April 2026 has four credible VLA/reasoning stacks competing for the humanoid brain slot:

| Stack | Vendor | Approach | Partners / robots |
|---|---|---|---|
| Gemini Robotics-ER 1.6 + Robotics 1.5 | Google DeepMind | Reasoning + VLA dual-model, API-first | Boston Dynamics, Apptronik, Agility, Agile Robots |
| NVIDIA Isaac GR00T | NVIDIA | Foundation model + Isaac Sim + Jetson on-device | Apptronik (also), Figure (partial), 1X (partial), Sanctuary |
| Helix | Figure AI | Proprietary VLA, vertical | Figure 01, 02, 03 only |
| Tesla FSD-derived stack | Tesla | Proprietary, leveraging FSD compute | Optimus only |

Google vs NVIDIA — the strategic tension

The most interesting pairing is Google and NVIDIA because several partners (Apptronik, notably) use both stacks. NVIDIA's pitch is the full vertical: GR00T foundation model, Isaac Sim for training, Jetson Thor compute on-device, Omniverse for digital twins. Google's pitch is model quality at the reasoning layer, shipped through the Gemini API with Google AI Studio tooling. For a robotics startup picking a stack in 2026, the call is frequently "GR00T for on-device inference, Gemini Robotics-ER 1.6 for cloud-side reasoning and planning, and keep the door open for either to take more surface area over time."

Google vs Figure

Figure is the antithesis of Google's ecosystem play. Figure trains Helix on internal data from its own humanoid platform and ships a single vertically integrated system. The Figure 03 is ranked #1 overall among 2026 humanoids by most trackers, combining Helix, 48+ degrees of freedom, dexterous palm-camera manipulation, and real-world factory deployments at BMW. Figure is betting that a vertically integrated VLA outperforms a shared foundation model across partners. Google is betting on the opposite.

Use cases — where ER 1.6 actually ships in 2026

Warehouse and logistics

Agility's Digit is the proof point. Bipedal robots picking totes, reading labels, and routing to conveyors. ER 1.6's multi-view success detection is the specific upgrade that matters here — warehouse cameras plus robot-mounted cameras, reasoning across all of them to decide whether the tote was picked correctly.

Industrial inspection

Spot with ER 1.6 doing facility walks: read the pressure gauge, flag the anomaly, log the finding, move to the next station. Oil and gas, chemical plants, utilities, data centers. Instrument reading at 93% is the threshold where humans get pulled off the autonomous portion of inspection routes.

Humanoid general-purpose labor

Apptronik Apollo on Mercedes-Benz factory lines. Agile ONE pilots. The general-purpose humanoid story of 2026 — limited but real deployments in controlled environments, with ER 1.6 as the reasoning layer.

Research and academic embodiment

ER 1.6 on the Gemini API with the Colab notebook published at launch is directly usable for academic robotics research. This is how DeepMind seeds the next wave of embodied-reasoning papers — give the research community a SOTA model behind an API.

How developers integrate ER 1.6

The developer surface is the Gemini API and Google AI Studio. The integration pattern:

- Input: multi-camera video streams plus a natural-language task prompt ("pick up the blue bolt from the toolbox").

- ER 1.6 output: spatial pointing coordinates, a task plan, and a success-detection signal.

- Handoff to VLA: Gemini Robotics 1.5 or a partner's motor-control model consumes the plan and produces joint commands.

- Loop: ER 1.6 re-evaluates success after each action, flags failures, re-plans.
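For the pointing output in the list above, client code usually has to turn the model's coordinates into pixels. The sketch below assumes the response is a JSON list of `{"point": [y, x], "label": ...}` items with coordinates normalized to 0-1000, which is the convention documented for earlier Gemini Robotics-ER releases. We have not confirmed that ER 1.6 keeps it, so check the current prompting guide before relying on this. The function name and the sample response are ours.

```python
import json


def points_to_pixels(response_text, width, height):
    """Convert normalized [y, x] points (assumed 0-1000 scale) to pixel coordinates."""
    results = []
    for item in json.loads(response_text):
        y_norm, x_norm = item["point"]  # assumed order: y first, then x
        x_px = round(x_norm / 1000 * width)
        y_px = round(y_norm / 1000 * height)
        results.append((item.get("label", ""), x_px, y_px))
    return results


# Hand-written sample in the assumed format, not real model output.
sample = '[{"point": [500, 250], "label": "blue bolt"}]'
print(points_to_pixels(sample, width=1280, height=720))  # [('blue bolt', 320, 360)]
```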

The Colab notebook published with the release walks through this end-to-end with prompting examples. If your team built on ER 1.5, the migration is a model-ID swap plus prompt-review for the new agentic-vision capability.
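The input, plan, handoff and loop pattern described in this section can be sketched as a small control loop. This is our illustration, not code from the Colab notebook: `plan`, `execute` and `succeeded` are hypothetical callables standing in for an ER 1.6 planning call, a VLA or partner controller, and an ER 1.6 success-detection call. The stubs below only simulate a grasp that fails once.

```python
from typing import Callable, List, Optional, Tuple

Planner = Callable[[str, Optional[str]], List[str]]


def run_task(
    prompt: str,
    plan: Planner,
    execute: Callable[[str], None],
    succeeded: Callable[[str], bool],
    max_replans: int = 2,
) -> Tuple[bool, List[Tuple[str, str]]]:
    """Plan, act, verify after each step, and re-plan from the failed step."""
    log: List[Tuple[str, str]] = []
    steps = plan(prompt, None)
    replans = 0
    i = 0
    while i < len(steps):
        step = steps[i]
        execute(step)                 # handoff to the motor layer
        if succeeded(step):           # success detection after each action
            log.append((step, "ok"))
            i += 1
            continue
        log.append((step, "failed"))
        if replans == max_replans:
            return False, log
        replans += 1
        steps = plan(prompt, step)    # ask the reasoning layer for a new plan
        i = 0
    return True, log


# Stubs standing in for the real reasoning and motor layers.
attempts = {}


def stub_plan(prompt, failed_step):
    steps = ["locate bolt", "grasp bolt", "lift bolt"]
    return steps if failed_step is None else steps[steps.index(failed_step):]


def stub_execute(step):
    attempts[step] = attempts.get(step, 0) + 1


def stub_succeeded(step):
    # Simulate a grasp that slips on the first attempt only.
    return not (step == "grasp bolt" and attempts[step] == 1)


ok, log = run_task("pick up the blue bolt from the toolbox",
                   stub_plan, stub_execute, stub_succeeded)
print(ok, log)
```

Capping re-plans matters in a real deployment: without a limit, a step that can never succeed would keep the robot retrying indefinitely.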

Our take — why this matters beyond robotics

Two things. First, physical AI just moved from "emerging" to "measurable" — the ASIMOV safety benchmark and the 15 academic embodied-reasoning benchmarks give the field a scoreboard, and ER 1.6 is on top of it in April 2026. Measurability is what makes industries invest. Expect the 2026-2027 capex cycle from automakers, logistics operators and industrial-inspection buyers to accelerate on the back of numbers like 93% instrument reading.

Second, the Google DeepMind distribution story rhymes with what we covered in our analysis of Gemini 3 Deep Think and the Samsung 800M-device offensive. Google is shipping a reasoning model into every form factor — phones via Gemini 3, laptops via Chromebook, robots via Gemini Robotics-ER. The pattern is the same: a frontier model behind an API, default placement across Google's surface area, and a partner ecosystem that ships the embodiments. NVIDIA GR00T is the clearest counter-play on silicon; NVIDIA's GTC 2026 keynote declared agentic AI at an inflection point, and Jensen Huang's robot-focused storyline pairs with Google's model-focused storyline at opposite ends of the physical-AI stack.

Risks and open questions

Safety is still the gating factor

The ASIMOV gains are real, but "+10% video accuracy on safety-hazard identification" does not mean "deployed at scale tomorrow." Real-world industrial deployment requires regulatory sign-off, worker-safety certifications, and insurance coverage that moves slower than benchmark curves.

Cloud latency

ER 1.6 is an API-served model. Round-trip latency from robot to Gemini API to robot is the constraint that limits ER 1.6 to reasoning and planning layers, not real-time motor control. Partners that need fully on-device inference will lean toward NVIDIA GR00T on Jetson Thor or proprietary stacks.
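A back-of-envelope check shows why a cloud round trip cannot sit inside a motor loop. The numbers in the example are illustrative assumptions, not measurements of the Gemini API or of any partner's controller, and the function names are ours.

```python
import math


def control_period_ms(control_hz):
    """Time available per control tick, in milliseconds."""
    return 1000.0 / control_hz


def ticks_per_round_trip(round_trip_ms, control_hz):
    """How many control ticks pass while one API call is in flight."""
    return math.ceil(round_trip_ms / control_period_ms(control_hz))


def fits_in_control_loop(round_trip_ms, control_hz):
    """True only if a full round trip completes within a single tick."""
    return round_trip_ms <= control_period_ms(control_hz)


# Assumed values: a 400 ms round trip against a 50 Hz controller.
print(control_period_ms(50))             # 20.0
print(ticks_per_round_trip(400, 50))     # 20
print(fits_in_control_loop(400, 50))     # False
```

Under those assumed numbers the controller would run 20 ticks blind per call, which is tolerable for planning and success checks that happen between actions but not for joint-level control.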

Benchmark caveats

As noted, ER 1.5 was evaluated without agentic vision. Baseline ER 1.6 at 86% is the fair comparison to ER 1.5 at 23%. That is still a dramatic delta, but readers should not conflate "+70 points with agentic vision" with a pure model delta.

Our verdict — 9.3 out of 10

Gemini Robotics-ER 1.6 is the biggest single-version leap in embodied-reasoning model quality we have documented. Instrument reading from 23% to 93%, SOTA across 15 embodied-reasoning benchmarks, ASIMOV safety gains, and a partner roster of Boston Dynamics, Apptronik and Agility add up to as strong a package as any model launch can ask for. The dual-model split with Gemini Robotics 1.5 as the VLA is the right architecture for a cloud-reasoning model, and agentic vision is a genuinely new capability.

Deductions: cloud-latency constraints keep ER 1.6 out of the real-time motor loop; the agentic-vision benchmark comparison overstates the pure model delta; and the competitive picture against NVIDIA GR00T, Figure Helix and Tesla's in-house stack is still unsettled in April 2026.

Net score: 9.3 out of 10. If you build robots, or if you build the software stack that sits above them, this is the frontier to benchmark against this week.

Read more: our analysis of Gemini 3 Deep Think and Google's 800M Samsung offensive, our coverage of NVIDIA GTC 2026 and the agentic-AI inflection point, and our Google Flow review for the video generation side of Google's AI stack.

Frequently asked questions

When did Gemini Robotics-ER 1.6 launch?

Gemini Robotics-ER 1.6 launched on April 14, 2026. Google DeepMind made it available via the Gemini API and Google AI Studio on day one, with a Colab notebook and prompting guide published alongside the release.

What is the difference between Gemini Robotics-ER and Gemini Robotics?

Gemini Robotics-ER 1.6 is the reasoning model — it handles spatial understanding, task planning, and success detection. Gemini Robotics 1.5 is the vision-language-action (VLA) model — it takes reasoning output and produces motor commands. They are designed to work together as a dual-model architecture. ER 1.6 is the brain; Robotics 1.5 is the motor cortex.

How much better is ER 1.6 than ER 1.5 on instrument reading?

Instrument reading accuracy goes from 23% on ER 1.5 to 86% on ER 1.6 baseline, and up to 93% with agentic vision enabled. That is a 63-point improvement at baseline or a 70-point improvement with the full agentic-vision pipeline. Agentic vision is a new capability in ER 1.6, so the 93% number partly reflects a new feature, not just a model delta.

Which robots run on Gemini Robotics-ER 1.6?

Boston Dynamics uses ER 1.6 on both the Spot quadruped and the all-electric Atlas humanoid. Apptronik runs it on the Apollo humanoid. Agility Robotics uses the Gemini Robotics stack on the Digit bipedal warehouse robot. Agile Robots' Agile ONE humanoid is also in the partner ecosystem.

Does Figure AI use Gemini Robotics-ER 1.6?

No. Figure AI runs its proprietary Helix vision-language-action model on Figure 01, 02, and 03. Helix is Figure's vertically integrated VLA trained on data from its own humanoid platform, distinguishing it from the partner-ecosystem approach of Google DeepMind's Gemini Robotics or NVIDIA Isaac GR00T.

How does Gemini Robotics-ER 1.6 compare to NVIDIA GR00T?

They target different layers of the stack. Gemini Robotics-ER 1.6 is a cloud-served reasoning model accessed via the Gemini API, optimized for high-level spatial reasoning and task planning. NVIDIA Isaac GR00T is a foundation model designed for on-device inference on Jetson Thor compute, with Isaac Sim for training and Omniverse for digital twins. Many partners use both: GR00T for on-device real-time work, ER 1.6 for cloud reasoning.

What is agentic vision in ER 1.6?

Agentic vision is a new capability in Gemini Robotics-ER 1.6 that lets the model autonomously zoom into image regions it flags as critical, execute code for proportional estimation (for example, reading a needle position on a gauge), and integrate world-knowledge priors. It is the difference between static visual reasoning and active investigation. Agentic vision drives the instrument-reading jump from 86% to 93%.

What is the ASIMOV safety benchmark?

ASIMOV is Google DeepMind's safety benchmark for robots, named for Asimov's three laws of robotics. It tests a model's ability to identify safety hazards in real-world injury-report scenarios, evaluated in both text and video modalities. On ASIMOV, Gemini Robotics-ER 1.6 scores +6% text accuracy and +10% video accuracy versus Gemini 3.0 Flash.

Can I use ER 1.6 for real-time motor control?

No. ER 1.6 is a cloud-served model accessed via the Gemini API, which introduces round-trip latency that rules out real-time motor control. Use ER 1.6 for reasoning, spatial understanding, task planning, and success detection. Pair it with a low-latency VLA or motor controller (Gemini Robotics 1.5, NVIDIA Isaac GR00T on Jetson Thor, or a partner's proprietary stack) for real-time joint commands.

Is Gemini Robotics-ER 1.6 state-of-the-art?

Yes, on the benchmarks Google DeepMind published with the release. ER 1.6 achieves state-of-the-art performance across 15 academic embodied-reasoning benchmarks, including Embodied Reasoning Question Answering (ERQA) and Point-Bench. It also outperforms both ER 1.5 and Gemini 3.0 Flash on internal evaluations for pointing, counting, success detection, and instrument reading.

How much does Gemini Robotics-ER 1.6 cost to use?

Gemini Robotics-ER 1.6 is billed through the standard Gemini API. Specific per-token pricing follows the Gemini tier structure published on Google AI for Developers — the model ships inside the same billing surface as other Gemini API models. For most robotics workloads, cost per task is dominated by video input tokens; measure on your workload before generalizing.
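One way to "measure on your workload" is a small estimator for video input cost. Both rates in the example call are placeholders we made up for illustration; they are not Gemini pricing or tokenization figures. Take the real values from the published pricing page and from token counts on your own requests.

```python
def video_input_cost(cameras, seconds, tokens_per_video_second,
                     usd_per_million_input_tokens):
    """Estimate video input tokens and cost for one task.

    Both rates are caller-supplied; this function knows nothing about
    actual Gemini pricing or tokenization.
    """
    tokens = cameras * seconds * tokens_per_video_second
    usd = tokens / 1_000_000 * usd_per_million_input_tokens
    return tokens, usd


# Placeholder rates only: 4 cameras, a 30-second task.
tokens, usd = video_input_cost(
    cameras=4,
    seconds=30,
    tokens_per_video_second=300,        # placeholder, not a published figure
    usd_per_million_input_tokens=1.00,  # placeholder, not a published price
)
print(tokens, round(usd, 4))  # 36000 0.036
```

Because token count scales linearly with camera count and clip length, trimming the rig to the views a task actually needs is the first lever to pull.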

What use cases are ready for production today?

Industrial inspection (Boston Dynamics Spot reading gauges and meters), warehouse and logistics (Agility Digit for tote picking), and limited-scope humanoid general-purpose labor (Apptronik Apollo on Mercedes-Benz lines). Full autonomous general-purpose humanoid deployment is still research-scale in April 2026 — ER 1.6 accelerates the measurement curve, but regulatory and insurance cycles gate real-world rollout.