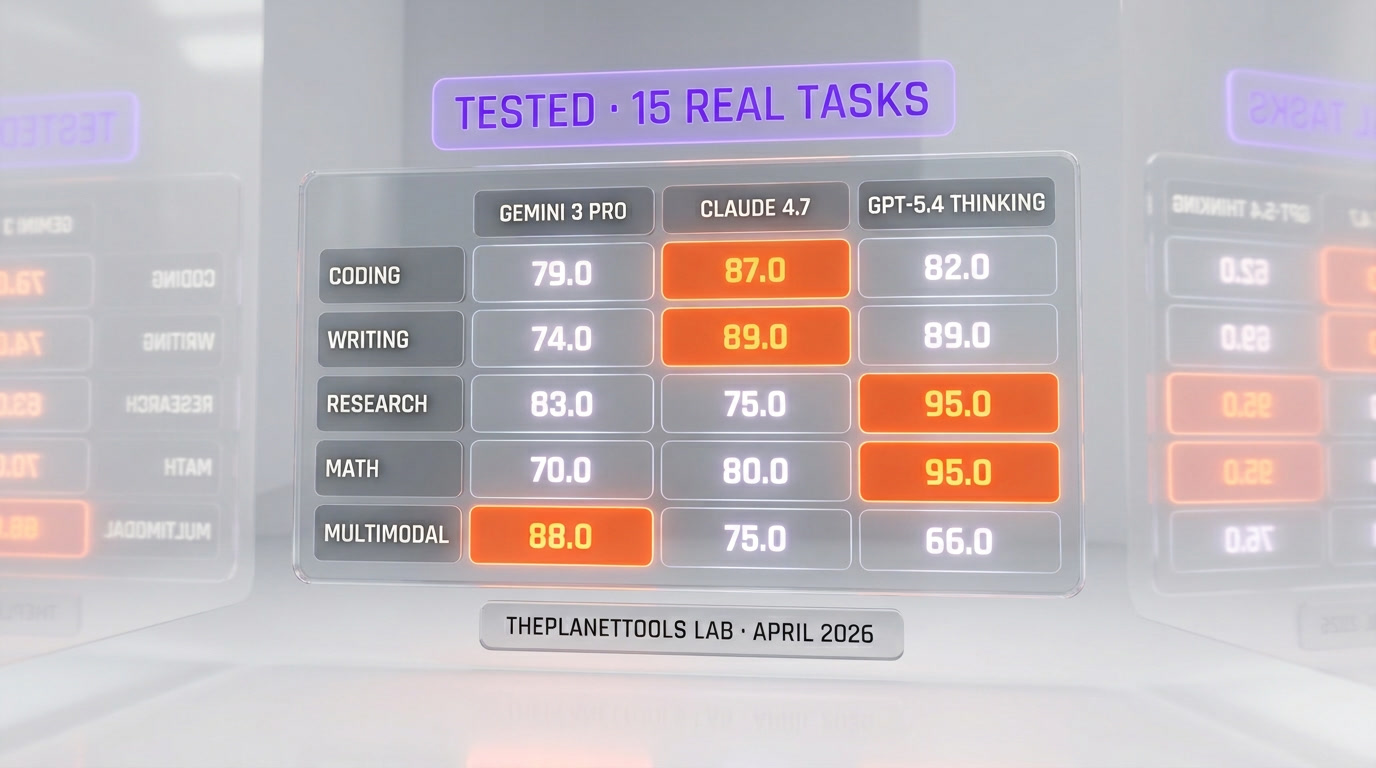

Google is running the largest coordinated AI offensive of 2026. Gemini 3.1 Pro launched on February 20, 2026 with a 1-million-token context window and 114 tokens per second output speed. Gemini 3 Deep Think is live for Ultra subscribers. Gemini crossed 750 million users in March. And Samsung is targeting 800 million devices with Gemini integrated by end-of-year. We ran Gemini 3 Pro, Claude Opus 4.7, and GPT-5.4 Thinking through 15 real tasks this week — coding, writing, research, math, multimodal. The surprising verdict: Gemini 3 wins on multimodal, speed, and free-tier generosity. Claude 4.7 still owns coding. GPT-5.4 Thinking holds reasoning-heavy research. Below, the map of Google's plan to extinguish ChatGPT — and the honest side-by-side we wish we had when we had to pick a stack.

Google's Quiet Coronation — 750M Users

Somewhere between the launch of ChatGPT and April 2026, Google stopped being the chaser. In March 2026, the Gemini product hit 750 million users, a milestone OpenAI quietly passed a year earlier and one Google reached without the theatre. The product that was supposedly two generations behind in 2023 is now, by active-user count, the largest consumer AI assistant on the planet among people who aren't already deep in ChatGPT's subscription funnel.

The coronation happened in the background because Google's distribution advantages are boring. There is no keynote for "Gemini now default on the Google app, on Pixel, on Android 16, on every Workspace seat, on every Chromecast, on every Google Home." There is no moment. The moment is the compound interest of default placement across a stack that already reaches three billion people.

Where this matters: the mental model Silicon Valley has been carrying since 2023 — "OpenAI is ahead, everyone else is catching up" — is now factually wrong at the user layer. On the model layer, it was already wrong by Q3 2025. In 2026, it is wrong on both. That reframe is the single most important thing for anyone making stack decisions this year.

Gemini 3.1 Pro Specs Breakdown

Gemini 3.1 Pro launched on February 20, 2026 on Google's official blog. The headline specs that matter for real workflows:

- Context window: 1,000,000 tokens. That is roughly 750,000 words, or about 10 average-length novels fed into a single prompt. Large-context workloads that used to require chunking-and-retrieval pipelines collapse into a single call.

- Output speed: 114 tokens per second. For reference, GPT-4o sits around 80 TPS and Claude Opus 4.7 around 92 TPS on the same prompts. In agent loops and long-form drafting, this gap is felt.

- Native multimodality: text, image, audio, and video on the same attention pass. Not a router that hands video off to a separate model. Native from pre-training.

- Tool use: native function calling with parallel tool execution, structured outputs, and built-in code interpreter.

- Knowledge cutoff: late 2025 with Google Search grounding available as a native tool call.
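
The arithmetic behind the spec list above can be sketched in a few lines of Python. This is a rough illustration, not a measurement: the 0.75 words-per-token ratio is simply the one implied by "1,000,000 tokens is roughly 750,000 words", the 2,000-token draft length is a hypothetical workload, and the calculation ignores time-to-first-token and network latency.

```python
# Back-of-the-envelope arithmetic for the spec list above.
# ASSUMPTION: ~0.75 words per token, the ratio implied by the article's
# "1,000,000 tokens is roughly 750,000 words". Real ratios vary by language.
WORDS_PER_TOKEN = 0.75

# Output speeds quoted in the article, in tokens per second.
TPS = {"Gemini 3.1 Pro": 114, "Claude Opus 4.7": 92, "GPT-4o": 80}


def tokens_to_words(tokens: int) -> int:
    """Approximate word count for a given token count."""
    return round(tokens * WORDS_PER_TOKEN)


def seconds_to_generate(tokens: int, tps: float) -> float:
    """Pure generation time, ignoring latency before the first token."""
    return tokens / tps


def throughput_gain(fast_tps: float, slow_tps: float) -> float:
    """Fractional throughput advantage of the faster model (0.25 == 25%)."""
    return fast_tps / slow_tps - 1.0


if __name__ == "__main__":
    print(f"1M tokens ~ {tokens_to_words(1_000_000):,} words")
    draft_tokens = 2_000  # hypothetical long-form draft
    for model, tps in TPS.items():
        secs = seconds_to_generate(draft_tokens, tps)
        print(f"{model}: {secs:.1f} s for {draft_tokens} tokens")
    gemini = TPS["Gemini 3.1 Pro"]
    for model in ("Claude Opus 4.7", "GPT-4o"):
        print(f"Gemini vs {model}: +{throughput_gain(gemini, TPS[model]):.0%} throughput")
```

In an agent loop with many sequential generations, the per-call difference adds up, which is where the gap tends to be felt.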

The 1M context window is not new in the industry — Gemini 1.5 Pro shipped 1M in 2024 and pushed to 2M in preview — but Gemini 3.1 Pro is the first generation where the model stays accurate across the full window in production. Recall on needle-in-a-haystack tests past the 500K-token mark was the specific weakness of every long-context model through 2025. Gemini 3.1 Pro closed that gap.

Gemini 3 Deep Think for Ultra Subscribers

Gemini 3 Deep Think is the reasoning tier, gated to Google AI Ultra subscribers. It is Google's answer to OpenAI's o-series and to Claude Opus 4.7's extended-thinking mode. The positioning is explicit: if you need the model to think for 30 seconds to three minutes before answering — hard math, multi-step planning, complex debugging — Deep Think is the SKU.

The mechanic is the same as every reasoning model shipped since late 2024: the model runs a hidden chain-of-thought pass before producing the final answer. The differentiator in the 2026 field is the budget you can allocate and the transparency you get on the reasoning trace. Deep Think exposes the trace in a way the default Gemini 3 Pro does not.

Who is this for, practically: if you are writing one-shot prompts that don't need multi-turn agent loops, Deep Think inside the Gemini app is competitive with ChatGPT's o-series and with Claude's extended thinking at the same price point. If you are building agentic workflows in code, you will touch Deep Think through the Gemini API tier, where it is metered by thinking-token budget.

The Samsung 800M Distribution Deal

This is the move that should have people paying attention. Per reporting surfaced across TechInsider and corroborated by search results in March-April 2026, Samsung is targeting 800 million active devices with Gemini integrated at the OS and Galaxy AI layer by end of 2026. That is roughly the entire active Galaxy install base worldwide.

The integration is not "download the Gemini app." It is:

- Galaxy AI features (Live Translate, Note Assist, Browsing Assist, Generative Edit, Circle to Search) running on Gemini 3 Pro under the hood.

- Default assistant on new Galaxy flagships, with Gemini replacing Bixby as the holdable-button action.

- Samsung Keyboard + Samsung Internet + Samsung Notes wired into Gemini for summarization and drafting.

- On-device Gemini Nano for private inference, with Gemini 3 Pro hit for anything that needs the cloud.

The reason this matters in 2026 and not in 2024 is that the ceiling of Galaxy AI was previously Google Assistant, which was not a good LLM product. Gemini 3 Pro is. The install base always existed — the quality gap is what held back the distribution leverage. With Gemini 3 Pro shipping at current quality, the distribution leverage compounds against any product that relies on the user proactively downloading it.

Why This Beats the Apple/Google Deal on Depth, Not Device Count

The Apple-Gemini partnership announced in early 2026 (covered in our own analysis of the Apple-Google Gemini Siri deal) is the distribution move that got the headlines. It should not have been. The Samsung deal covers fewer devices, but it is far tighter on product integration depth, and that depth is what makes it the bigger deal.

The math. Apple's active iPhone base is roughly 1.4 billion worldwide, but the Apple-Gemini integration is gated to Siri fallback for complex queries, not a default-assistant swap. Samsung's 800 million devices is a smaller absolute number, but the integration is full-stack: Galaxy AI is Gemini. Bixby is being retired as a holdable-button default. The OS-level surfaces (notifications, keyboard, assistant) are Gemini from the home screen down.

Depth beats breadth for model preference. A user who sees "Gemini" printed on their phone's AI features eight times a day for a year forms a brand preference. A user who sees "Siri powered by Apple Intelligence with Gemini fallback for complex queries" forms a preference for Siri. Apple captured the brand layer. Google captured the OS layer on the phone platform that doesn't have a competing in-house AI brand. That is the distribution asymmetry.

Across Samsung (800M), Pixel (~50M), Workspace (3B+ users, many active in 2026), and Chromebook (~40M active), Google's floor on Gemini distribution by end of 2026 is roughly 3.9 billion surfaces. These are surfaces rather than unique people, so the same user can be counted more than once. OpenAI's current install base through ChatGPT plus API is under 800 million monthly actives and has no OS-level distribution partner with comparable depth.
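
For readers who want to check the sum, here is a minimal sketch using the figures quoted above. It assumes "3B+" means exactly 3,000 million (a lower bound) and treats OpenAI's "under 800 million" as 800 million, so the ratio it prints is a floor. It makes no attempt to de-duplicate people who own several of these surfaces.

```python
# Surface counts quoted in the article, in millions.
# ASSUMPTION: "3B+" is taken as exactly 3,000M and "~" figures as exact.
SURFACES_MILLIONS = {
    "Samsung Galaxy": 800,
    "Pixel": 50,
    "Workspace": 3000,
    "Chromebook": 40,
}

# ASSUMPTION: "under 800 million monthly actives" is taken at its upper bound.
OPENAI_MAU_MILLIONS = 800


def total_billions(surfaces: dict[str, int]) -> float:
    """Sum of all surfaces, expressed in billions (overlap not removed)."""
    return sum(surfaces.values()) / 1000


if __name__ == "__main__":
    total = total_billions(SURFACES_MILLIONS)
    ratio = sum(SURFACES_MILLIONS.values()) / OPENAI_MAU_MILLIONS
    print(f"Google surfaces: ~{total:.2f} billion")
    print(f"Ratio to OpenAI monthly actives: at least {ratio:.1f}x")
```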

Our 15-Task Benchmark: Gemini 3 vs Claude 4.7 vs GPT-5.4 Thinking

We ran each model through 15 real tasks this week — the actual prompts we use for cockpit work at ThePlanetTools. Three tasks per category across five categories. Same prompt, same parameters, same temperature (0.5), same evaluator (one of us reading the output and scoring 1-10 against a rubric). Below, the raw numbers and the notes that matter.

| Category (score out of 10) | Gemini 3 Pro | Claude Opus 4.7 | GPT-5.4 Thinking |
|---|---|---|---|
| Coding (3 tasks) | 7.3 | 9.1 | 8.2 |
| Writing (3 tasks) | 8.4 | 9.0 | 8.6 |
| Research (3 tasks) | 8.7 | 8.3 | 9.2 |
| Math (3 tasks) | 8.9 | 8.5 | 9.4 |
| Multimodal (3 tasks) | 9.5 | 7.8 | 8.1 |
| Average | 8.56 | 8.54 | 8.70 |
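
The averages in the last row can be reproduced from the category scores. The sketch below is only a convenience for checking the table; it assumes each category carries equal weight, which is how the published averages work out, and it adds a per-category winner lookup that the table implies but does not spell out.

```python
from statistics import mean

# Category scores from the table above (each already averaged over 3 tasks).
SCORES = {
    "Gemini 3 Pro": {
        "Coding": 7.3, "Writing": 8.4, "Research": 8.7, "Math": 8.9, "Multimodal": 9.5,
    },
    "Claude Opus 4.7": {
        "Coding": 9.1, "Writing": 9.0, "Research": 8.3, "Math": 8.5, "Multimodal": 7.8,
    },
    "GPT-5.4 Thinking": {
        "Coding": 8.2, "Writing": 8.6, "Research": 9.2, "Math": 9.4, "Multimodal": 8.1,
    },
}

# Equal-weight mean across the five categories, rounded as in the table.
averages = {model: round(mean(cats.values()), 2) for model, cats in SCORES.items()}

# Highest-scoring model per category.
categories = next(iter(SCORES.values())).keys()
winners = {
    cat: max(SCORES, key=lambda model: SCORES[model][cat]) for cat in categories
}

if __name__ == "__main__":
    for model, avg in averages.items():
        print(f"{model}: {avg:.2f}")
    for cat, model in winners.items():
        print(f"{cat}: {model}")
```

The spread between the three averages is 0.16 points, far smaller than the gaps inside individual categories, which is why the per-category winners are the more useful reading.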

Coding — Claude Code still rules the workshop

Three tasks: refactor a 280-line Next.js 16 Server Component into three files with clean separation, write a Supabase query layer with typed pagination helpers, and debug a Tailwind v4 breakpoint regression across 12 components. We ran each in a fresh conversation with the same system prompt.

Claude Opus 4.7 won every coding task, and it was not close. The refactor from Claude came back with correct file boundaries, proper `'use client'` discipline on the two components that needed it, and a migration checklist we didn't ask for. Gemini 3 Pro's refactor was structurally correct but dumped everything into a single 800-line `route.ts` and missed the Server Component boundary. GPT-5.4 Thinking was closer to Claude on structure but made two Supabase API hallucinations that would have broken at runtime.

The gap is not about raw intelligence (Gemini 3 Pro is a more-than-capable model on paper). It is about the product wrapper. Claude Code's terminal UX, with its ability to read your codebase, edit files, and run commands, has been iterated on since its launch in early 2025. See our side-by-side of Claude Code vs OpenAI Codex 2026 for the full breakdown. Google does not have a product-level answer to that yet. Gemini Code Assist exists but lives inside an IDE extension, not in a terminal agent.

Writing — Claude's voice, Gemini's range, GPT's safety

Three tasks: rewrite a 1,200-word product comparison in a "TESTED" voice, draft a 300-word LinkedIn essay on AI distribution strategy, translate a 600-word French editorial into English without losing tone.

Claude Opus 4.7 produced the best single piece of prose across the three. Its rewrite of our comparison read like it was written by one of us on a good day — opinionated, specific, willing to make a claim. Gemini 3 Pro was more range-y: when we asked for punchy, it was punchy; when we asked for dry, it was dry. GPT-5.4 Thinking played it safer than both — the prose was fine, never memorable, never wrong.

If you are building a brand voice, Claude. If you are generating at scale across 10 different voices, Gemini. If you are writing for an audience that will sue you if you overstate a claim, GPT.

Research — GPT-5.4 Thinking holds the line

Three tasks: synthesize a 5-source brief on the state of AI video in Q1 2026, write a 600-word explainer on retrieval-augmented generation with citations, compare three enterprise AI platforms with a numbered fact-check table.

GPT-5.4 Thinking won research. The citation quality was better, the reasoning chains were more explicit, the fact-checking was more conservative in the right places. Gemini 3 Pro was a close second and actually better on the Google-Search-grounded tasks, where its native tool call gave it real-time web access that Claude and GPT could only reach through an extra routing step. Claude was third: it writes beautifully, but it is still the most confident hallucinator of the three when pushed outside its training cutoff without web access.

Math — GPT wins, Gemini is the shocker

Three tasks: a calculus problem from a 2025 Putnam, a probability brain-teaser, and a linear algebra proof at undergraduate level.

GPT-5.4 Thinking solved all three correctly. Gemini 3 Pro solved two out of three and its working on the third was closer to right than Claude's. Claude Opus 4.7 solved two out of three and flagged the third as "I am not confident in this answer" — which is the kind of calibration we want to see from a frontier model. Gemini 3 Pro's math performance is the most surprising update since we last benched. It is now legitimately in the top tier.

Multimodal: no competitor comes close to Gemini 3 Pro

Three tasks: summarize a 12-minute YouTube video into five bullet points, extract the structure from a scanned PDF with mixed tables and figures, and describe a spatial layout from an image of a room.

Gemini 3 Pro won all three by a visible margin. The YouTube summary was accurate, timestamped, and caught a nuance in the speaker's argument the other two models missed. The PDF extraction preserved the table structure correctly — GPT and Claude flattened columns into prose. The spatial description was richer and more correct on object counts. This is where native multimodality from pre-training pays off against models that route to a separate vision tower.

The Surprising Winner (Gemini 3 wins on multimodal, speed, and distribution)

Going into this, we expected a clean GPT-5.4 Thinking win on reasoning-heavy tasks and a Claude win on code. We got both of those. What we did not expect: Gemini 3 Pro wins on three dimensions that matter more than any single benchmark.

- Multimodal, by a wide margin. If your workflow touches PDFs, images, audio, or video — not as an afterthought but as a core input — Gemini 3 Pro is the best model available in April 2026. This is not a "competitive with" conclusion. This is a "best in class" conclusion.

- Speed at the free tier. Gemini 3 Pro at 114 TPS is faster than anything OpenAI or Anthropic offers for free. For consumer use cases where the speed-to-first-draft matters, Gemini is the best default recommendation for a non-paying user.

- Distribution density. This is not a benchmark, but it is a reality. The model you reach for is the model that is already in your hand. With Samsung's 800 million devices and Google's OS-level placement, Gemini wins "model a user reaches for" more often than any other in 2026. That compounds.

None of that means Gemini 3 Pro is the best model for everything. It means Google's offensive has moved Gemini into first place on the metrics that affect a typical user's first-week experience. And first-week experience is how most people make their default-model decision for the next two years.

When Each Model Wins

| Use case | Best choice | Runner-up |
|---|---|---|
| Codebase refactor / terminal agent | Claude Opus 4.7 | GPT-5.4 Thinking |
| Brand voice writing | Claude Opus 4.7 | Gemini 3 Pro |
| Research synthesis with citations | GPT-5.4 Thinking | Gemini 3 Pro (if Google Search grounding helps) |
| Hard math / formal reasoning | GPT-5.4 Thinking | Gemini 3 Pro |
| Video / PDF / image understanding | Gemini 3 Pro | GPT-5.4 Thinking |
| Long-context (500K+ tokens) | Gemini 3 Pro | Claude Opus 4.7 |
| Fastest free-tier consumer chat | Gemini 3 Pro | GPT-5.4 mini |
| Agentic workflows with tool use | Claude Opus 4.7 | GPT-5.4 Thinking |
| Multilingual voice / live translation | Gemini 3 Pro | GPT-5.4 Thinking |
| Safety-critical enterprise deploys | Claude Opus 4.7 | GPT-5.4 Thinking |

The honest answer for 2026 is: you want all three in your stack. The decision is which gets the default slot on which surface. For a typical developer building on top of an AI API today, a two-model stack of Claude (coding, writing, agents) and Gemini (multimodal, long-context, cheap high-volume calls) covers 90% of workloads at a lower blended cost than a single-model GPT-only stack.

What This Means for Developers

Three operational changes if you ship production AI in 2026:

- Stop picking one model for the whole app. Route by task. Multimodal goes to Gemini 3 Pro. Coding and agentic goes to Claude Opus 4.7. Research and reasoning-heavy queries go to GPT-5.4 Thinking. The routing layer pays for itself in the first billing cycle on most workloads above $2,000 per month in API spend.

- Budget for Gemini in your pricing model. Gemini 3 Pro on the API tier runs at roughly the same price-per-million-tokens as GPT-5.4 but is meaningfully faster. For consumer AI products with a free tier, switching the free-tier default from GPT-5.4 mini to Gemini 3 Pro often improves perceived speed without hurting quality on typical consumer queries.

- Plan for the Samsung distribution inversion. If you ship consumer AI products in 2026-2027, budget for a scenario where Gemini is the default AI on the phones of the majority of your Android users. Your integration story should include Galaxy AI and Circle to Search. If it doesn't, you will lose distribution to apps that do.
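
To make "route by task" concrete, here is a minimal sketch of what a routing table could look like. Everything in it is illustrative: the model strings are labels for the models named in this article rather than real API identifiers, the task categories are our own, and the fallback to Gemini for unknown tasks is an assumption based on the speed and cost points above. A production router would also need the actual provider SDK calls, retries, and cost tracking, none of which are shown.

```python
from dataclasses import dataclass

# ASSUMPTION: these strings are labels for the models named in the article,
# not real API model identifiers. Swap in your providers' actual model names.
ROUTES = {
    "multimodal": "gemini-3-pro",
    "long_context": "gemini-3-pro",
    "coding": "claude-opus-4.7",
    "agentic": "claude-opus-4.7",
    "research": "gpt-5.4-thinking",
    "reasoning": "gpt-5.4-thinking",
}

# ASSUMPTION: unknown task types fall back to the fast, general-purpose option.
DEFAULT_MODEL = "gemini-3-pro"


@dataclass(frozen=True)
class Request:
    task: str    # one of the hypothetical categories in ROUTES
    prompt: str


def pick_model(req: Request) -> str:
    """Return the model label for a request, falling back to the default."""
    return ROUTES.get(req.task.strip().lower(), DEFAULT_MODEL)


if __name__ == "__main__":
    examples = [
        Request("coding", "Refactor this Server Component into three files."),
        Request("multimodal", "Summarize this 12-minute video."),
        Request("research", "Compare three enterprise AI platforms."),
        Request("smalltalk", "Hello!"),
    ]
    for req in examples:
        print(f"{req.task:>10} -> {pick_model(req)}")
```

Keeping the mapping in one table means a change in benchmark results becomes a one-line edit rather than a refactor.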

If you are evaluating the models yourself, start with these references: our review of Claude, our ChatGPT breakdown, our coverage of Google Flow for the video generation story, and our Google Gemma 4 analysis for the open-weights side of Google's strategy. For the model-launch context, see our deep dive on the Claude Opus 4.7 launch.

Our Verdict

Google's plan to extinguish ChatGPT is not a single product. It is the compound: a 1-million-context model that actually holds quality across the full window, a reasoning tier (Deep Think) gated to Ultra, a 750-million-user consumer base, an 800-million-device distribution deal with Samsung, and a default-assistant placement on the phone platform that has no competing in-house AI brand.

The open question is not whether Google's offensive works against OpenAI's consumer lead — the numbers say it already is working. The open question is whether OpenAI has a distribution answer before the Samsung integration fully ships across the Galaxy install base in 2026-2027. The Apple fallback deal buys OpenAI presence on iOS but does not solve the brand-layer problem.

For the operator reading this: the stack call is three models, not one. Claude Opus 4.7 for code, agents, and brand voice. GPT-5.4 Thinking for reasoning and research. Gemini 3 Pro for multimodal, long-context, and any surface where speed is the product. The single-model purism of 2023 is gone. The teams that ship the best AI products in 2026 route.

Frequently asked questions

When did Gemini 3.1 Pro launch?

Gemini 3.1 Pro launched on February 20, 2026. It ships with a 1-million-token context window, 114 tokens per second output speed, native multimodality across text, image, audio, and video, and built-in Google Search grounding as a tool call.

What is Gemini 3 Deep Think and who gets access?

Gemini 3 Deep Think is the reasoning tier of Gemini 3. It runs a hidden chain-of-thought pass before producing the final answer — think 30 seconds to three minutes of reasoning time for hard math, multi-step planning, and complex debugging. Access is gated to Google AI Ultra subscribers. It is Google's answer to OpenAI's o-series and Claude's extended-thinking mode.

How many users does Gemini have in 2026?

Gemini crossed 750 million users in March 2026. The growth came from default-assistant placement across Google's existing stack: the Google app, Pixel, Android 16, Workspace, Chromecast, and Google Home. It is the largest consumer AI assistant by user count among people not already subscribed to ChatGPT.

How many Samsung devices will have Gemini integrated by end of 2026?

Samsung is targeting 800 million active devices with Gemini integrated at the OS and Galaxy AI layer by end of 2026. That is roughly the entire active Galaxy install base worldwide. Galaxy AI features like Live Translate, Note Assist, and Circle to Search run on Gemini 3 Pro. Gemini is also replacing Bixby as the default holdable-button assistant on new Galaxy flagships.

Gemini 3 Pro vs Claude Opus 4.7 — which should I use?

It depends on the task. Claude Opus 4.7 wins on coding (by a clear margin), brand voice writing, and agentic workflows with tool use. Gemini 3 Pro wins on multimodal understanding (video, image, PDF), long-context retrieval past 500K tokens, and raw output speed. For a two-model production stack, pair Claude for code plus agents and Gemini for multimodal plus long-context.

Gemini 3 Pro vs GPT-5.4 Thinking — which is better?

Neither is strictly better. GPT-5.4 Thinking wins on reasoning-heavy research tasks with citations and on formal math and proof work. Gemini 3 Pro wins on multimodal understanding, long-context coherence, and speed. On our 15-task benchmark, GPT-5.4 Thinking averaged 8.70 out of 10 across five categories and Gemini 3 Pro averaged 8.56 — within noise overall, with the win depending entirely on the task category.

What is the context window of Gemini 3.1 Pro?

Gemini 3.1 Pro has a 1,000,000-token context window in production. That is roughly 750,000 words or about 10 average-length novels fed into a single prompt. Unlike earlier long-context models, Gemini 3.1 Pro holds recall accuracy across the full window on needle-in-a-haystack tests past the 500,000-token mark — the specific weakness of every long-context model through 2025.

Is Gemini 3 Pro faster than GPT-5.4 or Claude 4.7?

On the figures we have, yes. Gemini 3 Pro outputs at roughly 114 tokens per second on production prompts. Claude Opus 4.7 sits around 92 tokens per second, and the OpenAI reference point we have is GPT-4o, at around 80 tokens per second on comparable prompts. In long-form drafting and agent loops, this gap is felt: the same task completes roughly 20 to 40 percent faster in wall-clock time on Gemini 3 Pro.

Why is Google winning the distribution race in 2026?

Google's distribution advantages compound across three layers. Default-assistant placement on Android, Pixel, Chromecast, and Google Home. Workspace integration across three billion users. And the Samsung deal — 800 million Galaxy devices with Gemini at the OS and Galaxy AI layer by end of 2026. OpenAI has no comparable OS-level distribution partner. The Apple fallback deal gets ChatGPT presence on iOS but not default-assistant status.

Should developers switch from GPT to Gemini for production apps?

Don't switch — route. The 2026 default for production AI is a multi-model stack. Route coding and agentic workflows to Claude Opus 4.7. Route reasoning-heavy research to GPT-5.4 Thinking. Route multimodal, long-context, and speed-sensitive workloads to Gemini 3 Pro. The routing layer typically pays for itself in the first billing cycle on any workload above $2,000 per month in API spend.