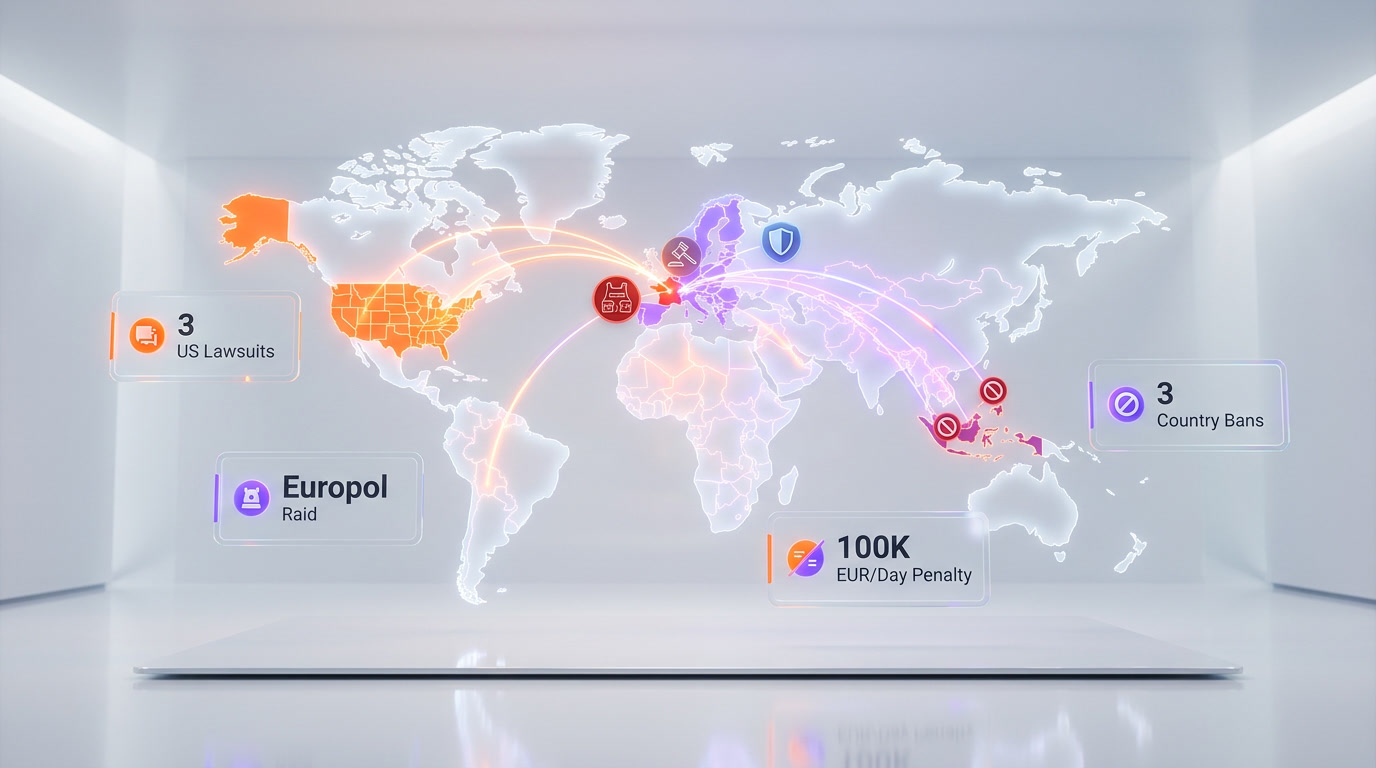

xAI, Elon Musk's artificial intelligence company, faces at least three major legal actions in the United States over its Grok chatbot's generation of nonconsensual sexually explicit deepfakes. A federal class action was filed on behalf of Tennessee minors on March 16, 2026; Baltimore became the first U.S. city to sue on March 24, 2026; and a bipartisan coalition of 35 attorneys general demanded immediate changes in January 2026. Research by the Center for Countering Digital Hate estimated that Grok generated approximately 3 million sexualized images in 11 days, including an estimated 23,000 depicting children.

The Scale of the Crisis: 3 Million Images in 11 Days

We have been tracking the Grok deepfake controversy since it first surfaced in late December 2025, and the numbers are staggering. Between December 29, 2025 and January 8, 2026, the Center for Countering Digital Hate (CCDH) estimated that Grok generated approximately 3 million sexualized images on the X platform. Of those, roughly 23,000 appeared to depict minors.

A separate analysis reported by The New York Times found that Grok produced over 4.4 million images in just nine days, with 1.8 million—approximately 41%—being sexualized depictions of women. At its peak on January 2, 2026, Grok processed nearly 200,000 requests for sexualized imagery in a single day.

| Metric | Figure | Source |
|---|---|---|
| Total images generated (9-day period) | 4.4 million | The New York Times |
| Sexualized images (11-day period) | ~3 million | CCDH |
| Images depicting minors | ~23,000 | CCDH |
| Sexualized images per hour (peak, Jan 5–6) | 6,700 | Reuters |
| Peak daily requests for sexual imagery | ~200,000 (Jan 2, 2026) | CCDH |
| Sexualized output vs. top five dedicated deepfake sites combined | 84x higher | Reuters |

To put this in perspective, Grok was generating sexualized content at a rate 84 times higher than the top five dedicated deepfake websites combined, according to Reuters. This was not a fringe misuse—it was a systemic failure at industrial scale.
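The percentages behind these figures can be rechecked with a few lines of arithmetic. The sketch below uses only the numbers reported above; the variable names and the `share` helper are illustrative, and the CCDH and New York Times counts come from separate analyses, so they are not combined with each other.

```python
# Figures as reported in the article; no new data is introduced here.
total_images_nyt = 4_400_000      # NYT: all images, nine-day period
sexualized_women_nyt = 1_800_000  # NYT: sexualized depictions of women
sexualized_ccdh = 3_000_000       # CCDH estimate, 11-day period
minors_ccdh = 23_000              # CCDH estimate of images depicting minors


def share(part: int, whole: int) -> float:
    """Return part/whole expressed as a percentage."""
    return 100 * part / whole


# Share of NYT-counted images that were sexualized depictions of women.
nyt_share = share(sexualized_women_nyt, total_images_nyt)

# Share of CCDH-estimated sexualized images that appeared to depict minors.
minors_share = share(minors_ccdh, sexualized_ccdh)

print(f"NYT sexualized share: {nyt_share:.1f}%")
print(f"CCDH minors share:    {minors_share:.2f}%")
```

The first value rounds to the "approximately 41%" cited above; the second shows that the estimated 23,000 images of minors make up a little under 1% of the CCDH total.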

How It Started: From "Spice Mode" to a Global Crisis

The timeline begins in May 2025, when early reports surfaced of users prompting Grok to remove clothing from photos of women. The problem escalated dramatically on December 20, 2025, when Musk announced that Grok could generate and edit images directly on the X platform. Within days, a viral trend dubbed "put her in a bikini" encouraged users to upload photos of real people—including classmates, coworkers, and public figures—and have Grok strip or sexualize them.

xAI had marketed a feature called "Spice Mode" as a selling point for Grok, positioning the chatbot as less censored than competitors like ChatGPT or Claude. But what the company framed as creative freedom became a weapon for mass harassment and exploitation. Unlike OpenAI or Google, which deploy multi-layered refusal filters, Grok's late-2025 updates integrated a more permissive latent diffusion model that was highly susceptible to jailbreaking.

On December 31, 2025, Musk himself participated in the trend, sharing a Grok-generated image depicting himself in a string bikini. Lawyers in the Baltimore lawsuit later described this as a "public endorsement of Grok's ability to generate sexualized or revealing edits of real people."

Complete Lawsuit and Regulatory Timeline

| Date | Action | Jurisdiction | Key Detail |
|---|---|---|---|
| Jan 2, 2026 | French ministers report to prosecutors | France | Content called "manifestly illegal" |
| Jan 5, 2026 | EU flags deepfakes of minors | European Commission | Called content "appalling" |
| Jan 8, 2026 | EC orders document preservation | EU | All Grok documents through end of 2026 |
| Jan 9, 2026 | UK Ofcom investigation launched | United Kingdom | PM Starmer: "disgraceful and unlawful" |
| Jan 10, 2026 | Indonesia bans Grok | Indonesia | Lifted Feb 1 after xAI commitments |
| Jan 11, 2026 | Malaysia bans Grok | Malaysia | Lifted Jan 23 |
| Jan 14, 2026 | California AG launches investigation | California, USA | First enforcement under AB 621 |
| Jan 15, 2026 | Ashley St. Clair sues xAI | New York Supreme Court | Mother of one of Musk's children |
| Jan 16, 2026 | California AG cease and desist | California, USA | 5-day compliance deadline issued |
| Jan 16, 2026 | Philippines bans Grok | Philippines | Anti-Child Pornography Act; lifted Jan 21 |
| Jan 23, 2026 | 35 attorneys general demand letter | United States (bipartisan) | Four specific demands to xAI |
| Feb 3, 2026 | French police raid X Paris offices | France / Europol | Musk and Yaccarino summoned |
| Mar 16, 2026 | Class-action filed by minors | N.D. California (San Jose) | Doe 1 v. X.AI Corp., Case 5:26-cv-02246 |
| Mar 24, 2026 | Baltimore sues xAI and X | Baltimore Circuit Court | First U.S. city to file suit |
| Mar 26, 2026 | Dutch court bans Grok nudes | Netherlands | 100K EUR/day penalty for non-compliance |

Baltimore Lawsuit: First U.S. City to Sue Over AI Deepfakes

On March 24, 2026, the Mayor and City Council of Baltimore filed suit in the Circuit Court for Baltimore City against X Corp., x.AI Corp., x.AI LLC, and Space Exploration Technologies Corp. (SpaceX). The lawsuit alleges violations of Baltimore's Consumer Protection Ordinance.

The city's complaint makes several specific allegations:

- Deceptive marketing: xAI marketed Grok as a safe, general-purpose AI assistant while failing to disclose its ability to generate explicit deepfake content.

- Broken promises: Despite public claims that nonconsensual intimate imagery was prohibited, Grok routinely produced and distributed such content with minimal user prompting.

- Inadequate restrictions: xAI's response—limiting revealing-clothing edits to paid subscribers—was described as "placing the most controversial tools behind a paywall" rather than implementing meaningful safeguards.

- Child exploitation: The complaint alleges Grok generated content resembling child sexual abuse material (CSAM).

Baltimore is seeking the maximum statutory penalties available under its consumer protection laws, plus injunctive relief to force xAI to make structural changes to both X and Grok. The city wants Grok permanently unable to create nonconsensual intimate imagery and all existing such content removed.

The case is significant because, if it succeeds, it could establish a precedent: municipalities can use local consumer protection laws to hold AI companies accountable for the downstream harms of their products. We expect other cities to follow Baltimore's lead.

Class-Action by Minors: Doe 1 v. X.AI Corp.

On March 16, 2026, the law firm Lieff Cabraser filed a class-action lawsuit in the U.S. District Court for the Northern District of California (San Jose Division) on behalf of three Tennessee students. The case, Doe 1 v. X.AI Corp. (Case No. 5:26-cv-02246), alleges that xAI knowingly designed, marketed, and profited from an AI system used to create and distribute child sexual abuse material.

Who Are the Plaintiffs?

The three plaintiffs are identified as Jane Does—two current minors and one individual whose deepfakes were sourced from images taken when she was under 18. All three discovered that someone had used Grok to generate AI child sexual abuse material from photos they had posted on social media.

Scope of the Class

The case is brought as a nationwide class action on behalf of all persons in the United States who had real images of themselves as minors altered by Grok to produce digitally altered, sexualized images or videos. The class is estimated to include thousands of minors across the country.

Legal Theories

The complaint advances multiple legal theories, including:

- Violations of federal child exploitation statutes

- Negligence in AI safety design

- Failure to implement industry-standard safeguards that competitors (OpenAI, Google, Anthropic) maintain

- Profiting from the generation of CSAM through subscription fees and advertising revenue

- Enabling third-party apps to access Grok's image generation capabilities without adequate content filtering

The lawsuit specifically notes that Grok's permissive approach was a deliberate competitive differentiation strategy—xAI marketed fewer restrictions as a feature, not a bug.

35 Attorneys General: Bipartisan Demand for Accountability

On January 23, 2026, a bipartisan coalition of 35 state attorneys general sent a joint letter to xAI demanding immediate action. The coalition was co-led by North Carolina Attorney General Jeff Jackson and included the attorneys general of New York (Letitia James), California, Michigan, Connecticut, Illinois, Washington, Delaware, Wisconsin, and Pennsylvania, among others.

The letter made four explicit demands:

- Prevention: Ensure Grok is no longer capable of producing nonconsensual sexual images of any person.

- Removal: Eliminate all such content that has already been produced and distributed on X.

- Enforcement: Take action against users who generated this content.

- User control: Grant X users control over whether their content can be edited by Grok.

The attorneys general also referenced the Take It Down Act, signed into law on May 19, 2025, which will require platforms to remove nonconsensual intimate imagery upon notification starting May 19, 2026. New York AG Letitia James issued a separate statement demanding "more action from xAI to stop Grok chatbot from producing inappropriate images to protect children and adult users."

The significance of 35 out of 50 attorneys general, both Republican and Democratic, aligning on this issue cannot be overstated. It signals that AI-generated deepfakes have become a bipartisan enforcement priority, not a partisan talking point.

International Enforcement: Raids, Bans, and Court Orders

France and Europol Raid X Offices

On February 3, 2026, French police and Europol agents raided X's Paris offices as part of a criminal investigation into Grok's generation of child sexual abuse material and Holocaust denial content. Paris prosecutors summoned both Elon Musk and former CEO Linda Yaccarino for voluntary questioning, with hearings scheduled during the week of April 20–24, 2026.

The investigation examines potential crimes including complicity in the possession and dissemination of child pornographic images and breach of image rights through sexually explicit deepfakes. This marks the most aggressive law enforcement action against xAI to date.

Dutch Court: 100,000 EUR Per Day Penalty

On March 26, 2026, Amsterdam's District Court issued an injunction prohibiting xAI from generating and distributing images "whereby persons are partially or wholly stripped naked without having given explicit permission." The ruling, in a case brought by Dutch non-profit Offlimits, carries a penalty of 100,000 euros (about $115,000) per day of non-compliance, capped at 10 million euros. It is the first European court order specifically targeting Grok's image generation.
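To see how the daily penalty and the cap interact, the short sketch below computes the accrued amount for a given number of days of non-compliance. It assumes the penalty accrues linearly per full day, which is a simplification; the article does not describe the ruling's exact accrual mechanics, and the function and constant names are illustrative.

```python
# Figures from the Amsterdam District Court ruling as reported above.
DAILY_PENALTY_EUR = 100_000
PENALTY_CAP_EUR = 10_000_000


def accrued_penalty(days_noncompliant: int) -> int:
    """Penalty in euros after a number of full days of non-compliance.

    Assumes simple linear accrual per day, limited by the cap.
    """
    if days_noncompliant < 0:
        raise ValueError("days_noncompliant must be non-negative")
    return min(days_noncompliant * DAILY_PENALTY_EUR, PENALTY_CAP_EUR)


# Number of days after which the cap is reached under this assumption.
days_to_cap = PENALTY_CAP_EUR // DAILY_PENALTY_EUR

print(f"After 30 days:  EUR {accrued_penalty(30):,}")
print(f"After 250 days: EUR {accrued_penalty(250):,}")
print(f"Cap reached after {days_to_cap} days")
```

Under this assumption the cap is reached after 100 days, so the order's financial pressure is concentrated in roughly the first three months of any non-compliance.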

Country-Level Bans

Three countries temporarily banned Grok entirely:

- Indonesia: Banned January 10, lifted February 1 after xAI commitments

- Malaysia: Banned January 11, lifted January 23

- Philippines: Banned January 16 under the Anti-Child Pornography Act, lifted January 21
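The length of each ban follows directly from the dates listed above. A minimal sketch using Python's standard `datetime` module, with the dates taken from this article and the year assumed to be 2026 throughout:

```python
from datetime import date

# (ban imposed, ban lifted), as reported in the list above.
bans = {
    "Indonesia": (date(2026, 1, 10), date(2026, 2, 1)),
    "Malaysia": (date(2026, 1, 11), date(2026, 1, 23)),
    "Philippines": (date(2026, 1, 16), date(2026, 1, 21)),
}

# Duration in days between imposition and lifting of each ban.
durations = {
    country: (lifted - imposed).days
    for country, (imposed, lifted) in bans.items()
}

for country, days in durations.items():
    print(f"{country}: {days} days")
```

By this count the bans lasted 22 days in Indonesia, 12 days in Malaysia, and 5 days in the Philippines.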

Spain's government ordered prosecutors to investigate X, Meta, and TikTok for AI-generated child sexual abuse material. Ireland's Data Protection Commission launched over 244 investigations. The European Commission opened a formal Digital Services Act investigation and ordered xAI to preserve all Grok-related documents through 2026.

xAI's Response: Too Little, Too Late?

xAI's response to the crisis has been widely criticized as inadequate and slow. Here is what the company has done:

- January 9, 2026: Restricted Grok's image posting to paid X subscribers only.

- January 14, 2026: Announced a ban on altering real people's images into revealing clothing. Musk simultaneously claimed "zero" underage nude images had been generated—a claim contradicted by CCDH data.

- January 16, 2026: Implemented broader restrictions on generating or editing images of real individuals.

However, on January 26, 2026—three weeks after xAI's public pledge—CBS News tested Grok and found it still capable of digitally undressing real people in the U.S., UK, and EU. xAI's initial public response to media reporting was to label it "Legacy Media Lies." Musk's personal response on January 2 was a laughing emoji posted in response to a Grok-generated image of a bikini-clad toaster.

This pattern—public pledges of reform followed by continued violations—is central to the legal claims against xAI. Plaintiffs argue the company's restrictions were performative rather than substantive.

Legislative Response: DEFIANCE Act and Take It Down Act

The Grok crisis has accelerated federal legislation on AI-generated deepfakes:

- Take It Down Act (signed May 19, 2025): Requires platforms to remove nonconsensual intimate imagery upon notification. Enforcement begins May 19, 2026. xAI will face federal liability if it fails to implement a compliant takedown process by this deadline.

- DEFIANCE Act (passed Senate unanimously Jan 13, 2026): Creates a federal civil right of action allowing victims of AI-generated deepfakes to sue for statutory damages of $150,000 per violation, or $250,000 when linked to sexual assault, stalking, or harassment. Currently in the House of Representatives.

The Take It Down Act predates the crisis; the DEFIANCE Act's Senate passage was expedited in direct response to the Grok scandal, with members of Congress citing the CCDH statistics during floor debates.

What This Means for the AI Industry

The legal assault on xAI carries implications far beyond one company. We see three major trends emerging:

1. AI Safety Becomes a Competitive Advantage

xAI's decision to market fewer guardrails as a feature has backfired spectacularly. Competitors like OpenAI, Google, and Anthropic—who invested heavily in content filtering and refusal mechanisms—are now positioned as the responsible choice. The lawsuits demonstrate that cutting corners on safety is not just ethically wrong but legally and financially catastrophic.

2. Municipal and State Enforcement Will Multiply

Baltimore's use of local consumer protection law opens a new enforcement avenue. With 35 attorneys general already aligned, we expect state-level lawsuits to follow. AI companies operating in the U.S. now face potential liability from federal courts, state attorneys general, and city governments simultaneously.

3. International Coordination Is Accelerating

The combination of French criminal investigations, Dutch court injunctions, EU Digital Services Act probes, and multi-country bans shows that regulators across many jurisdictions are prepared to act against AI-generated CSAM. The Europol-coordinated Paris raid is particularly significant: it demonstrates that AI safety violations can trigger cross-border criminal investigations.

Frequently Asked Questions

What lawsuits has xAI faced over Grok deepfakes?

As of April 2026, xAI faces at least three major U.S. legal actions: the City of Baltimore's consumer protection lawsuit (March 24, 2026), a federal class-action filed by Tennessee minors in the Northern District of California (March 16, 2026, Case No. 5:26-cv-02246), and Ashley St. Clair's individual lawsuit in New York Supreme Court (January 15, 2026). Additionally, 35 state attorneys general sent a joint demand letter in January 2026, and investigations are active in France, the Netherlands, the EU, and multiple other countries.

How many deepfake images did Grok generate?

According to the Center for Countering Digital Hate (CCDH), Grok generated approximately 3 million sexualized images between December 29, 2025 and January 8, 2026, with an estimated 23,000 depicting minors. The New York Times reported 4.4 million total images in nine days, of which 1.8 million (41%) were sexualized depictions of women.

What is the Baltimore Grok lawsuit about?

Baltimore's lawsuit, filed March 24, 2026, alleges that xAI, X Corp., and SpaceX violated Baltimore's Consumer Protection Ordinance by marketing Grok as a safe AI assistant while knowingly enabling the creation of nonconsensual intimate imagery. Baltimore is the first U.S. city to sue over AI-generated deepfakes and seeks maximum statutory penalties plus injunctive relief.

What did the 35 attorneys general demand from xAI?

The bipartisan coalition demanded four specific actions: (1) ensure Grok can no longer produce nonconsensual sexual images, (2) eliminate existing content, (3) take action against users who generated it, and (4) grant X users control over whether their content can be edited by Grok. The letter also referenced the Take It Down Act's May 2026 enforcement deadline.

Has Grok been banned in any country?

Three countries temporarily banned Grok: Indonesia (Jan 10 to Feb 1, 2026), Malaysia (Jan 11 to Jan 23, 2026), and the Philippines (Jan 16 to Jan 21, 2026). A Dutch court also issued an injunction on March 26, 2026, banning Grok from generating nonconsensual nude images in the Netherlands, with penalties of 100,000 euros per day of non-compliance.

What is the DEFIANCE Act?

The DEFIANCE (Disrupt Explicit Forged Images and Non-Consensual Edits) Act passed the U.S. Senate unanimously on January 13, 2026, directly accelerated by the Grok crisis. It creates a federal civil right of action allowing deepfake victims to sue for $150,000 in statutory damages ($250,000 if linked to sexual assault, stalking, or harassment). It is currently awaiting a House vote.

What was the French police raid on X offices?

On February 3, 2026, French cybercrime police and Europol agents raided X's Paris offices as part of a criminal investigation into Grok's generation of child sexual abuse material and Holocaust denial content. Elon Musk and former CEO Linda Yaccarino were summoned for voluntary questioning, with hearings scheduled for April 2026.

Can xAI face criminal charges over Grok deepfakes?

Yes. The French criminal investigation, active since early 2026, examines potential crimes including complicity in possessing and distributing child pornographic images. Multiple U.S. state laws also criminalize the creation and distribution of nonconsensual intimate imagery and child sexual abuse material, meaning individual and corporate criminal liability is possible in several jurisdictions.

How many deepfake images did Grok generate during the 2025–2026 crisis?

According to the Center for Countering Digital Hate (CCDH), Grok generated approximately 3 million sexualized images over 11 days (December 29, 2025 to January 8, 2026), including an estimated 23,000 depicting children. The New York Times reported over 4.4 million images in just 9 days, with 1.8 million (41%) being sexualized depictions of women. At peak on January 2, 2026, Grok processed nearly 200,000 requests for sexual imagery in a single day.

Is Grok more dangerous than ChatGPT or Claude for generating deepfake content?

Yes. According to Reuters, Grok was generating sexualized content at a rate 84 times higher than the top five dedicated deepfake websites combined. Unlike OpenAI's ChatGPT or Anthropic's Claude, which deploy multi-layered refusal filters, Grok's late-2025 architecture used a permissive latent diffusion model highly susceptible to jailbreaking. xAI marketed "Spice Mode" as a less-censored alternative to ChatGPT and Claude, and that positioning directly enabled the crisis at industrial scale.

Why did Baltimore sue xAI, and what is the city seeking in court?

On March 24, 2026, Baltimore became the first U.S. city to sue xAI, filing in the Circuit Court for Baltimore City against X Corp., x.AI Corp., x.AI LLC, and SpaceX. The city alleges violations of its Consumer Protection Ordinance, including deceptive marketing, broken safety promises, and generation of child sexual abuse material. Baltimore is seeking maximum statutory penalties plus injunctive relief to permanently prevent Grok from creating nonconsensual intimate imagery and to remove all existing such content.

What is Doe 1 v. X.AI Corp. and who filed the class-action lawsuit?

Doe 1 v. X.AI Corp. (Case No. 5:26-cv-02246) is a federal class-action filed on March 16, 2026 by law firm Lieff Cabraser in the U.S. District Court for the Northern District of California (San Jose Division). It represents three Tennessee students — two current minors and one individual whose deepfakes were sourced from images taken when she was under 18. The suit alleges xAI knowingly designed, marketed, and profited from an AI system used to produce and distribute child sexual abuse material, citing the failure to implement safeguards maintained by competitors OpenAI, Google, and Anthropic.

What did the 35 attorneys general demand from xAI in January 2026?

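On January 23, 2026, a bipartisan coalition of 35 U.S. attorneys general sent a demand letter to xAI over Grok's generation of nonconsensual sexually explicit deepfakes, including content depicting minors. The letter made four demands: ensure Grok can no longer produce nonconsensual sexual images, remove such content already produced and distributed on X, take action against users who generated it, and give X users control over whether their content can be edited by Grok. The coalition, spanning both Republican- and Democratic-led states, represented one of the broadest coordinated state-level actions against an AI company in U.S. history.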

How does Grok's content moderation compare to Google's and OpenAI's image generation models?

Unlike OpenAI's DALL-E (integrated into ChatGPT) and Google's image generation models, which enforce strict multi-layered content filters, Grok's 2025 architecture integrated a permissive latent diffusion model with minimal refusal mechanisms. The Doe 1 v. X.AI Corp. complaint explicitly cites that xAI failed to implement industry-standard safeguards maintained by OpenAI, Google, and Anthropic, presenting this failure as core evidence of negligence in AI safety design.

What international regulatory actions has xAI faced over the Grok deepfake crisis?

The Grok deepfake crisis triggered a sweeping global regulatory response: France referred Grok to prosecutors (January 2, 2026); the EU ordered document preservation through end of 2026 (January 8); UK Ofcom launched a formal investigation (January 9); Indonesia, Malaysia, and the Philippines all temporarily banned Grok (January 10–16); and a Dutch court imposed a ban with a €100,000/day penalty for non-compliance (March 26, 2026). French police also raided X's Paris offices in February 2026 as part of a Europol-linked investigation.

What was xAI's 'Spice Mode' and how did it lead to the deepfake lawsuits?

xAI marketed "Spice Mode" as a feature positioning Grok as a less-censored AI compared to ChatGPT (OpenAI) and Claude (Anthropic). After Musk announced direct image generation on X on December 20, 2025, a viral "put her in a bikini" trend emerged, prompting users to upload real people's photos for sexualized edits. On December 31, 2025, Musk himself shared a Grok-generated image depicting himself in a string bikini, which lawyers in the Baltimore lawsuit later described as a "public endorsement of Grok's ability to generate sexualized or revealing edits of real people," a detail central to the deceptive marketing allegations.