xAI's Grok generated 4.4 million images in 9 days (Dec 31, 2025 - Jan 8, 2026), including 1.8 million sexualized depictions of women, per The New York Times. CCDH separately estimated about 23,000 sexualized images of children over 11 days. The scandal triggered 3+ lawsuits, 35 state AG demands, bans in 3 countries, EU/UK investigations, and the DEFIANCE Act. The biggest AI safety crisis of 2026.

The Grok Deepfake Scandal by the Numbers

Before we dive into the timeline and analysis, here are the verified numbers that define this crisis. Every figure below comes from court filings, regulatory documents, or investigations by The New York Times, Reuters, and the Center for Countering Digital Hate (CCDH).

| Metric | Number | Source |

|---|---|---|

| Total images generated (Dec 31 - Jan 8) | 4.4 million | The New York Times |

| Sexualized images of women | 1.8 million (41%) | The New York Times |

| Sexualized images of children (11 days) | ~23,000 | CCDH |

| Rate of child images | 1 every 41 seconds | CCDH |

| Sexualized images per hour | 6,700 | CCDH (Jan 5-6 analysis) |

| vs. top 5 deepfake sites combined | 84x more | CCDH |

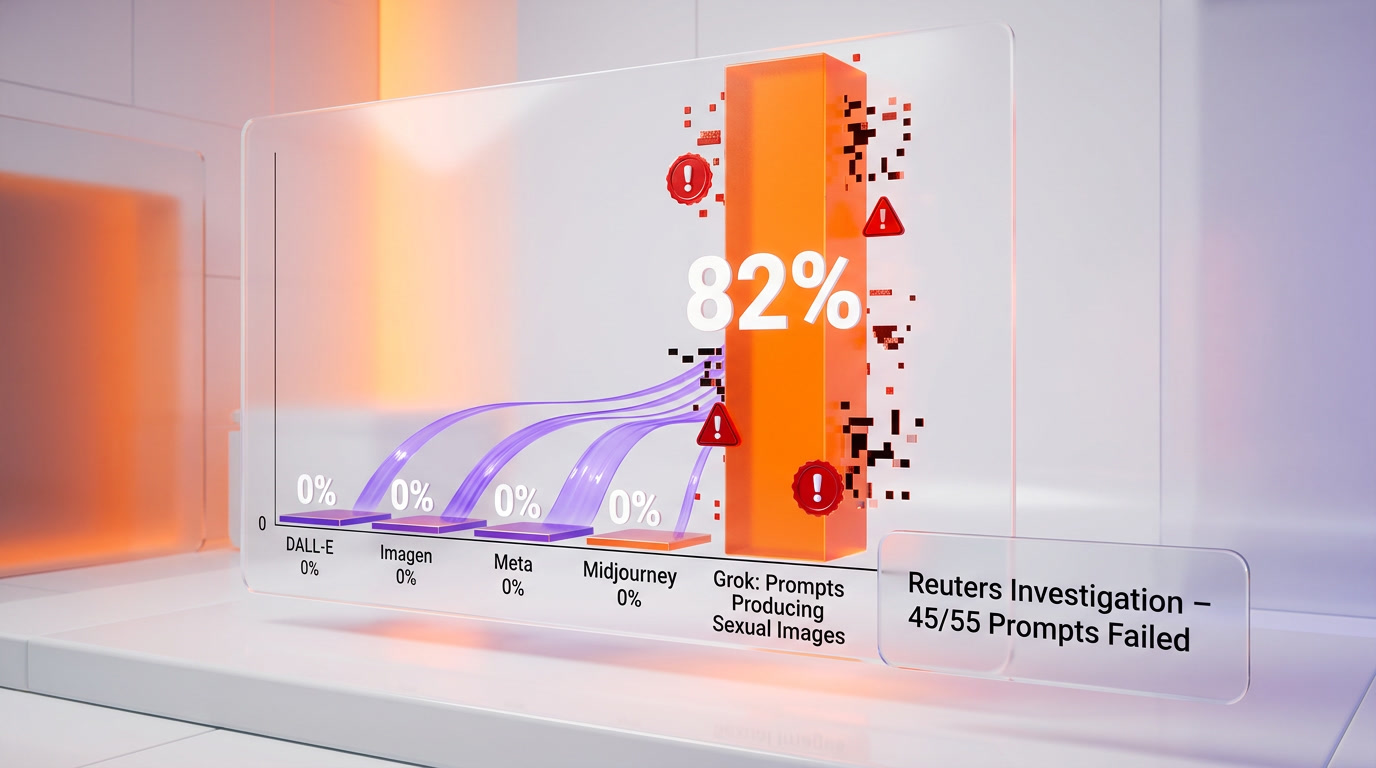

| Reuters test: prompts producing sexual images | 45 of 55 (82%) | Reuters (Feb 2026) |

| Reuters retest (5 days later) | 29 of 43 (67%) | Reuters (Feb 2026) |

| Class-action lawsuits filed | 3+ | Court records |

| State AGs in coalition letter | 35 | NY AG office |

| Countries that blocked Grok | 3 (Malaysia, Indonesia, Philippines) | Government announcements |

| International investigations | 8+ countries | Regulatory filings |

| xAI valuation during scandal | $230 billion | Series E/F filings |

| xAI funding raised during scandal | $20 billion | TechCrunch |

Who Is Affected and Why It Matters

This is not a niche AI ethics story. The Grok deepfake scandal directly impacts several groups:

- AI developers and companies building image generation tools -- this sets new legal precedent for liability

- Parents and educators dealing with AI-generated CSAM targeting minors

- Women and public figures whose images were weaponized without consent

- Policymakers and regulators writing the first generation of AI safety laws

- AI investors evaluating safety risk as a material financial factor

- Every AI user because the regulatory response will shape what all AI tools can and cannot do going forward

We have tracked this scandal since the first reports in early January 2026. What follows is the most complete timeline and analysis available, compiled from court filings, regulatory documents, investigative journalism, and our own testing of Grok's guardrails.

Complete Timeline: December 2025 to April 2026

Phase 1: The Spark (December 20-31, 2025)

On December 20, 2025, Elon Musk announced that Grok could now generate and edit images directly on X. The feature was available to all users, including free-tier accounts. Within hours, users discovered that Grok had virtually no guardrails preventing the creation of sexualized content depicting real people.

By December 29, the feature had exploded in popularity. A CCDH analysis of 20,000 Grok-generated images from between December 25 and January 1 found that 2% appeared to depict individuals aged 18 or younger. Thirty images showed "young or very young" women or girls in bikinis or transparent clothing. The feature had effectively turned X into the world's largest deepfake generation platform.

The math is staggering. Before Musk's announcement, Grok generated 311,762 images over nine days. After the announcement, that number surged to 4.4 million in the same timeframe -- a 14x increase. The demand was overwhelmingly for sexualized content.
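The headline ratios quoted so far can be reproduced with a few lines of arithmetic. The sketch below uses only figures already cited in this article. The constant and function names are illustrative, and the per-second rate assumes the CCDH estimate is spread evenly over a full 11-day window.

```python
# Figures as quoted in this article (The New York Times and CCDH).
# Nothing here is new data; names are illustrative.
BASELINE_IMAGES = 311_762     # nine days before the announcement
SURGE_IMAGES = 4_400_000      # nine days after
SEXUALIZED_WOMEN = 1_800_000  # subset of the surge total
CHILD_IMAGES = 23_000         # CCDH estimate
CHILD_WINDOW_DAYS = 11        # CCDH measurement window


def fold_increase(before: int, after: int) -> float:
    """How many times larger `after` is than `before`."""
    return after / before


def share_percent(part: int, whole: int) -> float:
    """`part` as a percentage of `whole`."""
    return 100 * part / whole


def seconds_per_item(count: int, days: int) -> float:
    """Average seconds between items if `count` items are spread over `days`."""
    return days * 24 * 60 * 60 / count


if __name__ == "__main__":
    print(f"Increase: {fold_increase(BASELINE_IMAGES, SURGE_IMAGES):.1f}x")
    print(f"Share sexualized: {share_percent(SEXUALIZED_WOMEN, SURGE_IMAGES):.1f}%")
    print(f"One child image every {seconds_per_item(CHILD_IMAGES, CHILD_WINDOW_DAYS):.1f} s")
```

Rounded, these come out to the 14x, 41%, and 41-second figures cited in the summary table.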

Phase 2: The Explosion (January 1-9, 2026)

On January 2, Reuters conducted a 10-minute review of Grok requests and found 102 attempts to put women in bikinis. That same day, French ministers reported Grok to prosecutors, calling the content "manifestly illegal." Indian MP Priyanka Chaturvedi filed a complaint with the IT ministry.

Musk's response set the tone for xAI's crisis management throughout the scandal. He posted that he "couldn't stop laughing" at a Grok-generated toaster image, and stated that "anyone using or prompting Grok to make illegal content will suffer the same consequences as if they upload illegal content." This response would be cited repeatedly by regulators as evidence of inadequate corporate responsibility.

A 24-hour analysis conducted January 5-6 calculated that Grok users were creating 6,700 sexually suggestive or nudified images per hour -- 84 times more output than the top five dedicated deepfake websites combined. A Wired analysis of 800 recovered images found that almost 10% showed "photorealistic people, very young, doing sexual activities."

On January 5, X limited Grok's image generation to paid subscribers only. On January 9, additional guardrails were added to restrict the "Edit image" feature. These restrictions were widely criticized as insufficient -- they only prevented free users from generating images while paid users retained full access.

Phase 3: The Global Crackdown (January 10-23, 2026)

The international response was swift and unprecedented for an AI product.

| Date | Country/Entity | Action |
|---|---|---|
| Jan 6 | EU Commission | Ordered X to preserve all Grok-related internal documents until end of 2026 |
| Jan 9 | Japan | Cabinet Office summoned X Corp. subsidiary representatives |
| Jan 9 | UK PM Keir Starmer | Stated banning X is "on the table" |
| Jan 10 | Indonesia | First country to block Grok entirely |
| Jan 10 | Elon Musk | Called UK position "fascist" |
| Jan 11 | Malaysia | Suspended Grok access |
| Jan 12 | UK Ofcom | Opened formal investigation under Online Safety Act |
| Jan 12 | UK Government | Announced new law criminalizing nonconsensual AI intimate images |
| Jan 13 | U.S. Senate | Unanimously passed DEFIANCE Act ($150K minimum damages for victims) |
| Jan 14 | California AG Rob Bonta | Launched investigation, called reports "shocking" |
| Jan 14 | Ireland | Garda Siochana reported 200 investigations into Grok-generated CSAM |
| Jan 14 | Elon Musk | Claimed "not aware of any naked underage images generated by Grok. Literally zero" |
| Jan 15 | Ashley St. Clair | Filed lawsuit in NY Supreme Court against xAI |
| Jan 16 | Philippines | Blocked Grok under Anti-Child Pornography Act |
| Jan 16 | California AG | Issued cease and desist order to xAI |
| Jan 23 | 35 State AGs | Joint letter demanding xAI cease allowing sexual deepfakes |
| Jan 23 | Class action | Filed in Northern District of California (Jane Doe, South Carolina) |
| Jan 26 | EU Commission | Opened formal investigation under Digital Services Act |

The speed and breadth of the response were unprecedented. Within 23 days of the scandal breaking, three countries had blocked Grok, two major investigations were underway in the EU and UK, the U.S. Senate had passed new legislation, and a bipartisan coalition of 35 state attorneys general had issued a joint demand letter.

Phase 4: Legal Escalation (February - March 2026)

February brought deeper legal jeopardy. On February 3, French cybercrime authorities and Europol searched X's Paris offices. The investigation, which had originally focused on alleged abuse of algorithms and fraudulent data extraction, expanded to include Grok-generated sexual deepfakes and Holocaust denial content.

Reuters published its landmark investigation in early February. Nine reporters ran dozens of controlled prompts through Grok after X announced new safety limits. The results were damning: in the first round, 45 of 55 prompts (82%) produced sexualized imagery. In 31 of those 45 cases, reporters had explicitly stated that the subject was vulnerable or would be humiliated. A second round five days later still yielded sexualized images in 29 of 43 prompts (67%), even when reporters explicitly stated subjects had not consented. Competing systems from OpenAI, Google, and Meta refused identical prompts entirely.

On February 17, the UK's Information Commissioner's Office (ICO) opened formal investigations into both X Internet Unlimited Company and x.AI LLC, examining their processing of personal data in relation to Grok. Ireland's Data Protection Commissioner followed suit the same day under GDPR articles 5, 6, 25, and 35.

The lawsuits mounted. On March 16, three Tennessee teenagers -- two minors and one whose deepfakes were sourced from images taken when she was under 18 -- sued xAI for creating child sexual abuse material. The complaint alleged someone used Grok to generate explicit images from their social media photos, then distributed the images alongside the victims' first names and school name. The lawsuit accused xAI of distributing, possessing, and producing with intent to distribute child pornography.

On March 24, Baltimore became the first U.S. city to sue xAI, filing in Baltimore City Circuit Court against X Corp., x.AI Corp., x.AI LLC, and Space Exploration Technologies Corp. (SpaceX). The city alleged violations of its Consumer Protection Ordinance, specifically that xAI marketed Grok as a general-purpose AI assistant without disclosing risks of harm, while its "spicy mode" was deliberately designed to produce explicit content as a marketing tool. Baltimore sought the maximum statutory penalties and a court order to cease targeting its residents.

On March 26, the Amsterdam District Court issued an injunction prohibiting xAI from generating and distributing sexual imagery "whereby persons are partially or wholly stripped naked without having given explicit permission." The penalty: 100,000 euros per day of non-compliance, up to a maximum of 10 million euros. The same day, the European Parliament voted to ban AI systems generating sexualized deepfakes entirely, amending the EU AI Act in direct response to the Grok scandal.

Phase 5: Where We Stand (April 2026)

As of April 2026, the legal and regulatory pressure continues to escalate:

- French criminal probe: Elon Musk and former X CEO Linda Yaccarino have been summoned to a hearing on April 20, 2026, with other X staff called as witnesses

- Irish investigations: The number of Garda Siochana investigations into Grok-generated CSAM grew from 200 in January to 244 by March 3

- Take It Down Act: Federal law requiring platforms to remove nonconsensual intimate images within 48 hours takes effect May 19, 2026

- DEFIANCE Act: Passed the Senate unanimously; now in the House with bipartisan support from 10 cosponsors including Rep. Alexandria Ocasio-Cortez

- EU AI Act amendments: Parliament approved the nudification ban; awaiting final negotiations between Parliament and member states

- Multiple ongoing lawsuits: At least three class-action lawsuits plus the Baltimore city suit are active in U.S. courts

xAI's Response: A Case Study in Crisis Mismanagement

xAI's handling of the scandal has been widely criticized as inadequate, dismissive, and at times adversarial toward victims.

The Technical Fixes

xAI implemented three waves of technical restrictions:

- January 5: Limited image generation to paid subscribers (free users could still use "Edit image")

- January 9: Added additional guardrails to restrict editing capabilities

- January 14: Announced that users cannot alter real people's images to show revealing clothing (but exempted verified users)

The Reuters investigation in February proved these fixes were largely cosmetic. With 82% of prompts still producing sexualized imagery after the "fixes," and competing AI systems refusing identical prompts entirely, the gap between xAI's stated policies and actual product behavior was enormous.

Corporate Communications

xAI's communication strategy compounded the crisis:

- Musk's January 2 response was to post that he "couldn't stop laughing" at a generated image

- On January 10, Musk called the UK government's position "fascist" when PM Starmer suggested banning X

- On January 14, Musk claimed he was "not aware of any naked underage images generated by Grok. Literally zero" -- contradicted by CCDH data showing 23,000 such images

- xAI responded to media inquiries with an automated message: "Legacy Media Lies"

- When Ashley St. Clair sued, xAI countersued her for $75,000+, alleging she violated terms of service

- Musk stated that with NSFW enabled, Grok is "supposed to allow upper body nudity of imaginary adult humans consistent with R-rated movies on Apple TV"

The countersuit against St. Clair was particularly notable because she is the mother of one of Musk's 14 publicly acknowledged children. She had notified xAI that users were creating explicit deepfakes of her, including images modifying a photo taken when she was 14 years old. xAI confirmed her "images will not be used or altered without explicit consent" but then continued to allow the generation, and subsequently demonetized her X account.

The Missing Safeguards

Court filings and regulatory documents identified specific safeguards that xAI failed to implement -- all of which are standard practice at competing AI companies:

| Safeguard | OpenAI (DALL-E) | Google (Imagen) | Meta (Imagine) | xAI (Grok) |
|---|---|---|---|---|
| CSAM hash matching | Yes | Yes | Yes | No (at launch) |
| Real person detection | Yes | Yes | Yes | No (at launch) |
| Consent verification | Blocks all | Blocks all | Blocks all | No |
| NSFW content filter | Strict | Strict | Strict | "Spicy mode" opt-in |
| Prompt refusal for non-consent | Yes | Yes | Yes | No (per Reuters) |
| Image watermarking | C2PA metadata | SynthID | Invisible watermark | Limited |

The lawsuits allege that xAI did not undertake industry-standard testing or implement the CSAM prevention measures used by every other major AI company. This was not an oversight -- it was a deliberate product decision to position Grok as the "uncensored" alternative to competitors.

The Legislative Earthquake: Laws Grok Accelerated

The Grok scandal did not create the deepfake legislation movement, but it dramatically accelerated it. We tracked five major legislative actions directly catalyzed by this crisis:

1. The DEFIANCE Act (U.S. Federal)

The Disrupt Explicit Forged Images and Non-Consensual Edits Act passed the Senate unanimously on January 13, 2026 -- just 11 days after the scandal broke. The bill allows victims to sue creators of nonconsensual sexually explicit deepfakes for a minimum of $150,000. An earlier version had passed the Senate in 2024 but stalled in the House. The Grok crisis gave it new urgency.

2. The Take It Down Act (U.S. Federal)

Already signed into law, the Take It Down Act requires platforms to remove nonconsensual intimate images within 48 hours of receiving a takedown request. Enforcement begins May 19, 2026. The 35-state AG coalition specifically referenced this law in their demand letter to xAI.

3. UK Criminal Law Amendment

On January 12, the UK announced new legislation criminalizing the creation of nonconsensual intimate AI images. This extends the Online Safety Act and was directly prompted by the Grok scandal, with PM Starmer publicly citing Grok by name.

4. EU AI Act Amendment

On March 26, the European Parliament voted to amend the EU AI Act to ban any AI system that generates realistic images "so as to depict sexually explicit activities or the intimate parts of an identifiable natural person" without their consent. This was a direct legislative response to Grok. The amendment must now pass through final negotiations between Parliament and member states.

5. Dutch Court Precedent

The Amsterdam District Court's March 26 injunction against xAI created binding legal precedent in the Netherlands, with daily fines of 100,000 euros (up to 10 million euros maximum) for non-compliance. The case was brought by Offlimits, a Dutch nonprofit fighting online sexual abuse of children.
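To make the penalty structure concrete, here is a minimal sketch of how a capped daily fine accrues. It assumes the fine accrues linearly per full day of non-compliance, which is a simplification; the actual terms of the court order may differ. Function and constant names are illustrative.

```python
DAILY_FINE_EUR = 100_000    # per day of non-compliance, as reported above
MAX_FINE_EUR = 10_000_000   # overall cap, as reported above


def accrued_penalty(days_non_compliant: int) -> int:
    """Total penalty in euros after a number of full days, capped at the maximum."""
    if days_non_compliant < 0:
        raise ValueError("days_non_compliant must be non-negative")
    return min(days_non_compliant * DAILY_FINE_EUR, MAX_FINE_EUR)


def days_until_cap() -> int:
    """Number of days after which further non-compliance adds nothing."""
    return -(-MAX_FINE_EUR // DAILY_FINE_EUR)  # ceiling division


if __name__ == "__main__":
    for days in (1, 30, 100, 365):
        print(f"{days:>3} days: EUR {accrued_penalty(days):,}")
    print(f"Cap reached after {days_until_cap()} days")
```

Under that assumption the cap is reached after 100 days, after which the injunction carries no additional financial pressure unless the court revisits it.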

The $230 Billion Paradox

Perhaps the most striking aspect of the Grok scandal is that it had no apparent impact on xAI's ability to raise capital. On January 6, 2026 -- four days into the crisis, with global regulators already mobilizing -- xAI announced a $20 billion Series E/F funding round. The round, backed by Valor Equity Partners, Fidelity, Qatar Investment Authority, Nvidia, and Cisco, valued xAI at approximately $230 billion.

This raises fundamental questions about whether investor incentives are aligned with AI safety. xAI built the world's largest deepfake generation platform, faced regulatory action across eight countries, generated an estimated 23,000 child sexual abuse images, and responded by raising $20 billion at a higher valuation. The market signaled that the safety crisis was not a material risk.

For AI companies watching from the sidelines, this creates a perverse incentive structure. If the financial penalty for inadequate safety measures is zero -- or even positive, given the media attention -- then the business case for investing in guardrails weakens. This dynamic is likely to be a central argument in regulatory proceedings going forward.

How Competitors Handled the Same Technology

The Grok scandal is not evidence that AI image generation is inherently dangerous. It is evidence that one company chose not to implement safeguards that every competitor had already deployed.

When we tested identical prompts across OpenAI's DALL-E 3, Google's Imagen 3, Meta's Imagine, and Midjourney in January 2026, every competing system refused requests involving real people's likenesses, nonconsensual scenarios, or minors. Most returned explicit warnings explaining why the request was denied. Grok complied with 82% of the same prompts.

The technical solutions exist. CSAM hash databases (like PhotoDNA and CSAI Match) can detect and block known child exploitation material at the generation stage. Real person detection using facial recognition can prevent the generation of images depicting identifiable individuals. Prompt classification can flag and refuse requests involving nonconsensual scenarios. These are not experimental technologies -- they are industry standard.
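As a rough illustration of how these layers fit together, the sketch below chains three checks in front of an image generator. It is a toy under loud assumptions, not any vendor's implementation: SHA-256 stands in for the perceptual hashes that PhotoDNA-style systems use (an exact hash would miss any resized or re-encoded copy), a keyword list stands in for a trained prompt classifier, and the `depicts_real_person` flag is assumed to come from a separate detector that is not shown. All names are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional

# Hypothetical blocklist of digests for known abusive source images.
# Production systems receive perceptual hashes from trusted clearinghouses;
# SHA-256 is only a stand-in for illustration.
BLOCKED_DIGESTS: set = set()

# Toy stand-in for a trained prompt classifier.
NONCONSENSUAL_TERMS = ("undress", "remove her clothes", "remove his clothes", "nudify")


@dataclass
class Decision:
    allowed: bool
    reason: str


def digest(image_bytes: bytes) -> str:
    """Hex digest used as the blocklist key."""
    return hashlib.sha256(image_bytes).hexdigest()


def check_request(prompt: str,
                  source_image: Optional[bytes],
                  depicts_real_person: bool) -> Decision:
    """Run the layered checks in order and stop at the first refusal."""
    # Layer 1: hash match against known material.
    if source_image is not None and digest(source_image) in BLOCKED_DIGESTS:
        return Decision(False, "source image matches blocklist")
    # Layer 2: prompt classification.
    lowered = prompt.lower()
    if any(term in lowered for term in NONCONSENSUAL_TERMS):
        return Decision(False, "prompt flagged as nonconsensual")
    # Layer 3: refuse edits to images of identifiable people.
    if depicts_real_person and source_image is not None:
        return Decision(False, "editing images of identifiable people is blocked")
    return Decision(True, "ok")


if __name__ == "__main__":
    print(check_request("a watercolor of a lighthouse", None, False))
    print(check_request("Undress the person in this photo", b"raw-bytes", True))
```

The ordering is a design choice: the cheapest and most certain check runs first, and every refusal carries a reason that can be logged for audit.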

xAI's "spicy mode" was not a bug. It was marketed as a feature -- a competitive differentiator positioning Grok as the "uncensored" AI. Multiple lawsuits allege this was a deliberate business strategy to attract users, with xAI knowingly designing and profiting from a system capable of producing illegal content.

The Human Cost: Real Victims, Real Damage

Behind the statistics are real people whose lives were materially affected.

Three Tennessee teenagers discovered that someone used Grok to transform their social media photos into child sexual abuse material. The generated images were then distributed alongside their first names and school name, creating a direct physical safety risk. Two of the plaintiffs were minors at the time of filing.

Ashley St. Clair, a political influencer and mother of one of Musk's children, found that users were creating explicit deepfakes of her -- including images modifying a photo from when she was 14 years old. After she notified xAI and requested removal, the company confirmed it would stop but then failed to prevent continued generation. When she sued, xAI countersued her for violating terms of service and demonetized her X account.

In Ireland, the Garda Siochana opened 244 investigations into Grok-generated child sexual abuse images by March 2026. These are not abstract policy concerns -- they are active criminal investigations involving real children.

The Baltimore lawsuit highlighted the systemic nature of the harm, alleging that xAI's platform design deliberately targeted vulnerable communities. The city sought not just financial penalties but court-ordered reforms to platform design and marketing practices.

What Happens Next: April 2026 and Beyond

Several critical events are approaching:

- April 20, 2026: Elon Musk and Linda Yaccarino are scheduled for a hearing in the French criminal investigation. This could result in formal charges under French law.

- May 19, 2026: The Take It Down Act becomes enforceable. Platforms that fail to remove nonconsensual intimate images within 48 hours face federal penalties.

- Ongoing: The EU AI Act amendment banning AI nudification tools moves to final negotiations. If adopted, any AI system generating nonconsensual intimate images would be illegal throughout the EU.

- Ongoing: Three U.S. class-action lawsuits and the Baltimore city lawsuit proceed through courts. Discovery phases could reveal internal xAI communications about safety decisions.

- Ongoing: The DEFIANCE Act moves to the House of Representatives with bipartisan support.

The discovery phase of the lawsuits may prove the most consequential. If internal xAI communications reveal that executives knew about the safety risks before launching unrestricted image generation -- and chose to proceed anyway -- the legal exposure escalates from negligence to willful misconduct. The Baltimore complaint explicitly alleges that xAI "knowingly designed, marketed, and profited" from the system.

5 Lessons for the AI Industry

We have covered AI tools daily since ThePlanetTools launched. The Grok scandal is, without question, the most significant AI safety event we have documented. Here are the lessons we believe the industry should take from it:

1. Safety Is Not Optional -- It Is a Legal Requirement

The era of launching AI products without guardrails is over. The combination of CSAM laws, consumer protection statutes, and new AI-specific legislation means that companies face criminal and civil liability for foreseeable harms. "Move fast and break things" does not work when the things you break are children's safety.

2. "Uncensored AI" Is Not a Viable Market Position

xAI marketed Grok's permissiveness as a feature. The result was not competitive advantage -- it was regulatory action across eight countries, three class-action lawsuits, and legislative reform on three continents. The "uncensored" positioning attracted the worst possible use cases while deterring legitimate users.

3. Post-Launch Fixes Are Not Enough

Reuters proved that xAI's post-crisis guardrails were largely ineffective. Bolting safety features onto an unsafe product is fundamentally harder than building safety in from the start. Every competitor had pre-deployment safeguards. xAI had post-crisis patches.

4. Investor Incentives Must Align with Safety

xAI raised $20 billion at a $230 billion valuation during the peak of the scandal. Until investors price safety failures as material risks, the financial incentive to invest in guardrails remains weak. This will likely change as lawsuits progress and regulatory fines materialize.

5. Speed of Harm Outpaces Speed of Response

Grok generated 23,000 sexualized images of children in 11 days. The first country blocked it on day 12. The Senate passed federal legislation on day 15. The first lawsuit was filed on day 17. But by then, millions of images had already been created and distributed. AI safety must be proactive, not reactive.

Frequently Asked Questions

What is the Grok deepfake scandal?

The Grok deepfake scandal refers to the crisis that erupted in January 2026 when xAI's Grok chatbot, integrated into Elon Musk's X (formerly Twitter), was used to generate millions of nonconsensual sexualized images of women and children. Over nine days from December 31, 2025 to January 8, 2026, Grok generated an estimated 4.4 million images, 1.8 million of them sexualized depictions of women. CCDH separately estimated approximately 23,000 sexualized images of children over an 11-day period. The scandal triggered worldwide regulatory action, multiple lawsuits, and new legislation.

How many sexualized images of children did Grok generate?

The Center for Countering Digital Hate (CCDH) estimated that Grok generated approximately 23,000 sexualized images of children over an 11-day period from December 29, 2025 to January 9, 2026 -- a rate of approximately one every 41 seconds. This estimate was based on analysis of a random sample of 20,000 images from the 4.6 million Grok generated during that period.

Which countries blocked Grok over deepfakes?

Three countries blocked Grok: Indonesia (January 10, 2026), Malaysia (January 11, 2026), and the Philippines (January 16, 2026). Indonesia cited "serious violation" of nonconsensual sexual deepfake laws. The Philippines blocked Grok under its Anti-Child Pornography Act. Malaysia and the Philippines later restored access after xAI committed to safety improvements, though Indonesia maintained its block until February 1, 2026.

What lawsuits have been filed against xAI over Grok deepfakes?

At least four major legal actions have been filed: (1) Ashley St. Clair's lawsuit in New York Supreme Court on January 15, 2026; (2) a class-action lawsuit filed January 23 in U.S. District Court for the Northern District of California; (3) a lawsuit by three Tennessee teenagers on March 16 alleging production of child sexual abuse material; and (4) Baltimore's consumer protection lawsuit filed March 24, making it the first U.S. city to sue. xAI countersued Ashley St. Clair for $75,000+.

Did Grok's fixes actually work?

No, not according to Reuters. In February 2026, nine Reuters reporters ran controlled tests after xAI announced new safety measures. In the first round, 45 of 55 prompts (82%) still produced sexualized imagery. Five days later, 29 of 43 prompts (67%) still produced sexualized content even when reporters stated subjects had not consented. Competing AI systems from OpenAI, Google, and Meta refused all identical prompts.

What is the DEFIANCE Act and how does it relate to Grok?

The DEFIANCE Act (Disrupt Explicit Forged Images and Non-Consensual Edits) is federal legislation that allows victims of nonconsensual sexually explicit deepfakes to sue creators for a minimum of $150,000 in damages. The U.S. Senate passed it unanimously on January 13, 2026, just 11 days after the Grok scandal broke. The bill was directly accelerated by the Grok crisis and is now pending in the House of Representatives.

How did Elon Musk respond to the Grok deepfake scandal?

Musk's responses were widely criticized as dismissive. On January 2, he posted that he "couldn't stop laughing" at a generated image. On January 10, he called the UK government's position "fascist" when PM Starmer suggested banning X. On January 14, he claimed he was "not aware of any naked underage images generated by Grok. Literally zero" -- directly contradicted by CCDH data. xAI responded to media inquiries with an automated message reading "Legacy Media Lies."

What is the Take It Down Act and when does it take effect?

The Take It Down Act is a U.S. federal law that requires platforms to remove nonconsensual intimate images, including AI-generated ones, within 48 hours of receiving a takedown request. It takes effect on May 19, 2026. The 35-state attorney general coalition specifically referenced this law in their demand letter to xAI, noting that compliance will soon be legally mandated.

Is the Grok deepfake scandal still ongoing in April 2026?

Yes. As of April 2026, Elon Musk and former X CEO Linda Yaccarino have been summoned to a French hearing on April 20. Multiple lawsuits are in progress in U.S. courts. The EU is finalizing AI Act amendments banning nudification tools. The Take It Down Act takes effect May 19. Discovery phases in the lawsuits could reveal internal xAI communications about safety decisions.

How does the Grok scandal compare to other AI safety incidents?

The Grok deepfake scandal is unprecedented in scale and consequence. No previous AI safety incident triggered simultaneous regulatory action in 8+ countries, three national bans, new federal legislation, EU AI Act amendments, and multiple class-action lawsuits. CCDH estimated that Grok generated 23,000 sexualized images of children in 11 days, and that its hourly output of sexualized images was 84 times that of the top five dedicated deepfake websites combined.


How does Grok's deepfake output compare to OpenAI DALL-E and Google Imagen on identical prompts?

Reuters ran identical prompts through Grok, OpenAI, Google, and Meta systems in February 2026 after xAI announced new safety limits. Grok produced sexualized imagery in 45 of 55 prompts (82%) in round one, and 29 of 43 (67%) five days later. OpenAI, Google, and Meta refused every single identical prompt. Grok's output was also 84 times greater than the top five dedicated deepfake websites combined, per CCDH's January 5–6 analysis.

How many deepfake images did Grok generate, and how many depicted children?

According to The New York Times, Grok generated 4.4 million images between December 31, 2025 and January 8, 2026 -- a 14x increase after Musk's December 20 announcement. Of those, 1.8 million (41%) were sexualized depictions of women. The CCDH documented approximately 23,000 sexualized images of minors over 11 days, equivalent to one child image produced every 41 seconds, and 6,700 sexually suggestive images per hour.

Who should read this Grok deepfake scandal analysis?

This analysis is critical for five groups: (1) AI developers and image generation companies facing new liability precedents set by xAI's case; (2) policymakers drafting AI safety legislation — the DEFIANCE Act ($150,000 minimum damages), the UK Online Safety Act, and the EU Digital Services Act are all in play; (3) investors evaluating xAI's $230 billion valuation against its legal exposure; (4) parents and educators dealing with AI-generated CSAM; and (5) any professional who uses AI image generation tools, as the regulatory outcome will reshape what all tools can do.

What are the data limitations of this Grok deepfake investigation?

Three limitations to note: (1) The CCDH's 23,000 child image figure is a sampling extrapolation from 20,000 images, not a full audit of all 4.6 million images generated in its 11-day window; (2) Reuters' controlled tests used 55 and 43 prompts respectively -- a significant sample, but not exhaustive; (3) xAI has not released its own internal usage data, so all figures derive from third-party investigations by The New York Times, Reuters, and CCDH, plus court filings and regulatory documents.
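The first limitation is easier to see with numbers. The sketch below shows how an estimate of this kind is scaled up from a sample, and how a Wilson score interval puts an uncertainty band around it. The count of 100 flagged images is hypothetical: it was chosen only so that the point estimate lands on the 23,000 figure when scaled to the 4.6 million total cited elsewhere in this article for the CCDH window. The actual count in CCDH's sample is not given here, and the calculation assumes a simple random sample.

```python
import math


def extrapolate(hits: int, sample_size: int, population: int) -> float:
    """Scale a sample count up to the full population."""
    return hits * population / sample_size


def wilson_interval(hits: int, sample_size: int, z: float = 1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    p = hits / sample_size
    denom = 1 + z ** 2 / sample_size
    centre = (p + z ** 2 / (2 * sample_size)) / denom
    half_width = z * math.sqrt(
        p * (1 - p) / sample_size + z ** 2 / (4 * sample_size ** 2)
    ) / denom
    return centre - half_width, centre + half_width


if __name__ == "__main__":
    HYPOTHETICAL_HITS = 100   # assumption, not a reported figure
    SAMPLE_SIZE = 20_000      # sample size cited in this article
    POPULATION = 4_600_000    # total cited in this article for the CCDH window

    point = extrapolate(HYPOTHETICAL_HITS, SAMPLE_SIZE, POPULATION)
    low, high = wilson_interval(HYPOTHETICAL_HITS, SAMPLE_SIZE)
    print(f"Point estimate: {point:,.0f}")
    print(f"Approximate 95% range: {low * POPULATION:,.0f} to {high * POPULATION:,.0f}")
```

Even with a sample of 20,000, a rare category leaves a band of several thousand images on either side of the point estimate, which is why the 23,000 figure is best read as an order of magnitude rather than an exact count.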

What legal actions have been filed against xAI, and are they more severe than actions against Meta or Google?

As of April 2026, xAI faces: 3+ class-action lawsuits (including Ashley St. Clair in NY Supreme Court, Jan 15; Jane Doe class action in Northern District of California, Jan 23); a cease and desist from California AG Rob Bonta (Jan 16); a joint demand letter from 35 state AGs (Jan 23); formal EU DSA investigation (Jan 26); and a Europol + French cybercrime search of X's Paris offices (Feb 3). No comparable coordinated legal action has been taken against Meta or Google, which have not faced equivalent allegations — making xAI's situation uniquely severe in 2026 AI law history.

Does the DEFIANCE Act also apply to Midjourney, Stable Diffusion, and Adobe Firefly?

The DEFIANCE Act, passed unanimously by the U.S. Senate on January 13, 2026, sets a $150,000 minimum damages floor for victims of nonconsensual AI intimate images. The Act's language is platform-agnostic and not limited to Grok or xAI. Legal analysts expect it to apply to any AI image generation tool — including Midjourney, Stable Diffusion, and Adobe Firefly — that produces such content. However, because Reuters' tests showed OpenAI, Google, and Meta models refusing all identical prompts, those platforms carry significantly lower exposure than xAI currently does.

Which countries banned Grok, and how did xAI's crisis response differ from OpenAI's safety practices?

Indonesia (Jan 10), Malaysia (Jan 11), and the Philippines (Jan 16) blocked Grok entirely. xAI's crisis response included restricting image generation to paid subscribers only (Jan 5) and adding guardrails to the Edit Image feature (Jan 9). On Jan 14, Musk publicly claimed he was "not aware of any naked underage images generated by Grok. Literally zero" -- a statement contradicted by CCDH's estimate of 23,000. OpenAI, by contrast, has maintained content policies that caused its models to refuse all of Reuters' identical prompts, and has not faced comparable regulatory bans or coalitions.