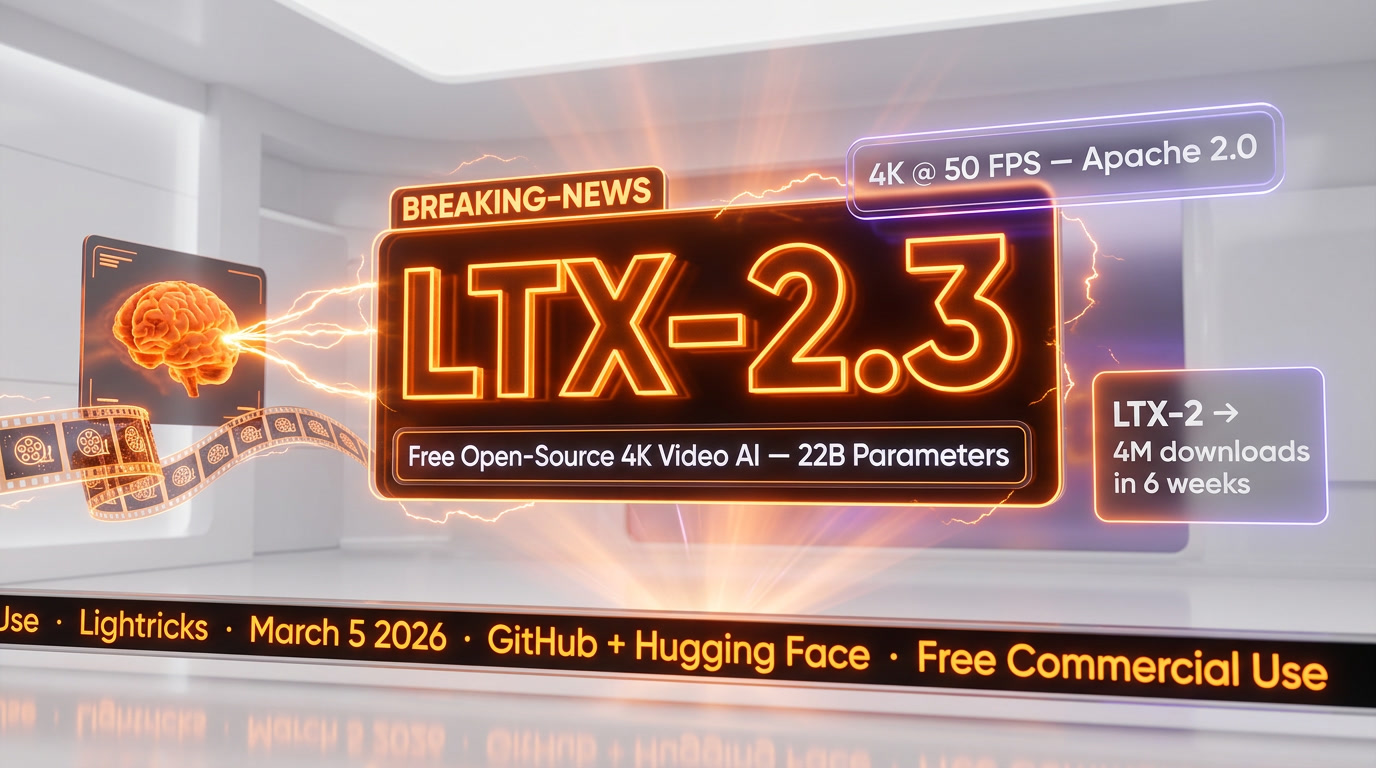

Something significant happened on March 5, 2026. No splashy keynote, no celebrity demo reel, no $20-a-month paywall. Lightricks pushed LTX-2.3 to GitHub and Hugging Face and let the model speak for itself. Twenty-two billion parameters. Native 4K video at 50 frames per second. Synchronized audio generated in a single pass — not bolted on after the fact. Up to 20-second clips. Portrait mode. Last-frame interpolation. Apache 2.0 license. Free for most commercial use cases.

We've been watching the AI video space closely for a while now, and we'll be upfront: this one stopped us mid-scroll. After going deep on the official docs, Lightricks' own release blog, the Hugging Face model card, Awesome Agents' review, community threads on r/StableDiffusion, and multiple third-party technical breakdowns, we're convinced this isn't a minor patch release dressed up as news. LTX-2.3 is a structural shift, both architecturally and in terms of what it signals for the broader market.

What Happened

LTX-2 launched in January 2026 and hit four million downloads in six weeks — the fastest-downloaded video model in history at that point. That version already turned heads. LTX-2.3, released on March 5, takes the foundation and rebuilds significant parts of it.

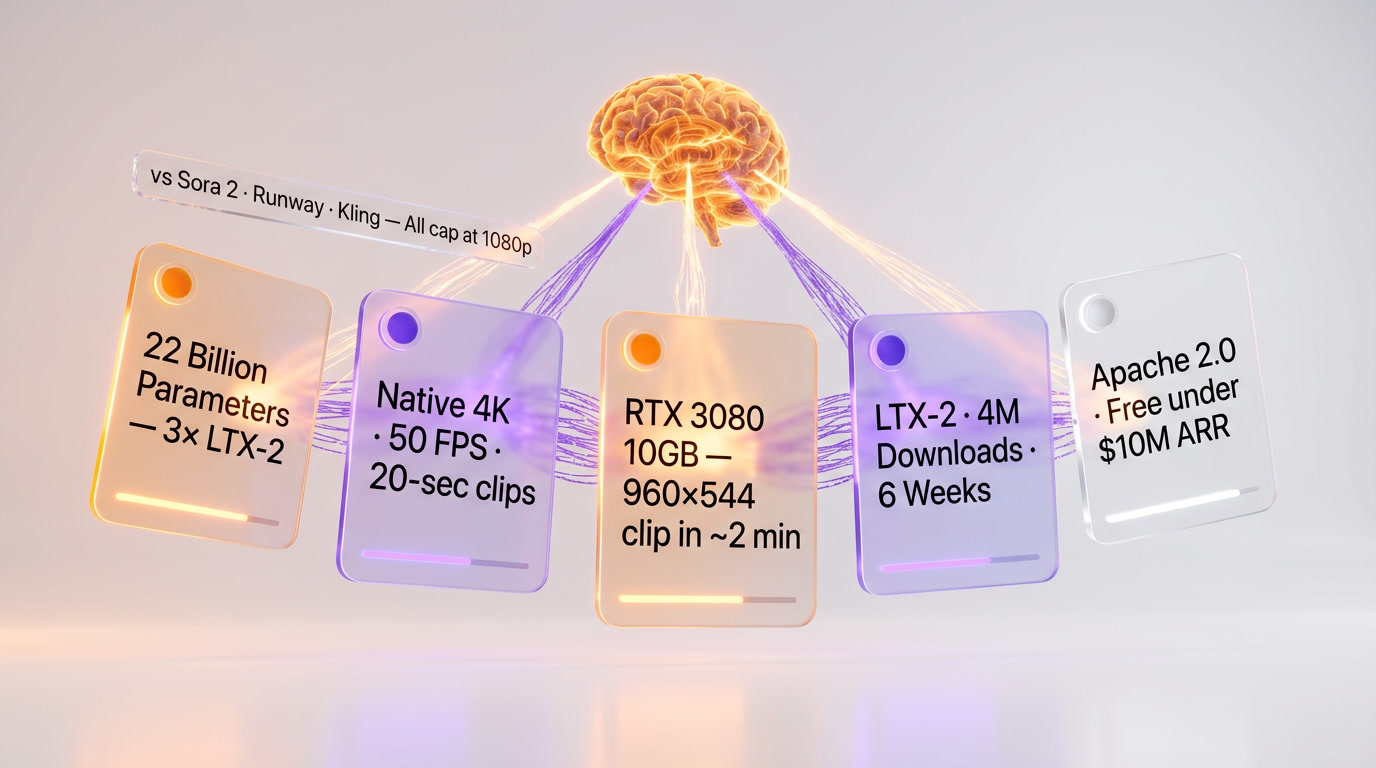

The headline number: 22 billion parameters, up from roughly 8 billion in LTX-2. Nearly triple the size. But Lightricks didn't just scale the existing architecture and call it a day; they redesigned core components. The VAE (variational autoencoder) was completely rebuilt with higher-quality training data. In practical terms, that means sharper fabric textures, cleaner hair, and stable reflections on chrome during camera moves: exactly the fine details that earlier open-source video models got wrong and that made them feel unfinished. The text connector was quadrupled in size, which matters more than it sounds: multi-subject prompts now maintain spatial relationships across the full clip rather than drifting apart after three or four seconds, a notorious failure mode in previous generations.

The audio architecture deserves its own paragraph. Audio and video aren't generated sequentially; they're cross-attended at every layer of the diffusion process. The model jointly reasons about both modalities from step one. Your first draft plays like a scene, not a silent concept clip waiting for post-production sound design.

Shipped alongside the weights: a native desktop editor enabling full local inference on consumer GPUs. That's not a feature checkbox. That's a product philosophy statement.

Several weight variants are available on Hugging Face at Lightricks/LTX-2.3: the full BF16 dev model at 42GB on disk for fine-tuning and research, an 8-step distilled version for faster inference with lower memory overhead, a quantized fp8 version running at roughly 18–20GB with a small quality trade-off, and a LoRA adapter that combines distillation-speed sampling with the dev model's quality ceiling. At 16-bit precision the weights alone need around 44GB of memory (22 billion parameters at two bytes each), so a 48GB+ GPU is required for full native 4K. But the quantized path is genuinely viable: one developer reported running the Q4_K_S GGUF variant on an RTX 3080 (10GB VRAM) and getting a 960×544, 5-second clip with audio in about two to three minutes. For a 22-billion-parameter model, that's remarkable.
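The roughly 44GB figure is back-of-envelope arithmetic: parameter count times bytes per parameter. The sketch below reproduces it and checks it against a few card sizes. It is a weights-only estimate; the function names and the `overhead_gb` parameter are our own illustration, not anything from Lightricks' tooling.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Weights-only footprint in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def fits_on_gpu(num_params: float, bytes_per_param: float,
                vram_gb: float, overhead_gb: float = 0.0) -> bool:
    """True if the weights plus an assumed overhead fit in the given VRAM.

    overhead_gb is a placeholder for activations, latents, and runtime
    buffers; the right value depends on resolution and clip length.
    """
    return weight_memory_gb(num_params, bytes_per_param) + overhead_gb <= vram_gb


LTX_PARAMS = 22e9          # parameter count quoted in the article
BYTES_16_BIT = 2           # BF16 and FP16 both use two bytes per parameter

full_precision_gb = weight_memory_gb(LTX_PARAMS, BYTES_16_BIT)
print(f"16-bit weights: {full_precision_gb:.0f} GB")
print("fits on a 48 GB card:", fits_on_gpu(LTX_PARAMS, BYTES_16_BIT, 48))
print("fits on a 24 GB card:", fits_on_gpu(LTX_PARAMS, BYTES_16_BIT, 24))
```

Quantized footprints will not drop straight out of this formula. They depend on which layers are quantized and how much the runtime offloads to system memory, which is how reports of a 10GB card running the model can coexist with these numbers.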

Why It Matters

Here's what tends to get lost in the spec-sheet coverage: expensive AI video subscriptions work well enough that most people never question them. Sora charges premium pricing per clip. Runway starts at $12 per month and scales aggressively at production volume. Kling has a credit system that drains faster than expected. For individual creators that's annoying friction. For teams building video features into products, it's a real, recurring cost constraint with a third-party dependency baked in.

LTX-2.3 changes that calculation. Fundamentally.

Comparing this release to LLaMA isn't hyperbole; it's the right frame. When Meta released LLaMA in 2023, it didn't kill GPT-4. But it restructured who could build with language models. Developers could host their own inference, fine-tune on proprietary data, ship products without API dependency risk. The community built quantized versions that ran on laptops. The ecosystem exploded. LTX-2.3 is doing the same thing for video generation. The open weights are out. The community is already running it on 10GB consumer cards. LoRAs, ComfyUI workflows, and optimization techniques are appearing daily on r/StableDiffusion. The trajectory looks the same.

On the resolution question alone: LTX-2.3 generates native 4K at 50 FPS. Not upscaled. Native. Sora 2, Runway Gen-4.5, and Kling 3.0 all currently cap at 1080p. That gap is not cosmetic; it's a meaningful production advantage that currently exists only in an open-source, free-to-run model. That's a strange sentence to type, but it's accurate.

Portrait mode deserves a specific callout. Native 1080×1920 vertical video: not a crop of a landscape output, but a dedicated generation mode designed for social media aspect ratios. For anyone running short-form content pipelines at scale, this is genuinely useful. TikTok, Reels, Shorts: all handled natively at 1080×1920.

The licensing situation is worth spelling out clearly. The code is Apache 2.0, one of the most permissive open-source licenses available. Lightricks' own terms state it's free for organizations under $10 million ARR. Above that threshold, you should verify the commercial terms directly with Lightricks before assuming Apache 2.0 governs everything. For the vast majority of creators, indie developers, and small-to-mid-size teams, this is effectively zero cost to run locally — just compute.

How It Compares

We spent time going through benchmark data, third-party comparison reviews, and community discussions to get a grounded picture of where LTX-2.3 actually sits in the landscape. The competitive picture is genuinely interesting right now.

Against Sora 2: OpenAI's model is still the realism benchmark. Physics coherence, emotional subtlety, complex crowd scenes, believable human faces: Sora 2 leads. But the pricing is punishing at scale. At roughly $0.40 per second in compute, a prompt that requires five iterations on a 20-second clip at Pro resolution costs about $40 (5 × 20 seconds × $0.40) before you export a single deliverable. LTX-2.3 via fal.ai (for teams who don't want to manage GPU infrastructure) runs at $0.04 per second in fast mode, ten times cheaper per second. Locally, it costs electricity.
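The per-second rates multiply out simply, and it's worth doing the sum for your own clip length and iteration count. Here is a minimal sketch using only the two rates quoted above; the function and constant names are ours. At exactly $0.40 per second, five passes over a 20-second clip come to $40, and a higher-priced tier would push that total up.

```python
def iteration_cost(rate_per_second: float, clip_seconds: float, iterations: int) -> float:
    """Total spend in USD for re-generating one clip `iterations` times."""
    return round(rate_per_second * clip_seconds * iterations, 2)


# Rates as quoted in the article (USD per generated second).
SORA_2_RATE = 0.40        # rough figure; actual tiers may differ
LTX_FAL_FAST_RATE = 0.04  # fal.ai fast mode

CLIP_SECONDS = 20
ITERATIONS = 5

sora_total = iteration_cost(SORA_2_RATE, CLIP_SECONDS, ITERATIONS)
ltx_total = iteration_cost(LTX_FAL_FAST_RATE, CLIP_SECONDS, ITERATIONS)

print(f"Sora 2:            ${sora_total:.2f}")
print(f"LTX-2.3 (fal.ai):  ${ltx_total:.2f}")
print(f"Ratio:             {sora_total / ltx_total:.0f}x")
```

The ratio stays at ten regardless of clip length or iteration count, since both totals scale the same way. What changes is the absolute gap, which is what matters for a production budget.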

Against Runway Gen-4.5: Runway is the agency standard for good reason. Character consistency across shots is its superpower, and it's reliable in ways that matter for production workflows. But it caps at 1080p and requires a subscription that scales with usage. LTX-2.3 exceeds Runway on resolution and undercuts it significantly on cost. The trade-off is that Runway's ecosystem — timeline tools, asset management, collaborative workflows — is more polished for team production use.

Against Kling 3.0: Kling is fast and well-suited to social content. Multi-shot sequences with subject consistency across 3–15 seconds are a genuine advantage. But it's a closed model with no local inference option, and audio quality in community reviews has been repeatedly called out as "muffled." LTX-2.3 runs circles around it on resolution and audio fidelity.

One benchmark that surfaced consistently in developer discussions: LTX-2.3 runs 18x faster than WAN 2.2, the other significant open-source video model. That's not incremental improvement — that's a different speed category entirely. For batch workflows and production pipelines, that multiplier compounds quickly.

NVIDIA's endorsement is the most telling external signal. They integrated LTX-2.3 into their official video generation workflow, citing it as a reference implementation running locally on RTX GPUs via Blender and ComfyUI. When NVIDIA's own documentation starts pointing to your open-source model, the market has made a judgment.

The Awesome Agents review scored it 8.2 out of 10 and called it "the strongest open-source video+audio model available, with real 4K generation." From what we've gathered across multiple technical reviews and community threads, that rating holds up. The praise is consistent across sources: rebuilt VAE, improved prompt adherence, native portrait mode, audio-video sync. The criticisms are also consistent: complex fluid physics (water, rain), crowd scenes, and emotionally tonal sequences still lag behind Sora 2 at its best. That's an honest assessment.

Our Take

A few developers we know have been using LTX-2.3 since launch, and the feedback pattern is interesting. The consensus isn't "this is flawless." It's "this changed what I reach for first." One developer mentioned they hadn't opened a proprietary video tool in two weeks. Another flagged that 1080p generation at high complexity still introduces artifacts. Both observations are valid simultaneously — which is kind of the point. You don't need a perfect model to change behavior. You need a good-enough model with no subscription fee and local control.

From everything we've gathered — the official release documentation, community Reddit threads with 700+ upvotes and detailed technical replies, third-party benchmarks, and developer forum breakdowns — here's our honest read on LTX-2.3:

It's not a Sora-killer. Complex physics and the kind of emotionally nuanced cinematic output that Sora 2 handles well are still not LTX-2.3's strongest territory. The hardware requirements are real: 48GB for full 4K, 32GB official minimum, with quality trade-offs on consumer-tier cards. Image-to-video has known stability bugs in the current release. These are real limitations, not marketing footnotes.

But the trajectory is what matters. LTX-2 had four million downloads in six weeks. LTX-2.3 tripled the parameter count, shipped a rebuilt VAE, a 4x larger text connector, native portrait mode, and a desktop editor — all in a follow-up release less than three months later. The community LoRA ecosystem is spinning up fast. Quantized variants already run on 10GB cards. AMD and Apple Silicon ports are in progress. This is early LLaMA energy, and the open-source compounding effect is just getting started.

If you're a solo creator or small team currently paying $50–100 per month for AI video generation, LTX-2.3 is worth a serious look right now. Not because it replaces every workflow — it doesn't, not yet — but because for ambient video content, social media clips, product demos, and experimental projects, it covers a wide range of use cases for the cost of running a GPU.

And for product teams: local inference isn't just about cost. It's about latency, privacy, and not having your users' data piped through a third-party API you don't control. That's a meaningful architectural argument completely independent of output quality.

What's Next

The model is live on Hugging Face at Lightricks/LTX-2.3. The GitHub repository at Lightricks/LTX-Video has inference code, training utilities, LoRA fine-tuning support, and ComfyUI workflow templates. For teams that want serverless cloud inference without managing GPU infrastructure, fal.ai has seven endpoints covering text-to-video, image-to-video, audio-to-video, extend-video, and retake-video — starting at $0.04 per second in fast mode, up to $0.24 per second for native 4K.
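For anyone pulling the weights locally, the usual route for a Hugging Face model repo is `snapshot_download` from the `huggingface_hub` package. The sketch below assumes the repo ID quoted above. The glob patterns for selecting a single variant are hypothetical, since we haven't confirmed the actual filenames in the repo, so check the file listing on the model page before relying on them.

```python
from typing import Optional

REPO_ID = "Lightricks/LTX-2.3"  # repo named in the article

# HYPOTHETICAL filename patterns: the real files in the repo may be named
# differently. Inspect the repo's file list and adjust before use.
VARIANT_PATTERNS = {
    "dev": ["*dev*"],
    "distilled": ["*distilled*"],
    "fp8": ["*fp8*"],
}


def build_download_kwargs(variant: Optional[str] = None,
                          local_dir: str = "./ltx-2.3") -> dict:
    """Assemble keyword arguments for huggingface_hub.snapshot_download."""
    kwargs = {"repo_id": REPO_ID, "local_dir": local_dir}
    if variant is not None:
        if variant not in VARIANT_PATTERNS:
            raise ValueError(f"unknown variant: {variant!r}")
        # Restrict the download to files matching the variant's patterns.
        kwargs["allow_patterns"] = VARIANT_PATTERNS[variant]
    return kwargs


def download(variant: Optional[str] = None, local_dir: str = "./ltx-2.3") -> str:
    """Download the repo (or one variant) and return the local path."""
    # Imported lazily so the helper above works without the package installed.
    from huggingface_hub import snapshot_download  # pip install huggingface_hub
    return snapshot_download(**build_download_kwargs(variant, local_dir))


if __name__ == "__main__":
    # Dry run: show what would be requested without touching the network.
    print(build_download_kwargs("fp8"))
```

Filtering with `allow_patterns` matters here because the full repo holds several multi-gigabyte variants, and most setups only need one of them.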

Lightricks has signaled that image-to-video stability improvements and additional control LoRA resources are actively in development. The community is making quick progress on hardware portability — AMD ROCm and Apple Silicon MLX ports are experimental but advancing. For Mac users, fal.ai remains the most reliable option for now.

What does this mean for the paid players? Probably not an immediate revenue crisis. Sora, Runway, and Kling all have advantages LTX-2.3 doesn't have yet. But the pressure is compounding with each release cycle, and the gap is narrowing faster than most proprietary teams expected. When the best 4K video model in the world is open-source and free-to-run, the burden of justification for subscription alternatives gets heavier by the month.

We're working on a follow-up piece that goes deeper with actual generated outputs, visual comparisons, and hands-on results from the model itself — so watch this space for that very soon.

Frequently Asked Questions

Is LTX-2.3 better than Sora 2?

LTX-2.3 beats Sora 2 on resolution (native 4K at 50 FPS versus Sora 2's 1080p ceiling) and costs roughly 10x less: $0.04 per second via fal.ai in fast mode versus Sora 2's roughly $0.40 per second, meaning five iterations on a 20-second clip at Pro can run about $40 on Sora before a single export. LTX-2.3 also includes synchronized audio generated in a single diffusion pass. Sora 2 retains the lead on physics coherence, emotional subtlety in human faces, and complex crowd scenes. For resolution-critical or high-volume workflows, LTX-2.3 offers a decisive cost and quality-per-dollar advantage.

Is LTX-2.3 better than Runway Gen-4.5?

LTX-2.3 surpasses Runway Gen-4.5 on both resolution (native 4K vs Runway's 1080p cap) and cost (free locally or $0.04 per second via fal.ai vs Runway starting at $12 per month and scaling aggressively at production volume). LTX-2.3 also ships with synchronized audio natively, while Runway requires separate audio workflows. Runway's advantage is its polished collaborative ecosystem: timeline tools, asset management, and team production pipelines. For developers and solo creators, LTX-2.3 is the stronger choice; for agency teams, Runway's workflow tooling still leads.

Is LTX-2.3 better than Kling 3.0?

LTX-2.3 outperforms Kling 3.0 on resolution (native 4K vs 1080p), on audio quality (community reviews repeatedly flag Kling's audio as "muffled," whereas LTX-2.3 cross-attends audio and video at every diffusion layer), and on total cost, since Kling's credit system drains faster than expected with no local inference option. Kling 3.0 holds an edge in multi-shot subject consistency across 3–15 second social clips. For portrait-mode short-form content, LTX-2.3's native 1080×1920 mode makes it the stronger pick.

Who should use LTX-2.3?

LTX-2.3 is the best fit for: (1) indie developers and small product teams building video features without recurring API costs or third-party dependency risk; (2) short-form content creators who need native portrait mode at 1080×1920 for TikTok, Reels, and Shorts; (3) researchers and fine-tuners who need open weights under Apache 2.0; (4) any organization under $10M ARR seeking 4K video generation at zero software cost beyond GPU compute. Teams above $10M ARR should verify commercial terms with Lightricks before relying on Apache 2.0 coverage.

What are LTX-2.3's limitations?

LTX-2.3's main constraints are hardware-driven: the full 16-bit weights require a 48GB+ GPU for native 4K, and the official minimum is 32GB. The quantized Q4_K_S GGUF variant runs on a 10GB RTX 3080 and produces a 960×544, 5-second clip with audio in roughly 2–3 minutes, but with a quality trade-off. On content quality, LTX-2.3 trails Sora 2 on physics realism and nuanced human face rendering, and trails Runway Gen-4.5 on team collaborative tooling. Image-to-video also has known stability bugs in the current release. Organizations above $10M ARR must confirm commercial licensing directly with Lightricks, as the Apache 2.0 license covers the code but not necessarily all commercial model use at scale.

Does LTX-2.3 integrate with ComfyUI?

Yes. The GitHub repository at Lightricks/LTX-Video includes ComfyUI workflow templates, and community workflows are actively appearing on r/StableDiffusion alongside LoRA adapters and GPU optimization techniques. Lightricks also ships a native desktop editor enabling full local inference on consumer GPUs. For teams that prefer managed infrastructure over local GPU setup, LTX-2.3 is available via fal.ai at $0.04 per second in fast mode, with no GPU required. The model weights are hosted at Lightricks/LTX-2.3 on Hugging Face in BF16 dev, 8-step distilled, fp8 quantized, and LoRA adapter variants.

How fast is LTX-2.3 compared to WAN 2.2?

LTX-2.3 runs 18x faster than WAN 2.2, the other major open-source video generation model. In absolute terms, a developer running the Q4_K_S GGUF quantized variant on an RTX 3080 with 10GB VRAM generates a 960×544, 5-second clip with synchronized audio in approximately 2–3 minutes. For batch content pipelines processing dozens of clips, this 18x speed multiplier compounds dramatically versus any WAN 2.2-based workflow.

Is LTX-2.3 free for commercial use?

LTX-2.3's code is licensed under Apache 2.0, one of the most permissive open-source licenses available. Lightricks explicitly states the model is free for organizations under $10 million ARR. Above that threshold, commercial terms should be verified directly with Lightricks before assuming Apache 2.0 governs all model use. For the vast majority of creators, indie developers, and small-to-mid-size teams, running LTX-2.3 locally costs nothing beyond electricity and GPU compute — there is no subscription, no per-clip fee, and no API dependency.