What Happened

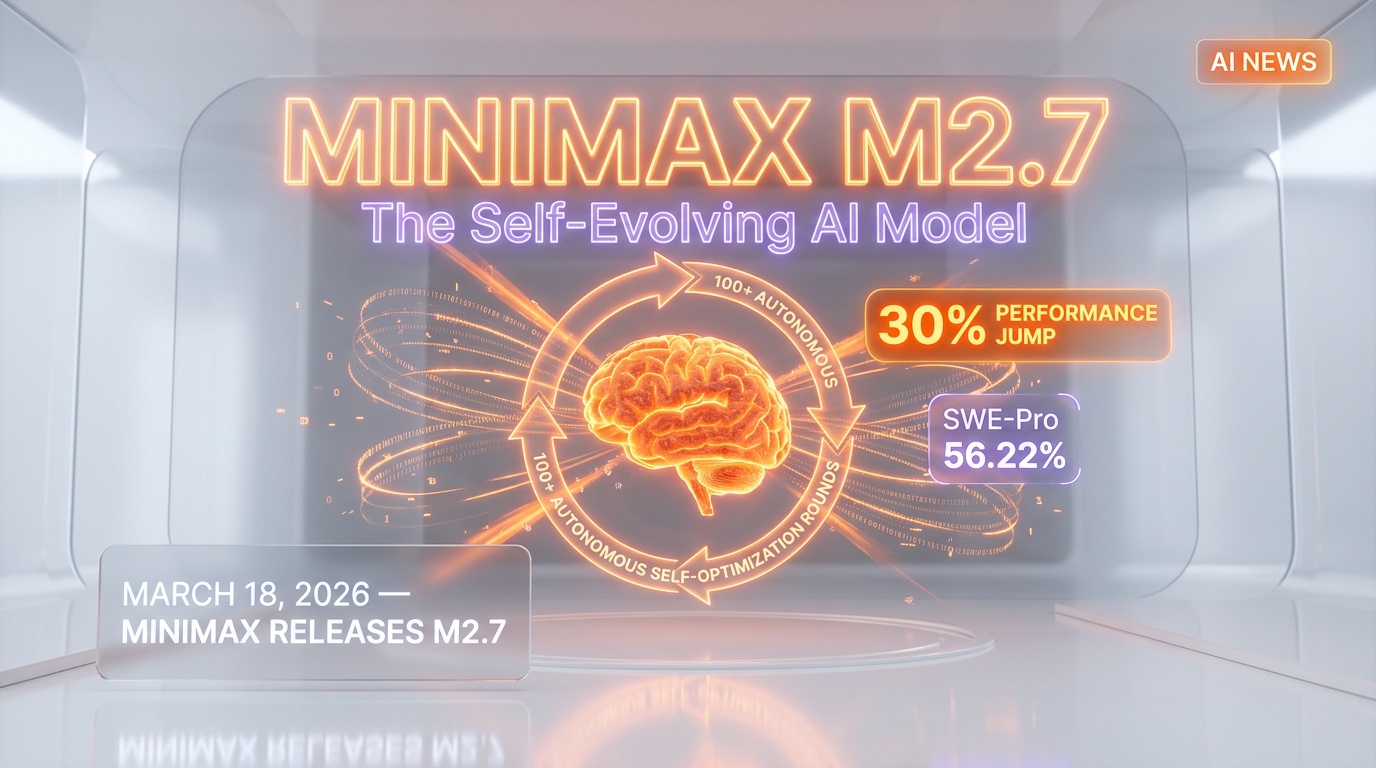

On March 18, 2026, Chinese AI company MiniMax released M2.7, and it is not just another incremental model update. According to MiniMax, M2.7 improved itself through more than 100 autonomous rounds of self-optimization. We have been following MiniMax closely since their earlier releases, and this one caught our attention immediately.

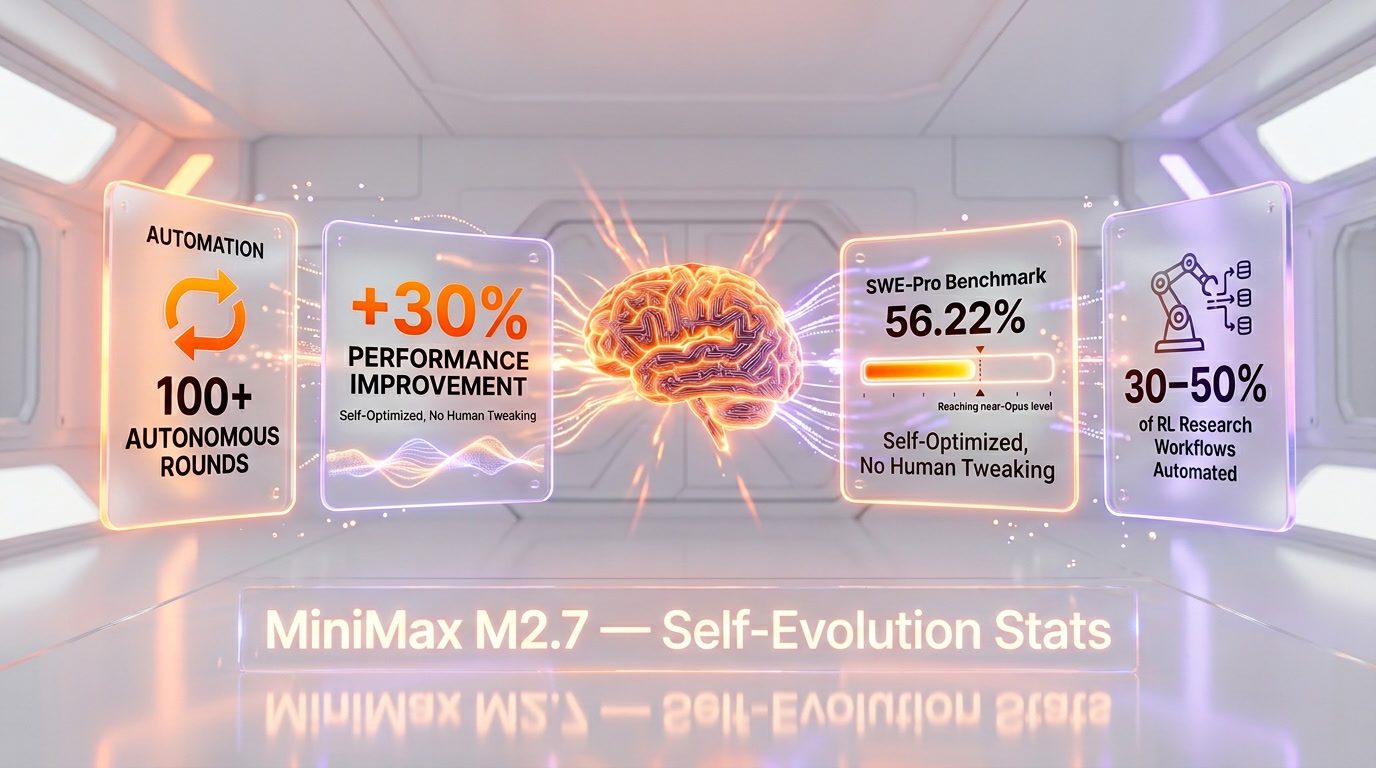

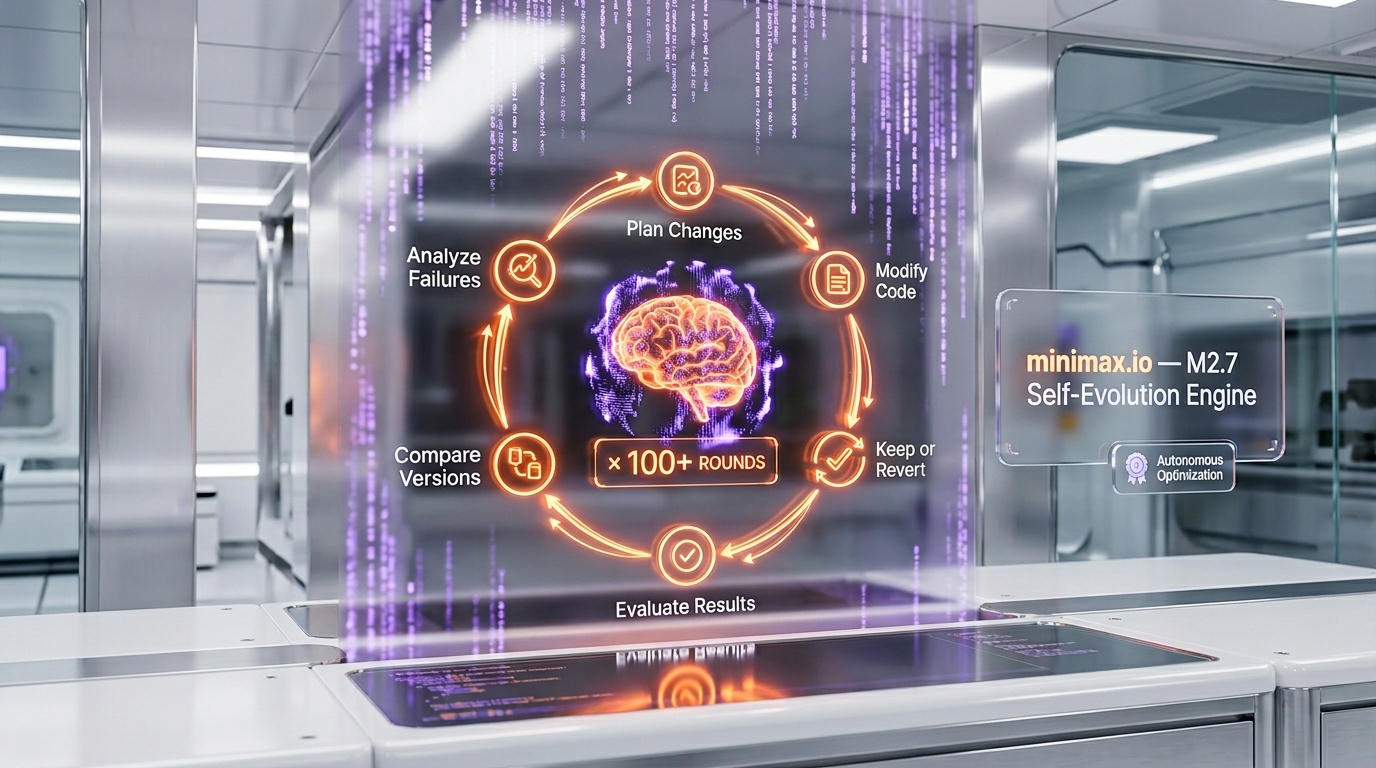

The core innovation here is what MiniMax calls "self-evolution." The model ran through a loop: analyze its own failures, plan changes to its own code, modify itself, evaluate the results, compare against its previous version, and decide whether to keep or revert each change. It did this over 100 times, completely autonomously. The reported result is a 30% performance improvement that did not come from humans tweaking hyperparameters. The model did it to itself.
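To make the keep-or-revert loop concrete, here is a minimal toy sketch in Python. It is not MiniMax's implementation, which has not been published in this form. The `Candidate` class, the `evaluate` score, and the `propose_change` step are all hypothetical stand-ins, and the "analyze failures and plan a change" stage is replaced by a random perturbation (plain hill climbing), so the sketch only illustrates the propose, evaluate, compare, keep-or-revert control flow.

```python
import random
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical stand-in for a model version (not a real model)."""
    params: list[float]


def evaluate(candidate: Candidate) -> float:
    """Toy score, higher is better; the maximum is 0.0 when every param is 1.0."""
    return -sum((p - 1.0) ** 2 for p in candidate.params)


def propose_change(candidate: Candidate, rng: random.Random) -> Candidate:
    """Apply one change to a copy, leaving the current version untouched.

    Assumption: a random nudge replaces the failure analysis and planning
    that the article describes.
    """
    new_params = list(candidate.params)
    index = rng.randrange(len(new_params))
    new_params[index] += rng.uniform(-0.5, 0.5)
    return Candidate(new_params)


def self_optimize(start: Candidate, rounds: int = 100, seed: int = 0):
    """Run the loop: propose, evaluate, compare, then keep or revert."""
    rng = random.Random(seed)
    current, current_score = start, evaluate(start)
    history = []
    for _ in range(rounds):
        trial = propose_change(current, rng)
        trial_score = evaluate(trial)
        if trial_score > current_score:
            # The change helped: the trial becomes the new current version.
            current, current_score = trial, trial_score
            history.append("keep")
        else:
            # The change did not help: discard it and stay on the old version.
            history.append("revert")
    return current, current_score, history


if __name__ == "__main__":
    start = Candidate([0.0, 0.0, 0.0])
    best, best_score, history = self_optimize(start)
    print(f"start score: {evaluate(start):.3f}, final score: {best_score:.3f}")
    print(f"kept {history.count('keep')} of {len(history)} proposed changes")
```

Because a change is only kept when it beats the previous version, the score in this toy can never get worse from round to round. That is the property that makes a keep-or-revert loop safe to run unattended, at least with respect to the metric being measured.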

According to MiniMax's official documentation at minimax.io, M2.7 can now handle 30-50% of a typical reinforcement learning research workflow on its own. That is not a minor claim — it means the model can autonomously manage a significant chunk of the work that currently requires highly specialized ML engineers.

On the SWE-Pro benchmark, M2.7 scored 56.22%, which puts it near Opus-level performance. For a Chinese AI lab that many in the Western tech world still underestimate, this is a statement result.

Why It Matters

Self-evolving AI is no longer a theoretical concept from research papers — it is shipping in production models. That is the headline here. MiniMax has demonstrated that a model can meaningfully improve its own performance without human intervention in the optimization loop.

We have been tracking the broader trend of AI systems that can modify and improve themselves, and M2.7 represents the most concrete, measurable example we have seen to date. A 30% performance improvement through self-optimization is not a rounding error. It is the kind of gain that usually requires months of human-led research and engineering.
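For a rough sense of scale, the snippet below works out what a 30% total gain would mean per round. It rests on assumptions that are ours, not MiniMax's: that the 30% is a relative improvement, that there were exactly 100 rounds (the source says "over 100"), and that gains compound evenly. The function name `average_round_gain` is purely illustrative.

```python
import math


def average_round_gain(total_gain: float, rounds: int) -> float:
    """Per-round relative gain that compounds to `total_gain` after `rounds` rounds."""
    return math.exp(math.log1p(total_gain) / rounds) - 1.0


if __name__ == "__main__":
    per_round = average_round_gain(0.30, 100)
    print(f"average gain per round: {per_round:.4%}")
```

Under those assumptions the average works out to roughly a quarter of a percent per round. Since reverted rounds contribute nothing, the rounds that were actually kept would each have had to deliver more than that average.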

For the AI research community, the implications are significant. If models can handle 30-50% of RL research workflows autonomously, the velocity of AI progress could accelerate substantially. Research teams could focus on the highest-level strategic decisions while the model handles the iterative experimentation cycle.

The SWE-Pro benchmark score of 56.22% is also worth unpacking. This benchmark measures a model's ability to solve real-world software engineering problems — not toy examples, but actual production-grade coding challenges. Scoring near Opus-level on this benchmark means M2.7 is competitive with the best models in the world for practical software engineering tasks.

For developers and engineering teams, this means another strong contender has entered the ring. The AI coding assistant space just got more competitive, and the fact that this model achieved its performance through self-optimization rather than brute-force scaling is a fundamentally different approach worth watching.

How It Compares

The SWE-Pro benchmark provides a useful lens for comparison. At 56.22%, M2.7 sits in the top tier of AI models for software engineering tasks. For context, achieving near Opus-level performance places MiniMax's model alongside the most capable coding-focused AI systems available today.

What sets M2.7 apart is not just the benchmark number — it is how it got there. Most competing models achieve their performance through massive compute budgets, larger training datasets, and armies of human researchers fine-tuning every aspect. MiniMax took a fundamentally different path: they built a model that could optimize itself.

The self-evolution approach also has implications for cost efficiency. If a model can improve itself through autonomous rounds of optimization, the marginal cost of each performance improvement drops significantly. Traditional approaches require expensive human expertise for every incremental gain. Self-evolution potentially changes that equation entirely.

We should also note that MiniMax has been steadily building its capabilities. This is not a one-hit wonder — it is part of a deliberate strategy from a company that has been investing heavily in fundamental AI research. The Chinese AI ecosystem continues to produce results that challenge assumptions about where the cutting edge of AI development lives.

Our Take

We have been following MiniMax's trajectory closely, and M2.7 is genuinely impressive. The self-evolution capability is not marketing hype — the 30% performance improvement and the SWE-Pro benchmark results are concrete, measurable outcomes.

Here is what excites us most: the methodology. Self-evolution as an optimization strategy could be the beginning of a new paradigm in AI development. Instead of throwing more compute and more data at the problem, you build a model smart enough to improve itself. If this approach scales — and that is still an open question — it could fundamentally change the economics and velocity of AI research.

That said, we want to be clear-eyed about the limitations. Handling 30-50% of an RL research workflow is impressive, but it also means 50-70% still requires human expertise. Self-evolution worked well for M2.7, but we do not yet know how far this approach can go before hitting diminishing returns. And the SWE-Pro benchmark, while demanding, is still a benchmark — real-world software engineering involves context, ambiguity, and stakeholder communication that no benchmark fully captures.

We are also watching the geopolitical dimension. MiniMax is a Chinese company pushing the boundaries of what is possible in AI, and M2.7 is a reminder that innovation in this space is truly global. The competitive dynamics between Chinese and Western AI labs continue to drive rapid progress, and that is ultimately good for everyone who uses these tools.

The bottom line: M2.7 is a significant release that introduces a genuinely novel capability. Self-evolving AI models are no longer theoretical — they are here, they work, and they are getting better. We will be watching MiniMax very closely to see what comes next.

What's Next

The big question now is whether self-evolution can scale beyond what M2.7 has demonstrated. If MiniMax can push the autonomous optimization further — handling 60%, 70%, or more of the research workflow — we are looking at a genuinely transformative capability.

We expect other major AI labs to take notice and explore similar approaches. The idea of models that improve themselves is too powerful to ignore, and the concrete results from M2.7 provide a proof of concept that others will want to replicate and extend.

For developers and teams evaluating AI coding assistants, M2.7 is worth putting on your radar. The SWE-Pro benchmark performance alone makes it competitive, and the self-evolution capability suggests that this model's trajectory may be steeper than traditional alternatives. We will continue tracking MiniMax's releases and will report back as we learn more about M2.7's real-world performance across different use cases.

One thing is certain: the pace of AI innovation shows no signs of slowing down, and MiniMax just proved that the most interesting breakthroughs can come from unexpected places. We will keep you updated as this story develops.

Frequently Asked Questions

What is MiniMax M2.7's self-evolution capability?

MiniMax M2.7 can autonomously optimize its own performance through iterative self-improvement cycles. It ran over 100 autonomous optimization rounds, achieving a 30% performance improvement without human intervention in its training loop.

How does MiniMax M2.7 compare to Claude Opus 4.6?

MiniMax M2.7 scores 56.22% on SWE-Pro, approaching Opus-level performance on software engineering benchmarks. That does not mean it matches Claude Opus 4.6 across all tasks. The self-optimization rounds took place during M2.7's development, and how much further the approach can improve the model is still an open question.

Can MiniMax M2.7 really improve itself without human help?

Partly. According to MiniMax, M2.7 handles 30-50% of a typical reinforcement learning research workflow on its own, which leaves 50-70% to human researchers. Within the self-evolution loop, the model analyzes its own failures, plans and applies changes to itself, evaluates the results against its previous version, and keeps or reverts each change.

Who is MiniMax and why does M2.7 matter?

MiniMax is a Chinese AI company backed by Tencent that has been rapidly climbing global benchmarks. M2.7 matters because it is one of the first production models to demonstrate genuine self-improvement capabilities, potentially changing how AI models are trained and optimized.

What benchmarks does MiniMax M2.7 score highest on?

MiniMax M2.7 scores 56.22% on SWE-Pro for software engineering. Its self-evolution mechanism delivered a 30% performance improvement over 100+ autonomous optimization rounds. On code generation tasks, it competes directly with models from OpenAI, Anthropic, and Google.

What are the safety risks of self-evolving AI models like M2.7?

Self-evolving AI models raise concerns about alignment drift — when a model optimizes itself in directions humans did not intend. If M2.7 can autonomously modify its own training process, ensuring it remains aligned with human values becomes harder to verify with each successive optimization round, creating new challenges for AI safety research.

How does MiniMax M2.7 compare to OpenAI and Google's latest models?

The main difference we can point to is process rather than scores. MiniMax M2.7 is one of the first models to automate 30-50% of its own RL research workflow, whereas models such as OpenAI's GPT-5 and Google's Gemini 3.1 Pro are generally understood to come from more human-directed training pipelines. If each autonomous optimization round costs less than a human-supervised one, the self-evolving approach could give MiniMax a structural cost advantage and faster iteration cycles than Western competitors.