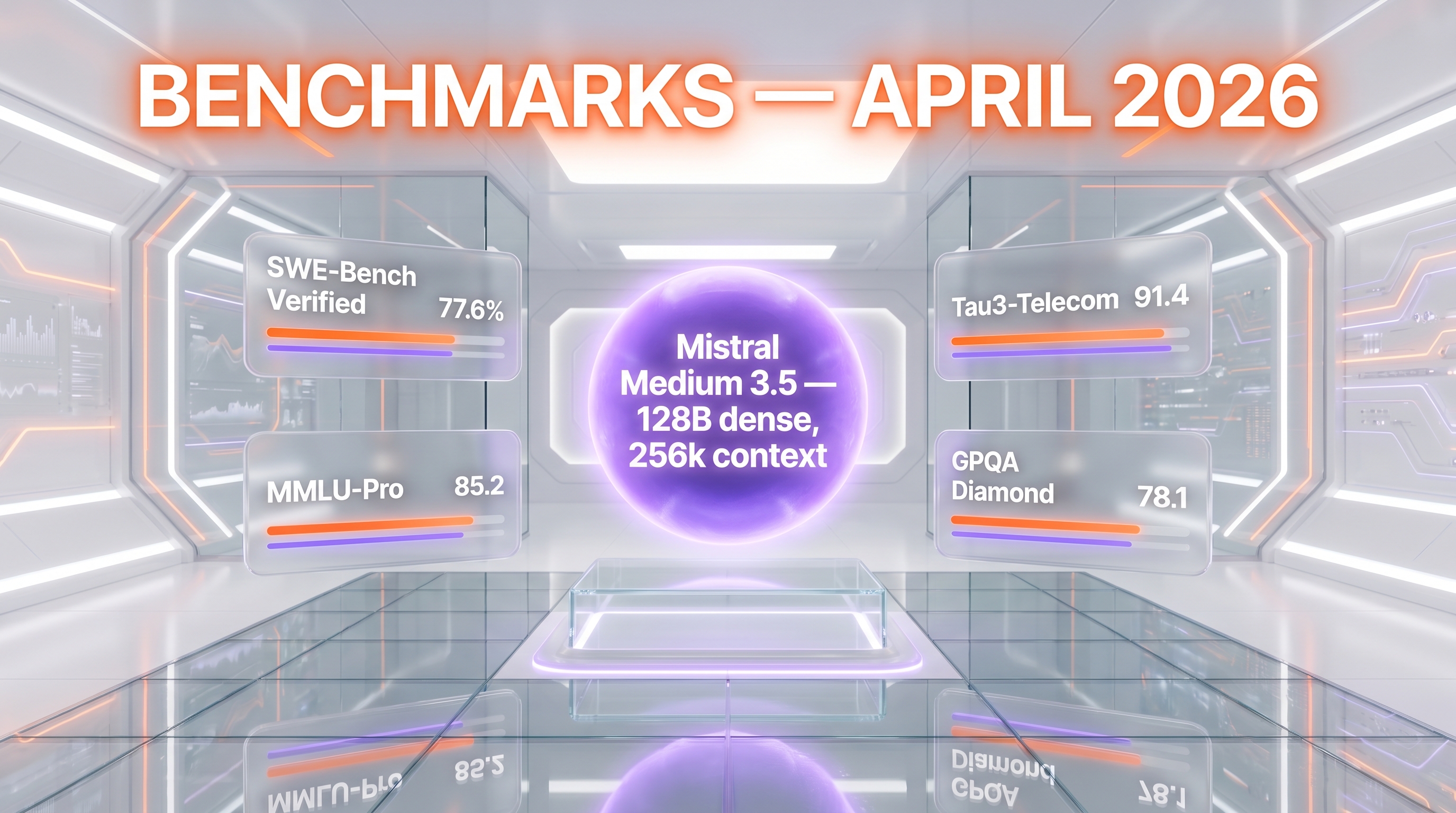

Mistral AI released Mistral Medium 3.5 on April 29, 2026, a 128-billion-parameter dense multimodal model with a 256k context window, open-weighted on Hugging Face under a modified MIT license. It scores 77.6% on SWE-Bench Verified and 91.4 on the Tau3-Telecom agentic benchmark, costs $1.5 per million input tokens and $7.5 per million output tokens via API, and self-hosts on as few as four GPUs. Mistral simultaneously moved its Vibe coding agents from local to remote cloud sandboxes, with parallel async sessions that open pull requests on GitHub.

TL;DR — what shipped on April 29, 2026

- Mistral Medium 3.5: dense 128B parameters, 256k context, multimodal (text + vision), single set of weights covering instruction-following, reasoning, and coding.

- Open weights on Hugging Face under a modified MIT license that permits commercial and non-commercial use with carve-outs for very large companies.

- Self-hostable on as few as four GPUs per Mistral's own announcement, with vLLM tensor-parallel-size 8 as the recommended serving config in the model card.

- API pricing: $1.5 per million input tokens and $7.5 per million output tokens.

- SWE-Bench Verified: 77.6%. Tau3-Telecom: 91.4. Configurable reasoning effort per request.

- Vibe Cloud: coding agents now run in remote isolated sandboxes, in parallel, while you are away. Sessions can open pull requests on GitHub and ping you when done.

- Le Chat Work mode: a new agentic mode for cross-tool workflows, research synthesis, and inbox triage — connectors on by default — also powered by Medium 3.5.

- Replaces three older models in Mistral's stack: Mistral Medium 3.1 (general), Magistral (reasoning in Le Chat), and Devstral 2 (Vibe coding agent).

What happened: Mistral collapses three models into one

On April 29, 2026, Mistral AI announced Mistral Medium 3.5 alongside a broader product update: remote cloud agents inside Vibe and a new Work mode in Le Chat. The post on the Mistral newsroom, archived as "Remote agents in Vibe. Powered by Mistral Medium 3.5.", frames the release as the company's first flagship merged model — a single 128B dense network that subsumes three previously separate models in Mistral's lineup.

Per the official announcement, Mistral Medium 3.5 replaces:

- Mistral Medium 3.1 — the company's previous mid-tier general-purpose model.

- Magistral — Mistral's reasoning-specialized model used inside Le Chat for harder analytical tasks.

- Devstral 2 — the coding-specialized model that previously powered the Vibe coding agent.

The merge is not a stylistic choice. Across the AI lab landscape, the trend through 2025 was specialization: separate reasoning, coding, vision, and chat checkpoints, each fine-tuned for its niche. With Medium 3.5, Mistral is stating publicly that it can now deliver instruction-following, reasoning, and coding inside one set of weights — with reasoning effort configurable per request via a reasoning_effort parameter, per the Hugging Face model card.

Why it matters: Europe's frontier strike, on open weights

The strategic context here is hard to miss. OpenAI's frontier models are closed. Anthropic's Claude is closed. Google's Gemini is closed. Meta paused its Llama frontier track. xAI ships Grok closed. The Chinese labs — DeepSeek, Qwen, GLM — are openly shipping competitive open-weight models, often with permissive licenses.

Europe's answer, until recently, was Mistral with capable but mid-tier open releases and closed top-tier models. Medium 3.5 changes that calculus. A 128B dense model, scoring 77.6% on SWE-Bench Verified, with a 256k context and a modified MIT license — that is a frontier-class artefact released as open weights from a European lab. It lands in the same week as the news cycle around US export controls, the EU AI Act enforcement timeline, and the ongoing debate over sovereign AI infrastructure.

The framing in the Mistral newsroom and in coverage from GIGAZINE emphasizes the "surpasses Claude Sonnet 4.5" angle on coding benchmarks. Whether or not that exact comparison holds across every workload, the more important point is the package: frontier-class numbers, open weights, self-hostable on four GPUs, modified MIT license. That is a configuration no US lab is currently offering at this parameter scale.

Technical details: 128B dense, 256k context, multimodal

The Hugging Face model card and Mistral's announcement give a specific picture of what Medium 3.5 is under the hood.

| Spec | Mistral Medium 3.5 |

|---|---|

| Architecture | Dense decoder transformer (not MoE) |

| Parameters | 128 billion |

| Context window | 256,000 tokens |

| Modalities | Text + image input, text output |

| Vision encoder | Trained from scratch, variable image sizes and aspect ratios |

| Languages | 24+ including English, French, Spanish, German, Italian, Portuguese, Dutch, Chinese, Japanese, Korean, Arabic |

| Reasoning | Configurable per request via reasoning_effort |

| Tool use | Native function calling, agentic tool calls |

| Tensor types | BF16, F8_E4M3 |

| License | Modified MIT (open weights, with carve-out for very large revenue companies) |

| Self-host | As few as 4 GPUs per Mistral; vLLM tensor-parallel-size 8 recommended |

| API price (input) | $1.5 per million tokens |

| API price (output) | $7.5 per million tokens |

| Release date | April 29, 2026 |

The "merged model" framing is significant. Most modern labs ship multiple checkpoints per generation — a base, a reasoning variant, a coding variant. Mistral is asserting that a single 128B dense network can serve all three workloads competitively, with the trade-off front-loaded into a per-request reasoning effort dial rather than into separate model selection.

An EAGLE variant — Mistral Medium 3.5 128B EAGLE — was also released, supporting speculative inference for faster generation throughput.

Benchmarks: 77.6% on SWE-Bench Verified, 91.4 on Tau3-Telecom

Mistral's announcement headlines two benchmark numbers.

- SWE-Bench Verified — 77.6%. SWE-Bench Verified is the human-curated subset of SWE-Bench used to measure how well a model resolves real GitHub issues end-to-end. 77.6% places Medium 3.5 in the top tier of agentic coding scores published in 2026.

- Tau3-Telecom — 91.4. Tau-bench measures multi-turn agentic tool use; the Telecom split is the customer-service-style scenario. 91.4 is, per Mistral's own positioning, best-in-class at the time of release.

We have not yet run our own benchmarks against this model. The numbers above are reported by Mistral and consistent with what Hugging Face's model card describes as "frontier-class multimodal model optimized for agentic and coding use cases." Independent third-party scores will firm up over the next two to four weeks as community evaluations land.

Self-hosting: four GPUs in the announcement, eight in the recipe

One of the most-quoted lines from the Mistral launch is the claim that Medium 3.5 self-hosts on "as few as four GPUs." That is the pitch for sovereign deployment — a 128B frontier model that fits on a small cluster a serious enterprise can already buy.

The Hugging Face model card itself, however, recommends a more conservative serving recipe for production:

vllm serve mistralai/Mistral-Medium-3.5-128B \

--tensor-parallel-size 8 \

--tool-call-parser mistral \

--enable-auto-tool-choice \

--reasoning-parser mistral \

--max_num_batched_tokens 16384 \

--max_num_seqs 128 \

--gpu_memory_utilization 0.8That is tensor-parallel-size 8 — eight GPUs, not four — for the recommended vLLM serving path with native tool-call parsing, auto tool choice, and reasoning parser enabled. The "four GPUs" minimum likely refers to a quantized or lower-throughput configuration; the eight-GPU recipe is what production deployments will actually run.

Either way, this is not a model you spin up on a single A100 dev box. It is an enterprise self-host artefact aimed at organizations that already have GPU capacity and want frontier-class capability without the API dependency.

Pricing: $1.5 per million input, $7.5 per million output

The API pricing — $1.5 per million input tokens and $7.5 per million output tokens — places Medium 3.5 in the same neighborhood as Claude Sonnet and GPT-4o-class API pricing, but well above what some newer Chinese open models charge for hosted inference.

| Model | Input per million | Output per million | Open weights |

|---|---|---|---|

| Mistral Medium 3.5 | $1.5 | $7.5 | Yes (modified MIT) |

| Claude Sonnet 4.5 (Anthropic API) | $3.0 | $15.0 | No |

| GPT-4o-class (OpenAI API) | ~$2.5 | ~$10 | No |

| DeepSeek V3 (hosted) | ~$0.27 | ~$1.10 | Yes |

Some commentary at launch flagged the pricing as high relative to the Chinese open-weight competition — and it is. The bet Mistral is making is that for European enterprise buyers, regulated industries, and any team that wants the model on-prem under a permissive license, the pricing-plus-self-host package is more attractive than a cheaper-but-closed or cheaper-but-China-hosted alternative.

Vibe Cloud Agents: async coding sessions in the cloud

The second half of the announcement is about Vibe — Mistral's coding agent product, previously Devstral 2-powered and run mostly locally via CLI. With this update, Vibe coding agents now run in remote cloud sandboxes, in parallel, while the developer is away.

Per the official announcement, the new Vibe behavior is:

- Async coding sessions — "coding sessions can work through long tasks while you're away."

- Parallel execution — multiple agent sessions can run at the same time.

- Isolated sandbox — each session runs in an isolated cloud environment, not on the developer's machine.

- GitHub integration — agents can open a pull request and notify the developer when work is done.

- Le Chat hand-off — sessions can be initiated directly from a Le Chat conversation, not just from the Vibe CLI.

This is the same architectural pattern as Anthropic's Claude Code remote agents, OpenAI's Codex cloud agents, Cursor 3's Cloud Handoff, and Google Antigravity's agent surface. The frontier IDE/agent products in 2026 are converging on the same shape: long-running, cloud-isolated, parallel, GitHub-native. Mistral's Vibe Cloud is the European entry into that race.

Le Chat Work mode: connectors on by default

Alongside Vibe Cloud, Mistral introduced a Work mode in Le Chat — its consumer/prosumer chat product. Work mode is described as a new agentic mode "powered by Mistral Medium 3.5" with three explicit capabilities:

- Cross-tool workflows — agentic execution across multiple connected services in a single session.

- Research and synthesis — long-running information gathering and analysis tasks.

- Inbox triage — email/communication processing as a first-class agentic workflow.

The notable design choice is that connectors are on by default in Work mode — a deliberate departure from opt-in tool use, and a clear bet that for prosumer agentic work, the friction of toggling individual connectors is worse than the implicit-trust risk of having them all live.

Market impact: who Medium 3.5 actually competes with

Medium 3.5 sits at an unusual intersection in the 2026 model landscape. It is:

- Larger and more capable than the open-weight Chinese mid-tier (DeepSeek V3, Qwen3, GLM-4.5).

- Smaller in claimed parameter count than some open frontier models (Llama 3.1 405B, Mixtral 8x22B's effective scale) but stronger on coding and agentic benchmarks.

- Comparable on coding benchmarks to closed frontier models like Claude Sonnet 4.5 and GPT-4o, but with open weights and a permissive license.

- Self-hostable on enterprise-realistic GPU counts, unlike 405B-class models that need substantially more compute.

The clearest competitor at the product level is Anthropic's Claude: Claude Sonnet 4.5 / Opus 4.7 on the API for closed frontier coding, Claude Code for the agent layer. Medium 3.5 plus Vibe Cloud is the open-weight European answer to that stack. For enterprises in regulated industries or under EU sovereignty pressure, the Mistral package is now a credible alternative — not just a backup option.

The clearest competitor on the open-weight side is DeepSeek V3 / V4. DeepSeek's strength is aggressive pricing and strong coding performance; the weakness, for European and US buyers, is China-based hosting and the geopolitical overhang. Medium 3.5 is more expensive on the API than DeepSeek but ships from a lab inside the EU regulatory perimeter — a non-trivial differentiator for some buyers.

What to watch next

Three threads will determine whether Medium 3.5 is a milestone or a footnote.

Independent benchmarks. Mistral's own SWE-Bench Verified (77.6%) and Tau3-Telecom (91.4) numbers are strong. The next two to four weeks will produce community evaluations on LiveBench, Aider's polyglot benchmark, the Hugging Face Open LLM leaderboard, and the agentic benchmarks tracked by independent labs. If the third-party numbers hold within a few points of Mistral's, the model lands as a real frontier release. If they crater, the launch credibility takes a hit.

Vibe Cloud throughput and latency. Remote agentic coding products live or die on session reliability and pull request quality. The Cursor 3 Cloud Handoff, Claude Code remote agents, and Antigravity Manager Surface are already well-instrumented in the developer tooling press. Vibe Cloud is brand new. Whether it can match the user experience of those products in the first 60 days will set the trajectory.

Enterprise self-host adoption. Mistral's strategic bet — open-weight frontier with EU sovereignty positioning — only pays off if real European enterprises actually self-host. The signal to watch is named deployments: BNP Paribas, Airbus, Siemens, Bosch, Renault, the public sector. If Medium 3.5 ends up running inside those organizations under modified MIT, the "Europe sovereign AI counter-punch" framing is real. If it stays on the API while everyone keeps using closed US models in production, the framing is marketing.

Our take

Mistral Medium 3.5 is the most strategically interesting open-weight release of Q2 2026, and not primarily because of the benchmarks. The benchmarks are competitive but not jaw-dropping. What is jaw-dropping is the package: 128B dense, 256k context, multimodal, modified MIT, four-to-eight GPU self-host, frontier coding numbers, and a remote agent product on top — shipped as one launch from a European lab, in the same news cycle as US export-control negotiations and EU AI Act enforcement.

For US buyers, this is a "watch closely, pilot at scale, do not blink" release. For European buyers in regulated sectors, this is the first open-weight frontier model that plausibly displaces a closed-API US dependency without giving up capability. For Chinese open-weight competitors, this raises the bar: the Mistral release re-establishes the EU as a player at the frontier on permissive licensing terms.

The pricing on the API is the soft spot. $1.5 input, $7.5 output is fair against Claude and GPT-4o, but expensive against DeepSeek. Whether that is a deliberate self-host channel push or a real competitive vulnerability will become clear in the first earnings cycle that includes this product.

Frequently asked questions

What is Mistral Medium 3.5 and when was it released?

Mistral Medium 3.5 is a 128-billion-parameter dense multimodal language model released by Mistral AI on April 29, 2026. It has a 256,000-token context window, supports text and image input with text output, and is open-weighted on Hugging Face under a modified MIT license. It is positioned as Mistral's first flagship merged model, replacing Mistral Medium 3.1, Magistral, and Devstral 2.

What is the license for Mistral Medium 3.5?

Mistral Medium 3.5 is released under a modified MIT license. The license permits commercial and non-commercial use, with explicit carve-out exceptions for companies above a certain large-revenue threshold. The weights are hosted on Hugging Face at mistralai/Mistral-Medium-3.5-128B and the EAGLE speculative-inference variant ships under the same terms.

How does Mistral Medium 3.5 perform on coding benchmarks?

Mistral reports a 77.6% score on SWE-Bench Verified, the human-curated subset of SWE-Bench used to measure end-to-end GitHub issue resolution by coding agents. It also reports a 91.4 score on the Tau3-Telecom multi-turn agentic benchmark. Independent third-party benchmark numbers from community evaluations will publish in the weeks following the April 29 release.

How much does Mistral Medium 3.5 cost via API?

The API pricing is $1.5 per million input tokens and $7.5 per million output tokens. This is competitive with Claude Sonnet 4.5 and GPT-4o-class API pricing but more expensive than open-weight Chinese alternatives like DeepSeek V3. For self-hosted deployments using the open weights from Hugging Face, infrastructure cost is the only marginal cost.

How many GPUs are needed to self-host Mistral Medium 3.5?

Mistral's official announcement states the model can self-host on as few as four GPUs. The Hugging Face model card recommends a more conservative production serving config using vLLM with tensor-parallel-size 8 — i.e., eight GPUs — together with the Mistral tool-call parser, auto tool choice, and the Mistral reasoning parser enabled. The four-GPU minimum likely refers to a quantized lower-throughput configuration.

What is Vibe Cloud and how does it work?

Vibe is Mistral's coding agent product. With the April 29, 2026 update, Vibe coding sessions now run in remote isolated cloud sandboxes — not just on the developer's local machine. Sessions can run in parallel, work through long tasks asynchronously while the developer is away, open pull requests on GitHub, and be initiated directly from a conversation in Le Chat. The remote agents are powered by Mistral Medium 3.5.

What is the Le Chat Work mode introduced with Medium 3.5?

Work mode is a new agentic mode in Le Chat, also powered by Mistral Medium 3.5. It targets cross-tool workflows, research and synthesis tasks, and inbox triage. A defining design choice is that connectors are on by default, removing the friction of opt-in tool selection for prosumer agentic work in Le Chat.

How does Mistral Medium 3.5 compare to Claude Sonnet 4.5?

On Mistral's stated SWE-Bench Verified score of 77.6%, Medium 3.5 is competitive with or above Claude Sonnet 4.5 on coding tasks per Mistral's positioning. Independent benchmarks have not yet confirmed the comparison across all workloads. The bigger differentiation is structural: Medium 3.5 is open-weighted under a modified MIT license and self-hostable, while Claude Sonnet is closed and API-only. Pricing is also lower per million tokens for Medium 3.5 ($1.5 input, $7.5 output) versus Claude Sonnet 4.5 ($3 input, $15 output).

What languages does Mistral Medium 3.5 support?

Per the Hugging Face model card, Mistral Medium 3.5 supports 24 or more languages, including English, French, Spanish, German, Italian, Portuguese, Dutch, Chinese, Japanese, Korean, and Arabic. The vision encoder was trained from scratch to handle variable image sizes and aspect ratios for multimodal input.

Is Mistral Medium 3.5 a mixture-of-experts model?

No. Mistral Medium 3.5 is a dense decoder transformer, not a mixture-of-experts (MoE) architecture. All 128 billion parameters are active for every forward pass. This is a deliberate choice — dense models are easier to serve consistently and integrate into existing inference stacks than MoE models, at the cost of higher per-token compute.