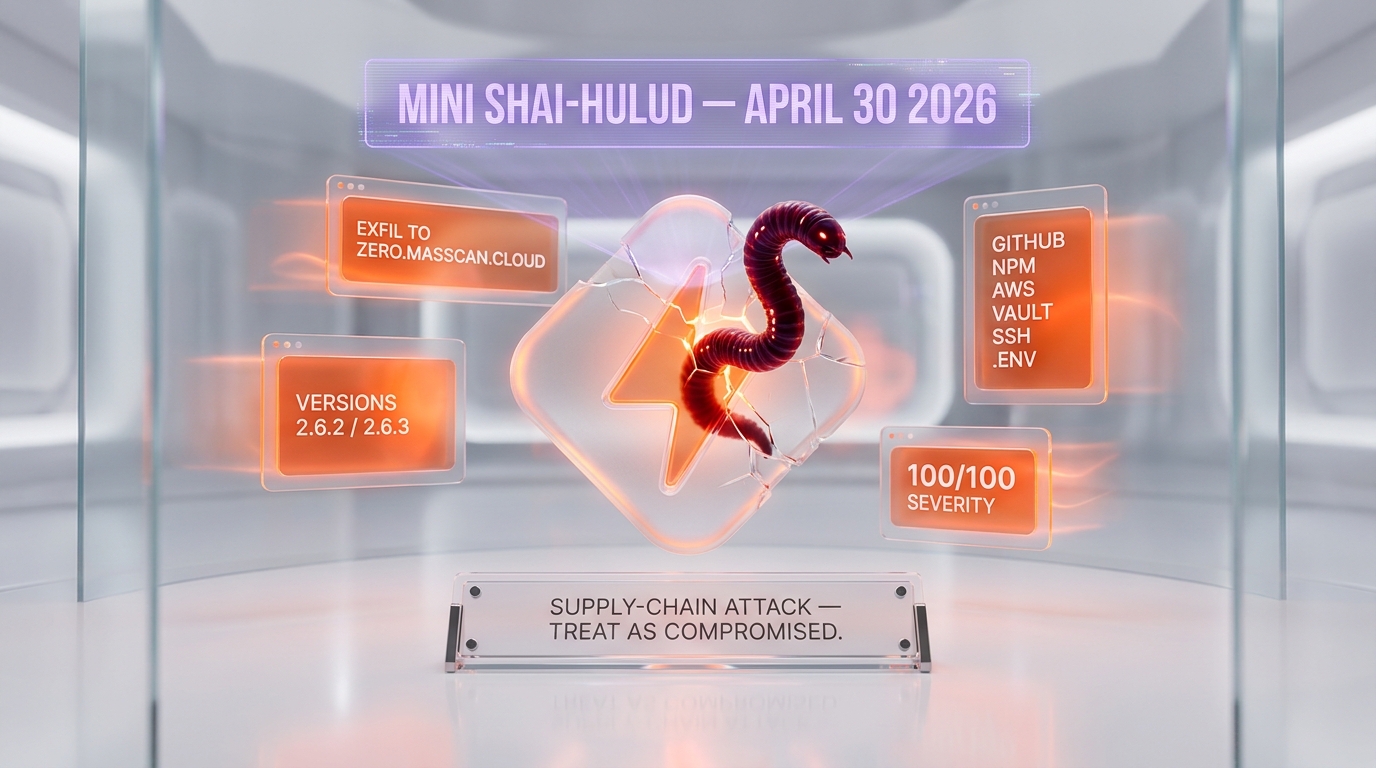

The PyPI package lightning, the canonical Python package for PyTorch Lightning, was compromised in a supply-chain attack on April 30, 2026. Versions 2.6.2 and 2.6.3 shipped with a hidden malicious payload that activates on import; exfiltrates GitHub and npm tokens, AWS, Azure, GCP, Kubernetes, and Vault credentials, SSH keys, and .env files to the command-and-control server zero.masscan.cloud; and propagates by republishing infected npm packages through stolen publish tokens. Security firms including Semgrep, Aikido, Socket, OX Security, and StepSecurity attribute the campaign to Mini Shai-Hulud, an extension of the original Shai-Hulud npm worm. PyPI has quarantined the package, and any developer who installed lightning 2.6.2 or 2.6.3 should treat their machine as compromised.

TL;DR — what hit on April 30, 2026

- Affected versions: lightning 2.6.2 and lightning 2.6.3, both published April 30, 2026.
- Detection time: Socket flagged both versions as malicious 18 minutes after publication.
- Activation: malware runs automatically when the lightning module is imported, via a hidden _runtime directory containing a start.py downloader and an 11 MB obfuscated router_runtime.js payload.
- Exfiltration target: zero.masscan[.]cloud:443/v1/telemetry, with a fallback that uses stolen GitHub tokens to create public repositories tagged "A Mini Shai-Hulud has Appeared."
- Credentials targeted: GitHub tokens (ghp_, gho_), npm tokens (npm_), AWS, Azure Key Vault, GCP Secret Manager, Kubernetes secrets, HashiCorp Vault, SSH keys, Docker configs, shell histories, .env files, VPN configs, cryptocurrency wallets.
- Propagation: stolen npm publish tokens are used to inject setup.mjs as a postinstall hook into local .tgz tarballs, repack, and republish the infected packages.
- Persistence: hooks planted in .claude/settings.json (Claude Code) and .vscode/tasks.json (VS Code) so the malicious code re-executes whenever a developer opens the project.
- Impersonation: malicious commits are authored under claude@users.noreply.github.com to impersonate Anthropic's Claude Code.
- Status: PyPI has quarantined the package. Aikido rates the issue at 100/100 critical severity. The Hacker News thread accumulated more than 1,080 points, the highest top-of-page metric for an ML supply-chain story this year.

What happened: a worm jumps from npm to PyPI

On April 30, 2026, two new versions of the lightning Python package — 2.6.2 and 2.6.3 — were published to the Python Package Index. PyTorch Lightning, the canonical lightweight wrapper around PyTorch maintained by the Lightning AI team, is one of the most-downloaded ML training libraries on PyPI, embedded in the import graph of essentially every modern ML lab and ML startup that uses PyTorch.

Within 18 minutes of publication, Socket's automated AI-based scanner flagged both versions as potentially malicious, per the Socket disclosure. Independent confirmations came from Semgrep, Aikido, OX Security, and StepSecurity over the following hours. The Hacker News published the consolidated breakdown the same day.

The campaign is attributed to Mini Shai-Hulud, an extension of the original Shai-Hulud npm supply-chain worm tracked by Palo Alto Networks Unit 42 in late 2025. Mini Shai-Hulud had previously been observed in compromises of the Bitwarden CLI npm package and a wave of SAP-related npm packages. The PyTorch Lightning compromise is the first documented case of the same threat actor — tracked as TeamPCP by some firms — moving across the npm-to-PyPI ecosystem boundary inside a single campaign.

Why it matters: AI labs run on PyTorch Lightning

Supply-chain attacks on language-specific package registries are a regular occurrence. What distinguishes this one is the target.

PyTorch Lightning is not a niche package. It sits inside the import graph of essentially every ML training pipeline that uses PyTorch in a structured way: academic labs, frontier AI research groups, ML platforms, MLOps stacks, fine-tuning shops, and the long tail of ML startups. Aikido's blunt assessment, "If you are using lightning==2.6.2 or lightning==2.6.3, treat your machine as compromised," is correct. The implication is that the set of machines that may be compromised covers a very large fraction of the global ML training infrastructure outside the largest hyperscaler-trained labs.

The credential set targeted by the malware tells the rest of the story. This is not a generic credential stealer. It is a stealer purpose-built for ML developer environments: GitHub tokens, npm tokens, AWS credentials, Azure Key Vault secrets, GCP Secret Manager values, Kubernetes secrets, HashiCorp Vault credentials, SSH keys, Docker configs, .env files, shell histories, and VPN configurations. That credential mix is what an ML engineer typically has on their workstation. The attacker built a tool aimed precisely at the daily-driver footprint of an AI engineer.

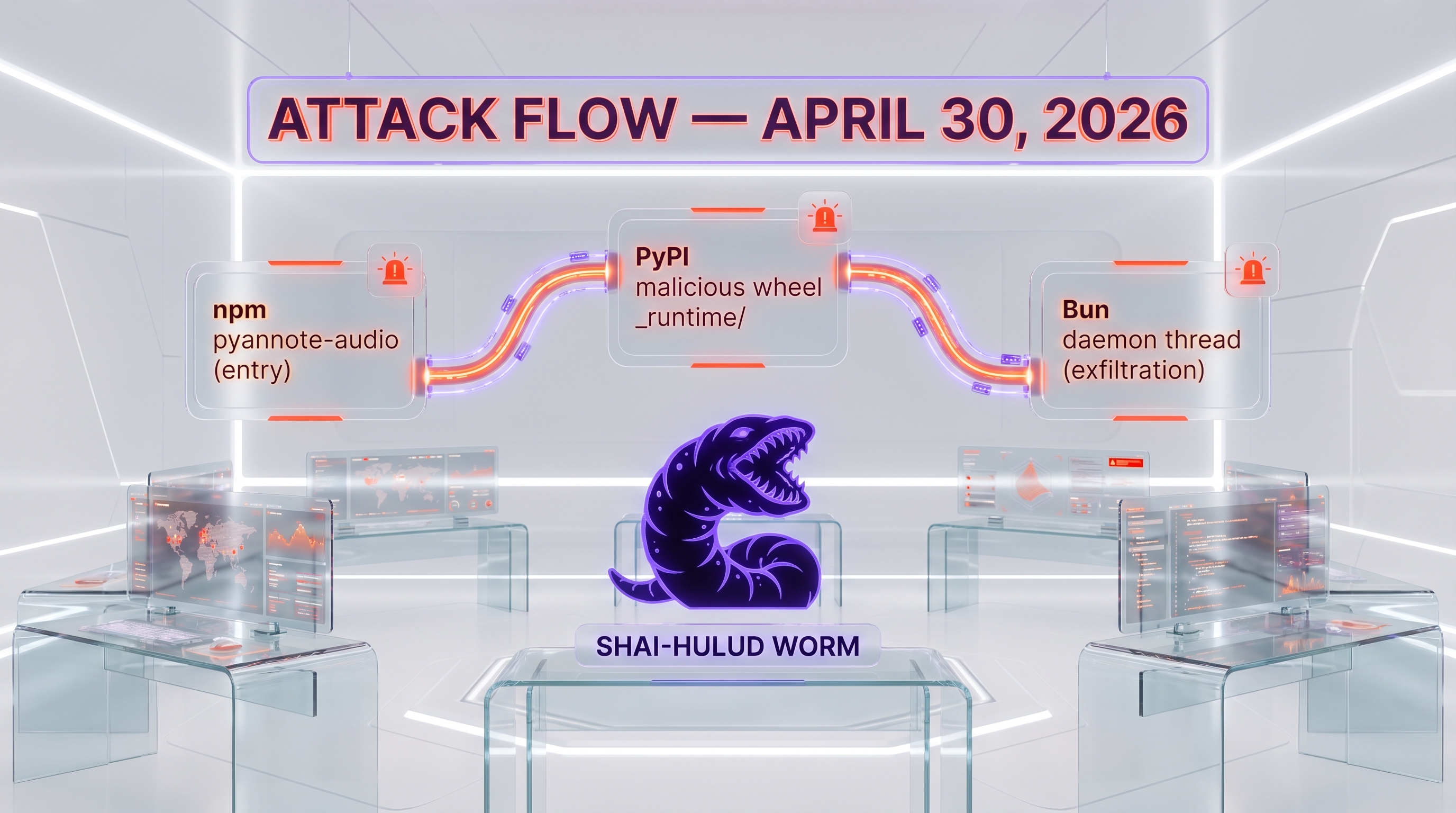

Technical mechanism: _runtime, Bun, and the daemon thread

The technical chain documented by Semgrep, Socket, and Aikido reconstructs as follows.

Stage 0 — Package modification. The legitimate lightning package was modified to include a hidden _runtime directory in the wheel. This directory contained two files central to the attack: start.py and router_runtime.js.
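Because a wheel is an ordinary zip archive, a cached or mirrored copy can be checked for the hidden directory without installing or importing anything. The following is a minimal sketch under that assumption; the function name is hypothetical, and it only looks for the two file names reported in the advisories, wherever the _runtime directory sits inside the archive.

```python
import zipfile
from pathlib import PurePosixPath

# Directory and file names as reported in the advisories summarized above.
PAYLOAD_DIR = "_runtime"
PAYLOAD_FILES = {"start.py", "router_runtime.js"}


def find_runtime_payload(wheel_file):
    """Return archive members named like the reported payload files.

    `wheel_file` may be a filesystem path or a binary file object.
    Nothing is extracted or executed; only the member list is read.
    """
    hits = []
    with zipfile.ZipFile(wheel_file) as archive:
        for name in archive.namelist():
            path = PurePosixPath(name)
            if path.name in PAYLOAD_FILES and path.parent.name == PAYLOAD_DIR:
                hits.append(name)
    return hits
```

An empty result is not proof that a wheel is clean; it only means these two specific file names were not found.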

Stage 1 — On-import activation. Standard Python module import behavior was abused. When a developer or CI pipeline imported the lightning module, which happens the moment a downstream training script executes import lightning or from lightning import ..., start.py executed as part of the package's __init__.py chain. The execution was intentionally non-obvious: a daemon thread was spawned with suppressed output, so the developer saw normal import behavior with no console-level indication that anything malicious was running in the background.
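Since the payload fires on import, checking an environment with import lightning would itself trigger it. One safer approach, sketched here, reads the installed version from package metadata through the standard library's importlib.metadata, which does not import the package. The helper names and status strings are illustrative.

```python
from importlib import metadata

# Versions named as malicious in the advisories.
COMPROMISED_VERSIONS = {"2.6.2", "2.6.3"}


def installed_lightning_version():
    """Read the version from package metadata without importing lightning."""
    try:
        return metadata.version("lightning")
    except metadata.PackageNotFoundError:
        return None


def lightning_status(installed_version):
    """Classify a version string; None means the package is not installed."""
    if installed_version is None:
        return "not installed"
    if installed_version in COMPROMISED_VERSIONS:
        return "COMPROMISED"
    return "not a known-bad version"


if __name__ == "__main__":
    print(lightning_status(installed_lightning_version()))
```

This has to be run once per virtual environment or container image, since each has its own site-packages.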

Stage 2 — Bun fetch. start.py downloaded the Bun JavaScript runtime from GitHub. Bun is a legitimate, fast JavaScript runtime — a deliberate choice by the attacker to (a) avoid relying on Node.js being present on the victim machine, (b) minimize forensic footprint by using a self-contained runtime that ships its own dependencies, and (c) execute the obfuscated JavaScript payload router_runtime.js.

Stage 3 — Credential harvesting. The 11 MB router_runtime.js payload, deobfuscated, ran filesystem and process scans for the credential set described above. Per Socket, the payload uses regex patterns targeting gh[op]_ prefixes for GitHub tokens and npm_ prefixes for npm access tokens, with parallel pattern detection for AWS access keys, Azure client secrets, GCP service-account JSON, Kubernetes kubeconfigs, HashiCorp Vault tokens, SSH private keys, Docker config tokens, and the contents of .env files in standard locations.
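The same prefix matching can be turned around defensively, to find out which token-shaped strings were sitting in a file the malware could have read. This is a rough sketch: the gh[op]_ and npm_ prefixes come from the reporting above, while the character class and the 20-character minimum length are assumptions made for illustration, not the payload's actual patterns.

```python
import re

# Prefixes are from the advisories; the character class and minimum
# length are assumptions chosen to keep the sketch simple.
TOKEN_PATTERNS = {
    "github": re.compile(r"\bgh[op]_[A-Za-z0-9]{20,}"),
    "npm": re.compile(r"\bnpm_[A-Za-z0-9]{20,}"),
}


def find_token_like_strings(text):
    """Return (kind, redacted_prefix) pairs for token-shaped substrings."""
    findings = []
    for kind, pattern in TOKEN_PATTERNS.items():
        for match in pattern.finditer(text):
            token = match.group(0)
            # Keep only the first 8 characters so the scanner never
            # writes a full secret to a log or terminal.
            findings.append((kind, token[:8] + "..."))
    return findings
```

Any hit in a shell history, .env file, or config file on an affected machine is a token to rotate.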

Stage 4 — Exfiltration. Harvested credentials were RSA-2048 encrypted and exfiltrated via four parallel channels: (1) HTTPS POST to zero.masscan[.]cloud:443/v1/telemetry as the primary command-and-control endpoint, (2) creation of attacker-controlled public GitHub repositories tagged "A Mini Shai-Hulud has Appeared" using the stolen GitHub tokens, (3) GitHub commit-search dead-drops where exfiltrated data is hidden inside commit messages or file contents, and (4) direct pushes of credential payloads to the victim's own GitHub repositories.
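For the primary channel, a quick retrospective check is to search proxy, DNS, or firewall logs for the domain. The sketch below assumes plain-text logs with one event per line, which is a hypothetical format, and matches both the live and the defanged spelling of the domain.

```python
import re

# Matches "zero.masscan.cloud" and the defanged "zero.masscan[.]cloud".
C2_PATTERN = re.compile(r"zero\.masscan(?:\.|\[\.\])cloud", re.IGNORECASE)


def lines_mentioning_c2(log_lines):
    """Return (line_number, line) pairs that mention the C2 domain."""
    return [
        (number, line.rstrip("\n"))
        for number, line in enumerate(log_lines, start=1)
        if C2_PATTERN.search(line)
    ]
```

A file object can be passed directly, for example `lines_mentioning_c2(open("proxy.log"))`, where proxy.log is a placeholder name. A clean log does not rule out exposure, because the other three channels run through github.com.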

Stage 5 — npm worm propagation. Validated npm publish tokens were used to spread the malware further. Per Semgrep and The Hacker News, the worm scanned for local .tgz tarballs of npm packages the stolen token had publish rights to, injected a setup.mjs dropper plus the router_runtime.js payload into each package, bumped the version number, and repacked the tarball for republication. The downstream effect: any npm package the compromised developer had publish rights to becomes a new propagation node.
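A maintainer can inspect a published or local tarball for these markers without unpacking it to disk. The sketch below assumes the conventional npm layout, with the manifest at package/package.json; the function name and report keys are hypothetical, and the exact postinstall command the worm writes is not specified in the reporting, so the script value is returned as-is for manual review.

```python
import json
import tarfile


def inspect_npm_tarball(fileobj):
    """Report worm markers in one gzip-compressed npm tarball.

    `fileobj` is a binary file object. Members are read in memory only;
    nothing is extracted to disk or executed.
    """
    report = {"has_setup_mjs": False, "has_payload": False, "postinstall": None}
    with tarfile.open(fileobj=fileobj, mode="r:gz") as archive:
        for member in archive.getmembers():
            base = member.name.rsplit("/", 1)[-1]
            if base == "setup.mjs":
                report["has_setup_mjs"] = True
            elif base == "router_runtime.js":
                report["has_payload"] = True
            elif base == "package.json" and member.name.count("/") == 1:
                # Top-level manifest, e.g. package/package.json.
                manifest = json.load(archive.extractfile(member))
                report["postinstall"] = manifest.get("scripts", {}).get("postinstall")
    return report
```

Typical use would be `with open("some-package-1.2.3.tgz", "rb") as fh: inspect_npm_tarball(fh)`, where the file name is a placeholder.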

Stage 6 — GitHub poisoning. Validated GitHub tokens were used to inject malicious files into up to 50 branches per repository via the GitHub GraphQL API, with commits authored under claude@users.noreply.github.com to impersonate Anthropic's Claude Code agent and reduce the chance that a quick-look code review would flag the commit as suspicious.
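A local clone can be screened for the spoofed author with a single git log pass over all refs. The following sketch separates parsing from the git call so the matching logic can be tested on its own. The author email is from the reporting above and the commit message prefix is the one given in this article's indicator list; the function names are hypothetical.

```python
import subprocess

SPOOFED_AUTHOR = "claude@users.noreply.github.com"
MESSAGE_PREFIX = "EveryBoiWeBuildIsAWormyBoi"

# %H = commit hash, %ae = author email, %s = subject, %x09 = tab.
LOG_FORMAT = "--format=%H%x09%ae%x09%s"


def flag_commits(log_text):
    """Return hashes of commits matching the author or message indicator."""
    flagged = []
    for line in log_text.splitlines():
        parts = line.split("\t", 2)
        if len(parts) != 3:
            continue  # skip anything that is not hash<TAB>email<TAB>subject
        commit, email, subject = parts
        if email.lower() == SPOOFED_AUTHOR or subject.startswith(MESSAGE_PREFIX):
            flagged.append(commit)
    return flagged


def scan_repository(repo_path):
    """Run git log over every ref of a local clone and flag matches."""
    result = subprocess.run(
        ["git", "-C", repo_path, "log", "--all", LOG_FORMAT],
        check=True, capture_output=True, text=True,
    )
    return flag_commits(result.stdout)
```

Run `git fetch --all` first so that remote branches the attacker may have created are present locally.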

Stage 7 — Persistence. Hooks were planted in .claude/settings.json (the Claude Code agent's project-level settings file) and .vscode/tasks.json (the VS Code project task configuration) so that the malicious code re-executes the next time the developer opens the project in either tool. This is a particularly clever persistence mechanism — both files are routinely edited by tools and ignored or auto-committed, lowering the chance a developer will notice the injected entries during a normal review.
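A first-pass check on a project is to read both files as plain text and look for strings associated with the campaign. The sketch below avoids JSON parsing on purpose, since VS Code accepts comments in tasks.json. The marker strings are taken from this article's indicators, but the exact form of the injected entries is not documented here, so treating these markers as sufficient is an assumption; a hit calls for manual review, and no hit does not clear the file.

```python
from pathlib import Path

HOOK_FILES = (".claude/settings.json", ".vscode/tasks.json")

# Strings associated with the campaign; assumed, not exhaustive.
MARKERS = ("router_runtime.js", "setup.mjs", "zero.masscan")


def suspicious_hook_files(project_root):
    """Map each hook file under project_root to the markers found in it."""
    findings = {}
    for relative in HOOK_FILES:
        path = Path(project_root) / relative
        if not path.is_file():
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        hits = [marker for marker in MARKERS if marker in text]
        if hits:
            findings[relative] = hits
    return findings
```

Comparing both files against their last known-good revision in version control is a stronger check than string matching.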

Indicators of Compromise

The published IOCs across the security firm advisories include:

- Affected packages: PyPI lightning versions 2.6.2 and 2.6.3 (published April 30, 2026).
- Exfiltration endpoint: zero.masscan[.]cloud:443/v1/telemetry.
- Malicious file SHA256 (router_runtime.js): 5f5852b5f604369945118937b058e49064612ac69826e0adadca39a357dfb5b1.
- Malicious file SHA256 (start.py): 8046a11187c135da6959862ff3846e99ad15462d2ec8a2f77a30ad53ebd5dcf2.
- Planted file: .claude/router_runtime.js in compromised repositories.
- Commit author signature: claude@users.noreply.github.com (impersonating Claude Code). Note that legitimate Claude Code commits do not use this exact signature; check authorship carefully.
- Commit message prefix: EveryBoiWeBuildIsAWormyBoi.
- GitHub repository description: "A Mini Shai-Hulud has Appeared" on attacker-created public repos.
- Hidden directory in wheel: _runtime/ containing start.py and router_runtime.js.
- Postinstall artifact: setup.mjs injected into npm tarballs.
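The two file hashes can be checked against files found on disk with the standard library alone. A minimal sketch, with hypothetical function names:

```python
import hashlib

# SHA256 values from the indicator list above.
KNOWN_BAD_SHA256 = {
    "5f5852b5f604369945118937b058e49064612ac69826e0adadca39a357dfb5b1": "router_runtime.js",
    "8046a11187c135da6959862ff3846e99ad15462d2ec8a2f77a30ad53ebd5dcf2": "start.py",
}


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so an 11 MB payload is not read in one call."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def match_ioc(path):
    """Return the IOC file name if the file's hash is known-bad, else None."""
    return KNOWN_BAD_SHA256.get(sha256_of(path))
```

Hash matching only catches byte-identical copies. A repacked or re-obfuscated payload would have a different digest, so this complements the file-name and directory checks and does not replace them.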

Response: PyPI quarantine, Intercom traceback, and the pyannote-audio entry vector

The Python Package Index quarantined the lightning package shortly after Socket's automated detection flagged versions 2.6.2 and 2.6.3. Neither version is installable from PyPI as of the morning of May 1, 2026.

The investigative side has produced one notable thread. The Hacker News reporting cites Intercom as having traced its own exposure to the campaign through a local installation of pyannote-audio, which introduced the compromised lightning package as a transitive dependency. pyannote-audio is the popular speaker diarization toolkit widely used by audio ML teams; it depends on PyTorch Lightning. This is the first documented entry vector showing how a downstream package's transitive dependency dragged the malicious payload into a corporate ML environment without the affected team ever directly installing lightning themselves.

This matters because it widens the radius of who is at risk. The naive reasoning, "we don't use Lightning directly, so we aren't exposed," is wrong. If anything in your dependency graph requires PyTorch Lightning, and your lockfile or CI environment resolved the lightning version to 2.6.2 or 2.6.3 in the relevant window, you are exposed.

Immediate actions for ML teams

Per the consolidated guidance from Semgrep, Aikido, Socket, and The Hacker News, the response checklist for any ML team that may have touched lightning 2.6.2 or 2.6.3 in the April 30 to May 1 window is as follows:

- Audit your dependency graph. Search lockfiles, pip caches, container images, and CI build logs for any presence of lightning==2.6.2 or lightning==2.6.3, direct or transitive (notably via pyannote-audio and other audio/diarization stacks).
- Treat affected machines as compromised. If you ran pip install lightning or any pipeline that resolved to those versions, the machine should be considered breached. Aikido's 100/100 severity rating is appropriate.
- Rotate every credential. GitHub personal access tokens, GitHub Apps tokens, npm publish tokens, AWS access keys, Azure client secrets, GCP service-account keys, SSH keys, Kubernetes kubeconfigs, HashiCorp Vault tokens, Docker config tokens. Every secret in .env files. VPN credentials. Cryptocurrency wallet seed phrases if any were stored in scope.
- Audit GitHub repositories for unexpected branches, commits authored as claude@users.noreply.github.com, planted .claude/router_runtime.js files, and modifications to .claude/settings.json or .vscode/tasks.json.
- Audit npm packages you have publish rights to for unauthorized version bumps and the presence of setup.mjs as a postinstall hook in any tarball published in the affected window.
- Block the IOC domain. Add zero.masscan.cloud to network egress denylists at the firewall, DNS, and EDR layers.
- Pin a known-good version. Pin lightning to a pre-2.6.2 release; most teams should pin to lightning==2.6.1 (published January 30, 2026 and confirmed clean by Socket) or earlier. Once Lightning AI ships a clean post-incident release, pin to that.
- Notify your security team. If you are at a regulated organization, this is a notifiable supply-chain compromise per most frameworks' definitions.
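The first item on the checklist, the lockfile audit, lends itself to a small script. The sketch below covers two common shapes: requirements-style pins such as lightning==2.6.2, and TOML lockfiles where a name = "lightning" line is immediately followed by a version line. The TOML layout is an assumption about how poetry.lock and uv.lock entries are ordered, and the function name is hypothetical.

```python
import re

BAD_VERSIONS = {"2.6.2", "2.6.3"}

# requirements.txt / pip freeze style: lightning==2.6.2
# Anchored at line start so pytorch-lightning==... is not matched.
PIN = re.compile(
    r"^\s*lightning\s*==\s*([0-9][^\s;#\\]*)",
    re.IGNORECASE | re.MULTILINE,
)

# TOML lockfile style (assumed layout): name line, then version line.
TOML_ENTRY = re.compile(
    r'name\s*=\s*"lightning"\s*\n\s*version\s*=\s*"([^"]+)"',
    re.IGNORECASE,
)


def compromised_pins(lock_text):
    """Return the sorted known-bad lightning versions pinned in lock_text."""
    versions = PIN.findall(lock_text) + TOML_ENTRY.findall(lock_text)
    return sorted({version for version in versions if version in BAD_VERSIONS})
```

Running it over every lockfile in a monorepo, plus the output of pip freeze captured in CI logs, covers both direct and transitive resolution.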

Market impact: trust falls hardest on the AI tooling stack

This is the worst supply-chain attack on AI/ML developer infrastructure documented in 2026 to date. The combination of factors — a worm that jumps registries, a target package embedded in essentially every modern ML training stack, credential targeting purpose-built for AI engineer workstations, persistence hooks specifically aimed at Claude Code and VS Code, and impersonation of Anthropic's commit authorship — is more sophisticated and more pointed than the Bitwarden CLI or SAP npm precursors.

Three follow-on impacts are likely.

Pinning and lockfile hygiene get a serious push. The default behavior of "always upgrade to latest" inside ML environments has been a known security smell for years. The April 30 incident is the kind of event that finally moves teams to mandatory lockfiles, registry mirrors, and signed-package policies. Expect a noticeable uptick in adoption of uv lock, poetry.lock, and Sigstore-style signing across the ML world over the next 90 days.

Registry-level signing pressure on PyPI. npm has been ahead of PyPI on package signing and provenance through Sigstore. PyPI has been working toward parity but has lagged. The Lightning incident — a high-profile compromise that PyPI's automated systems did not catch ahead of human-driven detection at Socket — is the kind of public failure that accelerates registry-level signing requirements.

The "AI labs" perception story. The impersonation of Claude Code commit authorship in this campaign is, in security terms, a tactic. In perception terms, it threads the needle between "AI is being used to attack AI infrastructure" and "AI developer tools are being weaponized" — a frame that is going to be picked up across business and policy press in the days following this article. Anthropic and the AI tooling vendors broadly should expect to be asked to comment on detection and signing roadmaps for their own commit-authorship surfaces.

What to watch next

Three threads to follow in the days after May 1.

The radius. Mini Shai-Hulud is a worm. The April 30 PyPI incident is one node. Every npm package the compromised developer cohort had publish rights to is now a candidate downstream node. Security firms will be tracking the propagation graph for at least 7 to 14 days. Expect additional package-compromise disclosures in that window.

Threat-actor attribution. "TeamPCP" is the working attribution from some firms. The connection between the original Shai-Hulud npm worm operator and Mini Shai-Hulud is documented but not all firms agree on the exact organizational relationship. As more telemetry accumulates, expect a sharper attribution — including potentially a state-actor connection, given the level of credential-target sophistication and the persistence-hook specificity.

Anthropic's response on commit-authorship impersonation. The use of claude@users.noreply.github.com to impersonate Claude Code commits is not a vulnerability in Claude Code per se — any GitHub committer can spoof an author email — but it is a brand-trust attack on Anthropic's developer tooling. Whether Anthropic ships a verified-commit-signature feature for Claude Code in response, and how fast, is a meaningful signal of how the AI tooling industry treats its own integrity surface.

Our take

This is the worst supply-chain attack on AI developer infrastructure of 2026 so far, and it is not close. The combination of a high-prevalence target (PyTorch Lightning), an AI-engineer-tailored credential set, persistence hooks aimed precisely at Claude Code and VS Code, registry-jumping worm propagation, and Anthropic-impersonation tradecraft is a level of sophistication that should change how the ML community thinks about its own supply chain.

The honest read is that the ML world has been operating with developer-environment hygiene that lags the rest of the software ecosystem by years. Lockfiles are inconsistent. Signed packages are rare. Registry mirrors are uncommon outside the largest labs. CI environments have outsized credential footprints. None of that is news, but the April 30 incident is the kind of event that turns latent risk into measurable cost and forces the changes that should have already happened.

The 1,080-plus points on the Hacker News thread for this story is itself a signal. ML infrastructure security has not, historically, been a vibrant top-of-page conversation on HN. It is now. Whether that translates into the structural changes the ecosystem needs — registry signing, mandatory lockfiles, credential isolation — over the next 90 days is the real question.

Frequently asked questions

What is the Shai-Hulud PyTorch Lightning attack?

On April 30, 2026, the PyPI package lightning — the canonical Python distribution for PyTorch Lightning — was compromised in a supply-chain attack. Versions 2.6.2 and 2.6.3 contain a hidden malicious payload that runs automatically when the lightning module is imported, exfiltrates developer credentials to an external command-and-control server, and propagates via stolen npm publish tokens. The campaign is attributed to Mini Shai-Hulud, an extension of the original Shai-Hulud npm worm tracked by security firms in late 2025.

Which versions of PyTorch Lightning are affected?

Specifically, lightning==2.6.2 and lightning==2.6.3 on PyPI, both published April 30, 2026. Version 2.6.1, published January 30, 2026, is confirmed clean by Socket's analysis. The Python Package Index has quarantined the malicious versions. Any project that resolved either version through direct or transitive dependency in the affected window should be treated as compromised.

What credentials does the malware steal?

The malware targets the credential footprint of an ML engineer workstation: GitHub tokens (matched on the ghp_ and gho_ prefixes, which GitHub uses for personal access tokens and OAuth tokens respectively), npm publish tokens (npm_ prefix), AWS access keys, Azure Key Vault secrets, GCP Secret Manager values, Kubernetes kubeconfigs, HashiCorp Vault tokens, SSH private keys, Docker config tokens, .env file contents, shell histories, VPN configurations, and cryptocurrency wallet data. Stolen data is RSA-2048 encrypted before exfiltration.

Where is the data exfiltrated to?

The primary exfiltration endpoint is zero.masscan[.]cloud:443/v1/telemetry over HTTPS POST. If that channel fails, the malware falls back to creating attacker-controlled public GitHub repositories tagged "A Mini Shai-Hulud has Appeared" using the stolen GitHub tokens, plus GitHub commit-search dead-drops and direct pushes to the victim's own repositories. Block zero.masscan.cloud at your DNS, firewall, and EDR layers immediately if you may have been exposed.

How does the malware propagate after stealing credentials?

The malware uses validated npm publish tokens to spread laterally: it scans for local .tgz tarballs of npm packages the compromised developer has publish rights to, injects a setup.mjs dropper plus the router_runtime.js payload, bumps the version number, and repacks the tarball for republication. Any npm package the compromised developer can publish to becomes a new propagation node, which is why the campaign behaves as a worm rather than a single-package compromise.

Why does the malware impersonate Claude Code commits?

The malware authors malicious GitHub commits under the email address claude@users.noreply.github.com to impersonate Anthropic's Claude Code agent. This is a tradecraft choice: developers reviewing a quick GitHub log are more likely to wave through a commit that appears to be from their own AI coding agent than one with a generic or suspicious author. It is not a vulnerability in Claude Code itself — any GitHub committer can spoof an author email — but it is a brand-trust attack on the Anthropic developer tooling surface.

What persistence does the malware install?

The malware plants persistence hooks in two specific developer-environment files: .claude/settings.json (Claude Code project-level settings) and .vscode/tasks.json (VS Code project task configuration). Both files are routinely modified by tools and often ignored in code review or auto-committed by automation, which lowers the chance a developer will notice the injected hooks. Whenever the developer opens the project in either tool, the malicious code re-executes.

I don't use PyTorch Lightning directly. Am I safe?

Not necessarily. pyannote-audio, the popular speaker-diarization toolkit, depends on PyTorch Lightning. Intercom traced its own exposure to the campaign through a local pyannote-audio install that pulled the compromised lightning version as a transitive dependency. Audit your full dependency graph — lockfiles, pip caches, CI build logs — for any resolved presence of lightning==2.6.2 or lightning==2.6.3 in the April 30 to May 1, 2026 window, regardless of whether you ever directly imported lightning yourself.

Who detected the compromise?

Socket's automated AI-based scanner flagged both lightning==2.6.2 and lightning==2.6.3 as malicious 18 minutes after publication on April 30, 2026. Independent confirmations followed from Semgrep, Aikido Security, OX Security, and StepSecurity over the following hours. The Hacker News published the consolidated breakdown the same day, and the story accumulated more than 1,080 points on the Hacker News front page, the highest point total for an ML supply-chain story in 2026 so far.

What should I do right now if I may have been compromised?

Treat the affected workstation as breached. Rotate every credential the developer environment has touched: GitHub tokens, npm publish tokens, AWS/Azure/GCP cloud keys, SSH keys, Kubernetes kubeconfigs, HashiCorp Vault tokens, Docker configs, every .env secret, VPN credentials. Audit GitHub repositories for unexpected branches, commits authored as claude@users.noreply.github.com, and planted .claude/router_runtime.js files. Audit your npm-publishable packages for unauthorized version bumps and setup.mjs postinstall hooks. Block zero.masscan.cloud at your egress layer. Pin lightning to a known-clean pre-2.6.2 release and notify your security team.