What Happened

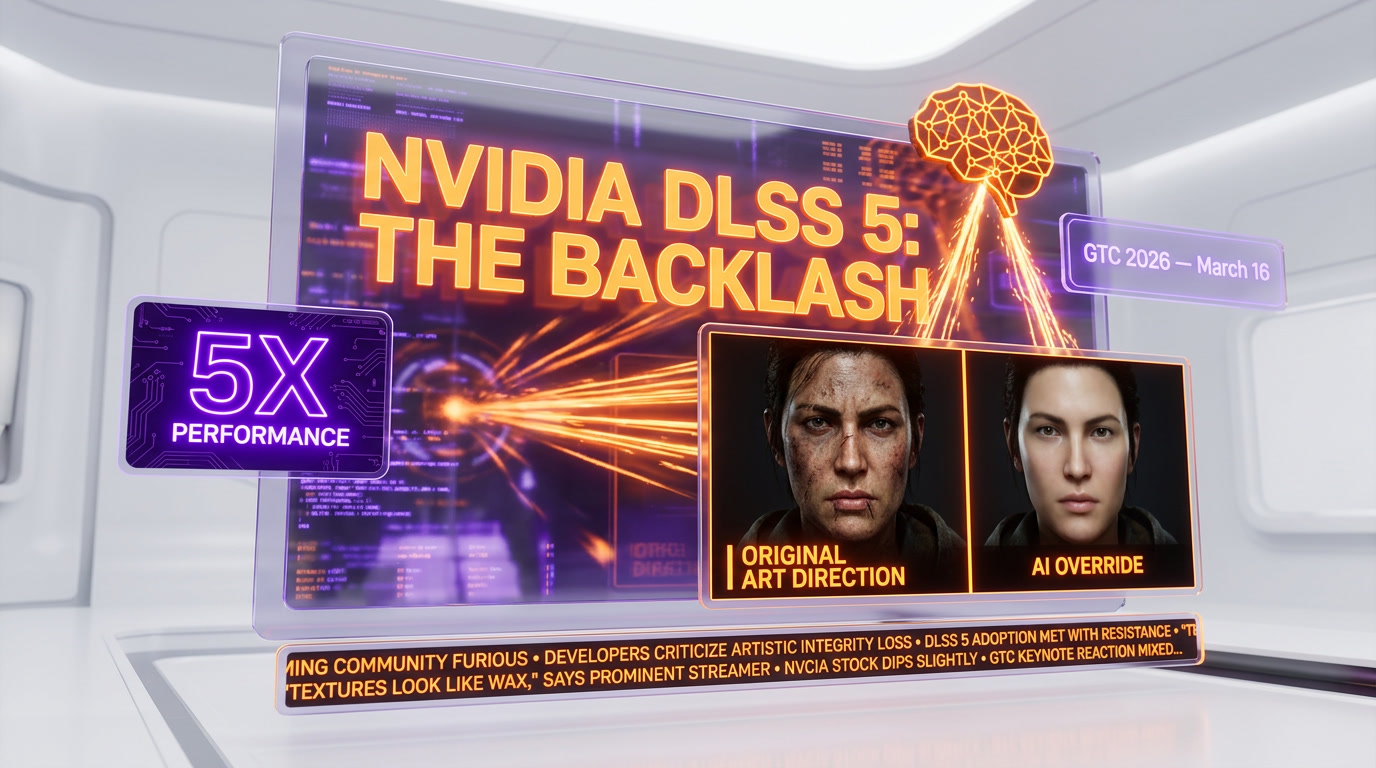

Nvidia dropped a bombshell at GTC 2026 on March 16. DLSS 5, the next generation of its Deep Learning Super Sampling technology, was revealed with a bold new promise: AI-generated "photoreal lighting and materials" applied in real time to games. Not just upscaling. Not just frame generation. Full-on generative AI reshaping how games look on the fly.

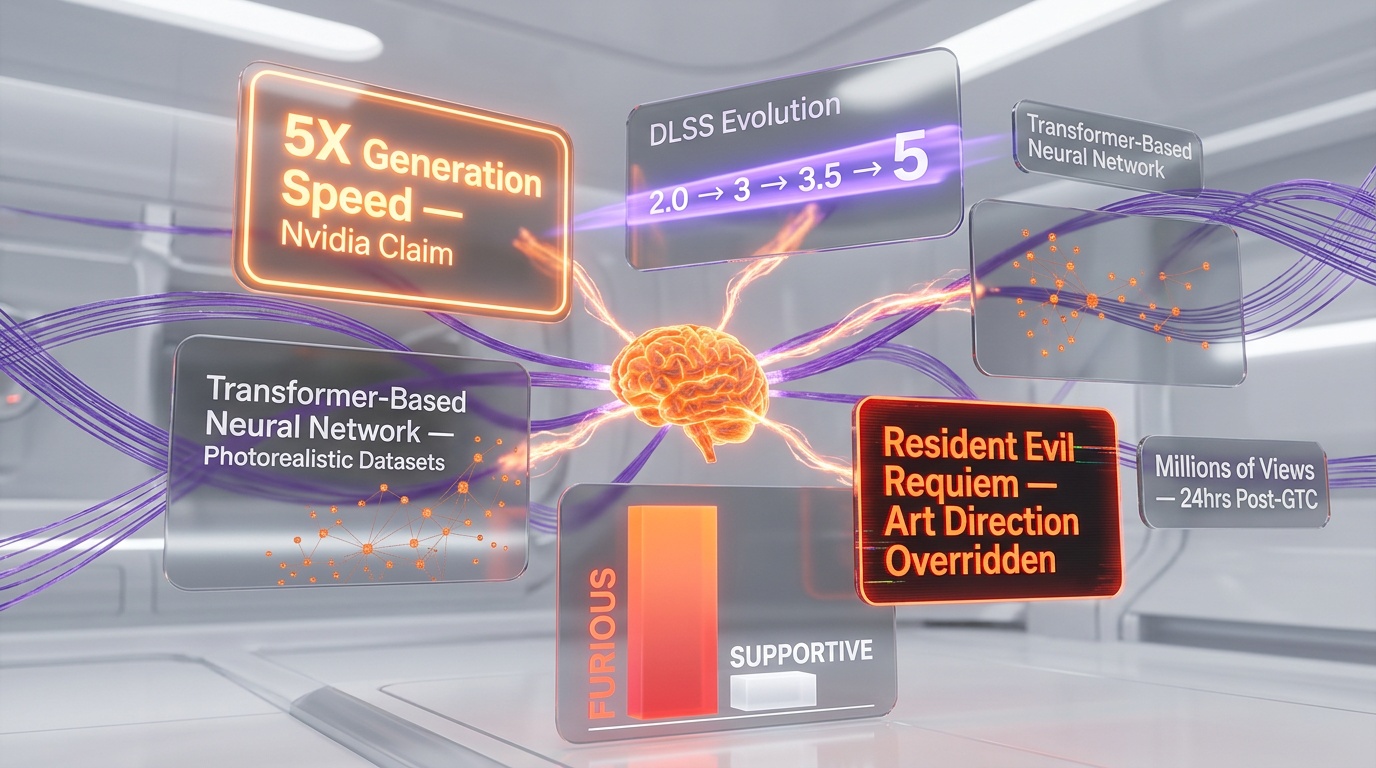

We've been following Nvidia's DLSS evolution since version 2.0 turned the technology from a blurry mess into a genuine game-changer. Each iteration brought meaningful improvements — better temporal stability, frame generation with DLSS 3, ray reconstruction with DLSS 3.5. But DLSS 5 is a fundamentally different beast. Instead of working within the boundaries of what the game engine renders, it uses generative AI models to add entirely new visual information to the scene.

According to Nvidia's GTC presentation, DLSS 5 uses a transformer-based neural network trained on photorealistic datasets to inject lighting, material properties, and surface details that the original game engine never rendered. The result, Nvidia claims, is a 5x improvement in generation speed over the previous generation while delivering visuals that approach cinematic quality.

The gaming community had a very different word for it.

The Backlash: "Yassified" Gaming

Within hours of the GTC reveal, gaming forums, Reddit, and social media erupted. The reaction was not what Nvidia's marketing team had envisioned. Players and developers alike tore into the demo footage with a level of vitriol rarely seen outside of monetization controversies.

The criticisms coalesced around several devastating phrases that quickly went viral: "yassified," "garbage AI filter," and "looks-maxed freaks." These weren't fringe opinions from a handful of angry commenters — they represented a broad consensus across gaming communities that something was deeply wrong with what Nvidia was showing.

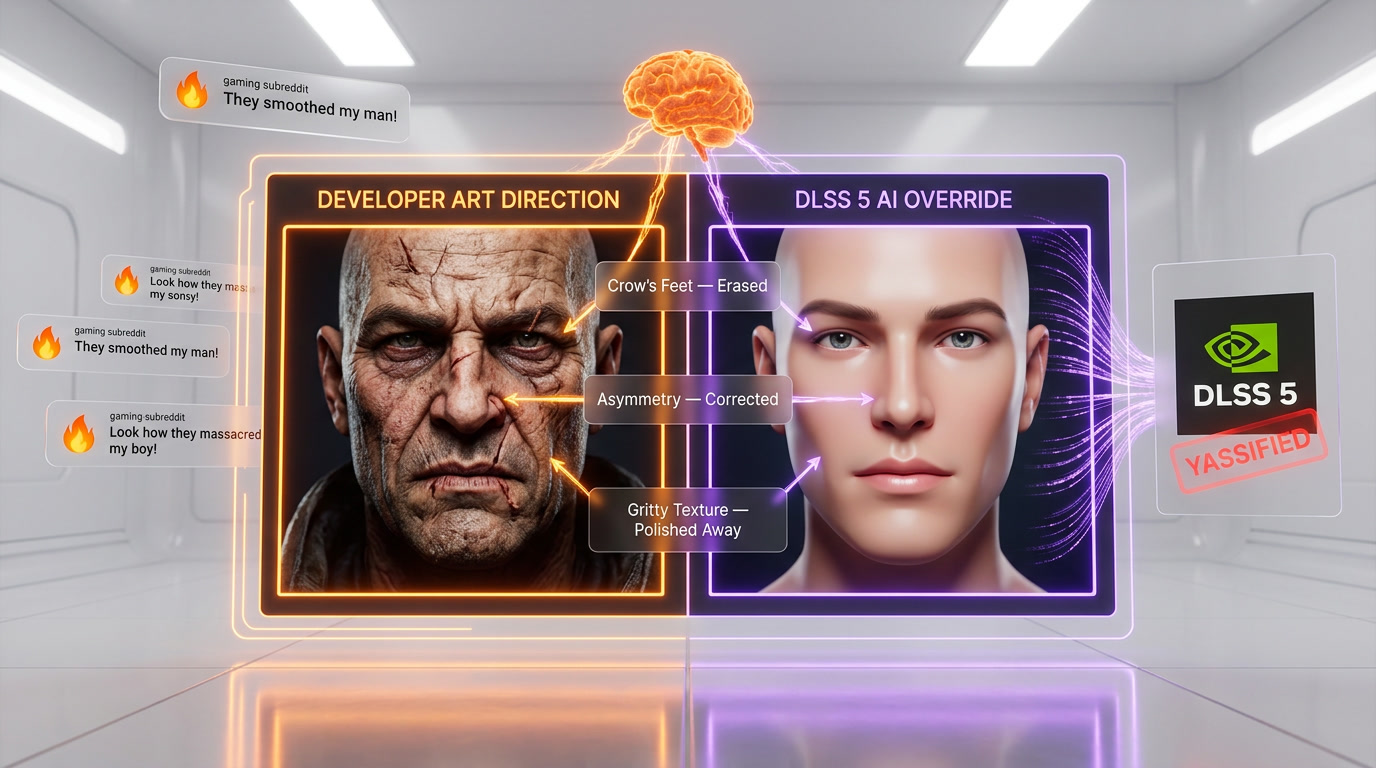

The core complaint is straightforward: DLSS 5's generative AI doesn't just enhance what's already there — it fundamentally changes the art direction that developers spent years crafting. Character faces that were deliberately stylized become eerily smoothed and "beautified." Gritty textures get polished into uncanny cleanliness. The intentional aesthetic choices that define a game's visual identity get steamrolled by an AI that only knows one thing: make it look more "photoreal."

The Worst Offenders: Resident Evil Requiem

If there was a single game that crystallized the backlash, it was Resident Evil Requiem. Capcom's latest entry in the survival horror franchise was used as a showcase during the GTC demo, and it backfired spectacularly.

Grace Ashcroft, the game's protagonist, went from a carefully designed character with realistic human imperfections to what players described as an "Instagram-filtered mannequin." Her skin lost its texture. Her facial features were subtly but noticeably altered toward conventional AI-generated beauty standards. The result was a character that looked less like a person surviving a horror scenario and more like a beauty influencer's profile picture.

Leon Kennedy, the franchise's beloved returning character, fared even worse in the eyes of fans. His rugged, battle-worn appearance — scarred and weary from decades of bioterrorism combat — was smoothed into what critics called a "looks-maxed freak." The crow's feet were gone. The subtle asymmetries that made his face feel real were erased. He looked younger, more symmetrical, and completely wrong.

The problem isn't that the technology can't produce impressive images. It clearly can. The problem is that "impressive" and "appropriate" are two very different things. A survival horror game needs its characters to look stressed, tired, and human. When AI smooths all of that away, it doesn't enhance the game — it undermines it at a fundamental level.

We've seen this pattern before in AI-generated imagery. The models are trained on datasets that skew heavily toward conventionally attractive, well-lit, high-production photography. When you point that same model at a game character designed to look haggard and desperate, the AI "corrects" those features because its training data says they're imperfections to be fixed. It's not a bug — it's an inherent limitation of the approach.

The Meme Machine

We've been tracking gaming community sentiment for years, and the meme response to DLSS 5 was among the fastest and most savage we've seen. Within 24 hours of the GTC presentation, dedicated comparison images were flooding every major gaming subreddit, Twitter/X, and YouTube.

The "yassified" meme, a before/after of characters with exaggerated beauty-filter effects, quickly became the dominant format. Players took Nvidia's own demo footage and placed it alongside the original game renders, highlighting every instance where the AI had "improved" a character into uncanny valley territory.

Side-by-side comparison videos racked up millions of views. Content creators who typically focus on hardware benchmarks found themselves doing art direction analysis instead, breaking down exactly how DLSS 5's generative model was altering game aesthetics in ways no developer had asked for.

The sentiment wasn't just mockery — there was genuine anger. Game artists and art directors weighed in, pointing out that their teams spend thousands of hours crafting specific visual identities for their games. Having an AI layer override those choices at the GPU level felt like a violation of creative intent.

Jensen Huang Fires Back

At a Tom's Hardware Q&A session during GTC, Nvidia CEO Jensen Huang was asked directly about the backlash. His response was characteristically blunt: "They're completely wrong."

Huang defended DLSS 5 by pointing to developer control. According to him, the technology isn't a one-size-fits-all filter that overrides art direction. Instead, developers can "fine-tune the generative AI" to match their specific visual style. The implication is that the GTC demo was showing the technology in a default, untuned state — and that in practice, game studios would calibrate DLSS 5 to respect and enhance their intended aesthetic rather than replace it.

It's a reasonable argument on paper. If developers have granular control over which aspects of the generative model are applied — and can dial back or disable the facial smoothing, material replacement, and lighting overrides — then DLSS 5 could theoretically be the best of both worlds: massive performance gains with developer-approved visual enhancement.
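To make the idea of "dialing back" concrete, here is a minimal sketch of what per-feature control could look like in principle. Everything in it is our own illustration: `EnhancementProfile`, its three fields, and `blend` are hypothetical names, not part of any Nvidia SDK, and Nvidia has not published how its fine-tuning controls actually work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnhancementProfile:
    """Hypothetical per-game strengths in [0.0, 1.0]; 0.0 disables a feature."""
    face_smoothing: float = 1.0
    material_replacement: float = 1.0
    lighting_override: float = 1.0

    def __post_init__(self) -> None:
        # Reject values outside the valid blend range.
        for name, value in vars(self).items():
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [0.0, 1.0], got {value}")


def blend(original: float, enhanced: float, strength: float) -> float:
    """Linear interpolation: 0.0 keeps the original value, 1.0 takes the enhanced one."""
    return original + strength * (enhanced - original)


# Assumed example: a horror title that keeps faces untouched but accepts
# most of the lighting changes. The numbers are invented for illustration.
horror_profile = EnhancementProfile(
    face_smoothing=0.0,
    material_replacement=0.25,
    lighting_override=0.75,
)

# Treat these as one channel of a skin-roughness value before and after enhancement.
authored, generated = 0.8, 0.2
final_value = blend(authored, generated, horror_profile.face_smoothing)
```

With `face_smoothing` set to 0.0, the authored value passes through unchanged. The community's worry is what happens when nobody moves the sliders off their defaults.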

But the gaming community isn't buying it. The counter-argument, which we think has merit, is simple: if the out-of-the-box experience looks this wrong, most developers won't invest the time to fine-tune it properly. They'll either turn it on with default settings (leading to the problems showcased at GTC) or avoid it entirely. The middle ground of careful per-game calibration requires resources that many studios simply don't have.

The 5x Speed Claim and What It Really Means

Setting aside the art direction controversy, DLSS 5's performance claims are genuinely impressive. Nvidia says the new generative pipeline is 5x faster than its predecessor at producing enhanced frames. On RTX 50-series hardware, this translates to the ability to run generative enhancement in real time without the latency penalties that plagued earlier approaches.
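To put a 5x speedup in frame-time terms, here is a quick back-of-the-envelope calculation. The 10 ms baseline cost is a number we made up for illustration; Nvidia has not published per-frame timings, and the function names are ours.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Total time available to render one frame at the target frame rate."""
    return 1000.0 / target_fps


def pass_cost_after_speedup(baseline_ms: float, speedup: float) -> float:
    """Cost of a pass once it runs `speedup` times faster."""
    return baseline_ms / speedup


def budget_share(pass_ms: float, target_fps: float) -> float:
    """Fraction of the frame budget consumed by a pass."""
    return pass_ms / frame_budget_ms(target_fps)


BASELINE_PASS_MS = 10.0  # hypothetical cost of the older generative pass
SPEEDUP = 5.0            # Nvidia's claimed improvement

faster_pass_ms = pass_cost_after_speedup(BASELINE_PASS_MS, SPEEDUP)

for fps in (60, 120):
    before = budget_share(BASELINE_PASS_MS, fps)
    after = budget_share(faster_pass_ms, fps)
    print(f"{fps} fps: budget {frame_budget_ms(fps):.2f} ms, "
          f"pass share {before:.0%} -> {after:.0%}")
```

Under that assumed baseline, the pass would consume 60% of a 60 fps frame before the speedup and 12% after. At 120 fps, a 10 ms pass would not fit in the frame at all, while a 2 ms pass would take roughly a quarter of the budget.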

We've been tracking AI inference performance on consumer GPUs closely, and a 5x improvement in generation speed is significant. It suggests Nvidia has made substantial architectural improvements to both the model efficiency and the dedicated Tensor Core pipeline on Blackwell GPUs. If these numbers hold up in independent testing, the raw technology is a genuine achievement.

But raw performance doesn't matter if the output is unwanted. A filter that ruins art direction at 5x the speed is still ruining art direction. The speed improvement only becomes meaningful if the quality and control issues are resolved first.

Why It Matters: The AI-Art Direction Collision

This controversy extends far beyond DLSS 5. It's the first major collision between generative AI capabilities and artistic intent in real-time rendering, and it won't be the last.

We've been watching this tension build across the creative industries. In film, AI color grading and enhancement tools have sparked similar debates. In photography, AI-powered editing software that automatically "improves" faces has been criticized for enforcing beauty standards. Now it's gaming's turn, and the stakes are arguably higher because games are interactive experiences where visual consistency is critical to immersion.

The fundamental question is one of control and consent. When a player enables DLSS 5, are they agreeing to have the game's visuals altered in ways the developer didn't intend? When a developer supports DLSS 5, are they ceding visual control to an AI model they didn't train? These are questions the industry hasn't answered yet, and Nvidia's GTC demo made them impossible to ignore.

There's also a competitive dimension. AMD's FSR technology has traditionally taken a more conservative approach — enhancing performance without fundamentally altering the rendered image. If DLSS 5's generative approach continues to draw backlash, AMD could position FSR as the "artist-respecting" alternative, potentially shifting market dynamics in a space where Nvidia has dominated.

Our Take

We've been following DLSS since its rocky debut, and we've championed every meaningful improvement Nvidia has made. DLSS 2.0 was transformative. Frame generation was clever. Ray reconstruction was brilliant. But DLSS 5, in its current form, crosses a line.

The technology is undeniably powerful. The claimed 5x generation speed, if it survives independent testing, is a real achievement. The potential for genuinely stunning visuals is there. But "potential" is the operative word. What Nvidia showed at GTC wasn't enhancement; it was replacement. And in a medium where art direction is everything, that's a problem no amount of raw performance can solve.

Jensen Huang's promise of developer fine-tuning is the right answer, but it needs to be more than a talking point. Nvidia needs to ship robust tools, detailed documentation, and per-game profiles that respect artistic intent out of the box. The default experience cannot be the "yassified" nightmare that went viral from GTC.

The gaming community's reaction, while sometimes expressed in memes rather than measured critique, is fundamentally correct. Players care about art direction. They notice when it's wrong. And they will not accept a technology that treats developer-crafted aesthetics as imperfections to be corrected by an AI.

What's Next

Nvidia has a window to course-correct before DLSS 5 ships in its final form. The backlash, as brutal as it's been, is actually valuable feedback. It tells Nvidia exactly where the line is — and right now, they're on the wrong side of it.

We expect Nvidia to release updated demos with more restrained default settings and showcase the developer fine-tuning tools Huang promised. If they can demonstrate that DLSS 5 can enhance performance without overriding art direction, the conversation will shift. If they can't, this technology may end up as the most powerful feature that nobody enables.

We'll be testing DLSS 5 the moment it becomes available to press, and we'll report back with our own side-by-side comparisons. Stay tuned.

Frequently Asked Questions

What is Nvidia DLSS 5?

DLSS 5 is Nvidia's latest AI-powered rendering technology that adds what the company calls "photoreal lighting and materials" to supported games. It uses a transformer-based neural network to enhance graphics in real time, going beyond simple upscaling to actively modify scene rendering. Nvidia says it does so at 5x the speed of DLSS 4 on RTX 50-series Blackwell GPUs.

Why are gamers angry about DLSS 5?

Gamers report that DLSS 5 alters the original art direction of games, making character faces look plasticky and pushing them into uncanny valley territory. The AI enhancement overrides artistic choices made by game developers, changing the intended visual style rather than simply improving fidelity.

What did Jensen Huang say about the DLSS 5 backlash?

Nvidia CEO Jensen Huang dismissed the criticism, stating "They're completely wrong." He argued that game developers can fine-tune the AI to match their artistic vision and that the technology represents the future of real-time rendering.

Can DLSS 5 be turned off in games?

Yes, DLSS 5 is an optional feature that can be disabled in supported games' graphics settings. Players who prefer the original rendering can turn it off, though doing so means losing the performance benefits that come with AI-assisted rendering.

Which games showed the DLSS 5 problems at GTC 2026?

The backlash was primarily triggered by the Resident Evil Requiem demo shown at GTC on March 16, 2026. Character faces appeared smoothed and "yassified" — a term the gaming community used to describe the AI's tendency to beautify faces in ways that override the horror game's intentionally gritty art style.

How does DLSS 5 compare to AMD FSR?

AMD FSR (FidelityFX Super Resolution) uses a simpler upscaling approach that does not modify the game's art direction, which some players now view as a feature rather than a limitation. The DLSS 5 backlash has positioned AMD FSR as a potential "artist-respecting" alternative, especially if Nvidia fails to ship per-game calibration tools before DLSS 5's full commercial launch.

Which GPUs are required to run DLSS 5?

DLSS 5 requires Nvidia's RTX 50-series Blackwell GPUs to run at full capability with the transformer-based neural rendering features. Earlier RTX 40-series cards may receive partial DLSS 5 support for basic upscaling, but the generative AI lighting and materials features are exclusive to Blackwell architecture.