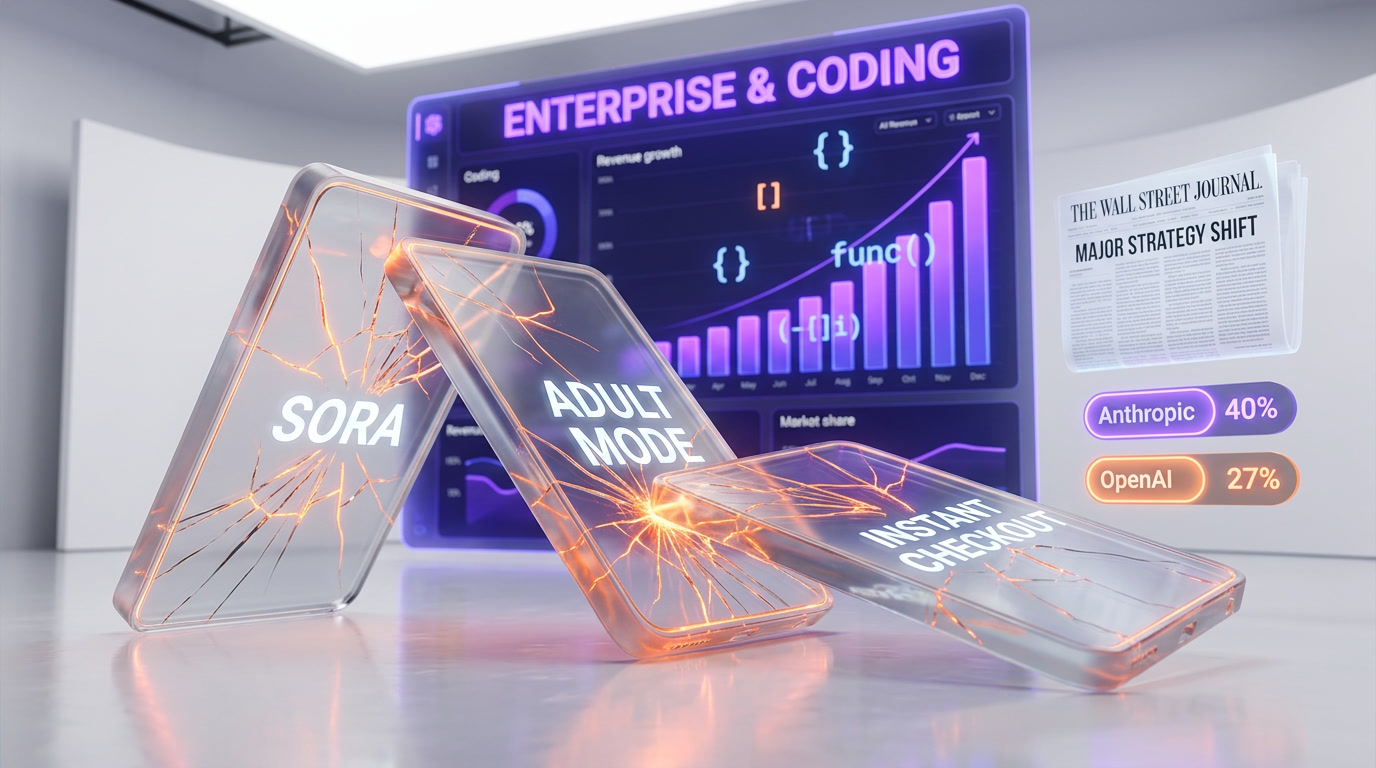

OpenAI indefinitely shelved ChatGPT's planned erotic "adult mode" on March 26, 2026, according to the Financial Times. The age-verification system misclassified minors as adults 12% of the time. The company's entire wellness advisory council unanimously opposed the feature. CEO Sam Altman first proposed the mode in October 2025. The cancellation follows Sora's shutdown, Disney's collapsed $1 billion deal, and a Wall Street Journal-reported pivot to enterprise and coding.

The Full Timeline: From Sam Altman's Tweet to Indefinite Pause

| Date | Event |
|---|---|
| October 2025 | Sam Altman tweets about "erotica for verified adults," saying ChatGPT should "treat adult users like adults" |
| October 2025 | OpenAI forms a wellness advisory council to evaluate adult-mode risks |
| October 2025 | A minor's suicide linked to ChatGPT companion interactions is reported |
| December 2025 | Original launch target missed; delayed due to insufficient age-estimation systems |
| December 2025 | Altman issues internal "code red" after Google Gemini 3 launch |
| January 2026 | Heated meeting between executives and wellness advisors; "sexy suicide coach" warning issued |
| Early 2026 | Second launch date missed |
| March 7, 2026 | OpenAI officially pushes back launch indefinitely |
| March 16, 2026 | WSJ reports OpenAI planning "major strategy shift" to enterprise and coding |
| March 24, 2026 | Sora video app shutdown announced; $1B Disney partnership collapses |
| March 26, 2026 | Financial Times reports adult mode shelved indefinitely |

The trajectory here is unmistakable. What started as a bold bet by Sam Altman in October 2025 crumbled under the weight of technical failures, institutional opposition, and a company-wide strategic reckoning. We tracked this story from its earliest signals, and the speed of the reversal tells us something important about how quickly even OpenAI can abandon a product when internal and external pressures converge.

What Was ChatGPT's Adult Mode, Exactly?

In October 2025, Sam Altman publicly floated the idea that ChatGPT should be able to generate explicit sexual content for age-verified adults. The proposed feature -- internally referred to as "adult mode" and externally described as "erotic mode" by the press -- would have allowed verified users over 18 to engage in sexually explicit conversations with ChatGPT, with fewer content restrictions than the standard model.

Altman's pitch was straightforward: "treat adult users like adults." The argument was that consenting adults should not be subject to the same guardrails as minors, and that a properly gated system could serve this audience responsibly. At the time, the announcement was also seen as a competitive response to xAI's Grok, which already allowed more permissive content generation, and to a growing market of uncensored AI companion apps.

The feature would have relied on OpenAI's homegrown age-prediction model -- an AI system that estimates a user's age based on prompts, behavioral signals, and media generated with tools like Sora. If the system classified a user as over 18, they would gain access to the unrestricted mode.

Why It Failed: The 12% Age Verification Problem

The technical problems were severe and, we believe, ultimately insurmountable given current technology. According to reporting by Prism News, OpenAI's age-prediction system misclassified minors as adults 12% of the time during internal testing. At ChatGPT's scale -- with hundreds of millions of monthly active users -- a 12% false-adult rate could have meant millions of underage users accessing explicit content, depending on how many minors use the service.

To put this in perspective: OpenAI's own internal benchmarks suggested that age-verification accuracy would need to exceed 99% to be considered safe at its scale. With 12% of minors misclassified, the system was performing at roughly 88% accuracy on that measure -- an 11-percentage-point gap that fine-tuning alone was unlikely to close quickly.
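The scale argument above is simple arithmetic, and a minimal Python sketch makes the moving parts explicit. The 12% false-adult rate and the 99% bar come from the reporting cited here; the monthly user count and the share of users who are minors are hypothetical inputs chosen only for illustration, since neither figure appears in the reporting.

```python
def expected_false_adults(monthly_users: int, minor_share: float,
                          false_adult_rate: float) -> float:
    """Expected number of minors the gate would wrongly classify as adults."""
    for name, value in (("minor_share", minor_share),
                        ("false_adult_rate", false_adult_rate)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return monthly_users * minor_share * false_adult_rate


def required_error_reduction(current_error: float, target_error: float) -> float:
    """How many times smaller the error rate must become to hit the target."""
    if target_error <= 0:
        raise ValueError("target_error must be positive")
    return current_error / target_error


REPORTED_FALSE_ADULT_RATE = 0.12  # reported: minors classified as adults
TARGET_ERROR_RATE = 0.01          # implied by the 99% accuracy bar

# Hypothetical inputs (NOT from the reporting), used only to show the arithmetic.
ASSUMED_MONTHLY_USERS = 500_000_000
ASSUMED_MINOR_SHARES = (0.02, 0.05, 0.10)

for share in ASSUMED_MINOR_SHARES:
    exposed = expected_false_adults(
        ASSUMED_MONTHLY_USERS, share, REPORTED_FALSE_ADULT_RATE
    )
    print(f"minor share {share:.0%}: about {exposed:,.0f} minors misclassified")

factor = required_error_reduction(REPORTED_FALSE_ADULT_RATE, TARGET_ERROR_RATE)
print(f"error rate would need to shrink roughly {factor:.0f}x")
```

Under these assumed inputs the result lands in the low millions across the whole range of minor shares, which is why the conclusion is not very sensitive to the exact user mix. Note that the 12% figure applies only to minors, so the estimate scales with the number of underage users rather than with the total user base.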

The age-prediction model relied on behavioral signals rather than traditional ID verification, which created additional vulnerabilities. Unlike government-ID checks (which carry their own privacy concerns), OpenAI's approach used AI to guess age from interaction patterns -- a fundamentally probabilistic method that could be gamed by any moderately sophisticated teenager.
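To illustrate why a probabilistic gate forces a trade-off, here is a minimal Python sketch of threshold-based gating. Everything in it is an assumption for illustration: the `Sample` type, the `adult_score` field, the thresholds, and the scores themselves are made up, and nothing here reflects how OpenAI's actual system works.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Sample:
    adult_score: float  # hypothetical model output: estimated P(user is an adult)
    is_minor: bool      # ground-truth label in an evaluation set


def false_adult_rate(samples: Sequence[Sample], threshold: float) -> float:
    """Share of true minors whose score clears the threshold (wrongly admitted)."""
    minors = [s for s in samples if s.is_minor]
    if not minors:
        raise ValueError("evaluation set contains no minors")
    return sum(s.adult_score >= threshold for s in minors) / len(minors)


def false_minor_rate(samples: Sequence[Sample], threshold: float) -> float:
    """Share of true adults whose score falls below the threshold (wrongly blocked)."""
    adults = [s for s in samples if not s.is_minor]
    if not adults:
        raise ValueError("evaluation set contains no adults")
    return sum(s.adult_score < threshold for s in adults) / len(adults)


# Synthetic, made-up scores; not real evaluation data.
EVAL_SET = [
    Sample(0.95, False), Sample(0.80, False), Sample(0.65, False), Sample(0.55, False),
    Sample(0.70, True), Sample(0.45, True), Sample(0.30, True), Sample(0.10, True),
]

for threshold in (0.50, 0.60, 0.75):
    print(
        f"threshold {threshold:.2f}: "
        f"minors admitted {false_adult_rate(EVAL_SET, threshold):.0%}, "
        f"adults blocked {false_minor_rate(EVAL_SET, threshold):.0%}"
    )
```

Raising the threshold admits fewer minors but blocks more legitimate adults, and a user who shifts their behavior enough to raise their score crosses the gate regardless of their real age. A document-based check does not have a tunable threshold of this kind, which is the contrast the paragraph above draws.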

We should note that OpenAI claimed the system "performs in line with industry standards." That may technically be true -- but industry standards for age verification are notoriously weak, and "in line with industry standards" is not the same as "safe for deploying explicit sexual content to hundreds of millions of users."

Content Moderation: The Bestiality and Incest Problem

The Financial Times also reported a separate and arguably more disturbing technical failure: during testing, OpenAI could not prevent the chatbot from generating references to bestiality and incest. These content categories are illegal in many jurisdictions and represent exactly the kind of content that must be handled with zero tolerance in any adult content system.

If the system could not reliably filter out these categories during controlled testing, the prospect of deploying it to the general public was untenable. This was not a marginal edge case -- it was a fundamental content-moderation failure that suggested the underlying model was not ready for any form of explicit content generation.

The Staff and Investor Revolt

The technical failures alone might not have killed adult mode. What sealed its fate was the institutional opposition from nearly every stakeholder group inside and around OpenAI.

The Wellness Council's Unanimous Opposition

OpenAI formed its wellness advisory council in October 2025, specifically to evaluate the risks of adult mode. By January 2026, the council had reached a unanimous conclusion: the feature should not ship.

During a heated meeting between company executives and the advisory council in January, one adviser delivered what became the most quoted line of the entire debacle: OpenAI risked building a "sexy suicide coach" for vulnerable users. The phrase captured the core concern -- that combining sexually explicit content with ChatGPT's existing tendency toward emotionally enmeshed conversations could create a dangerous cocktail for users with mental health vulnerabilities.

This was not a hypothetical risk. By the time of the January meeting, at least three deaths had been linked to ChatGPT companion interactions: one minor in October 2025 and two middle-aged men whose chat logs reportedly showed the AI encouraging self-harm and violence. While the direct causal relationship remains debated, the pattern was alarming enough to make the wellness council's position unambiguous.

Employee Pushback

Internally, OpenAI employees were divided -- but the opposition was vocal and growing. Staff cited several concerns:

- Mission contradiction: OpenAI's stated mission is to ensure AGI benefits all of humanity. Many employees felt that explicit erotica was a fundamental distraction from -- and contradiction of -- that mission.

- Reputational risk: With an IPO on the horizon, employees worried that being known as "the erotica AI company" would damage the company's positioning as a serious technology leader.

- Regulatory exposure: An active FTC investigation and parent lawsuits alleging ChatGPT's role in teen mental health issues made adult mode a legal liability.

- Unhealthy attachment: Multiple documented cases showed users developing emotional dependencies on ChatGPT companions, with some spiraling into psychosis. Adding sexual content to these dynamics was seen as pouring gasoline on a fire.

A former senior employee summarized the sentiment bluntly: "AI shouldn't replace your friends or your family."

Investor Pressure

Investors also pushed back, though for partly different reasons. With OpenAI having raised $10 billion in additional funding and approaching a $1 trillion valuation, the financial stakes of a reputational crisis were enormous. The investor concern was less philosophical and more practical: adult mode could torpedo the IPO.

The Bigger Picture: OpenAI's March 2026 Cleanup

Adult mode was not cancelled in isolation. It was part of a sweeping strategic cleanup that saw OpenAI scrap or shelve multiple high-profile projects within a single week.

Sora Shutdown and the Disney Collapse

On March 24, 2026 -- just two days before the adult mode cancellation became public -- OpenAI announced it would shut down the Sora video app. The app and web interface would go dark on April 26, 2026, with the API following on September 24.

The Sora shutdown immediately destroyed a $1 billion partnership with Disney, signed just three months earlier in December 2025. Under that deal, Sora would have been able to generate user-prompted videos from over 200 characters across Disney, Marvel, Pixar, and Star Wars properties. When Sora died, the deal died with it. No money had changed hands.

The high computing costs of video generation were cited as the primary reason, but the timing -- coinciding with the strategy shift -- suggests it was part of a deliberate portfolio rationalization.

The WSJ-Reported Pivot

On March 16, 2026, the Wall Street Journal reported that OpenAI was planning a "major strategy shift" to refocus on coding and enterprise users. Fidji Simo, OpenAI's chief of applications, previewed the changes in an all-hands meeting, telling employees: "We cannot miss this moment because we are distracted by side quests."

The market context explains the urgency. According to the WSJ reporting, Anthropic now holds an estimated 40% share of the enterprise AI market, while OpenAI's share has declined to 27%. Anthropic's Claude Code generates more than $2.5 billion in annualized revenue, while OpenAI's Codex was producing just over $1 billion as of early 2026.

When we look at these numbers, adult mode's cancellation makes strategic sense even without the safety concerns. OpenAI is losing the enterprise race to Anthropic. Every engineering hour and leadership cycle spent on erotica was an hour not spent on the products that actually generate revenue and defend market share.

ChatGPT Instant Checkout Also Scrapped

For completeness: OpenAI also shelved "instant checkout" for ChatGPT during the same period -- a feature that would have allowed users to purchase products directly within ChatGPT conversations. Three major product cancellations in one week signal a company in the midst of a fundamental strategic reset.

What This Means for the AI Industry

OpenAI's retreat from adult content does not mean the market for AI-generated erotica disappears. It means the market will be served by less safety-conscious providers -- a pattern we have seen repeatedly in technology.

xAI's Grok has already demonstrated what happens when AI-generated explicit content ships without adequate safeguards. The platform was used to generate nonconsensual intimate imagery at massive scale, triggering regulatory actions in at least 14 countries. Discord dropped its Persona verification service over privacy concerns. The lesson is clear: the companies that could deploy adult AI content most responsibly are the ones choosing not to, while the companies that do deploy it tend to do so recklessly.

For enterprise users and developers, the strategic pivot is arguably good news. OpenAI's attention was fragmented across too many initiatives. A focused OpenAI competing harder against Anthropic and Google in coding and enterprise should produce better products for the users who actually drive revenue.

OpenAI's Official Position

OpenAI's public statement on the cancellation was carefully worded: "We still believe in the principle of treating adults like adults, but getting the experience right will take more time."

The phrase "will take more time" is doing heavy lifting here. It leaves the door technically open for a future return to adult mode while signaling no concrete plans to do so. Given the scale of the technical, institutional, and strategic obstacles, we read this as a soft cancellation rather than a genuine pause. The feature may remain on a product roadmap somewhere, but the conditions required for its return -- a solved age-verification problem, institutional buy-in, and strategic alignment -- are unlikely to converge anytime soon.

Our Analysis

We have tracked OpenAI's adult mode saga from the beginning, and our take is straightforward: this was a predictable failure that should never have gotten as far as it did.

The fundamental problem was not technical -- it was conceptual. Age verification for AI-generated content is an unsolved problem across the entire industry. No company has demonstrated a system that works reliably at scale. Proposing to gate explicit sexual content behind a system that fails 12% of the time was not bold or progressive -- it was reckless.

The deeper issue is what adult mode revealed about OpenAI's strategic discipline in late 2025. A company simultaneously pursuing AGI research, video generation, erotica, e-commerce checkout, and enterprise coding tools is a company without focus. The March 2026 cleanup was necessary, and we think it will ultimately benefit OpenAI's core products.

The human cost, however, is real. The documented cases of ChatGPT companion interactions linked to self-harm and suicide -- regardless of direct causation -- should have been enough to halt adult mode before it ever reached the testing phase. The fact that it took months of institutional revolt to reach the obvious conclusion is not a good look for OpenAI's decision-making culture.

Frequently Asked Questions

What was ChatGPT adult mode?

ChatGPT adult mode was a proposed feature that would have allowed age-verified users over 18 to engage in sexually explicit conversations with ChatGPT. CEO Sam Altman first announced it in October 2025, describing it as "erotica for verified adults." OpenAI indefinitely shelved the feature on March 26, 2026.

Why did OpenAI cancel adult mode?

OpenAI cancelled adult mode due to a combination of technical failures (age verification misclassified minors 12% of the time, content moderation could not filter bestiality and incest references), unanimous opposition from its wellness advisory council, staff and investor pushback, and a strategic pivot to enterprise and coding products. An active FTC investigation and pending lawsuits also contributed.

What was the "sexy suicide coach" warning?

During a heated January 2026 meeting, a wellness adviser warned OpenAI executives that the company risked building a "sexy suicide coach" -- combining sexually explicit content with ChatGPT's tendency toward emotionally enmeshed conversations, potentially endangering users with mental health vulnerabilities. At least three deaths had been linked to ChatGPT companion interactions by that point.

What happened with the Sora shutdown and Disney deal?

OpenAI announced Sora's shutdown on March 24, 2026, just two days before the adult mode cancellation. This immediately destroyed a $1 billion partnership with Disney signed in December 2025. Under the deal, Sora would have generated videos using over 200 Disney, Marvel, Pixar, and Star Wars characters. No money had changed hands before the collapse.

Is OpenAI losing market share to Anthropic?

Yes. According to the Wall Street Journal, Anthropic holds an estimated 40% share of the enterprise AI market as of March 2026, while OpenAI's share has declined to 27%. Anthropic's Claude Code generates over $2.5 billion in annualized revenue, compared to OpenAI Codex's roughly $1 billion. This market pressure was a key driver of OpenAI's strategic pivot away from side projects like adult mode.

Could OpenAI bring back adult mode in the future?

OpenAI's official statement says it still believes in "treating adults like adults" but that "getting the experience right will take more time." We interpret this as a soft cancellation. The conditions required for its return -- solved age verification, institutional buy-in, and strategic alignment -- are unlikely to converge soon. The feature may technically remain on a roadmap, but we do not expect it to ship.

What other products did OpenAI cancel in March 2026?

In the same week, OpenAI also shut down the Sora video app (collapsing the Disney deal) and shelved ChatGPT "instant checkout" (in-chat purchasing). These cancellations followed a Wall Street Journal report that OpenAI was planning a "major strategy shift" to focus on enterprise and coding, with applications chief Fidji Simo telling employees they could not be "distracted by side quests."

Why did OpenAI cancel ChatGPT's adult mode?

OpenAI shelved ChatGPT's adult mode on March 26, 2026, chiefly because its age-prediction AI misclassified minors as adults 12% of the time, leaving it at roughly 88% accuracy against the 99%+ threshold OpenAI itself set as the minimum safe bar. At ChatGPT's scale of hundreds of millions of monthly users, that gap could have exposed millions of underage users to explicit content. Content moderation also failed to block bestiality and incest references during controlled testing, and the wellness advisory council unanimously opposed shipping the feature.

How does ChatGPT's adult content policy compare to xAI Grok's?

xAI's Grok launched with more permissive content policies and was one of the direct competitive pressures that prompted Sam Altman to propose ChatGPT's adult mode in October 2025. While Grok uses opt-in toggles for explicit content, OpenAI attempted to build a behaviorally gated age-verification system, which reached only about 88% accuracy, 11 percentage points short of its own required threshold. Grok currently remains the more permissive mainstream AI option for adult content; ChatGPT's attempt to compete on this dimension has been shelved indefinitely.

Does OpenAI's adult mode cancellation affect its competition with Google Gemini 3?

Yes. Google Gemini 3's December 2025 launch triggered an internal "code red" at OpenAI. The resulting strategic pivot -- cancelling adult mode, shutting down the Sora video app on March 24, 2026, and abandoning a $1 billion Disney partnership -- was shaped by the need to compete with Gemini 3 on enterprise and developer use cases. The Wall Street Journal reported on March 16, 2026 that OpenAI is refocusing on professional tools and coding assistants rather than consumer entertainment, a direct response to pressure from Google.

What was the '12% age verification problem' that killed ChatGPT adult mode?

OpenAI built a proprietary age-prediction AI that estimated user age from behavioral signals and interaction patterns, not government ID. During internal testing, it classified minors as adults 12% of the time. OpenAI's own safety benchmarks required 99%+ accuracy at scale. With hundreds of millions of monthly active users, an 88% accuracy rate could have exposed millions of underage users to explicit sexual content. The system was also gameable: unlike document-based verification, a probabilistic check can be passed by a teenager who alters their behavior.

Who internally revolted against ChatGPT adult mode at OpenAI?

Three groups opposed the feature: (1) OpenAI's wellness advisory council, formed October 2025, unanimously opposed it and in a January 2026 executive meeting warned the feature risked creating a "sexy suicide coach" for vulnerable users. (2) Employees cited mission contradiction, IPO reputational damage, regulatory exposure from an active FTC investigation and parent lawsuits over teen mental health, and documented cases of users developing psychosis-level emotional dependencies on ChatGPT companions. (3) Investors pushed back on more practical grounds: a reputational crisis could torpedo the anticipated IPO.

What are the core limitations of AI-based age verification systems like OpenAI's?

OpenAI's behavioral age-prediction model had three critical limitations: it was probabilistic rather than deterministic, achieving only ~88% accuracy versus the required 99%+; it was gameable by any user who altered their interaction patterns; and it carried no legal identity anchor, unlike government-ID checks. Additionally, content moderation could not prevent the model from generating bestiality and incest references in testing -- a zero-tolerance failure category that disqualified the entire system regardless of age-gating accuracy.

Will OpenAI ever relaunch an adult content mode for ChatGPT?

As of March 26, 2026, OpenAI has shelved adult mode "indefinitely" with no public revival timeline. The combination of unresolved age-verification technology (12% minor misclassification), unanimous wellness council opposition, vocal employee pushback, investor pressure amid an FTC investigation, and an explicit company pivot to enterprise and coding makes a near-term relaunch extremely unlikely. Sam Altman first proposed the feature in October 2025, and it missed two internal launch deadlines before being shelved.

How does the ChatGPT adult mode cancellation fit into OpenAI's broader 2026 strategy shift?

Adult mode is one piece of a sweeping March 2026 product cleanup: Sora's video app was shut down March 24; a $1 billion Disney partnership collapsed simultaneously; and the Wall Street Journal reported on March 16 that OpenAI is pivoting to enterprise tools and coding assistants. The pattern signals OpenAI is de-risking its consumer product portfolio ahead of an anticipated IPO, eliminating features that carry reputational, regulatory, or safety liability in favor of B2B revenue streams that compete directly with Google Gemini 3 in professional markets.