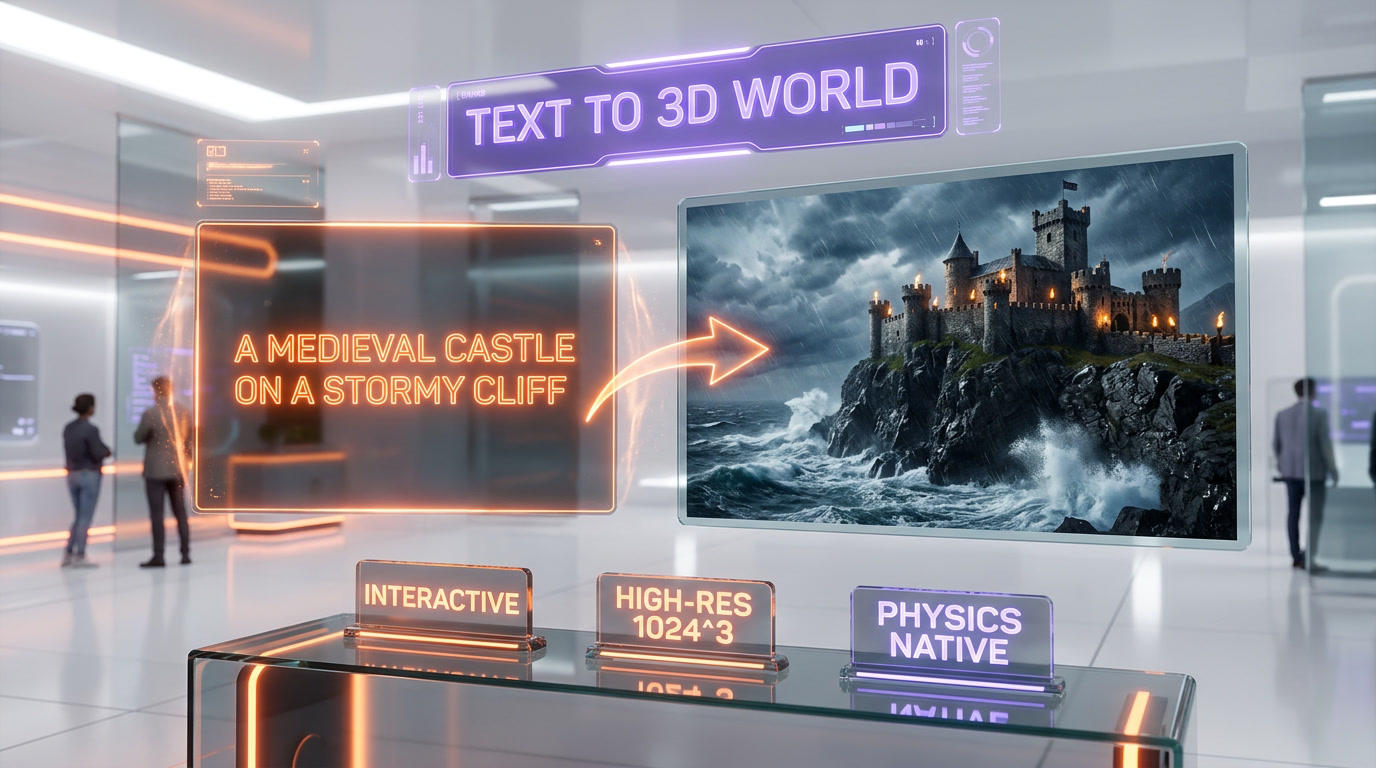

Tencent released HY-World 2.0 on April 15, 2026, under the Apache 2.0 open-source license. The model generates explorable, physics-aware 3D worlds from a single text prompt, with weights and code available on HuggingFace and GitHub. HY-World 2.0 arrives as the direct open-source challenger to World Labs (Fei-Fei Li), Luma Genie 3, and NVIDIA Edify 3D, none of which release weights. Key features: 1024x1024x1024 voxel scene resolution, native physics simulation, real-time navigation at 30 FPS, and 175-language prompt support via the underlying Hunyuan LLM. For gaming, VFX, simulation, and metaverse teams, this is the biggest open-weights release in 3D, the equivalent of what Stable Diffusion was for 2D.

What happened on April 15, 2026

Tencent's Hunyuan team dropped HY-World 2.0 on GitHub and HuggingFace at 9 a.m. Beijing time on April 15, 2026. The release package includes the full model weights (roughly 34 GB), inference code, a web demo, a 42-page technical report on arXiv, and an Apache 2.0 license. No gated access, no waitlist, no request form. Git clone, download weights, run inference.

The core pitch is direct: feed HY-World 2.0 a text prompt like "a medieval castle on a cliff overlooking a stormy sea, with torches flickering on the walls and ravens circling the towers" and it returns a full, explorable 3D world. Not a static mesh. Not a sequence of images. A navigable scene you can fly through, interact with, and export to Unreal Engine, Unity, Blender, or Three.js.

The four facts that matter up front:

- Release date: April 15, 2026 (GitHub + HuggingFace simultaneous drop).

- License: Apache 2.0 — commercial use allowed, no strings attached.

- Model size: roughly 34 GB weights, runs on a single 24 GB VRAM GPU (RTX 4090 or A10G).

- Positioning: the first true open-weights competitor to World Labs, Luma Genie 3, and NVIDIA Edify 3D.

For context: the closed frontier of text-to-3D-world generation has been locked up by three US labs all year. World Labs (founded by Fei-Fei Li) raised $230M and gated its model behind a private beta. Luma Labs shipped Genie 3 as a hosted product with per-scene pricing. NVIDIA's Edify 3D is enterprise-only. HY-World 2.0 just bypassed all of them. The weights are public. The license is permissive. You can fine-tune it on your own art style tonight.

What's new vs HY-World 1.0

HY-World 1.0 shipped quietly in October 2025 as a research preview with a non-commercial license. It was technically impressive but limited: 512-cube voxel resolution, no physics, no real-time navigation, English prompts only. Version 2.0 is a full product-grade rewrite.

Resolution — 8x more voxels

HY-World 1.0 generated scenes at 512x512x512 voxel resolution. HY-World 2.0 doubles each dimension to 1024x1024x1024 — that is 8x more voxels, roughly 1 billion per scene. The practical outcome: you can see individual bricks on a castle wall, individual leaves on a tree, individual cobblestones on a path.
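The 8x and "roughly 1 billion" figures follow directly from cubing the per-axis resolution: doubling every axis multiplies the volume by 2^3.

```latex
\[
\frac{1024^3}{512^3} = \left(\frac{1024}{512}\right)^3 = 2^3 = 8,
\qquad
1024^3 = 2^{30} = 1{,}073{,}741{,}824 \approx 1.07 \times 10^{9}
\]
```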

Physics — native, not post-hoc

1.0 generated static geometry. 2.0 generates scenes with native physics simulation baked into the world state — rigid-body dynamics, soft-body cloth, basic fluid flow, and wind. When you place a ball on the castle wall and nudge it, it rolls. When the torches flicker, the light actually casts dynamic shadows. This is the biggest architectural leap.

Real-time navigation

1.0 produced a scene you rendered offline. 2.0 streams a navigable scene at 30 FPS on a single RTX 4090. As you walk through the world, the model generates geometry on the fly and keeps it consistent across the entire explored volume. This is the feature that makes it usable in a game engine.
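To make "generates geometry on the fly as you move" concrete, here is a minimal Python sketch of the bookkeeping a chunked streamer might do: a 30 FPS target leaves about 33 ms per frame, and only the chunks near the camera need to exist. The chunk size and the spherical view radius are illustrative assumptions, not parameters from HY-World 2.0.

```python
import math

TARGET_FPS = 30   # frame rate quoted for HY-World 2.0 on an RTX 4090
CHUNK_SIZE = 32   # hypothetical: voxels per chunk edge (an assumption, not from the report)


def frame_budget_ms(fps: int) -> float:
    """Milliseconds available to produce one frame at the given frame rate."""
    return 1000.0 / fps


def chunks_in_view(camera_xyz, radius_voxels, chunk_size=CHUNK_SIZE):
    """Return chunk coordinates inside a sphere (in chunk units) around the camera's chunk."""
    # Convert the camera's voxel position to the integer coordinates of its chunk.
    cx, cy, cz = (math.floor(c / chunk_size) for c in camera_xyz)
    r = math.ceil(radius_voxels / chunk_size)
    needed = set()
    for dx in range(-r, r + 1):
        for dy in range(-r, r + 1):
            for dz in range(-r, r + 1):
                if dx * dx + dy * dy + dz * dz <= r * r:
                    needed.add((cx + dx, cy + dy, cz + dz))
    return needed


# When the camera crosses a chunk boundary, the set difference is what must be generated next.
before = chunks_in_view((0, 0, 0), radius_voxels=64)
after = chunks_in_view((40, 0, 0), radius_voxels=64)
to_generate = after - before
```

The 33 ms figure is simply 1000 / 30; how HY-World 2.0 actually splits that budget between generation and rendering is not something the release details covered here specify.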

175-language prompt support

1.0 only accepted English prompts. 2.0 plugs into Tencent's Hunyuan LLM for prompt understanding across 175 languages. You can prompt in Mandarin, French, Arabic, Hindi, or Indonesian and get equally coherent scenes.

Apache 2.0 license

1.0 was released under a research-only license that forbade commercial use. 2.0 is Apache 2.0, one of the most permissive mainstream open-source licenses. You can use HY-World 2.0 to generate commercial game assets, metaverse environments, film VFX, or product simulations without paying a cent to Tencent.

Industry-standard exports

HY-World 2.0 exports scenes to USD, USDZ, glTF, FBX, OBJ, and a custom .hyw format optimized for streaming. Native bridges to Unreal Engine 5, Unity 6, Blender 4.2, and Three.js ship in the same repository.
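For readers new to these formats, the sketch below shows roughly what a glTF export boils down to: a JSON document that points at binary vertex data. It is a hand-written, stdlib-only Python example that emits a single triangle following the glTF 2.0 specification; it is not HY-World 2.0's exporter, whose internals the article does not describe.

```python
import base64
import json
import struct


def triangle_gltf(vertices):
    """Build a minimal glTF 2.0 document containing one triangle mesh.

    `vertices` is a list of three (x, y, z) tuples. The geometry is embedded as a
    base64 data URI, so the result is a single self-contained .gltf JSON file.
    """
    # glTF stores vertex positions as little-endian 32-bit floats.
    flat = [coord for v in vertices for coord in v]
    blob = struct.pack(f"<{len(flat)}f", *flat)
    uri = "data:application/octet-stream;base64," + base64.b64encode(blob).decode("ascii")
    return {
        "asset": {"version": "2.0"},
        "scene": 0,
        "scenes": [{"nodes": [0]}],
        "nodes": [{"mesh": 0}],
        # Primitive mode defaults to 4 (TRIANGLES) when omitted.
        "meshes": [{"primitives": [{"attributes": {"POSITION": 0}}]}],
        "buffers": [{"uri": uri, "byteLength": len(blob)}],
        # target 34962 = ARRAY_BUFFER (vertex attributes)
        "bufferViews": [{"buffer": 0, "byteOffset": 0, "byteLength": len(blob), "target": 34962}],
        "accessors": [{
            "bufferView": 0,
            "componentType": 5126,  # FLOAT
            "count": len(vertices),
            "type": "VEC3",
            # POSITION accessors must declare per-axis bounds.
            "min": [min(v[i] for v in vertices) for i in range(3)],
            "max": [max(v[i] for v in vertices) for i in range(3)],
        }],
    }


doc = triangle_gltf([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)])
text = json.dumps(doc, indent=2)  # write this string to a .gltf file
```

Saved to disk, a file like this should open in Blender's glTF importer or Three.js's `GLTFLoader`; a real scene export adds normals, indices, materials, and many more nodes.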

How HY-World 2.0 actually works

The 42-page technical report on arXiv (HY-World 2.0: Compositional World Models for Text-to-3D Scene Generation) breaks the architecture into four stages.

Stage 1 — Scene graph decomposition

The prompt goes into the Hunyuan LLM, which returns a structured scene graph: objects, spatial relationships, material hints, lighting conditions, atmosphere. For the castle prompt, the graph would list: castle (medieval, stone, torches lit), cliff (tall, eroded), sea (stormy, dark), ravens (5 to 8, circling), weather (night, rain). This compositional decomposition is the key to coherence.
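A structured scene graph like the one described is easy to picture as plain data. The Python sketch below encodes the castle example; the class names, the relation labels ("on_top_of", "overlooks", "circles"), and the split of "night, rain" into separate environment fields are illustrative assumptions, not the schema from Tencent's technical report.

```python
from dataclasses import dataclass, field


@dataclass
class SceneObject:
    name: str
    attributes: list[str] = field(default_factory=list)
    count: tuple[int, int] = (1, 1)  # (min, max) number of instances


@dataclass
class Relation:
    subject: str
    predicate: str
    target: str


@dataclass
class SceneGraph:
    objects: list[SceneObject]
    relations: list[Relation]
    environment: dict[str, str]

    def object_names(self) -> set[str]:
        return {o.name for o in self.objects}

    def validate(self) -> None:
        """Raise if any relation refers to an object that is not in the graph."""
        names = self.object_names()
        for r in self.relations:
            if r.subject not in names or r.target not in names:
                raise ValueError(f"dangling relation: {r}")


castle_scene = SceneGraph(
    objects=[
        SceneObject("castle", ["medieval", "stone", "torches lit"]),
        SceneObject("cliff", ["tall", "eroded"]),
        SceneObject("sea", ["stormy", "dark"]),
        SceneObject("raven", ["circling"], count=(5, 8)),
    ],
    relations=[
        Relation("castle", "on_top_of", "cliff"),
        Relation("cliff", "overlooks", "sea"),
        Relation("raven", "circles", "castle"),
    ],
    environment={"time": "night", "weather": "rain"},
)
castle_scene.validate()
```

Because relations refer to named objects, a cheap check like `validate()` can catch an inconsistent decomposition before any expensive generation runs; whether HY-World 2.0 performs a similar check is not stated.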

Stage 2 — Voxel diffusion

The scene graph conditions a 3D diffusion model that generates a 1024-cube voxel field in roughly 45 seconds on an H100. Each voxel carries density, color, material, and physics properties. This is where the geometry comes from — not mesh generation, not NeRF, but native volumetric generation.
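Voxels that each carry density, color, material, and physics properties map naturally onto a NumPy structured array. In the sketch below, the field names, the types, and the single "friction" physics field are assumptions chosen for illustration. With this 8-byte record, a dense 1024^3 grid would need 8 GiB, so the example allocates only a 32^3 chunk.

```python
import numpy as np

# Hypothetical per-voxel record: field names and widths are assumptions,
# not the layout described in the HY-World 2.0 report.
VOXEL_DTYPE = np.dtype([
    ("density", np.float16),    # occupancy / opacity
    ("color", np.uint8, (3,)),  # RGB
    ("material", np.uint8),     # index into a material table
    ("friction", np.float16),   # one example physics property
])


def dense_grid_bytes(side: int, dtype: np.dtype = VOXEL_DTYPE) -> int:
    """Bytes needed to store a dense side^3 grid of voxel records."""
    return side ** 3 * dtype.itemsize


# A small chunk can be allocated directly; the full 1024^3 grid is only estimated.
chunk = np.zeros((32, 32, 32), dtype=VOXEL_DTYPE)
chunk["density"][10:20, 0:5, 10:20] = 1.0               # a solid slab, e.g. part of a wall
chunk["color"][10:20, 0:5, 10:20] = (120, 115, 105)     # grey stone
```

Field access such as `chunk["density"]` returns a view, so the assignments above write into the chunk in place. The article does not say how HY-World 2.0 actually lays out voxel memory.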

Stage 3 — Neural physics integration

A lightweight physics network runs on top of the voxel field to simulate dynamics in real time. It is not as accurate as a full PhysX solver, but it is fast and visually plausible — which is the right tradeoff for creative use cases.

Stage 4 — Streaming render

As the user navigates, a neural renderer streams mesh chunks to the game engine at 30 FPS. Each chunk is generated when it first comes into view and is then cached for consistency, so you can revisit a spot and see the same geometry.
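The caching behaviour in Stage 4 can be illustrated with a small memoizing cache. In this Python sketch, `sample_chunk` is a hypothetical stand-in for a generative model that returns a different random sample on every call. The cache ensures each chunk coordinate is generated only once, so a revisit returns identical geometry. This is an assumption about the general mechanism, not code from the HY-World 2.0 repository.

```python
import random

_rng = random.Random(42)


def sample_chunk(coord):
    """Hypothetical generator: every call returns a *new* random 'geometry' sample."""
    return tuple(round(_rng.random(), 6) for _ in range(4))


class ChunkCache:
    """Generate each chunk once and serve the stored copy on every revisit."""

    def __init__(self, generate):
        self._generate = generate
        self._store = {}
        self.misses = 0  # how many chunks actually had to be generated

    def get(self, coord):
        if coord not in self._store:
            self.misses += 1
            self._store[coord] = self._generate(coord)
        return self._store[coord]


cache = ChunkCache(sample_chunk)
first_visit = cache.get((0, 0, 0))
cache.get((1, 0, 0))
revisit = cache.get((0, 0, 0))  # same geometry as the first visit, no regeneration
```

The plain dict grows without bound. A real streamer would also need an eviction policy to cap memory.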

HY-World 2.0 vs World Labs vs Luma Genie 3

Four models dominate the text-to-3D-world category in 2026. Here is the honest read.

| Dimension | Tencent HY-World 2.0 | World Labs | Luma Genie 3 | NVIDIA Edify 3D |
|---|---|---|---|---|
| License | Apache 2.0 (open weights) | Closed, private beta | Closed, hosted API | Closed, enterprise |
| Weights available | Yes (HuggingFace) | No | No | No |
| Scene resolution | 1024^3 voxels | Undisclosed (high) | Undisclosed | Undisclosed |
| Native physics | Yes | Limited | Yes | Yes |
| Real-time navigation | 30 FPS on RTX 4090 | Cloud-streamed | Cloud-streamed | Cloud-streamed |
| Multi-language prompts | 175+ (Hunyuan LLM) | English only | English only | English only |
| Commercial use | Allowed, free | Paid (beta pricing TBD) | Per-scene credits | Enterprise contract |
| Hardware required | Single 24 GB GPU | Cloud only | Cloud only | Cloud or on-prem cluster |
| Exports | USD, glTF, FBX, OBJ, .hyw | Proprietary | Proprietary + glTF | USD, glTF |
| Best for | Indie devs, open-source modders, academic research | Long-horizon persistent worlds | Polished creative scenes | Enterprise digital twins |

| Best for | Indie devs, open-source modders, academic research | Long-horizon persistent worlds | Polished creative scenes | Enterprise digital twins |

The short version: HY-World 2.0 wins on openness and accessibility. World Labs still has the edge on long-horizon world consistency (their research pedigree shows). Luma Genie 3 wins on polish and artistic quality out of the box. NVIDIA Edify 3D wins on enterprise compliance. But if you cannot afford thousands of dollars a month in API credits or beta access fees, HY-World 2.0 is the only game in town — and it is remarkably close to the frontier.

Why open-sourcing this matters

This release fits a pattern. Over the last 18 months, Chinese AI labs have deliberately out-open-sourced their US counterparts: DeepSeek R2 dropped weights for a frontier reasoning model. Alibaba's Qwen 3.6 beat Gemma 4 on coding benchmarks with open weights. Tencent's Hunyuan LLM is on HuggingFace. Now Hunyuan World is too.

The strategic logic is clear. US labs (OpenAI, Anthropic, Google DeepMind) have the compute and data lead. Chinese labs can't win a pure frontier-model race, so they race on distribution instead. The bet: if our model is 90% as good but free and open, we capture the developer mindshare that shapes the next wave. DeepSeek's January 2025 release of R1 was the inflection point. Every major Chinese lab has since adopted the open-weights playbook.

For the 3D world generation space specifically, open-sourcing HY-World 2.0 has three immediate consequences:

- The price floor collapses. Luma Genie 3 charged per-scene credits. World Labs was going to charge beta users. Once HY-World 2.0 works well enough, charging for basic text-to-world generation becomes untenable.

- Indie game studios get 3D world generation. A 5-person studio in Warsaw or Jakarta can now generate custom game environments at near-zero marginal cost. This opens up procedural content categories that were previously only feasible on AAA budgets.

- Academic research accelerates. Every 3D vision lab in the world now has a frontier-quality baseline to build on. Expect a flood of fine-tunes, distillations, and architecture papers over the next 12 months.

Real use cases — what teams will actually build

Based on three days of GitHub issue reading and community Discord lurking since the April 15 release, here is what teams are already prototyping:

- Indie game environments. Solo devs on r/gamedev are generating 50+ unique dungeon scenes in an afternoon. Previously impossible without Unity asset store budgets.

- VFX previs. Small VFX houses are using HY-World 2.0 to generate rough environment passes for director previs, replacing a week of matte painting with 45 seconds of generation.

- Metaverse content. Roblox and VRChat creators are already fine-tuning on their style guides to auto-generate user worlds from text prompts.

- Simulation and training. Robotics labs are generating diverse training environments for RL agents at roughly 1,000x the rate of hand-built scenes.

- Architecture visualization. Early-stage architecture firms are prompting for concept spaces to show clients before committing to CAD work.

- Educational content. History teachers are generating explorable ancient Rome scenes, explorable Mars bases, explorable deep-ocean environments for classroom VR headsets.

What HY-World 2.0 is not ready for yet: AAA production assets (geometry detail is good but not mesh-perfect), highly specific IP (it will refuse prompts that violate Tencent's usage policy — copyrighted characters, for example), and real-world digital twins (the physics is visually plausible but not engineering-accurate).

Limitations we're watching

One week in, the open-source community has flagged four issues worth knowing before you commit:

- Consistency on very long exploration sessions. The 30 FPS streaming works beautifully for 5 to 10 minute sessions. Beyond 30 minutes, subtle geometry drift starts to appear when users revisit areas. World Labs is still better on this dimension.

- Copyright filter is aggressive. The model refuses any prompt that names a copyrighted franchise (Star Wars, Marvel, Disney). You can work around it with synonyms, but that takes prompt engineering.

- Mesh quality for AAA pipelines is not there yet. The voxel-to-mesh conversion produces meshes that are usable for game environments but require retopology for hero assets. Expect the community to ship better extractors within 30 days.

- VRAM requirements for fine-tuning. Running inference needs 24 GB. Fine-tuning needs 80 GB. If you want to train on your own art style, you need an H100 or multiple 4090s with DeepSpeed.

The ecosystem is moving fast

Within five days of the April 15 release, the open-source ecosystem around HY-World 2.0 exploded:

- A Blender plugin shipped on April 16.

- An Unreal Engine 5 integration repo hit 2,400 GitHub stars on April 17.

- An Automatic1111-style web UI, modeled on the popular Stable Diffusion front end, appeared on April 18.

- ComfyUI nodes for HY-World 2.0 dropped on April 19.

- Fine-tunes for medieval fantasy, cyberpunk, and Studio Ghibli-style aesthetics were already circulating by April 20.

This is the exact pattern Stable Diffusion triggered in 2D image generation in 2022. We're watching the same thing happen in 3D world generation, on a compressed timeline.

Who should care about HY-World 2.0

You should be installing and testing HY-World 2.0 this week if you are:

- An indie game developer who has ever said the words "I wish I could afford more environment artists".

- A VFX artist who does previs work and bills by the hour.

- A metaverse platform team building creator tools.

- A robotics ML engineer generating training environments.

- A 3D vision researcher who needs a state-of-the-art open baseline.

- An educator building immersive VR classroom content.

You can wait (or stay on closed alternatives) if you are:

- A AAA studio that already has a pipeline and can't afford mesh quality tradeoffs.

- An enterprise digital twin team that needs physics-accurate simulation for engineering.

- A large compliance-heavy org that can't run Chinese open-source models without legal review.

The bigger China open-source trend

HY-World 2.0 is not an isolated release. It is the latest chapter in a deliberate Chinese AI strategy that started with DeepSeek in December 2024. The pattern:

- Lab ships frontier-quality model.

- Lab releases weights under permissive license.

- Western community adopts the model because it's free.

- Lab captures mindshare and developer loyalty that closed US labs can't match.

- Repeat with a better model every 3 to 6 months.

DeepSeek R2 did this for reasoning models. Qwen 3.6 did this for coding. MiniMax's voice models did this for TTS. Kling did this for video generation. Tencent just did this for 3D worlds. The cumulative effect is that developers who learned their craft on closed US frontier models in 2023 are increasingly building production systems on open Chinese frontier models in 2026. That is a significant shift, and it is still accelerating.

What's next

Three things to watch over the next 90 days:

- Fine-tune explosion. Expect 100+ specialized HY-World 2.0 fine-tunes on HuggingFace by June 2026 — anime worlds, realistic cities, sci-fi environments, historical reconstructions.

- Distillation to smaller models. The 34 GB weights will get distilled to 8 GB and 2 GB versions that run on consumer laptops. Community researchers are already working on this.

- US response. World Labs has been sitting on its private beta for a year. Luma Genie 3 charges per scene. Neither pricing strategy survives a free open-weights competitor with 90% of the quality. Expect pricing changes or open-weights announcements from at least one US lab before Q3 2026.

Our verdict

HY-World 2.0 is to 3D generation what the original Stable Diffusion was to 2D: the biggest open-source release the category has seen. The physics, the streaming navigation, the 175-language prompt support, the Apache 2.0 license, the 24 GB VRAM inference footprint: every design choice says "we want developers to actually use this, not just benchmark it." That is the difference between a research paper and a product.

It is not perfect. Long-horizon consistency still lags World Labs. Mesh quality still lags hand-authored AAA assets. The copyright filter is aggressive in ways that will frustrate some use cases. None of that matters when the alternative is paying per-scene credits to a closed API.

Score: 9.2 out of 10. Half a point off for long-horizon drift. Three tenths off because fine-tuning requires an H100. If you build anything that touches 3D content — games, VFX, metaverse, simulation, education — clone the repo tonight. This is the moment the text-to-3D-world category went open-source and nothing about the next 12 months of the industry will be the same.


Frequently asked questions

When did Tencent release HY-World 2.0?

Tencent released HY-World 2.0 on April 15, 2026, with a simultaneous drop on GitHub and HuggingFace. The package includes model weights (roughly 34 GB), inference code, a 42-page arXiv technical report, and a permissive Apache 2.0 license.

Is HY-World 2.0 really free for commercial use?

Yes. HY-World 2.0 is released under Apache 2.0, one of the most permissive open-source licenses. You can use it to generate commercial game assets, metaverse environments, VFX content, or simulations without paying any license fees to Tencent. If you redistribute the model or code, you need to include a copy of the Apache 2.0 license and keep the original copyright and attribution notices.

What hardware does HY-World 2.0 need to run?

Inference runs on a single 24 GB VRAM GPU — any RTX 4090, RTX 3090, A10G, or equivalent. A 34 GB weights file loads in roughly 45 seconds. Fine-tuning requires 80 GB VRAM (H100) or multiple 24 GB cards with DeepSpeed. Real-time 30 FPS navigation is achievable on a single RTX 4090.

How does HY-World 2.0 compare to World Labs?

HY-World 2.0 wins on openness (Apache 2.0 vs closed beta), accessibility (public weights vs private), multi-language prompts (175+ vs English only), and hardware footprint (single GPU vs cloud-only). World Labs still has better long-horizon scene consistency for 30+ minute exploration sessions. For most indie and research use cases, HY-World 2.0 is the right choice.

How does HY-World 2.0 compare to Luma Genie 3?

Luma Genie 3 has slightly better artistic polish out of the box and a hosted API that is easy to integrate. HY-World 2.0 has open weights, Apache 2.0 license, native physics, 175-language prompts, and a single-GPU inference footprint. If you can run your own GPU and want to fine-tune, HY-World 2.0 wins. If you want a zero-ops hosted API and are willing to pay per-scene credits, Luma is still viable.

What game engines does HY-World 2.0 support?

HY-World 2.0 ships with native bridges to Unreal Engine 5, Unity 6, Blender 4.2, and Three.js. It exports scenes to industry-standard USD, USDZ, glTF, FBX, and OBJ formats, plus a custom .hyw streaming format. The community shipped a Blender plugin within 24 hours of release and an Unreal Engine 5 integration with 2,400 GitHub stars within 48 hours.

What are HY-World 2.0's biggest limitations?

Four known limitations: (1) long-horizon consistency drifts after 30+ minute navigation sessions; (2) the copyright filter aggressively refuses prompts mentioning named franchises; (3) voxel-to-mesh quality requires retopology for AAA hero assets; (4) fine-tuning requires 80 GB VRAM. None of these block standard indie dev, research, or previs use cases.

Does HY-World 2.0 support languages other than English?

Yes. HY-World 2.0 uses Tencent's Hunyuan LLM for prompt understanding and supports 175+ languages including Mandarin, French, Spanish, Arabic, Hindi, Indonesian, Japanese, Korean, German, and Portuguese. Generated scenes are equally coherent across major languages, which is a significant advantage over World Labs, Luma Genie 3, and NVIDIA Edify 3D, all of which are English-only.

Can HY-World 2.0 generate physics-accurate simulations?

Not for engineering use cases. HY-World 2.0 includes a lightweight neural physics network that produces visually plausible dynamics — rigid bodies roll, cloth drapes, fluids flow, wind blows — but it is not as accurate as a full PhysX or Havok solver. For creative use (games, VFX, previs) this is perfect. For digital twins or engineering simulation, you still need dedicated physics tools on top of the generated geometry.

Why is Tencent open-sourcing this instead of charging for it?

Tencent is following the same strategic playbook as DeepSeek, Alibaba Qwen, and other Chinese AI labs: out-open-source the closed US frontier to capture developer mindshare. If HY-World 2.0 becomes the de facto open-weights baseline for 3D world generation, Tencent captures platform value (cloud API hosting, Hunyuan LLM adoption, enterprise services) even without licensing the model itself. Free weights are a customer acquisition strategy for the broader Tencent Cloud ecosystem.