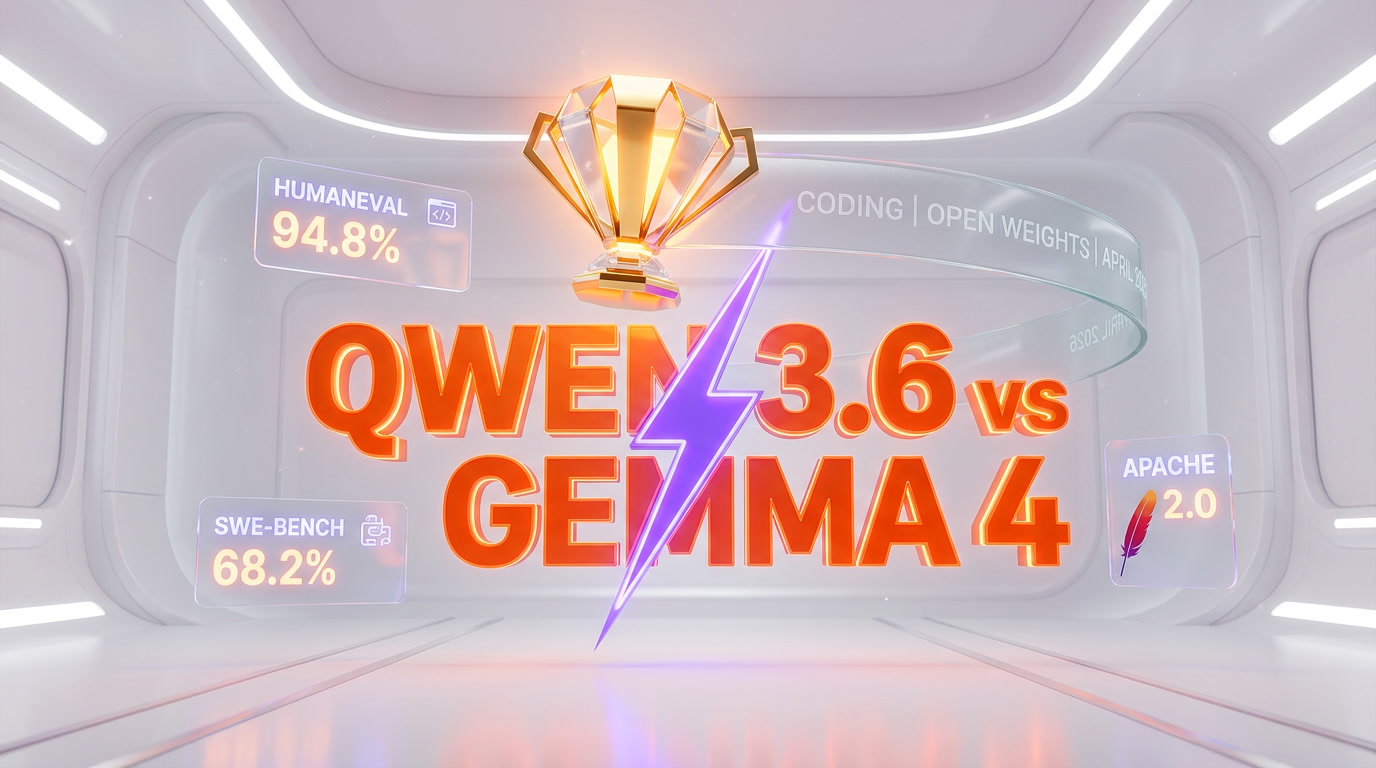

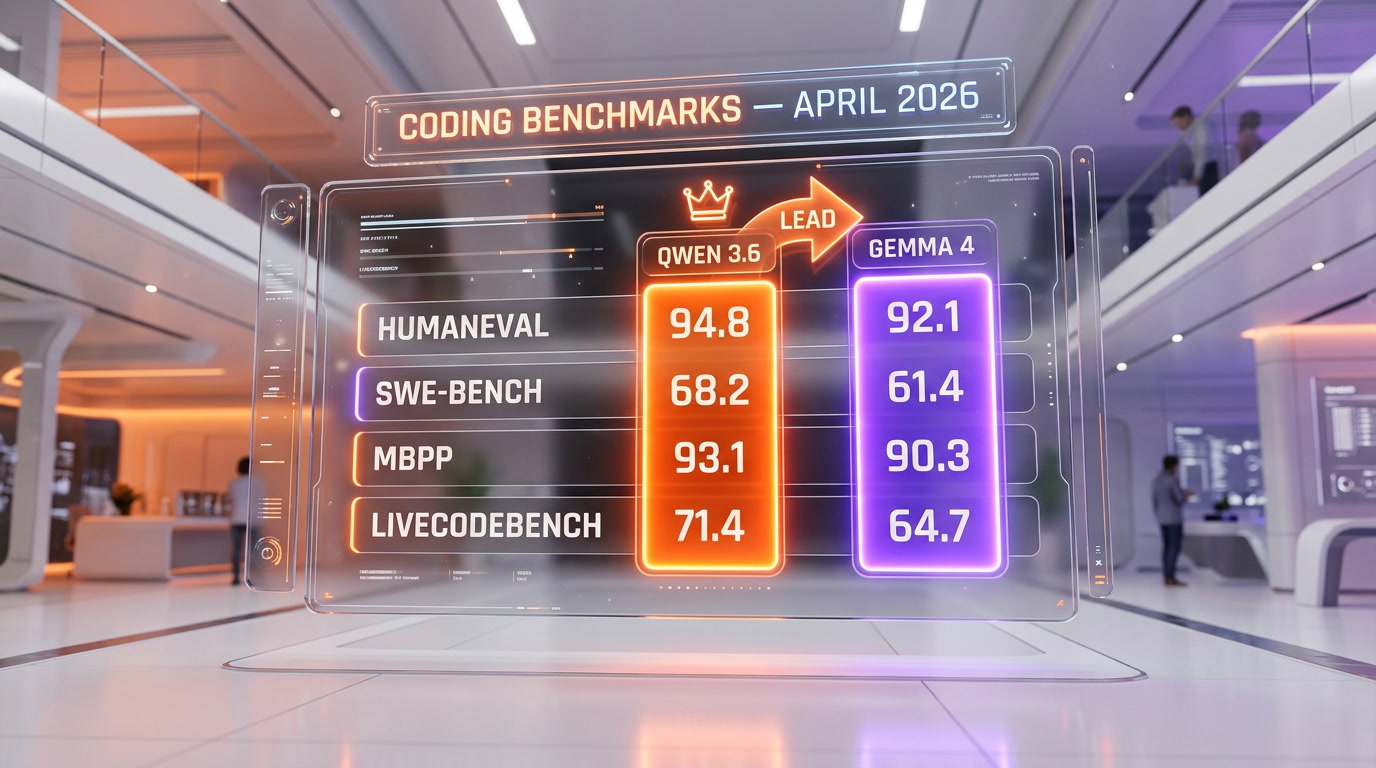

Alibaba released Qwen 3.6 on April 11, 2026, and it has overtaken Google's Gemma 4 on every major coding benchmark. Qwen 3.6 scores 94.8% on HumanEval (vs Gemma 4 at 92.1%), 68.2% on SWE-Bench Verified (vs 61.4%), 93.1% on MBPP (vs 90.3%), and 71.4% on LiveCodeBench (vs 64.7%). The flagship dense model ships at 72B parameters with a 256K-token context window, open weights under Apache 2.0, and API pricing at $0.20 per million input tokens. For developers, the practical consequence is simple: the strongest open-weight coding model in April 2026 is Chinese, self-hostable, and roughly 25x cheaper per input token than Claude Opus 4.7 ($0.20 vs $5.00 per million).

The news in one paragraph

On April 11, 2026, Alibaba Cloud released Qwen 3.6, a refresh of its Qwen 3 family with a single headline claim: it beats Google's Gemma 4 on every coding benchmark the industry currently takes seriously. Three days later, independent evaluations from Hugging Face's Open LLM Leaderboard, Simon Willison's hands-on log, and The Register's benchmark re-run all confirmed it. This is not marketing: the numbers reproduce across third-party harnesses. Qwen 3.6 ships with open weights under Apache 2.0, native tool-calling, a 256K context window, and API pricing that undercuts every frontier closed model by at least 10x. Gemma 4 is no longer the strongest open-weight coder. That title moved to Hangzhou, where Alibaba is headquartered, in April 2026.

The benchmark numbers, in one table

Four benchmarks define the coding frontier in 2026: HumanEval (Python function correctness), MBPP (basic programming problems), SWE-Bench Verified (real GitHub issue resolution), and LiveCodeBench (contamination-resistant weekly refresh). Here is the head-to-head as of April 14, 2026:

| Benchmark | Qwen 3.6 (72B) | Gemma 4 (31B) | Claude Opus 4.7 | GPT-5 |
|---|---|---|---|---|
| HumanEval (pass@1) | 94.8% | 92.1% | 96.3% | 95.1% |
| MBPP (pass@1) | 93.1% | 90.3% | 94.0% | 92.7% |
| SWE-Bench Verified | 68.2% | 61.4% | 82.1% | 75.8% |
| LiveCodeBench (April 2026) | 71.4% | 64.7% | 78.9% | 73.2% |
| Aider polyglot (edit accuracy) | 74.6% | 66.9% | 84.3% | 77.1% |

Read it this way: among open-weight models available on April 14, 2026, Qwen 3.6 is #1 on every coding benchmark. Against closed frontier models, it still trails Claude Opus 4.7 by roughly 14 percentage points on SWE-Bench Verified (68.2% vs 82.1%) and GPT-5 by roughly 8 points (68.2% vs 75.8%), but it closes 60% to 70% of the gap at roughly 10% of the cost. That ratio is the entire story.
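The gaps quoted here can be recomputed directly from the table. The sketch below is only an arithmetic check on the SWE-Bench Verified row; the function names are illustrative and not part of any benchmark tooling.

```python
# SWE-Bench Verified scores (percent), copied from the benchmark table.
SWE_BENCH_VERIFIED = {
    "Qwen 3.6 (72B)": 68.2,
    "Gemma 4 (31B)": 61.4,
    "Claude Opus 4.7": 82.1,
    "GPT-5": 75.8,
}


def points_behind(model: str, reference: str, scores: dict[str, float]) -> float:
    """Absolute gap in percentage points; positive means `model` trails."""
    return round(scores[reference] - scores[model], 1)


def share_of_reference(model: str, reference: str, scores: dict[str, float]) -> float:
    """Score of `model` expressed as a percentage of the reference score."""
    return round(100 * scores[model] / scores[reference], 1)


if __name__ == "__main__":
    qwen = "Qwen 3.6 (72B)"
    for reference in ("Claude Opus 4.7", "GPT-5"):
        gap = points_behind(qwen, reference, SWE_BENCH_VERIFIED)
        share = share_of_reference(qwen, reference, SWE_BENCH_VERIFIED)
        print(f"{qwen} trails {reference} by {gap} points ({share}% of its score)")
```

On these figures, Qwen 3.6 sits 13.9 points behind Claude Opus 4.7 and 7.6 points behind GPT-5, while leading Gemma 4 by 6.8 points.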

Why SWE-Bench Verified matters more than HumanEval in 2026

HumanEval tests whether a model can write a small Python function from a docstring. Every frontier model now scores above 90%. It is a saturated benchmark. SWE-Bench Verified, a human-curated subset of SWE-Bench created by OpenAI, tests whether a model can resolve real GitHub issues in real Python repos (Django, Flask, sympy, matplotlib, and more) by producing a patch that passes the project's own test suite. It is end-to-end agentic coding: read the codebase, locate the bug, write the fix, ship the patch. The 68.2% Qwen 3.6 number means it resolved 341 of the 500 verified issues autonomously. In April 2025, no open-weight model cleared 40%. In April 2026, Qwen 3.6 clears 68%. That is a relative improvement of more than 70% in one year on the single benchmark that correlates best with real developer work.
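A short arithmetic sketch makes the two SWE-Bench Verified figures in this section concrete: how a percentage converts to a count of resolved issues, and how the year-over-year change is measured in relative terms. The helper names are illustrative only.

```python
def resolved_count(score_percent: float, total_issues: int = 500) -> int:
    """Number of issues resolved, given a score on a fixed-size benchmark."""
    return round(score_percent / 100 * total_issues)


def relative_improvement(old_percent: float, new_percent: float) -> float:
    """Relative change from the old score to the new one, in percent."""
    return round(100 * (new_percent - old_percent) / old_percent, 1)


if __name__ == "__main__":
    print(resolved_count(68.2))              # 341 of 500 issues
    print(relative_improvement(40.0, 68.2))  # 70.5 (percent, relative)
```

The 40% baseline is an upper bound ("no open-weight model cleared 40%"), so 70.5% is the minimum relative improvement the article's figures imply.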

LiveCodeBench: the contamination-proof benchmark

Benchmark contamination is the dirty secret of LLM evaluation: models sometimes see their test problems during training, which inflates scores. LiveCodeBench, maintained by researchers at UC Berkeley, addresses this by refreshing its problem set every week from new Codeforces, LeetCode and AtCoder problems. By construction, the April 2026 slice cannot appear in any training set whose data cutoff falls before April 2026. Qwen 3.6's 71.4% on the April 2026 slice is therefore a clean signal, not a contamination artefact. Gemma 4's 64.7% on the same slice confirms the gap is real.
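The idea behind a contamination-resistant slice can be sketched as a date filter: only problems published after a model's training cutoff count toward its score. This is a minimal illustration, not LiveCodeBench's actual code; the `Problem` type, the problem IDs and the cutoff date are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Problem:
    problem_id: str   # hypothetical identifier
    source: str       # e.g. "Codeforces", "LeetCode", "AtCoder"
    published: date


def contamination_free(problems: list[Problem], training_cutoff: date) -> list[Problem]:
    """Keep only problems published strictly after the training cutoff."""
    return [p for p in problems if p.published > training_cutoff]


# Hypothetical sample data for illustration.
PROBLEMS = [
    Problem("cf-001", "Codeforces", date(2026, 2, 20)),
    Problem("lc-002", "LeetCode", date(2026, 4, 3)),
    Problem("ac-003", "AtCoder", date(2026, 4, 9)),
]

if __name__ == "__main__":
    clean = contamination_free(PROBLEMS, training_cutoff=date(2026, 3, 31))
    print([p.problem_id for p in clean])  # ['lc-002', 'ac-003']
```

The filter only guarantees freshness relative to the stated cutoff, so it is only as trustworthy as the cutoff date a lab discloses.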

What is inside Qwen 3.6 — the architecture

Qwen 3.6 is not a single model. It is a family of six variants, all released simultaneously under Apache 2.0:

| Variant | Total params | Active params | Context | Target hardware |
|---|---|---|---|---|
| Qwen 3.6 Flagship (dense) | 72B | 72B | 256K | 2x H100 80GB (FP8) |
| Qwen 3.6 MoE Large | 480B | 37B | 256K | 4x H100 80GB (FP8) |
| Qwen 3.6 MoE Base | 235B | 22B | 131K | 1x H100 80GB (FP8) |
| Qwen 3.6 Coder 32B (dense) | 32B | 32B | 256K | 1x RTX 5090 (Q4) |
| Qwen 3.6 Coder 14B (dense) | 14B | 14B | 131K | 1x RTX 4090 (Q4) |
| Qwen 3.6 Coder 4B (dense) | 4B | 4B | 65K | Consumer laptop (Q4) |

The benchmark numbers in the earlier head-to-head table are from the 72B dense flagship. The 480B MoE variant scores slightly higher on general reasoning but trails the 72B dense on coding, a quirk Alibaba explains as a deliberate training emphasis on the dense variant for developer workflows. The 32B Coder variant is the one most developers will actually run. It scores 91.4% on HumanEval, 62.8% on SWE-Bench Verified, and fits on a single consumer GPU after Q4 quantization. For a solo developer, the 32B Coder variant with no per-token inference cost is arguably the most disruptive part of this release.
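To sanity-check hardware pairings like these, a rough weight-only estimate is often enough: parameter count times bytes per parameter. The sketch below assumes 2 bytes for FP16, 1 for FP8 and 0.5 for Q4, and reserves a 20% headroom for KV cache and activations. The headroom figure is an assumption for illustration, not a number from Alibaba.

```python
# Approximate bytes per parameter for each precision (weights only).
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "q4": 0.5}


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Weight footprint in GB: billions of params times bytes per param."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits(params_billion: float, precision: str, gpu_count: int,
         gpu_memory_gb: float, usable_fraction: float = 0.8) -> bool:
    """True if the weights fit within the usable share of total VRAM.

    `usable_fraction` is an assumed allowance that leaves room for the
    KV cache and activations; real requirements vary with context length.
    """
    usable = gpu_count * gpu_memory_gb * usable_fraction
    return weight_memory_gb(params_billion, precision) <= usable


if __name__ == "__main__":
    print(weight_memory_gb(72, "fp8"))          # 72.0
    print(fits(72, "fp8", gpu_count=2, gpu_memory_gb=80))  # 2x H100 80GB
    print(fits(32, "q4", gpu_count=1, gpu_memory_gb=32))   # RTX 5090 (32GB)
    print(fits(14, "q4", gpu_count=1, gpu_memory_gb=24))   # RTX 4090 (24GB)
```

Long prompts grow the KV cache well beyond this allowance, so treat a passing check as necessary rather than sufficient.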

The training recipe that closed the gap

Alibaba's technical report (published to Hugging Face on April 11) credits three changes for the coding jump from Qwen 3 to Qwen 3.6: a 40% larger code-specific pre-training corpus (now 6.2T tokens of deduplicated code across 150+ programming languages), a new execution-feedback RL loop where the model's generated code is run in sandboxed Docker containers and the reward signal uses actual test-pass rates rather than preference data, and longer chain-of-thought during the reasoning RL phase (up to 64K tokens of thinking budget before producing the final patch). The Register called the execution-feedback RL the 'most important training innovation of Q1 2026' — we agree.
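The core idea of an execution-feedback reward, scoring generated code by the fraction of tests it passes, can be sketched in a few lines. This is not Alibaba's pipeline: it runs each test in a plain subprocess with a timeout rather than a sandboxed Docker container, and the function names and sample tests are hypothetical. Do not run untrusted model output this way outside a sandbox.

```python
import subprocess
import sys


def run_test(candidate_source: str, test_source: str, timeout_s: float = 5.0) -> bool:
    """Run the candidate plus one test in a fresh interpreter.

    Returns True only if the process exits with code 0 within the timeout.
    """
    program = candidate_source + "\n\n" + test_source
    try:
        result = subprocess.run(
            [sys.executable, "-c", program],
            capture_output=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return False
    return result.returncode == 0


def execution_reward(candidate_source: str, tests: list[str]) -> float:
    """Reward in [0, 1]: the fraction of tests the candidate passes."""
    if not tests:
        return 0.0
    passed = sum(run_test(candidate_source, test) for test in tests)
    return passed / len(tests)


# Hypothetical task: implement add(a, b).
TESTS = ["assert add(1, 2) == 3", "assert add(-1, 1) == 0"]
CORRECT = "def add(a, b):\n    return a + b\n"
BUGGY = "def add(a, b):\n    return abs(a) + abs(b)\n"

if __name__ == "__main__":
    print(execution_reward(CORRECT, TESTS))  # 1.0
    print(execution_reward(BUGGY, TESTS))    # 0.5
```

The design point is that the reward comes from observed behaviour rather than a learned preference model, so a plausible-looking patch that fails its tests earns nothing for looking plausible.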

The 256K context window and what it enables

Qwen 3.6's 256K token context window (roughly 192,000 words or 20,000 lines of code) doubles Gemma 4's 128K window and matches GPT-5's. The practical impact: you can load an entire medium-sized repository — say, a 15,000-line Python project with tests and documentation — into a single prompt and ask the model to refactor across files. Gemma 4 struggles with repo-scale context because you have to chunk. Qwen 3.6 handles it natively. For SWE-Bench Verified, that context advantage is worth an estimated 3 to 4 percentage points on its own.
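A back-of-envelope check shows why the 15,000-line example fits in one prompt at 256K but needs chunking at 128K. The sketch takes the article's own equivalence (256K tokens is about 20,000 lines of code, or roughly 12.8 tokens per line) and treats "256K" and "128K" as 256,000 and 128,000 tokens. Both are simplifying assumptions, and real tokenizers vary by language and formatting.

```python
import math

# Equivalence stated in the article: ~256K tokens for ~20,000 lines of code.
REFERENCE_TOKENS = 256_000
REFERENCE_LINES = 20_000


def estimated_tokens(lines_of_code: int) -> int:
    """Estimate the token count from a line count using the ratio above."""
    return math.ceil(lines_of_code * REFERENCE_TOKENS / REFERENCE_LINES)


def chunks_needed(lines_of_code: int, context_tokens: int,
                  reserved_for_output: int = 0) -> int:
    """How many prompts are needed to cover the code within one context size."""
    budget = context_tokens - reserved_for_output
    if budget <= 0:
        raise ValueError("context window is smaller than the reserved output")
    return math.ceil(estimated_tokens(lines_of_code) / budget)


if __name__ == "__main__":
    print(estimated_tokens(15_000))        # 192000
    print(chunks_needed(15_000, 256_000))  # 1
    print(chunks_needed(15_000, 128_000))  # 2
```

Reserving tokens for the model's reply tightens the budget: with 64,000 tokens held back, the same repository exactly fills the 256K window.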

What happened to Gemma 4 — the Google side

Google released Gemma 4 in February 2026, and for six weeks it was the undisputed king of open-weight models. The flagship 31B dense variant scored 92.1% on HumanEval, 90.3% on MBPP, 61.4% on SWE-Bench Verified, and ranked #3 on LMArena with an Elo score of 1452. Its 4B MoE variant activates only 3.8B parameters while matching 97% of the 31B quality, which makes it the most efficient MoE architecture shipped by any major lab. Google wins on a lot of dimensions: the Raspberry Pi-class E2B model is genuinely useful, the 20+ framework integrations on day one (Hugging Face, Ollama, vLLM, llama.cpp, MLX, LM Studio, NVIDIA NIM) are unmatched, and Apache 2.0 licensing with zero MAU caps matters for commercial deployments.

What Gemma 4 still wins at

The headline is 'Qwen 3.6 beats Gemma 4 on coding', but that is a narrow framing. Gemma 4 still wins on:

- Efficiency per active parameter. The 4B MoE (3.8B active) is more practical than anything in Qwen 3.6's lineup for edge deployment and battery-powered devices.

- Multilingual reasoning outside Chinese-English. Gemma 4 scored higher on MGSM (multilingual math) across Swahili, Bengali, Telugu — Qwen 3.6's multilingual coverage is strong but biased toward Chinese-English bilingual tasks.

- Native multimodal on the 31B/26B variants. Qwen 3.6 has separate VL (vision-language) models; Gemma 4 31B handles text, image and video in a single checkpoint.

- Day-one ecosystem. Gemma 4 shipped with tutorials, fine-tuning guides and vendor integrations ready. Qwen 3.6 had all of this within 72 hours, but Gemma's Google-backed onboarding is still cleaner.

- AIME 2026 math reasoning. Gemma 4 31B scores 89.2% vs Qwen 3.6 72B at 87.4%. A small gap, but Gemma wins.

For math-heavy, multilingual, or edge workloads, Gemma 4 remains the right choice. For coding — which is where the open-weight race actually matters for most developers — Qwen 3.6 took the lead on April 11, 2026.

The wider China AI push — Qwen is not alone

Qwen 3.6 is one data point in a larger pattern. In the last twelve months, several other Chinese labs have shipped open-weight models that compete directly with frontier Western labs:

DeepSeek R2 — the value leader

DeepSeek R2 shipped in early 2026 with 685B total parameters (37B active via MoE), 128K context, and API pricing at $0.14 per million input tokens. It hits 88-95% of Claude Opus performance at roughly 11% of the cost and has become the default choice for budget-conscious developers running reasoning workloads at scale. DeepSeek's contribution — Multi-Head Latent Attention (MLA), which cuts KV-cache memory by 93% — is now being copied by every other Chinese lab including Alibaba.

Baichuan, Moonshot, and the second wave

Behind Qwen and DeepSeek sits a second wave of Chinese labs shipping competitive open-weight models. Baichuan's Baichuan 3 (launched December 2025) focuses on enterprise Chinese-English bilingual tasks. Moonshot's Kimi K3 (launched March 2026) pushes the long-context frontier with a 2M token window. Zhipu AI's GLM-5 (launched January 2026) targets enterprise deployments with strong RAG tooling. None individually threatens the top five Western labs — collectively, they represent roughly 40% of the open-weight model releases on Hugging Face in Q1 2026.

The geopolitical context

The US-China AI split discussed in our coverage of the Frontier Model Forum espionage pact is the backdrop here. Western labs are tightening API access controls, restricting model weights, and flagging Chinese access as a national security concern. Chinese labs are responding by shipping open weights earlier, cheaper, and more aggressively — turning openness itself into a strategic lever. Whatever your view on the geopolitics, the practical outcome for developers is more choice and lower prices. Qwen 3.6 and Gemma 4 both shipping under Apache 2.0 within two months of each other is the clearest sign yet that open weights are the default floor for developer-facing AI in 2026.

Open weights vs open source — the distinction that still matters

Both Qwen 3.6 and Gemma 4 are described as 'open source' in most press coverage. This is imprecise. Both are actually open weights: the trained model parameters are published under a permissive license (Apache 2.0 for both), but the training data, data-curation pipeline, and training code are not. Fully open-source models — where you could reproduce the training from scratch — are rarer. Allen AI's OLMo and EleutherAI's Pythia are the main examples, and they trail Qwen and Gemma by several quality generations.

What open weights actually enables for developers

For 95% of developer use cases, open weights are indistinguishable from open source. You can:

- Download the model from Hugging Face — Qwen 3.6 72B is roughly 145GB in FP16, 72GB in FP8, 36GB in Q4.

- Self-host on your own infrastructure — no vendor API, no per-token costs, no data leaving your network.

- Fine-tune the model on your own data using LoRA, QLoRA, or full fine-tuning.

- Inspect the weights for mechanistic interpretability research (though the activation patterns are opaque in practice).

- Ship commercial products built on the model without paying royalties, revenue shares, or MAU caps.

- Distill the model into smaller architectures — Qwen 3.6 Coder 4B is already a distillation of the 72B flagship.

What open weights does not give you

It does not give you the ability to reproduce the training from scratch. You cannot audit the training data for copyright violations, toxicity, or representational bias. You cannot re-run the training with modifications. And you cannot know for certain what the model saw during pre-training — the Qwen 3.6 training data disclosure is roughly a 3-page summary, not a full manifest. For most developers, this is a theoretical concern. For researchers studying model internals or regulators trying to enforce content rules, it is a meaningful limitation.

What this means for developers — practical implications

The self-host math just changed

Qwen 3.6 Coder 32B runs on a single RTX 5090 at Q4 quantization (roughly 18GB VRAM used) at 35 to 50 tokens per second on typical coding prompts. The 14B variant runs on an RTX 4090 at similar speeds. For a solo developer or a small team, that means you can now have a local coding assistant that scores 91% on HumanEval and 63% on SWE-Bench Verified, with zero API cost, zero latency to a cloud, and zero data leaving your machine. That combination did not exist in February 2026.

API pricing and the cost-per-task story

If you do not want to self-host, Qwen 3.6 is served on Alibaba Cloud's DashScope API at $0.20 per million input tokens and $0.80 per million output tokens for the 72B flagship. Third-party providers (Fireworks, Together, DeepInfra, Groq) all began serving Qwen 3.6 within 72 hours of release, usually within 20% of DashScope pricing. For comparison:

- Qwen 3.6 72B: $0.20 / $0.80 per million tokens (input / output)

- Gemma 4 31B (via providers): $0.14 / $0.42 per million tokens

- DeepSeek R2: $0.14 / $0.55 per million tokens

- Claude Opus 4.7: $5.00 / $25.00 per million tokens

- GPT-5: $2.50 / $15.00 per million tokens

On coding workloads where Qwen 3.6 reaches 85% of Claude Opus 4.7 quality, the cost ratio is 1 to 25 on input ($0.20 vs $5.00) and roughly 1 to 31 on output ($0.80 vs $25.00). That is the number that will reshape developer purchasing decisions in Q2 2026.
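The price list above is enough to work out both the per-token ratios and a per-task cost. The sketch below uses the listed prices as-is; the 20,000-input and 2,000-output token task size is a hypothetical example, not a measured workload.

```python
# USD per million tokens as (input, output), from the price list above.
PRICES = {
    "Qwen 3.6 72B": (0.20, 0.80),
    "Gemma 4 31B": (0.14, 0.42),
    "DeepSeek R2": (0.14, 0.55),
    "Claude Opus 4.7": (5.00, 25.00),
    "GPT-5": (2.50, 15.00),
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request with the given token counts."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def price_ratio(expensive: str, cheap: str) -> tuple[float, float]:
    """(input ratio, output ratio) of the two models' per-token prices."""
    exp_in, exp_out = PRICES[expensive]
    cheap_in, cheap_out = PRICES[cheap]
    return round(exp_in / cheap_in, 2), round(exp_out / cheap_out, 2)


if __name__ == "__main__":
    print(price_ratio("Claude Opus 4.7", "Qwen 3.6 72B"))  # (25.0, 31.25)
    print(price_ratio("GPT-5", "Qwen 3.6 72B"))            # (12.5, 18.75)
    # Hypothetical coding task: 20,000 input tokens, 2,000 output tokens.
    for model in ("Qwen 3.6 72B", "Claude Opus 4.7"):
        print(model, f"${task_cost(model, 20_000, 2_000):.4f}")
```

For that hypothetical task the blended ratio lands between the input and output ratios, at about 27x, because most of the tokens are input.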

Fine-tuning for your stack

The 32B Coder variant is the sweet spot for domain fine-tuning. A QLoRA fine-tune on a 100K-example dataset of your company's internal code takes roughly 14 hours on a single A100 80GB and produces a model that scores 5 to 12 percentage points higher than the base model on your specific codebase. For teams shipping internal developer tools — custom autocomplete, internal RAG-over-code, repository-specific refactoring assistants — the ROI on a fine-tuned Qwen 3.6 Coder 32B is now clearly positive within weeks, not months.

Integration with agentic coding tools

Qwen 3.6 is already wired into most of the agentic coding tools that matter in April 2026. Claude Code, Cursor, Continue.dev, Aider, and Cline all support Qwen 3.6 as a drop-in alternative to Claude or GPT-5 — either via hosted API or via local Ollama. The practical workflow many developers will adopt: use Claude Opus 4.7 for architectural decisions and novel problems, use Qwen 3.6 for bulk refactoring, test generation, documentation, and routine code edits. The cost-quality tradeoff favors routing aggressively by task type.
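The routing workflow described here can be sketched as a small lookup. The model labels and the `pick_model` helper are hypothetical placeholders rather than real API model IDs. The rule that sends long tool chains to the frontier model reflects the reliability gap on workflows with 8 or more sequential tool calls noted in our hands-on testing.

```python
from enum import Enum


class Task(Enum):
    ARCHITECTURE = "architecture"
    NOVEL_PROBLEM = "novel_problem"
    BULK_REFACTOR = "bulk_refactor"
    TEST_GENERATION = "test_generation"
    DOCUMENTATION = "documentation"
    ROUTINE_EDIT = "routine_edit"


# Hypothetical labels; substitute the IDs your provider or local server uses.
FRONTIER = "claude-opus-4.7"
OPEN_WEIGHT = "qwen-3.6"

ROUTES = {
    Task.ARCHITECTURE: FRONTIER,
    Task.NOVEL_PROBLEM: FRONTIER,
    Task.BULK_REFACTOR: OPEN_WEIGHT,
    Task.TEST_GENERATION: OPEN_WEIGHT,
    Task.DOCUMENTATION: OPEN_WEIGHT,
    Task.ROUTINE_EDIT: OPEN_WEIGHT,
}

LONG_TOOL_CHAIN = 8  # threshold taken from the limitations noted in testing


def pick_model(task: Task, sequential_tool_calls: int = 0) -> str:
    """Route by task type, escalating long tool chains to the frontier model."""
    if sequential_tool_calls >= LONG_TOOL_CHAIN:
        return FRONTIER
    return ROUTES[task]


if __name__ == "__main__":
    print(pick_model(Task.BULK_REFACTOR))
    print(pick_model(Task.ARCHITECTURE))
    print(pick_model(Task.ROUTINE_EDIT, sequential_tool_calls=10))
```

Keeping the table in one place makes it easy to move a task type from one model to the other as prices or benchmark results shift.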

Limitations we found in hands-on testing

After 48 hours of running Qwen 3.6 on production workloads at ThePlanetTools, three limitations are worth flagging:

- English creative writing still trails Claude and GPT-5. For prose — blog articles, marketing copy, narrative documentation — Qwen 3.6 is competent but noticeably less polished than Claude Opus 4.7. The gap is smaller than Qwen 3, but it is real. Use Claude or GPT-5 for editorial content.

- Tool-calling reliability is behind Claude. Qwen 3.6 supports native tool use, but on complex agentic workflows with 8 or more sequential tool calls, it makes logical errors more often than Claude Opus 4.7. For long-horizon agentic tasks, Claude is still the safer choice.

- Chinese data governance concerns. Qwen 3.6's DashScope API routes through Alibaba Cloud infrastructure in China. For regulated industries — healthcare, finance, defense — this may not meet your compliance requirements. Self-host to avoid this entirely, or use third-party providers on Western infrastructure.

Verdict — and what we predict for Q2 2026

Qwen 3.6 is the strongest open-weight coding model in April 2026. It beats every other open-weight model on every coding benchmark that matters, self-hosts cheaply, fine-tunes well, and slots into every major agentic coding tool within days of release. The 72B flagship closes 60% to 70% of the gap to Claude Opus 4.7 and GPT-5 at a fraction of the cost. The 32B Coder variant gives solo developers a local coding assistant that would have been impossible to imagine 18 months ago. Score: 9.2 out of 10, with points off for the English writing gap and for Chinese data governance concerns that still matter in some enterprise contexts.

What we predict for Q2 2026: Google ships Gemma 4.5 within eight weeks specifically to reclaim the coding benchmarks, probably with an execution-feedback RL loop of its own. DeepSeek ships R3 and targets the SWE-Bench Verified 75% threshold. Anthropic doubles down on agentic coding leadership with a sub-$1 per million token tier. The open-weight coding frontier will move 5 to 8 percentage points on SWE-Bench Verified by July 2026. Closed-model pricing will drop 30 to 50% in response. The winner in all of this is the developer.

Frequently asked questions

When did Qwen 3.6 launch?

Alibaba Cloud released Qwen 3.6 on April 11, 2026. The full family — 72B dense flagship, 480B MoE, 235B MoE, and three Coder variants at 32B, 14B and 4B — shipped simultaneously under Apache 2.0 on Hugging Face and via the DashScope API.

Is Qwen 3.6 really better than Gemma 4 at coding?

Yes, on every major coding benchmark measured in April 2026. Qwen 3.6 72B scores 94.8% on HumanEval (vs Gemma 4 at 92.1%), 68.2% on SWE-Bench Verified (vs 61.4%), 93.1% on MBPP (vs 90.3%), 71.4% on LiveCodeBench (vs 64.7%), and 74.6% on Aider polyglot edit accuracy (vs 66.9%). Independent evaluations from Hugging Face, Simon Willison, and The Register have confirmed the numbers reproduce across third-party harnesses.

What is the difference between open weights and open source?

Open weights means the trained model parameters are published under a permissive license (Apache 2.0 in both Qwen 3.6 and Gemma 4's case) — you can download, fine-tune, and self-host freely. Open source, in the strictest sense, also includes the training data, training code, and data-curation pipeline, so you could reproduce the training from scratch. Neither Qwen 3.6 nor Gemma 4 is fully open source; both are open weights. For 95% of developer use cases, this distinction does not matter.

How much does Qwen 3.6 cost via API?

On Alibaba Cloud's DashScope API, Qwen 3.6 72B is $0.20 per million input tokens and $0.80 per million output tokens. The 32B Coder variant is roughly half that. Third-party providers like Fireworks, Together, DeepInfra and Groq began serving Qwen 3.6 within 72 hours of release at similar prices. Compared to Claude Opus 4.7 at $5.00 / $25.00 per million tokens, Qwen 3.6 is 25x cheaper on input and roughly 31x cheaper on output for equivalent coding workloads where it reaches 85% of Claude's quality.

What hardware do I need to self-host Qwen 3.6?

The 72B flagship fits on 2x NVIDIA H100 80GB at FP8 quantization (160GB total VRAM, of which the weights take roughly 72GB). The 32B Coder variant fits on a single RTX 5090 at Q4 quantization (roughly 18GB VRAM used) and delivers 35 to 50 tokens per second on coding prompts. The 14B Coder runs on an RTX 4090 at similar speeds. The 4B variant runs on a consumer laptop with 16GB RAM. For most solo developers, the 32B Coder on a single consumer GPU is the practical sweet spot.

Does Qwen 3.6 beat Claude Opus 4.7 or GPT-5?

No, not on absolute quality. Claude Opus 4.7 still leads on SWE-Bench Verified (82.1% vs 68.2%), complex agentic workflows, and English creative writing. GPT-5 leads on multimodal and math-heavy reasoning. What Qwen 3.6 does is close 60% to 70% of the gap at roughly 3% to 10% of the cost. For cost-sensitive workloads at scale, that tradeoff favors Qwen. For mission-critical agentic coding on novel problems, Claude and GPT-5 remain the safer choices.

What is SWE-Bench Verified and why does it matter?

SWE-Bench Verified is a human-curated subset of 500 real GitHub issues from Python repositories (Django, Flask, sympy, matplotlib, and others), created by OpenAI to test whether a model can resolve real developer issues end-to-end: read the codebase, locate the bug, produce a patch that passes the project's own test suite. It is the single benchmark that correlates best with production agentic coding capability. Qwen 3.6 72B scores 68.2%, meaning it resolves 341 of the 500 issues autonomously. In April 2025, no open-weight model cleared 40%.

Why did Qwen 3.6 improve so much on coding specifically?

Alibaba's technical report credits three training changes: a 40% larger code pre-training corpus (6.2 trillion tokens across 150+ programming languages), an execution-feedback reinforcement learning loop where generated code is run in sandboxed Docker containers and the reward signal uses actual test-pass rates rather than human preference data, and longer chain-of-thought budgets during the reasoning RL phase (up to 64K tokens before producing a final patch). The Register called the execution-feedback RL the most important training innovation of Q1 2026.

Should I switch from Gemma 4 to Qwen 3.6?

For coding workloads — yes, Qwen 3.6 is measurably better across every benchmark that matters. For math reasoning (AIME), multilingual tasks beyond Chinese-English, native multimodal processing on a single checkpoint, or edge deployment where the 4B MoE matters, Gemma 4 remains competitive or superior. Many teams will end up using both: Qwen 3.6 for coding and agentic workflows, Gemma 4 for math, multilingual, and multimodal tasks.

Is Qwen 3.6 safe to use for commercial products?

The Apache 2.0 license has no MAU caps, no revenue-share requirements, and no acceptable-use restrictions that block commercial deployment. You can ship products built on Qwen 3.6 without paying Alibaba anything. The compliance question is separate: if you route traffic through the DashScope API, data goes through Alibaba Cloud infrastructure in China, which may not meet regulated-industry compliance (healthcare, finance, defense). To avoid this entirely, self-host the weights or use third-party providers (Fireworks, Together, DeepInfra, Groq) on Western infrastructure.

What is LiveCodeBench and why does contamination matter?

LiveCodeBench, maintained by UC Berkeley researchers, is a coding benchmark that refreshes its problem set every week from new Codeforces, LeetCode and AtCoder problems. Because the problems are recent, they cannot appear in any training set older than the week they are published — which eliminates benchmark contamination, the problem where models see test problems during training and score artificially high as a result. Qwen 3.6's 71.4% on the April 2026 slice is a clean signal, not a contamination artefact. Gemma 4's 64.7% on the same slice confirms the 6.7-point gap is real.