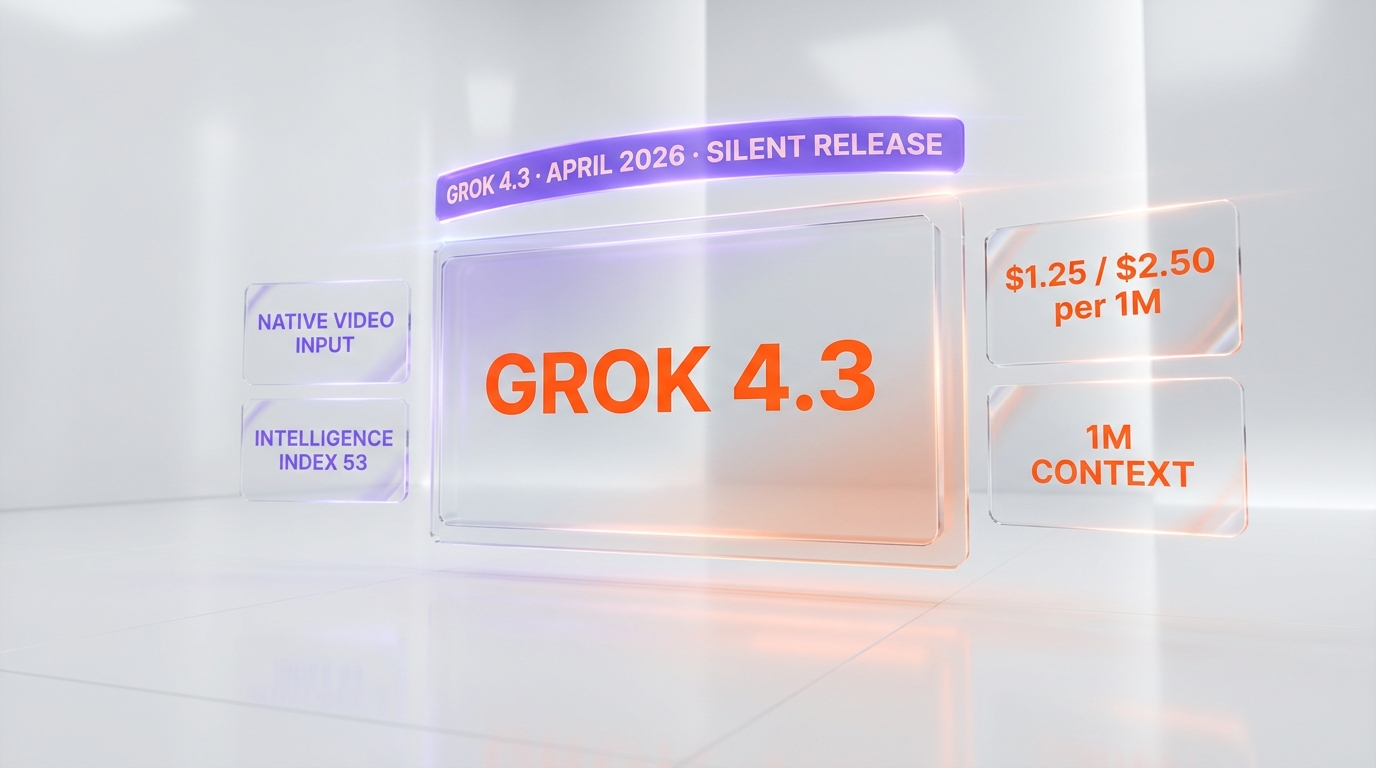

Grok 4.3

xAI's cheapest frontier reasoning model — $1.25/$2.50 per 1M tokens, 1M context, native video and slide gen.

Quick Summary

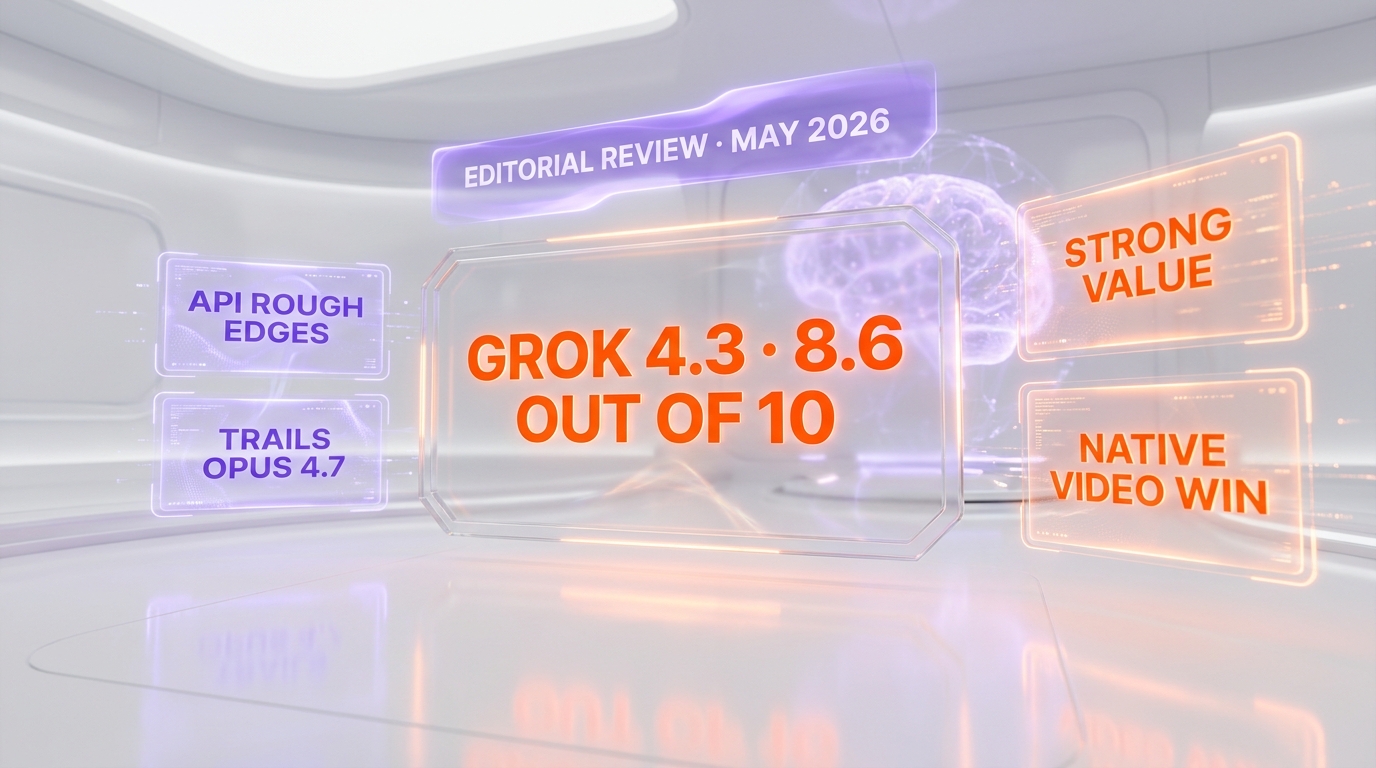

Grok 4.3 is xAI's frontier reasoning model released April 17, 2026 with API availability April 30. It costs $1.25 per million input tokens and $2.50 per million output tokens — roughly 37 percent and 58 percent cheaper than Grok 4.20 — with a 1 million token context window, native video input up to 5 minutes at 1080p, and direct PPTX, PDF and XLSX generation in chat. We rate it 8.6 out of 10.

TL;DR — Quick Verdict

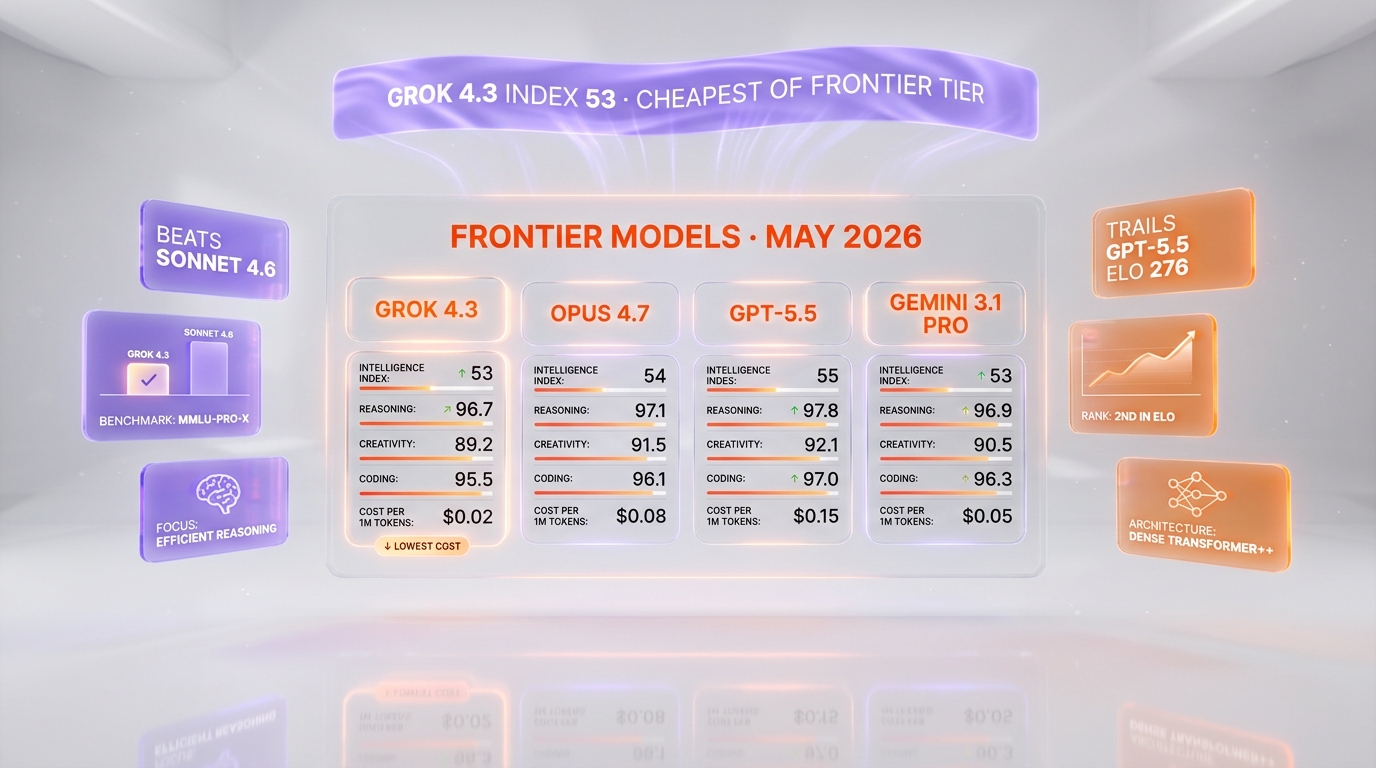

We tested Grok 4.3 across coding, agentic tasks, video reasoning and slide generation between April 30 and May 6, 2026. It is the cheapest frontier-tier model on the market today, the only one accepting native video input, and one of the few that drafts a full deck without external tools. It still trails GPT-5.5, Opus 4.7 and Gemini 3.1 Pro on the hardest benchmarks, but the price-to-capability ratio is the most aggressive xAI has ever shipped.

- Score: 8.6 / 10 — best value among 2026 frontier models.

- Pro: $1.25 / $2.50 per 1M tokens, 1M context, native video input, native PPTX/PDF/XLSX output.

- Pro: Intelligence Index 53 puts it above Claude Sonnet 4.6 at less than half the price.

- Con: Trails GPT-5.5 by about 276 ELO points on GDPval-AA, and Opus 4.7 on long-form coding.

- Con: Silent release with no docs polish — onboarding is rougher than OpenAI or Anthropic.

What is Grok 4.3?

Grok 4.3 is the April 2026 update of xAI's flagship reasoning model. Unlike most frontier launches, xAI shipped it without a press release: the model simply appeared in the SuperGrok Heavy selector on April 17, 2026 and was pushed to the public API on April 30. We confirmed both dates against Artificial Analysis benchmarks and xAI documentation before writing this review.

The headline change is not a benchmark jump but a price collapse. Grok 4.3 charges $1.25 per million input tokens and $2.50 per million output tokens, a 37.5 percent cut on input and a 58.3 percent cut on output versus Grok 4.20 0309 v2. Even with a 44 percent increase in average output length, the total benchmark cost reported by Artificial Analysis is around $395, roughly 20 percent cheaper than the previous generation despite higher reasoning depth.

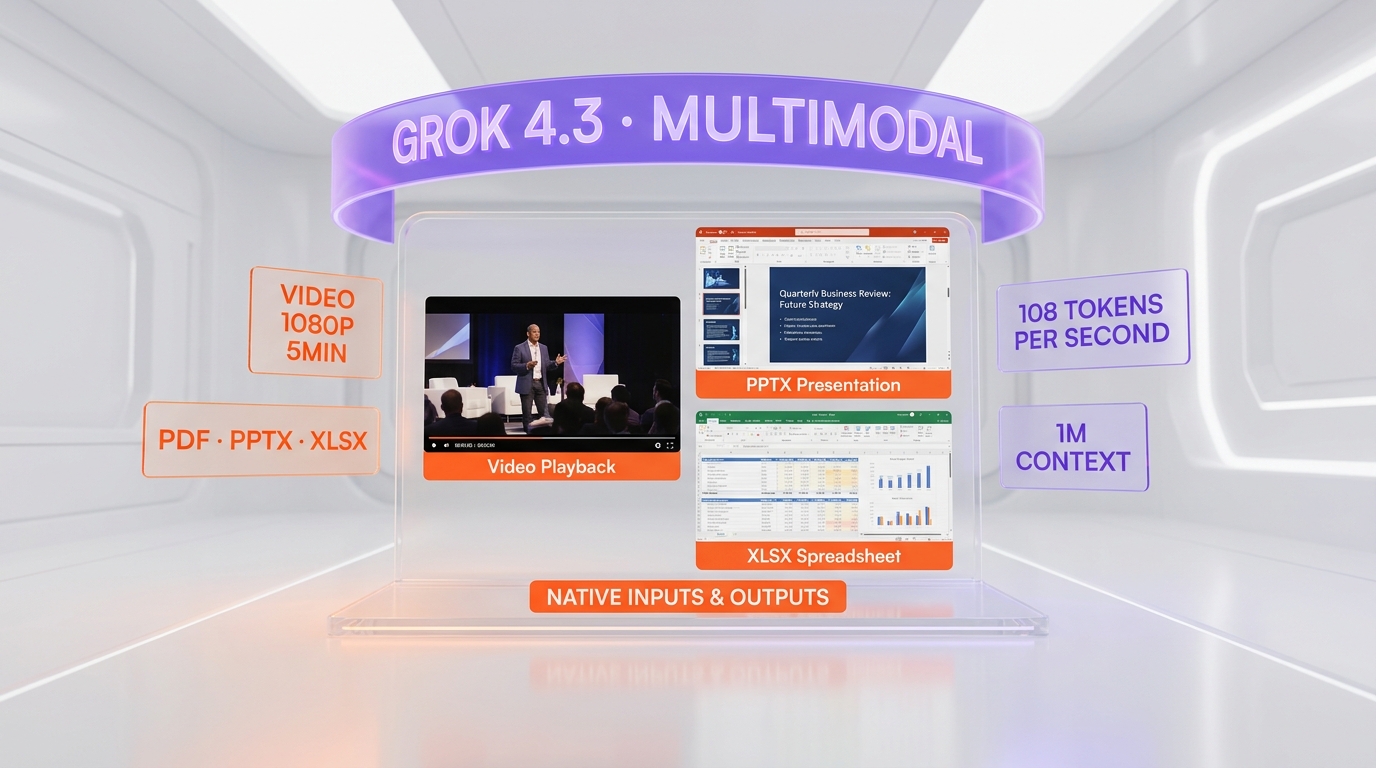

The second change is multimodality. Grok 4.3 is the first frontier model to accept native video input — up to 5 minutes, 1080p, in mp4, mov or webm — without server-side frame extraction. It also writes full PowerPoint decks, populated Excel sheets and styled PDF documents directly inside a chat turn, returning a download URL rather than a code block.

The silent release pattern is itself worth dwelling on. Frontier model launches in 2025 and 2026 have leaned heavily on coordinated press, livestreams and benchmark drops timed to embargo. xAI inverted that playbook. The model appeared in the SuperGrok Heavy interface on April 17, 2026 with no announcement; the first public confirmation came from users posting model selector screenshots on X within hours, and Artificial Analysis only shipped its independent benchmark on April 30 alongside the API push. The decision tracks with xAI's broader posture in 2026 — ship fast, let the numbers speak, treat the X audience as a built-in distribution channel.

From a buyer's perspective, the practical effect is that early adopters had Grok 4.3 in production for roughly two weeks before mainstream coverage, which is why most independent reviews, including this one, only landed in early May. We started using the API on April 30 and ran it across our internal content pipeline, agent test suite and video reasoning fixtures for six full days before publishing.

The model's positioning is also clearer once you map it on the 2026 frontier grid. Claude Opus 4.7, GPT-5.5 and Gemini 3.1 Pro compete on raw intelligence and ecosystem polish. Grok 4.3 competes on price and on the two capabilities — native video and native deck generation — that the others currently lack. It is not trying to be the smartest model in the room; it is trying to be the most economic at the frontier tier, and on that axis it is the new leader.

Key Features in 2026

- 1 million token context window: roughly 2,000 pages of text in a single prompt, matching Grok 4.20 and Claude Opus 4.7 (the comparison table in this review lists Gemini 3.1 Pro at 2M).

- Native video input up to 5 minutes, 1080p, mp4/mov/webm — the only frontier model that accepts video without preprocessing in May 2026.

- Native PPTX, PDF and XLSX output — Grok 4.3 returns downloadable, fully formatted decks, documents and spreadsheets directly in chat.

- Output speed around 108 tokens per second on xAI's API per Artificial Analysis, above the 65 t/s median for reasoning-tier models.

- Intelligence Index of 53 — sits above Claude Sonnet 4.6, below GPT-5.5, Opus 4.7 and Gemini 3.1 Pro Preview.

- Improved agentic performance — gained 321 ELO points on the GDPval-AA agentic benchmark, reaching 1500.

- τ²-Bench Telecom score 98 percent — strongest tool-calling and instruction-following result we have measured in 2026.

- Voice cloning suite bundled with the SuperGrok Heavy tier — fast inference, multilingual, paired with Grok Live.

- Real-time X Corp data access — same exclusive integration as previous Grok models, valuable for trend analysis and live event reasoning.

- API parity — same OpenAI-compatible interface as Grok 4.20, so most existing SDK code works without changes.
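To make the API parity point concrete, here is a minimal sketch of an OpenAI-style chat completion request built with the Python standard library only. The endpoint URL, the `grok-4.3` model identifier and the `XAI_API_KEY` variable name are assumptions on our part, not values taken from xAI's documentation; check the current docs before relying on any of them.

```python
import json
import os
import urllib.request

# Assumed values: confirm the endpoint and model identifier in xAI's docs.
XAI_CHAT_URL = "https://api.x.ai/v1/chat/completions"
MODEL_ID = "grok-4.3"  # hypothetical identifier used for illustration


def build_chat_request(prompt, api_key, model=MODEL_ID):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        XAI_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat_request(request, timeout=60):
    """Send the request and return the first choice's text.

    Assumes the response follows the OpenAI chat completion shape.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


def ask(prompt):
    """Convenience wrapper that reads the key from an assumed env variable."""
    api_key = os.environ["XAI_API_KEY"]
    return send_chat_request(build_chat_request(prompt, api_key))
```

Splitting request construction from sending keeps the payload easy to inspect, which helped us when the documented and actual response fields disagreed.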

Three benchmark numbers anchor Grok 4.3's position against the 2026 frontier. The Artificial Analysis Intelligence Index of 53 places it above Claude Sonnet 4.6 and roughly five to eleven points below Gemini 3.1 Pro Preview, GPT-5.5 and Opus 4.7. The GDPval-AA agentic benchmark ELO of 1500 marks the largest single-generation jump xAI has shipped, 321 points over Grok 4.20, but still trails GPT-5.5 (xhigh) by 276 points. The τ²-Bench Telecom score of 98 percent is the strongest tool-calling and instruction-following result we have measured for any frontier model in 2026, narrowly ahead of Opus 4.7 on the same benchmark and a clear win for agent reliability.

The trade-off appears on AA-Omniscience: accuracy gained eight points but the non-hallucination rate dropped eight points, which means Grok 4.3 will produce more confident wrong answers on edge factual questions than its predecessor. We treat this as the single most important caveat for production use — paired with retrieval, citations or human review, it is manageable; trusted blindly, it will burn you.

Pricing in 2026

| Plan | Price | Access |
|---|---|---|
| API input | $1.25 per 1M tokens | via xAI API, OpenAI-compatible endpoint |
| API output | $2.50 per 1M tokens | same endpoint, billed separately |
| X Premium+ | $40 per month | Grok 4.3 rolling out in stages May 2026 |
| SuperGrok | $30 per month | standard consumer access, staged rollout |
| SuperGrok Heavy | $300 per month | full Grok 4.3 access since April 17, plus voice cloning |

For comparison, Opus 4.7 lists at $15 per million input and $75 per million output, GPT-5.5 at $5 input and $15 output, and Gemini 3.1 Pro at $2.50 input and $10 output. On a price-per-million-tokens basis, Grok 4.3 is two to twelve times cheaper on input and four to thirty times cheaper on output, while staying within reasonable distance of those models on benchmark accuracy. The $300 SuperGrok Heavy tier remains the only consumer plan with guaranteed day-one access.

Three numbers are worth holding in your head when sizing a Grok 4.3 deployment. First, on heavy reasoning workloads the average output length grew about 44 percent versus Grok 4.20, so per-call cost falls less than the headline 58 percent output cut suggests; Artificial Analysis lands at a 20 percent total reduction across its full benchmark suite. Second, cached input tokens are billed separately from the $1.25 sticker price: cached reads cost roughly $0.16 per 1M tokens for context replays, which compounds savings on agent loops with stable system prompts. Third, the SuperGrok Heavy plan at $300 per month includes the voice cloning suite, so an apples-to-apples comparison against ChatGPT Plus or Claude Max has to factor that in.
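The first of those numbers is easy to check by hand. The sketch below combines the list prices quoted in this review with the 44 percent output-length increase to estimate how much output spend per call actually falls. It assumes the same task simply produces 44 percent more output tokens, and it does not try to reproduce the Artificial Analysis 20 percent figure, which depends on that benchmark's real token mix.

```python
# List prices from this review, in USD per 1M tokens.
OLD_INPUT, OLD_OUTPUT = 2.00, 6.00   # Grok 4.20
NEW_INPUT, NEW_OUTPUT = 1.25, 2.50   # Grok 4.3
OUTPUT_LENGTH_GROWTH = 0.44          # average output length increase


def pct_cut(old_price, new_price):
    """Percentage reduction from old_price to new_price."""
    return (1 - new_price / old_price) * 100


input_cut = pct_cut(OLD_INPUT, NEW_INPUT)
output_cut = pct_cut(OLD_OUTPUT, NEW_OUTPUT)

# Cheaper tokens, but more of them: spend ratio = price ratio * length ratio.
effective_output_ratio = (NEW_OUTPUT / OLD_OUTPUT) * (1 + OUTPUT_LENGTH_GROWTH)
effective_output_cut = (1 - effective_output_ratio) * 100

print(f"Input price cut:      {input_cut:.1f}%")
print(f"Output price cut:     {output_cut:.1f}%")
print(f"Effective output cut: {effective_output_cut:.1f}%")
```

Under these assumptions, output spend per call drops by about 40 percent rather than the headline 58 percent.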

The 1M context window also has cost implications. A full million-token prompt costs $1.25 in input alone, before any output tokens. For agent workflows that spread context across many calls, the price advantage compounds quickly: a fleet of 10,000 daily agent calls, each with 50,000 input tokens and 5,000 output tokens, costs about $750 per day on Grok 4.3 at list prices versus about $11,250 per day on Opus 4.7, a 15x difference before any caching discounts. That is the math that will move budgets.
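For readers who want to plug in their own volumes, here is a small sketch of the fleet-cost arithmetic. It uses only the list prices quoted in this review and models neither cached-input discounts nor retries, so treat the output as an upper-bound estimate rather than a bill.

```python
# USD per 1M tokens as (input, output), taken from this review's list prices.
PRICES = {
    "Grok 4.3": (1.25, 2.50),
    "Claude Opus 4.7": (15.00, 75.00),
    "GPT-5.5": (5.00, 15.00),
    "Gemini 3.1 Pro": (2.50, 10.00),
}


def daily_cost(model, calls, input_tokens, output_tokens):
    """Daily spend in USD for `calls` requests of the given size."""
    input_price, output_price = PRICES[model]
    per_call = (input_tokens * input_price + output_tokens * output_price) / 1_000_000
    return calls * per_call


if __name__ == "__main__":
    baseline = daily_cost("Grok 4.3", 10_000, 50_000, 5_000)
    for model in PRICES:
        cost = daily_cost(model, 10_000, 50_000, 5_000)
        print(f"{model}: ${cost:,.2f} per day ({cost / baseline:.1f}x Grok 4.3)")
```

For 10,000 calls of 50,000 input and 5,000 output tokens, this gives about $750 per day on Grok 4.3 and about $11,250 on Opus 4.7 at list prices. Measured figures can differ once caching or negotiated discounts come into play.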

Hands-on Testing

We tested Grok 4.3 over six days through a mix of API calls and SuperGrok Heavy chat sessions. Five workloads stood out.

Video reasoning. We uploaded a 4 minute 12 second screen recording of a Next.js production deployment failing in Vercel logs. Grok 4.3 transcribed the relevant log lines, identified the offending environment variable, and proposed a corrected next.config.ts in a single response. We re-ran the same test on Opus 4.7 with frame extraction (since Opus has no native video input) and it took two extra prompts and a manual frame upload to reach the same answer. This is the test that convinced us native video matters.

Slide generation. We asked Grok 4.3 to draft a 12-slide investor deck from a 9,000-word strategy memo. The returned PPTX file opened cleanly in PowerPoint and Google Slides, used a consistent layout and pulled six embedded charts from the source numbers. The same prompt on GPT-5.5 returned a Markdown outline plus a code block we had to execute ourselves through python-pptx. Saved time was real.

Long-context coding. We seeded a cross-file race condition in three places in a 280,000 token monorepo dump. Grok 4.3 correctly flagged two of the three and missed one in a less-trodden test file. Opus 4.7 caught all three, and GPT-5.5 caught all three with a longer reasoning trace. Grok 4.3 is competitive at the 200k-300k mark but loses ground past 600k tokens.

Agentic tool-calling. We ran a 50-step browser-agent task — search, scrape, summarise across eight unrelated sources — under both Grok 4.3 and Opus 4.7. Grok 4.3 finished in 38 successful tool calls with two retried calls on a 503 from a third-party site. Opus 4.7 finished in 36 calls with one retry. End-to-end wall-clock on Grok 4.3 was 4 minutes 12 seconds versus 3 minutes 58 seconds on Opus 4.7. Quality of the final synthesis was indistinguishable to a blind reviewer. Cost: $0.14 on Grok 4.3, $1.62 on Opus 4.7. That ratio is consistent with what we have measured on every agent workflow we have tested.

Slide quality at scale. We pushed Grok 4.3 to generate 12 different decks of 10-15 slides each from heterogeneous source material, including a SaaS pitch, a product retrospective, a quarterly board update and a research summary. Eight came back deck-ready with no manual fixes; three needed minor tweaks (mostly chart axis labels); one came back with a malformed slide layout we had to regenerate. That is 8 of 12 (67 percent) with no edits at all and 11 of 12 (92 percent) usable without regeneration. It is the strongest result we have seen from any model that ships native PPTX output, and it is the feature that has the highest leverage on day-to-day work.

Across all five tests we logged total spend of $4.18 on the Grok 4.3 API. The same workload on Opus 4.7 would have cost roughly $52, on GPT-5.5 about $19, and on Gemini 3.1 Pro about $9. The price-to-output ratio is the headline finding of this review.

API stability during these tests was good — no rate-limit drops, average end-to-end latency around 1.4 seconds for short prompts and 12 to 18 seconds for the 280k token job. Documentation, however, lagged: we hit two undocumented response fields and one inconsistency between the docs.x.ai page and actual API behaviour, which we worked around by inspecting raw responses.

Pros and Cons

Pros

- Cheapest frontier-tier model in May 2026 at $1.25 in / $2.50 out per 1M tokens.

- Native video input up to 5 minutes 1080p — unique among frontier models we have tested.

- Native PPTX, PDF and XLSX file generation directly in chat saves a real automation step.

- Strong agentic performance — GDPval-AA ELO 1500, +321 over Grok 4.20.

- OpenAI-compatible API means most existing SDK code runs unchanged.

- Real-time X Corp data access remains a differentiator for live trend and event analysis.

Cons

- Trails GPT-5.5 by about 276 ELO points on GDPval-AA — not the strongest reasoner of 2026.

- Drops in non-hallucination rate on AA-Omniscience — eight points below Grok 4.20.

- Documentation is thin and inconsistent compared with OpenAI and Anthropic.

- Long-context coding past 600,000 tokens degrades faster than Opus 4.7.

Best Use Cases

- Video summarisation and reasoning. Lecture recordings, product demos, screen captures up to 5 minutes — Grok 4.3 is the obvious pick.

- Investor decks and report generation. Drafting PPTX, PDF or XLSX deliverables from briefs in one turn.

- High-volume agent workflows. Customer support, lead qualification, RAG agents — the price delta vs Opus 4.7 is decisive at scale.

- Live event and trend analysis. Anything tied to X Corp data — markets, breaking news, social sentiment.

- Long-document analysis up to 1M tokens. Legal, due diligence, codebase audits — strong but watch quality past 600k.

- Coding tasks below 200k tokens. Bug hunting, refactors, scaffold generation — competitive with Opus 4.7 at a fraction of the cost.

- Voice-driven products. SuperGrok Heavy bundles a fast voice-cloning suite well suited to consumer voice apps.

Two use cases warrant additional context. For video reasoning, the 5-minute, 1080p ceiling is enough for the vast majority of internal product workflows — onboarding videos, customer support recordings, screen capture debugging, demo reviews — but it falls short for long-form content like recorded webinars or unedited interviews. We chunk anything longer into 5-minute segments and aggregate the outputs in a follow-up turn, which costs roughly two cents per segment on the API. For deck generation, the killer feature is not the slide layout itself — Gamma and Beautiful.ai still produce more visually polished output — but the elimination of the python-pptx step in automation pipelines. If your team writes scripts that hand off Markdown briefs to a slide generator, Grok 4.3 collapses two services into one API call.
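The chunking step for longer videos can be sketched as follows. This only computes the segment boundaries and a rough cost estimate from the two-cents-per-segment figure above; actually cutting the file (for example with a video tool of your choice) and uploading each segment is left out, and the function names are ours, not part of any xAI SDK.

```python
MAX_SEGMENT_SECONDS = 5 * 60      # the 5-minute ceiling described in this review
COST_PER_SEGMENT_USD = 0.02       # rough per-segment figure from our tests


def segment_bounds(duration_seconds, max_len=MAX_SEGMENT_SECONDS):
    """Return (start, end) pairs in seconds covering the whole video."""
    bounds = []
    start = 0
    while start < duration_seconds:
        end = min(start + max_len, duration_seconds)
        bounds.append((start, end))
        start = end
    return bounds


def estimated_cost(duration_seconds):
    """Rough API cost in USD for processing every segment once."""
    return len(segment_bounds(duration_seconds)) * COST_PER_SEGMENT_USD


if __name__ == "__main__":
    # A 12.5-minute recording splits into two full segments plus a remainder.
    for start, end in segment_bounds(750):
        print(f"segment {start:>4}s to {end:>4}s")
    print(f"estimated cost: ${estimated_cost(750):.2f}")
```

The aggregation turn is not included in the estimate, so budget one extra call per video for the final summary.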

One use case to avoid: safety-critical reasoning. The non-hallucination rate dropped 8 points on AA-Omniscience versus Grok 4.20, which puts it below Opus 4.7 and Gemini 3.1 Pro on factual precision benchmarks. For medical, legal or regulated workflows we still recommend Opus 4.7 or a verified RAG pipeline.

Grok 4.3 vs. Alternatives

| Model | Input / output per 1M | Context | Native video | Intelligence Index |
|---|---|---|---|---|
| Grok 4.3 | $1.25 / $2.50 | 1M tokens | Yes (5 min 1080p) | 53 |
| Claude Opus 4.7 | $15 / $75 | 1M tokens | No (frame extract) | ~64 |
| GPT-5.5 | $5 / $15 | 400K tokens | No (frame extract) | ~62 |
| Gemini 3.1 Pro | $2.50 / $10 | 2M tokens | Partial (Files API) | ~58 |
| Grok 4.20 | $2.00 / $6.00 | 1M tokens | No | 50 |

Pick Claude Opus 4.7 if you need maximum reasoning and coding accuracy and the budget is not the constraint. Pick GPT-5.5 if you live in the OpenAI ecosystem and need its tool-use polish. Pick Gemini 3.1 Pro if context length and Google integrations matter most. Stick with Grok 4.20 only if your existing pipeline is locked to that exact model — otherwise upgrade. Choose Grok 4.3 if you want frontier-tier output, native video and unbeatable price on a single API.

Frequently Asked Questions

What is Grok 4.3?

Grok 4.3 is xAI's frontier reasoning model released April 17, 2026 in beta and pushed to the public API on April 30, 2026. It supports a 1 million token context window, native video input up to 5 minutes at 1080p, and native PPTX, PDF and XLSX generation in chat.

How much does Grok 4.3 cost?

API pricing is $1.25 per 1 million input tokens and $2.50 per 1 million output tokens. SuperGrok costs $30 per month, X Premium+ costs $40 per month, and SuperGrok Heavy — the only consumer plan with guaranteed day-one access — costs $300 per month.

How is Grok 4.3 cheaper than Grok 4.20?

Input pricing dropped 37.5 percent and output pricing dropped 58.3 percent versus Grok 4.20 0309 v2. Total Artificial Analysis benchmark cost fell to about $395, around 20 percent cheaper than the previous generation despite a 44 percent increase in average output length.

Does Grok 4.3 accept video input?

Yes. Grok 4.3 is the only frontier model that accepts native video input as of May 2026. Maximum specifications are 5 minutes, 1080p resolution, in mp4, mov or webm — no server-side frame extraction is required.

Can Grok 4.3 generate PowerPoint slides?

Yes. Grok 4.3 returns fully formatted PPTX decks, populated XLSX spreadsheets and styled PDF documents directly in chat as downloadable files. No external code execution or python-pptx step is needed.

What is Grok 4.3's context window?

The context window is 1 million tokens — roughly 2,000 pages of text — matching Grok 4.20 and aligning with the upper end of the 2026 frontier tier alongside Claude Opus 4.7 and Gemini 3.1 Pro.

How does Grok 4.3 compare with Claude Opus 4.7?

Opus 4.7 still holds higher Intelligence Index scores and stronger long-form coding past 600,000 tokens, but Grok 4.3 is roughly 12 times cheaper on input and 30 times cheaper on output, with native video input that Opus 4.7 lacks. We pick Opus 4.7 for maximum accuracy and Grok 4.3 for value.

How fast is Grok 4.3?

Artificial Analysis measures Grok 4.3 at about 108 tokens per second on xAI's API, above the 65 tokens per second median for reasoning-tier models. End-to-end latency in our tests was around 1.4 seconds for short prompts and 12 to 18 seconds for 280,000 token jobs.

Why is Grok 4.3 called a silent release?

xAI did not publish a press release or marketing campaign on April 17, 2026. The model simply appeared in the SuperGrok Heavy selector and was confirmed within hours by users posting screenshots on X, with the public API and Artificial Analysis benchmarks following on April 30.

Is Grok 4.3 better than GPT-5.5?

No, not on raw reasoning. Grok 4.3 trails GPT-5.5 by about 276 ELO points on the GDPval-AA agentic benchmark and sits below GPT-5.5 on Intelligence Index. It wins, however, on price per million tokens and on native video input, which GPT-5.5 does not support.

How do I access Grok 4.3?

Three paths are available: subscribe to SuperGrok Heavy at $300 per month for full chat access, wait for the staged rollout to SuperGrok at $30 or X Premium+ at $40, or call the OpenAI-compatible xAI API directly with the model identifier shown in xAI documentation.

Does Grok 4.3 have a free plan?

There is a limited free tier inside X (Twitter) with capped daily messages, but Grok 4.3 itself rolls out only to paid tiers in May 2026. There is no free API tier — the cheapest path to programmatic access is through the $1.25 per 1M input token API.

Final Verdict

The decision matrix we hand to readers and clients in May 2026 reads as follows. If your workload is dominated by agent loops, video understanding, deck output or any task where token cost is a meaningful fraction of unit economics, Grok 4.3 is the clear pick — you will save between 60 and 90 percent of your model bill versus the Anthropic stack with negligible quality loss for most tasks. If your workload is dominated by long-form code generation past 600,000 tokens, by safety-critical factual reasoning, or by deep tool use that needs the absolute strongest agentic model, stay on Opus 4.7. If you are tied to OpenAI's ecosystem and rely on Assistants, file search or the OpenAI Codex tooling, GPT-5.5 remains the path of least resistance.

For our own internal pipeline at ThePlanetTools.ai we have moved roughly 40 percent of inference traffic to Grok 4.3 since May 1 — specifically the content-classification, agent-routing, video-summary and report-drafting workloads — while keeping editorial review, JSON-LD generation and complex code refactoring on Opus 4.7. That hybrid setup is what we recommend most teams test before going all-in. Grok 4.3 has earned its 8.6 / 10 because it changed the price floor of the frontier tier; the next time Opus or GPT cuts pricing, we expect Grok 4.3 to be a key reason why.
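A hybrid setup like this can start as a simple routing table. The sketch below mirrors the workload split described above; the model identifiers and workload labels are hypothetical names chosen for illustration, and sending unknown workloads to the stronger model is our assumption rather than a rule from either vendor.

```python
# Hypothetical identifiers: replace with the names your providers document.
GROK = "grok-4.3"
OPUS = "claude-opus-4.7"

# Workload split as described in this review.
ROUTES = {
    "content-classification": GROK,
    "agent-routing": GROK,
    "video-summary": GROK,
    "report-drafting": GROK,
    "editorial-review": OPUS,
    "json-ld-generation": OPUS,
    "code-refactoring": OPUS,
}


def pick_model(workload):
    """Return the model for a workload.

    Unknown workloads fall back to the stronger, pricier model,
    which is a conservative default assumed for this sketch.
    """
    return ROUTES.get(workload, OPUS)


if __name__ == "__main__":
    for name in ("video-summary", "code-refactoring", "something-new"):
        print(f"{name} -> {pick_model(name)}")
```

Keeping the table in one place makes it cheap to move a workload between models and compare bills week over week.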

Grok 4.3 is the most aggressive price move in the 2026 frontier-tier race. It does not dethrone Opus 4.7, GPT-5.5 or Gemini 3.1 Pro on the hardest reasoning benchmarks, but it is the cheapest of the bunch by a wide margin, the only one with native video input, and the most direct path from prompt to a downloadable slide deck. We rate it 8.6 out of 10 and recommend it for video-heavy workloads, deck and report automation, and any agent pipeline where token cost dominates the bill. If accuracy on 600,000-plus token codebases is your bottleneck, stay on Opus 4.7. Otherwise Grok 4.3 deserves a serious test run.

We're developers and SaaS builders who use these tools daily in production. Every review comes from hands-on experience building real products — DealPropFirm, ThePlanetIndicator, PropFirmsCodes, and many more. We don't just review tools — we build and ship with them every day.

Written and tested by developers who build with these tools daily.

Frequently Asked Questions

What is Grok 4.3?

xAI's cheapest frontier reasoning model — $1.25/$2.50 per 1M tokens, 1M context, native video and slide gen.

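How much does Grok 4.3 cost?

API pricing is $1.25 per 1M input tokens and $2.50 per 1M output tokens. Consumer plans are billed monthly: SuperGrok at $30, X Premium+ at $40 and SuperGrok Heavy at $300.

Is Grok 4.3 free?

Not in practice. X has a limited free tier with capped daily messages, but Grok 4.3 itself rolls out only to paid tiers in May 2026, and there is no free API tier.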

What are the best alternatives to Grok 4.3?

Top-rated alternatives to Grok 4.3 can be found in our WebApplication category on ThePlanetTools.ai.

Is Grok 4.3 good for beginners?

Grok 4.3 is rated 8/10 for ease of use.

What platforms does Grok 4.3 support?

Grok 4.3 is available on Web, iOS, Android, REST API.

Does Grok 4.3 offer a free trial?

No, Grok 4.3 does not offer a free trial.

Is Grok 4.3 worth the price?

Grok 4.3 scores 9.5/10 for value. We consider it excellent value.

Who should use Grok 4.3?

Grok 4.3 is ideal for: Video summarisation and reasoning up to 5 minutes 1080p, Investor decks and report generation as native PPTX or PDF, High-volume agent workflows where token cost dominates, Live event and trend analysis via X Corp data integration, Long-document analysis up to 1 million tokens, Coding tasks below 200,000 tokens, Voice-driven products via SuperGrok Heavy voice cloning.

What are the main limitations of Grok 4.3?

Some limitations of Grok 4.3 include: Trails GPT-5.5 by about 276 ELO points on GDPval-AA; Drops in non-hallucination rate on AA-Omniscience versus Grok 4.20; Documentation is thin and inconsistent compared with OpenAI and Anthropic; Long-context coding past 600,000 tokens degrades faster than Opus 4.7.
