While everyone watches OpenAI make noise, Anthropic is quietly building an empire. After 16 hours a day inside Claude — Desktop, Claude Code, multi-agent worktrees, custom agents I built myself — I see something most people miss: the next 18 months of AI history are being decided in deals that don't make headlines.

Editorial Disclosure: This article is an editorial opinion piece from Anthony Martinez (CEO & Founder, ThePlanetTools.ai). It contains no affiliate links to Anthropic, which does not run a public affiliate program. Internal links to our tool reviews remain editorial. Read our full editorial policy.

The 10-gigawatt war chest, in numbers

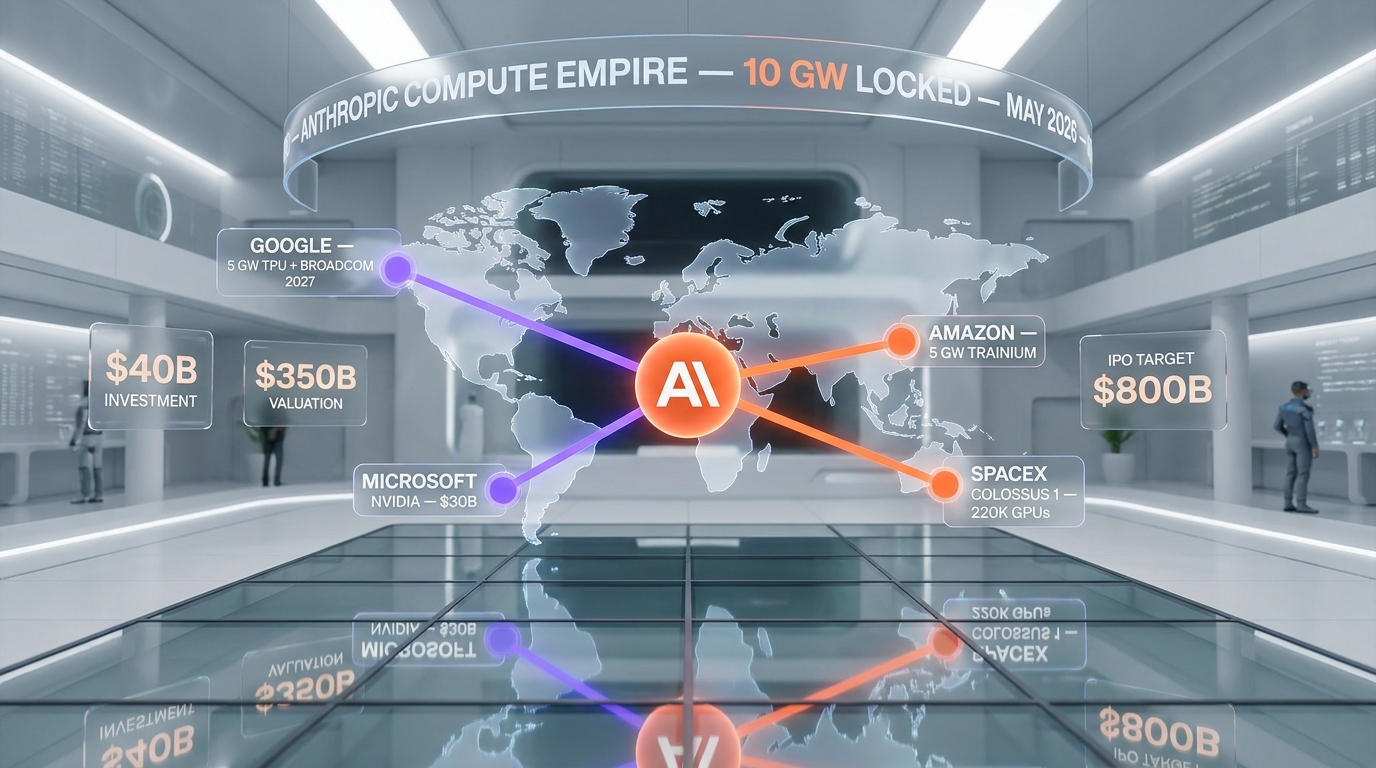

Let me lay out what Anthropic has actually locked down in the past 90 days:

- Amazon — up to 5 gigawatts of new Trainium compute through the expanded partnership announced April 20, 2026, plus up to $25 billion in additional investment ($5B immediate, up to $20B tied to commercial milestones) on top of a prior $8 billion stake — and a $100 billion+ commitment to AWS over 10 years for Trainium custom silicon (CNBC).

- Google — $40 billion investment at a $350 billion valuation, including a 5 GW compute commitment via TPUs and Broadcom custom silicon for 2027 deployment.

- Microsoft & NVIDIA — announced November 18, 2025: Anthropic commits $30 billion to Azure compute capacity (initial 1 GW on NVIDIA Grace Blackwell & Vera Rubin systems), with NVIDIA investing up to $10 billion and Microsoft up to $5 billion in Anthropic — making Claude the only frontier model available on all three major clouds.

- SpaceX Memphis Colossus 1 — a deal locking 220,000 GPUs announced May 6, 2026, alongside doubled Claude Code rate limits for paying subscribers.

- Project Glasswing (April 7, 2026) — Anthropic alongside AWS, Apple, Broadcom, Cisco, Google, JPMorganChase, Microsoft, and NVIDIA, focused on software security infrastructure.

If you tally just the publicly disclosed gigawatt commitments — 5 GW Amazon Trainium plus 5 GW Google TPU/Broadcom — you land at roughly 10 gigawatts. That's before adding the 1 GW initial Azure compute (with NVIDIA Grace Blackwell and Vera Rubin) and the 220,000 GPUs from SpaceX Colossus 1, which together push the real number meaningfully higher.
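The tally above is simple enough to check by hand, but here is a minimal sketch of it in Python. The dictionary keys and variable names are my own labels for illustration, the figures are the "up to" numbers cited in this article, and the SpaceX deal is left out because it is quoted in GPUs rather than gigawatts.

```python
# Publicly disclosed gigawatt figures as cited in this article (upper bounds).
# Labels are illustrative, not official deal names.
commitments_gw = {
    "Amazon Trainium": 5.0,
    "Google TPU / Broadcom": 5.0,
}

# Cited separately: initial Azure capacity on NVIDIA systems.
azure_initial_gw = 1.0

# The SpaceX Colossus 1 deal is quoted as 220,000 GPUs, not gigawatts.
# It is deliberately excluded rather than converted with a guessed
# watts-per-GPU figure.

headline_gw = sum(commitments_gw.values())
with_azure_gw = headline_gw + azure_initial_gw

print(f"Headline total: {headline_gw:.0f} GW")
print(f"Including initial Azure capacity: {with_azure_gw:.0f} GW")
```

Under those assumptions the headline total is 10 GW and the Azure capacity brings it to 11 GW, with the GPU-denominated deal still unaccounted for.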

Read our hands-on Claude Code review →

What 10 gigawatts actually means

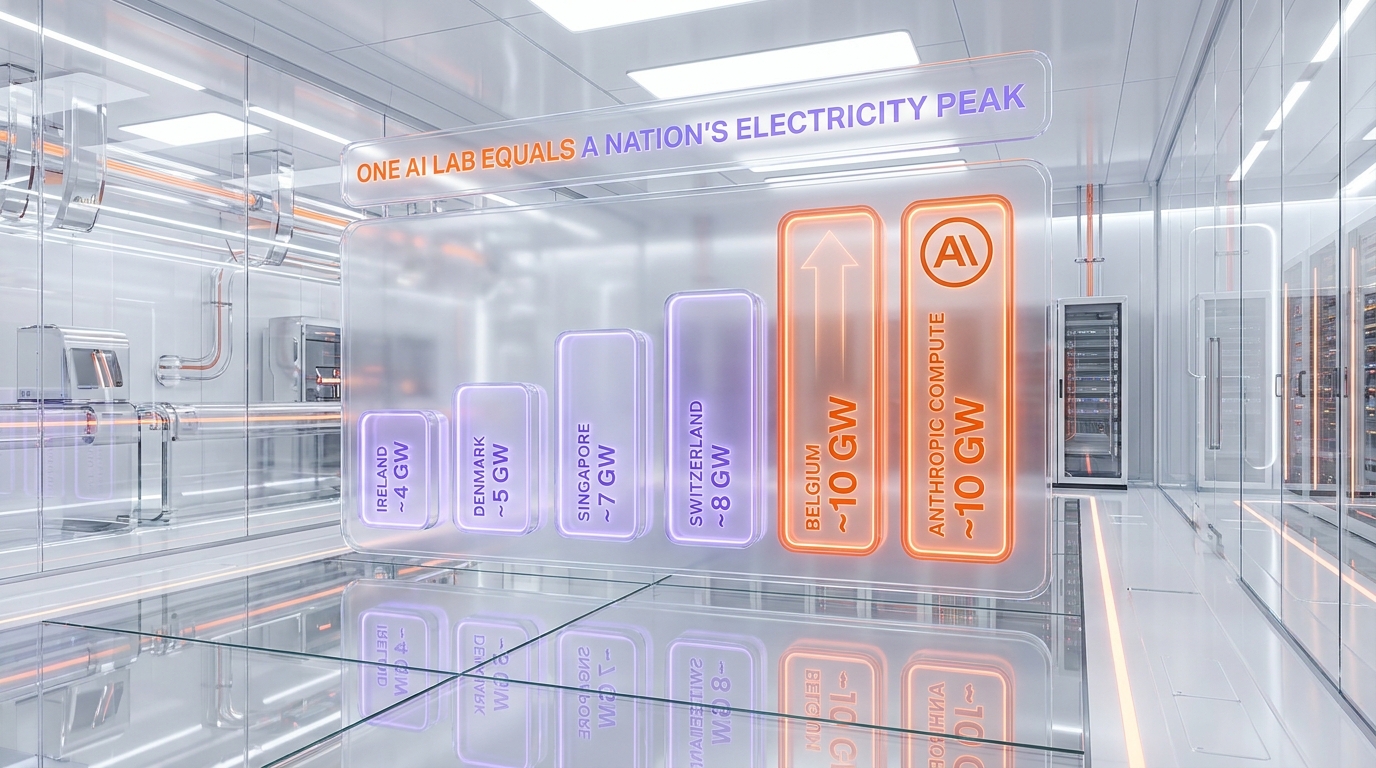

If you've never thought about gigawatts before, here's the scale that woke me up.

10 GW is roughly the entire electricity demand of Belgium, a country of 11.7 million people, with industry, hospitals, public transit, data centers, and households running at the same time. Annual figures from Wikipedia put Belgium around 82 TWh per year, which works out to an average load of about 9.4 GW (82 TWh spread over 8,760 hours); peak demand runs higher.
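For anyone who wants to check the conversion, here is a minimal sketch. It assumes the 82 TWh annual figure cited above and spreads it evenly over a non-leap year, so the result is an average continuous load, which sits below true peak demand. The function names are my own.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def average_load_gw(annual_twh: float) -> float:
    """Convert annual consumption in TWh to average continuous load in GW."""
    # 1 TWh = 1,000 GWh, and GWh divided by hours gives GW.
    return annual_twh * 1000 / HOURS_PER_YEAR


def annual_twh(constant_load_gw: float) -> float:
    """Convert a constant load in GW to annual energy in TWh."""
    return constant_load_gw * HOURS_PER_YEAR / 1000


belgium_avg_gw = average_load_gw(82)
ten_gw_twh = annual_twh(10)

print(f"Belgium average load: {belgium_avg_gw:.2f} GW")
print(f"10 GW running all year: {ten_gw_twh:.1f} TWh")
```

Read the other way, 10 GW drawn around the clock for a year comes to about 87.6 TWh, slightly more than the annual figure cited for Belgium. Data centers rarely run at full nameplate load all year, so treat that as an upper bound.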

For more reference points: Ireland sits around 4 GW peak, Denmark near 4 to 5, Singapore around 7, Switzerland near 8, Portugal around 7, and Norway closer to 15 to 17 thanks to heavy hydropower industry.

So when one private AI lab locks 10 GW of compute commitment — that's not "a lot of GPUs." That's the energy footprint of a medium-sized European country, dedicated to training and inference for one company's models. And we're still talking about commitments that ramp through 2027. The trajectory is not flat.

The empire being built — beyond compute

Compute is just the tip of the iceberg. Look at what Anthropic announced in parallel:

- Enterprise services JV (May 4, 2026) with Blackstone, Hellman & Friedman, and Goldman Sachs, alongside Apollo, General Atlantic, Leonard Green, GIC, and Sequoia Capital: a $1.5 billion AI-native enterprise services firm. Anthropic, Blackstone, and H&F each contribute ~$300M; Goldman $150M. The vehicle gives Anthropic a direct pipeline into PE-owned mid-market portfolios, a distribution channel no software vendor has had at this scale.

- NEC Japan partnership (April 23, 2026) — NEC will use Claude across roughly 30,000 employees, building one of "Japan's largest AI-native engineering" organizations and making NEC Anthropic's first Japan-based global partner.

- Sydney office opening (April 28, 2026) with Theo Hourmouzis (former Snowflake SVP for ANZ & ASEAN) appointed General Manager for Australia & New Zealand — following recent openings in Tokyo and Bengaluru, with Seoul next.

- Project Glasswing — Anthropic positioning at the center of an industry consortium for software security across the biggest tech and finance names on the planet.

- Claude Design launched April 17, 2026 — a creative tool from Anthropic Labs extending beyond pure LLM territory.

- Coefficient Bio acquisition (early April 2026, reportedly $400 million per TechCrunch and The Information) — a stealth biotech AI startup of ~10 people joining Anthropic's Health Care Life Sciences group, signaling a deeper push into AI drug discovery.

This is not a company stockpiling GPUs. This is a company building distribution channels, vertical applications, geographic footprints, and industry alliances simultaneously. Quietly. While every blog post and tweet thread is busy comparing the latest GPT-5.5 score to Opus 4.7 on some benchmark.

Compute architecture — the anti-NVIDIA move

Here's a strategic detail that flew under most radars: Anthropic now has training and serving capacity lined up across four distinct chip architectures.

- NVIDIA (the legacy compute) — through Microsoft Azure and SpaceX Colossus 1.

- Amazon Trainium — through the expanded AWS partnership, AWS-native chips designed for transformer workloads at lower cost per token.

- Google TPU — through the new $40 billion deal, including custom optimization for Anthropic's training stack.

- Broadcom custom silicon — co-designed with Google for 2027 deployment, the layer most observers missed.

Why does this matter? Because most other frontier labs, OpenAI included, are heavily NVIDIA-dependent for training. NVIDIA pricing power, supply constraints, and roadmap timing become single points of failure. Anthropic just engineered itself out of that single dependency. It can shift workloads across four architectures based on price, availability, and silicon performance per workload type.

For a company eyeing an $800 billion IPO valuation, that compute resilience is not a footnote. It's the foundation.

Why I think Opus 4.7 was a tactical release

Here's where I drop the editor hat for a second and put on the daily-driver hat.

I shipped Opus 4.7 across my entire workflow within 72 hours of its April 16 launch. Coded with it for two weeks straight. And honestly? The jump from Opus 4.6 to 4.7 is incremental, not transformational. Better at long-context tasks, slightly cleaner agentic loops, refined on edge cases. But to me it does not read like Anthropic's next big leap — it reads like the kind of step a lab takes when the real next model is being kept ready behind the scenes.

My read: Opus 4.7 was a hold-the-line release. Anthropic did not want the perceived gap with GPT-5.5 to widen during the months it takes to ship something genuinely new. So they shipped a strong refinement, banked the press cycle, and kept developers locked into the ecosystem while the real next model cooks behind closed doors.

I've watched Anthropic's release pattern long enough to recognize this. The genuine flagship leaps land after weeks of preparation, with model cards leaking early and safety statements polished. Opus 4.7 got solid engineering and an intentionally low-key marketing splash, which is exactly the kind of move a lab makes to neutralize GPT-5.5 in the short term while a bigger model finishes baking. That tells me the next thing, whether Sonnet 4.8 or another Opus tier above 4.7, is not far off, and it is going to hit harder than 4.7 did.

Mythos and the hidden pipeline

Mythos is the elephant in the room that few are addressing publicly.

The Mythos preview leaked in April 2026, described internally as "more powerful than Opus 4.7" with limited access. It is, by Anthropic's own framing, capability-restricted while safety teams evaluate it. That's the polite phrasing. The blunt translation: Anthropic has at least one model significantly stronger than Opus 4.7 already trained, and they have not shipped it because they are still working out alignment and deployment risk.

Read that sentence twice. The strongest publicly available model from Anthropic is the one they had to release. The actual frontier of their lab is one or two model generations beyond what we get to use.

And remember — Mythos was reported in April. Compute commitments to deploy 10 GW are dated for 2027. By the time that compute lands, Anthropic will have spent another 18 to 24 months scaling. The model after Mythos is presumably already in early training somewhere. The empire is not just present-tense.

My take after 16 hours a day in Claude Code

Now the part where I just tell you what I think, no diplomatic hedging.

To make the daily-driver claim concrete: last week I had four Claude agents running in parallel worktrees, debugging a race condition in a content pipeline that I had stared at alone for two hours. The agents triangulated it in 22 minutes. That is not a benchmark. That is a real Tuesday afternoon in my actual code, where the multi-agent setup paid for itself in a single bug. Multiply by every week of the past three months and you understand why I cannot go back.

In my production workflow, nothing comes close to Anthropic for coding and agentic work. Not OpenAI, not Google, not the open-source community, not the smaller labs I have tried. The gap, in the daily setups I run, is wide — and it widens every month I keep using these tools head-to-head.

I tested GPT-5.5 thoroughly when it dropped. I run Gemini 3.1 Pro Preview for parallel tasks. I keep Cursor and Windsurf installed. I gave Cursor's agent mode and Codex CLI extended trials. The conclusion has not budged in three months: Claude Code, on Opus 4.7 or Sonnet 4.6, in a properly configured multi-agent worktree setup, outperforms every alternative I have benchmarked in real production code. Not on cherry-picked SWE-bench scores. On daily, varied, messy, real-world coding work.

The benchmarks don't capture this anymore. SWE-bench Verified, HumanEval, the agentic eval suites — they all top out into noise around the frontier. What separates Claude is something benchmarks struggle to measure: how the model handles ambiguity, how cleanly it composes multi-step plans, how well it self-corrects mid-task, how it behaves inside a long-running agent loop without drifting. Hands-on, daily, the difference is night and day.

Read our Claude Opus 4.7 deep dive →

OpenAI's existential question

Where does this leave OpenAI?

I think OpenAI is being squeezed in the developer mindshare lane — not as a company (their consumer footprint is enormous), but in the agentic-coding territory they used to define. The GPT-5.5 launch leaned hard into the "super-app" framing — chat plus shopping plus research plus code plus everything-bagel. That's a consumer story, and it is a real market. But it is not the agentic-coding empire Anthropic is building.

OpenAI has lined up 10 gigawatts of NVIDIA hardware commitments of its own, and it has the largest consumer AI footprint on the planet. It is not collapsing. But it appears to be pivoting toward consumer reach and away from frontier coding leadership. Whether that's a deliberate strategic call or a forced retreat is the open question.

Sam Altman's recent commentary on Anthropic — including the leaked internal memo accusing Anthropic of $8 billion in claimed revenue — reads less like confident competitor positioning and more like a company watching its dev mindshare drift to a smaller rival.

Google's parallel lane

Google is the player I think most observers misunderstand in this story.

Yes, Google invested $40 billion in Anthropic. Yes, Google hosts Claude Opus 4.7 natively on Vertex AI now. But Google is not betting against itself. Google is running a parallel game — and a smart one.

Google's lane is LLM plus the multimedia ecosystem. Gemini 3.1 Pro and Flash. Imagen for image generation. Veo 3.1 for video. Lyria 3 for audio and music. Nano Banana Pro for branded image generation. AI Studio for the developer playground. NotebookLM for research workflows. The roadmap is breadth across modalities, not depth in one vertical like Anthropic's coding focus.

By investing $40 billion in Anthropic and hosting Claude on Vertex, Google is hedging — capturing economic exposure to whoever wins agentic coding while doubling down on the multimedia and consumer-LLM playgrounds where they have structural advantages (search, YouTube, Android, Workspace, Chrome). It's portfolio management at hyperscale.

Several Google products that deserve full reviews have not yet made it through our editorial pipeline: the Imagen 4 family across Fast/Standard/Ultra tiers, the new Vertex AI Agent Designer no-code tooling, the TPU v8 Hypercomputer architecture, and the broader Project Glasswing security stack. Each of those is a separate story I'll be writing in the coming weeks.

The three-track market thesis

Putting the pieces together, here is how I read the AI frontier market shaping up over the next 18 months:

- Anthropic = coding and agentic supremacy. The compute war chest, the model pipeline (Opus 4.7 → Mythos → next), the Claude Code ecosystem, and the enterprise distribution deals all point to one thesis: own the developer and agentic-workflow market. Build there until the moat is uncatchable.

- Google = LLM plus the multimedia and consumer ecosystem. Gemini for general-purpose LLM. Imagen, Veo, Lyria, Nano Banana for the creative stack. AI Studio and Vertex for distribution. Hedge through Anthropic investment.

- OpenAI = consumer chat plus the super-app pivot. ChatGPT brand mass-market. GPT-5.5 super-app integrations. Pulling agentic features into consumer flows. Walmart-style scale, not Wirecutter-style depth.

If you're a developer or builder, your bet is on Anthropic. If you're a marketing or content team needing breadth across image, video, and audio, your bet is on Google. If you're a casual consumer using AI for everyday questions, OpenAI's super-app is built for you. The market is segmenting, not consolidating.

What would prove me wrong

An editorial without an exit ramp is just cheerleading. So here is what would invalidate the thesis I just laid out, and what I am watching for honestly:

- Mythos underwhelming on release. If the model Anthropic is keeping behind closed doors lands and turns out to be a marginal step, the "empire pipeline" narrative loses one of its key beats.

- OpenAI cracking agentic and developer workflows. If a future GPT release closes the daily-driver gap I describe — not on benchmarks, in actual production multi-agent setups — the coding moat thesis weakens fast.

- The 10 GW commitments turning into vague letters of intent. Multi-year compute deals can soften, slip, or get re-papered. If 2027 deployment slides hard or the gigawatt numbers get quietly revised down, the "war chest" looks more like a press release.

- Anthropic stumbling on safety or governance. The lab is more conservative on deployment than competitors by design. A misstep on alignment, a well-publicized model failure, or an internal restructuring could change the trajectory.

- A new entrant I am not modeling. Mistral, xAI, Chinese frontier labs, or an open-source consortium hitting agentic-coding parity would re-shape the three-track thesis I sketched above.

None of these feel imminent to me right now, but I would rather name them than pretend they do not exist. If any one of them lands in the next 12 months, I will write the follow-up piece honestly.

Final verdict — the empire being built quietly

Our take: Anthropic is, in May 2026, building the most strategically resilient frontier AI company on the planet. They have done it without dominating the news cycle, without consumer-app theatrics, and without the public personality battles that consume OpenAI's PR bandwidth. They have done it by closing $40 billion deals, locking 10 gigawatts of compute, signing enterprise distribution partnerships from Tokyo to Sydney to New York, and sitting on a model pipeline at least one generation ahead of what they ship publicly.

This is what an empire being built quietly looks like.

My bet on the timeline, on the record: Sonnet 4.8 (or another Opus tier above 4.7) ships within 60 days of this article. Mythos goes generally available before Q4 2026. The IPO files at the rumored $800 billion valuation by end of 2026. If I am wrong on any of these, I will write the follow-up. If I am right, you will remember where you read it first.

Skip if: you're benchmarking models on SWE-bench scores alone — that lens misses the picture. Or if you think the next AI war will be won by the chattiest brand. It won't.

My bet for the next 12 months: if you can only pick one model to build production agentic workflows on, my answer remains Claude Code on Opus 4.7, with an upgrade to whatever Anthropic ships next quarter pencilled in.

What I'm watching next: Mythos becoming generally available, the next Opus or Sonnet release dropping (I expect within 60 days), the IPO filing finalizing at the rumored $800B valuation, and Google's response on the multimedia front (Lyria 3 and Imagen 5 are the products to watch).

Frequently Asked Questions

Is Anthropic really locking 10 gigawatts of compute?

The publicly disclosed commitments add up to roughly 10 GW: up to 5 GW from the expanded Amazon Trainium partnership announced April 20, 2026, plus 5 GW from the Google TPU and Broadcom custom silicon deal that comes with the $40 billion investment at a $350 billion valuation. Microsoft and NVIDIA partnerships add more on top, as does the SpaceX Colossus 1 deal locking 220,000 GPUs. The 10 GW figure is the floor, not the ceiling.

How does 10 GW compare to a country's electricity consumption?

Roughly the average electricity demand of Belgium, a country of 11.7 million people. For other comparison points: Ireland sits around 4 GW peak, Singapore around 7, Switzerland around 8, Portugal around 7, Norway around 15 to 17. Annual consumption per Wikipedia data places Belgium near 82 TWh, which works out to an average load of about 9.4 GW.

Why is Google investing $40 billion in Anthropic if they compete with Gemini?

Google is hedging while running a parallel strategy. Their lane is LLM breadth plus the multimedia ecosystem (Gemini 3.1 Pro/Flash, Imagen 4, Veo 3.1, Lyria 3, Nano Banana Pro) where they have structural advantages from search, YouTube, Android, and Workspace. By owning economic exposure to Anthropic and hosting Claude Opus 4.7 on Vertex AI, Google captures upside on whoever wins agentic coding while doubling down on creative and consumer LLM markets they are best positioned to dominate.

Does Anthropic actually use Google TPUs for training?

Yes. The April 26, 2026 deal includes a Google TPU compute commitment plus Broadcom custom silicon co-designed for Anthropic's training workloads, with deployment ramping toward 2027. This makes Anthropic the only frontier AI lab with compute lined up across four distinct chip architectures: NVIDIA, Amazon Trainium, Google TPU, and Broadcom custom silicon. Most competitors remain primarily NVIDIA-dependent.

Why do you say Opus 4.7 was a "tactical release"?

From two weeks of daily production usage across coding, agentic workflows, and long-context tasks, the upgrade from Opus 4.6 to 4.7 reads as a refinement rather than a generational leap. The press cycle and the engineering signals both suggest Anthropic shipped 4.7 to hold positioning against GPT-5.5 while a stronger next model cooks behind closed doors. The Mythos preview leak in April supports this read.

What is Mythos and why does it matter?

Mythos is an Anthropic model preview that leaked in April 2026, framed internally as more powerful than Opus 4.7 but capability-restricted while safety teams evaluate it. The blunt read: Anthropic already has at least one model meaningfully stronger than what they ship publicly, held back for alignment work. The strongest model in the lab is one or two generations ahead of what users get access to.

Is Claude really better than GPT-5.5 for coding?

Based on hands-on production usage at 16 hours per day across Claude Desktop, Claude Code, and custom multi-agent worktrees, yes — in my testing, decisively. GPT-5.5 scores well on benchmarks but, in my real-world workflows, it trails Opus 4.7 on ambiguity handling, multi-step plan composition, mid-task self-correction, and long-running agent loop stability. Benchmarks have plateaued into noise at the frontier. Daily-driver experience is where the gap shows for me.

What is OpenAI's strategic position in this picture?

OpenAI is pivoting toward consumer scale and the "super-app" framing they leaned into with the GPT-5.5 launch. They retain massive consumer reach through ChatGPT, but appear to be ceding agentic and developer mindshare to Anthropic. Whether this is a deliberate consumer-first strategy or a forced retreat from the developer market is the open question heading into the second half of 2026.

Should I switch from OpenAI or Google to Anthropic for coding?

For production coding and agentic workflows, yes, in our editorial view. Claude Code on Opus 4.7 inside a properly configured multi-agent setup outperforms every alternative we have benchmarked over the past three months. For multimedia generation (image, video, music) Google's stack is the right pick. For mass-consumer chat, OpenAI's ChatGPT remains the natural default.

Is Anthropic actually pursuing an $800 billion IPO?

Reporting in April 2026 indicated Anthropic was working toward an IPO at a valuation of around $800 billion, building on the $350 billion private valuation set by the Google investment. The compute commitments and enterprise distribution deals announced in parallel align with the kind of run-up a public offering at that scale typically requires. Final pricing and timing will depend on market conditions through Q3 to Q4 2026.

What about the Anthropic and Amazon partnership in this picture?

Anthropic and Amazon expanded their compute partnership on April 20, 2026, locking in up to 5 GW of new compute through Amazon Trainium and AWS infrastructure. This is on top of the existing AWS investment and the long-running Bedrock distribution channel. Amazon now anchors the cost-optimized side of Anthropic's compute strategy, while Google anchors the high-performance training side through TPU and Broadcom silicon.

What should I watch next?

Four near-term signals: Mythos becoming generally available, the next Opus or Sonnet release shipping (we expect within 60 days), the IPO filing finalizing toward the rumored $800B valuation, and Google's multimedia stack response (Lyria 3 and the Imagen 5 family). Each will tell us whether the three-track market thesis holds or whether the segmentation breaks back into a single-frontier consolidation.

Editorial Disclosure: This is an opinion piece from Anthony Martinez, daily Claude user (16+ hours per day across Claude Desktop, Claude Code, custom multi-agent worktrees) and CEO of ThePlanetTools.ai. We have no affiliate relationship with Anthropic, OpenAI, or Google for the LLM products discussed. The opinions are my own based on hands-on usage and industry observation. Read our full editorial and affiliate disclosure policy.