Anthropic announced on May 6, 2026 a compute partnership with Elon Musk's SpaceX that brings more than 300 megawatts of new capacity and over 220,000 NVIDIA GPUs online within a month. The same day, Anthropic doubled the five-hour rate limits for Claude Code across Pro, Max, Team, and seat-based Enterprise, removed the peak-hours limit reduction for Pro and Max, and raised Tier-1 Claude Opus API limits considerably — every change effective immediately.

TL;DR — what shipped on May 6, 2026

- The compute deal. Anthropic and SpaceX signed a partnership giving Anthropic 300+ MW and over 220,000 NVIDIA GPUs available within one month — the capacity Anthropic publicly attributes to the build it calls Colossus 1.

- Claude Code limits doubled. Five-hour rate limits doubled for Pro, Max, Team, and seat-based Enterprise, all effective May 6, 2026.

- Peak-hours throttling removed. Pro and Max no longer face the previous peak-hours rate-limit reduction. Effective immediately.

- API rate limits raised. Tier-1 limits for Claude Opus models went up "considerably," covering both input and output tokens.

- Forward-looking. Anthropic and SpaceX expressed interest in scaling to multiple gigawatts of orbital AI compute in future phases.

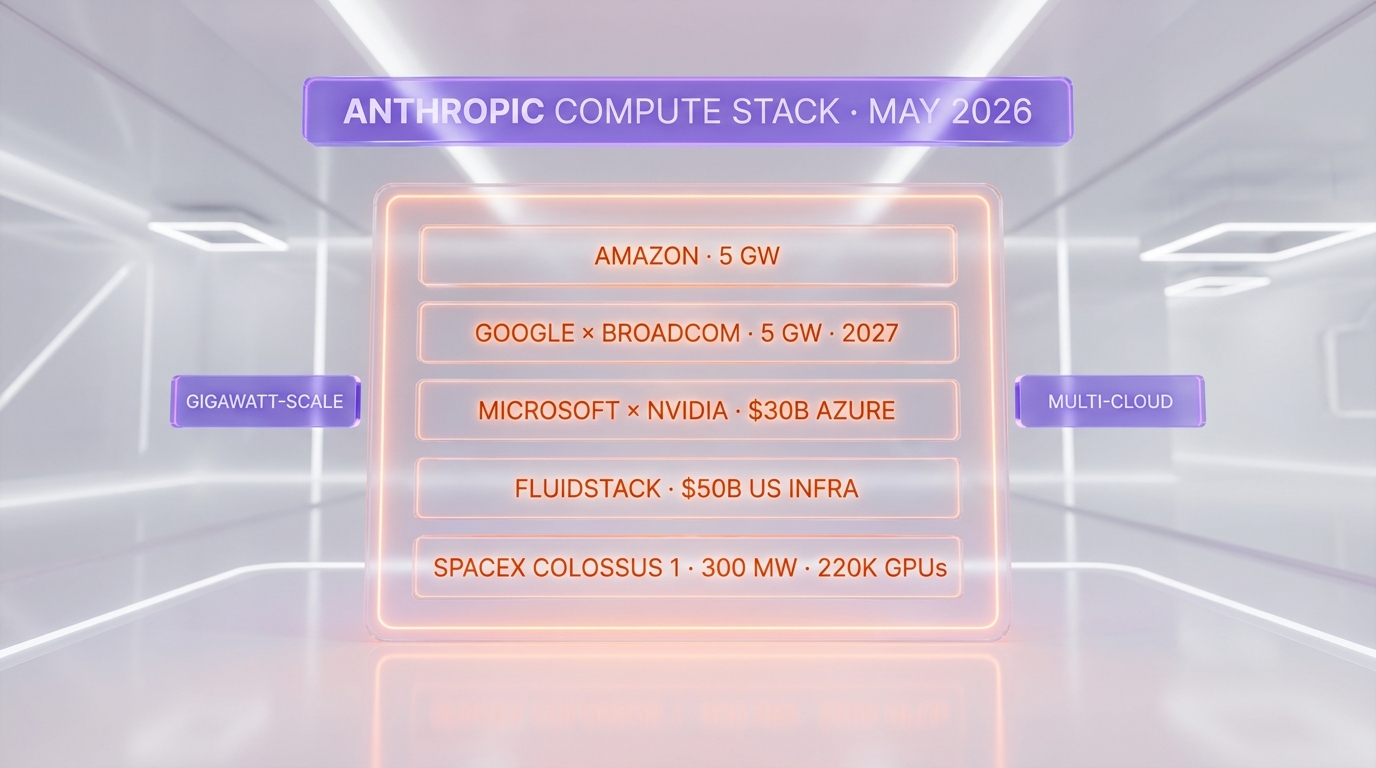

- Wider stack. The SpaceX deal sits alongside Anthropic's existing partnerships with Amazon (up to 5 GW), Google & Broadcom (5 GW for 2027), Microsoft & NVIDIA (a $30 billion Azure capacity partnership), and Fluidstack ($50 billion US infrastructure investment).

What happened

Anthropic published a single page on its newsroom titled "Higher Claude usage limits with new compute capacity from SpaceX," dated May 6, 2026. The post bundles two announcements that, individually, would each have been newsworthy: an infrastructure deal with SpaceX large enough to materially expand Anthropic's compute envelope, and a same-day customer-facing rate-limit change for the product most demanded by paying developers — Claude Code.

The SpaceX side describes "more than 300 megawatts of new capacity (over 220,000 NVIDIA GPUs) within the month." Anthropic's framing is that this capacity flows directly into Claude Pro and Max subscribers — the consumer-facing tiers that, until today, were the most rate-limited surface in the Anthropic stack. The post also explicitly references a forward-looking interest in "multiple gigawatts of orbital AI compute capacity" with SpaceX, language that nods to SpaceX's Starlink and Starshield logistics expertise without committing to a launch timeline.

The Claude Code side is the part developers will feel by the end of the day. The five-hour rate limit, the one that causes Pro and Max users to hit "you have reached your limit, please wait until X" mid-pull-request, has doubled: the window is still five hours, but the usage allowed inside it is twice what it was. The peak-hours reduction, a separate throttle that lowered limits during US business hours, has been removed for Pro and Max. Team and seat-based Enterprise plans both get the doubled five-hour limit.

Why it matters: Claude Code limits are the developer story of 2026

For anyone using Claude Code as a daily driver, and this includes our newsroom's own internal tooling, the five-hour limit was the single most frequent reason for context loss, deferred merges, and staggered work. We have been building, reviewing, and shipping with Claude Code as our primary developer agent through April and into May. In a focused refactor session we typically hit the previous limit around the 3.5-hour mark, especially when Claude Code was fanning out to multiple sub-agents for parallel verification. Doubling the limit is not a luxury. It is the difference between "I can ship this feature in one continuous flow" and "I have to checkpoint state and come back."

The peak-hours removal matters more than its name suggests. Until May 6, 2026, Claude Code Pro and Max users in US working hours faced a soft throttle that disproportionately hit teams in North American time zones. Removing that throttle is not just a quantity change; it is a fairness change. EU and APAC developers stop being implicitly favored by the rate-limit clock. For shops that bill clients on hours of agentic work, fewer hours are lost to waiting on a throttle, so more of the working day is billable.

What changed exactly: the rate-limit table

Claude Code (subscription product)

| Plan | Five-hour rate limit | Peak-hours throttle |
|---|---|---|
| Pro | Doubled | Removed |
| Max | Doubled | Removed |
| Team | Doubled | n/a |
| Enterprise (seat-based) | Doubled | n/a |

Claude Opus API (Tier-1)

Anthropic's post states that API rate limits for Claude Opus models have been raised "considerably" for Tier-1 customers — both input tokens per minute and output tokens per minute. Anthropic publishes the precise numbers in its API rate-limits documentation; developers running production traffic against Claude Opus 4.7 on Tier-1 should re-read the rate-limits page before adjusting concurrency settings.
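One way to turn whatever numbers the rate-limits page lists into a concurrency setting is sketched below. It is a minimal illustration, not Anthropic's method: the `TierLimits` fields, the 80% headroom factor, and every number in the usage note are hypothetical placeholders to be replaced with the published limits and your own traffic profile.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierLimits:
    """Per-minute limits. Hypothetical container: copy the real values
    from the API rate-limits documentation."""
    requests_per_minute: int
    input_tokens_per_minute: int
    output_tokens_per_minute: int


def max_requests_per_minute(limits: TierLimits, avg_input_tokens: int,
                            avg_output_tokens: int, headroom: float = 0.8) -> int:
    """Largest request rate that stays under all three limits.

    headroom below 1.0 leaves slack for bursts and larger-than-average requests.
    """
    by_requests = limits.requests_per_minute
    by_input = limits.input_tokens_per_minute // avg_input_tokens
    by_output = limits.output_tokens_per_minute // avg_output_tokens
    # The tightest of the three limits is the one that binds.
    return int(min(by_requests, by_input, by_output) * headroom)


def max_concurrency(requests_per_minute: int, avg_latency_seconds: float) -> int:
    """Little's law: requests in flight = arrival rate x time in system."""
    return max(1, int(requests_per_minute * avg_latency_seconds / 60))
```

With placeholder limits of `TierLimits(50, 40_000, 8_000)` and requests averaging 2,000 input and 500 output tokens, output tokens are the binding limit and `max_requests_per_minute` returns 12; at 20 seconds of average latency, `max_concurrency(12, 20.0)` returns 4. Computing the cap from the limits rather than hard-coding it means a raised limit becomes a one-line configuration change.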

The SpaceX angle: who builds, who runs, who pays

Anthropic's post does not disclose the financial terms of the SpaceX deal, the lease duration, the geographic location of the 300 MW build, or whether the GPU mix is H100, H200, B200, or GB200 generation. Three questions matter for technical readers and we will answer them as documents emerge:

- Where is the capacity? A 300 MW data hall with 220,000+ accelerators is one of the largest single-tenant AI builds ever publicly described. Power, cooling, and grid interconnects of this size cannot be stood up in a month, so they almost certainly already exist, meaning the build either retrofits an existing SpaceX or partner facility or co-locates inside one. Specifics are pending Anthropic disclosure or independent reporting.

- Whose silicon? "Over 220,000 NVIDIA GPUs" is the only spec given. The mix between H200 (still the volume datacenter SKU as of Q2 2026) and Blackwell B200/GB200 (ramping but supply-constrained) materially affects training versus inference economics. A B200-heavy mix would price the deal at a multiple of an H200-heavy mix.

- Lease, swap, or build-to-suit? "Compute partnership" is deliberately ambiguous — it covers everything from pure capacity rental to a dedicated build that Anthropic eventually owns. The deal's language ("available within the month") suggests pre-existing capacity being re-pointed, which favors a lease structure.
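The two figures in the announcement allow one rough sanity check on the silicon question above: dividing facility power by GPU count gives an all-in power budget per accelerator. The sketch below uses only the 300 MW and 220,000 figures from the post; both are stated as lower bounds ("more than", "over"), so the result is approximate.

```python
FACILITY_POWER_MW = 300   # "more than 300 megawatts" per the announcement
GPU_COUNT = 220_000       # "over 220,000 NVIDIA GPUs" per the announcement


def watts_per_gpu(facility_mw: float, gpu_count: int) -> float:
    """All-in facility watts available per accelerator."""
    return facility_mw * 1_000_000 / gpu_count


budget = watts_per_gpu(FACILITY_POWER_MW, GPU_COUNT)
print(f"{budget:.0f} W per GPU, all-in")  # prints "1364 W per GPU, all-in"
```

That works out to roughly 1.36 kW per GPU. The figure covers everything behind the meter (cooling, networking, host CPUs, storage), so it is a rough budget rather than a measurement of GPU draw, and it does not by itself identify the SKU mix.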

The geopolitical angle: Anthropic signs with Musk while xAI competes with OpenAI

The cleanest read of this deal is purely operational: Anthropic needed compute, SpaceX had it, both sides made a deal, and the customer benefits. The longer read is harder to ignore. SpaceX is operationally adjacent to xAI, the frontier-model lab Musk founded and currently leads. xAI is a direct competitor to OpenAI, and increasingly to Anthropic itself in the agentic-coding category. A SpaceX-Anthropic compute partnership inserts Musk's broader infrastructure into Anthropic's critical path, even as xAI's product roadmap competes with Anthropic's. Whether SpaceX's and xAI's capex are formally insulated from each other is a question for SEC filings, not press releases. We have linked our prior reporting on the xAI–SpaceX corporate consolidation below; today's Anthropic deal sharpens, not softens, those questions.

Where this fits in Anthropic's broader compute stack

The SpaceX deal is the fifth public compute pillar Anthropic has stood up.

| Partner | Scale | Timeline |
|---|---|---|
| Amazon (AWS Trainium / NVIDIA) | Up to 5 GW total; ~1 GW by end of 2026 | Existing, ramping |
| Google & Broadcom (TPU) | 5 GW | 2027 deployment |
| Microsoft & NVIDIA (Azure) | $30 billion partnership | Existing, expanding |
| Fluidstack (US infra) | $50 billion investment | Multi-year |
| SpaceX Colossus 1 (this deal) | 300+ MW, 220K+ GPUs | Within 30 days of May 6, 2026 |

The SpaceX entry is the smallest of the stated power figures but the fastest by deployment. The other pillars are measured in gigawatts or tens of billions of dollars, and in years; the SpaceX line is measured in megawatts and weeks. Read together, they describe an Anthropic that has chosen breadth (multi-vendor, multi-silicon, multi-region) over the single-cloud lock-in pattern that defined OpenAI's dependency on Microsoft Azure through 2024 and 2025.

What developers should do this week

- Stop checkpointing mid-session. If your Claude Code workflow includes "save state at hour 3.5 and resume," delete that step. At the same pace, a session that used to exhaust the limit around hour 3.5 now has enough allowance to run through the full five-hour window.

- Re-tune your concurrency on Claude Opus API Tier-1. If your application throttled itself to stay safely below the old Opus rate limits, re-read the API rate-limits page and lift the cap. The "considerable" raise is real and documented.

- Re-evaluate plan tier. If you previously upgraded from Pro to Max specifically because of the peak-hours throttle on Pro, the calculus has shifted. Pro's peak-hours reduction is gone. Max still buys you the higher absolute ceiling, but the gap to Pro just narrowed.

- If you run Enterprise (seat-based), refresh your usage dashboards. The doubled five-hour limit changes per-seat capacity planning by a clean 2x.
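The checkpointing advice above rests on simple arithmetic, sketched here under two loudly stated assumptions: that "doubled five-hour rate limits" means twice the usage allowance inside the same five-hour window, and that a session consumes its allowance at a constant pace. Neither the reset mechanics nor real usage curves are described in the announcement, so treat this as a back-of-the-envelope check, not a model of the actual limiter.

```python
WINDOW_HOURS = 5.0  # length of the rate-limit window


def hours_until_limit(old_hours_to_limit: float, allowance_multiplier: float) -> float:
    """Hours of steady work before the allowance runs out.

    Assumes constant usage, so twice the allowance lasts twice as long.
    """
    return old_hours_to_limit * allowance_multiplier


def hits_limit_within_window(old_hours_to_limit: float,
                             allowance_multiplier: float,
                             window_hours: float = WINDOW_HOURS) -> bool:
    """True if the allowance is exhausted before the window ends."""
    return hours_until_limit(old_hours_to_limit, allowance_multiplier) < window_hours


# A session that used to stall at hour 3.5 now has 7 hours of allowance,
# which outlasts the 5-hour window.
print(hits_limit_within_window(3.5, 2.0))  # prints "False"

# A heavier workload that used to stall at hour 2 still stalls, at hour 4.
print(hits_limit_within_window(2.0, 2.0))  # prints "True"
```

The second case is the caveat: heavy sub-agent fan-out that burned through the old allowance in under 2.5 hours will still hit the new one before the window ends, so checkpointing remains worthwhile for those sessions.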

What to watch next

- Disclosure on Colossus 1 site and silicon. Expect a follow-up Anthropic post or third-party reporting in the next 30 days locating the data hall and naming the GPU SKU mix.

- Claude Opus 4.7 throughput improvement. The Tier-1 rate-limit raise is one input; sustained throughput on the API is another. Run your own benchmarks rather than trusting the marketing prose.

- Multi-gigawatt orbital follow-on. Anthropic's reference to "multiple gigawatts of orbital AI compute" is the most ambitious sentence in the post. If a follow-up announcement names a launch partner, a satellite size, or a power source, this becomes one of the largest space-infrastructure deals in AI history.

- xAI response. If SpaceX is selling capacity to Anthropic, xAI's capex math gets harder. Watch for Musk-side commentary in the next earnings cycle.

- EPAM and consulting impact. Hours after the SpaceX news, Anthropic announced a 10,000-architect Claude certification program with EPAM — a sign Anthropic is racing to lock in both compute supply and enterprise demand simultaneously.
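For the "run your own benchmarks" item above, a small timing harness is enough to compare sustained throughput before and after the limit change. The sketch below is deliberately API-agnostic: `send_request` is a hypothetical callable you supply that performs one request and returns the number of output tokens it received, and `fake_request` is a stand-in so the harness runs without network access.

```python
import time
from statistics import median
from typing import Callable


def measure_throughput(send_request: Callable[[], int], runs: int = 5) -> dict:
    """Time `runs` sequential calls and report output tokens per second."""
    rates = []
    for _ in range(runs):
        start = time.perf_counter()
        output_tokens = send_request()
        # Guard against a zero interval from an instantaneous stub.
        elapsed = max(time.perf_counter() - start, 1e-9)
        rates.append(output_tokens / elapsed)
    return {
        "runs": runs,
        "median_tokens_per_second": median(rates),
        "min_tokens_per_second": min(rates),
    }


def fake_request() -> int:
    """Stand-in for a real API call: sleeps briefly, reports 100 tokens."""
    time.sleep(0.01)
    return 100


result = measure_throughput(fake_request, runs=3)
print(result)
```

Reporting the median alongside the minimum keeps a single slow request from dominating the picture while still showing the worst case. Sequential calls measure per-request speed only; a concurrent variant would be needed to probe the per-minute limits themselves.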

The Planet Tools take

Today is the cleanest signal yet that Anthropic is not capacity-constrained the way the AI-doomer narrative implied through Q1 2026. Five compute pillars, one of which delivers in 30 days, plus a same-day customer-facing limit doubling, plus a rate-limit raise on the developer API, equals an organization that finally has the capacity headroom to market its way back into the mindshare lead. The interesting question is no longer "can Anthropic train a frontier model?" — it is "what does Anthropic do with surplus compute headroom while OpenAI is still re-architecting around Microsoft Azure dependence?" If the answer involves shipping Claude Opus 4.8 ahead of GPT-6, the May 6, 2026 announcement will be remembered as the inflection point.

Frequently asked questions

What did Anthropic announce on May 6, 2026 with SpaceX?

Anthropic announced a compute partnership with SpaceX that delivers more than 300 megawatts of new capacity and over 220,000 NVIDIA GPUs available within one month. The same announcement doubled Claude Code five-hour rate limits across Pro, Max, Team, and seat-based Enterprise, removed peak-hours throttling for Pro and Max, and raised Tier-1 Claude Opus API rate limits considerably.

How much did Claude Code rate limits increase on May 6, 2026?

Claude Code five-hour rate limits doubled — a 2x increase — for every paid plan: Pro, Max, Team, and seat-based Enterprise. In addition, Pro and Max users no longer face the previous peak-hours rate-limit reduction. Anthropic published the change as effective immediately on May 6, 2026.

What is SpaceX Colossus 1?

Colossus 1 is the name Anthropic publicly uses for the SpaceX-operated data hall covered by the May 2026 partnership. Anthropic describes the build as more than 300 megawatts of capacity holding over 220,000 NVIDIA GPUs, available within one month of the announcement. Anthropic has not publicly disclosed the geographic location, the GPU SKU mix (H200 versus B200 versus GB200), or the lease duration.

Did Anthropic also raise Claude Opus API rate limits?

Yes. Anthropic's announcement states that Tier-1 API rate limits for Claude Opus models have been raised considerably, covering both input tokens per minute and output tokens per minute. Developers running production workloads against Claude Opus 4.7 on Tier-1 should re-read Anthropic's API rate-limits documentation before adjusting their concurrency or queue configuration.

How does the SpaceX deal fit alongside Anthropic's other compute partnerships?

The SpaceX deal joins four other public Anthropic compute pillars: Amazon (up to 5 GW total, with nearly 1 GW by end of 2026), Google and Broadcom (5 GW deployment scheduled for 2027), Microsoft and NVIDIA (a $30 billion Azure capacity partnership), and Fluidstack ($50 billion US infrastructure investment). SpaceX is the smallest in capacity but the fastest in deployment timeline.

Why is the Anthropic-SpaceX deal politically notable?

SpaceX is operationally adjacent to xAI, the frontier-model lab Elon Musk founded and currently leads. xAI competes directly with Anthropic in the agentic-coding category and with OpenAI more broadly. A SpaceX compute partnership inserts Musk's infrastructure into Anthropic's critical compute path while xAI's product roadmap is competing with Anthropic's. Whether SpaceX and xAI capex are formally insulated is a corporate-governance question, not a technical one.

Does the deal involve orbital data centers?

Not on day one. Anthropic's May 6, 2026 post describes terrestrial capacity: over 300 MW available within a month. The post separately notes interest from both companies in developing "multiple gigawatts of orbital AI compute capacity" as a future direction. No launch date, satellite size, or orbital power source has been publicly committed.

How does the peak-hours removal affect Claude Code Pro users?

Before May 6, 2026, Claude Code Pro and Max accounts faced a separate throttle during US business hours that lowered the effective rate limit for users in North American time zones. That throttle has been removed entirely for Pro and Max. Combined with the doubled five-hour limit, US-based Pro users see the largest practical improvement of any tier.

Did Anthropic disclose the financial value of the SpaceX deal?

No. Anthropic's May 6, 2026 announcement does not disclose the financial terms of the SpaceX partnership, the lease duration, the precise location of the 300 MW data hall, or the specific NVIDIA GPU generations involved (H200, B200, or GB200). Independent disclosures may follow in the next 30 to 90 days through SEC filings or third-party reporting.

What should I do as a Claude Code user this week?

Three things. First, stop checkpointing mid-session: with twice the allowance per five-hour window, a session that used to hit the limit around hour 3.5 should now run through a typical four-hour deep-work block. Second, re-tune any application-level throttling you applied to Claude Opus API Tier-1 to stay below the old limits. Third, if you upgraded from Pro to Max purely because of peak-hours throttling on Pro, reconsider, because that gap has narrowed substantially after the May 6 change.

Where can I read Anthropic's official announcement?

The official announcement is published at anthropic.com/news/higher-limits-spacex, dated May 6, 2026. Anthropic's separate API rate-limits documentation is at platform.claude.com/docs/en/api/rate-limits. Both pages are the authoritative source for exact numbers; this article summarizes and contextualizes them.

Does this affect free Claude users or claude.ai consumer chat?

Anthropic's May 6, 2026 announcement focuses specifically on Claude Code (the developer subscription product) and the Claude Opus API. Free claude.ai consumer chat is not mentioned in the post. Indirect benefits — for example, faster responses or higher caps for free users — are plausible given the new compute headroom but were not committed publicly in this announcement.