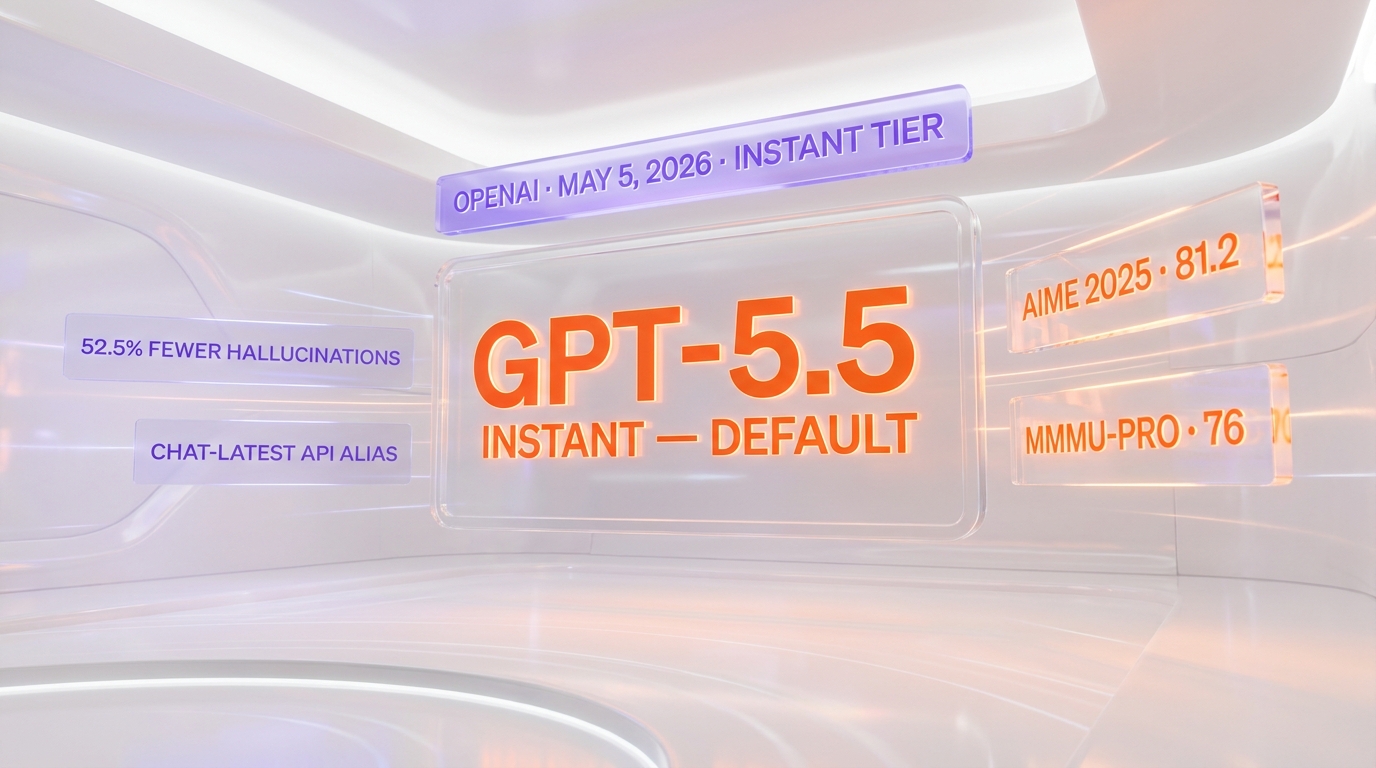

OpenAI replaced ChatGPT's default model with GPT-5.5 Instant on May 5, 2026. Headline benchmarks: AIME 2025 jumped to 81.2 (from 65.4 on GPT-5.3 Instant), MMMU-Pro hit 76 (from 69.2), and on internal evals on high-stakes prompts in medicine, law, and finance the new model produced 52.5% fewer hallucinated claims. The API alias chat-latest now points to GPT-5.5 Instant, and GPT-5.3 Instant is scheduled for deprecation three months from launch.

TL;DR — what shipped on May 5, 2026

- New default ChatGPT model: GPT-5.5 Instant replaces GPT-5.3 Instant for Plus and Pro tiers immediately, with Free, Go, Business, and Enterprise rolling out within weeks.

- AIME 2025: 81.2 (up from 65.4 on GPT-5.3 Instant), a 15.8-point absolute lift (about 24% relative) on hard math reasoning.

- MMMU-Pro: 76 (up from 69.2) — multimodal reasoning gains.

- 52.5% fewer hallucinated claims on internal evals targeting medicine, law, and finance.

- Cross-source memory: ChatGPT can now search past conversations, uploaded files, and connected Gmail in a single answer.

- API alias: chat-latest now resolves to GPT-5.5 Instant. GPT-5.3 Instant remains accessible to paid API users for three months.

- Tone changes: tighter responses, fewer follow-up questions, no more "gratuitous emojis."

Why this is bigger than a typical Instant-tier swap

Default-model swaps inside ChatGPT are usually quiet: the version number moves from N.3 to N.4, the benchmark deltas are within a noise band, and the API alias chat-latest just starts pointing at the new model. May 5's rollout is not that. AIME 2025 going from 65.4 to 81.2 is a 15.8-point absolute lift (about 24% relative) on a benchmark where every point above 70 has historically required an explicit reasoning-tier model (o-series, GPT-5.5 Thinking). MMMU-Pro climbing from 69.2 to 76 puts the Instant tier into the multimodal-reasoning band that previously required premium tiers. The 52.5% hallucination reduction, measured by OpenAI's internal high-stakes prompt suite covering medicine, law, and finance, is the largest single-step accuracy gain on a default ChatGPT swap since the GPT-4 → GPT-4-turbo transition in 2023.

Translation for developers: the model your customers now talk to by default in ChatGPT is closer in capability to a reasoning-class model than to last year's chat default. If your product's defensible edge was "we use GPT-5 Thinking via API and that's why our answers are better," that moat just compressed.

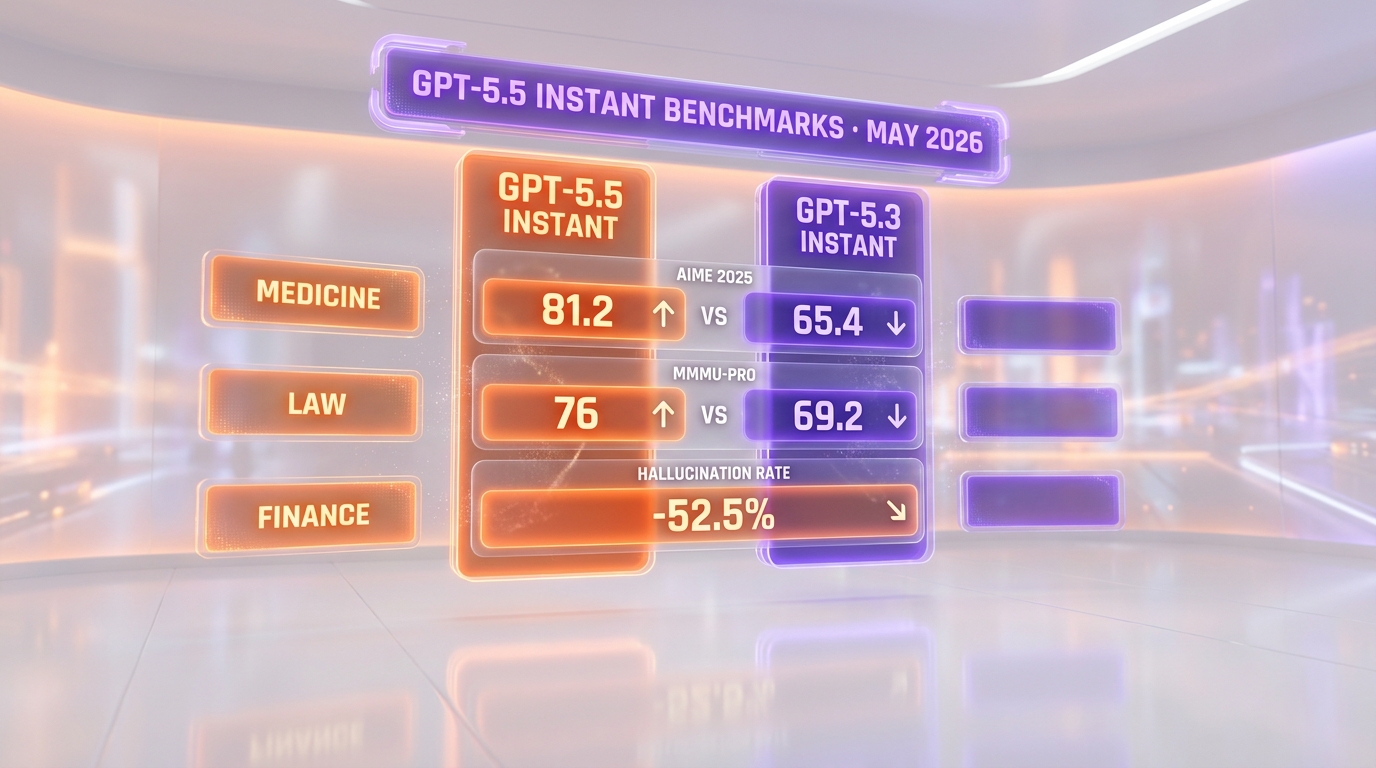

Benchmarks — Instant tier vs. Instant tier

| Benchmark | GPT-5.5 Instant | GPT-5.3 Instant | Delta |
|---|---|---|---|
| AIME 2025 (math reasoning) | 81.2 | 65.4 | +15.8 pts (+24% relative) |
| MMMU-Pro (multimodal reasoning) | 76 | 69.2 | +6.8 pts (+9.8% relative) |
| Hallucinated claims, high-stakes (med/law/finance) | -52.5% | baseline | 52.5% fewer |
| Latency (p50, simple chat) | parity | baseline | no regression disclosed |

OpenAI did not publish full numbers for SWE-bench Verified, GPQA Diamond, or Tau-bench at the launch announcement — those are reasoning-tier benchmarks where the Thinking model continues to be positioned as the right tool. The Instant-tier disclosure scope is intentional: AIME for math, MMMU-Pro for multimodal, and a high-stakes hallucination metric for trust. If you ship product on the Instant tier, those three numbers are the ones that move your evals.
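Absolute points and relative percent are easy to mix up when quoting these numbers. The short sketch below recomputes both from the published scores; the helper name `deltas` is ours, not anything from OpenAI.

```python
def deltas(new: float, old: float) -> tuple:
    """Return (absolute change in points, relative change in percent)."""
    absolute = new - old
    relative = absolute / old * 100
    return absolute, relative


# Scores as reported in the launch coverage above.
aime_abs, aime_rel = deltas(81.2, 65.4)
mmmu_abs, mmmu_rel = deltas(76.0, 69.2)

print(f"AIME 2025: +{aime_abs:.1f} pts, +{aime_rel:.1f}% relative")
print(f"MMMU-Pro:  +{mmmu_abs:.1f} pts, +{mmmu_rel:.1f}% relative")
```

The AIME change works out to 15.8 points, or roughly 24% relative to the old score; it is not a 24-point jump.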

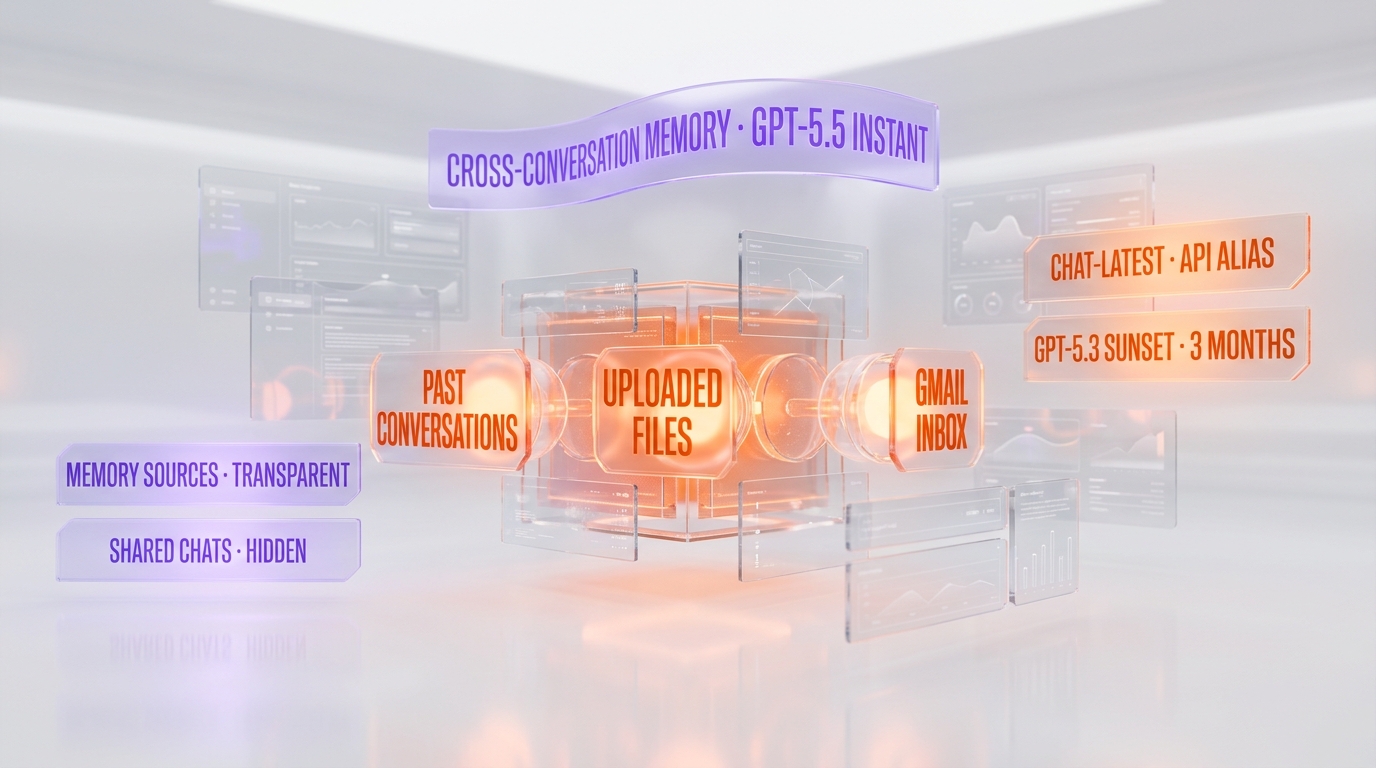

The memory architecture nobody is fully covering yet

The memory feature in the launch is the most architecturally significant change, and the part most outlets are skipping over. GPT-5.5 Instant's memory layer is not a single store — it is a federated retrieval surface across three distinct sources, each with its own permission and visibility model.

Source 1: past conversations

Every prior ChatGPT conversation a user has had is now retrievable as memory context for a current query. Unlike the previous "saved memories" feature, this is full-text searchable across the conversation history, not just an explicit user-facing memory list. The model surfaces which conversation a fact came from in its citations panel, and users can delete or correct outdated sources directly.

Source 2: uploaded files

Files a user has uploaded in any prior session — even sessions weeks old — are searchable as memory context. This is the change that meaningfully affects power users with large document collections: a user who uploaded a contract three weeks ago can now ask a follow-up question without re-uploading.

Source 3: connected Gmail

For users who connect Gmail (opt-in), ChatGPT can pull email content into memory context. This is the deepest integration and the most permission-sensitive — it is opt-in per-account, surfaced clearly in the consent flow, and the source attribution shows which email a fact came from.

Visibility and shared-chat semantics

The transparency layer is built into the UI: every model response that pulled from memory shows a "memory sources" panel naming each retrieved item. Users can delete sources, mark them as outdated, or exclude them from future answers. Critical detail for shared chats: when a user shares a ChatGPT conversation publicly, the memory-sources panel is hidden from recipients. The model still uses memory in its answer, but the public-facing share does not leak the names of files, prior conversations, or emails the answer was built from.
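One way to picture the visibility rules is as two views over the same answer. The sketch below is purely illustrative: the class names, fields, and methods are hypothetical and do not reflect OpenAI's actual data model or any published API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class SourceKind(Enum):
    CONVERSATION = "past_conversation"
    FILE = "uploaded_file"
    GMAIL = "connected_gmail"


@dataclass(frozen=True)
class MemorySource:
    kind: SourceKind
    label: str  # hypothetical display name, e.g. a file name or email subject


@dataclass
class Answer:
    text: str
    sources: List[MemorySource] = field(default_factory=list)

    def owner_view(self) -> dict:
        # The owner sees the answer plus the memory-sources panel.
        return {
            "text": self.text,
            "memory_sources": [(s.kind.value, s.label) for s in self.sources],
        }

    def shared_view(self) -> dict:
        # A public share keeps the answer text but omits source names.
        return {"text": self.text}


answer = Answer(
    text="The notice period is 30 days.",
    sources=[MemorySource(SourceKind.FILE, "contract.pdf")],
)
```

The point of the split is that the answer text is identical in both views; only the provenance metadata is withheld from recipients of a shared link.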

API breaking changes — what developers need to do this week

If you ship on the OpenAI API, three things changed on May 5 that need attention.

1. The chat-latest alias now points to GPT-5.5 Instant

If your code reads model: "chat-latest" in API calls, your traffic is already on GPT-5.5 Instant. For most production apps that will be a quality lift — but if your evals are pinned to GPT-5.3 behavior (token formatting, response length distributions, refusal patterns), you have new baselines to capture. OpenAI's recommended migration is to pin a specific model snapshot string for production traffic and only follow chat-latest in dev/staging.
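A minimal sketch of that pin-in-production policy follows. The environment variable `PINNED_MODEL_SNAPSHOT` and the function `resolve_model` are hypothetical names chosen for illustration, and no real snapshot identifier is assumed; substitute whatever snapshot string OpenAI publishes.

```python
import os
from typing import Optional

ALIAS = "chat-latest"  # the moving alias discussed above


def resolve_model(environment: str, pinned_snapshot: Optional[str] = None) -> str:
    """Pick the model string for an environment.

    Production must use an explicitly pinned snapshot; every other
    environment follows the moving alias so behavior changes surface early.
    """
    if environment == "production":
        # PINNED_MODEL_SNAPSHOT is a hypothetical variable name.
        snapshot = pinned_snapshot or os.environ.get("PINNED_MODEL_SNAPSHOT")
        if not snapshot:
            raise RuntimeError("production requires a pinned model snapshot")
        if snapshot == ALIAS:
            raise RuntimeError("production must not follow the moving alias")
        return snapshot
    return ALIAS
```

Failing loudly when production has no pin is a deliberate choice here: a silent fallback to the alias would reintroduce exactly the drift the pin is meant to prevent.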

2. GPT-5.3 Instant deprecation: three-month clock

OpenAI confirmed GPT-5.3 Instant remains accessible to paid API customers for three months from launch — that is, through approximately August 5, 2026. After that, calls to the gpt-5.3-instant snapshot string will return a deprecation error and need to be re-pointed. If your customer-facing product depends on stable model behavior, plan an explicit migration window before mid-July to avoid weekend-pager surprises.
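The calendar math can be made explicit. The sketch below derives the approximate shutoff date from the launch date and backs off a safety buffer; the three-week buffer is our assumption, picked so the deadline lands in mid-July, and the exact shutoff date should be confirmed against OpenAI's deprecation notice.

```python
import calendar
from datetime import date, timedelta


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping the day to the target month's length."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


LAUNCH = date(2026, 5, 5)
shutoff = add_months(LAUNCH, 3)             # approximate end of GPT-5.3 Instant access
migrate_by = shutoff - timedelta(weeks=3)   # assumed three-week safety buffer


def days_left(today: date, deadline: date = migrate_by) -> int:
    """Days remaining until the internal migration deadline."""
    return (deadline - today).days
```

With these inputs `shutoff` is August 5, 2026 and `migrate_by` is July 15, 2026.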

3. Tone changes that affect output parsing

OpenAI explicitly tuned GPT-5.5 Instant to "avoid things that can make responses feel cluttered, like gratuitous emojis." For most apps that is pure upside, but for downstream pipelines that pattern-match on emoji as a structural delimiter (yes, those exist — particularly in customer-support automations), the regex needs an audit. The model is also tuned for fewer follow-up questions and tighter response length distribution — eval prompts that rely on a specific token count or response shape may regress and need re-prompting.
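A regex audit for emoji-delimited parsing might start like the sketch below. The emoji character ranges are a rough approximation rather than a complete Unicode emoji definition, and both function names are hypothetical.

```python
import re

# Approximate emoji ranges (pictographs, emoticons, misc symbols, dingbats),
# optionally followed by a variation selector. Not an exhaustive definition.
EMOJI = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]\uFE0F?")


def pattern_depends_on_emoji(pattern: str) -> bool:
    """Flag a regex source string that contains a literal emoji."""
    return EMOJI.search(pattern) is not None


def split_sections(text: str) -> list:
    """Split on emoji delimiters when present, otherwise on blank lines."""
    if EMOJI.search(text):
        parts = EMOJI.split(text)
    else:
        parts = re.split(r"\n\s*\n", text)
    return [p.strip() for p in parts if p.strip()]
```

Running `pattern_depends_on_emoji` over the regex strings in a pipeline gives a quick list of parsers to review, and the blank-line fallback in `split_sections` shows one way to keep a parser working when the model stops emitting the delimiter.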

How memory affects context-window math

The cross-source memory feature does not extend the model's context window — GPT-5.5 Instant retains the standard Instant-tier context size. What it does is shift the retrieval cost of "remembering" from the developer's RAG pipeline into OpenAI's hosted layer. For app developers building on top of the ChatGPT-style consumer API surface (the new memory parameter), that is a meaningful operational simplification: federated search across past chats, files, and Gmail is now an OpenAI-side concern, not your vector DB's. For developers building fully custom RAG pipelines on the raw chat completions API, behavior is unchanged — your retrieval stack still owns the memory layer.

Sister story — and the cyber tier

This launch is paired with the still-unfolding GPT-5.5 family rollout that began with the Cyber tier. We covered the GPT-5.5-Cyber Trusted Access U-turn separately — Sam Altman walked back his "fear-based marketing" critique of Anthropic's Mythos gating and adopted the same vetted-defenders model for GPT-5.5-Cyber. Read the full breakdown at GPT-5.5 Cyber restricted access — OpenAI U-turn. The Instant-tier launch is the consumer-facing companion to the Cyber announcement, and together they confirm the GPT-5.5 family is rolling out tier-by-tier rather than as a single drop.

What this means for the competitive landscape

The most direct impact is on Anthropic's Claude Haiku 4.5 (the closest tier comparison) and Google's Gemini 3 Flash. On the AIME math benchmark, Haiku 4.5 currently scores in the high-70s and Gemini 3 Flash in the mid-70s — GPT-5.5 Instant's 81.2 puts the cheapest OpenAI tier ahead of both on hard reasoning. The hallucination metric is harder to compare cross-vendor (each lab uses its own internal eval suite), but a 52.5% relative reduction is significant enough that high-stakes vertical apps — health, legal, fintech — will need to re-run their accuracy regression suites against the new default.

The previous OpenAI default model swap, retiring GPT-4o in February 2026, generated a user backlash so significant that petitions tried to preserve it. OpenAI proceeded with deprecation anyway. The May 5 swap is being framed differently: OpenAI is highlighting tone improvements (tighter responses, fewer emojis, reduced follow-up question count) precisely to address the "the new model feels cold" complaint that surfaced in February.

What to watch next

- Free-tier rollout completion. ChatGPT Free, ChatGPT Go, ChatGPT Business, and Enterprise tiers are gaining GPT-5.5 Instant access "within weeks." Watch the chat-latest alias rollout cadence on those tiers as the truer signal of full deprecation timing.

- GPT-5.5 Thinking refresh. An Instant-tier upgrade typically precedes a Thinking-tier upgrade by 30 to 60 days. Expect a GPT-5.5 Thinking refresh announcement in early-to-mid Q3 2026.

- Memory-source provenance API. The federated memory feature ships in the consumer ChatGPT product first; OpenAI has not yet exposed it as a developer-facing API surface. Watch DevDay 2026 announcements for the API surface.

- Hallucination eval audits. The 52.5% reduction is OpenAI-internal. Independent benchmarks (HaluEval, TruthfulQA, FactScore) will publish their numbers within four to six weeks — those are the figures regulated industries will cite.

- The next GPT-4o-style backlash test. OpenAI's tone tuning is calibrated to soften the deprecation friction. Whether it works will be visible in r/ChatGPT and X within ten days.

The Planet Tools take

GPT-5.5 Instant is not a typical default-model swap — it is the moment the cheapest tier starts beating last year's reasoning tier on hard math, while shipping a federated memory layer that quietly absorbs a chunk of what RAG pipelines used to do. For consumer ChatGPT users the experience improvement is incremental and well-tuned. For API developers the urgent action is mundane: pin your model snapshot string, capture new evals, and put the August 5 GPT-5.3 deprecation in your calendar this week. The structural takeaway — that Instant-tier capability now overlaps with where reasoning-tier capability lived 12 months ago — is the one to remember when planning Q3 product roadmaps.

Frequently asked questions

What is GPT-5.5 Instant and when did it launch?

GPT-5.5 Instant is OpenAI's new default model for ChatGPT, launched on May 5, 2026. It replaces GPT-5.3 Instant for Plus and Pro tier users immediately, with rollout to Free, Go, Business, and Enterprise tiers progressing within weeks. The Instant tier is the latency-optimized chat default, distinct from the GPT-5.5 Thinking reasoning tier.

How big is the AIME score jump?

GPT-5.5 Instant scores 81.2 on AIME 2025, up from 65.4 on GPT-5.3 Instant. That is a 15.8 absolute-point lift, or approximately 24% relative improvement. Historically, AIME scores above 70 required a reasoning-class model, so the jump puts default-tier capability into a band previously reserved for premium tiers.

What does the 52.5% hallucination reduction actually measure?

OpenAI ran an internal evaluation suite of high-stakes prompts spanning medicine, law, and finance — domains where a confidently wrong answer carries the highest user harm. GPT-5.5 Instant produced 52.5% fewer hallucinated claims on that suite than GPT-5.3 Instant. The metric is OpenAI-internal; independent benchmarks like HaluEval and TruthfulQA will publish their own comparable numbers in the coming weeks.
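To make "52.5% fewer" concrete, the sketch below applies the relative-reduction formula to hypothetical counts. The 200 and 95 figures are invented for illustration only; OpenAI has not published raw counts.

```python
def relative_reduction(baseline_count: int, new_count: int) -> float:
    """Percent reduction in hallucinated claims relative to the baseline."""
    return (baseline_count - new_count) * 100 / baseline_count


# Hypothetical counts over the same prompt suite, for illustration only.
baseline_claims = 200   # assumed hallucinated claims from the older model
new_claims = 95         # assumed hallucinated claims from the newer model

reduction = relative_reduction(baseline_claims, new_claims)
print(f"{reduction:.1f}% fewer hallucinated claims")
```

The figure is relative to the old model's error count, so it says nothing on its own about the absolute hallucination rate of either model.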

How does GPT-5.5 Instant memory work across files, conversations, and Gmail?

GPT-5.5 Instant exposes a federated retrieval layer across three sources: past ChatGPT conversations (full-text searchable, not just saved memories), uploaded files from prior sessions, and connected Gmail (opt-in). Each model response surfaces a memory-sources panel showing which items were used, and users can delete or correct outdated sources directly from that panel.

What does the chat-latest API alias point to now?

As of May 5, 2026, the chat-latest API alias resolves to GPT-5.5 Instant. Production apps following chat-latest are now serving GPT-5.5 Instant traffic. OpenAI's recommended best practice is to pin specific model snapshot strings for production while letting chat-latest follow on dev and staging.

When is GPT-5.3 Instant deprecated?

GPT-5.3 Instant remains accessible to paid OpenAI API customers for three months from the May 5 launch, putting the deprecation around August 5, 2026. After that, calls to the GPT-5.3 Instant snapshot string will return a deprecation error and need to be re-pointed to GPT-5.5 Instant or another model. Plan an explicit migration window before mid-July.

Are there breaking changes for developers?

Three things changed. First, chat-latest moved to GPT-5.5 Instant — capture new evals if you use the alias in production. Second, GPT-5.3 Instant has a three-month deprecation clock — schedule the migration. Third, response tone is tighter with fewer emojis and follow-up questions — pipelines that pattern-match on emoji or specific response shape may need a regex audit and re-prompting.

Does the memory feature extend the context window?

No. GPT-5.5 Instant retains the standard Instant-tier context window size. The memory feature is a federated retrieval layer over past conversations, uploaded files, and connected Gmail — what it changes is that the retrieval cost of remembering moves from the developer's RAG pipeline to OpenAI's hosted layer. For raw chat-completions API users building custom RAG, behavior is unchanged.

How does GPT-5.5 Instant compare to Claude Haiku 4.5 and Gemini 3 Flash?

On AIME, Claude Haiku 4.5 sits in the high-70s and Gemini 3 Flash in the mid-70s — GPT-5.5 Instant's 81.2 puts the cheapest OpenAI default tier ahead of both on hard math reasoning. The hallucination figure is harder to cross-compare because each lab uses internal evals, but the relative reduction is large enough that vertical apps in regulated industries should re-run their accuracy regression suites.

Why did OpenAI tune GPT-5.5 Instant to use fewer emojis and shorter responses?

The tone tuning is a direct response to the February 2026 GPT-4o deprecation backlash, when users described the replacement model as feeling "cold" and "less personal." OpenAI's calibration for the May 5 launch foregrounds tone improvements (tighter responses, fewer follow-up questions, no gratuitous emojis) to soften the user-experience friction of replacing a default model that ChatGPT users had built habits around.