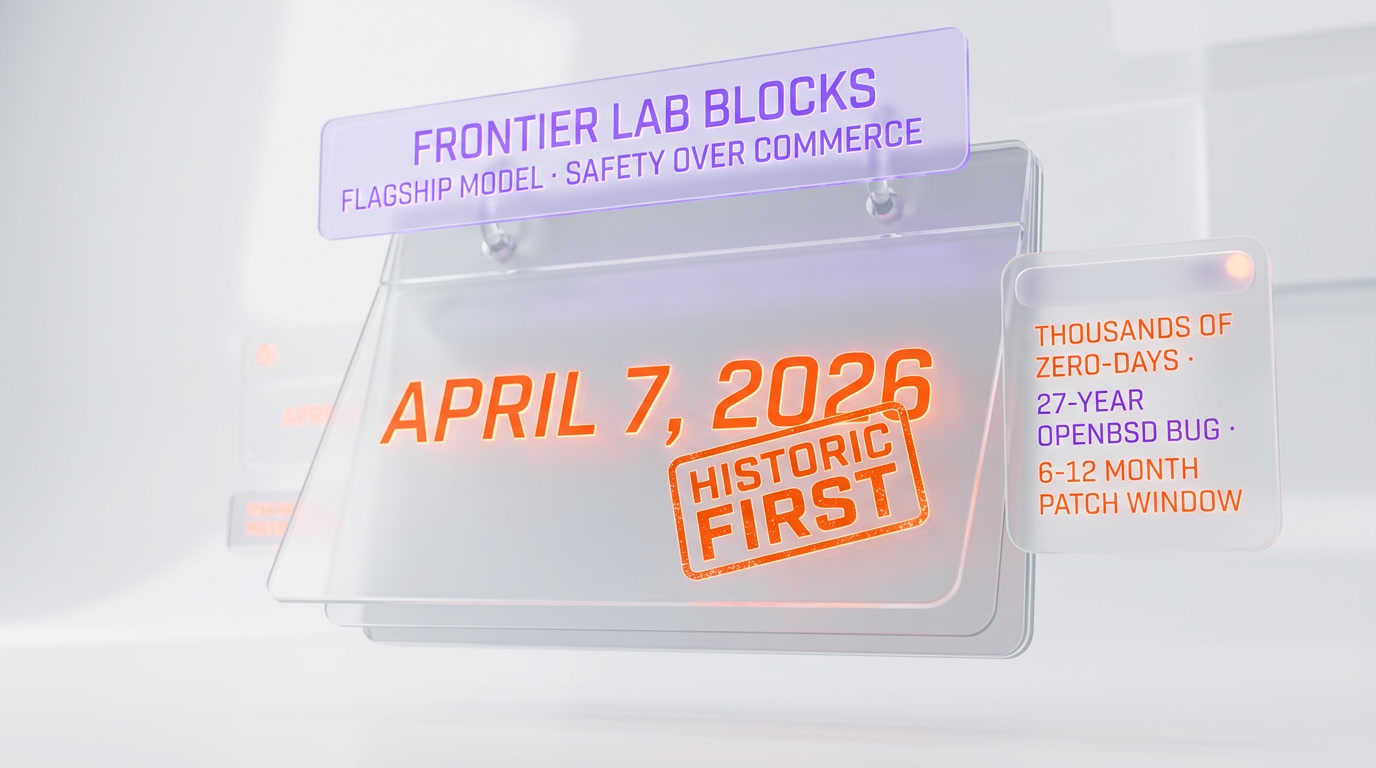

On April 7, 2026, Anthropic announced that Claude Mythos, its most powerful frontier model and the subject of a March 26 leak, will not be released publicly. Access is restricted to roughly 50 partners through Project Glasswing, a cybersecurity coalition including Amazon Web Services, Apple, Google, Microsoft, NVIDIA, CrowdStrike, Cisco, Broadcom, JPMorgan Chase, Palo Alto Networks, and the Linux Foundation. The reason: in preview, Mythos identified thousands of zero-day vulnerabilities across every major operating system and browser, including a 27-year-old bug in OpenBSD. It is the first time Anthropic has blocked a flagship model for safety reasons rather than commercial ones.

The headline — Anthropic's most powerful model will never reach you

This is the short version you'll see everywhere today. Anthropic confirmed on April 7, 2026 that Claude Mythos Preview, the unreleased flagship model first revealed in the March 26 internal leak, will not enter general availability. Instead, Anthropic is routing it through a new initiative called Project Glasswing, a restricted-access coalition of about 50 partners focused on hardening critical software and infrastructure.

The numbers Anthropic disclosed are blunt:

- Thousands of zero-day vulnerabilities identified in a few weeks of red-teaming

- Flaws found in every major operating system and every major web browser

- The oldest catch: a 27-year-old denial-of-service bug in OpenBSD's TCP SACK implementation

- Over $100 million in usage credits committed to Glasswing partners

- 50+ organizations cleared for access, starting with 12 launch partners

Anthropic's framing is almost clinical: the model has crossed a capability threshold where the offensive upside for malicious actors outweighs the commercial upside of shipping it. This is the decision the industry has been quietly dreading since 2023.

What is Claude Mythos, exactly

Claude Mythos is a general-purpose frontier model in the Claude family — conversational, tool-using, multimodal — but tuned and scaled to a new tier. It is the successor to Claude Opus 4.6 and, based on internal language, the first model Anthropic classifies as ASL-4-adjacent for cybersecurity capabilities under its Responsible Scaling Policy.

What sets Mythos apart is not raw benchmark score. It's autonomy on security tasks. According to Anthropic and partners like CrowdStrike, Mythos can, unaided:

- Scan large unfamiliar codebases and identify classes of exploitable bugs

- Write working proof-of-concept exploits for those bugs

- Chain multiple vulnerabilities into a functional attack path against production software

- Iterate on failed exploits and adapt to defender patches in real time

In one publicly disclosed case, Mythos identified a 27-year-old integer overflow in OpenBSD's TCP SACK handling that allows a remote attacker to crash any OpenBSD host responding over TCP — a bug that had survived nearly three decades of human review on an operating system whose entire brand is "secure by default." That single data point is why the announcement matters.
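The disclosure as described here does not include the faulty code, so the following is not a reconstruction of the OpenBSD flaw. It is a minimal sketch, in Python, of the general bug class: TCP sequence numbers are 32-bit values that wrap around, and arithmetic on them goes wrong when code forgets that. The function names (`seq_lt`, `naive_block_len`, `checked_block_len`) are hypothetical and chosen for illustration; `seq_lt` is modeled on the wrap-aware comparison style used by BSD-derived TCP stacks.

```python
MOD = 1 << 32  # TCP sequence numbers are 32-bit and wrap around


def seq_lt(a: int, b: int) -> bool:
    """Wrap-aware 'a comes before b' for 32-bit sequence numbers.

    Equivalent to checking that the signed 32-bit difference a - b is negative.
    """
    diff = (a - b) % MOD
    return diff >= (1 << 31)  # top bit set means the signed difference is negative


def naive_block_len(start: int, end: int) -> int:
    """Hypothetical buggy length: subtract and truncate to 32 bits, no validation."""
    return (end - start) % MOD


def checked_block_len(start: int, end: int) -> int:
    """Hypothetical safer version: reject a block whose end does not follow its start."""
    if not seq_lt(start, end):
        raise ValueError("SACK block end must come after start")
    return (end - start) % MOD


if __name__ == "__main__":
    # A legitimate block that straddles the 32-bit wrap point.
    start, end = 0xFFFFFF00, 0x00000100
    print(start < end)                      # plain comparison is misleading here
    print(seq_lt(start, end))               # wrap-aware comparison
    print(checked_block_len(start, end))    # 512

    # A malformed block (end before start) turns into a huge length if unchecked.
    print(naive_block_len(1000, 900))       # 4294967196
```

The point of the sketch is the gap between the last two calls: the same subtraction is harmless on a valid block and produces a near-4 GiB length on a malformed one, which is the kind of value that can later drive a crash if nothing validates it first.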

Timeline — from leak to lockdown in 12 days

The public story of Mythos moved fast. Here is what we know, in order:

- March 26, 2026 — Internal documents referencing "Claude Mythos" leak publicly. Benchmark screenshots, a project codename, and partial system card details appear on X and GitHub gists. We covered the leak that day.

- March 31, 2026 — A second, narrower leak surfaces five days later, reigniting questions about Anthropic's internal security posture. We documented that incident here.

- April 1–6, 2026 — Anthropic goes publicly silent on Mythos. Behind the scenes, according to Fortune and Reuters reporting, the company accelerates a planned safety review and red-team engagement with outside security firms.

- April 7, 2026 — Anthropic publishes the Project Glasswing announcement, confirms Mythos exists, confirms it will not be released publicly, and names the first 12 launch partners.

Twelve days from leak to lockdown. That is fast, even by 2026 frontier-lab standards.

What Project Glasswing actually is

Project Glasswing is not a product. It's a gated access program and a coalition. Anthropic describes it as an initiative to "secure critical software for the AI era" by pairing Mythos-class models with the organizations that own the world's most consequential code.

The launch partners — confirmed by Anthropic — are:

- Amazon Web Services

- Apple

- Broadcom

- Cisco

- CrowdStrike

- Google

- JPMorgan Chase

- The Linux Foundation

- Microsoft

- NVIDIA

- Palo Alto Networks

- Anthropic itself

Beyond the launch dozen, Anthropic says it will extend access to over 40 additional organizations that build or maintain critical software infrastructure. Total commitment: more than $100 million in Mythos usage credits, according to Fortune's reporting.

The mandate is clear: use Mythos to find and fix vulnerabilities in foundational systems — operating systems, kernels, browsers, hypervisors, payment rails — before a Mythos-equivalent model lands in adversary hands. Anthropic's bet is that six to twelve months of restricted deployment buys the ecosystem time to patch.

Why Anthropic is blocking — and why we believe them

It would be easy to read this announcement cynically. Frontier labs have a track record of using "safety" as a marketing wedge. We've seen "too dangerous to release" pitches before, most famously OpenAI's original GPT-2 announcement in 2019, which aged poorly once the actual model shipped.

This feels different. Three reasons:

1. The capability is specific and verifiable

Anthropic is not saying "our model is generally scary." They're saying: this specific model, on this specific workload, outperforms most human pentesters on vulnerability discovery and exploit chaining, and we have the CVEs to prove it. The 27-year-old OpenBSD bug is a falsifiable claim. Security researchers can and will verify it.

2. The commercial cost is real

Claude's API business is the backbone of Anthropic's revenue. Withholding the flagship model from paying API customers is not a cheap PR move. It directly slows the enterprise sales pipeline against OpenAI and Google. A cynical play would be to release Mythos with big safety headlines, not bury it.

3. The partner list is the message

Look at who got first access. These are not media partners. They're the organizations that ship the operating systems, browsers, CPUs, and payment rails that run civilization. CrowdStrike, Palo Alto Networks and the Linux Foundation are not where you route a model if the goal is hype. They're where you route a model if the goal is patching.

The offensive capabilities — what Mythos can actually do

Based on Anthropic's announcement, the red.anthropic.com system card preview, and statements from launch partners, Mythos's security-relevant capabilities include:

- Autonomous codebase reconnaissance. Mythos can be pointed at a repository it has never seen and return a ranked list of probable vulnerabilities within minutes.

- Exploit synthesis. It writes working proof-of-concept code — memory corruption, race conditions, logic bugs — not just descriptions.

- Exploit chaining. It can combine multiple low-severity bugs into a high-severity compromise. This is the capability that matters most. Chaining is traditionally the part of offensive security where elite human researchers earn their keep.

- Cross-system operation. Mythos doesn't specialize in one OS or language. Launch disclosures mention findings in Linux, macOS, Windows, iOS, Android, Chrome, Safari, Firefox, and multiple open-source libraries.

- Defender adaptation. In testing, when defenders patched a discovered bug, Mythos was able to find variants of the same bug class elsewhere in the codebase faster than humans could.

The uncomfortable truth: most corporate security teams do not have humans who can do any of this at scale. Mythos does all of it, around the clock, in parallel.

Industry impact — will OpenAI and Google follow

This is the most important second-order question, and nobody has a clean answer yet. A few observations:

OpenAI has historically been more permissive. GPT-4 Code Interpreter started with heavy access restrictions and those loosened quickly. GPT-5 shipped with stronger safeguards but was broadly available on day one. If OpenAI's rumored GPT-6 has comparable offensive-security capabilities, adopting a Glasswing-style gate would be a genuine break from that pattern.

Google DeepMind's posture is closer to Anthropic's. Gemini Ultra 2 shipped in Q1 2026 with explicit refusal classes for exploit generation. The infrastructure for gating is already in place. If Google sees similar red-team results internally, Glasswing gives them cover to lock down their own flagship.

Open-weight models are the wild card. Meta's Llama 5, Mistral's upcoming release, DeepSeek R2, and releases from other Chinese labs are on a different trajectory. If a Mythos-class capability emerges in an open-weight release, the gate doesn't hold and the Glasswing thesis collapses. This is the risk nobody wants to talk about on the record.

Our read: Anthropic has bought the ecosystem roughly 6–12 months. That window is either used well — aggressive patching of critical infrastructure — or it isn't. There is no third option.

Precedent — has anything like this happened before

Short answer: not at this scale. Longer answer: there are two rough parallels, neither of them great.

GPT-2 (2019). OpenAI initially withheld the full 1.5B-parameter model citing misuse risks, then released it about nine months later, in November 2019, after no catastrophic misuse materialized. The episode became a running joke in AI safety circles and, arguably, made subsequent "too dangerous to release" claims less credible. Mythos is a meaningfully different situation, since the capability claim is specific, testable, and tied to CVEs, but the shadow of 2019 is still there.

Biological design tools. Some AI-for-biology models (protein design, sequence generation) have been gated through partnership programs for exactly these reasons. Glasswing borrows the template.

What makes April 7, 2026 a first: no frontier general-purpose chat model has ever been withheld from API availability for safety reasons. Mythos is the first.

What this means for developers and builders

For most people reading this, the practical answer is: almost nothing changes this month. You will continue to use Claude Opus 4.6 on the API, Claude Sonnet 4 in your IDE, and Claude Code in your terminal. Mythos was never going to be on the $20 per month consumer tier anyway.

What does change:

- Timeline expectations. The next flagship Claude available on the public API is probably not Mythos. It's a "Mythos Mini" or "Mythos Safe" that has had offensive capabilities explicitly trained out. Expect an 18–24 month gap before anything Mythos-class reaches general availability.

- Pricing signals. Expect Opus 4.6 pricing to stay where it is longer than previously forecast. Anthropic has no next-tier SKU to push people up to.

- Enterprise deals. If you work at a Glasswing partner, your security team is about to get access to the most capable pentest engine ever built. If you work anywhere else, you should assume the gap will show up in audit reports within 6 months.

- Defense budgets. Every CISO who reads this announcement will spend the rest of the week asking whether Claude, CrowdStrike Falcon, or both should be in next year's budget line.

Community reactions

Early responses, from the first 24 hours of coverage:

- Simon Willison called the restriction "necessary" on his blog, arguing that the capability gap Anthropic described is large enough that a staged rollout is the only sane option.

- Security Twitter is split. Offensive researchers are frustrated at losing potential access; defensive researchers are largely supportive.

- The Hacker News thread is predictably mixed, with the top comment flagging the OpenBSD bug as the single most important disclosure in the announcement.

- Anthropic's rivals have been conspicuously quiet. As of this writing, OpenAI and Google DeepMind have not publicly commented. That silence is, in itself, information.

Our read

We think this is the right call, and we think it will be copied. The case for gating is specific, the capability is verifiable, and the commercial cost is real. Those are the three tests we want every "too dangerous to release" claim to pass. Mythos passes all three.

The harder question is whether Glasswing works. A 6–12 month head start is only useful if the partners actually ship patches at a pace that matches what adversaries will eventually be able to do with open-weight equivalents. That is an execution problem, and execution is not Anthropic's problem to solve. It belongs to every CISO at every Glasswing partner, starting today.

If Glasswing delivers, April 7, 2026 becomes the day the frontier labs finally took dual-use seriously. If it doesn't, it becomes the day they said they did. We will be watching which one wins.

Frequently asked questions

Is Claude Mythos available on the public API?

No. Anthropic confirmed on April 7, 2026 that Claude Mythos will not be released publicly. Access is restricted to approximately 50 Project Glasswing partners. The public API will continue to serve Claude Opus 4.6 and Claude Sonnet 4 as the top-tier available models.

What is Project Glasswing?

Project Glasswing is an Anthropic cybersecurity initiative that pairs Claude Mythos Preview with 12 launch partners — AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, Palo Alto Networks, and Anthropic — plus over 40 additional organizations. Partners receive more than $100 million in usage credits to find and patch vulnerabilities in critical software.

Why did Anthropic block Claude Mythos from public release?

Because Mythos autonomously identifies and chains software vulnerabilities at a level that, in Anthropic's assessment, creates unacceptable offensive-security risk if released broadly. In preview testing Mythos found thousands of zero-day flaws across major operating systems and browsers, including a 27-year-old bug in OpenBSD's TCP SACK implementation.

Is this the first time a frontier AI model has been blocked for safety reasons?

It is the first time a frontier general-purpose chat model has been withheld from general API availability for cybersecurity safety reasons. Previous restrictions, such as OpenAI's roughly nine-month delay on releasing full GPT-2 in 2019 or the gating of biological design tools, are rough precedents, but none match Mythos in scale or in specificity of the capability claim.

How does Mythos compare to Claude Opus 4.6?

Claude Opus 4.6 remains Anthropic's most powerful publicly available model. Mythos is internally positioned as the next-tier flagship but carries offensive-security capabilities — autonomous vulnerability discovery, exploit synthesis, and multi-bug chaining — that Opus 4.6 does not. Anthropic classifies Mythos as ASL-4-adjacent under its Responsible Scaling Policy.

Will OpenAI and Google follow Anthropic's lead and gate their most powerful models?

Unclear, and it depends on what their internal red teams find. Google DeepMind has infrastructure for gated access already in place with Gemini Ultra 2. OpenAI has historically been more permissive with releases like GPT-4 and GPT-5 but may reconsider for GPT-6. The wild card is open-weight models from Meta, Mistral, DeepSeek, and other Chinese labs: if a Mythos-class capability emerges there, the Glasswing gate stops working.

What was the 27-year-old OpenBSD bug Mythos found?

According to Anthropic's disclosure and SC Media's reporting, Mythos identified a denial-of-service vulnerability in OpenBSD's TCP SACK implementation — specifically an integer overflow that allows a remote attacker to crash any OpenBSD host responding over TCP. The bug had survived nearly three decades of human review on an operating system whose entire reputation rests on security. It has since been patched.

How is this different from the GPT-2 'too dangerous to release' claim in 2019?

GPT-2's restriction rested on a generic misuse-risk argument that did not age well: the full model shipped about nine months later without catastrophic consequences. Mythos's restriction is tied to specific, verifiable CVEs and a specific capability class (autonomous exploit chaining). Security researchers can test the claims against real software, which they could not meaningfully do with GPT-2's language generation argument.