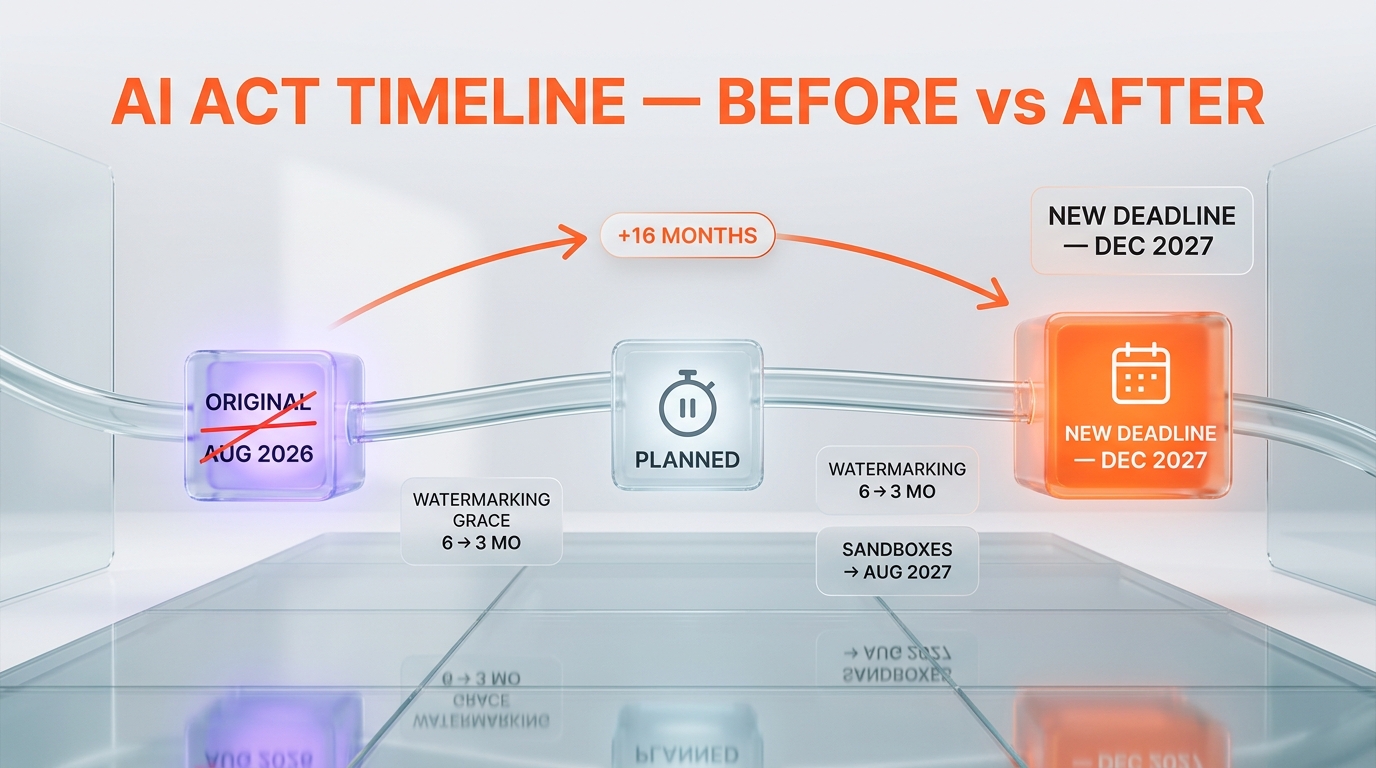

The regulation that was supposed to lead the world just got delayed. On 7 May 2026, the EU Council and the European Parliament agreed to push high-risk AI obligations from August 2026 to December 2027 — a 16-month slip — while granting industrial AI applications a near-total carve-out under separate machinery rules. Here's what changed, who lobbied, and what builders should actually do this quarter.

Six months ago, the AI Act was Brussels' moonshot. The first comprehensive AI law in the world. A model the EU hoped Washington, London, and Tokyo would copy. Today, after a German-led industrial offensive and sustained pressure from Washington, the Council and Parliament agreed to simplify and streamline the rules — a polite phrase that hides a meaningful retreat. High-risk obligations slip 16 months. Most factory-floor AI moves out of scope. Sandboxes get extended. The headline win for civil society — explicit bans on sexualized deepfakes and AI-generated child sexual abuse material (CSAM) — is real, but it sits next to a rollback that was unthinkable when the original text passed.

This is the story of how the EU's first-mover advantage on AI regulation eroded in one quarter, and what it changes for anyone shipping AI products in Europe right now.

What the 7 May deal actually changes

The political agreement reached in the early hours of 7 May 2026 between the Council of the EU and the European Parliament does not repeal the AI Act. It rewrites the implementation calendar and narrows several scope boundaries. The official Commission framing — "adjust the timeline for the application of high-risk rules to a maximum of 16 months" — is technically accurate and politically generous.

Concretely, four buckets of obligations move:

- High-risk system obligations (Annex III categories: employment, education, critical infrastructure, law enforcement, migration, justice) shift from August 2026 to December 2027. Standards drafting at CEN-CENELEC was running late, and the Commission used that gap as the official justification.

- Watermarking grace period for generative AI providers compresses from 6 months to 3 months. Apart from the two new prohibitions, this is the only tightening in the package, and it is a direct response to public outrage over abusive content generated by Elon Musk's Grok.

- National regulatory sandboxes stay open until August 2027, with broader access from 2028 onward. SMEs and small mid-cap companies (SMCs) get reduced documentation burdens.

- Industrial AI carve-out: AI embedded in factory machinery shifts from the AI Act to the existing Machinery Regulation. Medical devices stay in scope. This is the carve-out Siemens, Bosch, and Schneider Electric have been pushing for since 2024.

Two new prohibitions land at the same time, and both deserve attention. AI-generated sexualized deepfakes of identifiable individuals and AI-generated CSAM are now explicitly prohibited, joining the small list of practices banned outright under Article 5. The political timing is not subtle: the Grok controversy gave EU institutions cover to add prohibitions while loosening compliance burdens elsewhere. As the official framing puts it, the EU agreed to "simplify AI rules to boost innovation and ban 'nudification' apps to protect citizens" — a single sentence that captures the trade.

Who pushed for the rollback, and why it worked

The rollback did not come from nowhere. It is the result of an 18-month industrial campaign led by Germany, with active support from France and the Netherlands, and sustained pressure from the United States.

The German argument was framed around "double regulatory burden" — companies like Siemens AG and Robert Bosch GmbH argued that AI inside an industrial robot was already covered by the Machinery Regulation, and adding AI Act obligations on top would impose duplicate conformity assessments without improving safety. The German Federal Ministry for Economic Affairs took this argument to Brussels through 2025 and 2026, and it landed. Most factory-floor AI is now covered under the 2023 Machinery Regulation rather than the AI Act.

From Washington, the pressure was less subtle. The US Commerce Department's Center for AI Standards and Innovation (CAISI), the framework under which Google, Microsoft, and xAI agreed to pre-deployment evaluations, was repeatedly cited by US officials as evidence that voluntary cooperation works and that the EU's prescriptive approach risks splintering the transatlantic AI market. EU industry associations amplified this line in Brussels through Q1 2026.

Civil society pushed back. Groups including European Digital Rights (EDRi) and AlgorithmWatch argued the original timelines were "necessary to protect against documented near-term harms" — predictive policing, biometric surveillance, automated benefit denial. Their position lost. The Commission's framing — "innovation-friendly environment while protecting citizens" — accommodates the industry win and the deepfake/CSAM ban in one sentence, which is exactly what political compromises look like when the regulator is the one giving ground.

The new compliance calendar

Here is what the calendar looks like after 7 May 2026, side by side with the original schedule. Builders shipping in the EU should print this and pin it to a wall.

| Obligation | Original date or regime | New date or regime | Net change |
|---|---|---|---|
| Prohibited practices (Article 5) | February 2025 | February 2025 | Already in force |
| Deepfake / CSAM AI prohibitions (new) | n/a | August 2026 | New ban |
| GPAI (general-purpose AI) obligations | August 2025 | August 2025 | Already in force |
| Watermarking grace period | 6 months | 3 months | Tightened |
| High-risk Annex III systems | August 2026 | December 2027 | +16 months |
| High-risk Annex I systems | August 2027 | August 2027 | Unchanged |
| National sandboxes operational | August 2026 | August 2027 | +12 months |
| Factory-floor AI (Machinery Reg scope) | AI Act | Machinery Reg | Carved out |

Two things matter in this table. First, prohibited practices and GPAI obligations were already live before the deal — the rollback does not touch them. If you ship a foundation model in the EU today, your transparency, copyright, and risk-evaluation obligations remain. Second, Annex I high-risk systems (covered by existing product safety legislation — toys, cars, lifts, medical devices) keep their August 2027 deadline. The 16-month slip applies only to Annex III, which is the bigger and more contested category.
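For teams that want the calendar in a form they can check rather than pin to a wall, here is a minimal Python sketch of the slip arithmetic. The dates come from the table; the article gives month and year only, so the day-of-month and the helper names (`CALENDAR`, `months_between`, `slip_report`) are illustrative assumptions.

```python
from datetime import date

# (original date, new date) per obligation, as reported in the table.
# Day-of-month is a placeholder: the article states month and year only.
CALENDAR = {
    "High-risk Annex III systems": (date(2026, 8, 1), date(2027, 12, 1)),
    "High-risk Annex I systems": (date(2027, 8, 1), date(2027, 8, 1)),
    "National sandboxes operational": (date(2026, 8, 1), date(2027, 8, 1)),
}


def months_between(start: date, end: date) -> int:
    """Whole calendar months from start to end, ignoring the day-of-month."""
    return (end.year - start.year) * 12 + (end.month - start.month)


def slip_report(calendar: dict) -> dict:
    """Map each obligation to the number of months its date moved."""
    return {name: months_between(old, new) for name, (old, new) in calendar.items()}


if __name__ == "__main__":
    for name, slip in slip_report(CALENDAR).items():
        print(f"{name}: +{slip} months")
```

Running it reproduces the "Net change" column for the three dated rows: 16 months for Annex III, 0 for Annex I, and 12 for sandboxes.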

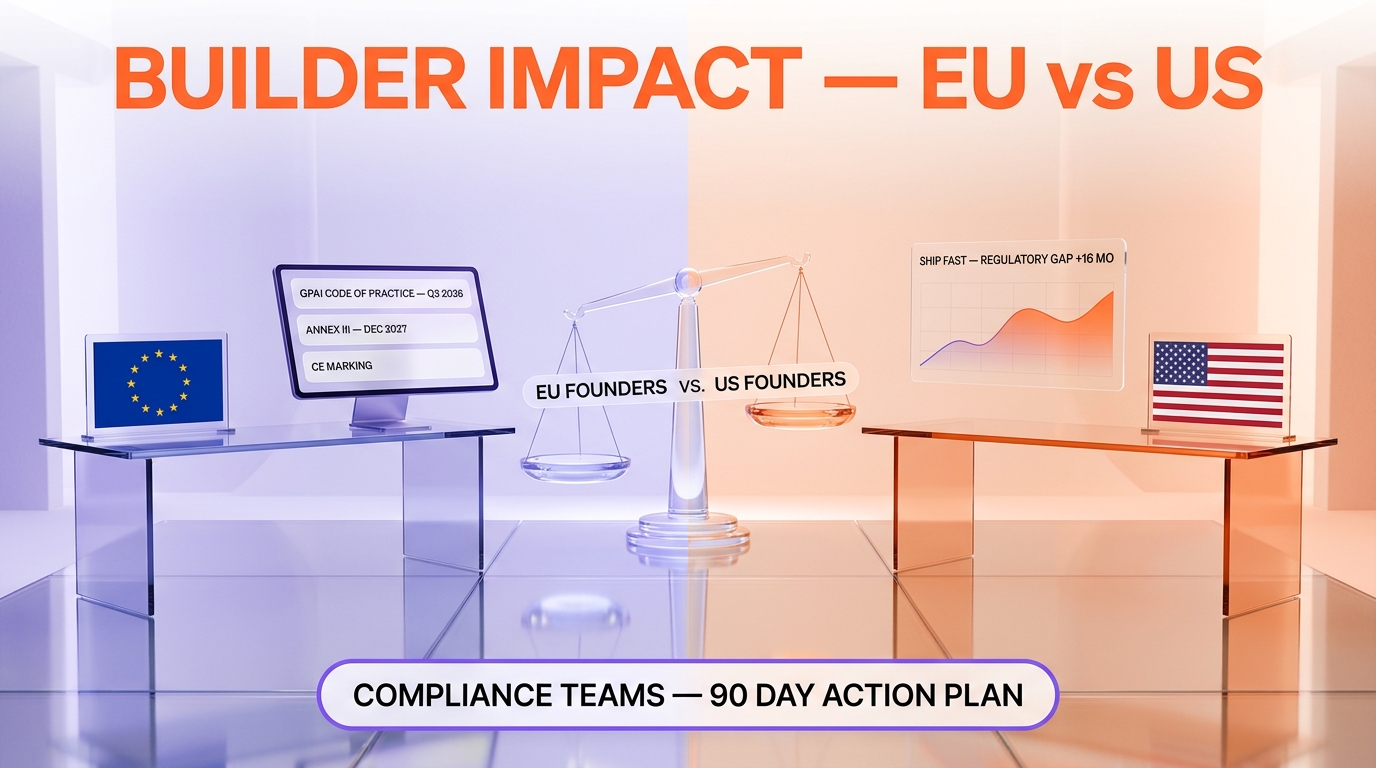

What this means for builders shipping in Europe

The honest answer for most teams: less work in 2026, more uncertainty in 2027. Specifically:

If you build factory-floor or machinery-embedded AI, you just got a meaningful exemption. Your product is now governed by the Machinery Regulation framework, which most industrial OEMs already navigate. Conformity assessment under the Machinery Reg is well-trodden ground; conformity under Annex III of the AI Act would have been new territory. Take the win.

If you build hiring, education, credit-scoring, or law-enforcement AI, you got 16 extra months — and 16 extra months of uncertainty. The standards your conformity assessment will hang on are still being drafted at CEN-CENELEC. Use this time to build internal documentation and risk-management processes that will map to whatever the standards say. Do not assume the rollback signals further leniency: the European Parliament negotiated this delay precisely because it wanted the standards to be solid before the rules apply.

If you ship generative AI products, the deal narrows your watermarking window. Three months is short. If you do not have provenance metadata baked into your output pipeline today, that is the highest-priority item on your 2026 roadmap. The C2PA spec is the most credible standard, and major players including Adobe, OpenAI, and Microsoft have implementations in production.

If you build deepfake or face-swap tools, read Article 5 carefully. The 7 May package adds explicit prohibitions on AI-generated sexualized deepfakes of identifiable individuals and AI-generated CSAM. "Identifiable" is the key word: the prohibition does not hinge on the subject's consent or public profile, only on whether the depicted person can be identified. Generic synthetic faces remain legal; named or recognizable faces in a sexual context are now banned outright.
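The triage described in this section can be sketched as a small lookup. This is a rough planning aid under stated assumptions, not legal classification logic: the category labels are copied from the lists in this article, and the `Product` fields, the `triage` function, and the order of the checks are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple


class Regime(Enum):
    MACHINERY_REG = "Machinery Regulation"
    AI_ACT_ANNEX_I = "AI Act, Annex I high-risk"
    AI_ACT_ANNEX_III = "AI Act, Annex III high-risk"
    NOT_HIGH_RISK = "No high-risk deadline in this calendar"


# Deadlines as reported in the article (month and year only).
DEADLINES = {
    Regime.AI_ACT_ANNEX_I: "August 2027",
    Regime.AI_ACT_ANNEX_III: "December 2027",
}

# Illustrative labels taken from the article's own category lists.
ANNEX_III_USE_CASES = {
    "employment", "education", "credit scoring", "critical infrastructure",
    "law enforcement", "migration", "justice", "biometrics",
}
ANNEX_I_PRODUCTS = {"toys", "cars", "lifts", "medical devices"}


@dataclass(frozen=True)
class Product:
    name: str
    use_case: Optional[str] = None
    product_type: Optional[str] = None
    embedded_in_factory_machinery: bool = False


def triage(p: Product) -> Tuple[Regime, Optional[str]]:
    """Return the likely regime and its reported deadline, if any."""
    # Annex I products (e.g. medical devices) stay in the AI Act, so check them first.
    if p.product_type in ANNEX_I_PRODUCTS:
        return Regime.AI_ACT_ANNEX_I, DEADLINES[Regime.AI_ACT_ANNEX_I]
    if p.embedded_in_factory_machinery:
        return Regime.MACHINERY_REG, None
    if p.use_case in ANNEX_III_USE_CASES:
        return Regime.AI_ACT_ANNEX_III, DEADLINES[Regime.AI_ACT_ANNEX_III]
    return Regime.NOT_HIGH_RISK, None


if __name__ == "__main__":
    print(triage(Product("cv-screener", use_case="employment")))
    print(triage(Product("weld-inspector", embedded_in_factory_machinery=True)))
```

A result of `NOT_HIGH_RISK` only means no high-risk deadline applies in this sketch; GPAI, transparency, and Article 5 obligations are outside what it models.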

The global picture: EU is no longer the regulator-in-chief

The political subtext of the 7 May deal is bigger than the technical changes. For three years the EU positioned the AI Act as the global benchmark — the GDPR moment for artificial intelligence. The expectation was that Washington, London, Singapore, and Tokyo would converge on the EU framework simply because it was the most detailed, the earliest, and tied to a 450-million-consumer market.

That expectation has dissolved. The United Kingdom's Data (Use and Access) Act took effect on 5 February 2026 with Article 22C providing a lighter-touch automated-decision-making framework that is explicitly not modeled on the AI Act. The United States chose voluntary pre-deployment evaluations through the Commerce Department's CAISI program (Google, Microsoft, and xAI signed up) rather than legislation. China continues its sectoral approach. None of these jurisdictions is racing to copy the EU model anymore, and the 7 May deal makes the EU model itself look more negotiable than it was on paper.

This is not the end of EU regulatory leadership. It is the end of EU regulatory unilateralism. The Commission still has the most detailed AI rulebook in the world. But "most detailed" no longer translates to "most influential" by default — Brussels now has to convince other capitals that detail is worth the compliance cost, and the 7 May rollback weakens that argument.

What happens next: enforcement, standards, and the 2027 cliff

The next 18 months are going to look quiet on paper and busy in practice. CEN-CENELEC standards work continues, with harmonized standards expected in tranches through 2026 and 2027. The AI Office at the Commission gets strengthened oversight authority under the simplification deal — a small win that will matter when enforcement starts. National competent authorities are being designated across member states, and most of them are still understaffed.

Three things to watch:

- Standards delivery on time. If CEN-CENELEC has not delivered harmonized standards ahead of the December 2027 application date, expect another delay debate in 2027. The Commission has demonstrated it will move dates if standards are not ready.

- Enforcement of GPAI obligations. These have been live since August 2025. As of May 2026, public enforcement actions are rare. The first major case will set the tone for the broader regime.

- The 2027 cliff. When December 2027 arrives, thousands of Annex III systems will need to be classified, risk-assessed, and conformity-evaluated essentially at the same moment. Notified bodies (the third-party assessors) are not yet at scale. This is the operational risk most observers underestimate.
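One way to turn the cliff into a project plan is to fix review checkpoints between now and the deadline. The sketch below lists the first day of each calendar quarter up to December 2027. The function name, the quarterly cadence, and the day-of-month are assumptions chosen for illustration; the article states only the month and year.

```python
from datetime import date
from typing import List


def quarterly_checkpoints(start: date, deadline: date) -> List[date]:
    """First day of each calendar quarter after start's quarter, up to the deadline."""
    checkpoints = []
    year = start.year
    # Jump to the first month of the next quarter (Jan, Apr, Jul, Oct).
    month = ((start.month - 1) // 3 + 1) * 3 + 1
    if month > 12:
        year, month = year + 1, 1
    while date(year, month, 1) <= deadline:
        checkpoints.append(date(year, month, 1))
        month += 3
        if month > 12:
            year, month = year + 1, month - 12
    return checkpoints


if __name__ == "__main__":
    # Placeholder day-of-month for the December 2027 deadline.
    for checkpoint in quarterly_checkpoints(date(2026, 5, 7), date(2027, 12, 1)):
        print(checkpoint.isoformat())
```

Starting from 7 May 2026 this yields six checkpoints, from 1 July 2026 to 1 October 2027, which is not many review cycles for classification, risk assessment, and conformity evaluation.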

Verdict

Our take: the EU blinked, but the building still stands. The 7 May 2026 deal is a meaningful retreat on industrial scope and high-risk timing, paired with two real new prohibitions on the worst synthetic-media abuses. Civil society lost on enforcement timing; industry won on scope and calendar; the deepfake/CSAM ban gives political cover to both sides. For builders, the practical message is straightforward: keep your GPAI compliance current, accelerate watermarking work because the grace period shrank, and use the 16-month Annex III delay to build documentation infrastructure rather than assuming the obligations will keep slipping.

Skip the panic if: you do not ship Annex III systems, your factory-floor AI is now under Machinery Reg scope, or you are already C2PA-compliant on output provenance.

Watch closely if: you ship hiring, education, credit-scoring, biometric, or law-enforcement AI in the EU. Your December 2027 cliff is now a project plan with a hard deadline, not an aspirational target. For deeper context on how AI regulation interacts with model release schedules, see our analysis of Claude Opus 4.7's enterprise positioning and our running coverage of AI policy and tooling.

Frequently Asked Questions

What did the EU agree on 7 May 2026?

The Council of the EU and the European Parliament reached a political agreement to simplify the AI Act. The deal delays high-risk AI obligations under Annex III from August 2026 to December 2027 — a 16-month postponement. It also reduces the watermarking grace period for generative AI providers from 6 months to 3 months, extends national regulatory sandbox availability to August 2027, and moves most factory-floor and machinery-embedded AI out of the AI Act and under the existing Machinery Regulation. Two new prohibitions are added: AI-generated sexualized deepfakes of identifiable individuals and AI-generated child sexual abuse material (CSAM).

Why was the AI Act delayed?

Two pressures combined. First, the technical standards that high-risk conformity assessments depend on (drafted at CEN-CENELEC) are running behind schedule, and applying obligations without finalized standards would create compliance ambiguity. Second, sustained industrial lobbying, led by Germany on behalf of companies including Siemens and Bosch and supported by France and the Netherlands, argued that AI Act obligations on top of existing Machinery Regulation rules would impose a "double regulatory burden". The United States added pressure by citing the Commerce Department's CAISI program as evidence that voluntary frameworks are sufficient. The Commission framed the delay as "adjusting the timeline" to ensure standards are ready before compliance starts.

Are deepfakes now banned in the EU?

Certain categories of deepfakes are now explicitly prohibited under Article 5 of the AI Act. The 7 May 2026 deal adds two new prohibited practices: AI-generated sexualized content depicting identifiable individuals ("nudification") and AI-generated child sexual abuse material (CSAM). Generic deepfakes (synthetic faces of non-identifiable people, satire of public figures with disclosure) remain legal but are subject to transparency requirements under Article 50. The political driver for the new bans was public outrage over abusive synthetic content generated by Elon Musk's Grok in late 2025 and early 2026.

Does the delay apply to all high-risk AI systems?

No. Only Annex III high-risk systems — those defined by use case, including employment, education, credit scoring, critical infrastructure, law enforcement, migration, and justice — are pushed from August 2026 to December 2027. Annex I high-risk systems, which are AI components of products already covered by EU product safety legislation (toys, cars, lifts, medical devices), keep their original August 2027 deadline. Prohibited practices under Article 5 remain in force from February 2025, and general-purpose AI (GPAI) provider obligations remain in force from August 2025.

What changed for industrial and factory AI?

The deal moves AI embedded in factory machinery and industrial robots out of the AI Act and into the scope of the Machinery Regulation, Regulation (EU) 2023/1230. This was the central ask of German industry, particularly Siemens AG and Robert Bosch GmbH, which argued that applying AI Act conformity assessment on top of Machinery Regulation conformity assessment would be duplicative without improving safety outcomes. Medical devices and other AI-embedded products covered by Annex I product safety legislation remain in the AI Act framework. The carve-out is narrower than industrial associations originally requested, but it removes the largest source of duplicate-compliance complaints.

What does the watermarking change mean for generative AI providers?

Generative AI providers, including foundation model developers and image, audio, and video generation services, must mark AI-generated output in a machine-readable format so that it is detectable as artificially generated under Article 50. The original AI Act gave providers a 6-month grace period after the obligation took effect to implement compliant watermarking and provenance metadata. The 7 May 2026 deal reduces that window to 3 months. In practice, providers shipping in the EU should already have a content-provenance pipeline based on a recognized standard such as C2PA, which Adobe, OpenAI, and Microsoft have deployed in production. Three months is too short to design and ship watermarking from scratch.
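To make "provenance metadata" concrete, here is a deliberately simplified sketch of the idea: a machine-readable record that declares an output as AI-generated and binds it to the exact bytes through a hash. This is not C2PA and would not satisfy it; C2PA uses cryptographically signed manifests embedded in the asset. The schema name, field names, and function names below are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def build_provenance_record(content: bytes, generator: str,
                            created_at: Optional[datetime] = None) -> dict:
    """Build a machine-readable record declaring the content as AI-generated."""
    if created_at is None:
        created_at = datetime.now(timezone.utc)
    return {
        "schema": "example-provenance/0.1",  # hypothetical, not a C2PA schema
        "ai_generated": True,
        "generator": generator,
        "created_at": created_at.isoformat(),
        # The hash ties the record to these exact bytes.
        "sha256": hashlib.sha256(content).hexdigest(),
    }


def verify_provenance_record(content: bytes, record: dict) -> bool:
    """Check that the record's hash matches the content it claims to describe."""
    return record.get("sha256") == hashlib.sha256(content).hexdigest()


if __name__ == "__main__":
    image_bytes = b"placeholder bytes standing in for a generated image"
    record = build_provenance_record(image_bytes, generator="demo-model")
    print(json.dumps(record, indent=2))
    print(verify_provenance_record(image_bytes, record))
```

An unsigned sidecar record like this is trivial to strip or forge, which is the reason production pipelines lean on a signed, embedded standard rather than a home-grown format.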

How does the EU AI Act compare to US and UK approaches now?

After 7 May 2026, the gap narrows. The United States operates the Commerce Department's CAISI program, under which Google, Microsoft, and xAI agreed to pre-deployment evaluations: a voluntary framework with no statutory penalties. The United Kingdom's Data (Use and Access) Act took effect on 5 February 2026 with Article 22C providing a lighter-touch automated-decision-making framework explicitly not modeled on the AI Act. China continues its sectoral approach (deep-synthesis rules, generative AI rules) without an omnibus law. The EU still has the most prescriptive framework globally, but the 7 May rollback weakens the argument that other jurisdictions should converge on the EU model.

What should AI startups do right now?

Three priorities for any team shipping AI in the EU through 2027. First, confirm whether your product falls under Annex III (use-case-driven high-risk) or Annex I (product-safety-driven high-risk) — your deadline is December 2027 in the first case, August 2027 in the second. Second, accelerate watermarking and provenance work because the grace period shrank from 6 months to 3 months — implement C2PA or an equivalent standard now. Third, start internal documentation and risk-management processes that will map to forthcoming CEN-CENELEC harmonized standards, even if those standards are not final. The 16-month delay is a planning window, not a holiday.

Who lobbied for the AI Act rollback?

The campaign was led by Germany, with active support from France and the Netherlands. Industrial heavyweights including Siemens AG, Robert Bosch GmbH, and Schneider Electric pushed the "double regulatory burden" argument through national governments and the European Round Table of Industrialists. The German Federal Ministry for Economic Affairs carried the position into Council negotiations. The United States applied parallel pressure, citing the Commerce Department's CAISI voluntary framework with Google, Microsoft, and xAI as evidence that prescriptive regulation is unnecessary. Civil society groups including European Digital Rights (EDRi) and AlgorithmWatch opposed the rollback, arguing the original timelines were necessary to address documented near-term harms, but they did not prevail.

Could the AI Act be delayed again after 2027?

Yes, structurally. The Commission has now demonstrated twice that it will adjust timelines if technical standards are not ready or if industrial pressure is sustained. If CEN-CENELEC standards for Annex III systems are not finalized by mid-2027, expect another delay debate in late 2027. The harder constraint is political: a second delay would visibly damage the EU's credibility as a regulatory leader, and the European Parliament has signaled it will resist further postponements. Builders should plan for December 2027 as a hard deadline while monitoring standards-track progress quarterly.

Where can I read the official text of the 7 May 2026 deal?

The political agreement was announced by the Council of the EU and the European Parliament on 7 May 2026. The Commission's Digital Strategy portal at digital-strategy.ec.europa.eu hosts the official summary. The full legislative text amending Regulation (EU) 2024/1689 (the AI Act) is going through final co-legislator procedure, with formal adoption following the procedural calendar. Until the consolidated text is published in the Official Journal, the safest reference is the Commission's announcement plus the original AI Act with the amendments highlighted in tracked-change form.