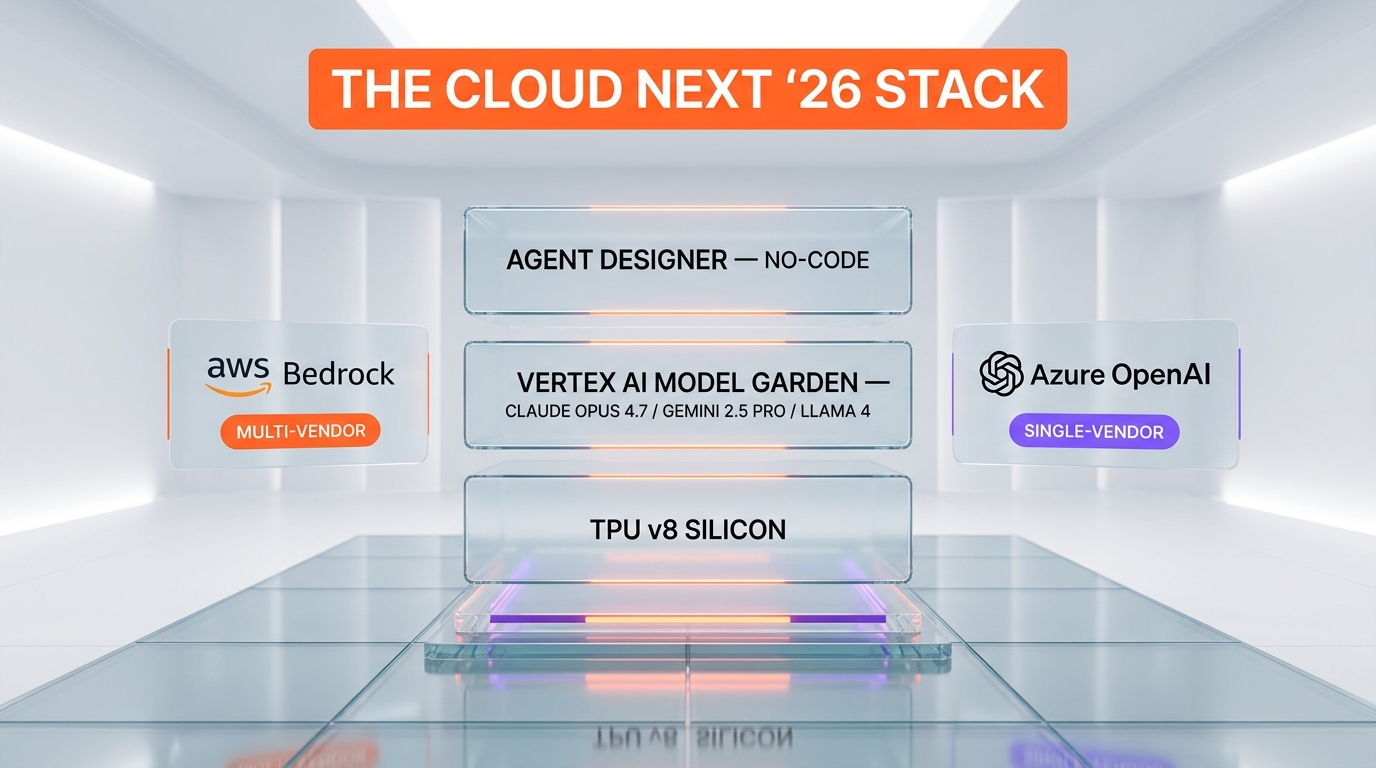

Google's official Cloud Next '26 recap, published May 7, 2026, covers Gemini 3.1 Pro, Lyria 3, TPU v8, and a no-code Agent Designer. The line that actually moves the cloud AI race: Claude Opus 4.7 now runs natively on Vertex AI under what Google calls "open choice."

Read the official Cloud Next '26 recap on the Google blog, count how many model launches Google announced, and you arrive at a number around fifteen. Read the partner section, find one quiet line about Anthropic Claude on Vertex AI, and you arrive at the announcement that actually rewrites the cloud playbook for 2026.

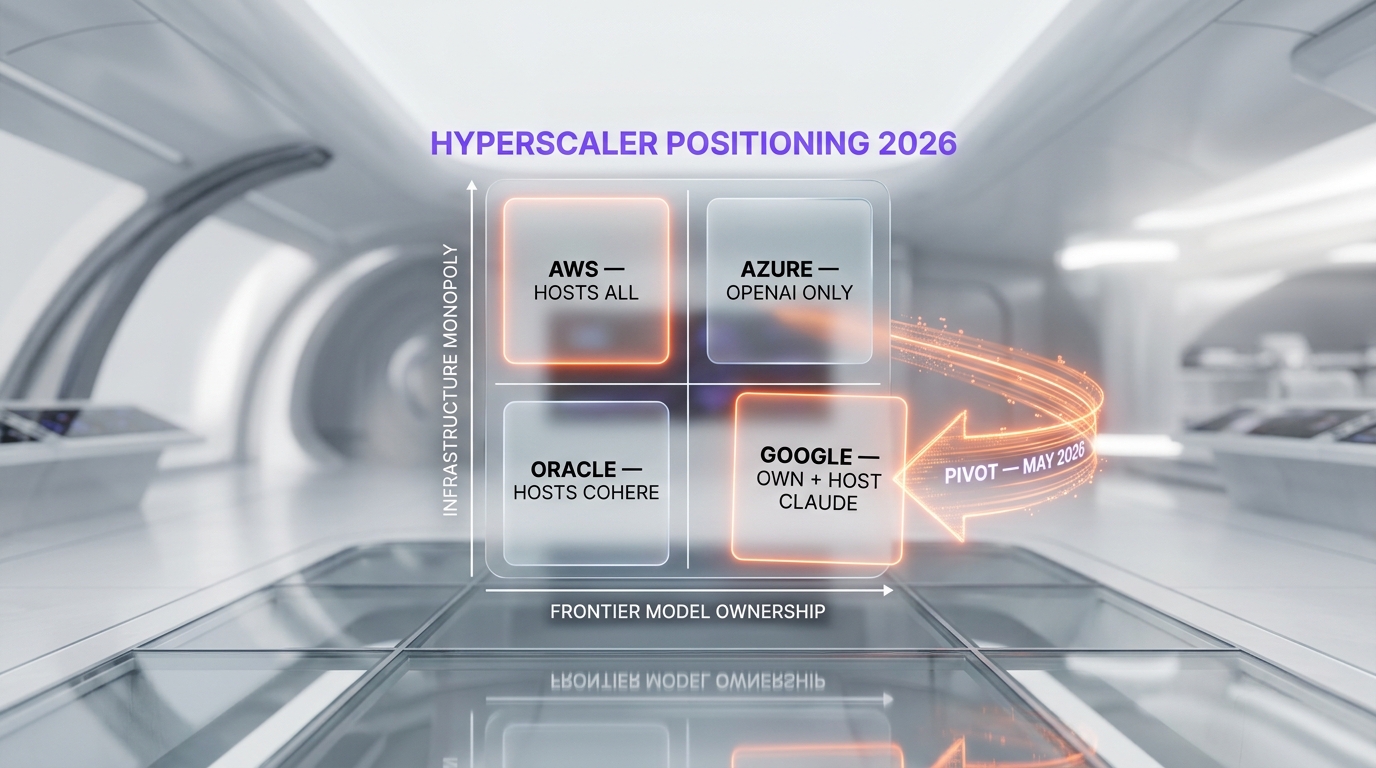

For the last three years the hyperscaler narrative has been simple. AWS sold Bedrock as the neutral marketplace where every frontier model lived next to every other frontier model. Microsoft Azure sold an OpenAI-only relationship that paid for itself the second ChatGPT crossed 200 million weekly users. Google Cloud sold Gemini, the model Google built itself, and pitched verticality as a feature. Cloud Next '26 is the keynote where Google stopped pretending that strategy still works.

What Google actually shipped at Cloud Next '26

The model lineup is real and the numbers are concrete. Gemini 3.1 Pro is positioned as the company's most capable model for complex agentic workflows, picking up where Gemini 3 left off in late 2025. Gemini 3.1 Flash Image, marketed externally as Nano Banana 2, is the same image model we run for hero generation across our daily content pipeline at ThePlanetTools.ai. At 1376x768 it currently offers the best price-per-image in the high-realism category we have benchmarked. Lyria 3 is Google's first professional-grade audio model with multi-speaker dialog and broadcast-quality music, going head-to-head with ElevenLabs and Suno on different fronts.

The infrastructure half is heavier. TPU v8 ships in two SKUs — TPU 8t for training, TPU 8i for inference, with Google claiming "80% better performance per dollar" on the inference variant versus the previous generation. The whole stack now sits inside the rebranded AI Hypercomputer, wired together by a custom interconnect called Virgo Network and a Lustre-based storage tier that pushes 10 terabytes per second. NVIDIA Vera Rubin NVL72 racks are also available for customers who prefer GPUs, and Google Cloud Axion processors handle the cost-sensitive Arm workloads.

The agent platform is where the strategy gets aggressive. Agent Designer is a no-code, trigger-based interface aimed squarely at non-engineering users. Agent Studio covers low-code with natural language. The new Agentic Data Cloud wraps a Knowledge Catalog that auto-tags entities and a Cross-Cloud Lakehouse standardized on Apache Iceberg so customers can query AWS S3 without leaving Vertex.

Cloud Next '26 headline announcements

| Layer | Announcement | What it competes with |
|---|---|---|
| Models | Gemini 3.1 Pro | Claude Opus 4.7, GPT-5 |
| Models | Gemini 3.1 Flash Image (Nano Banana 2) | FLUX 1.1 Pro, GPT Image 1 |
| Models | Lyria 3 audio | ElevenLabs v3, Suno v5 |
| Hosted partner | Claude Opus 4.7 on Vertex AI | AWS Bedrock Claude, Azure OpenAI |
| Silicon | TPU 8t and TPU 8i | NVIDIA H200 / B200, AWS Trainium 3 |
| Network | Virgo Network interconnect | NVIDIA NVLink Switch, AWS EFA |
| Agent platform | Agent Designer (no-code) | Microsoft Copilot Studio, n8n |
| Agent platform | Gemini Enterprise Agent Platform | AWS Bedrock Agents, Azure AI Foundry |
| Data | Cross-Cloud Lakehouse on Iceberg | Snowflake, Databricks |
| Security | Wiz integration plus three SOC agents | CrowdStrike, Microsoft Defender XDR |

The Claude on Vertex pivot — and why it is the actual headline

Tucked between TPU benchmarks and Workspace demos, Google announced that Claude Opus 4.7, Anthropic's flagship model launched April 16, 2026, is now available natively on Vertex AI. Not via a thin proxy. Not as a "preview." Native, with the same per-region availability, the same VPC-SC controls, and the same enterprise-grade SLAs Google offers on Gemini itself. The framing in the Cloud Next blog is one short phrase — "open choice" — and that phrase is doing an enormous amount of strategic work.

Because here is what "open choice" means in plain English: Google has accepted that frontier capability cannot be guaranteed by a single research lab anymore. From Anthropic's launch post for Opus 4.7 we know the model holds the top SWE-bench Verified score at 78.4% and runs autonomously for hours on agentic coding tasks. Google's internal Gemini 3.1 Pro is competitive on most benchmarks but does not lead on long-horizon coding the way Opus 4.7 does. A bank, a law firm, or a quant fund evaluating Vertex in 2026 will ask: "What happens when the customer wants Claude for code review and Gemini for retrieval?" Until today, the answer was "you go to AWS Bedrock or run multi-cloud." After today, the answer is "stay on Vertex."

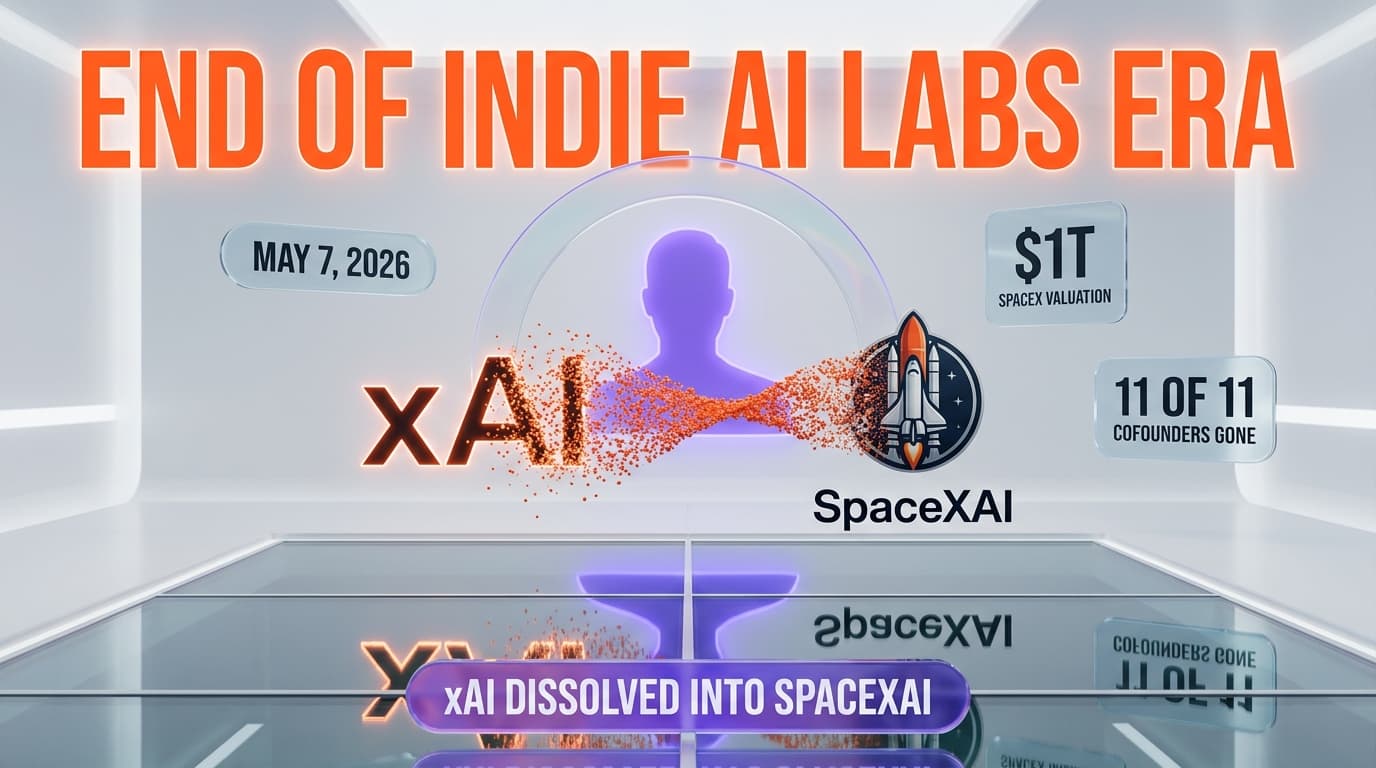

This is the second public signal in eighteen months that the cloud AI race has moved from a "best model" race to a "best optionality" race. The first was AWS doubling Bedrock's third-party model catalog. The second is Google capitulating on its own platform. Microsoft is now the single hyperscaler still betting that an exclusive frontier relationship will be enough — and Azure's OpenAI-only posture is starting to look more like a constraint than a moat.

What this means for Anthropic

For Anthropic the math is simple and brutal. Claude has been available on AWS Bedrock since 2024. Now it ships natively on Vertex AI as well. Two of the three Western hyperscalers carry Claude Opus 4.7 as a marquee third-party model with full enterprise plumbing. The only remaining hyperscaler that does not is Microsoft Azure, and Azure has been losing ground in coding-heavy enterprise pilots since GitHub Copilot's switch to Claude Sonnet as the default model option in late 2025.

Anthropic's distribution problem, which seemed structural in 2023, is now solved at the cloud-vendor layer. The remaining battleground is direct API and the consumer Claude.ai surface. Claude Opus 4.7 at the API level still costs $15 per million input tokens and $75 per million output tokens. On Vertex, that published list price is the base, with Google's standard infrastructure markup on top. Customers gain VPC integration, regional residency, and Workspace plumbing. Anthropic gains Google's enterprise sales motion. The trade is so favorable for both sides that it is surprising it took until May 2026 to land in this form.

Agent Designer and the no-code bet

If Claude on Vertex is the strategic story, Agent Designer is the product story Google wants journalists to write. Trigger-based, no-code, drag-and-drop — the pitch is "anyone in your finance team can ship a long-running agent that watches an inbox and updates a spreadsheet." The competitive frame is Microsoft Copilot Studio and n8n, with a sprinkle of Zapier for branding posture.

The execution risk is real. No-code agent builders have a brutal track record — Salesforce Agentforce, Adept, and Builder.ai all attempted versions of this and hit the same wall: trigger-based workflows fall over when the underlying model has any non-determinism, and frontier LLMs have a lot of non-determinism. Google's bet is that the combination of Gemini 3.1 Pro's tool-use accuracy plus deterministic agent sandboxes can break that pattern. A six-month lookback on early-access customers is the only way to know.

The customer logos Google paraded onstage are unusually heavy for a Cloud Next: The Home Depot demoed a phone and in-store assistant, Papa John's showed an ordering agent that remembers the customer's "usual" order, Mars and Citadel Securities appeared together for quantitative research workflows, and Unilever claimed organization-wide agent deployment touching 3.7 billion consumers. Whether those deployments hold in production at twelve months is the only metric that matters. Cloud Next '24 had similarly heavy logos, and several of those customers quietly walked back their commitments during 2025.

TPU v8 versus the NVIDIA roadmap

The 80% performance-per-dollar claim on TPU 8i is the kind of number that requires fine print and Google did not publish much. The internal benchmark almost certainly uses Google's own Gemini 3.1 Flash inference workload as the reference, which favors TPU's matrix-multiply geometry. NVIDIA's Blackwell B200 platform still leads on workloads where FP8 sparsity matters and on any model that was originally trained on CUDA. Translation: TPU v8 is going to win the Vertex captive-customer benchmark. NVIDIA still wins the merchant-silicon benchmark.
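As a rough sanity check on the headline number: if "80% better performance per dollar" means 1.8x the work for the same spend on an identical workload (an assumption, since Google's fine print may define it differently), the implied saving per unit of work is about 44%, not 80%. The helper name `cost_reduction` is illustrative only.

```python
def cost_reduction(perf_per_dollar_gain: float) -> float:
    """Fractional drop in cost per unit of work for a perf-per-dollar gain.

    Assumes the gain is measured on the same workload, so cost per unit
    of work scales as 1 / (1 + gain).
    """
    if perf_per_dollar_gain <= -1.0:
        raise ValueError("gain must be greater than -1.0")
    return 1.0 - 1.0 / (1.0 + perf_per_dollar_gain)


# 0.80 is the figure Google quotes for TPU 8i versus the previous generation.
saving = cost_reduction(0.80)
print(f"Implied cost reduction per unit of work: {saving:.1%}")  # 44.4%
```

The same arithmetic explains why the reference workload matters: a gain measured on a workload that favors TPU need not carry over to a customer's own model.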

What changed materially is the AI Hypercomputer wrap. Google has stopped selling TPU as a chip and started selling it as a system that includes the Virgo Network interconnect, Managed Lustre storage at 10 terabytes per second, and a software stack that abstracts away the TPU-versus-GPU choice for most customers. AWS made the same pivot two years earlier with Trainium plus EFA plus FSx for Lustre. Microsoft has not. The strategic implication is that hyperscaler differentiation in 2026 is moving from individual chip benchmarks to full-stack system economics — and Google now has a defensible answer where it previously had only a roadmap.

The Wiz acquisition context

The Wiz acquisition closed earlier in 2026 and Cloud Next '26 was the first major venue where Google integrated Wiz formally into the security narrative. The pitch is now "Google's threat intelligence plus Wiz's cloud security platform plus three new agentic SOC agents (Threat Hunting, Detection Engineering, Third-Party Context)" packaged as a unified offering. Wiz expanded its support to Databricks, AI studios, and other multicloud platforms — a deliberate signal that Google is willing to secure workloads running on competitor clouds in exchange for owning the security control plane.

This matters for the Claude-on-Vertex story too. A bank running Claude on AWS Bedrock previously had to stitch together CrowdStrike or Microsoft Defender for the security overlay. With Wiz now native on Vertex and supporting cross-cloud, the same bank can run Claude on Vertex, secure it with Wiz, and have Wiz also watch its leftover AWS workloads. That is a security-ops consolidation argument Microsoft and AWS will struggle to match in the next twelve months.

What we watch next

Three signals will tell us whether the "open choice" framing was a one-off marketing line or a durable strategic shift. First, whether Google adds a second frontier non-Gemini model, with xAI Grok or Mistral Large as the obvious candidates. Second, whether the Vertex API surface gives Claude full feature parity with Gemini, including the new Agent Designer triggers and Workspace integrations. Third, whether Anthropic's next release ships day-zero on Vertex or whether there is a delay window that signals AWS still has preferential access.

For the cloud AI race specifically, Cloud Next '26 is the moment Google admitted publicly that Gemini alone is not enough. For Anthropic, it is the moment the company became fully cloud-distribution-independent. For Microsoft, it is the moment the OpenAI-exclusive moat went from "an asset" to "a question." And for customers, it is the first Cloud Next where the right answer to "which hyperscaler should we standardize on?" stopped being a function of which model the hyperscaler builds — and started being a function of which models the hyperscaler hosts.

Frequently Asked Questions

What was announced at Google Cloud Next '26?

Google Cloud Next '26 introduced Gemini 3.1 Pro and Gemini 3.1 Flash Image (also called Nano Banana 2), the Lyria 3 audio model, TPU v8 in training (8t) and inference (8i) variants, the AI Hypercomputer system, the Virgo Network interconnect, Agent Designer (no-code) and Agent Studio (low-code), the Agentic Data Cloud with Apache Iceberg-based Cross-Cloud Lakehouse, and three security agents (Threat Hunting, Detection Engineering, Third-Party Context) integrated with the Wiz acquisition. The headline strategic announcement was native Vertex AI hosting of Anthropic's Claude Opus 4.7.

Why does hosting Claude on Vertex AI matter?

Until Cloud Next '26, AWS was the only hyperscaler hosting Claude natively, through Bedrock. Google's move puts Claude Opus 4.7 natively on Vertex with full VPC-SC controls and enterprise SLAs, ending Vertex's Gemini-only positioning. Strategically it signals Google has accepted that frontier capability is no longer guaranteed by a single research lab, and customers who previously had to multi-cloud to access Claude can now consolidate on Google Cloud. It also leaves Microsoft Azure as the only major hyperscaler still betting on a single-vendor (OpenAI) frontier relationship.

What is Gemini 3.1 Flash Image versus Nano Banana 2?

They are the same model. Gemini 3.1 Flash Image is the formal Vertex AI product name and Nano Banana 2 is the consumer and external marketing name Google uses on AI Studio and Google Labs. The model handles photorealistic image generation, character consistency, and editing at resolutions up to 1376x768 in the version available via Leonardo.ai's API, which our team uses daily for content hero images. It competes directly with Black Forest Labs FLUX 1.1 Pro and OpenAI GPT Image 1.

How does TPU v8 compare to NVIDIA Blackwell?

Google claims TPU 8i delivers "80% better performance per dollar" on inference versus the previous TPU generation, but it published little fine print, and the comparison almost certainly uses Gemini 3.1 Flash as the reference workload, which favors TPU geometry. NVIDIA Blackwell B200 still leads on workloads optimized for FP8 sparsity and on any model originally trained on CUDA. The realistic read is that TPU v8 wins the Vertex captive-customer benchmark while NVIDIA wins the merchant-silicon benchmark, with the AI Hypercomputer system economics being Google's actual competitive advantage rather than raw chip performance.

What is Agent Designer and who is it for?

Agent Designer is Google's new no-code, trigger-based interface for building long-running AI agents. It targets non-engineering users in functions like finance, operations, and customer support — anyone who could build a Zapier workflow but not a Python script. The closest competitors are Microsoft Copilot Studio and n8n. The execution risk is the same one that sank previous no-code agent platforms: trigger-based workflows fall over when the underlying model has non-determinism, and Google is betting Gemini 3.1 Pro's tool-use accuracy plus deterministic sandboxes can break that pattern.

When was Google Cloud Next '26 held?

Google published the official Cloud Next '26 recap on the Google blog on May 7, 2026. The conference itself ran in the days immediately preceding the recap publication, following the standard Cloud Next format Google has used since 2017. The recap covers all major announcements across models, infrastructure, agent tooling, security, and customer implementations from companies including The Home Depot, Papa John's, Mars, Citadel Securities, and Unilever.

Does Claude Opus 4.7 cost more on Vertex AI than on the Anthropic API?

Anthropic publishes Claude Opus 4.7 pricing at $15 per million input tokens and $75 per million output tokens on its direct API. Vertex AI applies Google's standard infrastructure markup on top of that base pricing, similar to how AWS Bedrock prices Claude. Customers pay slightly more on Vertex than on the Anthropic API but gain VPC-SC integration, regional data residency, unified billing with other Google Cloud services, and enterprise SLAs. For most enterprise buyers the markup is a rounding error compared to compliance and procurement savings.
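To put the list prices in concrete terms, here is a minimal cost sketch. The two per-token rates come from the pricing quoted above; the workload size and the 5% markup are placeholders chosen for illustration, not published Google figures, and `monthly_cost` is a hypothetical helper.

```python
# List prices quoted in this article (USD per million tokens).
INPUT_USD_PER_MTOK = 15.0
OUTPUT_USD_PER_MTOK = 75.0


def monthly_cost(input_tokens: int, output_tokens: int, markup: float = 0.0) -> float:
    """Return the USD cost of a token volume, with an optional fractional markup."""
    base = (
        (input_tokens / 1_000_000) * INPUT_USD_PER_MTOK
        + (output_tokens / 1_000_000) * OUTPUT_USD_PER_MTOK
    )
    return base * (1.0 + markup)


# Hypothetical workload: 200M input tokens and 40M output tokens per month.
direct = monthly_cost(200_000_000, 40_000_000)
# ASSUMPTION: 5% is a placeholder markup, not a number Google has published.
with_markup = monthly_cost(200_000_000, 40_000_000, markup=0.05)

print(f"Direct API:              ${direct:,.2f}")
print(f"With placeholder markup: ${with_markup:,.2f}")
```

Under these assumptions the workload costs $6,000 a month at list price and $6,300 with the placeholder markup. Swap in a quoted rate to see whether the difference really is a rounding error for a given budget.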

What does "open choice" mean in Google's Cloud Next '26 messaging?

"Open choice" is the marketing phrase Google uses to describe the new strategy of hosting third-party frontier models alongside its own Gemini family on Vertex AI. The phrase is doing strategic work by signaling to enterprise buyers that Google will not lock them into Gemini if Claude or another model is a better fit for a specific workload. It mirrors AWS Bedrock's positioning since 2023 and represents a meaningful pivot from Google's earlier "Gemini-first" Vertex posture in 2024 and 2025.

Which Cloud Next '26 customer demos are most credible?

The Home Depot's phone and in-store assistant and Papa John's ordering agent with persistent customer memory are the most concrete demos because both companies have publicly disclosed multi-year Vertex AI commitments and on-stage demos showed real customer interactions rather than mocked flows. The Mars and Citadel Securities quantitative research demo and Unilever's claim of agent deployment serving 3.7 billion consumers organization-wide are more aspirational and worth revisiting at twelve months to see whether the deployments survived production scaling, the same way several Cloud Next '24 logo customers later quietly walked back their commitments.

How does this affect Microsoft Azure and the OpenAI partnership?

Cloud Next '26 leaves Microsoft Azure as the only one of the three Western hyperscalers still betting on a single-vendor frontier relationship through its OpenAI partnership. AWS Bedrock has hosted Claude since 2024 and added Mistral, Cohere, and Meta Llama. Google now hosts Claude Opus 4.7 natively on Vertex. The Azure OpenAI exclusive starts to look more like a constraint than a moat, especially as enterprise buyers increasingly want optionality across model families. Whether Microsoft pivots to host Claude or Gemini on Azure within the next twelve months is one of the most consequential open questions in cloud AI.

What is the Cross-Cloud Lakehouse and why does it use Apache Iceberg?

The Cross-Cloud Lakehouse is Google's new data layer that lets Vertex AI query data sitting in AWS S3, Azure Blob Storage, or other clouds without copying it into Google Cloud Storage first. It standardizes on Apache Iceberg as the open table format because Iceberg has become the de facto industry standard for cross-engine data lake interoperability, with native support in Snowflake, Databricks, AWS Athena, and now Vertex. The strategic effect is that customers can keep historical data on AWS while running new AI workloads on Vertex, lowering the migration friction that previously protected AWS Glue and AWS Athena revenue.

Should our company switch from AWS Bedrock to Vertex AI for Claude?

Probably not yet. The default recommendation for most enterprises in May 2026 is to keep Claude workloads where they currently run and use the new Vertex AI availability as a negotiation argument rather than a migration trigger. AWS Bedrock has eighteen additional months of production maturity with Claude, including features like Bedrock Knowledge Bases and Bedrock Agents that have no exact Vertex equivalent yet. The strongest case for standardizing on Google Cloud is a net-new deployment where both Gemini and Claude fit the workload mix, particularly once Workspace integration and the Wiz security overlay are factored in.