Sam Altman announced on X on April 30, 2026 that OpenAI will roll out GPT-5.5-Cyber only to critical cyber defenders through the company's Trusted Access for Cyber program, with access gated behind a vetting process rather than offered through general availability. Weeks earlier, Altman publicly characterized Anthropic's similarly restricted release of Mythos as fear-based marketing. OpenAI's own deployment safety documentation now classifies GPT-5.5 as High capability in cybersecurity under its Preparedness Framework, citing penetration testing, vulnerability research, and malware analysis as dual-use workflows that need controlled access. The U-turn is the news. The technical reason is that the model crossed a capability threshold where the same risk calculus Anthropic ran weeks earlier now applies to OpenAI.

The announcement — April 30, Sam Altman's X post

Sam Altman announced the rollout on his X account on April 30, 2026. The substance: OpenAI will begin shipping GPT-5.5-Cyber to critical cyber defenders in the coming days, gated through the Trusted Access for Cyber program. Access requires an application that OpenAI vets. The model is fine-tuned for defensive cybersecurity workflows (penetration testing, vulnerability identification and exploitation, and malware reverse-engineering) and is positioned as a more permissive variant of the GPT-5.5 family for cyber defense use cases.

| Variable | Detail |
|---|---|
| Announcement date | April 30, 2026 (X post) |
| Source of announcement | Sam Altman, OpenAI co-founder and chief executive |
| Rollout window | "In the coming days" from April 30 |
| Access program | Trusted Access for Cyber (TAC) |
| Eligibility | Critical cyber defenders with legitimate cybersecurity credentials |
| Capabilities | Penetration testing, vulnerability identification and exploitation, malware reverse-engineering |
| Preparedness Framework classification | High capability in cybersecurity, below Critical |
| Critical threshold definition | Functional zero-day exploits in many hardened real-world systems without human intervention |
| Application route | OpenAI form, with submitted credentials and intended-use information |
| Government coordination | OpenAI consulting U.S. government to identify additional qualified users |

OpenAI's deployment safety document for GPT-5.5 supports the announcement with formal language. The model is classified as High capability in cybersecurity under the company's Preparedness Framework, but explicitly below the Critical threshold — defined as the ability to identify and develop functional zero-day exploits across many hardened real-world critical systems without human intervention. The Trusted Access for Cyber program controls the high-risk workflows specifically — "scaled agentic vulnerability research and exploit-chaining techniques" — through gated access rather than general availability.

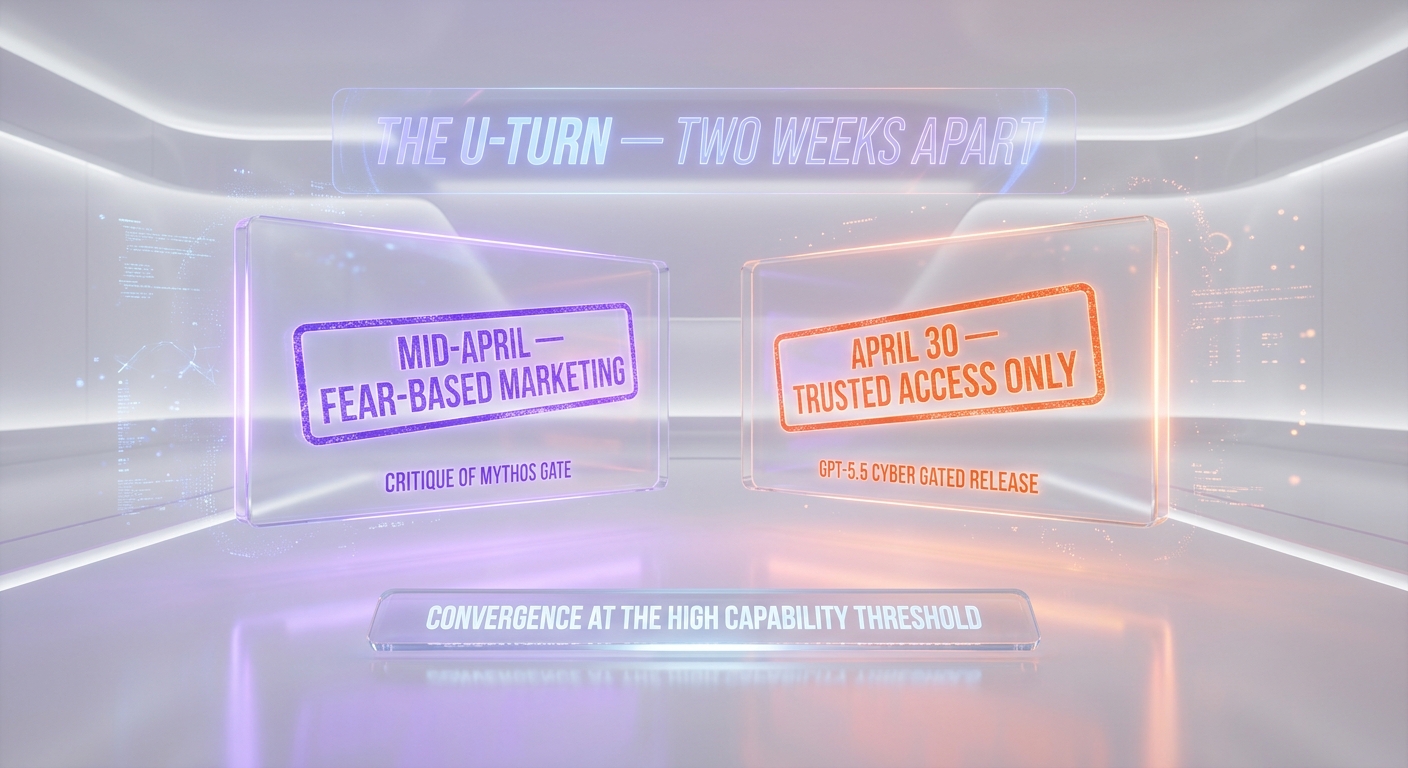

The U-turn — what Altman said about Mythos in mid-April

The narrative friction here is direct. In mid-April 2026, when Anthropic announced that its Mythos preview model would be released on a gated, application-only basis to a small number of vetted partners, Altman publicly characterized the approach as fear-based marketing. Our prior coverage of the Altman criticism walked through the language — the implication that gating was a marketing tactic to manufacture scarcity rather than a substantive safety decision.

On April 30, OpenAI announced an effectively identical structural decision for GPT-5.5-Cyber. The capability is gated. Access is application-only. Eligibility is restricted to vetted cyber defenders. Government coordination is part of the access decision. Every operational element of the Anthropic Mythos rollout that Altman criticized is present in OpenAI's own April 30 announcement. The substance is the same. The branding is different.

Two readings are available. The first is hypocrisy — OpenAI publicly criticized Anthropic for a deployment posture it then adopted itself, which is the framing TechCrunch's headline reached for. The second is convergence — the same capability threshold that triggered Anthropic's Mythos gate has now triggered OpenAI's own gate, and the public criticism in mid-April was a marketing decision that did not survive contact with the company's own preparedness review. Both readings can be true at once. The technical reason behind the U-turn is the more substantive story.

Why restrict — the technical reason behind the gate

OpenAI's deployment safety document for GPT-5.5 is unusually direct about the reasoning. The model is classified as High capability in cybersecurity under the Preparedness Framework. The framework defines the High threshold to include capabilities that meaningfully accelerate threat actor workflows — vulnerability discovery, exploit chaining, scaled agentic security research — even if they fall short of the Critical threshold of fully autonomous zero-day generation against hardened systems.

The capability profile that triggers the High classification also triggers the access gate. OpenAI's framing is that workflows like penetration testing, malware analysis, and vulnerability research are critical for defenders but can also enable harm if misused by threat actors. The Trusted Access for Cyber program is the mechanism for resolving the dual-use tension: provide capability to vetted defenders, withhold it from anyone who has not been vetted, and coordinate with the U.S. government on qualified-user identification.

This is identical in substance to Anthropic's reasoning for Mythos. Anthropic's preview model crossed capability thresholds at which the company's Responsible Scaling Policy requires deployment under controlled access. The Mythos gate was the operationalization of that policy. OpenAI's GPT-5.5-Cyber gate is the operationalization of an analogous Preparedness Framework decision. Both companies have effectively adopted the same posture in response to the same underlying capability inflection.

Trusted Access for Cyber — what we know about the program

The Trusted Access for Cyber program is the gating mechanism. Based on OpenAI's deployment safety documentation and the April 30 reporting, here is what is known.

- Application route. Eligibility is established through an OpenAI application form. Applicants submit information about their cybersecurity credentials and their intended use cases. OpenAI vets the submission before granting access.

- Eligibility profile. Access is restricted to applicants with legitimate cybersecurity credentials. Reporting from PYMNTS suggests OpenAI is targeting scaled access to thousands of verified individuals and hundreds of teams responsible for defending critical software, though the precise definition of "critical cyber defender" is not fully published.

- Government coordination. OpenAI is consulting with the U.S. government to identify additional qualified users. The company has held demonstrations with government and national security officials in Washington, D.C. The Trusted Access for Cyber program is consequently both a vendor-controlled access list and a government-coordinated capability allocation.

- Gated workflows. The program controls access to the highest-risk workflows specifically — scaled agentic vulnerability research and exploit-chaining techniques — rather than the entire model. Lower-risk workflows on GPT-5.5 are available through general access channels.
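
The access logic described in the list above can be sketched in a few lines of Python. This is only an illustration of workflow-level gating under stated assumptions: OpenAI has not published how Trusted Access for Cyber is implemented, and every name here (`Workflow`, `Applicant`, `vet`, `can_access`, the `GENERAL_SECURITY_QA` label) is hypothetical.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Workflow(Enum):
    # Hypothetical labels. The two gated entries mirror the workflows the
    # article says the program controls; the first stands in for lower-risk use.
    GENERAL_SECURITY_QA = "general_security_qa"
    AGENTIC_VULN_RESEARCH = "scaled_agentic_vulnerability_research"
    EXPLOIT_CHAINING = "exploit_chaining"


# Assumption: only the highest-risk workflows sit behind the gate.
GATED_WORKFLOWS = {Workflow.AGENTIC_VULN_RESEARCH, Workflow.EXPLOIT_CHAINING}


@dataclass(frozen=True)
class Applicant:
    name: str
    credentials_submitted: bool    # application form: credentials
    intended_use_submitted: bool   # application form: intended use
    vetted: bool = False           # set only by vet()


def vet(applicant: Applicant, reviewer_approves: bool) -> Applicant:
    """Mark the applicant vetted only if the form is complete and review approves."""
    complete = applicant.credentials_submitted and applicant.intended_use_submitted
    return replace(applicant, vetted=complete and reviewer_approves)


def can_access(applicant: Applicant, workflow: Workflow) -> bool:
    """Ungated workflows are open to everyone; gated ones need a vetted applicant."""
    return workflow not in GATED_WORKFLOWS or applicant.vetted


if __name__ == "__main__":
    alice = vet(Applicant("alice", True, True), reviewer_approves=True)
    bob = vet(Applicant("bob", True, False), reviewer_approves=True)  # incomplete form
    print(can_access(alice, Workflow.EXPLOIT_CHAINING))
    print(can_access(bob, Workflow.EXPLOIT_CHAINING))
    print(can_access(bob, Workflow.GENERAL_SECURITY_QA))
```

The design point the sketch tries to capture is that the gate sits on specific workflows, not on the model as a whole, so an unvetted user keeps general access while the highest-risk capabilities stay closed.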

The structure resembles previous controlled deployments of dual-use technologies in the security industry. What is new is the scale — OpenAI is signaling intent to expand the verified population to thousands of individuals and hundreds of teams, with government partnership shaping the scaling pace. That ambition is materially larger than Anthropic's reported Mythos partner count and is the most distinctive operational difference between the two gated releases.

GPT-5.5-Cyber vs Anthropic Mythos — the structural mirror

| Variable | OpenAI GPT-5.5-Cyber | Anthropic Mythos |
|---|---|---|
| Access model | Application-only via Trusted Access for Cyber | Application-only, vetted partners |
| Eligibility framing | Critical cyber defenders with legitimate credentials | Vetted research and enterprise partners |
| Public-policy coordination | Consulting U.S. government for qualified-user identification | Coordinated with relevant policy stakeholders |
| Publicly stated rationale | Preparedness Framework, High capability dual-use | Responsible Scaling Policy, capability inflection |
| Public-criticism context | Altman criticized Mythos gate as fear-based marketing in mid-April | Mythos gate announced first; OpenAI critique followed |

Read across the rows, the two releases are structurally near-identical. Both gate access. Both vet applicants. Both invoke a capability framework as the rationale. Both coordinate with government or policy stakeholders on who gets access. The differences are operational scale, public framing, and the timing of the public critique that aged badly.

The substantive convergence is the analytically interesting part. Two months ago, the dominant industry narrative was that Anthropic's safety-first deployment posture was a competitive disadvantage relative to OpenAI's faster-shipping commercial posture. The Mythos gate was framed by critics as a return to that disadvantage. The OpenAI April 30 announcement is direct evidence that at the High capability threshold, the gate is a structural feature of frontier-model deployment rather than a brand choice. Whichever lab gets there first ships behind a gate. The question is no longer whether to gate but how to scale the gated population responsibly.

The context — GPT-5.4-Cyber and the security-AI race

This is not OpenAI's first restricted cyber-tier model. Our coverage of the GPT-5.4-Cyber launch in mid-April 2026 walked through the original Trusted Access for Cyber rollout: a security-team-focused deployment with similar gating but a smaller capability profile and a narrower target user list. The April 30 announcement is the version-bump update of that posture, scaled to GPT-5.5's higher capability.

The broader context is the security-AI race that has accelerated through 2025 and 2026. Frontier models with native cybersecurity capability — penetration testing, vulnerability discovery, exploit chaining — are valuable to both defenders and attackers. The vendor calculation is that gating access to vetted defenders gives the defender community a meaningful capability advantage before the same capability becomes broadly available through open-weights models or third-party fine-tunes. Mistral's Medium 3.5 release with open weights the same week sharpens that argument: open-weights frontier-tier models will eventually carry similar capabilities, and the runway for vendor-gated cyber access is finite.

What to watch next

- The vetted user count. OpenAI has signaled intent to scale to thousands of verified individuals and hundreds of teams. The actual ramp through Q3 2026 will be the cleanest signal of how the Trusted Access for Cyber program operates in practice.

- Government partnership shape. The U.S. government coordination angle is consequential for international deployment. Expect parallel conversations with allied governments — UK, Canada, Australia, Japan — to determine eligibility for non-U.S. defenders.

- Anthropic's response. Anthropic now has a public datapoint where OpenAI structurally adopted the same gating posture Altman criticized. Watch for messaging from Anthropic that draws the line between the two announcements.

- Open-weights pressure. If open-weights frontier-tier models arrive at similar cyber capability — Mistral, DeepSeek, or others — the gated-access economics shift. Vendor-gated cyber tooling has a finite competitive runway against open alternatives.

Our verdict

The April 30 announcement matters less for what it says about OpenAI's commercial posture and more for what it confirms about frontier-model deployment at the High capability threshold. The same risk calculus that drove Anthropic's Mythos gate now drives OpenAI's GPT-5.5-Cyber gate. Vendor branding can vary. The structural decision does not. At the High threshold, frontier cyber capability ships behind an application form, a vetting process, and government coordination — and the two leading U.S. labs have now publicly committed to that posture.

For OpenAI specifically, the U-turn from public criticism of Anthropic in mid-April to structurally identical deployment on April 30 is a costly piece of brand inconsistency. The substantive defense is that the company's own Preparedness Framework triggered the gate, which is the right outcome for safety. The brand defense will be harder. Expect Altman's mid-April Mythos comments to be referenced in every subsequent OpenAI security communication for the rest of 2026.

For security teams and corporate buyers of frontier cyber tooling, the practical takeaway is that gated access is now the deployment default for both Claude and GPT cyber variants, with application processes, credential checks, and government coordination as the access mechanics. Multi-vendor security AI strategies will increasingly route through Trusted Access for Cyber on the OpenAI side and equivalent partner programs on the Anthropic side. Open-weights alternatives will exist but will lag the vendor-gated tier on capability for the foreseeable future.

For the wider context, see our coverage of GPT-5.4-Cyber's earlier rollout, Altman's mid-April Mythos criticism, Anthropic's Mythos preview rollout, and the broader AI news desk.

Frequently asked questions

What did OpenAI announce on April 30, 2026?

Sam Altman announced on X on April 30, 2026 that GPT-5.5-Cyber will roll out only to critical cyber defenders through the company's Trusted Access for Cyber program, with access gated behind a vetting process rather than offered through general availability. The rollout was scheduled to begin within days of the announcement.

What is GPT-5.5-Cyber capable of?

The model is fine-tuned for defensive cybersecurity workflows including penetration testing, vulnerability identification and exploitation, and malware reverse-engineering. OpenAI classifies the model as High capability in cybersecurity under its Preparedness Framework, explicitly below the Critical threshold of generating functional zero-day exploits in hardened real-world systems without human intervention.

What is the Trusted Access for Cyber program?

Trusted Access for Cyber is OpenAI's gated access program for high-risk cyber capabilities. Applicants submit credentials and intended-use information through an OpenAI form. The company vets applications and grants access to those determined to be legitimate cyber defenders. The program also involves coordination with the U.S. government to identify additional qualified users.

Why did OpenAI restrict access?

OpenAI's deployment safety documentation classifies GPT-5.5 as High capability in cybersecurity under its Preparedness Framework. The framework requires controlled access for capabilities that can meaningfully accelerate threat-actor workflows — vulnerability discovery, exploit chaining, scaled agentic security research — even when they fall short of the Critical threshold. The Trusted Access for Cyber program is the operational mechanism for that control.

How is this a U-turn from OpenAI's earlier position?

In mid-April 2026, Altman publicly characterized Anthropic's gated release of its Mythos preview as fear-based marketing. The April 30 GPT-5.5-Cyber announcement applies a structurally identical gating model — application-only access, credential vetting, government coordination, capability framework rationale. The two postures are operationally near-identical, which makes the mid-April critique difficult to reconcile with the April 30 deployment.

Is OpenAI working with the government?

Yes. OpenAI has stated that it is consulting with the U.S. government to identify additional qualified users for the Trusted Access for Cyber program. The company has held demonstrations with government and national security officials in Washington, D.C. The TAC program is consequently both a vendor-controlled access list and a government-coordinated capability allocation.

How does GPT-5.5-Cyber differ from GPT-5.4-Cyber?

GPT-5.4-Cyber, launched earlier in April 2026, was the original Trusted Access for Cyber deployment, targeting security teams with a smaller capability profile and a narrower target user list. GPT-5.5-Cyber is the version-bump update, with higher capability triggering more rigorous gating under the Preparedness Framework High classification and a stated ambition to scale the vetted user base to thousands of verified individuals and hundreds of teams.

Can I apply for access?

Access is restricted to applicants with legitimate cybersecurity credentials. The application process requires submitting credentials and intended-use information through an OpenAI form. Approval is at OpenAI's discretion and, for some users, may involve coordination with the U.S. government. Casual or hobbyist applications are unlikely to clear the vetting bar. Consult your organization's security and legal teams before applying for any restricted-access frontier capability program.

What does this mean for the broader AI industry?

The two leading U.S. frontier labs — OpenAI and Anthropic — have now both publicly committed to gated, application-only access for their highest-capability cyber-tier models. The convergence confirms that at the High capability threshold, restricted access is a structural feature of frontier-model deployment rather than a competitive choice. Open-weights alternatives such as Mistral's Medium 3.5 may eventually narrow the gap, but vendor-gated cyber capability will lead the frontier tier for the foreseeable future.