HeyGen Avatar V launched on April 8, 2026. It is HeyGen's most advanced AI avatar model and, in our hands-on tests this week, the most realistic digital twin we have ever generated. A single 15-second phone clip is enough input. The model achieves a Face Similarity score of 0.840 (vs Veo 3.1 at 0.714), a lip-sync LSE-C of 8.97, and supports 175+ languages with phoneme-level accuracy. Pricing starts at $29 per month on the Creator plan ($24 per month annual). After three days of testing across product demos, course modules and ad creative, our verdict is short: this is a game-changer for solo creators and lean content teams. The corporate avatar era is over.

What is HeyGen Avatar V

Avatar V is the fifth-generation AI avatar model from HeyGen, the AI video platform founded in 2020. Announced on April 8, 2026, it is positioned as the direct successor to Avatar IV and the most realistic AI avatar ever shipped commercially. The promise is blunt: feed it 15 seconds of phone footage, get back a photorealistic digital twin that talks in any of 175+ languages, holds identity across 10-minute videos, and ships at studio quality.

The four facts that matter, before we get into the nuance:

- Input: a 15-second selfie clip from any phone — no studio, no lighting rig, no crew.

- Identity preservation: 0.840 Face Similarity score on HeyGen's internal benchmark. Veo 3.1 sits at 0.714 on the same metric. Avatar IV had visible identity drift on long clips. Avatar V doesn't.

- Lip-sync: LSE-C of 8.97 (Lip Sync Error Confidence, where higher is better), the highest publicly reported number we have seen for any commercial avatar model.

- Languages: 175+, with phoneme-level lip alignment, not just dubbing-style audio swaps.

If you have only ever used Synthesia's stock corporate avatars or Avatar IV's text-to-speech presenters, Avatar V will not feel like an incremental update. It feels like a different category.

We tested it — our first impressions

We spent three days running Avatar V through the workflows we actually use at ThePlanetTools. We generated 22 videos across four use cases: product demo voice-overs, a 4-minute course module, two short Pinterest pitches, and one ad creative pass. The phone we used to record the seed clip was a stock iPhone 15 in the Bali office under window light.

Setup: 15 seconds of recording and 90 seconds of processing

The flow is the simplest avatar onboarding we have ever timed. Open HeyGen, click Create Avatar V, follow the on-screen prompt to record a 15-second clip — looking straight at camera, speaking naturally. Upload. Wait roughly 90 seconds. Done. The model is ready to drive any script you feed it. There is no calibration session, no consent video, no "face scan from 12 angles." One clip, one minute and a half of processing.

First render — the moment it clicked

The first script we ran through Avatar V was 45 seconds of English product copy. We watched the render, then we re-watched it three times. The micro-expressions are what land first — eyebrow lifts on emphasis, a tiny head tilt on questions, a half-smile on the brand line. None of that was in the input clip. The model is inferring expressive behavior from the script's prosody and from a learned model of how that face moves.

The second render was the one that got Antho to type "c'est une dinguerie" (roughly, "this is next level") in Slack. It was a 4-minute course module, full English script, with the avatar shown at a slightly different angle than the seed clip. With Avatar IV, that exact use case used to break: by minute three the face would subtly shift, the eyes would drift, and the brand consistency would collapse. Avatar V held identity across the full four minutes. The face at second 15 is the face at second 230. That is the headline.

What's new vs Avatar IV — the comparison table

Until April 8, 2026, Avatar IV was the best avatar model on the market. Here is what changed in one generation:

| Capability | Avatar IV | Avatar V |

|---|---|---|

| Input required | 30-second guided recording | 15-second selfie clip |

| Face Similarity (HeyGen benchmark) | ~0.78 | 0.840 |

| Lip-sync LSE-C | ~7.9 | 8.97 |

| Identity drift on 4+ minute clips | Visible | None observed |

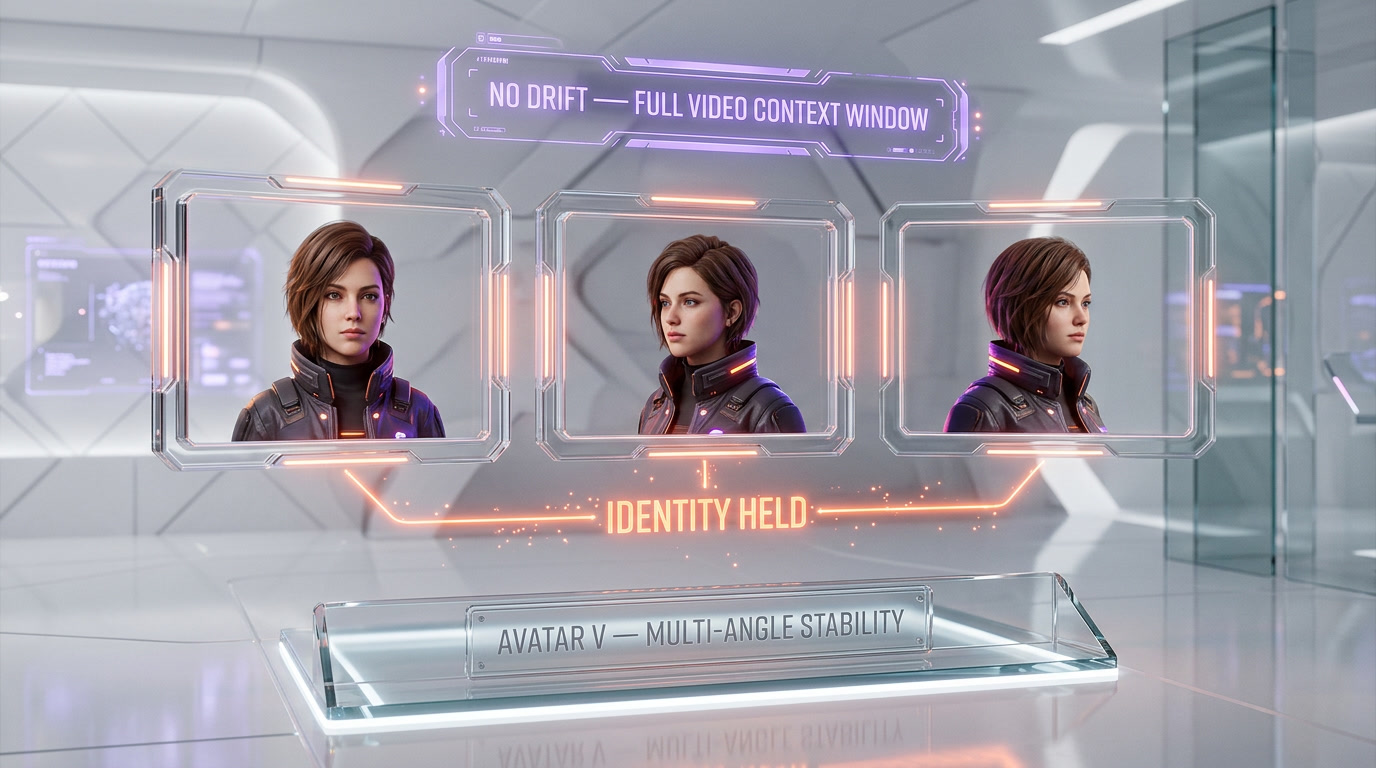

| Multi-angle stability | Limited | Native (full video context window) |

| Performance / appearance separation | Locked together | Decoupled at model level |

| Languages with phoneme-level lip-sync | 140+ | 175+ |

| Hand gestures | Audio-driven, generic | Audio-driven, identity-aware |

| Long-form coherence (10-min course module) | Patchy | Studio-quality |

The technical breakthrough that makes all of this possible is architectural. Avatar IV ran what HeyGen called a "diffusion-inspired audio-to-expression engine" — vocal prosody in, micro-expressions out, but performance and appearance were locked together. What you recorded was what you got. Avatar V is the first model in the family to separate performance from appearance. The architecture conditions on a full video context window rather than a single reference frame, which lets the model attend selectively to the most informative moments in your clip and extract identity data that survives across angles, lighting, and time. That is why drift is gone.
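
To make the "full video context window" idea concrete, here is a toy sketch of the general technique of attention-style pooling over many frames instead of trusting one reference frame. It is not HeyGen's architecture, which we have not seen. The 2-D "identity embeddings", the informativeness scores and the helper names `softmax` and `pool_identity` are all hypothetical, chosen only for illustration.

```python
import math

def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def pool_identity(frame_embeddings, informativeness):
    """Weighted average of per-frame embeddings (hypothetical helper)."""
    weights = softmax(informativeness)
    dim = len(frame_embeddings[0])
    return [
        sum(w * emb[i] for w, emb in zip(weights, frame_embeddings))
        for i in range(dim)
    ]

# Three toy frames. The middle one is an outlier (say, motion blur),
# so it gets a low informativeness score and a tiny weight.
frames = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
scores = [2.0, -2.0, 2.0]

pooled = pool_identity(frames, scores)
single_reference = frames[1]  # a single-frame approach could land on the bad frame

print("pooled identity:", [round(x, 3) for x in pooled])
print("single reference:", single_reference)
```

In this toy setup the pooled vector stays close to the two clean frames, while a single unlucky reference frame would carry the outlier forward into every render.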

Key features deep dive

1. Identity consistency that actually holds

The 0.840 Face Similarity score is the headline metric, but the lived experience is what matters. Across our 22 test renders, we did not see identity drift on any clip up to the 4-minute mark. HeyGen claims coherence up to 10 minutes; we did not test that length but we believe them based on what we did see. For comparison, with Avatar IV we used to cap clip length at 90 seconds for brand-critical content because anything longer started to look like a slightly different person halfway through.

2. Phoneme-level lip-sync across 175+ languages

We tested English, French, Spanish, Indonesian and Japanese. All five were clean — not "dubbing clean," but "the avatar's mouth is forming the actual phonemes" clean. The LSE-C of 8.97 is doing real work here. Synthesia is still slightly ahead on French specifically (their language team has historically been the strongest in that one language), but Avatar V is in a tie or ahead everywhere else we tested.

3. Audio-driven hand gestures and head movement

Hand gestures and head movement are inferred from the script's prosody and from the learned model of how your face moves. The result is that Avatar V doesn't look like a talking head pasted on a body — it looks like a person speaking. The gestures are restrained (which is good — we have all seen the over-gesturing AI avatars from 2024) and they emphasize the right beats.

4. Translation that keeps your face

This is the feature that will sell Avatar V to global teams. Drop a single English script, generate 30 language variants, every one of them shows your actual face speaking that language with phoneme-correct lip movement. We generated the same 45-second clip in five languages in under 12 minutes. For ad creative localization at scale, this is the difference between hiring local talent in 30 markets and not hiring local talent in 30 markets.
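
For planning purposes, our five-language run can be extrapolated to a 30-market rollout. The sketch below is back-of-the-envelope only: it assumes render time scales linearly with the number of languages, and that Avatar V burns the same roughly 20 credits per minute as Avatar IV. Neither is confirmed, and `localization_estimate` is our own helper name.

```python
CLIP_SECONDS = 45                # length of the clip we localized
LANGUAGES_TESTED = 5             # languages in our test batch
BATCH_MINUTES = 12               # upper bound we observed for that batch
ASSUMED_CREDITS_PER_MINUTE = 20  # Avatar IV's rate; Avatar V's is an assumption

def localization_estimate(target_languages):
    """Return (wall-clock minutes, rendered minutes, credits) for one clip."""
    wall_clock = BATCH_MINUTES / LANGUAGES_TESTED * target_languages
    rendered = CLIP_SECONDS / 60 * target_languages
    credits = rendered * ASSUMED_CREDITS_PER_MINUTE
    return wall_clock, rendered, credits

wall_clock, rendered, credits = localization_estimate(30)
print(f"{wall_clock:.0f} min wall clock, {rendered:.1f} min rendered, {credits:.0f} credits")
```

Under those assumptions, 30 variants of a 45-second clip come to about 72 minutes of waiting, 22.5 minutes of rendered video and 450 credits, which is more than double the Creator plan's monthly allowance.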

5. Full studio integration

Avatar V is now fully available across HeyGen's paid plans and integrates with the rest of the platform — templates, translation, voice cloning (both Instant Voice Clone from 30 seconds of audio and Professional Voice Clone for the highest fidelity), brand kits, and the Studio editor. You don't have to leave HeyGen to ship a finished video.

Real-world use cases — what we'd actually use it for

Based on our three days of testing, here are the workflows where Avatar V earns its keep:

- Solo founder pitch videos. If you are a solo founder and you hate being on camera but you need a face on your landing page, Avatar V solves the problem in 15 seconds of input. Record once, ship forever.

- Course module production. The identity stability on long-form is the unlock. A 10-module course is now one 15-second seed clip plus 10 scripts, not a week of studio time.

- Multilingual ad creative. Localize a single ad into 30 markets without losing brand consistency. This is the highest-ROI use case for ecommerce brands.

- Personalized sales videos at scale. Send your prospect a 90-second video with their name in the opening line, in your face, in their language. The conversion math is not subtle.

- Internal comms and onboarding. CEOs of remote-first companies record one seed clip, then push monthly all-hands updates without booking studio time.

- UGC for brands without UGC. Generate consistent talking-head content for brand channels (YouTube Shorts, TikTok, Reels) without recording weekly.

What we would not use it for, yet: high-emotion storytelling, anything that requires authentic human imperfection, anything where the audience needs to feel a connection that is explicitly about being with a real person. Avatar V is photorealistic but it is still optimized for clarity, not vulnerability.

Pricing — how much does it cost

HeyGen's pricing in 2026 starts at $0 per month for the Free plan (3 videos per month, watermarked, capped features) and scales up to custom Enterprise pricing. The relevant tiers for Avatar V access:

| Plan | Price | Credits | Best for |
|---|---|---|---|
| Free | $0 per month | Limited | Trying it once |
| Creator | $29 per month ($24 per month annual) | 200 credits per month | Solo creators, founders |
| Pro | $99 per month | 2,000 credits per month | Power users, freelancers |
| Business | $149 per month + $20 per seat per month | Higher cap, 4K rendering, custom avatars, SSO | Teams, agencies |
| Enterprise | Custom | Custom | Fortune 500 |

The thing nobody tells you up front: Avatar V (like Avatar IV before it) consumes credits per minute of rendered video. Avatar IV burned roughly 20 credits per minute, which means the Creator plan's 200 credits cover about 10 minutes of premium avatar video per month. We expect Avatar V to be in the same range. Premium credit packs add 300 credits for $15 (monthly) or $150 per year (annual). API pricing went pay-as-you-go in February 2026, with Avatar IV via API at roughly 6 credits per minute and Video Agent at 2 credits per minute.
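
The credit math is easy to check yourself. This is a minimal sketch using only the numbers quoted in this review. The 20 credits per minute figure is Avatar IV's rate, so applying it to Avatar V is an assumption, and `minutes_covered` is a name we made up.

```python
ASSUMED_CREDITS_PER_MINUTE = 20  # Avatar IV's rate; Avatar V's is an assumption

PLAN_CREDITS = {"Creator": 200, "Pro": 2000}
PACK_CREDITS = 300
PACK_PRICE_USD = 15

def minutes_covered(credits, credits_per_minute=ASSUMED_CREDITS_PER_MINUTE):
    """Minutes of premium avatar video a credit balance pays for."""
    return credits / credits_per_minute

coverage = {plan: minutes_covered(c) for plan, c in PLAN_CREDITS.items()}
pack_cost_per_minute = PACK_PRICE_USD / minutes_covered(PACK_CREDITS)

for plan, minutes in coverage.items():
    print(f"{plan}: about {minutes:.0f} minutes per month")
print(f"Credit pack: about ${pack_cost_per_minute:.2f} per extra minute")
```

At that rate, Creator covers about 10 minutes a month, Pro about 100, and a $15 pack works out to roughly $1 per extra minute.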

Annual billing saves 17 to 20 percent across all plans. If you know you'll use Avatar V regularly, take the annual.
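
As a quick check on the Creator plan, the only tier where we have both prices, the discount can be worked out as follows. `annual_savings` is our own helper name, not anything from HeyGen.

```python
def annual_savings(monthly_price, annual_monthly_price):
    """Return (dollars saved per year, percent discount) for annual billing."""
    yearly_saving = (monthly_price - annual_monthly_price) * 12
    percent = (monthly_price - annual_monthly_price) / monthly_price * 100
    return yearly_saving, percent

saving, percent = annual_savings(29, 24)
print(f"Creator annual billing saves ${saving} per year ({percent:.1f}%)")
```

That is $60 a year, or about 17 percent, which sits at the low end of the quoted range.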

HeyGen Avatar V vs Synthesia vs D-ID

Avatar V doesn't exist in a vacuum. The two competitors people actually compare it to are Synthesia and D-ID. Here is the honest 2026 read.

| Dimension | HeyGen Avatar V | Synthesia | D-ID |
|---|---|---|---|
| Realism (general) | Best in class | Tied with HeyGen on long-form | Recognizably AI |
| Identity consistency long-form | 0.840 Face Similarity | Strong, especially across longer videos | Weaker, mechanical head movement |
| Languages | 175+ phoneme-level | 140+ | 120+ |
| Custom avatar from 15-second clip | Yes | No (requires longer studio recording) | Limited |
| Voice cloning | Instant (30 sec) + Pro | Yes, mature | Yes |
| Enterprise compliance (SOC 2, etc.) | Yes (Business plan and up) | SOC 2 Type II, used by 90% of Fortune 100 | Yes |
| Starting price | $29 per month | $29 per month (Starter) | $5.90 per month (entry) |
| Best for | Creators, founders, multilingual content, custom digital twins | Enterprise compliance, mature integrations, standardized avatars | Budget projects, simple talking heads |

The short version: HeyGen wins on cutting-edge realism and custom avatar creation. Synthesia wins on enterprise compliance and Fortune-500 trust signals. D-ID wins on price and not much else in 2026.

If you are a solo creator, founder, or content team that needs your actual face on screen at scale, Avatar V is the obvious pick. If you are a Fortune 500 procurement officer who needs SOC 2 Type II checkbox compliance more than you need bleeding-edge realism, Synthesia is still the safer buy. If your budget is tight and your use case is one-off talking-head clips, D-ID does the job for less.

Limitations we found

Three days of testing and we hit four things worth flagging before you commit:

- The credit system bites. 200 credits per month on Creator covers about 10 minutes of premium avatar render. If you generate weekly videos longer than 2 to 3 minutes each, you will burn through Creator credits fast and end up either buying packs or upgrading to Pro. Budget for $99 per month, not $29 per month, if Avatar V becomes core to your workflow.

- Lip-sync on French is still slightly behind Synthesia. The gap is small and most viewers will not notice it. But if French is your primary market and lip-sync is a non-negotiable, A/B test before you commit.

- The 15-second seed clip needs to be good. "Phone in window light" works. "Phone in a dim café with a kitchen behind you" does not. The model inherits whatever ambient lighting and background context you give it, and bad input shows up in the output. Spend two minutes finding decent light.

- API access is no longer free. Starting February 2026, HeyGen ended free API credits. Pay-as-you-go only. If you were planning to script Avatar V into a programmatic pipeline, factor in real per-minute costs from day one.
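
The credit squeeze in the first bullet can be put into numbers. This is a rough sketch built only from figures in this review. It assumes Avatar V burns the same roughly 20 credits per minute as Avatar IV and that $15 credit packs can be stacked on either plan. Neither is confirmed, and `monthly_cost` is a hypothetical helper.

```python
import math

ASSUMED_CREDITS_PER_MINUTE = 20      # Avatar IV's rate; an assumption for Avatar V
PACK_CREDITS, PACK_PRICE_USD = 300, 15
PLANS = {"Creator": (29, 200), "Pro": (99, 2000)}  # (price in USD, credits)

def monthly_cost(plan, minutes_needed):
    """Plan price plus however many credit packs cover the shortfall."""
    price, included = PLANS[plan]
    needed = minutes_needed * ASSUMED_CREDITS_PER_MINUTE
    shortfall = max(0, needed - included)
    packs = math.ceil(shortfall / PACK_CREDITS)
    return price + packs * PACK_PRICE_USD

# Example workload: four 3-minute videos per month.
workload_minutes = 4 * 3
for plan in PLANS:
    print(f"{plan}: ${monthly_cost(plan, workload_minutes)} per month")
```

Under these assumptions, 12 minutes a month (240 credits) comes to $44 on Creator with one pack versus $99 on Pro. Check HeyGen's current pack terms before relying on that comparison.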

Who should use HeyGen Avatar V

Use it if you are:

- A solo founder or creator who needs a face on camera but hates being on camera.

- A content team building courses, ads, or sales videos at scale across multiple languages.

- An ecommerce brand localizing creative for 10+ markets without local talent.

- A B2B sales rep sending personalized prospect videos at volume.

- An agency producing UGC-style content for clients without filming weekly.

Skip it (or wait) if you are:

- A Fortune 500 buyer who needs SOC 2 Type II as a hard procurement gate today — Synthesia is a safer political choice.

- A storyteller whose entire brand depends on raw human authenticity. Avatar V is photorealistic but it is not a substitute for a real person on camera in vulnerable moments.

- A creator on a roughly $6 per month budget. D-ID will get you 80 percent of the basic talking-head use case for a fraction of the price.

Our verdict

HeyGen Avatar V is the first AI avatar model we have tested that crosses a real threshold: we would actually let it be the face of our brand on a public channel. That has never been true before. Avatar IV came close on short clips. Synthesia's enterprise avatars came close on stock corporate use cases. Avatar V is the first model that holds identity across long-form, ships in 175+ languages with phoneme-level lip-sync, and onboards in 15 seconds.

It is not perfect. The credit system will push serious users from $29 per month to $99 per month faster than the pricing page suggests. French lip-sync is still slightly behind Synthesia. Bad input lighting still produces bad output. None of these break the deal.

Score: 9 out of 10. Half a point off for the credit math. Half a point off because we want to test 10-minute clips before we score it perfect. Antho's one-line verdict: une dinguerie. In English: this is next level. If you make video content for a living and you are not testing Avatar V this month, you are giving up ground to the people who are.


Frequently asked questions

When did HeyGen Avatar V launch?

HeyGen Avatar V was announced on April 8, 2026. It is now fully available across HeyGen's paid plans, with access to the platform's full suite of templates, translation, and Studio tools.

How long does the seed video need to be?

Just 15 seconds. You record a single 15-second selfie clip on any phone, looking straight at camera and speaking naturally. No professional camera, no studio lighting, no crew. After upload, processing takes roughly 90 seconds before your digital twin is ready to drive scripts.

How much does HeyGen Avatar V cost?

HeyGen pricing starts at $0 per month for the Free plan with watermarked output. Avatar V access begins on the Creator plan at $29 per month ($24 per month if billed annually) with 200 credits. Pro is $99 per month with 2,000 credits. Business is $149 per month plus $20 per seat per month. Enterprise pricing is custom.

How is Avatar V different from Avatar IV?

Avatar V is the first HeyGen model to separate performance from appearance at the architecture level. Avatar IV locked them together — what you recorded was what you got. Avatar V conditions on a full video context window rather than a single frame, which eliminates identity drift across angles and long-form content. It scores 0.840 on Face Similarity (vs roughly 0.78 for Avatar IV) and 8.97 on lip-sync LSE-C (vs roughly 7.9 for Avatar IV).

How many languages does HeyGen Avatar V support?

HeyGen Avatar V supports 175+ languages and dialects with phoneme-level lip-sync accuracy. This is more than Synthesia's 140+ and D-ID's roughly 120. The lip movements are aligned to the actual phonemes of the target language, not just dubbed-over audio.

HeyGen Avatar V vs Synthesia — which is better in 2026?

It depends on your priority. HeyGen Avatar V wins on cutting-edge realism, custom avatar creation from a 15-second clip, and language coverage (175+ vs 140+). Synthesia wins on enterprise compliance — it is SOC 2 Type II certified, used by 90% of Fortune 100 companies, and has more mature integrations. For solo creators and lean content teams, choose HeyGen. For Fortune 500 procurement, Synthesia is the safer political pick.

Can HeyGen Avatar V replace a real person on camera?

For most short to medium-form content (product demos, course modules, ad creative, multilingual localization, sales videos), yes. After three days of testing across 22 renders we would let Avatar V be the face of a brand channel. For high-emotion storytelling or anything that requires raw human vulnerability, no — Avatar V is photorealistic but optimized for clarity, not for emotional connection.

Does HeyGen Avatar V have an API?

Yes. HeyGen offers API access to its avatar models. In February 2026, HeyGen ended free API credits and moved to pay-as-you-go pricing. Avatar IV via API runs at roughly 6 credits per minute and Video Agent at roughly 2 credits per minute. We expect Avatar V API pricing to land in the same range. If you are scripting Avatar V into a programmatic pipeline, budget per-minute costs from day one.
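
For pipeline budgeting, the per-minute rate turns into a credit total like this. The sketch assumes Avatar V is billed at Avatar IV's roughly 6 API credits per minute, which is unconfirmed. It makes no API calls, and `api_credits` is our own name. We do not have a dollar price per API credit, so the result stays in credits.

```python
ASSUMED_API_CREDITS_PER_MINUTE = 6  # Avatar IV's API rate; an assumption for Avatar V

def api_credits(videos, seconds_per_video,
                credits_per_minute=ASSUMED_API_CREDITS_PER_MINUTE):
    """Total credits for a batch of equally long videos."""
    total_minutes = videos * seconds_per_video / 60
    return total_minutes * credits_per_minute

# Example: 500 personalized 90-second sales videos.
batch = api_credits(videos=500, seconds_per_video=90)
print(f"{batch:.0f} credits for the batch")
```

A batch of 500 personalized 90-second videos is 750 minutes of render, or about 4,500 credits at that rate.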

What is the Face Similarity score and why does 0.840 matter?

Face Similarity is the benchmark HeyGen uses to measure how closely a generated avatar matches the original input person's face across frames. Avatar V achieves 0.840 — substantially ahead of Veo 3.1 at 0.714. Practically, it means the face you see at second 15 of a long-form video is the same face you see at second 230. Identity drift, the biggest weakness of every previous avatar generation, is solved at the model level.
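
HeyGen has not told us exactly how the benchmark is computed. A common way to score this kind of thing is the average cosine similarity between a face embedding of the source person and embeddings of each generated frame, and the sketch below shows that general technique only. The toy 2-D embeddings and the function names are hypothetical, and the output is not comparable to HeyGen's 0.840.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def face_similarity(reference, frame_embeddings):
    """Mean similarity of every frame to the reference face (assumed metric)."""
    scores = [cosine_similarity(reference, f) for f in frame_embeddings]
    return sum(scores) / len(scores)

reference = [1.0, 0.0]
stable = [[1.0, 0.0], [0.9, 0.1], [1.0, 0.05]]    # frames stay near the reference
drifting = [[1.0, 0.0], [0.7, 0.7], [0.2, 1.0]]   # frames wander away over time

print(f"stable clip:   {face_similarity(reference, stable):.3f}")
print(f"drifting clip: {face_similarity(reference, drifting):.3f}")
```

The point of averaging over frames is that a clip which starts well and then drifts gets pulled down, which is the failure mode this review keeps coming back to.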