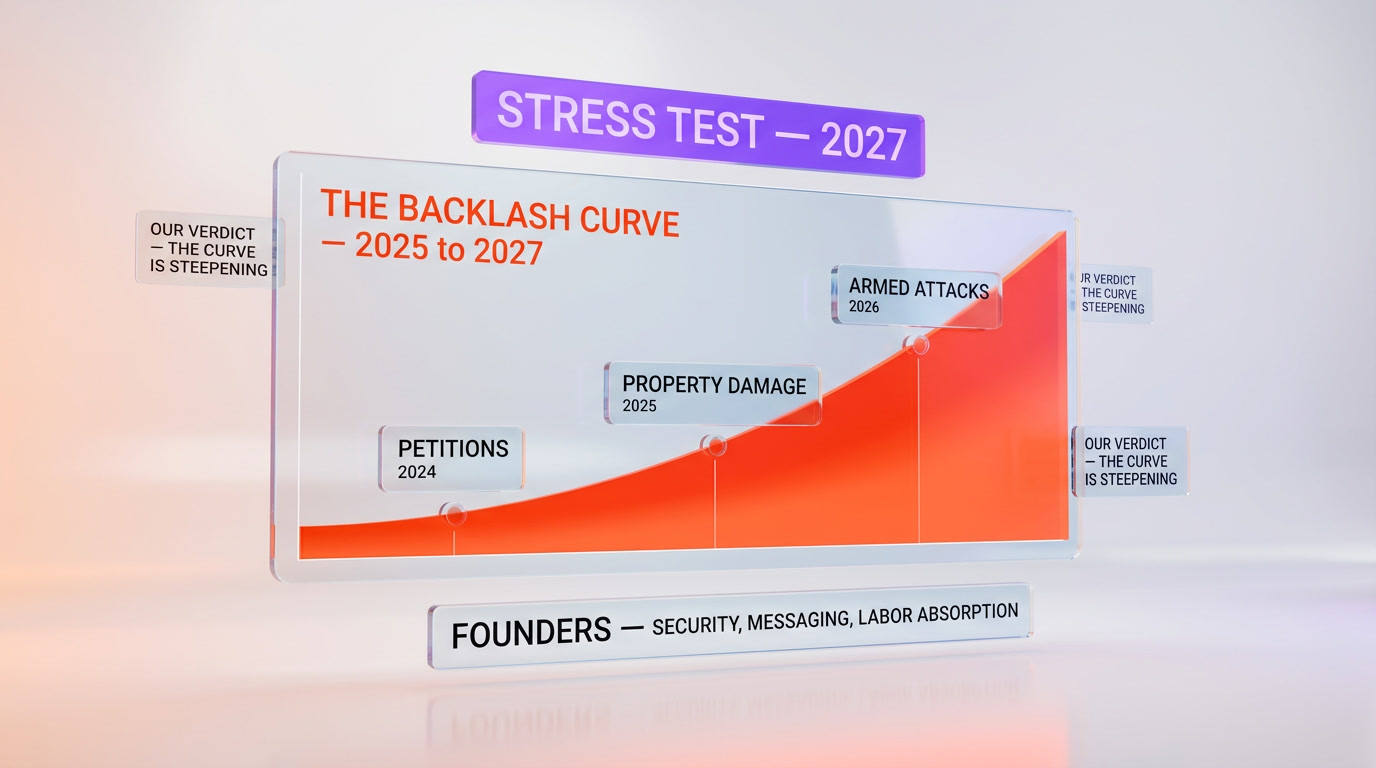

On April 14, 2026, San Francisco police arrested two Gen Z attackers, aged 23 and 25, after they fired shots near Sam Altman's home — the second escalation in weeks. A 20-year-old Texan had already thrown a Molotov cocktail at the same address. Investigators recovered a manifesto titled "extinction of humanity." The context: 55,000 US jobs were eliminated by AI-driven decisions in 2025 alone — 12 times the 2023 number. Brian Merchant, author of Blood in the Machine, has been documenting the radicalization in real time. The historical parallel that matters is not 2020 or 2016. It is 1812 — the year British luddites burned mills, broke looms, and shot factory owners. The AI backlash curve in 2026 is tracking the luddite curve of 1811 to 1813. Founders who ignore this are making the same mistake the British factory owners made. This is a ground-truth dispatch on what happened, what is coming in 2027, and what founders need to do now.

What happened at Altman's house

On the afternoon of April 14, 2026, San Francisco Police Department officers arrested two men, aged 23 and 25, near the private residence of OpenAI CEO Sam Altman. Per the Axios SF report published that evening, both suspects were armed and shots were fired. No one at the residence was injured. SFPD recovered multiple weapons, a handwritten document referred to in court filings as a manifesto, and digital material referencing "the extinction of humanity."

Both suspects are under 30. Both are, by the generational bracket, Gen Z. Neither had a public criminal history before the incident. Both had, according to early reporting from Fortune, active accounts on online forums where AI-accelerationist content is discussed — and increasingly, opposed with violent rhetoric.

This was not the first time Altman's home was targeted. It was the second escalation in a matter of weeks.

The Texas precedent — the Molotov guy

Three weeks before the April 14 arrests, a 20-year-old from Texas traveled to San Francisco, approached Altman's residence at night, and threw a Molotov cocktail at the property. The device caused minor exterior damage. The attacker was detained within 48 hours. Court filings at the time described the motive as "philosophical opposition to artificial general intelligence and its human cost."

The Molotov attack barely made national news. Axios SF covered it. A few AI-focused newsletters picked it up. Brian Merchant's Blood in the Machine Substack flagged it as a signal — a young, ideologically motivated attacker traveling across state lines to target the face of the AI industry. Most of the Valley ignored it.

Three weeks later, two more young men with heavier weapons and an explicit extinction-of-humanity manifesto showed up at the same address. The signal just got a lot louder.

The manifesto — "extinction of humanity"

The document recovered from the April 14 suspects is not, based on the portions quoted in court filings, a coherent political program. It is a pastiche. It draws on three strands that have been metastasizing on the English-language internet since roughly 2023: AI-doomer rhetoric (the Eliezer Yudkowsky-adjacent argument that misaligned AGI kills everyone), anti-capitalist critique of Silicon Valley (Brian Merchant, Karen Hao, Ed Zitron), and a more recent strand — what some have started calling "AI-accelerationist nihilism," the claim that AI is inevitable, that it will concentrate power in a handful of labs, and that the only moral response is direct action against the people building it.

The manifesto's central claim, per the portions quoted, is that Sam Altman and a small group of AGI-lab founders have made a unilateral bet with the future of humanity, that this bet will fail, and that stopping them by any means necessary is morally justified. "Extinction of humanity" in the title refers to the attackers' belief that the AGI race leads there — not, as some initial headlines implied, to the attackers' goal.

Whether you agree with any part of that reasoning is beside the point. The point is: this reasoning now has enough gravitational pull to get two young men to show up armed at a private residence in San Francisco in April 2026. It is no longer a fringe internet discourse. It is action.

55,000 layoffs and rising — the numbers behind the rage

The context people miss when they read these stories as isolated incidents is the labor market data. In 2025, approximately 55,000 US jobs were eliminated in decisions where AI was either the direct cause or the explicit justification given by the employer, according to aggregated reporting from Challenger, Gray & Christmas and independent tracking by Blood in the Machine. In 2023, that number was roughly 4,600. The 2025 figure is roughly 12 times the 2023 figure.
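As a quick sanity check on that multiple, the arithmetic can be sketched in a few lines of Python. Both inputs are the approximate figures quoted above, not exact counts, and the variable names are ours.

```python
# Approximate figures as quoted in the article; neither is an exact count.
jobs_2023 = 4_600
jobs_2025 = 55_000

# How many times larger the 2025 figure is than the 2023 figure.
ratio = jobs_2025 / jobs_2023

# The same change expressed as a percentage increase.
increase_pct = (jobs_2025 - jobs_2023) / jobs_2023 * 100

print(f"2025 / 2023 ratio: {ratio:.1f}x")
print(f"Increase over 2023: {increase_pct:.0f}%")
```

The ratio comes out just under 12, which is why "roughly 12 times" is the right phrasing rather than an exact multiple.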

Breaking that 55,000 number down matters:

- Customer support and contact centers: the single largest category, roughly 18,000 to 22,000 of the 55,000. Replacement vector: ChatGPT-class agents plus vertical agents like Decagon and Sierra.

- Junior software engineers and QA: roughly 9,000 to 12,000. Replacement vector: Claude Code, Cursor, Windsurf, and internal coding agents at tier-one tech employers.

- Marketing copywriters and content production: roughly 6,000 to 8,000. Replacement vector: ChatGPT, Claude, Jasper-class tools, in-house workflow automations.

- Paralegals and legal research: roughly 3,500 to 4,500. Replacement vector: Harvey, CoCounsel, and Big Law in-house AI platforms.

- Data analysts and BI: roughly 3,000 to 4,000. Replacement vector: natural-language-to-SQL agents and modern BI platforms with built-in LLMs.

The rest of the 55,000 — roughly 8,000 to 12,000 — is distributed across translation, bookkeeping, design, radiology support, and a long tail of white-collar functions that two years ago nobody assumed would be automated this quickly.
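The category ranges can be checked against the headline number with a short sketch. The ranges are the approximate ones listed above; the dictionary keys and variable names are our own labels, not categories used by any of the sources.

```python
# Approximate (low, high) job-loss ranges per category, as listed in the article.
# Keys are illustrative labels, not official category names.
categories = {
    "customer_support": (18_000, 22_000),
    "junior_engineering_qa": (9_000, 12_000),
    "marketing_content": (6_000, 8_000),
    "paralegal_legal_research": (3_500, 4_500),
    "data_analysts_bi": (3_000, 4_000),
    "long_tail": (8_000, 12_000),
}
headline_total = 55_000

# Sum the low ends and the high ends separately.
low_total = sum(low for low, _ in categories.values())
high_total = sum(high for _, high in categories.values())
midpoint = (low_total + high_total) // 2

print(f"Sum of ranges: {low_total:,} to {high_total:,}")
print(f"Midpoint of summed ranges: {midpoint:,}")
print(f"Headline inside range: {low_total <= headline_total <= high_total}")
```

The summed ranges bracket the 55,000 figure, so the breakdown is internally consistent with the headline, though the width of the band is a reminder that every category number is an estimate.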

The human math is the part that gets ignored at AI conferences. A 35-year-old support lead making $72,000 a year in Phoenix does not re-skill into a "prompt engineering" job with two Coursera certificates and a LinkedIn post. They file for unemployment. They burn through savings. They watch the news and they see OpenAI's valuation cross $800 billion. And some fraction of them — small, but non-zero — concludes that the system has betrayed them in a way that requires a response.

That is the rage the manifestos are pulling from. It is not irrational. It is a labor market reality rendered into ideology and, increasingly, into action.

The luddite parallel — 1812 to 2026

The historical analogy that is getting thrown around is "luddite." It is used as an insult by Silicon Valley and as a flag by critics like Brian Merchant. Merchant's thesis, laid out in Blood in the Machine (Little, Brown 2023), is that the original luddites were not anti-technology. They were workers whose entire trade was destroyed within a five-year window by industrial machinery deployed faster than any retraining program could absorb. When their political demands were ignored, they moved to direct action: breaking looms, burning mills, and — in several documented cases — shooting factory owners.

The luddite timeline, compressed:

- 1811: First organized loom-breaking in Nottinghamshire. Framed as criminal vandalism. Local political issue.

- 1812 spring: Movement spreads to Yorkshire and Lancashire. Attacks on mills. Parliament passes the Frame-Breaking Act, making loom-breaking punishable by death.

- 1812 April: William Horsfall, a mill owner near Huddersfield, is fatally shot on horseback. National story.

- 1813: Mass trials at York. 17 luddites hanged. Movement suppressed militarily.

From first organized action to assassination of a factory owner: roughly 18 months. From there to state violence suppressing the movement: roughly another 12 months.

The 2025 to 2026 AI backlash timeline is tracking that curve uncomfortably closely:

- 2024 to early 2025: Organized criticism. Books like Merchant's Blood in the Machine, Hao's reporting on OpenAI, Zitron's Substack. Petitions, union drives, op-eds.

- Mid-2025: First documented property damage at AI labs (minor — tires, windows). Local stories.

- Late 2025: Doxxing campaigns targeting AI lab researchers. Swatting incidents.

- Early 2026: Texan Molotov at Altman's residence. Still a local SF story.

- April 14, 2026: Armed attackers at Altman's residence with extinction manifesto. National story.

We are roughly 14 to 16 months into the equivalent of the 1811 to 1812 luddite curve. If the parallel holds — and there is no strong reason to assume it will not, given the labor market dynamics and the online radicalization infrastructure — the next 12 to 18 months are the 1812 to 1813 window. That is the period in which, historically, the curve went from property damage to targeted assassination to mass state response.

Why this will get worse in 2027

Three forces make 2027 the critical year, in our read:

1. Layoff velocity accelerates, not decelerates

The 55,000 US number in 2025 was roughly 12x the 2023 number. Forecasts from Goldman Sachs, McKinsey, and BCG (each with published research in 2024 to 2026) project the 2026 US AI-driven layoff number in the range of 140,000 to 200,000, with 2027 in the range of 300,000 to 500,000. Those numbers are contested (some economists argue they are high, others argue they are low), but the direction of travel is not contested: more jobs, faster, across more categories.

Every one of those displaced workers is a potential node in the radicalization network. Most will not radicalize. A small fraction will. A small fraction of 300,000 to 500,000 is still thousands of people. The math is the math.
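To make "a small fraction" concrete, here is a minimal sketch. The rates below are purely hypothetical placeholders chosen for illustration; nothing in the reporting or the forecasts supplies an actual rate.

```python
# 2027 forecast range quoted in the article.
displaced_low, displaced_high = 300_000, 500_000

# HYPOTHETICAL rates in basis points (1 bp = 0.01%). Not sourced from any data.
hypothetical_rates_bp = [10, 50, 100]

# Map each hypothetical rate to the (low, high) headcount it would imply.
results = {}
for bp in hypothetical_rates_bp:
    low = displaced_low * bp // 10_000
    high = displaced_high * bp // 10_000
    results[bp] = (low, high)
    print(f"{bp / 100:.2f}% -> {low:,} to {high:,} people")

# Smallest rate (in percent) at which the result reaches 1,000 people,
# evaluated at each end of the forecast range.
threshold_pct_high_end = 1_000 / displaced_high * 100
threshold_pct_low_end = 1_000 / displaced_low * 100
print(f"Rate needed for 1,000 people: {threshold_pct_high_end:.2f}% to {threshold_pct_low_end:.2f}%")
```

Under these placeholder rates, "thousands" holds once the rate is somewhere above roughly 0.2 to 0.33 percent; at 0.1 percent the result would be in the hundreds. The claim is sensitive to an input nobody has measured.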

2. AGI rhetoric from labs intensifies, not softens

OpenAI, Anthropic, Google DeepMind, Meta, xAI, and a handful of newer labs have all doubled down publicly on AGI timelines in 2025 to 2026. When the CEO of one of the largest AI labs tweets that "superintelligence" is three to five years out, he is simultaneously (a) marketing to investors, (b) recruiting engineers, and (c) broadcasting a specific message to every critic who reads it as "we are building the thing you fear, on your timeline, in public."

You cannot ask for $500 billion data center buildouts on the promise of transformative AI and also expect the people being transformed to quietly re-skill.

3. Online radicalization infrastructure is mature

The internet infrastructure that radicalizes people in 2026 is different from the infrastructure that existed in 2016 or 2020. It now includes algorithmic recommendation, encrypted messaging groups, and AI-generated content farms producing outrage at scale. Critically, the same LLM tools that power the AI labs also power the manifestos written by people who want to kill AI lab founders. The irony is total and unhelpful.

Young, displaced, online people who would have had to drive to a Klan meeting in 1968 or lurk on a poorly designed forum in 1998 now have algorithmic delivery of tailored radicalization content. The funnel is shorter. The conversion rate is higher.

What founders need to know — security, PR, messaging

This is the part that matters if you are building in AI in 2026. Short, practical, no fluff.

Security

- Residential security is no longer optional for high-profile AI founders. If you are the CEO or named public face of a lab with more than $100 million in funding, you need a professional residential security assessment this quarter. Camera coverage, alarm integration, panic room, address privacy (LLC-held residence title, mail forwarding, scrubbed public records).

- Executive protection at public events. For any speaking engagement, conference, or public appearance, brief a professional protection firm. DEF CON, NeurIPS, YC Demo Day, TED, and AI Engineer Summit are all on the threat radar in 2026.

- Doxxing response playbook. Pre-contract with a doxxing cleanup firm. When your home address lands on a subreddit or a Telegram channel, you need it scrubbed within 4 hours, not 4 days.

- Staff exposure. Your research scientists are soft targets too. If you run a lab, brief your leads on personal OpSec, not just corporate.

PR and messaging

- Drop the superintelligence-as-marketing tone. If your public messaging boils down to "we are about to change everything about human civilization," you are broadcasting a target profile to people who are already radicalizing. The labs that weather 2027 best will be the ones that talk less like messianic cults and more like companies shipping useful tools.

- Acknowledge labor displacement explicitly. Not in a PR-polished "we are committed to workforce transition" statement. In actual, specific, funded programs: retraining grants, hiring commitments for displaced workers, transparent disclosure of automation decisions.

- Do not platform attackers. Do not respond publicly to manifestos. Do not debate the ideology. Public response feeds the loop.

- Have a crisis comms plan for an executive incident. Who speaks, when, on what channels, in what tone. Pre-drafted. Legal reviewed. Board approved. You do not want to be writing this in real time.

Messaging — the longer play

The labs that survive the 2026 to 2028 backlash window will be the ones who stop treating the backlash as irrational and start treating it as the predictable response of a labor market being restructured faster than it can absorb. That is not a political statement. It is a risk management statement. Ignore it and the risk compounds.

The counter-argument — is the rage justified?

The honest version of this piece has to take the counter-argument seriously. The case against the luddite framing, made by a number of labor economists and technology historians:

- AI is not, yet, net-destroying jobs. Top-line US unemployment in 2025 stayed in the 4.0 to 4.5 percent range. Job creation in AI-adjacent categories (data engineering, ML ops, applied ML, AI product management) absorbed much of the displacement. The gross number is 55,000, but the net number is harder to pin down and arguably smaller.

- Reskilling works at scale when funded. Post-2008 US retraining programs, while imperfect, moved large cohorts of displaced workers back into work within 24 to 36 months. The infrastructure exists.

- The luddite analogy is wrong because the end state is different. Industrial looms replaced one human motion with one machine motion. Modern LLMs augment tasks, not jobs, in the majority of white-collar contexts. The "full replacement" layoff stories get the headlines; the "one analyst does the work of three" augmentation stories don't — but the latter is the larger signal.

- Violent action is counter-productive. The 1812 luddites lost. They were hanged. The factories were still built. No direct-action movement in an industrializing economy has ever reversed the technology it opposed. The opportunity cost of a Molotov at Altman's door is not saving jobs — it is making the political argument harder for the labor critics who are trying to do this peacefully.

We find the first two points genuinely strong. The labor market as a statistical aggregate in 2026 is not, yet, in the luddite-trigger zone, although a headline unemployment rate of about 4.2 percent is doing a lot of work to obscure individual pain. The third point, the augmentation vs replacement framing, is partially true and partially a cope. It works well for knowledge workers in senior roles. It works very badly for entry-level functions where the junior position was the training ground for the senior position, and where automating the junior role means breaking the career pipeline that produced the senior in the first place.

The fourth point, on counter-productivity, is the one we agree with most. Violence against founders does not slow AI development. It accelerates state alignment with the labs (because the state will side with the attacked party), accelerates corporate security lockdown, and marginalizes the legitimate political-economic critique that people like Merchant, Hao, Zitron and others have been articulating in print and on the record for three years.

But agreeing that violence is counter-productive does not reduce the probability of more violence. It does not, on its own, change the labor market dynamics or the online radicalization infrastructure. The two things we think can reduce the probability are: (1) labor absorption programs at the scale of the displacement, funded by the companies doing the displacing, and (2) a public posture from AI founders that treats displaced workers as a constituency to be served, not a critique to be dismissed.

Our verdict

The April 14, 2026 arrests at Sam Altman's residence are not a one-off. They are a point on a curve. The curve is steepening. The labor market data, the rhetoric from the labs, and the online radicalization infrastructure are all pulling in the same direction. Our read is that 2027 is the stress test — the 12-month window in which the backlash either plateaus (because labor absorption and retraining finally keep pace with displacement) or intensifies into something that looks much more like the luddite endgame.

If you are building in AI in 2026, three things are true. One: your physical security posture needs to be better than it probably is. Two: your public messaging needs to stop sounding like a messianic countdown. Three: the critique coming from Merchant, Hao, Zitron and the labor economists is not the enemy — the enemy is the gap between what the labs promise and what the displaced workers experience, and closing that gap is the political work that makes the attacker's argument less gravitational.

The Molotov at Altman's door is easy to dismiss. The 55,000 is harder. And the 300,000 to 500,000 coming in 2027 is the one that will decide which labs are still standing in 2028.


Frequently asked questions

What happened at Sam Altman's house on April 14, 2026?

San Francisco Police Department officers arrested two men, aged 23 and 25, after they fired shots near Sam Altman's private residence on the afternoon of April 14, 2026. Both suspects were armed. No one at the residence was injured. SFPD recovered multiple weapons and a handwritten manifesto referencing the "extinction of humanity." The Axios SF report published that evening was the first to break the story nationally.

Was there a previous attack on Sam Altman's home?

Yes. Roughly three weeks before the April 14, 2026 arrests, a 20-year-old from Texas traveled to San Francisco and threw a Molotov cocktail at Altman's residence. The device caused minor exterior damage. The attacker was detained within 48 hours, and court filings described the motive as philosophical opposition to AGI and its human cost. The Texas Molotov and the April 14 armed arrests are two escalations at the same address in under a month.

What was in the manifesto?

The document recovered from the April 14 suspects draws on three online discourses: AI-doomer rhetoric (the argument that misaligned AGI kills everyone), anti-capitalist critique of Silicon Valley, and a more recent "AI-accelerationist nihilism" strand that argues direct action against AGI lab founders is morally justified. The "extinction of humanity" phrase in the title refers to what the attackers believe the AGI race leads to — not, as some initial headlines implied, the attackers' own goal.

How many US jobs were eliminated by AI in 2025?

Approximately 55,000 US jobs were eliminated in 2025 in decisions where AI was either the direct cause or the explicit employer justification — roughly 12 times the 2023 figure of 4,600. The largest category was customer support and contact centers (18,000 to 22,000), followed by junior software engineering (9,000 to 12,000), marketing copywriting (6,000 to 8,000), paralegals (3,500 to 4,500), and data analysts (3,000 to 4,000). Source: aggregated Challenger, Gray & Christmas reporting and the Blood in the Machine tracker.

Who is Brian Merchant and why does his reporting matter here?

Brian Merchant is a technology journalist and the author of Blood in the Machine (Little, Brown 2023), a history of the British luddite movement and its relevance to modern labor displacement by technology. Since 2023, Merchant has been running the Blood in the Machine Substack, documenting AI-driven layoffs, worker backlash, and the radicalization patterns in real time. His work is the main English-language record of the AI backlash as a labor movement — which makes it essential context for understanding the April 14 attack.

Is the luddite parallel to AI backlash fair?

Partially. The original luddites were not anti-technology — they were workers whose trade was destroyed faster than any retraining program could absorb, and who moved to direct action (loom-breaking, burning mills, shooting factory owners) when political demands were ignored. The 2025 to 2026 AI backlash curve is tracking the 1811 to 1812 luddite curve uncomfortably closely on timeline, escalation pattern, and ideological framing. The parallel breaks down on end state — LLMs augment more tasks than they replace, unlike industrial looms. But the early-curve dynamics are close enough that treating the parallel as serious is warranted.

Why will this get worse in 2027?

Three forces. First, layoff velocity is forecast to accelerate — 2026 US AI-driven layoffs are projected at 140,000 to 200,000 and 2027 at 300,000 to 500,000 (Goldman, McKinsey, BCG 2024 to 2026 research). Second, AGI rhetoric from labs is intensifying, not softening, which directly recruits critics. Third, the online radicalization infrastructure — algorithmic recommendation, encrypted messaging, AI-generated content farms — is more mature in 2026 than in any previous technology backlash. The funnel from displaced worker to radicalized actor is shorter and higher-conversion than it was for any previous labor movement.

What should AI founders do right now?

Three priorities. Security: professional residential security assessment, executive protection at public events, pre-contracted doxxing cleanup, staff OpSec briefings. PR: drop the superintelligence marketing tone, acknowledge labor displacement with funded programs (not PR statements), do not platform attackers, have a pre-drafted crisis comms plan. Messaging: treat the critique from Merchant, Hao, Zitron and the labor economists as risk management signal, not political enemy. Close the gap between lab promises and displaced-worker experience — that gap is what makes the attacker's argument gravitational.

Is the rage justified?

The gross labor displacement number (55,000 in 2025) is real. The individual pain is real. The macro unemployment number (4.2 percent US in 2025) is also real and suggests the net labor market is absorbing the shock. The strongest counter-argument to the luddite framing is that augmentation is outpacing replacement in most white-collar contexts — but that argument breaks down at entry-level roles where automating the junior position breaks the career pipeline. The weakest pro-violence argument is that violence works: historically, direct-action movements in industrializing economies have never reversed the technology they opposed. Violence at founders' homes accelerates state lockdown, not labor protection.

What are the sources for this article?

Primary sources: the Axios SF report published April 14, 2026 covering the arrests; Fortune reporting on the suspects' online activity; Brian Merchant's Blood in the Machine Substack, which has been documenting the backlash since 2023. Labor data: aggregated Challenger, Gray & Christmas monthly layoff reports, plus the Blood in the Machine tracker. Historical context on the luddite movement: Merchant's book Blood in the Machine (Little, Brown 2023). Forecasts on 2026 and 2027 AI-driven layoffs: public research from Goldman Sachs, McKinsey, and BCG published 2024 to 2026.