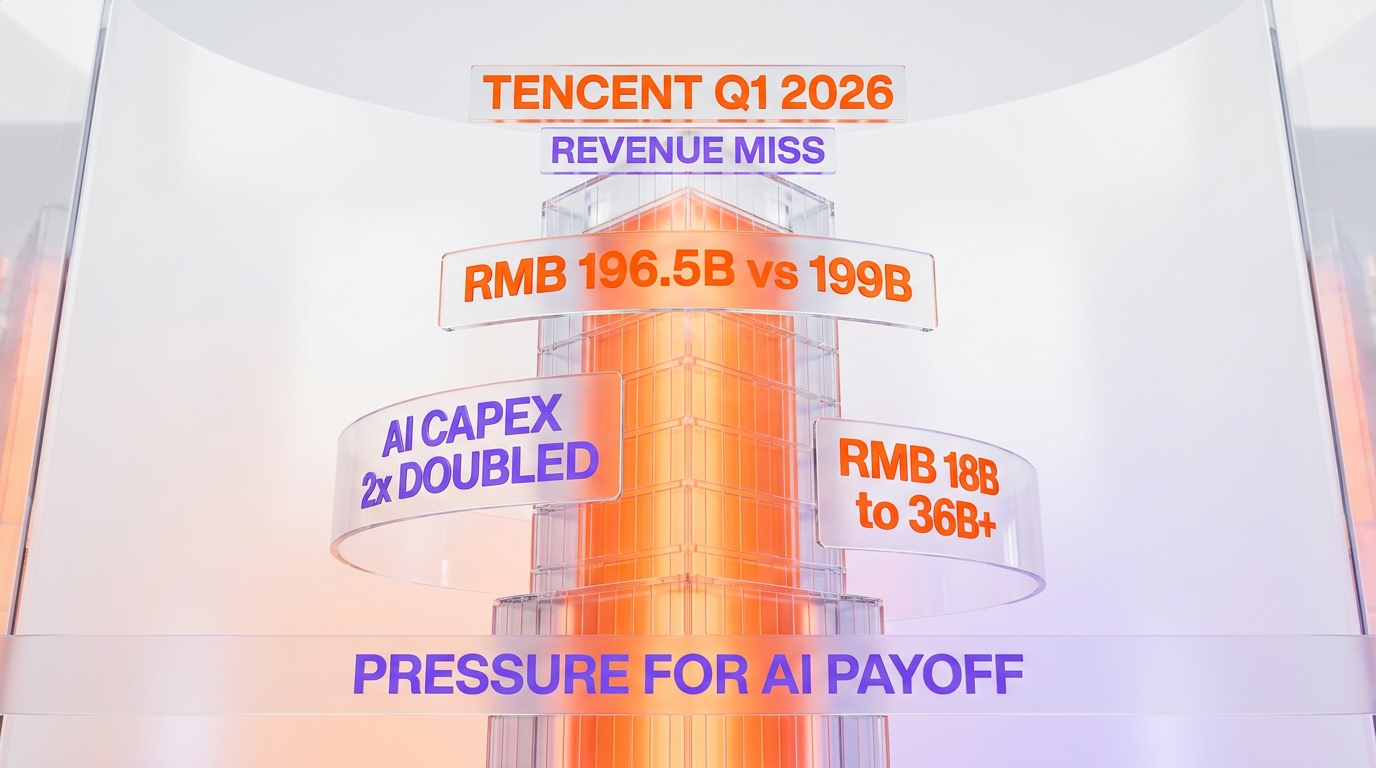

Tencent reported Q1 2026 revenue of RMB 196.5 billion on May 13, 2026, missing the RMB 199 billion analyst consensus, even as management more than doubled AI capital expenditure guidance, from the RMB 18 billion spent on Hunyuan and Yuanbao in 2025 to more than RMB 36 billion for 2026. Revenue still grew approximately 9 percent year over year and net profit advanced 11 percent, but the consensus miss combined with the doubled capex commitment put the company's AI-payoff narrative under pressure within a single quarter. Bloomberg's reporting framed the release as adding pressure on Tencent to convert Hunyuan model leadership into commercial revenue. The China AI capex war also escalated further: Tencent's commitment of more than RMB 36 billion stacks against Alibaba's RMB 380 billion three-year cloud and AI commitment and the open-weight pressure from DeepSeek's V4 release in April 2026.

We have tracked the China AI lab capex cycle since the early-2025 wave of frontier-model launches, and the May 13 Tencent dispatch is the cleanest signal yet that the China AI capex war has entered the same compressed-margin phase that the United States hyperscalers crossed in 2024. Tencent's RMB 18 billion 2025 capex on Hunyuan and Yuanbao already represented a non-trivial share of the consolidated free cash flow base, and the doubled RMB 36 billion plus 2026 guidance materially shifts the balance between AI investment, share buybacks, and dividend distribution at the company's capital-allocation table. The Q1 revenue miss tightens the timeline pressure on converting model leadership into commercial-payoff proof points that justify the doubled capex envelope, and that timeline pressure is precisely the structural question that the Bloomberg coverage put in front of investors on May 13.

What Tencent reported on May 13, 2026

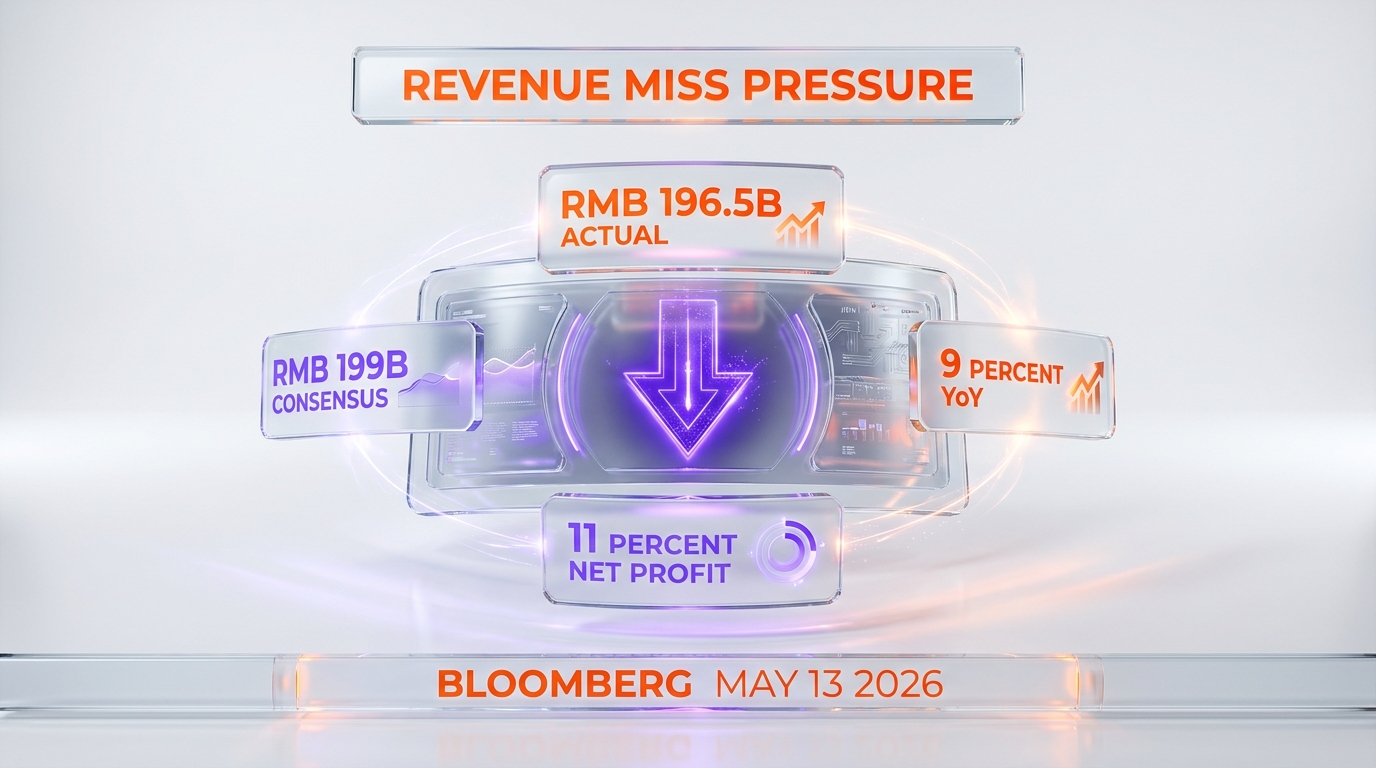

Tencent's Q1 2026 earnings release landed on May 13, 2026 and produced three primary data points that frame the AI-payoff debate. First, consolidated revenue came in at approximately RMB 196.5 billion against an analyst consensus of approximately RMB 199 billion, a miss of roughly RMB 2.5 billion or about 1.25 percent. Second, revenue growth posted at approximately 9 percent year over year and net profit growth at approximately 11 percent year over year, which means margins expanded modestly even on the revenue shortfall. Third, management guided 2026 AI-product capital expenditure to in excess of RMB 36 billion, more than doubling the approximately RMB 18 billion that Tencent deployed on the Hunyuan foundation model and the Yuanbao consumer AI assistant in 2025.
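The arithmetic behind the first two data points can be checked with a short sketch. The inputs are the approximate figures quoted above; the function and constant names are illustrative, and the margin calculation assumes that the revenue and net profit growth rates are measured against the same prior-year quarter.

```python
# Approximate figures quoted in the coverage of the release, in RMB billions.
REPORTED_REVENUE = 196.5
CONSENSUS_REVENUE = 199.0
REVENUE_GROWTH = 0.09      # ~9 percent year over year
NET_PROFIT_GROWTH = 0.11   # ~11 percent year over year


def consensus_miss(reported: float, consensus: float) -> tuple[float, float]:
    """Return the shortfall in RMB billions and as a percent of consensus."""
    shortfall = consensus - reported
    return shortfall, shortfall / consensus * 100


def relative_margin_change(profit_growth: float, revenue_growth: float) -> float:
    """Relative change in net margin implied by the two growth rates.

    Net margin is profit divided by revenue, so the new margin equals the
    old margin times (1 + profit_growth) / (1 + revenue_growth).
    """
    return (1 + profit_growth) / (1 + revenue_growth) - 1


shortfall, shortfall_pct = consensus_miss(REPORTED_REVENUE, CONSENSUS_REVENUE)
margin_change = relative_margin_change(NET_PROFIT_GROWTH, REVENUE_GROWTH)

print(f"Shortfall: RMB {shortfall:.1f} billion, {shortfall_pct:.2f}% of consensus")
print(f"Implied relative net-margin change: {margin_change:+.1%}")
```

With these inputs the net margin rises by a little under 2 percent of its prior level, which is consistent with the modest expansion described above.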

The Bloomberg coverage anchored on the miss-plus-doubled-capex combination because the two data points sit in direct tension with each other. A revenue miss signals that the current monetization engines (games, online advertising, and fintech and business services) are growing more slowly than analysts modeled. Doubling AI capex in the same quarter signals that management is accelerating investment into the part of the business that does not yet contribute meaningful consolidated revenue. Together, the two moves shorten the window in which the AI investment has to convert into revenue, and that compression is what the AI-payoff framing in the Bloomberg dispatch points at directly.

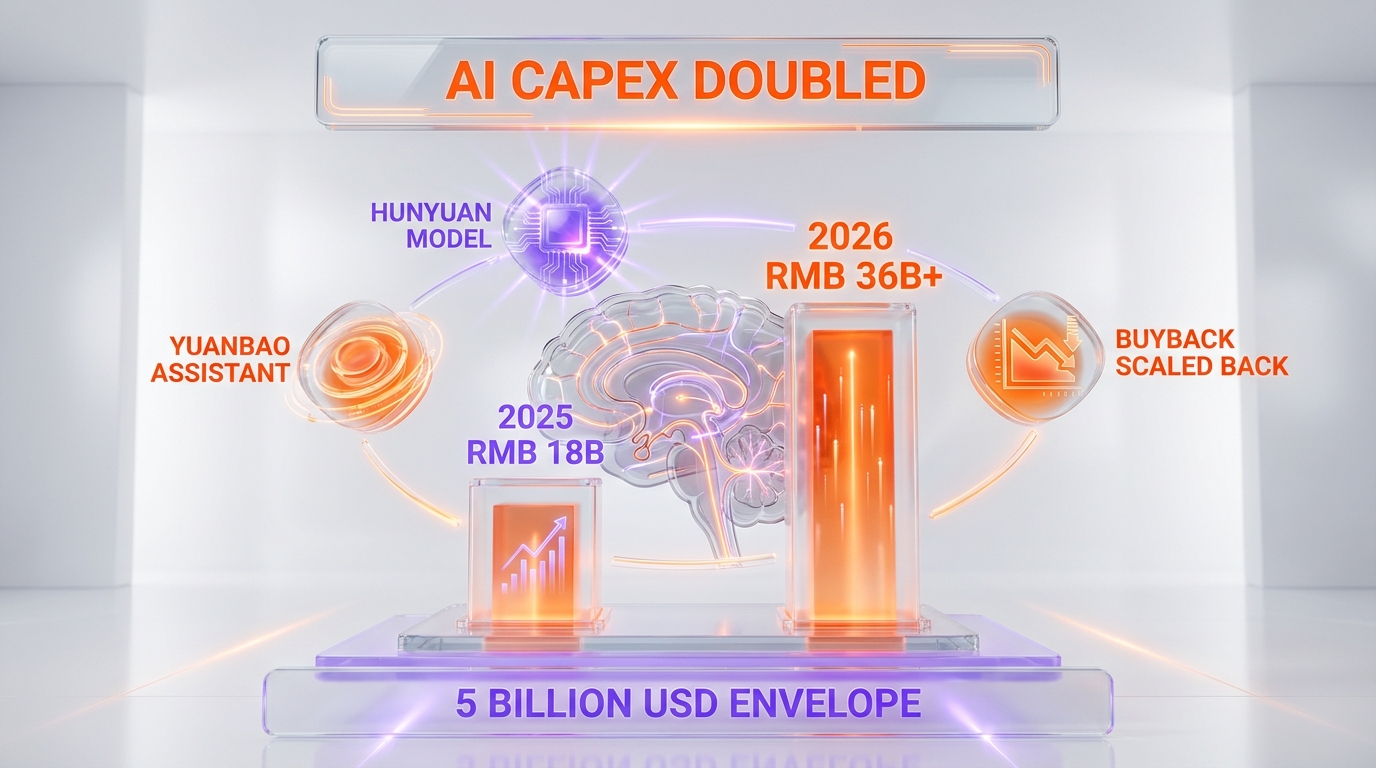

Share buybacks were scaled back relative to prior pacing to fund the doubled AI capex envelope, which is the third structural signal that the May 13 release produced. Buyback pacing is one of the levers that management teams use to communicate confidence in near-term cash-flow durability, and dialing that lever back to fund AI capex is a deliberate choice that prioritizes long-horizon AI optionality over near-term shareholder-return optics. Investors who weight buyback yield as a primary component of holding-period return will read the scale-back as a negative offset to the EPS growth that the modest margin expansion delivered.

The revenue miss anatomy — what came in soft

The approximately RMB 2.5 billion shortfall against consensus did not concentrate in a single segment. The Bloomberg coverage and subsequent earnings-call commentary indicated that growth across games, cloud, and advertising was solid, but that consensus models had embedded slightly higher growth assumptions across the three primary engines than the quarter actually delivered. Games revenue continued to benefit from domestic-China title performance and from international expansion through the Tencent-owned studios, but the growth pace settled slightly below the consensus track. Online advertising revenue extended its multi-quarter expansion, driven by AI-enhanced ad targeting through the Hunyuan models, but the year-over-year acceleration moderated slightly. Fintech and business services revenue, the segment that includes cloud, continued to grow, but at a pace slightly below what consensus models had projected.

The structural read on the miss is that none of the primary engines decelerated materially but that the consensus track had embedded slightly optimistic growth across the basket. In a higher-multiple equity environment, a 1.25 percent miss against consensus on a base of approximately RMB 199 billion would typically produce a measured single-digit price-action response. The compression on the May 13 trading day reflected the combined effect of the miss alongside the doubled capex commitment and the buyback scale-back, rather than the miss in isolation. Analyst price-target revisions in the 48 hours following the release tracked the consensus-model recalibration on near-term margin trajectory rather than a fundamental thesis change on the long-horizon AI optionality.

The forward-looking question that the miss surfaces is whether the Q1 deceleration represents a transitory effect or a multi-quarter pattern. Games revenue is sensitive to title-release timing and to the regulatory environment in domestic China, online advertising revenue is sensitive to macroeconomic conditions in the consumer-spending base, and fintech revenue is sensitive to credit-cycle conditions and to regulatory adjustments. A multi-quarter deceleration pattern would tighten the AI-payoff timeline pressure further, while a single-quarter transitory effect would reset the consensus track without changing the underlying capex-justification thesis. The Q2 2026 release in August will be the next data point on which read is more accurate.

The RMB 36 billion plus AI capex envelope — what it funds

The RMB 36 billion plus 2026 AI capex guidance funds three primary cost categories. First, the compute infrastructure that trains and serves the Hunyuan foundation models. Tencent operates a hybrid compute stack that combines domestic Chinese chip suppliers, including Huawei Ascend and Cambricon, with international compute where the company holds existing relationships. The compute infrastructure cost line includes the procurement of additional accelerator capacity, the data-center build-out required to host the additional capacity, and the power and networking infrastructure that the additional compute footprint requires. Second, the model-training operational cost line, which covers the engineering and research talent compensation, the data-acquisition and labeling cost base, and the experimentation cycles that produce new model variants. Third, the model-serving operational cost line, which covers the inference compute that hosts production usage of Hunyuan and Yuanbao across Tencent's internal product surface and any external API access.

The allocation among the three cost categories is consequential because it determines the leverage profile that the capex envelope produces. Capex-heavy infrastructure investment generates depreciation expense that compresses near-term operating margins but builds long-horizon serving capacity. Opex-heavy training and serving investment compresses near-term margins more directly but produces lower depreciation drag in subsequent quarters. Tencent's commentary on the earnings call indicated that the RMB 36 billion plus envelope leans toward infrastructure and compute procurement relative to pure operational spending, which means the 2026 capex-cycle drag on operating margin will manifest more through depreciation in 2027 and beyond than through current-period operational expense. That accounting trajectory matters for the AI-payoff debate because the revenue payoff has to materialize before the depreciation drag fully phases in to keep the cumulative capex envelope value-accretive.
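The accounting trajectory described above can be illustrated with a minimal straight-line depreciation sketch. Only the RMB 36 billion floor comes from the guidance; the capitalized share, the useful life, and the first expense year are hypothetical placeholders chosen for illustration, not figures Tencent disclosed.

```python
def straight_line_depreciation(
    capitalized: float, useful_life_years: int, first_expense_year: int
) -> dict[int, float]:
    """Spread a capitalized amount evenly over its useful life."""
    annual_charge = capitalized / useful_life_years
    return {
        first_expense_year + offset: annual_charge
        for offset in range(useful_life_years)
    }


CAPEX_2026 = 36.0          # RMB billions, lower bound of the 2026 guidance
CAPITALIZED_SHARE = 0.70   # HYPOTHETICAL: share booked as infrastructure assets
USEFUL_LIFE_YEARS = 5      # HYPOTHETICAL: depreciation period for those assets
FIRST_EXPENSE_YEAR = 2027  # SIMPLIFICATION: assets enter service at end of 2026

capitalized = CAPEX_2026 * CAPITALIZED_SHARE
expensed_in_2026 = CAPEX_2026 - capitalized
schedule = straight_line_depreciation(
    capitalized, USEFUL_LIFE_YEARS, FIRST_EXPENSE_YEAR
)

print(f"Hits 2026 operating expense directly: RMB {expensed_in_2026:.2f} billion")
for year, charge in schedule.items():
    print(f"{year}: RMB {charge:.2f} billion depreciation")
```

Raising the hypothetical capitalized share moves more of the cost out of 2026 and into the later-year schedule, which is the shift the earnings-call commentary implies.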

The RMB 36 billion plus envelope translates to approximately five billion United States dollars at current exchange rates, which sits below the per-year AI capex envelopes that the largest United States hyperscalers operate but above the per-year envelopes that most of the second-tier Chinese AI competitors operate. Alibaba's RMB 380 billion three-year cloud and AI infrastructure commitment, announced earlier in the cycle, averages to roughly RMB 127 billion per year — a materially larger annual envelope than Tencent's 2026 guidance. The per-year comparison signals that Tencent is operating at a smaller capex-scale than Alibaba in the China AI infrastructure race, which constrains the company's headroom on training-run scale and on inference-capacity expansion relative to the largest domestic competitor.
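The currency conversion and the per-year comparison reduce to a few lines of arithmetic. The exchange rate below is an assumed round figure, since the text only refers to current exchange rates, and the even three-way split of Alibaba's commitment is likewise an assumption; actual spending may be front- or back-loaded.

```python
RMB_PER_USD = 7.2  # ASSUMED exchange rate, not taken from the release

TENCENT_2026_GUIDANCE_RMB_BN = 36.0   # floor of the 2026 guidance
ALIBABA_COMMITMENT_RMB_BN = 380.0     # three-year cloud and AI commitment
ALIBABA_COMMITMENT_YEARS = 3

# Convert Tencent's guidance floor into US dollars.
tencent_usd_bn = TENCENT_2026_GUIDANCE_RMB_BN / RMB_PER_USD

# Annualize Alibaba's commitment assuming an even split across the years.
alibaba_per_year_rmb_bn = ALIBABA_COMMITMENT_RMB_BN / ALIBABA_COMMITMENT_YEARS

# How many times larger Alibaba's annualized envelope is than Tencent's floor.
scale_ratio = alibaba_per_year_rmb_bn / TENCENT_2026_GUIDANCE_RMB_BN

print(f"Tencent 2026 guidance floor: about USD {tencent_usd_bn:.1f} billion")
print(f"Alibaba annualized envelope: about RMB {alibaba_per_year_rmb_bn:.0f} billion")
print(f"Alibaba / Tencent per-year ratio: about {scale_ratio:.1f}x")
```

Because Tencent's figure is a floor, the ratio is an upper bound on the gap under these assumptions.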

Hunyuan and Yuanbao — what the capex actually funds

The Hunyuan model family and the Yuanbao consumer AI assistant are the two product surfaces that absorb the RMB 36 billion plus 2026 capex envelope. Hunyuan is Tencent's foundation-model franchise, and the Hy3 preview model is its most recent public release. Hy3 preview has been top-ranked on OpenRouter token-throughput measurements since approximately April 28, 2026, which signals strong external developer adoption and competitive parity with the leading frontier model families in its parameter-size class. As of the May 13 earnings release, Hy3 preview had been deployed across approximately 131 internal Tencent products, which means the model already functions as the consolidated AI substrate inside Tencent's internal product surface even before external API monetization scales.

The footprint of roughly 131 internal products is the structural advantage that Tencent holds against the standalone frontier-model competitors. Tencent's internal product surface spans WeChat, QQ, Tencent Cloud, Tencent Games, Tencent Music, Tencent Video, and dozens of additional products that collectively reach approximately 1.4 billion monthly active users. Deploying Hunyuan across that surface produces immediate inference-scale demand that justifies the capex envelope on internal-utilization economics, even before external API monetization or direct enterprise deployment scales. The internal-utilization model is the same structural lever that the United States hyperscalers use to justify their capex envelopes: Google with Gemini across Workspace and Search, Microsoft with Copilot across Microsoft 365 and Azure, and Amazon with Bedrock and the internal Amazon retail and AWS use cases.

Yuanbao is the consumer-AI-assistant product that competes with DeepSeek's V4 chat product, with Alibaba's Qwen 3.6, and with the Baidu ERNIE Bot family in the China consumer AI assistant market. Yuanbao's user-base scale, while not disclosed in absolute monthly active user terms on the May 13 release, has been growing through the integration with WeChat that gives Tencent a distribution advantage no competitor can match inside China. The capex envelope funds the inference compute that hosts Yuanbao production traffic across the WeChat distribution surface, and the Yuanbao product roadmap is the consumer-facing arm of the broader Hunyuan model family.

The structural read on the Hunyuan and Yuanbao combined position is that Tencent holds the strongest China-domestic distribution advantage in the consumer AI assistant market, holds a competitive frontier-model technical position through the Hy3 preview release, and is now committing capex at a scale that funds the inference-serving capacity required to scale both product surfaces against the open-weight pressure from DeepSeek and the Alibaba Qwen family. The execution question is whether the consolidated revenue lift from the AI capex envelope materializes within the timeline window that the doubled commitment compresses.

The China AI capex war landscape — Tencent versus Alibaba versus Baidu versus DeepSeek

The Q1 2026 earnings cycle has clarified the China AI capex hierarchy across the four primary lab footprints. Alibaba sits at the top of the per-year capex envelope through the RMB 380 billion three-year commitment that averages to roughly RMB 127 billion per year across cloud infrastructure and AI investment, with Q1 fiscal year 2026 capex execution at approximately RMB 38.6 billion. Tencent now sits at the second-tier per-year envelope through the RMB 36 billion plus 2026 guidance, with the doubled capex commitment positioning the company as the second-largest China AI infrastructure investor for the year. Baidu continues to run an aggressive capex profile across the ERNIE 5.1 model family and the AI Cloud business, with the AI Cloud segment growing 21 percent year over year but with the aggressive capex compressing profitability across the consolidated business. DeepSeek operates on a materially different cost structure through the open-weight model strategy that minimizes inference-monetization but maximizes ecosystem adoption.

The capex hierarchy maps directly onto the strategic positioning that each lab has chosen for the China AI cycle. Alibaba uses the RMB 380 billion envelope to anchor its position as the China cloud-infrastructure leader and to support the Qwen model family across both consumer and enterprise deployment lanes. Tencent uses the RMB 36 billion plus envelope to anchor the Hunyuan and Yuanbao products across the WeChat-anchored distribution moat. Baidu uses its aggressive capex profile to defend the ERNIE search-and-assistant position against pressure from both Alibaba and Tencent. DeepSeek operates on a smaller absolute capex base but extracts disproportionate ecosystem influence through the open-weight release model that builds developer-community defensibility independent of the model-API monetization lane.

The competitive complication for each of the four China labs is that the open-weight pressure from DeepSeek and from a growing set of open-weight competitors compresses the model-API pricing lane across the China market. DeepSeek's V4 release in April 2026 — with hybrid attention, 1 million-token context, and open-weight availability — sets a price-floor reference point that closed-weight competitors must defend their pricing premium against. The structural read for Tencent specifically is that the RMB 36 billion plus capex envelope must produce either model-quality differentiation that justifies a closed-weight pricing premium, or internal-utilization revenue uplift across the consolidated WeChat-and-broader-Tencent product surface, or some combination of both. The May 13 release did not yet disclose the quantitative breakdown between the two payoff lanes, and that disclosure gap is part of what the AI-payoff pressure framing in the Bloomberg coverage points at.

Open-weight pressure and the Hunyuan Video release

Tencent has also extended the Hunyuan franchise into the multi-modal domain through the recent Hunyuan Video 1.5 open-source release, which competes directly in the text-to-video model lane against OpenAI Sora, against Google Veo 4, and against the rapidly growing set of open-weight video model releases from competing China labs. The open-source release strategy for Hunyuan Video matches the playbook that Tencent used for the HY-World 2.0 text-to-3D model release, where the company chose open-weight distribution to build developer-ecosystem traction rather than to monetize through closed-weight API access.

The open-weight strategy on the multi-modal Hunyuan products is a hedge against the open-weight pressure that DeepSeek and the open-weight competitor cohort apply on the text-modal foundation-model lane. By releasing Hunyuan Video and HY-World as open weights, Tencent captures developer-community defensibility on the multi-modal side even as it maintains closed-weight monetization optionality on the core Hunyuan foundation-model lane. The strategic logic is sound, but it adds capex pressure because open-weight releases do not generate direct API revenue and therefore require either internal-utilization payoff or longer-horizon developer-ecosystem monetization to justify the compute investment that the open-weight models required to train.

The RMB 36 billion plus 2026 capex envelope likely allocates a meaningful share toward continuing the multi-modal release cadence across video, 3D, and image-generation product surfaces, in addition to the core Hunyuan text-foundation training and the Yuanbao serving capacity. The cumulative footprint is consistent with a strategy that positions Tencent as a full-stack AI lab across all major modalities, but the breadth of the modality footprint extends the timeline pressure on payoff conversion because each modality requires independent monetization or internal-utilization proof points.

Why the AI-payoff pressure framing matters

The Bloomberg dispatch's AI-payoff pressure framing captures a structural debate that has been building across the China AI capex cycle since the Q3 2025 capex acceleration. The framing is not about whether AI investment is strategically correct in the long horizon — the consensus across both Chinese and international AI labs is that frontier-model capability buildout is a winner-takes-most opportunity that justifies aggressive capex at the current cycle phase. The framing is about whether the timeline between capex commitment and revenue payoff is short enough to keep the cumulative AI investment value-accretive against the alternative capital-allocation choices that the company could make instead.

Three factors compress Tencent's timeline. First, the share buyback scale-back that funded the capex envelope is visible to investors and produces continuous pressure to demonstrate AI-revenue lift that exceeds the foregone buyback value. Second, the Q1 2026 revenue miss tightens the consensus track on the primary monetization engines, which means the AI investment has less margin for error. Third, the open-weight pressure across the multi-modal product surface caps the revenue extractable per unit of model-quality differentiation, which means the capex-to-revenue conversion ratio is structurally lower than it would be in a closed-weight-only competitive environment.

The three compressions stack into a pressure profile that is consistent with the AI-payoff framing in the Bloomberg coverage. Tencent's management is not facing existential capex pressure — the company's consolidated free cash flow base remains substantial — but the relative-to-alternatives capital-allocation justification has tightened materially. The Q2 2026 release in August will be the next data point on whether the doubled capex commitment is producing visible revenue-lift signal or whether the pressure profile compounds across subsequent quarters.

Comparison to the United States hyperscaler capex cycle

The Tencent capex doubling lands inside the same global cycle that produced the United States hyperscaler capex acceleration across 2023, 2024, and 2025. The structural similarities are meaningful: frontier-model training compute requirements are scaling rapidly, inference-serving capacity demand is scaling alongside enterprise and consumer adoption, competitive pressure forces labs to maintain capability parity across the leading-edge model frontier, and compute capacity that supports future model architectures carries optionality value. The structural differences are also meaningful: the China lab cost structures, the domestic chip-supply constraints, the regulatory environment for cross-border compute procurement, and the revenue-payoff timeline expectations that the China public-markets investor base applies.

The United States hyperscaler comparison is instructive on the AI-payoff timeline expectation specifically. Microsoft's Azure AI revenue contribution, Google's Gemini-driven product-revenue contribution, and Amazon's Bedrock-driven AWS revenue contribution all required multiple quarters of compounded capex commitment before producing visible revenue-lift signal that the public-markets investor base read as payoff confirmation. Meta's Llama-cycle capex, by contrast, has not yet produced a parallel revenue-payoff confirmation because Meta operates the open-weight ecosystem strategy that does not generate direct model-API revenue. The Tencent capex cycle structurally combines elements of both lanes — internal-utilization revenue lift across the WeChat-anchored product surface, plus open-weight ecosystem investment on the multi-modal product lane — which makes the payoff-timeline expectation harder to benchmark against any single United States hyperscaler precedent.

The most relevant comparison is probably the Amazon AWS Bedrock cycle, because Amazon similarly combines internal-utilization payoff across the consolidated Amazon product surface with external API monetization across the AWS cloud-services business. AWS Bedrock revenue contribution became visibly material in approximately Q3 2024, roughly six quarters after the capex acceleration began in earnest. If Tencent's doubled capex commitment follows a similar timeline pattern, the visible revenue-lift signal would surface around mid-2027, which is longer than the Bloomberg coverage's pressure framing implicitly demands but consistent with the actual mechanics of how capex-to-revenue conversion has historically operated in adjacent cycles.
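The timeline projection above is simple quarter arithmetic, sketched here under stated assumptions. The `Quarter` helper is illustrative, the six-quarter lag is the approximate Bedrock figure quoted above, and treating Q1 2026 as the starting quarter for Tencent's doubled commitment is an assumption rather than something the release specifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quarter:
    year: int
    q: int  # calendar quarter, 1 through 4

    def shift(self, quarters: int) -> "Quarter":
        """Return the quarter that lies `quarters` after (or before) this one."""
        index = self.year * 4 + (self.q - 1) + quarters
        return Quarter(index // 4, index % 4 + 1)

    def __str__(self) -> str:
        return f"Q{self.q} {self.year}"


LAG_QUARTERS = 6                      # approximate Bedrock lag quoted above
bedrock_material = Quarter(2024, 3)   # when the contribution became visible
tencent_capex_start = Quarter(2026, 1)  # ASSUMED start of the doubled commitment

# Work backward to the implied start of the Amazon capex acceleration.
implied_bedrock_start = bedrock_material.shift(-LAG_QUARTERS)

# Apply the same lag forward from the assumed Tencent starting quarter.
tencent_signal = tencent_capex_start.shift(LAG_QUARTERS)

print(f"Implied Bedrock capex start: {implied_bedrock_start}")
print(f"Projected Tencent revenue-lift signal: {tencent_signal}")
```

Counting quarter labels this way lands one quarter past the midpoint of 2027, while counting six elapsed quarters from the start of Q1 2026 ends at mid-2027; both readings are broadly consistent with the estimate above, and the result moves one-for-one with the assumed starting quarter.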

What management said on the May 13 earnings call

The Tencent management team used the May 13 earnings call to anchor the capex commitment in three primary themes. First, the Hunyuan model franchise has reached competitive parity at the frontier and is now the consolidated AI substrate for the internal Tencent product surface, which justifies continued investment to maintain the parity and extend the internal-utilization revenue lift. Second, the Yuanbao consumer AI assistant continues to scale its user base through the WeChat distribution moat, and the inference-serving capacity must scale alongside the user-base growth. Third, the share buyback scale-back is a deliberate capital-allocation choice that prioritizes long-horizon AI optionality over near-term shareholder-return optics, with the explicit expectation that the AI investment produces compounded value that exceeds the foregone buyback value across the medium-term horizon.

The framing on the call was strategically confident but acknowledged the timeline-pressure debate. Management committed to producing regular disclosure cadence on the AI-payoff metrics across subsequent earnings cycles, including Hunyuan-driven advertising revenue lift, Yuanbao monthly active user trajectory, and Hunyuan-driven cloud and business services revenue contribution. The disclosure-cadence commitment is the management response to the AI-payoff pressure that the Bloomberg coverage articulated, and it suggests that subsequent earnings releases will provide more granular quantitative breakdown between the various payoff lanes than the May 13 release did.

The China public-markets investor base reaction to the May 13 release was measured rather than reactive, which is consistent with a market that has internalized the AI capex cycle as a multi-year structural commitment rather than a quarter-to-quarter optionality decision. The Hong Kong-listed shares saw modest single-digit percentage pressure on the May 13 trading day and recovered over the subsequent sessions as analyst reports digested the doubled capex commitment alongside the consensus-track recalibration on near-term margins. The measured reaction signals that the long-horizon AI optionality thesis remains intact in the consensus view even as the near-term payoff-timeline pressure tightens.

What to watch over the next 60 to 90 days

Three reporting lanes will give the clearest read on Tencent's AI cycle over the next 60 to 90 days. The Hunyuan model release cadence across multi-modal product surfaces (text, video, 3D, and image) will produce ongoing developer-ecosystem signal on the open-weight strategy execution. The Yuanbao consumer AI assistant disclosure cadence, particularly any monthly-active-user or daily-active-user data points that surface in subsequent management commentary, will produce direct signal on the consumer-side payoff trajectory. The Q2 2026 earnings release, expected in approximately August 2026, will be the next consolidated data point on whether the doubled capex commitment is producing a visible revenue-lift signal across games, online advertising, and fintech and business services.

The competitive landscape data points to watch in the same window include the Alibaba Qwen 4 release that the cycle's release cadence suggests is approaching, any DeepSeek V5 development that follows the V4 April 2026 release, and the Baidu ERNIE 5.2 or 6.0 release cadence. The China regulatory environment around model exports and foreign acquisitions of Chinese AI startups will continue to shape the cross-border competitive dynamics in ways that compound the per-lab capex pressure. The compute-procurement landscape across Huawei Ascend, Cambricon, and any cross-border procurement that survives the export-control regime will determine the per-lab unit economics of the capex envelopes that the four primary China labs are deploying.

Investors and procurement teams reading the Tencent dispatch should anchor on three takeaways. First, the doubled capex commitment is a strategically rational response to the China AI cycle competitive dynamics, and the framing of the move as imprudent or excessive is not consistent with the structural realities of the cycle. Second, the AI-payoff timeline pressure is real and is compressed by the combination of the revenue miss, the share buyback scale-back, and the open-weight pressure on revenue extraction per unit of model differentiation. Third, the cycle's full payoff or pressure resolution will likely require multiple quarters of subsequent disclosure to settle, and the May 13 release is one data point inside a longer multi-quarter resolution arc rather than a definitive resolution event. The China AI capex war is escalating, and Tencent is now committing capital at a scale that confirms the company intends to remain a top-tier participant in the cycle.

Frequently asked questions

What revenue did Tencent report for Q1 2026 and by how much did it miss consensus?

Tencent reported Q1 2026 consolidated revenue of approximately RMB 196.5 billion against an analyst consensus of approximately RMB 199 billion, a shortfall of roughly RMB 2.5 billion or about 1.25 percent. Revenue still grew approximately 9 percent year over year, and net profit grew approximately 11 percent year over year. The miss did not concentrate in a single segment — games, online advertising, and fintech and business services all grew at paces slightly below the consensus track. The Bloomberg coverage of the May 13, 2026 release framed the miss as compressing the AI-payoff timeline rather than as signaling a fundamental thesis change on the primary monetization engines.

How much did Tencent commit to AI capex for 2026?

Tencent management guided 2026 AI capital expenditure to in excess of RMB 36 billion, more than doubling the approximately RMB 18 billion deployed across the Hunyuan foundation model and the Yuanbao consumer AI assistant in 2025. The RMB 36 billion plus envelope translates to approximately five billion United States dollars at current exchange rates. Share buyback pacing was scaled back relative to prior cycles to fund the doubled capex commitment, which is a deliberate capital-allocation choice that prioritizes long-horizon AI optionality over near-term shareholder-return optics.

What does the RMB 36 billion plus AI capex envelope actually fund?

The RMB 36 billion plus envelope funds three primary cost categories. First, the compute infrastructure that trains and serves the Hunyuan foundation models, including procurement of additional accelerator capacity across Huawei Ascend, Cambricon, and other suppliers, plus the data-center build-out and power and networking infrastructure required to host the additional compute footprint. Second, the model-training operational cost line, covering engineering and research talent, data acquisition and labeling, and the experimentation cycles that produce new model variants. Third, the model-serving operational cost line, covering the inference compute that hosts production usage of Hunyuan and Yuanbao across Tencent's internal product surface and any external API access.

How does Tencent's Hy3 model compare to other leading AI models in 2026?

The Hunyuan Hy3 preview model has been top-ranked on OpenRouter token-throughput measurements since approximately April 28, 2026, signaling strong external developer adoption and competitive parity at the frontier within its parameter-size class. The model has been deployed across approximately 131 internal Tencent products as of the May 13 earnings release, which means Hy3 already functions as the consolidated AI substrate inside Tencent's internal product surface. Competitive positioning against the leading frontier model families remains constructive at the technical level, with the competitive pressure concentrated more on the monetization and ecosystem-defensibility dimensions than on raw capability parity.

How does Tencent's RMB 36 billion compare to Alibaba and Baidu AI capex?

Alibaba committed RMB 380 billion across three years for cloud and AI infrastructure, averaging to roughly RMB 127 billion per year, with Q1 fiscal year 2026 capex execution at approximately RMB 38.6 billion. Tencent's 2026 RMB 36 billion plus guidance sits at the second-tier per-year envelope behind Alibaba's annualized pace. Baidu continues to run an aggressive capex profile across the ERNIE 5.1 family and the AI Cloud business, with AI Cloud revenue growing approximately 21 percent year over year but with the capex compressing consolidated profitability. The hierarchy positions Alibaba as the largest per-year China AI capex investor, Tencent at the second-tier per-year envelope, and Baidu running aggressive but smaller-scale capex with more visible margin pressure.

Why is Bloomberg framing this as pressure for AI payoff?

The AI-payoff framing captures the structural tension between three compressions that stack onto Tencent simultaneously. The Q1 2026 revenue miss tightens the consensus track on the primary monetization engines. The share buyback scale-back to fund the doubled capex commitment produces ongoing pressure to demonstrate AI-revenue lift that exceeds the foregone buyback value. The open-weight pressure across the multi-modal Hunyuan product surface, plus open-weight pressure from DeepSeek V4 and the broader open-weight competitor cohort, caps the revenue extractable per unit of model-quality differentiation. The three compressions stack into a pressure profile that is consistent with the Bloomberg framing.

What is Yuanbao and how does it fit into the Tencent AI strategy?

Yuanbao is Tencent's consumer AI assistant product, integrated with the WeChat distribution surface that gives Tencent a distribution advantage no competitor can match inside the China consumer market. Yuanbao competes with DeepSeek's chat product, with Alibaba's Qwen, and with Baidu's ERNIE Bot family. The capex envelope funds the inference compute that hosts Yuanbao production traffic across the WeChat distribution channel. Yuanbao is the consumer-facing arm of the broader Hunyuan model family and serves as one of the primary internal-utilization revenue-lift lanes for the doubled 2026 capex commitment.

How does open-weight pressure from DeepSeek affect Tencent's AI strategy?

DeepSeek's open-weight release model sets a price-floor reference point against which closed-weight competitors must defend their pricing premium. The April 2026 release of DeepSeek V4, with hybrid attention and a 1 million-token context, compounded the price-floor pressure on the China model-API lane. Tencent's response is a hybrid strategy with two parts. On the core Hunyuan foundation-model lane, it keeps weights closed to capture the pricing premium where capability differentiation supports it. On the multi-modal product surfaces, including Hunyuan Video and HY-World, it releases open weights to build developer-ecosystem defensibility in lanes where open-weight pressure caps closed-weight monetization potential.

What is the AI-payoff timeline that investors should expect?

The historical reference points from the United States hyperscaler cycle suggest that capex-to-revenue conversion typically requires several consecutive quarters of capex commitment before a visible revenue-lift signal appears. Amazon's AWS Bedrock revenue contribution became visibly material approximately six quarters after the capex acceleration began. Applying a similar six-quarter lag to Tencent's doubled 2026 commitment suggests a visible revenue-lift signal would surface around mid-2027. The Bloomberg coverage's pressure framing implicitly demands a faster timeline than the historical pattern would support, which means investors should expect the AI-payoff debate to extend across multiple subsequent earnings cycles before resolving in either direction.
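The mid-2027 estimate follows from simple quarter arithmetic. The sketch below assumes the capex acceleration is counted from the start of Q1 2026 and reuses the six-quarter lag cited above. Both inputs are assumptions for illustration, and `add_quarters` is a hypothetical helper, not part of any library.

```python
def add_quarters(year: int, quarter: int, n: int) -> tuple[int, int]:
    """Return the (year, quarter) that begins n quarters after the given quarter begins."""
    if not 1 <= quarter <= 4:
        raise ValueError("quarter must be between 1 and 4")
    index = year * 4 + (quarter - 1) + n
    return index // 4, index % 4 + 1


# Assumption: the doubled capex commitment is counted from the start of Q1 2026.
START_YEAR, START_QUARTER = 2026, 1
# Assumption: the same six-quarter lag cited for the US hyperscaler reference point.
LAG_QUARTERS = 6

signal_year, signal_quarter = add_quarters(START_YEAR, START_QUARTER, LAG_QUARTERS)
print(f"Earliest visible signal under these assumptions: Q{signal_quarter} {signal_year}")
```

Six quarters after the start of Q1 2026 is the start of Q3 2027, that is, July 2027, which matches the mid-2027 estimate. Shifting the assumed start date by a quarter moves the estimate by the same amount.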

What did Tencent management say about the doubled AI capex on the earnings call?

Tencent management anchored the doubled capex commitment in three primary themes. First, the Hunyuan model franchise has reached competitive parity at the frontier and is now the consolidated AI substrate for Tencent's internal product surface, which justifies continued investment to maintain that parity and extend the internal-utilization revenue lift. Second, the Yuanbao consumer AI assistant continues to grow its user base through the WeChat distribution moat, and inference-serving capacity must scale with it. Third, the share buyback scale-back is a deliberate capital-allocation choice that prioritizes long-horizon AI optionality. Management also committed to regular disclosure of AI-payoff metrics across subsequent earnings cycles.

How does Tencent's WeChat distribution surface help justify the AI capex?

Tencent's internal product surface spans WeChat, QQ, Tencent Cloud, Tencent Games, Tencent Music, Tencent Video, and dozens of additional products that collectively reach approximately 1.4 billion monthly active users. Deploying Hunyuan across this consolidated surface produces immediate inference-scale demand that justifies the capex envelope on internal-utilization economics, even before external API monetization or direct enterprise deployment scales. The internal-utilization model is the same structural lever that the United States hyperscalers use to justify their capex envelopes. As of the May 13 earnings release, Hunyuan 3 Preview was already running across approximately 131 internal Tencent products.

Where can investors track Tencent AI capex execution over the next 60 to 90 days?

Three reporting lanes will show the progression of the Tencent AI cycle with the most fidelity. First, the Hunyuan model release cadence across multi-modal product surfaces (text, video, 3D, and image generation) will give an ongoing developer-ecosystem signal on how the open-weight strategy is being executed. Second, Yuanbao disclosures, particularly any monthly-active-user data points that surface in subsequent management commentary, will give a direct signal on the consumer-side payoff trajectory. Third, the Q2 2026 earnings release, expected around August 2026, will be the next consolidated data point on whether the doubled capex commitment is producing a visible revenue lift across games, online advertising, and fintech and business services. Bloomberg, the Financial Times, and Caixin will continue to provide running editorial coverage of the cycle.

Sources and further reading

- Bloomberg — Tencent Revenue Miss Heightens Pressure for AI Payoff (May 13, 2026)

- CNBC — China's Tencent sees boost from gaming, AI demand even as revenue comes in weaker than expected (May 13, 2026)

- PR Newswire — Tencent Announces 2026 First Quarter Results (May 13, 2026)

- Alibaba Group — RMB 380 billion three-year AI and cloud infrastructure commitment