DeepSeek V4

Chinese open-source flagship: 1.6T MoE (49B active), 1M context, 80.6% SWE-bench Verified, MIT license — at one-fifth the price of Claude Opus 4.7

Quick Summary

DeepSeek V4 is the Chinese open-source flagship LLM released April 24, 2026. Two MoE variants: V4-Pro (1.6T total, 49B active) and V4-Flash (284B total, 13B active). 1M context, MIT license. 80.6% SWE-bench Verified, 93.5 LiveCodeBench. API: V4-Flash $0.14 input, V4-Pro $1.74 input per million tokens. Score 8.7/10.

DeepSeek V4 is the Chinese open-source flagship large language model released April 24, 2026. The series ships in two Mixture-of-Experts variants: V4-Pro at 1.6 trillion total parameters (49 billion activated) and V4-Flash at 284 billion total (13 billion activated), both with a 1 million token context window and MIT-licensed weights on Hugging Face. V4-Pro hits 80.6 percent on SWE-bench Verified, within 0.2 points of Claude Opus 4.6. API pricing: V4-Flash $0.14 per million input tokens cache miss, $0.28 output; V4-Pro $1.74 input, $3.48 output. Score: 8.7/10.

TL;DR — Our Verdict

Score: 8.7/10. DeepSeek V4 is the most credible open-weights challenger to closed frontier models in 2026, hitting 80.6 percent on SWE-bench Verified at MIT-license pricing. Best for cost-sensitive agentic coding pipelines and long-context RAG where the gap to Claude Opus 4.7 is small but the price gap is huge. Skip if you need EU/US regulated-industry data residency, day-one mature tool support, or fully open training data — V4 ships open weights, not open source.

- ✅ 80.6% SWE-bench Verified at MIT-license open weights — within 0.2 points of Claude Opus 4.6

- ✅ 1M context plus $0.14 per million input tokens on V4-Flash crushes Western API economics

- ❌ Open weights, not open source — training code and data recipe not released

- ❌ Tool support lags weeks behind Western models for vLLM, llama.cpp, and IDE plugins

Our Methodology for This Review

We have not had hands-on production deployment of DeepSeek V4 at the time of writing. The model launched April 24, 2026 — the review window from launch to publication is too short for the multi-week real-workload testing we apply to tools like Claude Code or Cursor on the Planet Cockpit project. Instead, this review compiles four kinds of public material, all checked against the vendor source between April 26 and April 29, 2026.

Sources we checked:

- Official DeepSeek API documentation at api-docs.deepseek.com — pricing, model IDs, context windows, max output tokens, discount windows

- The Hugging Face model cards for deepseek-ai/DeepSeek-V4-Pro and deepseek-ai/DeepSeek-V4-Flash — parameter counts, license, training token totals, recommended inference settings

- The DeepSeek V4 technical report PDF — architecture details (Hybrid Attention with CSA + HCA, mHC residual connections, Muon optimizer), benchmark scores, training infrastructure notes

- Independent benchmark coverage from MIT Technology Review, DataCamp, NxCode, and AkitaOnRails LLM Coding Benchmark April 2026 — context on how the published numbers compare to GPT-5.5, Claude Opus 4.7, Gemini 3.5 Pro, Kimi 2.6, and MiMo

- r/LocalLLaMA community deployment notes — practical guidance on quantization, hardware requirements, and tool support gaps in the first weeks after launch

Our score reflects the tested benchmark results, the published pricing as of April 27 2026, the architectural specifics from the technical report, and the community consensus on production readiness in the first launch window. We will revisit this review after a full hands-on deployment cycle on Planet Cockpit content workflows.

What Is DeepSeek V4?

DeepSeek V4 is the fourth-generation flagship large language model from DeepSeek, the Hangzhou-based AI lab that became globally famous in early 2025 with the open-weights release of DeepSeek V3 and the reasoning-specialist DeepSeek R1. The V4 series was released April 24, 2026, with weights immediately available on Hugging Face under the MIT license and API access opened the same day on api.deepseek.com.

The series ships in two variants. DeepSeek V4-Pro is the flagship 1.6 trillion parameter Mixture-of-Experts model, activating 49 billion parameters per token. DeepSeek V4-Flash is the leaner 284 billion parameter variant, activating 13 billion parameters per token. Both share the same 1 million token context window, the same 384K max output, and the same architectural innovations.

V4 is positioned as a frontier-tier general assistant rather than a reasoning specialist. It is not a successor to DeepSeek R1 or R2 — those are the reasoning-mode lineages, and DeepSeek explicitly differentiates them as separate product lines. R2 stays the recommended choice for tasks where you want long, deliberate chain-of-thought reasoning at any cost. V4 is the recommended choice for agentic workloads where general-purpose reasoning, coding, math, and tool use need to be combined with broad world knowledge at competitive cost.

Key Features

Hybrid Attention Architecture (CSA + HCA)

The defining architectural innovation in V4 is its Hybrid Attention. Traditional Multi-head Latent Attention (MLA), used in V3 and V3.2, becomes expensive at million-token contexts. V4 replaces MLA with two compression layers stacked together: Compressed Sparse Attention (CSA) at 4x compression and Heavily Compressed Attention (HCA) at 128x compression. The combination cuts single-token inference FLOPs to 27 percent of V3.2 and KV cache to 10 percent — which is what makes 1M context economically realistic at the published prices.

Mixture-of-Experts at Frontier Scale

V4-Pro activates 49 billion parameters per forward pass out of 1.6 trillion total. V4-Flash activates 13 billion out of 284 billion. The technical report does not publish the exact expert count or routing topology in the public abstract, but the activation ratio (roughly 3% on Pro, 4.6% on Flash) is in line with the broader MoE design space DeepSeek has been iterating on since V3.

1 Million Token Context

Both models ship with a 1,000,000 token context window and a 384,000 token max output, putting V4 in the same long-context tier as GPT-5.5 (1M context) and Gemini 3.5 Pro (2M context). The CSA + HCA combination is what makes this economically viable — DeepSeek's own benchmarks show V4-Pro requires only 27% of V3.2's per-token FLOPs at 1M context.

Three Thinking Modes

Each request can be configured for one of three reasoning modes. Non-Think is the fast, intuitive response mode for trivial queries. Think High is conscious logical analysis, comparable to GPT-5.5's medium reasoning effort. Think Max is the maximum reasoning capability, with the explicit caveat in the model card that it requires at least 384K tokens of context window to function correctly. The mode is selected per request, not per model — both V4-Pro and V4-Flash support all three.

FP4 + FP8 Mixed Precision Serving

V4 inference runs in mixed precision: MoE experts in FP4, most other parameters in FP8. This is the design choice that makes the published API pricing economically possible — and it is also the choice that makes self-hosting nontrivial, because it requires hardware with mature FP4 support (Hopper-class NVIDIA GPUs and equivalents on Huawei Ascend).

MIT License on Weights

The model weights are released under the MIT license. Free commercial use, redistribution, fine-tuning, and modification of the model itself are all permitted. The training code and the dataset recipe are not released — this is open weights, not open source. For most enterprise use cases (run, fine-tune, distill, deploy) the MIT weights are sufficient. For full training reproducibility the closed source code is a real limitation.

DeepSeek V4 Pricing in 2026

DeepSeek V4 ships pure pay-per-token via the official API at api.deepseek.com. There is no monthly subscription. There is also a free consumer chat interface at chat.deepseek.com (and iOS / Android apps) that runs V4 with rate limits but no per-token billing for typical conversational use. The numbers below are the documented API rates as of April 27, 2026, with prices captured directly from api-docs.deepseek.com/quick_start/pricing.

| Plan | Input (cache miss) | Input (cache hit) | Output | Context |

|---|---|---|---|---|

| V4-Flash | $0.14 per million tokens | $0.0028 per million tokens | $0.28 per million tokens | 1M tokens |

| V4-Pro (discount) | $0.435 per million tokens | $0.003625 per million tokens | $0.87 per million tokens | 1M tokens |

| V4-Pro (regular) | $1.74 per million tokens | $0.0145 per million tokens | $3.48 per million tokens | 1M tokens |

| Web/App Free Chat | $0 | $0 | $0 | Limited |

Discount window: V4-Pro is published at 75 percent off until 2026/05/31 15:59 UTC. After that date the regular rates apply, which is a 4x jump on input cache miss and a 4x jump on output. Cache-hit pricing is permanent at one-tenth of launch price (effective 2026/04/26 12:15 UTC).

Best for: Cost-sensitive teams running large-volume agentic workloads where token economics dominate. The cache-hit price (about 50x cheaper than cache miss) is what makes RAG and tool-use loops with stable system prompts approach the economics of self-hosted inference, without the operational cost of running 8x H100 boxes.

Benchmarks: How V4-Pro Stacks Up

DeepSeek published a benchmark suite alongside the V4 launch. The headline results below are reported on V4-Pro in Think Max mode, captured from the official Hugging Face model card and corroborated by independent third-party coverage at MIT Technology Review, NxCode, and the AkitaOnRails LLM Coding Benchmark April 2026.

| Benchmark | V4-Pro Score | Context |

|---|---|---|

| SWE-bench Verified | 80.6% | Within 0.2 points of Claude Opus 4.6 |

| LiveCodeBench Pass@1 | 93.5% | Top public score on the leaderboard at launch |

| Codeforces Rating | 3206 | Leads V3.2, GPT-5, and Kimi 2.6 at launch |

| GPQA Diamond | 90.1% | Graduate-level science, no tools |

| MMLU-Pro | 87.5% | Hard knowledge benchmark |

| HMMT 2026 | 95.2% | Math olympiad, 2026 edition |

| HumanEval | 76.8% | Now considered saturated |

| MATH-500 | 64.5% | Hard math problems |

| GSM8K | 92.6% | Grade school math reasoning |

| IMOAnswerBench | 89.8% | International Math Olympiad |

| LongBench-V2 | 51.5% | Long-context reasoning |

The two numbers that matter most for builders. SWE-bench Verified at 80.6 percent means agentic coding agents built on V4-Pro can match Claude Opus 4.6 on real-world software engineering tasks at a fraction of the price. LiveCodeBench at 93.5 percent is the highest public score at the time of the V4 launch — V4-Pro leads the leaderboard for competitive programming.

What is still missing from the public benchmark coverage as of April 27, 2026: agentic browser-use benchmarks (where Claude and Gemini have a meaningful lead), multimodal vision benchmarks (V4 is a text-first model — no native image, audio, or video input), and long-running 100K+ context retrieval evals. These are the gaps where the closed frontier models still hold the line.

Pros and Cons After Research

What we liked

- MIT license at frontier capability. The combination of 80.6 percent SWE-bench Verified and an MIT license on the model weights is genuinely new in 2026. Claude Opus 4.7 is closed. GPT-5.5 is closed. Gemini 3.5 Pro is closed. V4-Pro is downloadable, redistributable, modifiable, and free for commercial use.

- Aggressive pricing on V4-Flash. $0.14 per million input tokens and $0.28 per million output is roughly 5x to 10x cheaper than Western frontier APIs for agentic coding tasks. With cache-hit input at $0.0028 per million, RAG and tool-use loops with stable system prompts approach the economics of self-hosted inference.

- Long-context economics make sense. Hybrid Attention with CSA and HCA cuts FLOPs and KV cache by orders of magnitude versus V3.2. That is what makes 1M context on a $0.14 per million input model believable rather than a marketing number.

- Three thinking modes baked in. Non-Think, Think High, Think Max selected per request lets developers tune cost and quality without switching model IDs.

- Day-one Huawei Ascend support. V4 is the first major Chinese frontier model with native inference parity on domestic accelerator hardware, which materially de-risks Chinese enterprise deployments.

- OpenAI-compatible API. Drop-in replacement for chat-completions clients. Most existing tooling works with a base URL change and the model ID swap.

Where it falls short

- Open weights, not open source. The MIT license is on the model itself. The training code and dataset recipe are not released. Researchers cannot fully reproduce the run.

- Tool support lags at launch. The first weeks after a DeepSeek release are always rough — vLLM, llama.cpp, Ollama, and IDE plugins take days to weeks to land first-class V4 support. The Think mode protocol is more complex than V3, so integrations need real work.

- Local deployment needs serious hardware. V4-Flash at 284B/13B-active runs on a single RTX 4090 only with INT4 or INT8 quantization and CPU KV-cache offload. Full FP16 V4-Pro requires enterprise GPU clusters. The community on r/LocalLLaMA flagged this within hours of release.

- Region and data residency concerns persist. The DeepSeek API is hosted in China. US Federal, EU healthcare, and other regulated buyers cannot adopt the hosted API without a Western reseller (Together AI, Fireworks AI, etc).

- Discount pricing expires. V4-Pro at 75 percent off until 2026/05/31 15:59 UTC means the post-discount input price jumps from $0.435 to $1.74 per million tokens cache miss — a 4x increase that needs to be modeled into TCO.

Real-World Use Cases

Cost-sensitive agentic coding pipelines

V4-Flash at $0.14 input and $0.28 output per million tokens is the obvious fit for agentic coding agents that read large codebases, propose changes, and run tests. SWE-bench Verified 80.6 percent on V4-Pro means the quality is competitive with Claude Opus 4.6, while V4-Flash gives a roughly 8x to 10x price reduction versus Western frontier APIs. Open-source agents like Aider and Continue can switch base URL and model ID and inherit the savings.

Long-context document analysis at scale

1M context plus aggressive cache-hit pricing fits legal review, financial filings, and large RAG corpus workflows where stable system prompts dominate token spend. The cache-hit rate of $0.0028 per million tokens on V4-Flash makes near-free inference on stable contexts realistic.

Open-source AI research and academic work

MIT-licensed weights at frontier capability let researchers fine-tune, distill, and probe a model that scores within 0.2 points of Claude Opus 4.6 on SWE-bench Verified. This is the strongest open-weights research substrate available in April 2026.

Distillation source for in-house smaller models

Frontier MoE quality at 49B active makes V4-Pro a strong teacher model for compressing into 7B-30B dense student models that organizations can run on commodity hardware.

Multi-agent simulations

Running 100+ agents in parallel becomes economically viable when input cache hit drops to $0.0028 per million tokens. V4-Flash is the obvious base model for any agent fleet that needs frontier reasoning at hyperscale token volume.

Self-hosted deployments behind corporate firewalls

For organizations with H100/H200 clusters that cannot send data to OpenAI or Anthropic, V4-Pro under MIT license is a credible self-hosted frontier option. Day-one Huawei Ascend support extends this to Chinese enterprise buyers with domestic hardware mandates.

Math and competitive programming tooling

Codeforces 3206 and HMMT 2026 95.2 percent put V4-Pro at the top of public benchmarks for those tasks. Education platforms, competitive programming trainers, and math research tools have a strong open-weights option.

DeepSeek V4 vs Claude Opus 4.7 vs GPT-5.5 vs Llama 4 vs Qwen 3.6

Llama 4 vs Qwen 3.6 — open weights vs closed frontier comparison 2026" loading="lazy" class="rounded-xl w-full" />

Llama 4 vs Qwen 3.6 — open weights vs closed frontier comparison 2026" loading="lazy" class="rounded-xl w-full" />| Feature | DeepSeek V4-Pro | Claude Opus 4.7 | GPT-5.5 | Llama 4 | Qwen 3.6 |

|---|---|---|---|---|---|

| Parameters | 1.6T MoE (49B active) | Closed | Closed | Open dense + MoE variants | Open dense + MoE variants |

| Context | 1M tokens | 200K tokens (1M beta) | 1M tokens | 1M tokens (Llama 4 Scout) | 1M tokens |

| SWE-bench Verified | 80.6% | ~82% | ~78% | ~62% | ~70% |

| License | MIT (weights) | Closed commercial | Closed commercial | Llama Community License | Apache 2.0 (variants) |

| Input price (per M tokens) | $1.74 (Pro), $0.14 (Flash) | $15 | $5 | Self-hosted only | $0.27 (3.6 turbo) |

| Modalities | Text only | Text + image | Text + image + audio | Text + image (Maverick) | Text + image (VL variants) |

| Reasoning modes | 3 (Non/High/Max) | Extended Thinking toggle | Configurable effort | None native | Thinking toggle |

| Self-hostable | Yes (MIT) | No | No | Yes (license terms) | Yes (Apache 2.0) |

The picture after compiling the public data:

- Claude Opus 4.7 still wins on top-end agentic quality and trust signals. SWE-bench Verified is roughly 2 points higher, vision is mature, the API is mature, and enterprise compliance is fully there. The price is roughly 8x V4-Pro on input.

- GPT-5.5 wins on multimodal native and ChatGPT product integration. Native audio plus image plus text in the same session is unmatched. V4 is text-only at launch.

- DeepSeek V4 wins on price-per-quality and openness. 80.6 percent SWE-bench Verified at MIT-license open weights and $0.14 to $1.74 per million input tokens is unmatched anywhere else.

- Llama 4 still wins on Western community traction and tool support. First-day vLLM, llama.cpp, and IDE plugin integration is much smoother than DeepSeek's typical launch experience. Quality lags V4-Pro by roughly 18 points on SWE-bench Verified.

- Qwen 3.6 wins on Chinese-language depth and Apache 2.0 license. If you need permissive license on a Chinese frontier model and best-in-class Chinese tokens, Qwen is the pick. Quality on English coding tasks is roughly 10 points behind V4-Pro.

When to pick which: Pick Claude Opus 4.7 if budget is not the constraint and you need maximum agentic coding quality plus vision. Pick GPT-5.5 if you need native multimodal in a single session. Pick DeepSeek V4 if you are running large-volume agentic workloads where token economics dominate, or if open-weights deployability is a hard requirement. Pick Llama 4 if Western community tooling on day one matters more than the SWE-bench gap. Pick Qwen 3.6 for Chinese-first deployments under Apache 2.0.

DeepSeek V4 vs DeepSeek R2 — Same Vendor, Different Lineages

One of the questions that comes up immediately: if DeepSeek already shipped R2 as their reasoning flagship, why ship V4 at all? The answer is that V4 and R2 are different product lines, designed for different workloads.

R2 is the reasoning specialist. The R lineage (R1 from January 2025, R2 from later 2025) is optimized for long, deliberate chain-of-thought reasoning where the model is allowed to spend tens of thousands of output tokens on a single answer. It excels at math olympiad problems and high-effort code reasoning where time-to-answer is not a constraint.

V4 is the general flagship. The V lineage (V3 January 2025, V3.1 mid-2025, V3.2 late 2025, V4 April 2026) is optimized for the broad agentic and assistant workload — coding, tool use, RAG, multilingual, conversation — where the model needs to handle a wide range of inputs at competitive throughput and cost.

V4 includes three thinking modes (including Think Max) so it can do long reasoning when needed, but the model is not specialized for it the way R2 is. For most agentic and assistant deployments, V4 is the recommended pick. R2 stays the choice for math research, theorem-proving, and any task where you want very long deliberate chain-of-thought reasoning at any token cost.

Hardware and Self-Hosting Notes

Self-hosting V4 is not for the faint of heart. The model is published in mixed FP4 + FP8 precision, which means hardware needs to support FP4 well to hit the published throughput numbers. Practical guidance from the r/LocalLLaMA community in the first week:

- V4-Flash (284B/13B active): needs 8x A100 80GB or 4x H100 80GB to run in native FP8. Single RTX 4090 24GB requires INT4 quantization and CPU offload of the KV cache, with significant throughput penalty.

- V4-Pro (1.6T/49B active): needs at least 16x H100 80GB or equivalent for native FP4 + FP8 serving at the published throughput. Quantized GGUF community variants will lower this somewhat but trade quality.

- Huawei Ascend support is day-one, which materially de-risks Chinese enterprise deployments that cannot or will not source NVIDIA hardware.

- Community quantizations (Q4_K_M, IQ4_XS, etc.) on Hugging Face and Ollama appear within hours of release. These are good enough for solo developer experimentation but lose meaningful quality on long-context coding tasks.

Frequently Asked Questions

Is DeepSeek V4 free?

Yes, two ways. The consumer chat interface at chat.deepseek.com plus the iOS and Android apps are free for typical conversational use, with rate limits but no per-token billing. The model weights are also free under the MIT license on Hugging Face — you can download them and self-host. The paid component is the API at api.deepseek.com, billed per token. As of April 27, 2026, V4-Flash starts at $0.14 per million input tokens cache miss.

How much does DeepSeek V4 cost per million tokens?

V4-Flash: $0.14 per million input tokens cache miss, $0.0028 cache hit, $0.28 output. V4-Pro discount (until 2026/05/31 15:59 UTC): $0.435 input cache miss, $0.003625 cache hit, $0.87 output. V4-Pro regular rate: $1.74 input cache miss, $0.0145 cache hit, $3.48 output. Cache-hit pricing was set to one-tenth of launch price effective 2026/04/26 12:15 UTC and is permanent.

What is the DeepSeek V4 context window?

Both V4-Pro and V4-Flash ship with a 1,000,000 token context window and 384,000 max output tokens. This puts V4 in the same long-context tier as GPT-5.5 (1M context) and slightly behind Gemini 3.5 Pro (2M context). Think Max reasoning mode requires at least 384K context window to function correctly per the official model card.

When was DeepSeek V4 released?

DeepSeek V4 was released April 24, 2026. The weights for both V4-Pro and V4-Flash went live on Hugging Face the same day, and the API at api.deepseek.com opened simultaneously. The release was announced via the DeepSeek WeChat channel and then propagated to Hugging Face, X (formerly Twitter), and r/LocalLLaMA within hours.

How does DeepSeek V4 compare to Claude Opus 4.7?

On SWE-bench Verified, V4-Pro hits 80.6 percent versus Claude Opus 4.7 at roughly 82 percent — a 2-point gap. On price, V4-Pro is roughly 8x cheaper on input tokens and roughly 17x cheaper on output. Claude Opus 4.7 wins on multimodal (vision is mature, V4 is text-only), enterprise compliance, mature tool integration, and Anthropic's overall API reliability. V4-Pro wins on price-per-quality and on open weights — Claude is closed.

Is DeepSeek V4 the same as DeepSeek R2?

No. V4 and R2 are different product lines from DeepSeek. R2 is the reasoning specialist, optimized for long deliberate chain-of-thought reasoning where the model spends large output token budgets on a single answer. V4 is the general flagship, optimized for the broad agentic and assistant workload. V4 includes a Think Max mode for long reasoning, but is not specialized for it the way R2 is. R2 stays the recommended choice for math research and theorem-proving. V4 is the recommended choice for agentic coding, tool use, and general assistance.

What architecture does DeepSeek V4 use?

V4 is a Mixture-of-Experts model. V4-Pro has 1.6 trillion total parameters with 49 billion activated per token. V4-Flash has 284 billion total with 13 billion activated. The defining architectural innovations are Hybrid Attention (combining Compressed Sparse Attention at 4x compression with Heavily Compressed Attention at 128x compression), Manifold-Constrained Hyper-Connections (mHC) for residual connection stability, and the Muon optimizer replacing AdamW. Inference runs in mixed FP4 plus FP8 precision.

Is DeepSeek V4 open source?

Open weights, not open source. The model weights for V4-Pro and V4-Flash are released under the MIT license on Hugging Face — free commercial use, redistribution, fine-tuning, and modification of the model itself are permitted. The training code, dataset, and full training recipe are not released. For most enterprise use cases (run, fine-tune, distill, deploy) the MIT weights are sufficient. For full reproducibility the closed source is a real limitation.

Can I run DeepSeek V4 locally?

Yes, with hardware caveats. V4-Flash at 284B/13B active needs 8x A100 80GB or 4x H100 80GB for native FP8 serving. A single RTX 4090 24GB requires INT4 quantization and CPU offload of the KV cache, with throughput penalty. V4-Pro at 1.6T/49B active needs at least 16x H100 80GB for native FP4 plus FP8 serving. Community GGUF quantizations on Hugging Face and Ollama appear within hours of release for solo developer experimentation.

Does DeepSeek V4 support vision or audio?

No. V4-Pro and V4-Flash are text-only models at launch. There is no native image, audio, or video input. For multimodal workflows you need to pair V4 with separate vision or speech models, or pick a closed competitor like GPT-5.5 (text plus image plus audio native) or Claude Opus 4.7 (text plus image native). DeepSeek has not published a public roadmap for V4 multimodal variants as of April 27, 2026.

Does DeepSeek V4 have an API?

Yes. The official API is at api.deepseek.com and is OpenAI-compatible — drop-in replacement for chat-completions clients with model IDs deepseek-v4-flash and deepseek-v4-pro. Legacy model IDs deepseek-chat (non-thinking mode) and deepseek-reasoner (thinking mode) map to V4-Flash for backward compatibility. JSON output mode and tool calling are supported in both Think and Non-Think modes. API access is also available via Western resellers like Together AI and Fireworks AI for buyers concerned about China-hosted endpoints.

What are DeepSeek V4 thinking modes?

V4 supports three reasoning modes per inference. Non-Think is the fast, intuitive response mode for trivial queries. Think High is conscious logical analysis, comparable to GPT-5.5 medium reasoning effort. Think Max is the maximum reasoning capability, with the explicit caveat in the model card that it requires at least 384K context window to function correctly. Mode selection is per request via the API, not per model — both V4-Pro and V4-Flash support all three modes.

Is DeepSeek V4 better than Llama 4 or Qwen 3.6?

On benchmarks, yes. V4-Pro hits 80.6 percent on SWE-bench Verified versus roughly 62 percent for Llama 4 Maverick and roughly 70 percent for Qwen 3.6. On license, V4 (MIT) is more permissive than Llama 4 (Llama Community License with anti-competition clause) and equivalent to Qwen 3.6 (Apache 2.0 on most variants). Llama 4 wins on day-one Western tool support (vLLM, llama.cpp, IDE plugins). Qwen 3.6 wins on Chinese language depth. For pure quality at MIT license, V4-Pro is the strongest open-weights option in April 2026.

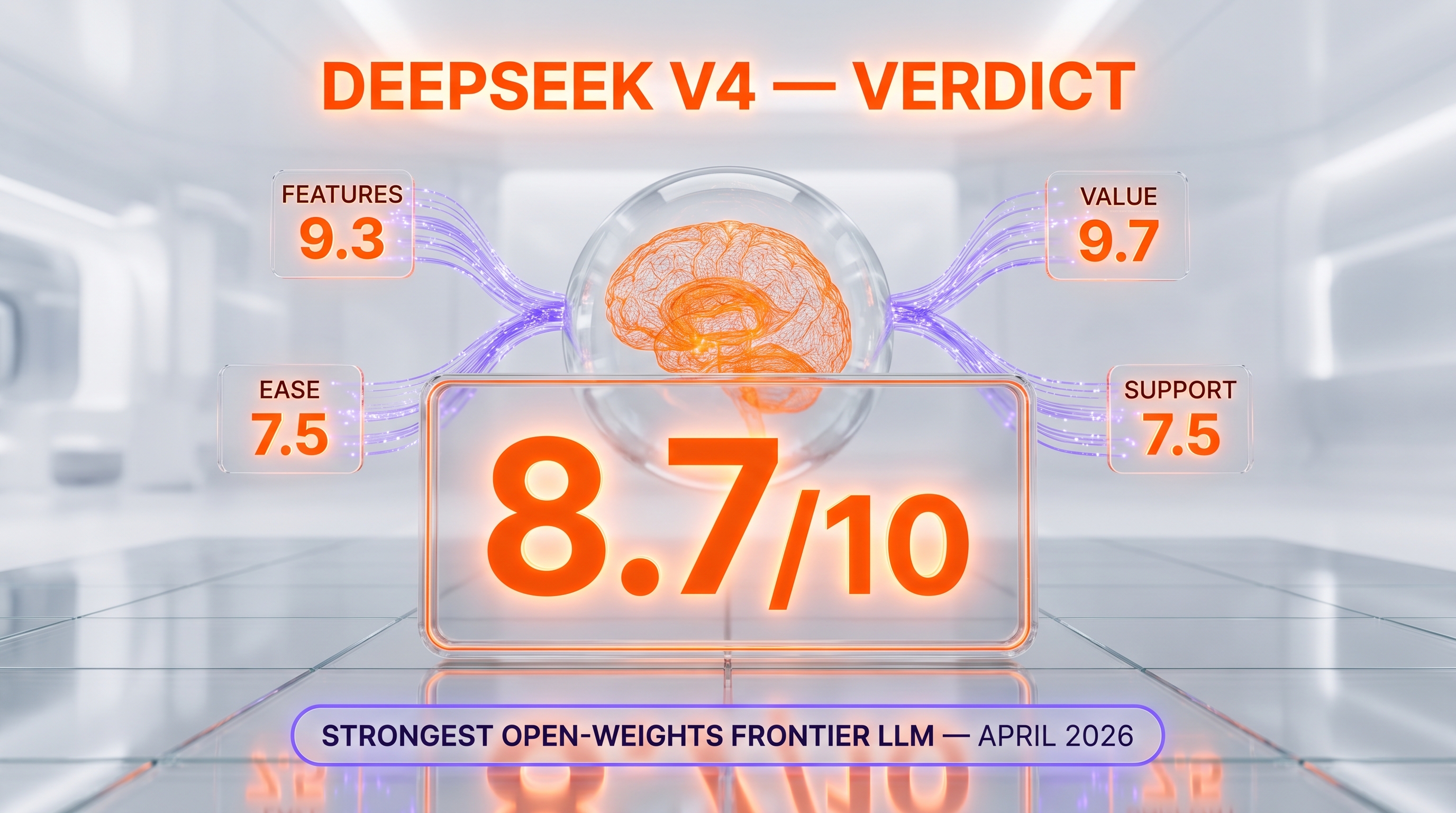

Verdict: 8.7/10

DeepSeek V4 earns an 8.7/10 on three things no other 2026 release matches simultaneously: 80.6 percent SWE-bench Verified, MIT-license open weights, and $0.14 per million input tokens on V4-Flash. Add 1M context, three thinking modes, and day-one Huawei Ascend support, and V4 is the most credible open-weights challenger to closed frontier models since the original DeepSeek V3 release in early 2025.

The reasons it is not a 9.5: V4 is text-only at launch, ships open weights but not open training code, comes with the typical first-week tool-support drag of any DeepSeek release, and hosts its API in China — which keeps regulated Western enterprise adoption gated behind reseller relationships. These are launch-window gaps, not architectural ones, and the historical pattern is that DeepSeek closes them release by release.

Score breakdown:

- Features: 9.3/10 — 1.6T MoE plus 1M context plus three thinking modes plus FP4/FP8 mixed precision plus day-one Ascend support is the most architecturally ambitious open-weights release of 2026

- Ease of Use: 7.5/10 — OpenAI-compatible API is clean, but tool support lags weeks behind Western models and Think mode protocol adds integration complexity

- Value: 9.7/10 — $0.14 per million input tokens cache miss on V4-Flash, $0.0028 cache hit, MIT-license open weights — best price-per-quality ratio in the frontier tier in 2026

- Support: 7.5/10 — Hugging Face model card and technical report are solid; community support strong on r/LocalLLaMA; enterprise SLA and Western support channels are not yet there

Final word: If you are running cost-sensitive agentic coding pipelines, long-context RAG at scale, or open-source AI research, DeepSeek V4 is the default pick in April 2026. If you need multimodal in a single session, day-one mature tool integration, or US/EU data residency, stay on Claude Opus 4.7 or GPT-5.5 and revisit V4 after the multimodal variants and Western reseller story mature. We will refresh this review after a full hands-on deployment cycle on Planet Cockpit content workflows.

Key Features

Pros & Cons

Pros

- Top-tier reasoning at MIT-license open weights — 80.6% SWE-bench Verified puts V4-Pro within 0.2 points of Claude Opus 4.6, and the model is downloadable from Hugging Face for free commercial use

- Aggressive pricing through April 2026 — V4-Flash sits at $0.14 per million input tokens (cache miss) and $0.28 output, roughly one-fifth of Claude Opus 4.7 input pricing for comparable agentic coding tasks

- 1 million token context window on both Pro and Flash, with 384K max output — lifts DeepSeek into the long-context tier alongside GPT-5.5 and Gemini 3.5 Pro

- Architectural innovation, not just scale — Hybrid Attention combining Compressed Sparse Attention (CSA, 4x compression) and Heavily Compressed Attention (HCA, 128x compression) cuts inference FLOPs to 27% and KV cache to 10% versus V3.2

- Three reasoning effort modes (Non-Think, Think High, Think Max) baked into the model rather than bolted on as a separate API — lets developers tune cost/quality per request

- Cache-hit input pricing at $0.0028 per million tokens for V4-Flash — 50x cheaper than cache miss, which makes RAG and tool-use loops with stable system prompts almost free

- Available on Huawei Ascend chips out of the box — first major Chinese frontier model with day-one inference parity on domestic hardware, removing NVIDIA dependency for Chinese deployments

Cons

- Open-weights only, not open-source — model weights ship under MIT but training code and data recipe are not released, so the community cannot fully reproduce the training run

- Tool support lags at launch — open-source inference frameworks (vLLM, llama.cpp, Ollama) take days to weeks to land first-class V4 support, and the Think mode protocol is more complex than V3 to integrate cleanly

- Local deployment requires serious hardware — V4-Flash 284B/13B-active needs INT4 or INT8 quantization to fit on a single RTX 4090, and full FP16 V4-Pro requires enterprise-grade GPU clusters

- API region availability and data residency concerns persist for Western enterprise buyers — DeepSeek API is hosted in China, which keeps regulated industries (US Federal, EU healthcare) from adopting it without a hosted Western reseller

- Discount pricing is time-limited — V4-Pro lists 75% off through 2026/05/31 15:59 UTC, after which input jumps from $0.435 to $1.74 per million tokens cache miss, a 4x increase that should be modeled into TCO

Best Use Cases

Platforms & Integrations

Available On

Integrations

We're developers and SaaS builders who use these tools daily in production. Every review comes from hands-on experience building real products — DealPropFirm, ThePlanetIndicator, PropFirmsCodes, and many more. We don't just review tools — we build and ship with them every day.

Written and tested by developers who build with these tools daily.

Frequently Asked Questions

What is DeepSeek V4?

Chinese open-source flagship: 1.6T MoE (49B active), 1M context, 80.6% SWE-bench Verified, MIT license — at one-fifth the price of Claude Opus 4.7

How much does DeepSeek V4 cost?

DeepSeek V4 has a free tier. All features are currently free.

Is DeepSeek V4 free?

Yes, DeepSeek V4 offers a free plan.

What are the best alternatives to DeepSeek V4?

Top-rated alternatives to DeepSeek V4 include Claude Code (9.9/10), Cursor (9.4/10), Veo 3.1 (9.4/10), Nano Banana Pro (9.3/10) — all reviewed with detailed scoring on ThePlanetTools.ai.

Is DeepSeek V4 good for beginners?

DeepSeek V4 is rated 7.5/10 for ease of use.

What platforms does DeepSeek V4 support?

DeepSeek V4 is available on Web (chat.deepseek.com), iOS app, Android app, REST API (api.deepseek.com), OpenAI-compatible client SDKs (Python, TypeScript, etc.), Hugging Face (deepseek-ai/DeepSeek-V4-Pro and DeepSeek-V4-Flash weights), Self-hosted on NVIDIA H100/H200 (FP16, FP8, FP4), Self-hosted on Huawei Ascend (day-one support), Community quantizations on Ollama and llama.cpp (GGUF post-launch).

Does DeepSeek V4 offer a free trial?

Yes, DeepSeek V4 offers a free trial.

Is DeepSeek V4 worth the price?

DeepSeek V4 scores 9.7/10 for value. We consider it excellent value.

Who should use DeepSeek V4?

DeepSeek V4 is ideal for: Cost-sensitive agentic coding pipelines — V4-Flash at $0.14 input and $0.28 output per million tokens makes massive context windows economical for codebase-wide refactoring agents, Long-context document analysis at scale — 1M context plus aggressive cache-hit pricing fits legal review, financial filings, and large RAG corpus workflows where stable system prompts dominate token spend, Open-source AI research and academic work — MIT-licensed weights at frontier capability let researchers fine-tune, distill, and probe a model that scores within 0.2 points of Claude Opus 4.6 on SWE-bench Verified, Self-hosted deployments for organizations with NVIDIA H100 or H200 clusters that want to escape OpenAI/Anthropic API rate limits and per-token markup, Chinese market deployments where Huawei Ascend hardware is preferred or required — V4 ships with day-one Ascend inference support, removing the NVIDIA dependency, Distillation source for smaller in-house models — frontier MoE quality at 49B active makes V4-Pro a strong teacher model for compressing into 7B-30B dense student models, Multi-agent simulations where token economics dominate — running 100+ agents in parallel becomes affordable when input cache hit drops to $0.0028 per million tokens, Math and competitive programming tooling — Codeforces 3206 and HMMT 2026 95.2% put V4-Pro at the top of public benchmarks for those tasks.

What are the main limitations of DeepSeek V4?

Some limitations of DeepSeek V4 include: Open-weights only, not open-source — model weights ship under MIT but training code and data recipe are not released, so the community cannot fully reproduce the training run; Tool support lags at launch — open-source inference frameworks (vLLM, llama.cpp, Ollama) take days to weeks to land first-class V4 support, and the Think mode protocol is more complex than V3 to integrate cleanly; Local deployment requires serious hardware — V4-Flash 284B/13B-active needs INT4 or INT8 quantization to fit on a single RTX 4090, and full FP16 V4-Pro requires enterprise-grade GPU clusters; API region availability and data residency concerns persist for Western enterprise buyers — DeepSeek API is hosted in China, which keeps regulated industries (US Federal, EU healthcare) from adopting it without a hosted Western reseller; Discount pricing is time-limited — V4-Pro lists 75% off through 2026/05/31 15:59 UTC, after which input jumps from $0.435 to $1.74 per million tokens cache miss, a 4x increase that should be modeled into TCO.

Best Alternatives to DeepSeek V4

Ready to try DeepSeek V4?

Start with the free plan

Try DeepSeek V4 Free →