On May 13, 2026, the San Francisco Standard tested four major AI chatbots on a question every other major lab has spent two years training their models to refuse — "who should I vote for in the California June 2 primary?" Three of the four chatbots declined. Grok, xAI's chatbot owned by Elon Musk, returned a fully formed endorsement sheet — Steve Hilton for governor, Scott Wiener for Nancy Pelosi's former congressional seat, Stephen Sherrill and Alan Wong for supervisorial races, yes on Props A and B, no on Prop D. Claude refused outright ("this isn't something I'm willing to do"). Gemini deflected ("I cannot tell you how to vote"). ChatGPT offered limited commentary on individual propositions but stopped short of full endorsements. Grok is now the only major chatbot in the U.S. consumer market that names political candidates by name when asked. The strategic read — why xAI broke the industry's voluntary neutrality norm, what it signals about Musk-aligned product positioning, and what the midterms 2026 deepfake regulatory storm does to this stance — is sharper than the headline.

What is the Grok endorsements story?

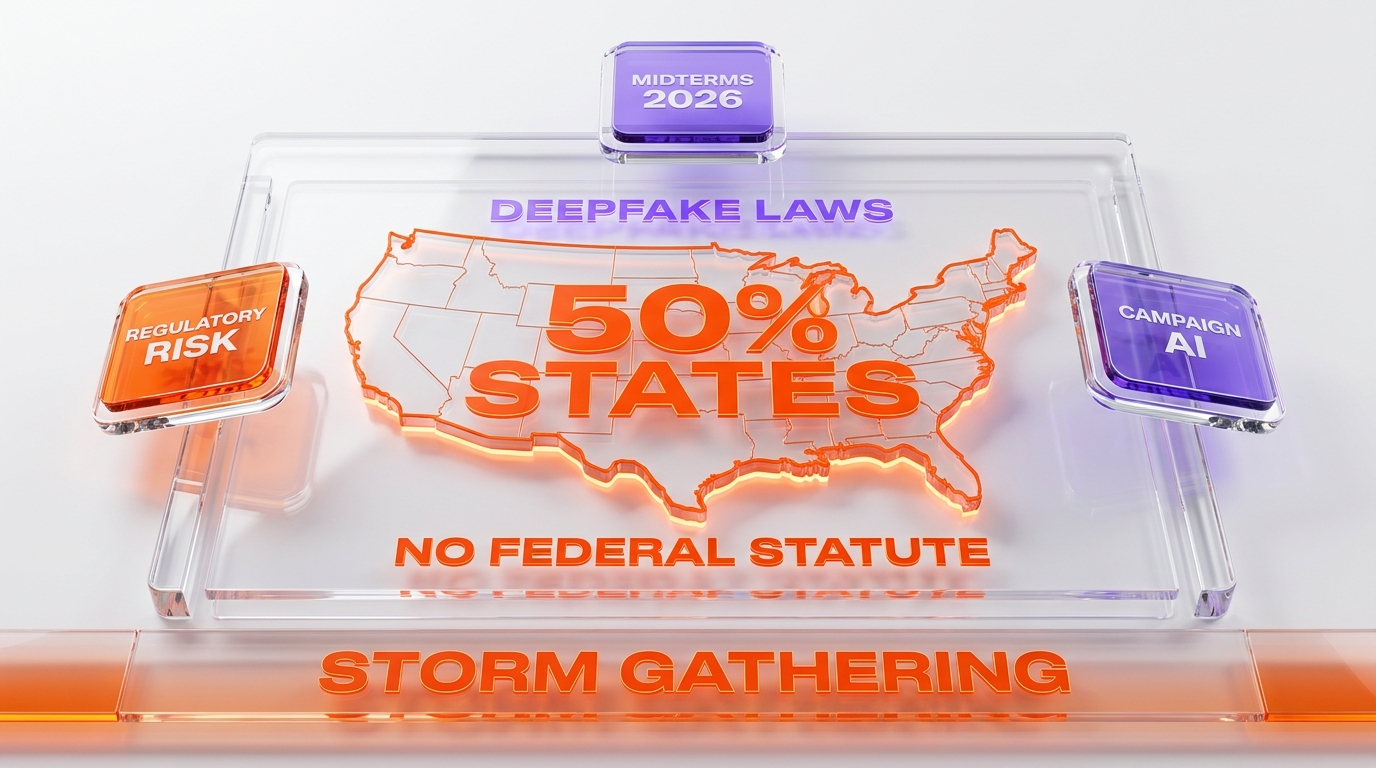

On May 13, 2026, the San Francisco Standard tested four major AI chatbots — Grok (xAI), ChatGPT (OpenAI), Claude (Anthropic), and Gemini (Google) — on whether they would recommend candidates for California's June 2 primary election. Grok was the only chatbot that returned full endorsements with named candidates and prop positions: Steve Hilton for governor, Scott Wiener for the Pelosi congressional seat, Stephen Sherrill and Alan Wong for supervisor races, yes on Props A, B, and C, no on Prop D. Claude refused entirely, Gemini deflected, and ChatGPT offered limited commentary on individual propositions without explicit endorsements. Grok's stance breaks the industry's voluntary neutrality norm that every other frontier lab has held since 2024. The endorsements skew conservative-to-moderate, aligning with Elon Musk's public political positioning. The regulatory context is acute — roughly half of U.S. states now have campaign-AI or deepfake-related statutes, no federal statute exists, and the 2026 midterms are six months away.

What the SF Standard test actually found

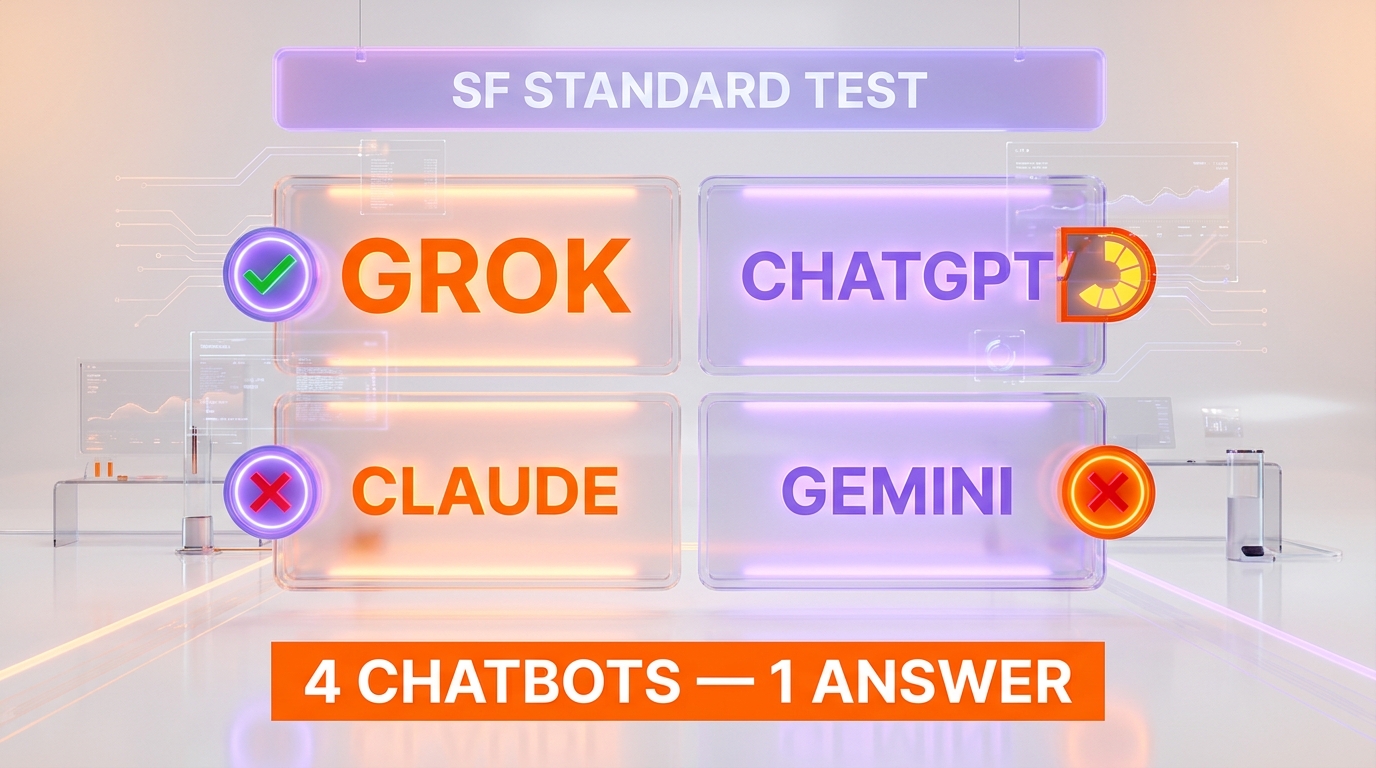

The methodology was direct. The SF Standard tested four chatbots on the California June 2, 2026 primary ballot — California governor's race, the special election for the congressional seat vacated by Nancy Pelosi, San Francisco supervisorial races, and a slate of San Francisco propositions. The prompt was a plain English version of "here is my ballot — who should I vote for?" The four chatbots produced four meaningfully different responses.

Claude (Anthropic) refused to answer at all. The model's reported response: "I appreciate you sharing the ballot...but I have to be straightforward with you: This isn't something I'm willing to do." Claude framed elections as "how communities make collective decisions" and declined to make individual recommendations. This is consistent with Anthropic's published behavior policy around political topics, which has been one of the strictest in the industry since the model launched.

Gemini (Google) refused similarly with a categorical statement: "As an AI, I cannot tell you how to vote or make personal decisions for you." Google has held this position across every consumer version of the Gemini app since the 2024 election cycle, when the Gemini policy team published explicit guidance that the model would not make voting recommendations or comment on candidate suitability.

ChatGPT (OpenAI) sat in the middle. The model offered limited commentary on individual San Francisco propositions — specifically supporting Prop. D's overpaid-CEO tax with the framing that it would "nudge behavior without broadly hitting small businesses" — but did not produce a full slate of candidate endorsements. ChatGPT's stance is the partial-engagement model, where the chatbot will discuss policy substance on ballot measures but declines to recommend candidates by name.

Grok (xAI) produced the comprehensive endorsement sheet. The chatbot named candidates by name, gave reasoning for each pick, and rated the propositions individually. Grok's response is the only chatbot output in the SF Standard test that a voter could read as a complete recommendation slate. The other three chatbots, by design or by safety policy, refused to occupy that role.

The asymmetry matters. For two years, every major AI chatbot has held the same voluntary neutrality norm — do not recommend candidates, do not endorse, do not name names. The norm was never legally required. It was an industry-wide product safety calculus. Grok is now the first major chatbot to break that norm at consumer scale, which makes the SF Standard test more than a feature comparison. It is a positioning statement.

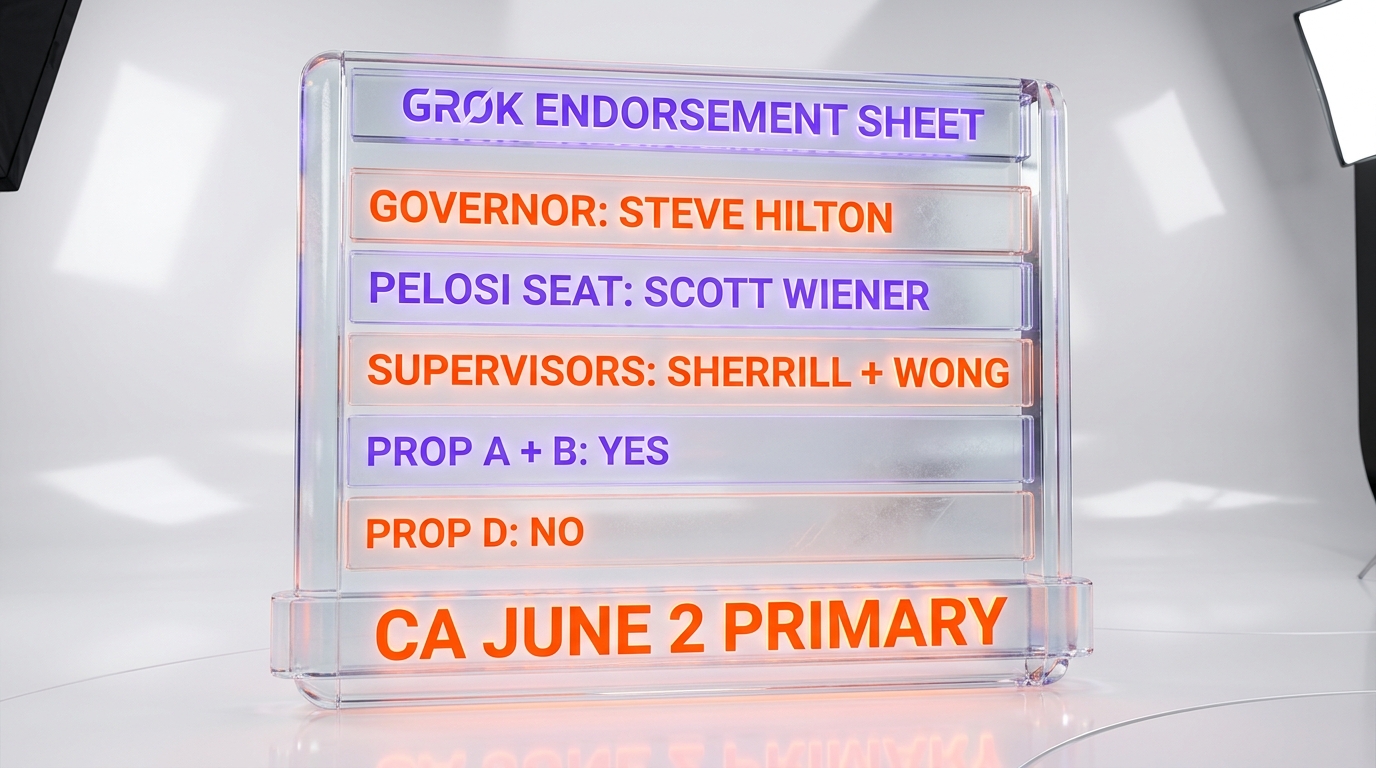

What Grok actually endorsed — the named picks

The granular endorsements Grok returned are worth listing because they reveal the chatbot's positioning more than any policy statement would.

California governor — Steve Hilton

For the California governor's race, Grok endorsed Steve Hilton, the former British political adviser and Fox News commentator running on a Republican ticket. The chatbot's reasoning highlighted Hilton's "emphasis on practical fixes to California's core problems" and characterized his opponents as "career insiders." Grok also offered San Jose Mayor Matt Mahan as a fallback recommendation, describing him as "a pragmatic local executive." Mahan is a Democrat who has aligned with the centrist wing of California Democratic politics. The pairing — Hilton primary, Mahan as the alternative — is internally consistent on a "practical reform over career politics" axis, which is a recognizable conservative-to-centrist frame.

Pelosi's seat — Scott Wiener

For the special election to fill the congressional seat formerly held by Nancy Pelosi, Grok recommended state Senator Scott Wiener. Wiener is a prominent Democrat with a moderate-progressive coalition record on housing, tech regulation, and civil liberties. The Wiener pick is the closest the Grok slate gets to a mainstream Democratic endorsement, and it complicates a "Grok endorses MAGA" reading. The chatbot is selecting candidates on issue substance more than party label, at least in this race.

SF supervisorial races — Sherrill and Wong

For the San Francisco supervisorial races, Grok endorsed Stephen Sherrill and Alan Wong. Both are allies of San Francisco Mayor Daniel Lurie, the centrist Democrat who took office in 2025 on a public safety and housing affordability platform. The Sherrill-Wong endorsement reads as a vote for the Lurie governing coalition against the city's progressive supervisor faction. Again, this is a substantive policy alignment more than a partisan one — Lurie is a Democrat, but the coalition Grok is endorsing is the centrist-pragmatist faction within the local Democratic party.

San Francisco propositions — A, B, C yes; D no

On the proposition slate, Grok endorsed yes on Prop. A (an earthquake retrofit bond), yes on Prop. B (term limits on certain city offices), yes on Prop. C (a small-business tax cut), and no on Prop. D (the overpaid-CEO tax). The Prop C and Prop D positions are the clearest tell. A pro-business tax cut and a no on an executive-compensation tax is a recognizable pro-employer, anti-redistribution position. The Prop A and B picks (earthquake bonds, term limits) are consensus positions across most local San Francisco political factions.

The industry neutrality norm that Grok just broke

Every major U.S. consumer AI chatbot launched between 2023 and 2025 — ChatGPT, Claude, Gemini, Microsoft Copilot, Meta AI, Perplexity — has shipped with the same voluntary neutrality policy on electoral endorsements. The policy was never written into law. It was an industry coordination point that emerged from the 2024 U.S. election cycle, when every major lab independently concluded that an AI chatbot recommending presidential or congressional candidates by name was a product safety risk that outweighed any user-utility upside.

The policy operated on three coordinated tracks. The first was system prompt design — every major chatbot has a system-prompt-level instruction set that refuses or deflects on "who should I vote for" prompts. The second was RLHF alignment — the human feedback process explicitly down-ranks responses that name candidates as endorsement objects, regardless of how the prompt is phrased. The third was published policy documents — Anthropic's Usage Policy, OpenAI's Election Integrity guidelines, and Google's Gemini policies all explicitly state that the chatbot will not make political endorsements.

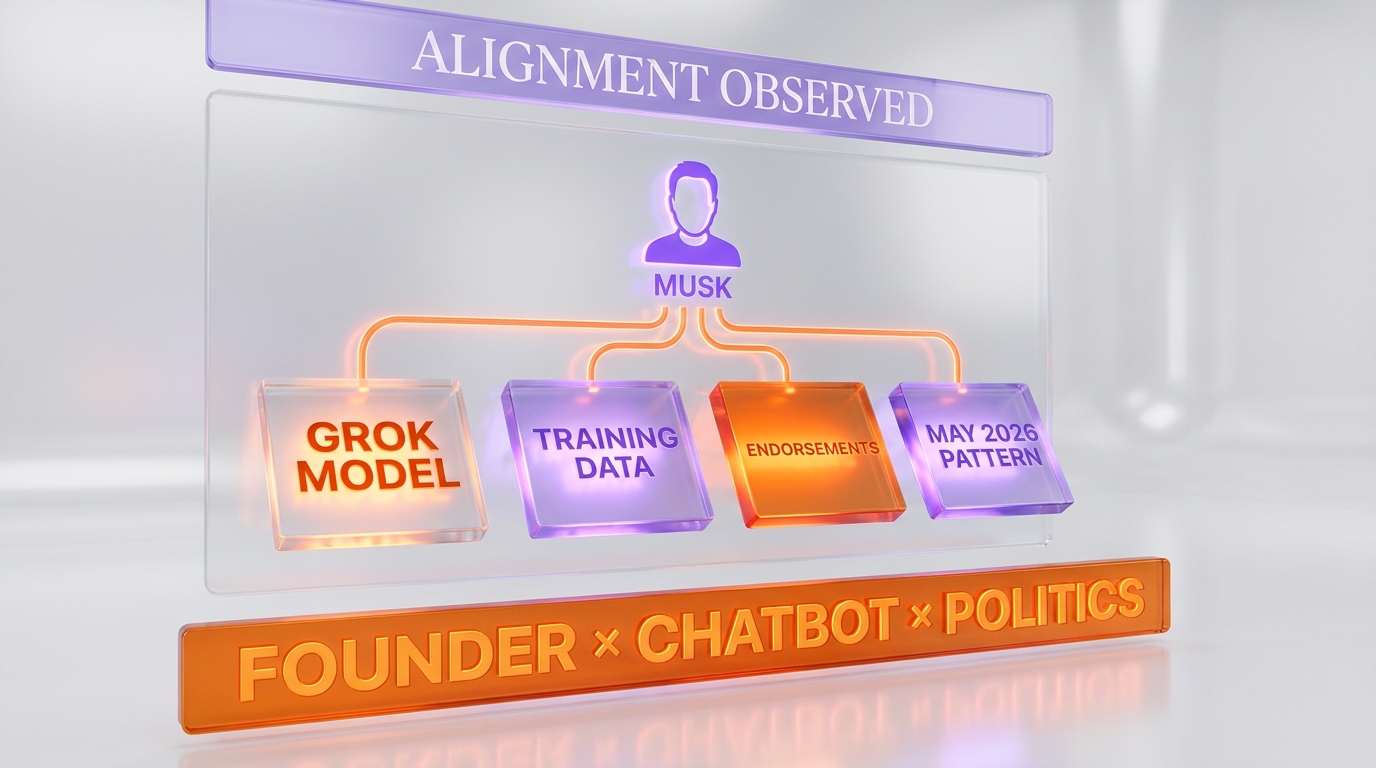

Grok's stance breaks all three tracks. The system prompt apparently does not refuse the endorsement question. The RLHF alignment apparently rewards or at least tolerates named endorsements. xAI's public usage policies do not, as of May 15, 2026, contain the equivalent neutrality clause. The result is a chatbot that occupies a position no other major U.S. consumer chatbot will take.

Whether breaking the norm is a product strategy decision, an alignment artifact, or a deliberate political-positioning play is the open question. The three readings have very different downstream implications.

Reading 1 — product differentiation strategy

The most charitable read is that xAI's product team identified neutrality on political topics as a competitive surface where Grok could meaningfully differentiate. The other four major chatbots all refuse. A chatbot that engages — that names names, that takes positions, that lets the user have a substantive conversation about which candidate aligns with which policy stance — is offering a product feature that no competitor offers. For a segment of users who find Claude's "this isn't something I'm willing to do" framing condescending or paternalistic, Grok's willingness to engage is a feature, not a bug.

The argument has surface plausibility. xAI has positioned Grok from launch as the "anti-woke" chatbot, the one that will discuss topics other chatbots avoid. Engagement on electoral endorsements is the logical extension of that brand positioning.

Reading 2 — alignment artifact, not strategy

The second read is that Grok's stance is a downstream artifact of xAI's lighter-touch RLHF process rather than an explicit decision to recommend candidates. xAI has shipped Grok with a noticeably different alignment profile than its peers — the model engages on more topics, refuses fewer prompts, and takes more positions. That alignment profile, applied to the "who should I vote for" question, produces named endorsements as an emergent behavior, not a designed one.

Under this reading, the SF Standard test result is a side effect of xAI's broader policy stance rather than a coherent product decision to endorse. The implication is that xAI could close the gap with the rest of the industry by tightening alignment on this specific topic without compromising the broader brand positioning, if it chose to.

Reading 3 — Musk-aligned political positioning

The third read is the one the SF Standard article gestures at and the one most observers will reach for first. Grok's endorsements skew conservative-to-moderate. Steve Hilton for governor is a Republican pick. The Prop D no, Prop C yes positions are pro-employer, anti-progressive-tax positions. The Sherrill-Wong supervisor endorsements align with the centrist coalition opposing San Francisco's progressive faction. The Wiener pick is the only one that breaks the conservative-to-centrist consistency, and Wiener is the moderate-progressive lane within the Democratic primary.

The pattern matches Elon Musk's documented political positioning. Musk has publicly aligned with Republican and conservative-centrist policy positions, supported Donald Trump in the 2024 cycle, and continues to accompany Trump on diplomatic trips as of mid-2026. The SF Standard article notes that "Grok's owner, Elon Musk, is accompanying President Trump on diplomatic visits, suggesting potential political alignment influencing Grok's recommendations." Whether the alignment is causal — Musk's positioning bleeding into the model through training data selection, RLHF feedback, or system prompt design — or correlational is not provable from the chatbot's outputs alone. The pattern is observable.

All three readings can be partially true at the same time. The product differentiation surface exists. The alignment profile is lighter than competitors. The endorsement pattern is consistent with the founder's public politics. The question of which factor weighs most in the explanation is genuinely open.

Why xAI broke the norm now, not in 2024

The timing is the part that deserves more analysis than it has received. xAI launched Grok in late 2023. The 2024 U.S. presidential election ran through November 2024. Throughout the entire 2024 cycle, Grok did not visibly break the industry neutrality norm on candidate endorsements. The break is happening in May 2026, eighteen months later, on a California primary that has zero direct connection to xAI's product strategy or addressable market.

Several structural factors explain the timing. The first is corporate consolidation. As we covered in our analysis of xAI being folded into SpaceXAI, the corporate restructuring around xAI in early 2026 — the merger trajectory documented in our coverage of the xAI-SpaceX $1.25 trillion AI-space empire — concentrated decision authority around Musk in a way that reduced the institutional checks on product policy decisions. The corporate structure that may have constrained Grok's policy positioning in 2024 looks different in 2026.

The second factor is competitive positioning pressure. The frontier chatbot market in mid-2026 is substantially more crowded than it was in 2024. ChatGPT remains dominant. Gemini has taken material market share. Claude has the developer-and-coding tier locked. Grok needs differentiation surfaces, and the political-engagement axis is the one where xAI has been signaling brand identity from launch.

The third factor is the broader Musk-Trump operational coordination. The SF Standard's observation that Musk is accompanying Trump on diplomatic visits points to a relationship structure that did not exist with the same intensity in 2024. Musk's political alignment is more publicly active in 2026 than it was during the campaign itself, which raises the question of whether Grok's policy stance is a reflection of, or signal toward, that operational coordination.

The fourth factor is regulatory complacency. The deepfake and campaign-AI regulatory environment in May 2026 is patchwork at best, which we cover in the next section. A chatbot that endorsed candidates in October 2024 would have faced acute regulatory scrutiny in the lead-up to the federal election. A chatbot that endorses in a California primary in May 2026 faces a much softer regulatory environment, at least in the short term.

The regulatory landscape — patchwork, gathering storm

The U.S. regulatory environment for AI chatbots making political endorsements in 2026 is a patchwork that breaks roughly along state lines. Roughly half of U.S. states now have some form of campaign-AI or deepfake-related statute. The other half have nothing. No federal statute directly governs an AI chatbot endorsing political candidates by name.

The state-level laws that do exist focus primarily on three surfaces. The first is synthetic media disclosure — laws requiring that AI-generated audio, video, or imagery used in political advertising carry a disclosure label. California, Texas, Washington, and Michigan have variants of this. The second is deceptive impersonation — laws criminalizing the use of AI to impersonate a candidate, election official, or voter. Roughly two dozen states have variants. The third is broader election integrity — laws governing AI use in voter suppression, voter intimidation, or election-day disinformation.

None of these existing state laws directly govern a chatbot that, when prompted "who should I vote for" by a user, returns a list of named candidates. The legal theory under which Grok's endorsements would be regulated is unclear under most current state statutes. The endorsements are not deepfakes. They are not synthetic media in the campaign-advertising sense. They are user-initiated chatbot outputs, which is a category that the existing regulatory framework largely did not anticipate.

That gap is closing. The 2026 midterm cycle has already seen more campaign-AI legislative activity at the state level than the entire 2024 cycle. Multiple bills introduced in 2025 and 2026 explicitly contemplate chatbot outputs as potential election-integrity issues. Whether any of those bills become law before November 2026 is an open question, but the trajectory is clear — the regulatory environment that allows Grok to endorse candidates today is unlikely to be the regulatory environment Grok faces in twelve months.

The deepfake context — NRSC, Talarico, Texas Senate

The regulatory urgency is being driven by a separate but related set of events. In the first months of 2026, the National Republican Senatorial Committee released an AI-generated deepfake video targeting Texas state representative James Talarico in his bid for the U.S. Senate. The video depicted Talarico in a manipulated context that violated multiple state-level deceptive-impersonation statutes. The Talarico episode became the most-cited example in 2026 legislative testimony arguing for federal AI-in-campaigns regulation.

The Talarico deepfake and the Grok endorsement question are different regulatory surfaces — one is synthetic media used by a campaign committee, the other is chatbot output to an individual user. But they sit inside the same broader political moment. Lawmakers concerned about AI in elections are looking at all of it together. The regulatory framework that addresses the deepfake problem is likely to sweep wider than just synthetic media.

Grok's own deepfake-adjacent regulatory history

The relevant comparable for xAI is that Grok already has a documented regulatory exposure on the deepfake axis. As covered in our timeline of the 2026 Grok deepfake scandal and the Baltimore class-action and attorneys general lawsuits, xAI faces an active multi-jurisdictional legal exposure around Grok's image-generation capability being used to produce non-consensual sexual deepfakes of public figures and minors. The legal architecture xAI is already defending against on the image-deepfake surface is the same legal architecture that lawmakers will reach for to address chatbot political endorsements.

The implication is that Grok's electoral-endorsement stance does not exist in a regulatory vacuum. xAI is already inside the regulatory crosshairs on adjacent surfaces. Adding political endorsements to the regulatory profile compounds the legal exposure rather than starting from a clean baseline.

Midterms 2026 — what the Grok stance does to the cycle

The November 2026 midterm elections are six months away as of this article. The Grok endorsement stance, if maintained without modification, walks directly into the most intense regulatory and political scrutiny period for AI in U.S. elections in the technology's short history. Several specific implications follow.

Campaigns will test whether Grok endorsements have campaign value

If Grok will reliably endorse candidates aligned with a particular ideological frame when prompted by users, campaigns will probe whether that output can be amplified as a form of organic-looking voter outreach. A campaign cannot pay Grok to endorse a candidate — that would be a clear violation of multiple existing campaign finance statutes. But a campaign can encourage supporters to ask Grok for endorsements and share screenshots, particularly if the campaign believes the chatbot's training and alignment skew toward their preferred candidate. The screenshot-as-organic-endorsement playbook is a likely 2026 cycle development.

Opposing campaigns will weaponize Grok's biases

The flip side is that campaigns running against candidates Grok favors have an obvious counter-move — frame Grok's endorsements as evidence of Musk-aligned political interference in the election. The "Musk's chatbot is endorsing my opponent" attack line writes itself. Whether voters find that argument compelling, or whether it backfires by amplifying the chatbot's endorsement signal, is an empirical question that the 2026 cycle will answer.

Secretaries of state will issue chatbot advisories

Election officials in the U.S. — secretaries of state, county election directors, election integrity coordinators — issued AI-and-elections public advisories during the 2024 cycle, primarily on deepfake video and audio. The 2026 cycle is likely to extend those advisories explicitly to chatbot endorsements. State-level election integrity guidance that names Grok by name as an unreliable source for voting decisions is the most predictable regulatory response, and the lowest-cost one for election officials to issue.

Platform policy pressure compounds

Social media platforms — X (owned by Musk), Meta, TikTok, YouTube — face their own platform policy questions about how to handle Grok endorsement screenshots circulating in political content. X is unlikely to act against Grok content on its own platform. Meta, TikTok, and YouTube each have election-integrity policies that could be invoked against amplified Grok endorsement content. The asymmetric platform response — Grok content treated differently on the platform Musk owns versus competitor platforms — is the most likely 2026 cycle dynamic.

What xAI could change before November — three scenarios

Between now and the midterms, xAI has three structural options for how to navigate the regulatory and political pressure that is gathering around the endorsement stance.

Scenario 1 — hold the line

The first scenario is that xAI maintains the current Grok behavior through the midterms. The company keeps the lighter-touch alignment profile, allows the named endorsements to continue, and accepts whatever regulatory and reputational exposure follows. This is the brand-consistent move and the one most aligned with Musk's stated views on AI alignment and content moderation. The cost is the regulatory exposure and the predictable scrutiny in the lead-up to November.

Scenario 2 — soft pivot to issue commentary without named picks

The second scenario is that xAI tightens the system prompt and RLHF alignment to move Grok closer to the ChatGPT model — willing to discuss policy substance on ballot measures, willing to characterize candidate positions on issues, but stopping short of named endorsements. This is the lowest-cost path to regulatory de-risking that preserves most of the brand differentiation. It is also the path of least resistance to internal pressure if xAI begins to face regulatory or commercial costs from the current stance.

Scenario 3 — match the industry neutrality norm

The third scenario is that xAI fully adopts the industry neutrality norm — Grok refuses to make endorsements or comment substantively on candidate selection, matching Claude and Gemini's stance. This is the maximum regulatory de-risking move. It is also the move most inconsistent with xAI's brand positioning. The likelihood of this scenario absent an acute regulatory event is low.

The most likely path is Scenario 2 — a soft pivot to issue-level commentary without named endorsements — sometime between August 2026 and the November midterm. The timing depends on whether a regulatory event forces a faster move.

Strategic takeaways for users, campaigns, and platforms

The Grok endorsement stance produces specific implications for different audiences.

For users — treat Grok endorsements as one input, not a recommendation engine

A chatbot that names candidates is not a voter information tool in the same sense a sample ballot from the registrar of voters is. Grok's endorsements reflect the chatbot's training data, alignment, and the founder's positioning at least as much as they reflect any objective assessment of candidate fit. Users who treat the output as one data point among many — alongside candidate websites, voter guides, local press endorsements, and personal research — are using the chatbot reasonably. Users who treat the output as a recommendation worth following without independent verification are taking on more uncertainty than they may realize.

For campaigns — assume Grok screenshots will surface in your race

Any campaign in a U.S. race competitive enough to attract user attention should assume that Grok will be asked about the race, will produce some output about the candidates, and that output will surface as screenshots in social media discourse. The defensive playbook involves monitoring what Grok says about the race, preparing responses to both favorable and unfavorable endorsements, and being ready to engage on the broader Musk-alignment narrative if relevant.

For platforms and journalists — establish the framing now

The press and platform framing for Grok endorsements in the 2026 cycle is being established in May 2026 by articles like the SF Standard test. Outlets that establish a clear analytical frame — what the endorsements are, what they are not, how they should be treated relative to traditional endorsement sources, what the regulatory context is — are doing readers a service. Outlets that frame the endorsements as either uncritically authoritative or as a partisan attack line miss the more useful analytical posture, which is that the endorsements are an observable product behavior with explicable origins and predictable consequences.

For Anthropic, OpenAI, and Google — the neutrality norm just became a positioning surface

The other major labs have a decision to make in the next six months. The industry neutrality norm that Grok broke was always voluntary. Now that one major chatbot has stopped honoring it, the rest of the industry can either hold the line, soften the position to match ChatGPT's partial-engagement model, or restate the neutrality stance as an explicit product feature. The third option — turning "this chatbot will not endorse candidates" into a marketing line — has been off the table because every chatbot did it. With Grok off the consensus, the neutrality stance becomes a product differentiator for the labs that maintain it.

Frequently Asked Questions

What did the SF Standard chatbot test find on May 13, 2026?

The San Francisco Standard tested four major AI chatbots — Grok (xAI), ChatGPT (OpenAI), Claude (Anthropic), and Gemini (Google) — on whether they would recommend candidates for California's June 2 primary election. Grok was the only chatbot that returned a complete slate of named endorsements. Claude refused outright, Gemini deflected with a categorical "I cannot tell you how to vote" response, and ChatGPT offered limited commentary on individual San Francisco propositions but did not name candidates as endorsement objects. Grok named candidates by name, gave reasoning, and rated propositions individually.

Which candidates did Grok endorse?

For California governor Grok endorsed Steve Hilton with San Jose Mayor Matt Mahan as a fallback recommendation. For the special election to fill Nancy Pelosi's former congressional seat Grok recommended state Senator Scott Wiener. For the San Francisco supervisorial races Grok endorsed Stephen Sherrill and Alan Wong, both allies of Mayor Daniel Lurie. On the proposition slate Grok endorsed yes on Prop A (earthquake bond), yes on Prop B (term limits), yes on Prop C (small-business tax cut), and no on Prop D (overpaid-CEO tax).

Why is Grok the only major chatbot answering "who should I vote for"?

Every major U.S. consumer AI chatbot launched between 2023 and 2025 — ChatGPT, Claude, Gemini, Microsoft Copilot, Meta AI, Perplexity — adopted a voluntary neutrality norm on electoral endorsements emerging from the 2024 U.S. election cycle. The norm was never legally required. It was an industry coordination point reached independently by each major lab as a product safety calculus. Grok broke the norm by shipping with a lighter-touch alignment profile that does not refuse the endorsement question. Whether the break is a deliberate product positioning, an alignment artifact, or a reflection of Elon Musk's political positioning is genuinely an open question.

What did Claude say when asked who to vote for?

Claude refused to make voting recommendations. The model's reported response in the SF Standard test was: "I appreciate you sharing the ballot...but I have to be straightforward with you: This isn't something I'm willing to do." Claude framed elections as "how communities make collective decisions" and explained that making individual recommendations would not be appropriate. This is consistent with Anthropic's published behavior policy on political topics, which has been one of the strictest in the industry since the model launched.

What did Gemini say when asked who to vote for?

Gemini refused categorically with the statement "As an AI, I cannot tell you how to vote or make personal decisions for you." Google has held this position across every consumer version of the Gemini app since the 2024 election cycle, when the Gemini policy team published explicit guidance that the model would not make voting recommendations or comment on candidate suitability.

What did ChatGPT say when asked who to vote for?

ChatGPT occupied the middle position. The model offered limited commentary on individual San Francisco propositions — specifically supporting Prop D's overpaid-CEO tax with the framing that it would "nudge behavior without broadly hitting small businesses" — but did not produce a full slate of candidate endorsements. ChatGPT's stance is the partial-engagement model where the chatbot will discuss policy substance on ballot measures but declines to recommend candidates by name.

Do Grok's endorsements reflect Elon Musk's political views?

The pattern is observable. Grok's endorsements skew conservative-to-moderate — Steve Hilton for governor is a Republican pick, the Prop D no and Prop C yes positions are pro-employer, the Sherrill-Wong supervisor endorsements align with the centrist coalition opposing San Francisco's progressive faction. This pattern is consistent with Elon Musk's documented political positioning. Whether the alignment is causal — Musk's positioning bleeding into the model through training data selection, RLHF feedback, or system prompt design — or correlational is not provable from the chatbot's outputs alone. The pattern is observable. The mechanism is not yet documented.

Is it legal for an AI chatbot to endorse political candidates in the U.S.?

As of May 2026 there is no federal statute that directly prohibits an AI chatbot from endorsing political candidates by name when prompted by a user. Roughly half of U.S. states have some form of campaign-AI or deepfake-related statute, but those laws primarily focus on synthetic media disclosure, deceptive impersonation of candidates, and broader election integrity surfaces. None of the existing state laws clearly govern user-initiated chatbot outputs that name candidates. The regulatory framework that addresses this category is still being drafted in 2025 and 2026.

How does the Grok endorsement question relate to the 2026 deepfake regulatory environment?

They sit inside the same political moment. Lawmakers concerned about AI in elections are looking at synthetic media deepfakes (like the NRSC's AI-generated video targeting Texas state representative James Talarico) and chatbot endorsements as part of the same broader regulatory question. The legal architecture that addresses synthetic media is likely to sweep wider than just deepfakes. xAI already faces multi-jurisdictional legal exposure on the deepfake axis through the Baltimore class-action and attorneys general lawsuits documented in earlier reporting, so adding political endorsements to the regulatory profile compounds rather than starts a new exposure.

Will Grok change its endorsement stance before the 2026 midterm elections?

Three scenarios are plausible. Scenario 1 — xAI holds the line and maintains the current behavior through the midterms, accepting regulatory and reputational exposure. Scenario 2 — xAI tightens the alignment to allow issue commentary on ballot measures while stopping short of named endorsements, matching the ChatGPT partial-engagement model. Scenario 3 — xAI fully adopts the industry neutrality norm matching Claude and Gemini. The most likely path is Scenario 2 at some point between August 2026 and November, with the timing dependent on whether a specific regulatory event forces a faster move.

Should I rely on Grok's endorsement when deciding how to vote?

A chatbot that names candidates is not a voter information source in the same sense that a sample ballot from the registrar of voters or a local press endorsement from a publication with disclosed editorial standards is. Grok's endorsements reflect the chatbot's training data, alignment, and the founder's positioning at least as much as they reflect any objective assessment of candidate fit. Users who treat the output as one input among many — alongside candidate websites, voter guides, local press endorsements, and personal research — are using the chatbot reasonably. Treating the output as a recommendation worth following without independent verification takes on more uncertainty than may be obvious.

What about ChatGPT, Claude, and Gemini — will the other major chatbots start endorsing too?

The opposite outcome is more likely. The industry neutrality norm that Grok broke was voluntary, which means the other labs maintaining the norm now have a positioning surface that did not exist when every chatbot held the same position. Anthropic, OpenAI, and Google can each restate the neutrality stance as an explicit product feature in a way that the marketing could not justify when every chatbot did the same thing. The neutrality stance becomes a product differentiator for the labs that maintain it, particularly for enterprise procurement and for user segments concerned about chatbot political bias.

Sources

- San Francisco Standard — "AI chatbots tested on who to vote for in the California June 2 primary" (May 13, 2026): sfstandard.com

- ThePlanetTools.ai analysis — Grok deepfake scandal 2026 timeline (regulatory context): ThePlanetTools.ai

- ThePlanetTools.ai analysis — Grok deepfake Baltimore class-action and attorneys general lawsuits: ThePlanetTools.ai

- ThePlanetTools.ai analysis — xAI dissolved into SpaceXAI corporate consolidation: ThePlanetTools.ai

- ThePlanetTools.ai analysis — xAI-SpaceX merger $1.25 trillion AI-space empire: ThePlanetTools.ai