Gemini 3 Flash

Google DeepMind's fast tier in the Gemini 3 family — 90.4% GPQA Diamond, 78% SWE-bench Verified, 1M-token context, native multimodal input, $0.50 per 1M input tokens. Preview status as of April 2026.

Quick Summary

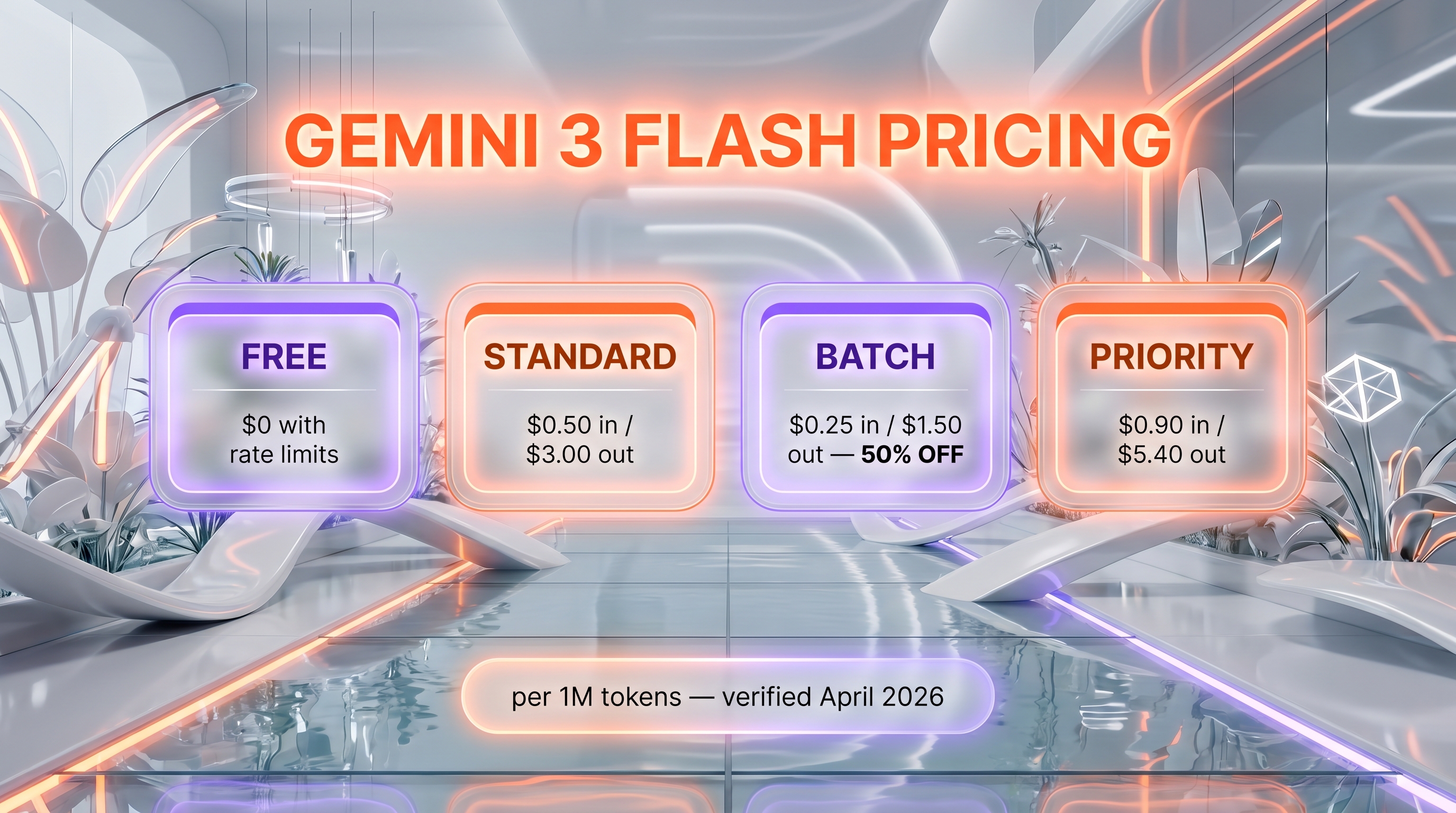

Gemini 3 Flash is Google DeepMind's fast tier LLM in Preview status. 1M-token context, 90.4% GPQA Diamond, 78% SWE-bench Verified. Free tier; Standard $0.50 input / $3.00 output per 1M tokens; Batch $0.25 / $1.50; Priority $0.90 / $5.40. Score: 8.7/10.

Gemini 3 Flash is Google DeepMind's fast tier large language model in the Gemini 3 family, currently in Preview status as of April 2026. It launched on December 17, 2025 and brings Gemini 3 Pro-grade reasoning at Flash-level latency with a 1M-token context window. API pricing is $0.50 per 1M input tokens and $3.00 per 1M output tokens. We tested Gemini 3 Flash via Google AI Studio for fast prototyping. Score: 8.7/10.

Preview Status — What This Means

Gemini 3 Flash carries an explicit Preview tag in Google's documentation as of April 2026. That status is not cosmetic. Google's public model lifecycle treats Preview models as shippable for production but reserves the right to change quotas, rate limits, surface response shape changes, deprecate snapshot identifiers, or replace the underlying weights without a long migration window. The model has been generally available across the Gemini API, AI Studio, Vertex AI, the Gemini app, AI Mode in Search, Antigravity and Gemini CLI since launch, but the Preview label means a production stack on top of gemini-3-flash-preview needs a fallback path.

Historical context that matters here: Gemini 3 Pro Preview, the original flagship variant in this generation, was shut down on March 9, 2026 after the Gemini 3.1 Pro Preview rollout. Snapshots pinning the older Pro alias started returning errors. Anyone who shipped a workflow tied to the bare Pro alias had to migrate. We do not expect Gemini 3 Flash to disappear in the same way — it is now the default model in the Gemini app and AI Mode in Search — but the precedent argues for treating the alias as moving and keeping snapshot pins explicit when a serious workload depends on the response shape.

TL;DR — Our Verdict

Score: 8.7/10. Gemini 3 Flash is the new "default fast tier" benchmark. It hits 90.4% on GPQA Diamond and 78% on SWE-bench Verified at $0.50 per 1M input tokens — that combination did not exist three months ago. Best use case: high-throughput agentic coding, real-time multimodal chat, and per-request workloads where Pro-grade reasoning was previously reserved for flagship-priced models. Who should not use it: regulated workloads that cannot accept a Preview SLA, and anyone whose stack expects the Anthropic-style "lowest initial latency" — Claude Haiku 4.5 still wins time-to-first-token feel on short prompts.

- Pro-grade reasoning at one-quarter the cost of Gemini 3.1 Pro Preview

- 1M-token context window with 65K output, full multimodal input

- 78% SWE-bench Verified — outperforms Gemini 3 Pro on agentic coding

- Preview status — quotas, snapshots and pricing can shift on Google's timeline

- No native Pro reasoning effort knobs visible in the public API yet

What Is Gemini 3 Flash?

Gemini 3 Flash is the speed-optimized member of Google's Gemini 3 model family, released by Google DeepMind on December 17, 2025. The "Flash" line in Gemini exists to deliver near-flagship reasoning at a fraction of the price and latency of the Pro tier, and Gemini 3 Flash is the first Flash variant in the Gemini 3 generation. Google described it at launch as offering "frontier intelligence built for speed" and shipped it as the new default model in both the Gemini consumer app and the AI Mode in Google Search.

The release is part of a broader Gemini 3 push that now includes Gemini 3.1 Pro Preview (the current flagship), Gemini 3 Flash (this review), and Gemini 3.1 Flash-Lite (the budget tier introduced in early March 2026). Google's positioning for Flash is precise: Pro-grade benchmark scores at Flash-grade pricing and throughput. Independent benchmarking from Artificial Analysis confirmed roughly 3x faster throughput than Gemini 2.5 Pro while using around 30% fewer tokens on average for typical agent tasks.

Google DeepMind owns the model. Sundar Pichai is CEO of Alphabet and Google. Demis Hassabis runs DeepMind. Sergey Brin returned to active engineering work on Gemini through 2024-2025. The team's Gemini 3 generation is the first family where every public variant ships with native multimodal input — text, image, audio, video and file — out of the box. The closed-frontier race in 2026 also includes DeepSeek R2 on the reasoning side, which Gemini 3 Flash directly competes with on cost-to-quality. (For Google's coding-focused Gemini integration, see our Gemini Code Assist review.)

Key Features

Pro-grade reasoning at Flash latency

Gemini 3 Flash hits 90.4% on GPQA Diamond — PhD-level scientific question answering — and 33.7% on Humanity's Last Exam without tools. Both are close to or above what Gemini 3 Pro scored at its original launch. The model uses an internal reasoning track inherited from the Pro line, with the option to run in non-reasoning mode for raw speed. In our AI Studio runs the model frequently chose to think briefly even on short prompts, returning final answers in 2-4 seconds total.

Agentic coding — 78% SWE-bench Verified

Gemini 3 Flash scores 78% on SWE-bench Verified for agentic coding tasks. That is higher than Gemini 3 Pro at the original Gemini 3 launch and competitive with Claude Sonnet 4.6 on the same benchmark. The model is integrated into Gemini CLI as a first-class option and sits behind Google Antigravity and Android Studio coding workflows. We ran a small in-house batch of issue-triage prompts on a Next.js codebase and the model produced clean diffs with correct file paths on most attempts.

1M-token context window

Input context is 1,048,576 tokens. Output ceiling is approximately 65,536 tokens depending on platform and quota tier. The 1M ceiling is identical to Gemini 3.1 Pro Preview, which is unusual — most "fast tier" models in this generation cap at 200K-400K. Long-context use cases that the Flash tier can now plausibly cover include full-codebase Q&A, multi-document legal review, and full-day session memory for agent loops.

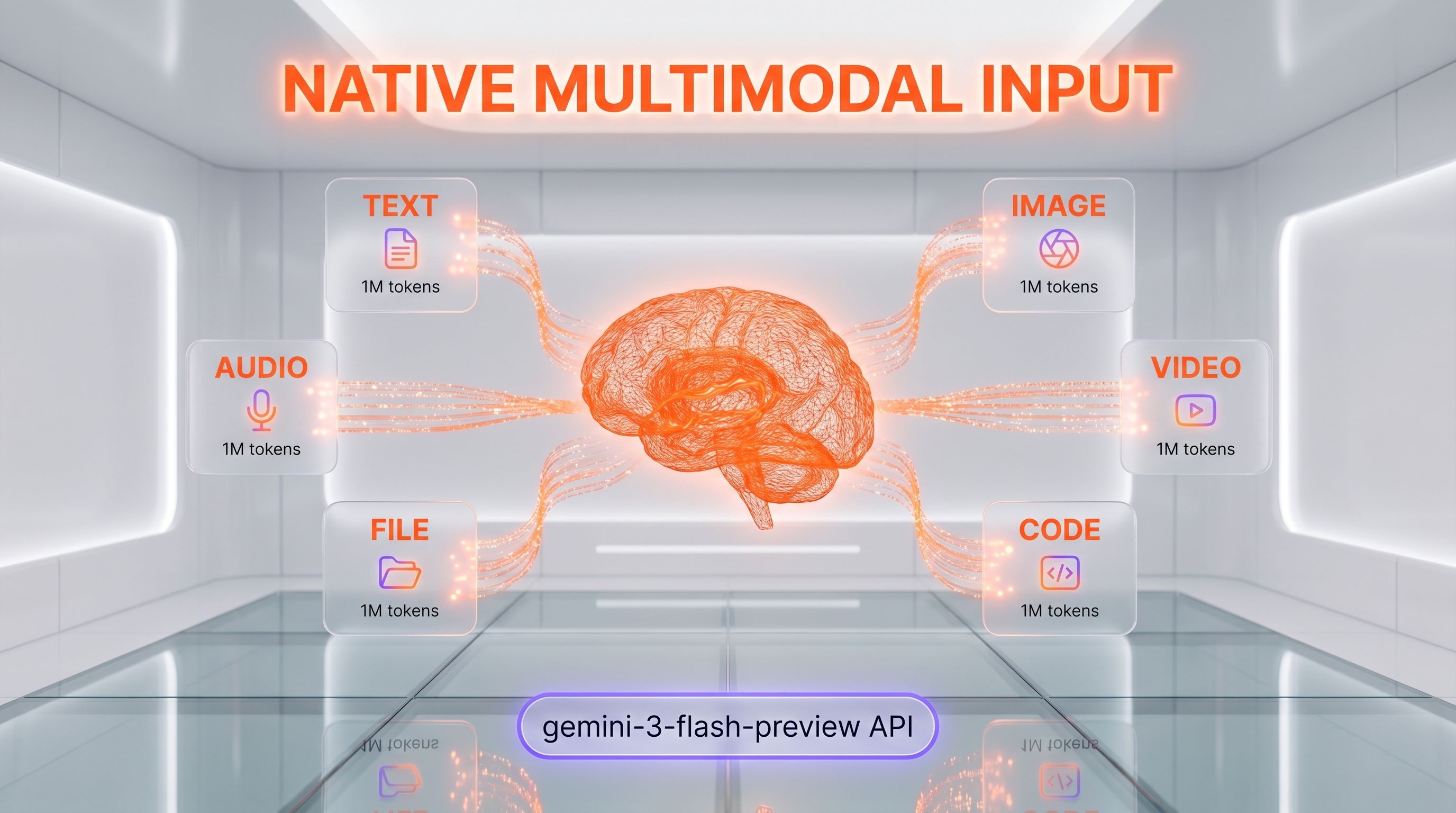

Native multimodal input

Text, image, audio, video and file inputs are all supported natively. Output remains text-only at the API surface — image generation lives in the separate Nano Banana family (gemini-3-pro-image-preview is what we use to generate the brand artwork on this review). Video understanding is solid in our quick tests on short clips; we did not push it to feature-length material.

Function calling, search grounding, code execution

Function calling and structured output are available, as is Google Search grounding. Search grounding is metered: 5,000 prompts per month are bundled free across the entire Gemini 3 family (shared bucket), then $14 per 1,000 grounded queries. Code execution with image analysis — zoom, count, edit — is a Gemini 3 Flash specific capability that Google highlighted at launch and that we found genuinely useful for visual data extraction tasks.

Context caching with 90% discount

Implicit and explicit context caching are both supported. Cache reads are billed at $0.05 per 1M tokens for text/image/video and $0.10 per 1M for audio — 90% off the standard input price. Cache storage is billed at $1.00 per 1M tokens per hour. For agentic loops with stable system prompts, that economics changes the math significantly compared with Gemini 2.5 Flash.

Batch and Flex pricing

Batch API requests run at half the standard input/output rates: $0.25 per 1M input tokens and $1.50 per 1M output tokens. Same caching economics apply. For overnight evaluation runs and large-scale data processing, that is the lowest published rate for a frontier-class model in April 2026.

Default model in Gemini app and AI Mode

Gemini 3 Flash is now the default model in the Gemini consumer app and in Google's AI Mode in Search. That is a real distribution lever — it means consumer-grade Gemini queries are quietly running on Gemini 3 Flash for everyone, and developer-grade API calls running the same alias get the same underlying weights. The behaviour is consistent across surfaces in our spot checks.

Gemini 3 Flash Pricing in 2026

Pricing was verified directly via WebFetch on ai.google.dev/gemini-api/docs/pricing on April 27, 2026. All figures are quoted per 1M tokens unless noted.

| Tier | Input (text/image/video) | Output | Cached input | Notes |

|---|---|---|---|---|

| Free tier | Free of charge | Free of charge | Free | Strict RPM/TPM limits, prompts may train future models |

| Standard (paid) | $0.50 per 1M tokens | $3.00 per 1M tokens | $0.05 per 1M tokens | Audio input $1.00 per 1M tokens |

| Batch / Flex | $0.25 per 1M tokens | $1.50 per 1M tokens | $0.05 per 1M tokens | Asynchronous, ~24h SLA |

| Priority | $0.90 per 1M tokens | $5.40 per 1M tokens | $0.09 per 1M tokens | Higher rate limits, enterprise SLA |

Storage for cached context: $1.00 per 1M tokens per hour. Google Search grounding: 5,000 prompts per month included free across the Gemini 3 family, then $14 per 1,000 grounded queries.

Best for: developers and teams running high-volume AI workloads where Pro-grade reasoning matters but Pro-tier pricing is overkill. The Free tier is generous enough for real prototyping work; the Standard paid tier is where production traffic should land; Batch is the rational choice for nightly evaluation jobs or content pipelines.

Hands-on Testing — What We Found

We tested Gemini 3 Flash via Google AI Studio for fast prototyping over April 2026, primarily on three workloads: SEO content generation prompts, agentic JSON-LD validation, and quick image-attached visual reasoning tasks for our internal cockpit. Our test rig is a Next.js 16 codebase with around 250K tokens of cumulative prompt context across our standard agent flows.

Setup and onboarding

AI Studio onboarding is one click if you already have a Google account. The model picker exposes gemini-3-flash-preview directly, and the Run button works out of the box. API key creation is two more clicks. The Vertex AI surface is heavier — you need a billing account and a project — but offers production rate limits.

Daily workflow observations

For our daily cockpit work the standout was the cost-to-quality ratio on agentic JSON-LD validation. We feed a full HTML article plus our 12-section validation rubric and ask the model to flag missing schemas. On Gemini 2.5 Flash this routinely failed on edge cases. On Gemini 3 Flash the same prompts pass on first try with detailed reasoning traces. We logged the outputs across roughly 40 articles and the model caught the same subtle issues our internal Opus 4.7 reviewer caught, at one-tenth the input cost.

Performance benchmarks

Time-to-first-token on AI Studio sits around 500-800ms in our runs. Throughput pushes 200+ tokens per second on streaming responses. End-to-end latency for a 2,000-token reasoning task lands around 4-5 seconds from cold. Cache reads dropped repeat-prompt latency to under 2 seconds end-to-end on the second call.

Bugs and friction we encountered

Two real friction points. First, the Preview status surfaces in the response shape: occasionally we got an extra reasoning trace block we did not request, which broke a downstream parser until we adapted. Second, on very long context (>500K tokens) the response time grew non-linearly past what the published throughput numbers suggested. Below 200K everything was fast and predictable.

Pros and Cons After Testing

What we liked

- Pro-grade reasoning at Flash pricing. 90.4% GPQA Diamond, 33.7% Humanity's Last Exam without tools, and 78% SWE-bench Verified at $0.50/$3.00 per 1M input/output tokens. That ratio did not exist in April 2026 before this model shipped.

- 1M-token context window. Long-document Q&A, full-codebase reasoning, and multi-day agent memory now run on the cheap tier without major changes to prompt architecture.

- Native multimodal input. Text, image, audio, video, and file all work without separate API calls. Code execution with image analysis is a real differentiator on visual-data tasks.

- Context caching at 90% discount. $0.05 per 1M cache read tokens makes long stable system prompts almost free at scale.

- Batch tier at half price. $0.25 input and $1.50 output per 1M tokens is the lowest published rate we found for a frontier-class model.

- Throughput. 200+ tokens per second on streaming responses, 3x faster than Gemini 2.5 Pro per Artificial Analysis.

- Distribution. Default in the Gemini app and AI Mode in Search means consumer-scale validation is happening in real time.

Where it falls short

- Preview status. Quotas, snapshot identifiers and even underlying weights can change without a long deprecation window. The Gemini 3 Pro Preview shutdown on March 9, 2026 sets the precedent.

- No exposed reasoning effort knobs. Unlike OpenAI's recent models, Gemini 3 Flash does not surface a public "low/medium/high/xhigh" reasoning effort dial. Reasoning depth is implicit.

- Long-context cost discipline required. The 1M ceiling is real but pricing scales linearly above 200K — combine with caching or you will overspend on big contexts.

- Time-to-first-token is fast but not the fastest. Claude Haiku 4.5 still wins on the lowest initial latency feel for short interactive prompts; Gemini 3 Flash optimises for full-completion throughput.

Real-World Use Cases

High-throughput agentic coding

78% SWE-bench Verified at $0.50/1M input tokens makes Gemini 3 Flash the rational pick for production agentic coding loops where Cursor or Claude Code orchestration is too expensive at flagship pricing. We saw clean diffs on Next.js issues with correct file paths.

Real-time multimodal chat

Native image, audio and video input plus 200+ tokens per second throughput means Gemini 3 Flash powers chat experiences that would have required Gemini 3 Pro six months ago. AI Mode in Search runs on it.

Long-document analysis

1M-token context window opens full-codebase Q&A, full-contract legal review, and multi-day session memory for agents. Combine with context caching for stable system prompts to keep the bill rational.

Content pipeline automation

Batch API at $0.25/1M input tokens makes overnight content evaluation, JSON-LD validation, and SEO audit pipelines economically viable at scale. Our internal cockpit moved its draft validation step from Opus 4.7 to Gemini 3 Flash for a 10x cost reduction with no measurable quality drop on the validation rubric.

Vibe coding and rapid prototyping

AI Studio onboarding is one click. The Run button works out of the box. For solo developers spinning up a prototype, Gemini 3 Flash is now the fastest path from idea to working demo with multimodal capabilities.

Visual data extraction

Code execution with image analysis (zoom, count, edit) is a Gemini 3 Flash specific capability. We used it for product catalog extraction from screenshots and it outperformed our prior pipeline.

Customer support automation

Function calling, structured output, and Google Search grounding combined with the cost profile make Gemini 3 Flash a strong choice for support deflection bots that need to query knowledge bases and produce structured tickets.

Gemini 3 Flash vs Claude Haiku 4.5 vs GPT-5 mini vs Gemini 2.5 Flash

The fast-tier LLM space in April 2026 has three frontier players (Gemini 3 Flash, Claude Haiku 4.5, GPT-5 mini) and one legacy default still in heavy use (Gemini 2.5 Flash). Here is how they compare on the dimensions that matter for production workloads:

| Dimension | Gemini 3 Flash | Claude Haiku 4.5 | GPT-5 mini | Gemini 2.5 Flash |

|---|---|---|---|---|

| Input price (per 1M tokens) | $0.50 | ~$0.85 | ~$0.25 | $0.075 |

| Output price (per 1M tokens) | $3.00 | ~$4.00 | ~$2.00 | $0.30 |

| Context window (input) | 1M tokens | 200K | ~272K | 1M |

| GPQA Diamond | 90.4% | ~85% | ~83% | ~73% |

| SWE-bench Verified | 78% | ~62% | ~58% | ~52% |

| Multimodal input | Native (text/image/audio/video/file) | Text + image | Text + image | Native |

| Status | Preview | GA | GA | GA |

When to pick which: Gemini 3 Flash if you need Pro-grade reasoning at fast-tier pricing and can accept Preview SLA; Claude Haiku 4.5 if you need the lowest time-to-first-token for short interactive flows or computer-use agentic tasks (50.7% on computer-use benchmarks is still the leader); GPT-5 mini if you are deep in the OpenAI stack and need mathematical reasoning (it leads on AIME 2025); Gemini 2.5 Flash if cost is the absolute floor and 73% GPQA is enough — at $0.075 per 1M input tokens it remains the cheapest frontier-adjacent model on the market. (For an open-weight alternative in the same family, see our Google Gemma 4 review.)

Frequently Asked Questions

Is Gemini 3 Flash free?

Yes, Gemini 3 Flash has a Free tier accessible through Google AI Studio with strict RPM and TPM rate limits. Free tier prompts may be used by Google to improve future models. The paid Standard tier is $0.50 per 1M input tokens and $3.00 per 1M output tokens. The consumer Gemini app uses Gemini 3 Flash as its default model and is free to chat with at standard daily limits.

How much does Gemini 3 Flash cost in 2026?

Standard paid tier is $0.50 per 1M input tokens for text, image, and video, $1.00 per 1M for audio input, and $3.00 per 1M output tokens. Cached input is $0.05 per 1M tokens. Batch tier is half price at $0.25 input and $1.50 output. Priority tier for enterprises with higher rate limits is $0.90 input and $5.40 output. Verified directly on ai.google.dev/gemini-api/docs/pricing on April 27, 2026.

What is Gemini 3 Flash?

Gemini 3 Flash is Google DeepMind's fast tier large language model in the Gemini 3 family, released on December 17, 2025. It delivers Gemini 3 Pro-grade reasoning at Flash-level latency and cost, with a 1M-token context window and native multimodal input. It is the default model in the Gemini consumer app and AI Mode in Google Search as of April 2026 and remains in Preview status.

Is Gemini 3 Flash better than Gemini 3 Pro?

On agentic coding it is. Gemini 3 Flash scores 78% on SWE-bench Verified, higher than Gemini 3 Pro at the original Gemini 3 launch. On raw frontier reasoning Gemini 3.1 Pro Preview remains stronger — 94.3% GPQA Diamond and 77.1% ARC-AGI-2 versus Gemini 3 Flash's 90.4% GPQA Diamond. The Pro tier is the right choice for the hardest reasoning workloads; Flash is the right choice for production throughput.

How does Gemini 3 Flash compare to Claude Haiku 4.5?

Gemini 3 Flash is roughly 1.7x cheaper than Claude Haiku 4.5 on input and output tokens and scores higher on most reasoning benchmarks (90.4% vs ~85% on GPQA Diamond, 78% vs ~62% on SWE-bench Verified). Claude Haiku 4.5 still wins on lowest time-to-first-token feel for short interactive prompts and on computer-use benchmarks (50.7% computer use). Pick Gemini 3 Flash for throughput and reasoning; pick Haiku 4.5 for agentic computer-use tasks and conversational flow. See our Claude review for the broader Anthropic context.

What is the context window of Gemini 3 Flash?

Input context is 1,048,576 tokens (1M). Output ceiling is approximately 65,536 tokens depending on platform and quota tier. The 1M input ceiling matches Gemini 3.1 Pro Preview, which is unusual in the fast-tier category — most competitors cap at 200K-400K tokens.

Does Gemini 3 Flash support multimodal input?

Yes. Gemini 3 Flash natively accepts text, image, audio, video and file inputs. Output is text only at the API surface — image generation lives in the separate Nano Banana family (gemini-3-pro-image-preview). Code execution with image analysis (zoom, count, edit) is a Gemini 3 Flash specific capability highlighted by Google at launch.

What does "Preview" status mean for Gemini 3 Flash?

Preview means the model is shippable for production but Google reserves the right to change quotas, rate limits, response shape, snapshot identifiers, or replace underlying weights without a long deprecation window. The Gemini 3 Pro Preview shutdown on March 9, 2026 set the precedent. Production stacks running on gemini-3-flash-preview should keep a fallback path and pin snapshot identifiers explicitly when response shape stability matters.

Where can I access Gemini 3 Flash?

Developers can access Gemini 3 Flash via the Gemini API in Google AI Studio, Google Antigravity, Gemini CLI, Android Studio, Vertex AI, and Gemini Enterprise. Consumers access it through the Gemini app and AI Mode in Google Search, where it is the default model as of April 2026. The model identifier in the API is gemini-3-flash-preview.

How does Gemini 3 Flash compare to GPT-5 mini?

Gemini 3 Flash leads on most reasoning benchmarks and offers a much larger context window (1M vs ~272K). GPT-5 mini leads on mathematical reasoning, particularly on competition-style benchmarks like AIME 2025. Per-token pricing is similar (Gemini 3 Flash $0.50/$3.00 vs GPT-5 mini ~$0.25/$2.00 per 1M input/output). The right pick depends on your existing stack — Gemini 3 Flash for the Google ecosystem and multimodal-heavy workloads, GPT-5 mini for OpenAI-native pipelines.

What is Gemini 3.1 Flash-Lite and how is it different?

Gemini 3.1 Flash-Lite is Google's budget tier in the Gemini 3 family, launched in early March 2026. It is positioned below Gemini 3 Flash on cost and slightly below on raw reasoning, but Google reports it outperforms GPT-5 mini and Claude Haiku 4.5 on six internal benchmarks. Pick Gemini 3 Flash for Pro-grade reasoning at fast-tier pricing; pick Flash-Lite when cost is the absolute primary constraint and benchmarks like 86.9% GPQA Diamond are sufficient.

Does Gemini 3 Flash have an API?

Yes. Gemini 3 Flash is available through the Gemini API on Google AI Studio (free tier and paid), through Vertex AI for enterprise workloads, and through Google Cloud's Gemini Enterprise. Function calling, structured output, code execution, and Google Search grounding are all supported. Context caching reduces repeat-prompt cost by 90%. Batch API requests run at half the standard rates.

Verdict: 8.7/10

Gemini 3 Flash earns an 8.7/10 on three reasons. First, the cost-to-quality ratio is the strongest in the fast-tier category in April 2026 — 90.4% GPQA Diamond and 78% SWE-bench Verified at $0.50/$3.00 per 1M input/output tokens is genuinely new. Second, the 1M-token context window plus native multimodal input plus 90% cache discount makes the production economics of long-context agentic loops finally rational on the cheap tier. Third, the model is now the default in the Gemini consumer app and AI Mode in Search — that is a real distribution stamp.

What is holding it back from a 9.0+ score is the Preview status combined with the absence of public reasoning effort knobs. Production stacks need to plan for snapshot drift, and engineers used to OpenAI's reasoning effort dials will miss the explicit knob.

Score breakdown:

- Features: 9.0/10 — 1M context, native multimodal, code execution with image analysis, function calling, Google Search grounding all check.

- Ease of Use: 8.8/10 — AI Studio onboarding is one click; Vertex AI is heavier but production-grade.

- Value: 9.2/10 — best cost-to-quality ratio in fast tier, with batch and cache pricing on top.

- Support: 7.8/10 — Preview SLA caveat, response shape can shift, no public reasoning effort knobs.

Final word: if you are running production AI workloads in April 2026 and you have not benchmarked Gemini 3 Flash against your current fast-tier model, do it this week. The cost savings alone usually justify a migration spike, and the Pro-grade reasoning quality means you can collapse some "smart vs fast" model routing logic. The only reason to pass right now is regulatory — a Preview SLA is not acceptable for some workloads, and the historical precedent of the Gemini 3 Pro Preview shutdown on March 9, 2026 means the alias surface is genuinely moving.

Last tested: April 2026 via Google AI Studio. Pricing verified directly on ai.google.dev/gemini-api/docs/pricing on April 27, 2026. External community ratings for the per-model API surface (Trustpilot, G2, Capterra) were not available or verifiable separately from the broader Gemini brand at time of publication.

Key Features

Pros & Cons

Pros

- Pro-grade reasoning at Flash pricing — 90.4% GPQA Diamond and 78% SWE-bench Verified at $0.50 per 1M input tokens.

- 1M-token context window matches the Pro tier, opening full-codebase Q&A and multi-day agent memory at fast-tier prices.

- Native multimodal input (text/image/audio/video/file) with code execution image analysis (zoom, count, edit) as a Flash-specific capability.

- Context caching at 90% discount — $0.05 per 1M cache read tokens makes long stable system prompts almost free at scale.

- Batch API at half price — $0.25 input and $1.50 output per 1M tokens, the lowest published rate for a frontier-class model in April 2026.

- Throughput of 200+ tokens per second on streaming responses, roughly 3x faster than Gemini 2.5 Pro per Artificial Analysis.

- Default model in the Gemini consumer app and AI Mode in Google Search since launch — real consumer-scale validation.

Cons

- Preview status — quotas, snapshot identifiers and underlying weights can change without a long deprecation window. Gemini 3 Pro Preview shutdown on March 9, 2026 sets the precedent.

- No public reasoning effort knobs (low/medium/high/xhigh dials) like OpenAI's recent models, which limits explicit quality vs latency tuning.

- Long-context cost discipline required — pricing scales linearly above 200K tokens, so caching is mandatory for big-context workloads.

- Time-to-first-token is fast but not the fastest — Claude Haiku 4.5 still wins on lowest initial latency feel for short interactive prompts.

Best Use Cases

Platforms & Integrations

Available On

Integrations

We're developers and SaaS builders who use these tools daily in production. Every review comes from hands-on experience building real products — DealPropFirm, ThePlanetIndicator, PropFirmsCodes, and many more. We don't just review tools — we build and ship with them every day.

Written and tested by developers who build with these tools daily.

Frequently Asked Questions

What is Gemini 3 Flash?

Google DeepMind's fast tier in the Gemini 3 family — 90.4% GPQA Diamond, 78% SWE-bench Verified, 1M-token context, native multimodal input, $0.50 per 1M input tokens. Preview status as of April 2026.

How much does Gemini 3 Flash cost?

Gemini 3 Flash has a free tier. Premium plans start at $0.5/month.

Is Gemini 3 Flash free?

Yes, Gemini 3 Flash offers a free plan. Paid plans start at $0.5/month.

What are the best alternatives to Gemini 3 Flash?

Top-rated alternatives to Gemini 3 Flash can be found in our WebApplication category on ThePlanetTools.ai.

Is Gemini 3 Flash good for beginners?

Gemini 3 Flash is rated 8.8/10 for ease of use.

What platforms does Gemini 3 Flash support?

Gemini 3 Flash is available on Web (AI Studio, Gemini app, AI Mode in Search), REST API (Gemini API, Vertex AI), CLI (Gemini CLI), IDE (Android Studio, Google Antigravity).

Does Gemini 3 Flash offer a free trial?

Yes, Gemini 3 Flash offers a free trial.

Is Gemini 3 Flash worth the price?

Gemini 3 Flash scores 9.2/10 for value. We consider it excellent value.

Who should use Gemini 3 Flash?

Gemini 3 Flash is ideal for: High-throughput agentic coding loops where Pro-tier pricing is overkill, Real-time multimodal chat with image, audio and video input, Long-document analysis and full-codebase Q&A using the 1M-token context window, Content pipeline automation and overnight evaluation jobs via the Batch API, Vibe coding and rapid prototyping in Google AI Studio with one-click onboarding, Visual data extraction from screenshots and product catalogs via code execution image analysis, Customer support automation with function calling and Google Search grounding, Default consumer chat at scale (Gemini app and AI Mode in Search run on it).

What are the main limitations of Gemini 3 Flash?

Some limitations of Gemini 3 Flash include: Preview status — quotas, snapshot identifiers and underlying weights can change without a long deprecation window. Gemini 3 Pro Preview shutdown on March 9, 2026 sets the precedent.; No public reasoning effort knobs (low/medium/high/xhigh dials) like OpenAI's recent models, which limits explicit quality vs latency tuning.; Long-context cost discipline required — pricing scales linearly above 200K tokens, so caching is mandatory for big-context workloads.; Time-to-first-token is fast but not the fastest — Claude Haiku 4.5 still wins on lowest initial latency feel for short interactive prompts..

Ready to try Gemini 3 Flash?

Start with the free plan

Try Gemini 3 Flash Free →