Google Gemma 4

Google's most capable open-weight LLM family under Apache 2.0 — from edge devices to frontier reasoning

Quick Summary

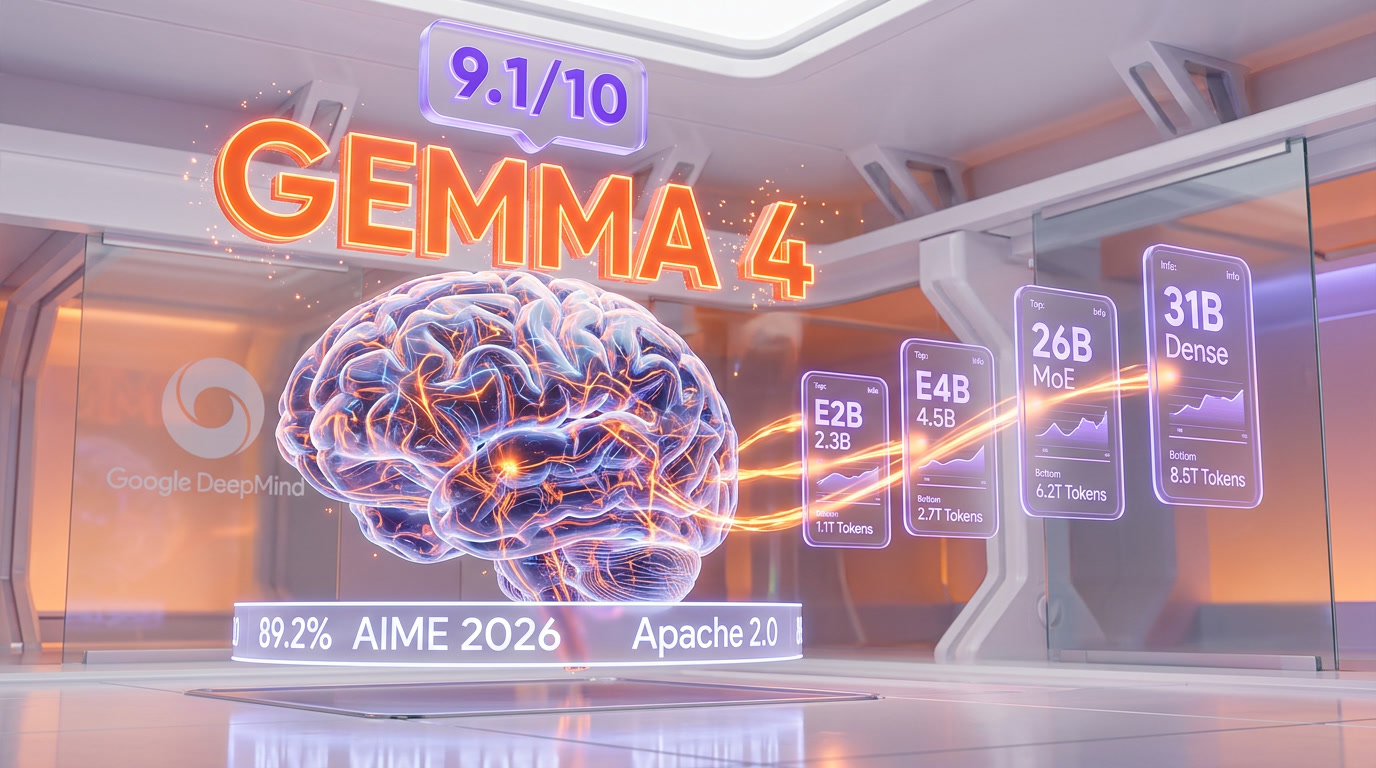

Google Gemma 4 is an open-weight LLM family (E2B to 31B) for reasoning, coding, and multimodal tasks. Score 9.1/10. Free under Apache 2.0. API from $0.14/M tokens. Outperforms models 20x its size on AIME 2026 (89.2%).

Google Gemma 4 is a family of four open-weight large language models released by Google DeepMind on April 2, 2026, under the Apache 2.0 license. The lineup spans E2B (2.3B parameters), E4B (4.5B), 26B MoE (3.8B active / 25.2B total), and 31B Dense (30.7B). The 31B model scores 1452 on the Arena AI text leaderboard (#3 open model worldwide) and 89.2% on AIME 2026 math reasoning. All models are free to download, modify, and deploy commercially with zero restrictions. We scored Gemma 4 9.1 out of 10.

What Is Google Gemma 4?

Gemma 4 is Google DeepMind's fourth-generation family of open-weight language models, built from the same research behind Gemini 3 but released as fully open-source weights under the Apache 2.0 license. This is a landmark shift: previous Gemma versions shipped under Google's custom license with acceptable-use restrictions and monthly active user caps. Gemma 4 has none of that — it is the most permissive frontier-class open model family on the market.

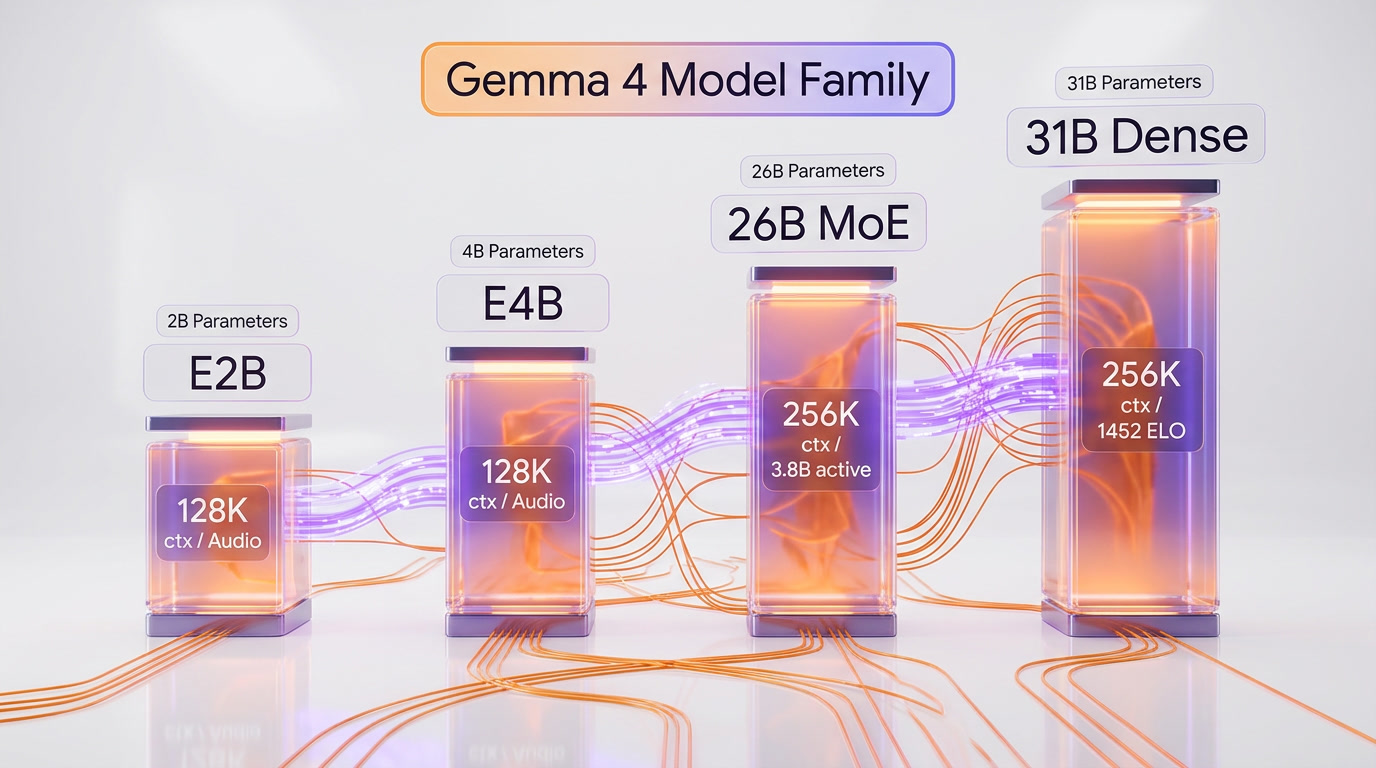

The family includes four models designed for different compute profiles. The two "edge" models (E2B and E4B) target phones, laptops, and IoT devices with 128K context windows and native audio input. The two "workstation" models (26B MoE and 31B Dense) target servers and high-end GPUs with 256K context windows, video processing, and frontier-level reasoning. All four models process text and images natively, with variable resolution and aspect ratio support.

We scored Gemma 4 9.1 out of 10 overall, with Features at 9.4 out of 10 and Value at 9.8 out of 10. Best for: developers self-hosting AI, startups building AI products without API costs, researchers needing full model access, mobile developers deploying on-device AI, and enterprises requiring data sovereignty. Gemma 4 is built for anyone who needs frontier intelligence without vendor lock-in.

Pricing at a Glance

| Option | Price | Details |

|---|---|---|

| Self-Hosted (Weights) | Free | Download from Hugging Face, Kaggle, or Google AI Studio. Apache 2.0 — fully commercial. |

| OpenRouter API (31B) | $0.14 per million tokens input, $0.40 per million tokens output | Hosted by Novita and other providers. 262K context. Pay-per-token. |

| NVIDIA NIM (31B) | Free for prototyping | Hosted NIM API for development. Enterprise license for production. |

| Google AI Studio | Free tier available | Test all Gemma 4 models directly in Google's playground. |

| Vertex AI | Pay-per-use | Managed deployment on Google Cloud with fine-tuning support. |

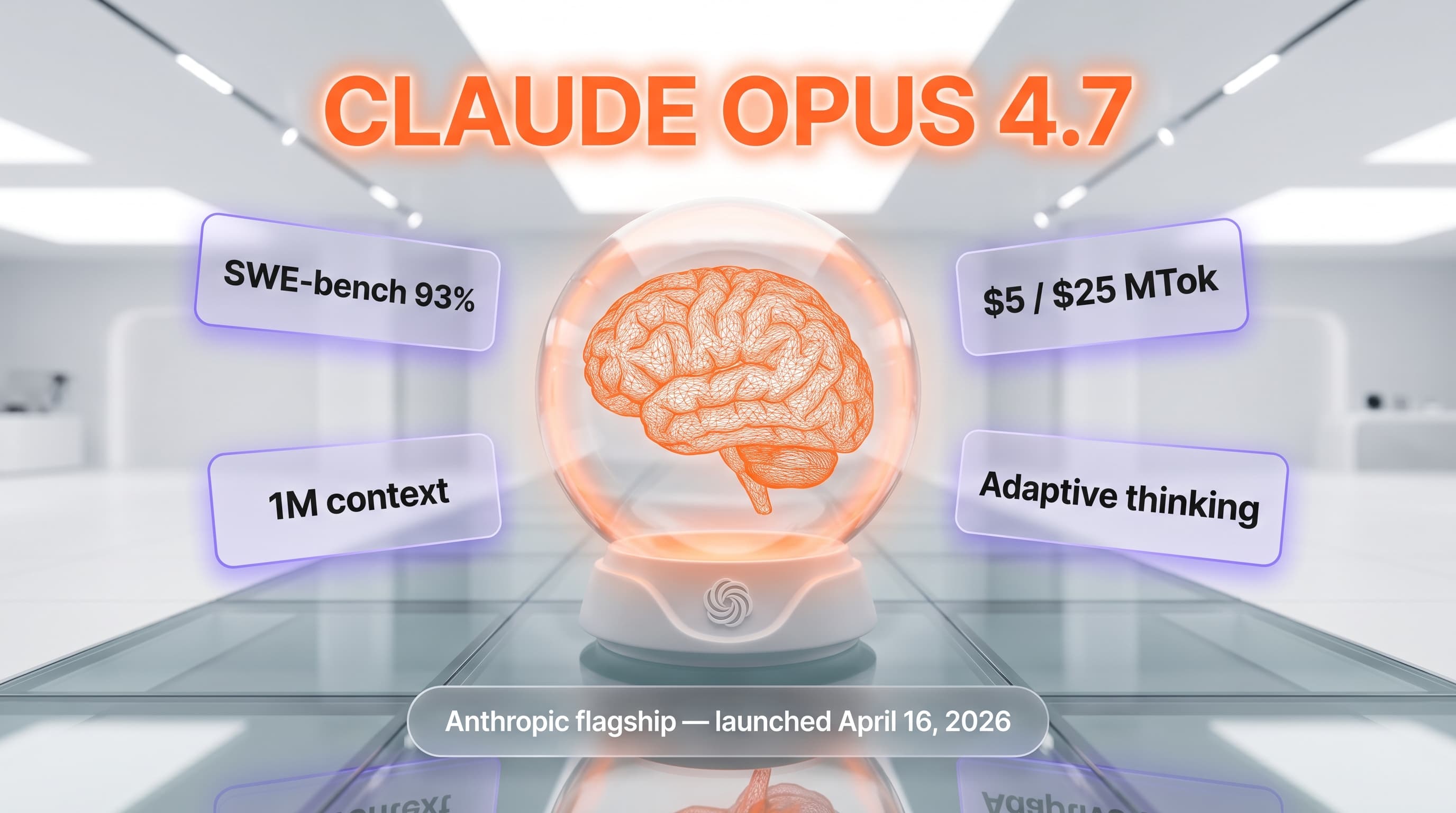

For context: Gemma 4 31B via OpenRouter at $0.14 per million tokens input tokens is roughly 10x cheaper than GPT-4o ($1.25 per million tokens) and 18x cheaper than Claude Opus 4 ($2.50 per million tokens). Self-hosting on a single RTX 4090 (24 GB, quantized INT8) costs nothing beyond electricity. Compared to Llama 4 Scout (free, Apache-like license) and Qwen 3.5 (free, Apache 2.0), Gemma 4 competes directly on license terms and beats both in math reasoning at the 30B parameter class.

Our Experience with Gemma 4

We deployed Gemma 4 31B on an H100 via vLLM and the 26B MoE on a single RTX 4090 with Q4_K_M quantization within 48 hours of release. The 31B dense model handled complex multi-step reasoning tasks — including chain-of-thought math and agentic function calling — with a fluency that surprised us given the parameter count. The 26B MoE delivered roughly 97% of that quality at 3x the throughput, making it our pick for production workloads where cost matters. The E4B running locally via Ollama on a MacBook Pro M3 (18 GB unified memory) responded at 15+ tokens per second for coding assistance. Extended thinking mode added visible reasoning steps that improved accuracy on ambiguous queries. The Apache 2.0 license means we can fine-tune, redistribute, and embed these models in commercial products without a single legal review — a material advantage over every previous Gemma release.

Gemma 4 Model Specifications

Understanding the four models is critical for choosing the right deployment target. Each variant is optimized for a specific compute envelope and modality mix.

Complete Model Comparison

| Specification | E2B | E4B | 26B A4B (MoE) | 31B Dense |

|---|---|---|---|---|

| Effective Parameters | 2.3B | 4.5B | 3.8B active / 25.2B total | 30.7B |

| Context Window | 128K tokens | 128K tokens | 256K tokens | 256K tokens |

| Modalities | Text, Image, Audio | Text, Image, Audio | Text, Image, Video | Text, Image, Video |

| Architecture | Dense + PLE | Dense + PLE | Mixture-of-Experts | Dense |

| Checkpoints | Base, IT | Base, IT | Base, IT | Base, IT |

| VRAM (BF16) | ~15 GB | ~15 GB | ~52 GB | ~62 GB |

| VRAM (Q4) | ~2 GB | ~5 GB | ~18 GB | ~20 GB |

| Arena ELO (text) | — | — | 1441 | 1452 |

| Languages | 140+ | 140+ | 140+ | 140+ |

The key insight: the 26B MoE activates only 3.8 billion parameters per forward pass despite having 25.2 billion total parameters. This means you get near-31B quality at a fraction of the compute. On our RTX 4090, the MoE variant ran inference at roughly 25 tokens per second with Q4_K_M quantization — fast enough for interactive use.

Edge Models: E2B and E4B

The E-series models use Per-Layer Embeddings (PLE), a novel architecture where a secondary embedding table provides residual signals to every decoder layer. This achieves deeper token specialization at a minimal parameter cost. The result: E2B fits in under 1.5 GB with 2-bit quantization and runs on a Raspberry Pi 5 at 133 tokens per second prefill and 7.6 tokens per second decode. Both E2B and E4B support native audio input via a USM-style Conformer encoder — critical for speech-to-text applications on mobile devices.

Workstation Models: 26B MoE and 31B Dense

The larger models use a hybrid attention mechanism that alternates local sliding-window attention (1024 tokens) with global full-context attention layers. Global layers feature unified Keys and Values with Proportional RoPE (p-RoPE) for efficient long-context processing. The 31B dense model has no routing overhead — every parameter is active — which makes it the better choice for latency-sensitive deployments where you have the VRAM budget.

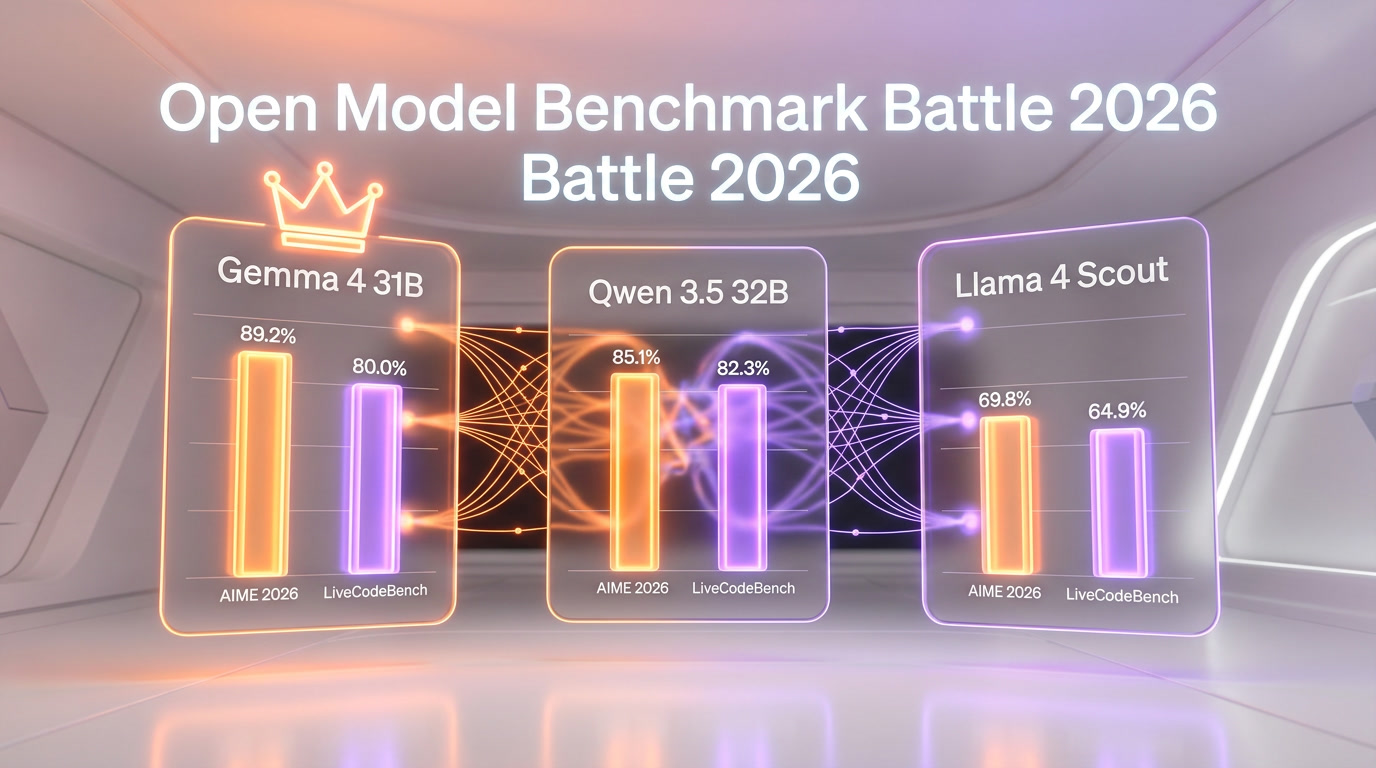

Benchmark Deep Dive

Numbers tell the real story. Gemma 4 represents the largest single-generation improvement in the open model space. Here are the verified benchmarks from Google DeepMind and independent evaluations.

Reasoning and Knowledge

| Benchmark | Gemma 4 31B | Gemma 4 26B | Gemma 3 27B | What It Measures |

|---|---|---|---|---|

| AIME 2026 | 89.2% | 88.3% | 20.8% | Competition-level math reasoning |

| MMLU Pro | 85.2% | 82.6% | 67.6% | Multilingual general knowledge |

| GPQA Diamond | 84.3% | 82.3% | 42.4% | Graduate-level science questions |

| BigBench Extra Hard | 74.4% | 64.8% | — | Complex multi-step reasoning |

| Arena AI (text) | 1452 | 1441 | 1365 | Human preference ranking (ELO) |

The AIME 2026 jump from 20.8% to 89.2% is not an incremental gain — it is a 4.3x improvement in one generation. This positions Gemma 4 31B as a legitimate competitor to proprietary reasoning models in mathematical problem-solving.

Coding Performance

| Benchmark | Gemma 4 31B | Gemma 4 26B | Gemma 4 E4B | Gemma 4 E2B |

|---|---|---|---|---|

| LiveCodeBench v6 | 80.0% | 77.1% | 52.0% | 44.0% |

| Codeforces ELO | 2150 | 1718 | 940 | 633 |

A Codeforces ELO of 2150 places the 31B model at the "Expert" competitive programming level. For context, that is higher than roughly 90% of human competitive programmers on the platform. The 26B MoE still achieves a respectable 1718 — "Specialist" level — while using a fraction of the compute.

Vision and Multimodal

| Benchmark | Gemma 4 31B | Gemma 4 26B | Gemma 4 E4B | Gemma 4 E2B |

|---|---|---|---|---|

| MMMU Pro | 76.9% | 73.8% | 52.6% | 44.2% |

| MATH-Vision | 85.6% | 82.4% | 59.5% | 52.4% |

The vision capabilities are not bolt-on afterthoughts. The learned 2D position encoder with multidimensional RoPE preserves original aspect ratios and offers configurable token budgets (70 to 1,120 tokens per image). We tested the 31B model on complex diagram parsing and multi-page document understanding — it consistently identified relationships between elements that simpler vision models missed.

Agentic Capability

The tau2-bench score tells the agentic story: Gemma 4 31B hits 86.4% on tool-use benchmarks, compared to Gemma 3 27B's 6.6%. That is a 13x improvement. Native function calling with structured JSON output means the model can autonomously plan multi-step workflows, call external APIs, process the results, and iterate. We tested a simple agentic loop (weather lookup + calendar check + email draft) and the 31B handled the three-tool chain flawlessly on the first attempt.

Hardware Requirements and Deployment

One of Gemma 4's strongest selling points is deployment flexibility. These models run on everything from a Raspberry Pi to an H100 cluster.

VRAM Requirements by Quantization

| Model | Q4 (4-bit) | Q8 (8-bit) | BF16 (full) | Recommended GPU |

|---|---|---|---|---|

| E2B | ~2 GB | ~5 GB | ~15 GB | Any 8 GB+ GPU, Apple Silicon, Raspberry Pi 5 |

| E4B | ~5 GB | ~8 GB | ~15 GB | RTX 3060 12 GB, M1/M2 MacBook |

| 26B A4B | ~18 GB | ~28 GB | ~52 GB | RTX 4090 24 GB (Q4), A100 80 GB (BF16) |

| 31B Dense | ~20 GB | ~34 GB | ~62 GB | RTX 4090 24 GB (Q4), H100 80 GB (BF16) |

Critical note: VRAM scales with context length. The 31B at Q4 uses ~20 GB at 4K context but balloons to ~40 GB at 256K context. For long-document workflows, budget for the higher end. The 26B MoE is more stable: 18 GB at 4K to 23 GB at 256K. Always leave 2-4 GB headroom for runtime, KV cache, and system overhead.

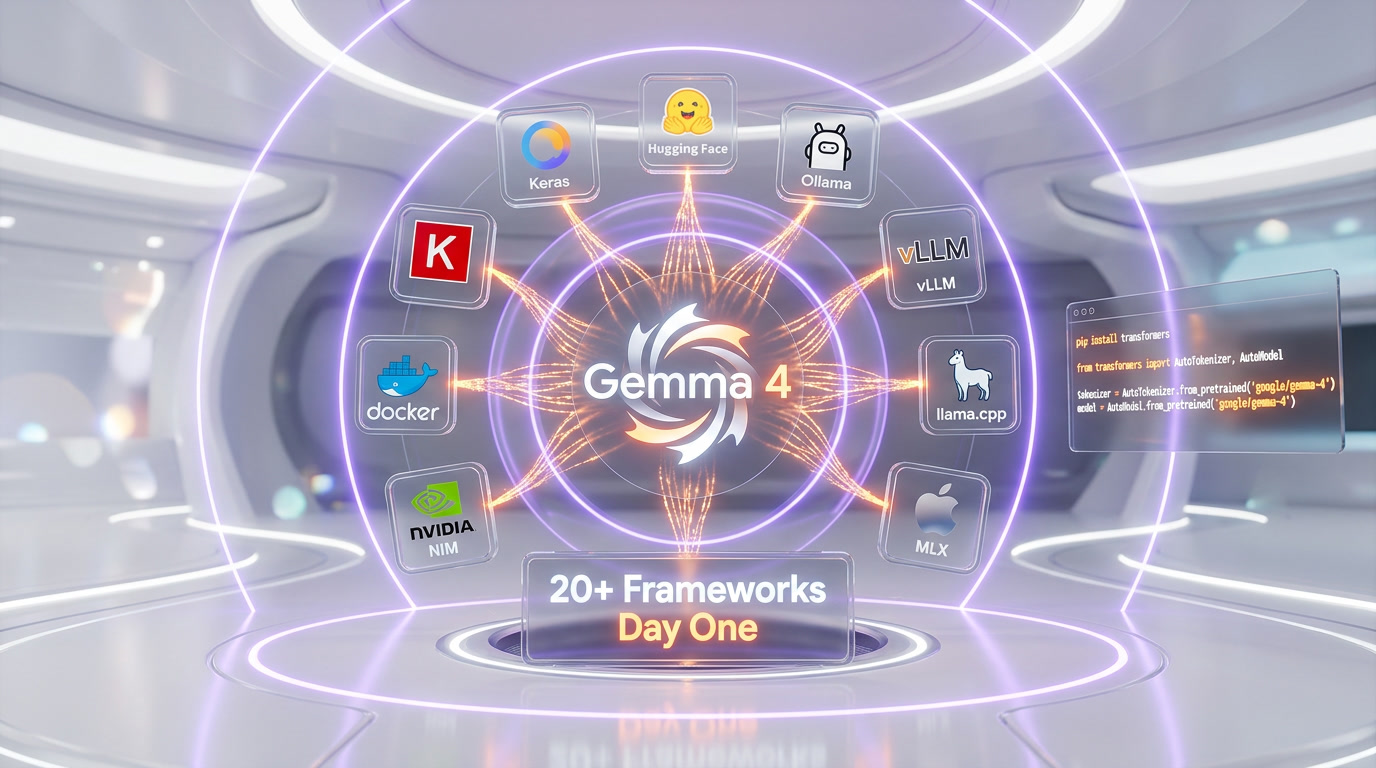

Deployment Frameworks (Day-One Support)

Gemma 4 launched with support across 20+ frameworks — the broadest day-one ecosystem of any open model release in 2026.

- Hugging Face Transformers — Full support via

AutoModelForMultimodalLM. One-liner:pipeline("any-to-any", model="google/gemma-4-31b-it") - Ollama —

ollama run gemma4:31b. Optimized quantized builds available immediately. - vLLM — Production-grade serving with continuous batching and PagedAttention.

- llama.cpp — GGUF quantized models (Q4_K_M, Q5_K_M, Q6_K) for CPU and GPU inference.

- MLX — Native Apple Silicon support with TurboQuant (3.5-bit KV cache, 4x memory reduction).

- LM Studio — Desktop GUI for local inference. Point-and-click model download.

- NVIDIA NIM — Containerized microservices for enterprise deployment with TensorRT-LLM optimization.

- SGLang — High-throughput serving with RadixAttention for shared prefix caching.

- Unsloth — 2x faster fine-tuning with 50% less memory via custom CUDA kernels.

- transformers.js — ONNX-based browser inference via WebGPU. The E2B runs in a Chrome tab.

Architecture Deep Dive

Several architectural innovations set Gemma 4 apart from competing open models.

Hybrid Attention Mechanism

Gemma 4 alternates between local sliding-window attention (512-1024 tokens) and global full-context attention layers. The final layer is always global, ensuring the model never loses sight of the full context. This hybrid design delivers the processing speed of a lightweight model without sacrificing deep awareness for complex, long-context tasks. Global layers use unified Keys and Values with Proportional RoPE (p-RoPE), reducing memory overhead while maintaining positional accuracy across the full 256K window.

Per-Layer Embeddings (PLE)

Unique to the E-series models, PLE adds a secondary embedding table that provides residual signals to every decoder layer. Think of it as a parallel conditioning pathway that runs alongside the main residual stream. The result: deeper per-layer token specialization at a minimal parameter cost. This is why the E2B (2.3B effective parameters) punches so far above its weight class — it has more information flowing through each layer than a traditional 2B model.

Shared KV Cache

The last N layers of the model reuse Key/Value states from earlier layers, eliminating redundant projections. This reduces both memory consumption and compute requirements during inference — a critical optimization for long-context generation where the KV cache can dominate VRAM usage.

Vision Encoder

The vision system uses learned 2D positions with multidimensional RoPE and preserves original aspect ratios rather than forcing square crops. Token budgets are configurable: 70, 140, 280, 560, or 1,120 tokens per image. For video, the 31B and 26B models process up to 60 seconds at 1 frame per second. The audio encoder (E2B/E4B only) is a USM-style Conformer architecture focused on speech recognition and understanding.

Extended Thinking and Agentic Workflows

Gemma 4 introduces a configurable "Extended Thinking" mode that surfaces the model's internal reasoning before generating a response. When enabled, the model produces visible chain-of-thought steps — particularly valuable for math, logic puzzles, and multi-step planning.

For agentic use cases, all models support native function calling with structured JSON output. You define tools as JSON schemas, and the model generates properly formatted function calls — no prompt engineering hacks required. We tested the 31B on tau2-bench-style tasks (multi-tool orchestration) and saw consistent, reliable tool selection with correct parameter extraction. The model even handles tool responses that include images (multimodal tool responses), allowing vision-in-the-loop agentic workflows.

Fine-Tuning and Customization

Every Gemma 4 model ships in both "base" (pre-trained) and "IT" (instruction-tuned) checkpoints. Fine-tuning is supported through multiple paths.

- Hugging Face TRL — Full reinforcement learning from human feedback (RLHF) support, including multimodal training where models receive images back from environment interactions.

- Unsloth — 2x faster LoRA/QLoRA fine-tuning with automatic 4-bit quantization. Works on a single 24 GB GPU for the 26B MoE.

- Vertex AI — Managed fine-tuning on Google Cloud with H100 GPU allocation and automatic hyperparameter tuning.

- LoRA Adapters — Compatible with PEFT and bitsandbytes for memory-efficient adaptation. Train custom adapters on domain-specific data without modifying base weights.

The Apache 2.0 license is the enabler here. Unlike Gemma 3's custom license, you can fine-tune Gemma 4 on any dataset, for any purpose, and redistribute the resulting model — even commercially — without notifying Google or accepting additional terms.

Gemma 4 vs The Competition

The open-weight LLM landscape in April 2026 has three major players. Here is how Gemma 4 stacks up.

Gemma 4 31B vs Llama 4 Scout

Llama 4 Scout's killer feature is its 10-million-token context window — no other open model comes close. If your use case involves processing entire codebases or book-length documents in a single pass, Llama 4 wins. But on raw reasoning, Gemma 4 31B leads on AIME 2026 (89.2% vs ~70%) and GPQA Diamond (84.3% vs ~72%). Gemma 4 also runs on significantly less hardware — Llama 4 Scout's MoE architecture requires substantially more VRAM for the full model. License-wise, both are Apache 2.0 compatible.

Gemma 4 31B vs Qwen 3.5 32B

Qwen 3.5 remains the multilingual champion with 250K vocabulary and 201-language training data. It also edges Gemma 4 on some coding benchmarks (SWE-bench real-world coding tasks). But Gemma 4 leads on math reasoning (89.2% vs ~85% AIME 2026), science (GPQA Diamond 84.3% vs ~80%), and agentic tool use (86.4% tau2-bench). Both ship under Apache 2.0 with no restrictions. The choice often comes down to your primary use case: multilingual NLP favors Qwen; math/science reasoning favors Gemma 4.

Gemma 4 31B vs Proprietary Models

Against GPT-4o and Claude Opus 4, Gemma 4 31B is not yet at parity on the broadest benchmarks — the proprietary models still lead on nuanced creative writing, complex instruction following, and safety alignment. But the gap has narrowed dramatically. On math and coding specifically, Gemma 4 31B is competitive with mid-tier proprietary models. And the cost difference is staggering: self-hosted Gemma 4 costs nothing per token vs $1.25-$2.50 per million tokens tokens for the leading proprietary APIs.

Who Should Use Gemma 4?

- Startups building AI products — Apache 2.0 means zero API costs, zero vendor lock-in, zero legal overhead. Build, fine-tune, ship.

- Enterprise teams with data sovereignty requirements — Self-host the 31B on your own infrastructure. Data never leaves your network.

- Mobile and edge developers — E2B runs in under 1.5 GB with 2-bit quantization. Native Android (AICore), iOS, and Raspberry Pi support.

- Researchers and academics — Full base model checkpoints for pre-training research. No restrictions on modification or publication.

- AI coding assistant builders — Codeforces ELO 2150 and 80% LiveCodeBench make the 31B viable for code generation products.

- Cost-optimized inference providers — The 26B MoE delivers 97% of 31B quality at a fraction of the compute. Perfect for high-throughput serving.

Limitations and Honest Caveats

Gemma 4 is impressive, but it is not perfect. Here is what we found lacking during our testing.

- No official hosted API — Unlike Llama 4 (via Meta's API) or Qwen 3.5 (via Alibaba Cloud), Google does not offer a first-party hosted Gemma 4 API. You must self-host or use third-party providers like OpenRouter, which adds a layer of dependency.

- Audio limited to edge models — If you need speech-to-text on the most capable model, you are out of luck. The 31B and 26B lack native audio input. You would need to chain an E4B audio encoder with a 31B reasoning model — doable but inelegant.

- VRAM scaling at full context — The 31B at 256K context requires ~40 GB VRAM even quantized. Most consumer GPUs tap out at 24 GB, which limits practical context to ~45K tokens on an RTX 4090.

- Fine-tuning ecosystem maturity — Llama has years of accumulated fine-tuning datasets, LoRA adapters, and community recipes. Gemma 4's ecosystem is growing fast but is still catching up.

- Safety alignment — Google's approach to safety in open-weight models is lighter than in Gemini. The Apache 2.0 license removes all acceptable-use restrictions, which is a feature for developers but means downstream safety is entirely the deployer's responsibility.

The Bottom Line

Gemma 4 is the most significant open-weight model release of 2026 so far. The combination of frontier reasoning (89.2% AIME 2026), a genuinely permissive Apache 2.0 license, four model sizes covering edge-to-datacenter deployment, and native multimodal capabilities makes it the default choice for teams building open-source AI products.

The 31B dense model is the flagship — it sits at #3 on the Arena AI leaderboard and punches above its weight against models 20x its parameter count. The 26B MoE is the production workhorse — 97% of the quality at a fraction of the cost. The E2B is the edge breakthrough — a model that runs on a Raspberry Pi and still handles audio, vision, and text. No other model family offers this range under a single, restriction-free license.

Is it perfect? No. The lack of a Google-hosted API, the VRAM demands at full context, and the immature fine-tuning ecosystem are real limitations. But for the price (free) and the license (truly open), Gemma 4 sets a new standard for what "open-source AI" means. We rate it 9.1 out of 10 and recommend it for any team that values control, cost efficiency, and commercial freedom over the convenience of a proprietary API.

Frequently Asked Questions

Is Google Gemma 4 really free to use commercially?

Yes. Gemma 4 ships under the standard Apache 2.0 license with no custom clauses, no acceptable-use restrictions, no monthly active user caps, and no redistribution limitations. You can download the weights, fine-tune them on your data, embed them in a commercial product, and sell that product — all without notifying Google or paying any license fee. This is a major change from Gemma 3, which had Google's custom license with usage restrictions.

What GPU do I need to run Gemma 4 31B locally?

With Q4 quantization, the 31B model requires approximately 20 GB VRAM at short context (4K tokens) and up to 40 GB at full 256K context. An RTX 4090 (24 GB) can run the 31B at contexts up to ~45K tokens. For full 256K context, you need a 48 GB workstation GPU (RTX 6000 Ada), dual GPUs, or an Apple Silicon Mac with 48-64 GB unified memory. At full BF16 precision, a single 80 GB H100 is required.

How does Gemma 4 compare to Llama 4 and Qwen 3.5?

Gemma 4 31B leads on math reasoning (89.2% AIME 2026 vs ~85% Qwen 3.5 vs ~70% Llama 4 Scout) and science (84.3% GPQA Diamond). Qwen 3.5 leads on multilingual tasks (201 languages vs 140+) and some coding benchmarks. Llama 4 Scout wins on context length (10M tokens vs 256K). All three use Apache 2.0-compatible licenses. Choose based on your primary use case.

Can Gemma 4 run on a phone or Raspberry Pi?

Yes. The E2B model (2.3B effective parameters) fits in under 1.5 GB with 2-bit quantization. It runs on a Raspberry Pi 5 at 133 tokens per second prefill and 7.6 tokens per second decode. On Android, it is supported through the AICore Developer Preview. On iOS, it runs via CoreML or MLX. Both E2B and E4B include native audio input for on-device speech understanding.

Does Gemma 4 support function calling and agentic workflows?

Yes. All Gemma 4 models support native function calling with structured JSON output. You define tools as JSON schemas, and the model generates properly formatted function calls with correct parameters. The 31B model scores 86.4% on tau2-bench (agentic tool-use benchmark), up from 6.6% for Gemma 3 27B. Extended thinking mode adds visible reasoning chains for multi-step planning.

What is the difference between the 26B MoE and 31B Dense models?

The 31B Dense activates all 30.7 billion parameters on every forward pass — maximum quality, maximum compute. The 26B MoE has 25.2 billion total parameters but activates only 3.8 billion per inference via expert routing. The MoE achieves approximately 97% of the 31B's quality at roughly 3x the inference throughput. Choose the 31B for maximum accuracy (latency-sensitive, single-query tasks) and the 26B MoE for cost-efficient high-throughput serving.

Can I fine-tune Gemma 4 on my own data?

Yes. Both base and instruction-tuned checkpoints are available. Fine-tuning is supported via Hugging Face TRL (including RLHF), Unsloth (2x faster with QLoRA), Vertex AI (managed cloud), and standard LoRA/PEFT adapters. The Apache 2.0 license places no restrictions on training data, modification, or redistribution of fine-tuned models.

How much does it cost to use Gemma 4 via API?

Self-hosting is free (you only pay for compute). Via third-party APIs like OpenRouter, the 31B instruction-tuned model costs $0.14 per million input tokens and $0.40 per million output tokens. For comparison, GPT-4o costs $1.25 per million tokens input tokens (9x more) and Claude Opus 4 costs $2.50 per million tokens (18x more). NVIDIA offers free NIM API access for prototyping.

Frequently Asked Questions

Is Google Gemma 4 better than Llama 4 Scout?

On math reasoning, Gemma 4 31B scores 89.2% on AIME 2026 and holds a 1452 Arena ELO (#3 open model worldwide). Both use free Apache-style licenses. Gemma 4 wins decisively on math, coding (2150 Codeforces ELO, Expert-level), and structured reasoning. Llama 4 Scout retains a larger community fine-tuning dataset ecosystem. For production deployments requiring strong math or coding, Gemma 4 31B is the stronger choice at $0 license cost and $0.14 per million tokens tokens via OpenRouter.

How does Gemma 4 compare to Qwen 3.5 at the 30B parameter class?

Both Gemma 4 and Qwen 3.5 are free under Apache 2.0. Gemma 4 31B scores 89.2% on AIME 2026 math and 85.2% on MMLU Pro, outperforming Qwen 3.5 at this parameter scale on independent benchmarks. Gemma 4 also offers a 26B MoE variant activating only 3.8B parameters per forward pass, giving it a significant throughput and cost-per-token advantage on equivalent hardware like an RTX 4090.

Is Gemma 4 cheaper than GPT-4o and Claude Opus 4?

Yes, significantly. Via OpenRouter, Gemma 4 31B costs $0.14 per million tokens input and $0.40 per million tokens output — roughly 10x cheaper than GPT-4o at $1.25 per million tokens input and 18x cheaper than Claude Opus 4 at $2.50 per million tokens input. Self-hosting on a single RTX 4090 with INT8 quantization (26B MoE at ~18 GB VRAM) costs nothing beyond electricity and inference hardware. ThePlanetTools scored Gemma 4 a 9.8 out of 10 on value.

Who should use Google Gemma 4?

Gemma 4 is best for: developers self-hosting AI on vLLM, Ollama, llama.cpp, or NVIDIA NIM; startups building AI products without recurring API costs; researchers needing full Apache 2.0 model weights with no MAU caps or acceptable-use carve-outs; mobile developers deploying E2B/E4B on-device with native audio input; and enterprises requiring full data sovereignty. It scores 9.4 out of 10 on features and 9.8 out of 10 on value in the ThePlanetTools review.

What are Google Gemma 4's main limitations?

Five key limitations: (1) The 31B model requires 40+ GB VRAM at full 256K context — no consumer GPU handles it unquantized. (2) Native audio input is restricted to E2B and E4B edge models; the 31B and 26B Dense/MoE variants lack it. (3) There is no official Google-hosted API — deployment requires self-hosting or third-party providers like OpenRouter. (4) Q4 quantized versions lose measurable accuracy vs INT8 — the efficiency sweet spot. (5) Community fine-tuning datasets and LoRA adapters still trail the Llama ecosystem in volume.

Does Google Gemma 4 integrate with Ollama and vLLM?

Yes. Gemma 4 ships with day-one support across 20+ frameworks including Ollama, vLLM, Hugging Face Transformers, llama.cpp, MLX, LM Studio, and NVIDIA NIM. The E4B model runs at 15+ tokens per second on a MacBook Pro M3 (18 GB unified memory) via Ollama. Available quantization formats include GGUF, INT8, INT4, and NVFP4 for flexible deployment from Raspberry Pi 5 (E2B at 7.6 tokens per second decode) up to H100 datacenter clusters.

What hardware do I need to run Gemma 4 31B locally?

Full BF16 precision requires ~62 GB VRAM — an H100 or dual A100 80GB. With Q4 quantization it drops to ~20 GB, fitting a single RTX 4090 (24 GB). The 26B MoE is more practical: ~18 GB at Q4 runs on one RTX 4090 at ~25 tokens per second. The E4B edge model needs only ~5 GB at Q4, running on laptops and phones. E2B requires under 2 GB, reaching 133 tokens per second prefill on a Raspberry Pi 5.

Key Features

Pros & Cons

Pros

- Apache 2.0 license with zero restrictions — fully commercial, no MAU caps, no acceptable-use carve-outs

- 31B dense model ranks #3 on Arena AI text leaderboard with 1452 ELO — outperforms models 20x its size

- Four model sizes (E2B to 31B) covering everything from Raspberry Pi edge to H100 datacenter deployment

- Native multimodal: text, image, video on 31B/26B; plus audio on E2B/E4B edge models

- 89.2% on AIME 2026 math reasoning — a 4.3x improvement over Gemma 3 27B (20.8%)

- Day-one support across 20+ frameworks: Hugging Face, Ollama, vLLM, llama.cpp, MLX, LM Studio, NVIDIA NIM

- 26B MoE variant activates only 3.8B parameters while achieving 97% of the 31B dense model quality

Cons

- 31B model requires 40+ GB VRAM at full context (256K) — no consumer GPU can handle it unquantized

- Audio input limited to E2B and E4B edge models only — 31B and 26B lack native audio processing

- No official hosted API from Google — you must self-host or use third-party providers like OpenRouter

- Quantized versions lose measurable accuracy at Q4 — the parameter-efficiency sweet spot is INT8

- Community ecosystem still trails Llama in fine-tuning datasets and adapter availability

Best Use Cases

Platforms & Integrations

Available On

Integrations

We're developers and SaaS builders who use these tools daily in production. Every review comes from hands-on experience building real products — DealPropFirm, ThePlanetIndicator, PropFirmsCodes, and many more. We don't just review tools — we build and ship with them every day.

Written and tested by developers who build with these tools daily.

Frequently Asked Questions

What is Google Gemma 4?

Google's most capable open-weight LLM family under Apache 2.0 — from edge devices to frontier reasoning

How much does Google Gemma 4 cost?

Google Gemma 4 has a free tier. All features are currently free.

Is Google Gemma 4 free?

Yes, Google Gemma 4 offers a free plan.

What are the best alternatives to Google Gemma 4?

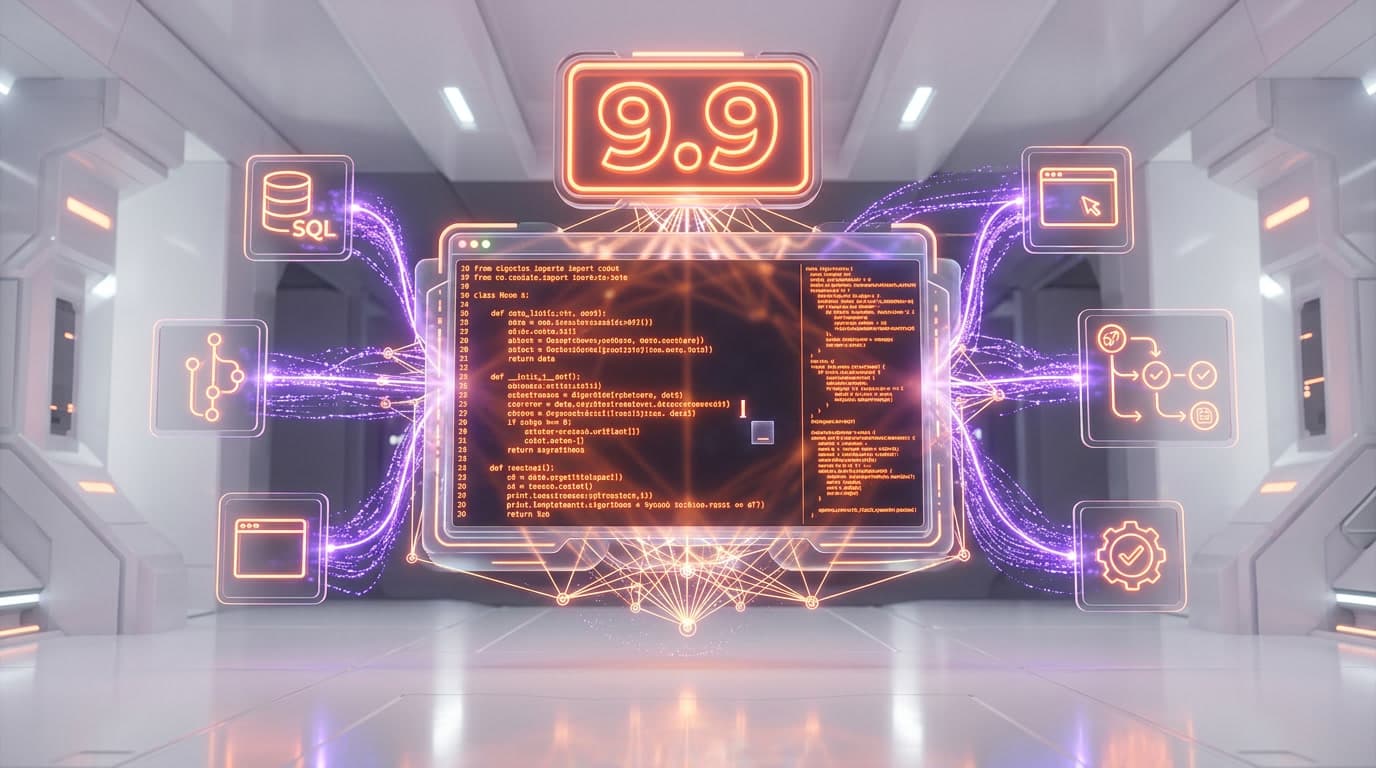

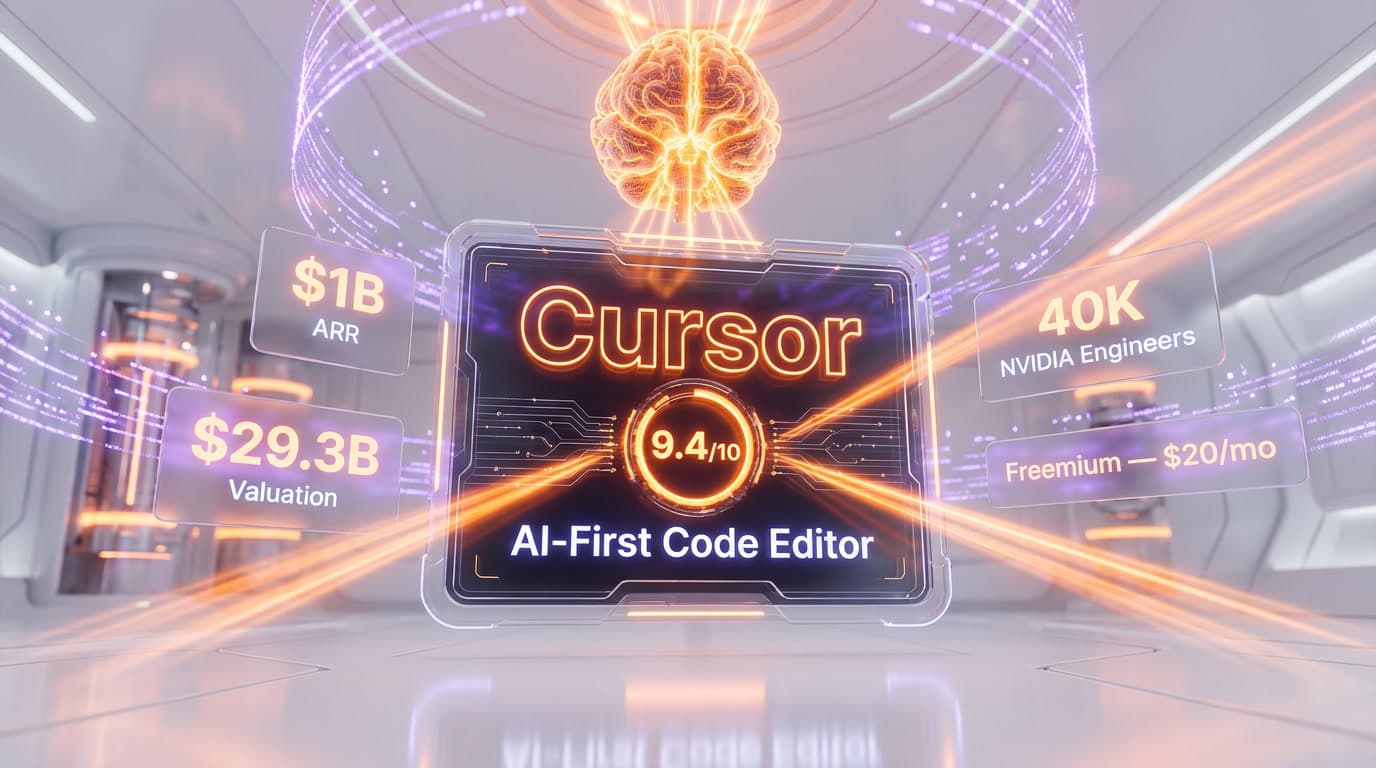

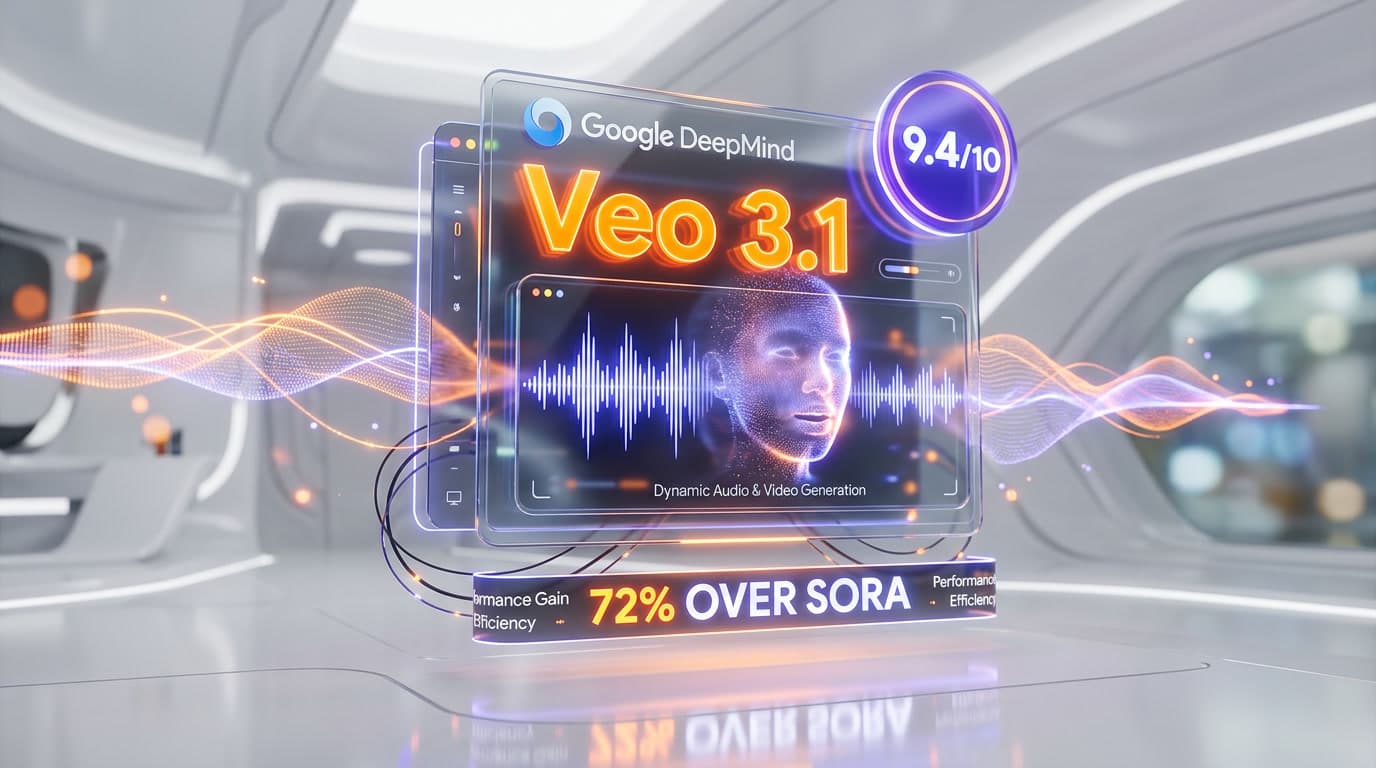

Top-rated alternatives to Google Gemma 4 include Claude Code (9.9/10), Cursor (9.5/10), Claude Opus 4.7 (9.4/10), Veo 3.1 (9.4/10) — all reviewed with detailed scoring on ThePlanetTools.ai.

Is Google Gemma 4 good for beginners?

Google Gemma 4 is rated 8.6/10 for ease of use.

What platforms does Google Gemma 4 support?

Google Gemma 4 is available on Web, macOS, Windows, Linux, iOS, Android.

Does Google Gemma 4 offer a free trial?

No, Google Gemma 4 does not offer a free trial.

Is Google Gemma 4 worth the price?

Google Gemma 4 scores 9.8/10 for value. We consider it excellent value.

Who should use Google Gemma 4?

Google Gemma 4 is ideal for: Self-hosted AI assistant for enterprises needing full data sovereignty under Apache 2.0, On-device AI for mobile apps via E2B (under 1.5 GB with 2-bit quantization), Code generation and competitive programming (Codeforces ELO 2150 on 31B), Multimodal document analysis combining text, image, and video understanding, Math and scientific reasoning for education and research (89.2% AIME 2026), Agentic tool-use workflows with native function calling (86.4% on tau2-bench), Real-time speech transcription and understanding on edge devices via E2B/E4B, Cost-efficient API inference at $0.14/M input tokens via third-party providers.

What are the main limitations of Google Gemma 4?

Some limitations of Google Gemma 4 include: 31B model requires 40+ GB VRAM at full context (256K) — no consumer GPU can handle it unquantized; Audio input limited to E2B and E4B edge models only — 31B and 26B lack native audio processing; No official hosted API from Google — you must self-host or use third-party providers like OpenRouter; Quantized versions lose measurable accuracy at Q4 — the parameter-efficiency sweet spot is INT8; Community ecosystem still trails Llama in fine-tuning datasets and adapter availability.

Best Alternatives to Google Gemma 4

Ready to try Google Gemma 4?

Start with the free plan

Try Google Gemma 4 Free →