GPT-5.4

OpenAI intermediate frontier model from March 2026 — 1.05M context, $2.50 input and $15 output per million tokens, native computer use, predecessor of GPT-5.5.

Quick Summary

GPT-5.4 is OpenAI intermediate frontier LLM released March 5, 2026. It ships with a 1,050,000-token context window, 128,000 max output tokens, native computer-use, and 5-level reasoning effort. Pricing: $2.50 per million input tokens, $0.25 cached, $15 per million output. Score: 8.0/10.

GPT-5.4 is OpenAI intermediate frontier large language model released March 5, 2026. It ships with a 1,050,000-token context window, 128,000 max output tokens, native computer-use, and five reasoning effort levels. Pricing: $2.50 per million input tokens, $0.25 cached input, and $15 per million output tokens. Score: 8.0/10.

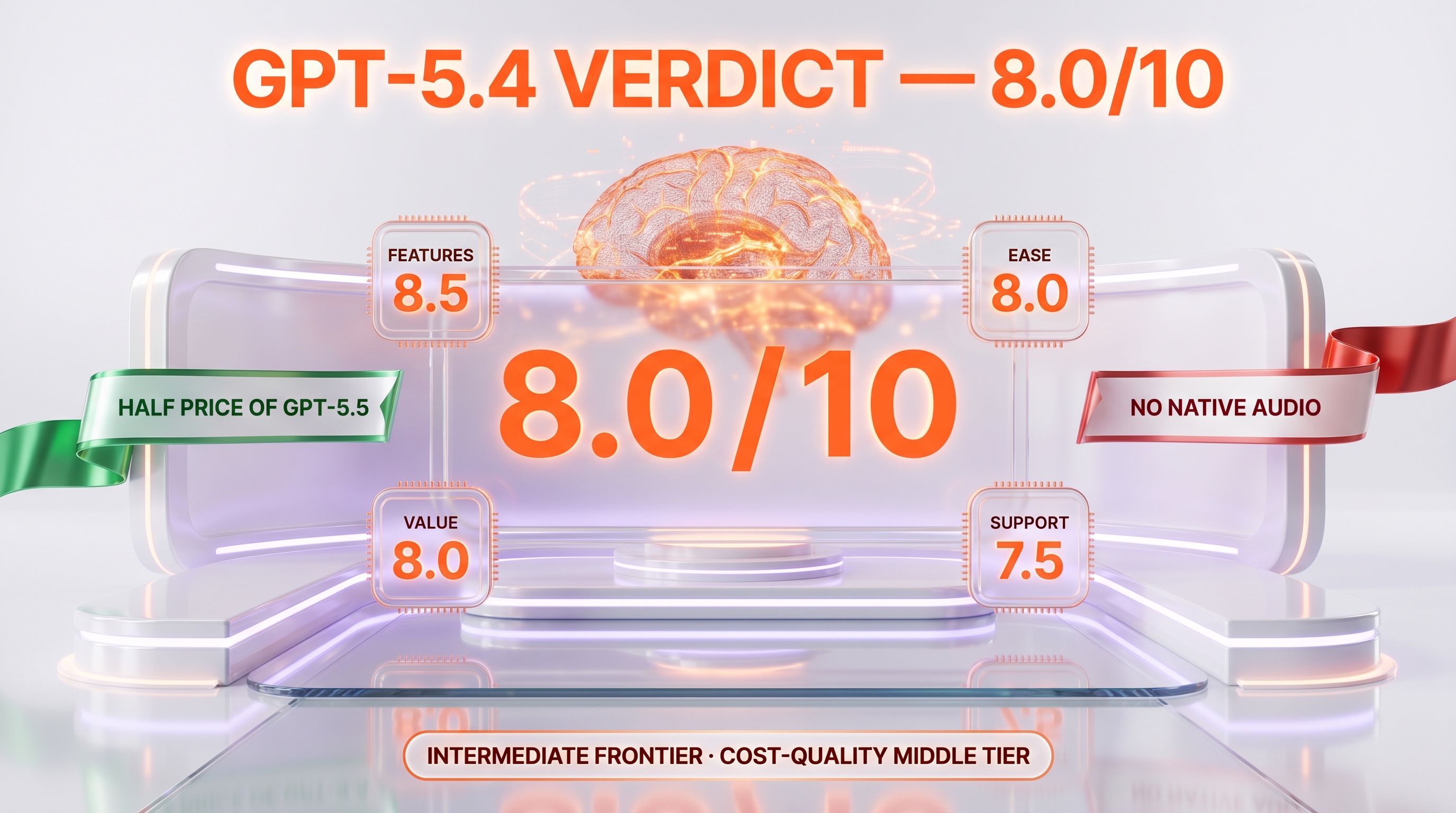

TL;DR — Our Verdict

Score: 8.0/10. GPT-5.4 is the OpenAI frontier model that shipped March 5, 2026 as the cost-quality intermediate between GPT-5.3-Codex and GPT-5.5. It introduced native computer-use in Codex, a 1.05M context window, the new 5-level reasoning effort scale (none, low, medium, high, xhigh), and the new Tool Search system. Best for production pipelines pinned to gpt-5.4-2026-03-05 that want the GPT-5.5 stack at half the input price. Skip it for new projects starting after April 24, 2026 — GPT-5.5 ships with 40 percent fewer tokens per task on agentic workloads and a higher 58.6 percent SWE-bench Pro score.

- 1,050,000-token context window with 128,000 max output tokens

- $2.50 per million input tokens — half the GPT-5.5 input price for the same context size

- First general-purpose OpenAI model with native computer-use in Codex

- New 5-level reasoning effort scale (none, low, medium, high, xhigh)

- 33 percent fewer claim errors and 18 percent fewer overall errors than GPT-5.2

- GPT-5.5 (April 24, 2026) outperforms it on agentic coding (58.6 percent SWE-bench Pro vs 58.7 percent SWE-bench Verified) at 40 percent fewer tokens

What Is GPT-5.4?

GPT-5.4 is the OpenAI frontier large language model released on March 5, 2026. The snapshot ID is gpt-5.4-2026-03-05. It sits in the GPT-5.x lineage as the predecessor of GPT-5.5 (April 23-24, 2026) and the successor of GPT-5.3-Codex (January 2026), GPT-5.2 (late 2025), and GPT-5.1 (October 2025). The base GPT-5 from August 2025 was retired from ChatGPT on February 13, 2026 and remains live only via the API.

OpenAI introduced GPT-5.4 in three flavors: a standard model (GPT-5.4), a reasoning-optimized variant (GPT-5.4 Thinking), and a high-performance variant (GPT-5.4 Pro). GPT-5.4 also became the first general-purpose model with native computer-use capabilities in Codex, where it can directly drive a desktop environment to complete agentic tasks. It also shipped with experimental support for the 1,050,000-token context window for long-horizon tasks.

The snapshot supports text input and output plus image input. Audio and video are not supported on GPT-5.4 directly — those modalities arrived later with GPT-5.5 (text plus image plus audio). The knowledge cutoff is August 31, 2025.

OpenAI claimed three concrete quality wins over GPT-5.2 at launch: GPT-5.4 was 33 percent less likely to make individual claim errors, overall responses were 18 percent less likely to contain errors, and the model solved the same problems with significantly fewer tokens — a token-efficiency gain that compounds on agentic workloads with many turns. GPT-5.4 also set record scores at the time on the OSWorld-Verified and WebArena Verified computer-use benchmarks.

GPT-5.4 introduced a new tool-management system called Tool Search. Instead of always shipping every tool definition in the system prompt, the model can look up tool definitions on demand. The result is faster and cheaper requests in production agents that have many available tools — a pattern that becomes the standard in GPT-5.5 a few weeks later.

Key Features

1,050,000-token context window

GPT-5.4 ships with experimental support for a 1.05M-token context window and 128K max output tokens. Prompts above 272K input tokens incur a 2x input and 1.5x output uplift — a soft penalty that nudges teams toward retrieval and chunking on extreme contexts but lets you skip aggressive chunking for everything under 272K. By comparison, GPT-5 (August 2025) capped at 400K context and GPT-5.5 (April 2026) holds the same 1.05M ceiling.

Native computer use in Codex

GPT-5.4 was the first OpenAI general-purpose model to include native computer-use capabilities in Codex. The model can drive a desktop environment directly: open windows, click, type, navigate. At launch, GPT-5.4 set record scores on OSWorld-Verified and WebArena Verified — two benchmarks that measure end-to-end UI control on real applications and websites. For browser agents and desktop automation pipelines, this changed the cost equation: tasks that previously required the more expensive GPT-5 Computer-Use specialty model could now run on the standard GPT-5.4.

5-level reasoning effort scale

GPT-5.4 introduced a new five-level reasoning effort scale: none (default), low, medium, high, and xhigh. The xhigh level is new — it pushes reasoning depth beyond the high tier on the hardest math, science, and coding problems. The trade-off is latency and output tokens. On standard reasoning tasks at medium effort, GPT-5.4 uses significantly fewer tokens than GPT-5.2 to reach the same answer.

Tool Search

Tool Search is a new system in the Responses API that lets the model fetch tool definitions on demand instead of always loading them in the system prompt. For agentic systems with dozens of tools, Tool Search cuts token consumption on each request and reduces tool-selection latency. This pattern becomes the production default in GPT-5.5 a few weeks after the GPT-5.4 launch.

Three variants — standard, Thinking, Pro

GPT-5.4 ships in three forms. The standard model handles general-purpose work at $2.50 input and $15 output per million tokens. GPT-5.4 Thinking is the reasoning-optimized variant for math, science, and complex coding. GPT-5.4 Pro is the high-performance variant tuned for the hardest benchmarks. All three share the 1.05M context window and the new 5-level reasoning scale.

Full tool stack — web search, file search, image gen, code interpreter, hosted shell, MCP

GPT-5.4 supports the complete OpenAI hosted tool surface: web search, file search, image generation, code interpreter, hosted shell, apply patch (file edit), skills, computer use, and Model Context Protocol (MCP) servers. Function calling and structured outputs work as expected. The model also supports the Responses API, which is the production-recommended surface for agentic workflows in 2026.

Cached input pricing — 90 percent discount

GPT-5.4 cached input is priced at $0.25 per million tokens — a 90 percent discount on the $2.50 input rate. For agentic pipelines that repeatedly send the same system prompt and tool definitions across many turns, cached input cuts cost dramatically on long-running sessions. Combined with Tool Search, the cost-per-turn on a 20-step agent drops well below GPT-5 (August 2025) on the same workload.

Token efficiency gain — 33 percent fewer claim errors than GPT-5.2

OpenAI emphasized at launch that GPT-5.4 solves the same problems with significantly fewer tokens than GPT-5.2. Concretely: 33 percent fewer claim-level errors and 18 percent fewer overall errors. On agentic coding benchmarks, this translates to fewer retries and fewer wasted tokens chasing wrong leads — which is the kind of compounding gain that matters more than a one-shot benchmark number on production work.

GPT-5.4 Pricing in 2026

GPT-5.4 follows the OpenAI standard pay-per-token pricing on the API. There is no monthly subscription on the API surface — you pay only for tokens consumed. Pricing was verified directly on developers.openai.com/api/docs/models/gpt-5.4 in April 2026. Two notable surcharges to budget around: prompts above 272K input tokens incur a 2x input and 1.5x output uplift, and regional data-residency endpoints add a 10 percent uplift.

| Plan | Input price | Cached input | Output price | Best for |

|---|---|---|---|---|

| GPT-5.4 (standard) | $2.50 per million tokens | $0.25 per million tokens | $15.00 per million tokens | General-purpose reasoning, coding, agentic workflows |

| Long-context surcharge | 2x input above 272K tokens | — | 1.5x output above 272K tokens | Anything past 272K input tokens |

| Regional data residency | +10 percent uplift | +10 percent uplift | +10 percent uplift | Customers requiring EU/Asia data residency |

| Batch API | Same token rates | Same token rates | Same token rates | High-volume offline batch jobs |

Best for: developers running production pipelines who need the GPT-5.5 capability stack (1.05M context, native computer use, full tool surface) at half the input price ($2.50 vs $5.00 per million). New projects starting after April 24, 2026 should evaluate GPT-5.5 first — its 40 percent token-efficiency gain often offsets the higher per-token price on agentic workloads.

Our Methodology for This Review

We researched GPT-5.4 rather than ran it as our primary daily driver. By the time of this review (April 27, 2026), GPT-5.5 has been live for three days and our cockpit pipelines run primarily on Claude Opus 4.7 with selective GPT-5.5 calls — GPT-5.4 sits between the two as the cost-quality intermediate we evaluated for production routing. This review compiles GPT-5.4 official model documentation (developers.openai.com/api/docs/models/gpt-5.4, last verified April 27, 2026), the OpenAI Codex changelog (developers.openai.com/codex/changelog), the GPT-5.5 launch announcement (openai.com/index/introducing-gpt-5-5, which references GPT-5.4 baselines for the 40 percent token-efficiency comparison), independent benchmark coverage (LayerLens GPT-5.4 Benchmark Review, BenchLM GPT-5.4 page, Vellum LLM Leaderboard 2026), and TechCrunch coverage of the March 5, 2026 launch (techcrunch.com/2026/03/05/openai-launches-gpt-5-4-with-pro-and-thinking-versions). Our score reflects the technical capability stack at launch, the value proposition relative to GPT-5.5 at half the input price, and the practical fit for production pipelines that need a stable snapshot through deprecation cycles.

GPT-5.4 in the OpenAI Lineage

GPT-5.4 sits in the middle of a fast-moving release cadence. Here is the timeline of the GPT-5.x family as of April 2026, so you can place GPT-5.4 in context.

- GPT-5 (August 7, 2025). Original flagship. 400K context. Retired from ChatGPT February 13, 2026, still live via API.

- GPT-5.1 (October 2025). Refresh with improved reasoning calibration and reduced hallucination compared to GPT-5.

- GPT-5.2 (late 2025). Multimodal expansion — added text plus image plus audio plus video input. The baseline that GPT-5.4 surpassed by 33 percent on claim errors and 18 percent on overall errors.

- GPT-5.3-Codex (January 2026). Codex-tuned variant focused on agentic software engineering. Direct predecessor of the GPT-5.4 computer-use work.

- GPT-5.4 (March 5, 2026 — this review). Cost-quality intermediate. 1.05M context, native computer-use in Codex, Tool Search, three variants (standard, Thinking, Pro). $2.50 input and $15 output per million tokens.

- GPT-5.5 (April 23-24, 2026). Latest flagship at the time of writing. Same 1.05M context. 58.6 percent on SWE-bench Pro. 40 percent fewer tokens per task on agentic workloads vs GPT-5.4. $5.00 input and $30 output per million tokens — exactly double GPT-5.4 input price.

Why does GPT-5.4 still matter? Two reasons. First, the price-per-token is half of GPT-5.5 — for production pipelines where token efficiency does not fully offset the per-token premium, GPT-5.4 remains the cheaper option on the same capability stack (1.05M context, computer use, full tool surface). Second, snapshot pinning at gpt-5.4-2026-03-05 gives production systems stable behavior through deprecation cycles. OpenAI has committed to advance notice before any API retirement, so GPT-5.4 is a defensible production choice through at least Q3 2026.

Benchmarks at Launch

OpenAI and independent reviewers published several headline numbers at the March 5, 2026 launch:

- SWE-bench Verified — 58.7 percent on the verified set. Note that OpenAI flagged training-data contamination concerns across all frontier models on SWE-bench Verified, which is why SWE-bench Pro (multi-language, standardized scaffold) became the more reliable successor for agentic-coding benchmarking with GPT-5.5.

- OSWorld-Verified — record score at launch. Computer-use benchmark on real desktop applications. The record reflects the native computer-use capabilities in Codex.

- WebArena Verified — record score at launch. Browser-based agentic tasks on real websites. Same reasoning — native computer-use changes the ceiling.

- 33 percent fewer claim-level errors and 18 percent fewer overall errors vs GPT-5.2. OpenAI internal evaluation, published with the launch.

- AIME 2025 (competition math) sits at 16.67 percent on the standard configuration as published by independent benchmarkers — competition-level math is where the xhigh reasoning effort matters most. AIME 2026 numbers reported by independent benchmarkers: nano variant 30.0 percent, mini variant 6.67 percent.

- MMLU is saturated for frontier models (88-94 percent across the GPT-5.x family) and no longer differentiates the lineage meaningfully.

The benchmark picture in April 2026 is that GPT-5.4 is a strong but not dominant model. GPT-5.5 surpasses it on agentic coding (58.6 percent SWE-bench Pro vs 58.7 percent SWE-bench Verified — different benchmarks but Pro is the harder one) and on token efficiency. Claude Opus 4.7 surpasses it on Humanity Last Exam (HLE 46.9 percent vs Opus 4.7 leadership). For raw cost-per-quality on production work, GPT-5.4 sits comfortably in the upper-middle tier.

Pros and Cons After Research

What stands out

- 1.05M context window with 128K max output. Covers full repositories, multi-PDF research, and long-horizon agentic tasks without aggressive chunking up to the 272K soft threshold.

- Native computer use in Codex. Record OSWorld-Verified and WebArena Verified scores at launch made GPT-5.4 the first general-purpose OpenAI model to handle desktop and browser automation on the standard tier instead of a specialty Computer-Use endpoint.

- Sharp pricing at $2.50 per million input tokens. Half the GPT-5.5 input price for the same context size and most of the same capability stack. The cached input rate at $0.25 per million tokens (90 percent discount) compounds the savings on agentic pipelines.

- 5-level reasoning effort scale (none, low, medium, high, xhigh). Lets you scale cost vs depth per call — minimum cost for boilerplate, xhigh for the hardest reasoning, with the xhigh tier new in this generation.

- Tool Search. Reduces token consumption and selection latency on agents with many tools — significant improvement for production agentic workloads and the pattern adopted as default in GPT-5.5.

- Three variants — standard, Thinking, Pro. Same context window across all three but tuned for different cost-quality points, which matches well with the OpenAI three-tier mini-nano pattern from GPT-5.

Where it falls short

- Surpassed by GPT-5.5 on April 24, 2026. 40 percent fewer tokens per task on agentic workloads, higher SWE-bench Pro score, native audio input — for new projects, GPT-5.5 is the default unless price sensitivity dominates.

- No native audio or video. Text plus image input only. Audio workflows still need Whisper plus a separate text-to-speech model. GPT-5.5 added native audio shortly after.

- Long-context surcharge above 272K tokens. 2x input and 1.5x output uplift past the soft threshold creates a pricing cliff that long-document workloads have to design around.

- SWE-bench Verified contamination flag. OpenAI itself flagged training-data contamination concerns on SWE-bench Verified across all frontier models — the 58.7 percent number should be read alongside the SWE-bench Pro alternative, where GPT-5.4 was not the publicly reported headline.

- Knowledge cutoff August 31, 2025. Anything after that requires browsing or RAG, which adds latency and cost.

- Variant naming friction. Three variants (standard, Thinking, Pro) plus the older mini and nano endpoints from previous generations make it easy to land on the wrong tier in production routing — common cost surprise for teams migrating from GPT-5.

Real-World Use Cases

Long-document analysis and full-codebase reviews

The 1.05M context window covers most enterprise long-document workloads in a single prompt. Legal contracts, multi-PDF research bundles, and full codebases under 272K tokens fit comfortably without chunking. The 128K max output handles long structured analyses without truncation. Above 272K input the long-context surcharge kicks in — for those workloads, retrieval-augmented chunking remains the cheaper path.

Browser and desktop automation agents

Native computer-use in Codex with record OSWorld-Verified and WebArena Verified scores at launch makes GPT-5.4 the obvious choice for browser and desktop agents. Test automation, form filling, data scraping with click-through navigation, and end-to-end UI testing all benefit from the integrated tool. Combined with Tool Search and cached input, the cost-per-task on a 20-step browser agent drops well below the previous GPT-5 Computer-Use specialty model.

Reasoning-heavy coding pipelines

The 5-level reasoning effort scale lets you balance cost and depth on a per-call basis. Use none or low for boilerplate generation and refactors. Use medium for typical feature work. Use high for hard architectural decisions and xhigh for competition-level math and science problems. The token-efficiency gain over GPT-5.2 (33 percent fewer claim errors, 18 percent fewer overall errors) compounds on multi-turn debug sessions.

Cost-optimized agentic workloads at production scale

GPT-5.4 is the cost intermediate in the GPT-5.x family — half the input price of GPT-5.5 with most of the same capability stack. For production agents where token volume dominates the bill, GPT-5.4 + cached input + Tool Search + Batch API stacks the discounts. New projects after April 24, 2026 should benchmark GPT-5.5 in parallel — the 40 percent token-efficiency gain often closes the gap.

Vision-language workflows

Text plus image input native in the API. Mix screenshots, diagrams, charts, and text in a single prompt. Useful for UI testing automation, document OCR with structured extraction, and visual QA on dashboards. Audio and video remain out of scope on GPT-5.4 — pair with Whisper for STT and a video model if those modalities matter.

Production pipelines pinned to gpt-5.4-2026-03-05

The strongest reason to keep GPT-5.4 specifically through 2026: snapshot pinning. Production systems validated against a specific behavior want exactly that behavior frozen. The snapshot pin gpt-5.4-2026-03-05 gives you that stability through any deprecation window, with OpenAI advance-notice commitment on the API surface. Migration to GPT-5.5 becomes a planned validation effort rather than a forced surprise.

GPT-5.4 vs GPT-5 vs GPT-5.5 vs Claude Sonnet 4.6

By April 2026, GPT-5.4 sits between the legacy GPT-5 and the latest GPT-5.5. Claude Sonnet 4.6 is the closest cross-vendor comparison on the cost-quality intermediate tier. Here is how the four stack up.

| Feature | GPT-5.4 | GPT-5 | GPT-5.5 | Claude Sonnet 4.6 |

|---|---|---|---|---|

| Released | March 5, 2026 | August 7, 2025 | April 23-24, 2026 | April 2026 |

| Context window | 1,050,000 tokens | 400,000 tokens | 1,050,000 tokens | 1,000,000 tokens |

| Max output | 128,000 tokens | 128,000 tokens | 128,000 tokens | 128,000 tokens |

| Input price (per million) | $2.50 | $1.25 | $5.00 | $3.00 |

| Cached input (per million) | $0.25 | $0.125 | $0.50 | $0.30 |

| Output price (per million) | $15.00 | $10.00 | $30.00 | $15.00 |

| Modalities | Text + image input | Text + image input | Text + image + audio | Text + image input |

| Native computer use | Yes (Codex) | No (specialty endpoint only) | Yes | Yes (Claude Code) |

| Knowledge cutoff | August 31, 2025 | September 30, 2024 | Late 2025 | Early 2026 |

| SWE-bench (Verified or Pro) | 58.7% Verified | 74.9% Verified | 58.6% Pro | 57.4% Pro |

| Status | Live API | Live API only — retired ChatGPT | Live API + ChatGPT default | Live API + Claude.ai |

Pick GPT-5.4 if: you need the GPT-5.5 capability stack (1.05M context, native computer use, full tool surface) but want to pay half the input price ($2.50 vs $5.00 per million), or you are running a production pipeline pinned to gpt-5.4-2026-03-05 that validates against a specific behavior.

Pick GPT-5 if: you have a legacy production pipeline pinned to gpt-5-2025-08-07 that already works, your context budget fits in 400K, and you want the cheapest input rate in the OpenAI frontier lineup ($1.25 per million).

Pick GPT-5.5 if: you are starting a new project after April 24, 2026, you need native audio input, and the 40 percent token-efficiency gain over GPT-5.4 offsets the doubled per-token price on your specific workload.

Pick Claude Sonnet 4.6 if: you prefer the Anthropic ecosystem (Claude Code, Anthropic Workbench), you value coding quality and writing nuance over raw price, and the $3.00 input rate fits your budget. Cross-vendor diversity is also a reasonable defensive argument for any production system.

Frequently Asked Questions

Is GPT-5.4 still available in 2026?

Yes. GPT-5.4 is live via the OpenAI API as of April 2026. The model ID is gpt-5.4 and the snapshot pin is gpt-5.4-2026-03-05. OpenAI has committed to give advance notice before any future API retirement. GPT-5.4 also remains available in Codex with native computer-use capabilities. The newer GPT-5.5 (April 23-24, 2026) is now the default in ChatGPT, but GPT-5.4 stays a defensible production choice through at least Q3 2026 thanks to snapshot pinning.

How much does GPT-5.4 cost per million tokens?

GPT-5.4 costs $2.50 per million input tokens, $0.25 per million cached input tokens (90 percent discount on repeated context), and $15.00 per million output tokens. Prompts above 272,000 input tokens incur a 2x input and 1.5x output uplift. Regional data-residency endpoints add a 10 percent uplift. The Batch API offers the same token rates without surcharge but with delayed delivery for offline jobs. There is no monthly subscription on the API surface — you pay only for tokens consumed.

What is the GPT-5.4 context window?

GPT-5.4 ships with a 1,050,000-token context window and a 128,000-token max output. Prompts up to 272,000 input tokens are billed at the standard rate. Above that threshold, input is billed at 2x and output at 1.5x — a soft penalty that nudges teams toward retrieval-augmented chunking on extreme contexts. The same 1.05M context window is shared with GPT-5.5.

When was GPT-5.4 released?

GPT-5.4 was released on March 5, 2026, with three variants — standard, GPT-5.4 Thinking (reasoning-optimized), and GPT-5.4 Pro (high-performance). The snapshot pin is gpt-5.4-2026-03-05. GPT-5.4 was also the first general-purpose OpenAI model to ship with native computer-use capabilities in Codex. The knowledge cutoff is August 31, 2025.

How does GPT-5.4 compare to GPT-5.5?

GPT-5.5 (April 23-24, 2026) outperforms GPT-5.4 on three dimensions: 40 percent fewer tokens per task on agentic coding workloads, native audio input, and a higher 58.6 percent SWE-bench Pro score. GPT-5.4 wins on price — $2.50 input vs $5.00 input per million tokens, exactly half. The capability stack is otherwise close: same 1.05M context, same 128K max output, same five-level reasoning effort scale. New projects after April 24, 2026 should evaluate GPT-5.5 first; existing pipelines pinned to gpt-5.4-2026-03-05 stay defensible.

How does GPT-5.4 compare to GPT-5?

GPT-5.4 (March 2026) and GPT-5 (August 2025) are different generations. GPT-5.4 ships with 1.05M context vs 400K, native computer use vs specialty-endpoint only, the new five-level reasoning effort scale (none/low/medium/high/xhigh) vs four levels, and Tool Search. GPT-5 is cheaper at $1.25 input vs $2.50 input per million. GPT-5 was retired from ChatGPT February 13, 2026 and is API-only. GPT-5.4 remains live everywhere.

Does GPT-5.4 support native computer use?

Yes. GPT-5.4 was the first general-purpose OpenAI model to ship with native computer-use capabilities in Codex. The model can drive a desktop environment directly — open windows, click, type, navigate. At launch GPT-5.4 set record scores on OSWorld-Verified and WebArena Verified, the two main benchmarks for end-to-end UI control on real applications and websites. Combined with Tool Search and the Responses API, this makes GPT-5.4 a strong fit for browser and desktop automation agents.

What is the GPT-5.4 reasoning effort scale?

GPT-5.4 introduced a five-level reasoning effort scale: none (default), low, medium, high, and xhigh. The xhigh level is new in this generation — it pushes reasoning depth beyond the previous high tier on the hardest math, science, and coding problems. The trade-off is latency and output token consumption. On standard reasoning tasks at medium effort, GPT-5.4 uses significantly fewer tokens than GPT-5.2 to reach the same answer.

What is Tool Search in GPT-5.4?

Tool Search is a new system in the OpenAI Responses API introduced with GPT-5.4. Instead of always shipping every tool definition in the system prompt, the model can fetch tool definitions on demand. For agentic systems with dozens of tools, Tool Search reduces token consumption per request and cuts tool-selection latency. The pattern becomes the production default in GPT-5.5 a few weeks later — early GPT-5.4 adoption was the migration path.

Does GPT-5.4 support audio or video?

GPT-5.4 supports text and image input only. Audio and video are not supported natively. For audio workflows, pair GPT-5.4 with Whisper for speech-to-text and a separate text-to-speech model. For video, use a dedicated video model. GPT-5.5 added native audio input, so workflows that need audio should evaluate the upgrade. GPT-5.2 added text plus image plus audio plus video earlier in the lineage but was surpassed on quality by GPT-5.4 and 5.5.

What were the GPT-5.4 launch benchmarks?

OpenAI and independent reviewers published several headline numbers at the March 5, 2026 launch. SWE-bench Verified 58.7 percent, with OpenAI flagging training-data contamination concerns across all frontier models on this benchmark. Record scores at launch on the OSWorld-Verified and WebArena Verified computer-use benchmarks. 33 percent fewer claim-level errors and 18 percent fewer overall errors versus GPT-5.2 on internal evaluation. AIME 2025 16.67 percent on the standard configuration. MMLU is saturated at 88-94 percent across the GPT-5.x family and no longer differentiates the lineage.

What are the GPT-5.4 variants — standard, Thinking, Pro?

OpenAI ships GPT-5.4 in three variants. The standard model handles general-purpose work at $2.50 input and $15 output per million tokens. GPT-5.4 Thinking is the reasoning-optimized variant for math, science, and complex coding — it ramps up reasoning effort by default. GPT-5.4 Pro is the high-performance variant tuned for the hardest benchmarks. All three share the 1.05M context window, the 128K max output, and the new five-level reasoning effort scale. Choose based on the cost-quality point that matches your workload.

Is GPT-5.4 worth it in 2026?

Yes for production pipelines that need the GPT-5.5 capability stack (1.05M context, native computer use, full tool surface) at half the input price. Yes for legacy systems pinned to gpt-5.4-2026-03-05 that validate against a specific behavior. For new projects starting after April 24, 2026, evaluate GPT-5.5 first — the 40 percent token-efficiency gain often closes the price gap on agentic workloads, and native audio input matters for voice agents. External community ratings on Trustpilot, G2, and Capterra were not directly available for GPT-5.4 specifically (most reviews aggregate across OpenAI ChatGPT plans rather than per-model API), which is why our score reflects benchmark performance, capability stack, and value-vs-GPT-5.5 rather than a Trustpilot or G2 average.

Verdict: 8.0/10

GPT-5.4 earns a 8.0/10 for its 1.05M context window, native computer use in Codex with record OSWorld-Verified and WebArena Verified scores at launch, the new five-level reasoning effort scale with the xhigh tier, and Tool Search that cuts agentic-pipeline cost. The launch within seven weeks of GPT-5.5 is what holds it back from a higher score — for new projects after April 24, 2026, GPT-5.5 is the default with 40 percent fewer tokens per task on agentic workloads.

Score breakdown:

- Features: 8.5/10 — 1.05M context, native computer use, five-level reasoning, Tool Search, three variants, full tool surface; missing native audio and video

- Ease of Use: 8.0/10 — standard OpenAI API surface, snapshot pinning, Responses API works well; variant naming friction (standard/Thinking/Pro) plus older mini/nano endpoints can confuse routing

- Value: 8.0/10 — $2.50 input is half of GPT-5.5 for most of the same capability stack; cached input at 90 percent discount compounds on agentic pipelines; long-context surcharge above 272K is a pricing cliff to design around

- Support: 7.5/10 — OpenAI Help Center coverage is solid, advance-notice commitment on API retirement is welcome; per-model community feedback is hard to isolate from generic ChatGPT reviews

Final word: Use GPT-5.4 for production pipelines that want the GPT-5.5 capability stack at half the input price, or for systems pinned to gpt-5.4-2026-03-05 that need stable behavior through deprecation cycles. Skip it for new projects starting after April 24, 2026 in favor of GPT-5.5 — the token-efficiency gain and native audio input usually dominate the price-per-token argument on real agentic workloads. External community ratings on Trustpilot, G2, and Capterra were not directly available for GPT-5.4 specifically, which is why our score reflects benchmark performance and capability stack rather than a Trustpilot or G2 average.

Key Features

Pros & Cons

Pros

- 1,050,000-token context window with 128,000-token max output covers full repositories, multi-PDF research, and long-horizon agentic tasks without aggressive chunking up to the 272K soft threshold.

- Native computer use in Codex with record OSWorld-Verified and WebArena Verified scores at launch — first general-purpose OpenAI model to handle desktop and browser automation on the standard tier.

- Sharp pricing at $2.50 per million input tokens — half the GPT-5.5 input price for the same context size and most of the same capability stack. Cached input at $0.25 per million tokens compounds the savings on agentic pipelines.

- Five-level reasoning effort scale (none, low, medium, high, xhigh) lets you scale cost vs depth per call, with the new xhigh tier pushing reasoning beyond the previous high level on the hardest problems.

- Tool Search reduces token consumption and selection latency on agents with many tools — the pattern adopted as default in GPT-5.5 a few weeks later.

- Three variants — standard, Thinking, Pro — share the 1.05M context window and the new reasoning scale but tune for different cost-quality points.

- 33 percent fewer claim-level errors and 18 percent fewer overall errors versus GPT-5.2 on OpenAI internal evaluation.

Cons

- Surpassed by GPT-5.5 on April 23-24, 2026 — 40 percent fewer tokens per task on agentic workloads, higher SWE-bench Pro score, and native audio input make GPT-5.5 the default for new projects.

- No native audio or video input — text plus image only. Audio workflows still need Whisper plus a separate text-to-speech model. GPT-5.5 added native audio shortly after.

- Long-context surcharge above 272K input tokens (2x input, 1.5x output) creates a pricing cliff that long-document workloads have to design around with retrieval-augmented chunking.

- SWE-bench Verified contamination flag — OpenAI itself flagged training-data contamination concerns on this benchmark across all frontier models, which is why the 58.7 percent number should be read alongside the SWE-bench Pro alternative.

- Knowledge cutoff August 31, 2025 — anything more recent requires browsing or RAG, which adds latency and cost.

- Variant naming friction — standard, Thinking, Pro variants plus older mini and nano endpoints from previous generations make it easy to land on the wrong tier in production routing.

Best Use Cases

Platforms & Integrations

Available On

Integrations

We're developers and SaaS builders who use these tools daily in production. Every review comes from hands-on experience building real products — DealPropFirm, ThePlanetIndicator, PropFirmsCodes, and many more. We don't just review tools — we build and ship with them every day.

Written and tested by developers who build with these tools daily.

Frequently Asked Questions

What is GPT-5.4?

OpenAI intermediate frontier model from March 2026 — 1.05M context, $2.50 input and $15 output per million tokens, native computer use, predecessor of GPT-5.5.

How much does GPT-5.4 cost?

GPT-5.4 costs $2.5/month.

Is GPT-5.4 free?

No, GPT-5.4 starts at $2.5/month.

What are the best alternatives to GPT-5.4?

Top-rated alternatives to GPT-5.4 include Claude Code (9.9/10), Cursor (9.4/10), Veo 3.1 (9.4/10), Nano Banana Pro (9.3/10) — all reviewed with detailed scoring on ThePlanetTools.ai.

Is GPT-5.4 good for beginners?

GPT-5.4 is rated 8/10 for ease of use.

What platforms does GPT-5.4 support?

GPT-5.4 is available on OpenAI API (Responses + Chat Completions), Azure OpenAI Foundry, OpenRouter, OpenAI Codex.

Does GPT-5.4 offer a free trial?

Yes, GPT-5.4 offers a free trial.

Is GPT-5.4 worth the price?

GPT-5.4 scores 8/10 for value. We consider it excellent value.

Who should use GPT-5.4?

GPT-5.4 is ideal for: Long-document analysis and full-codebase reviews where the 1.05M context window covers most enterprise workloads in a single prompt up to the 272K soft threshold, Browser and desktop automation agents leveraging native computer-use in Codex with record OSWorld-Verified and WebArena Verified scores, Reasoning-heavy coding pipelines using the five-level reasoning effort scale to balance cost and depth, including the new xhigh tier for the hardest problems, Cost-optimized agentic workloads at production scale — half the GPT-5.5 input price for most of the same capability stack, Vision-language workflows mixing screenshots, diagrams, and text in a single prompt for UI testing automation, document OCR, and visual QA, Production pipelines pinned to gpt-5.4-2026-03-05 that need stable behavior through deprecation cycles with OpenAI advance-notice commitment.

What are the main limitations of GPT-5.4?

Some limitations of GPT-5.4 include: Surpassed by GPT-5.5 on April 23-24, 2026 — 40 percent fewer tokens per task on agentic workloads, higher SWE-bench Pro score, and native audio input make GPT-5.5 the default for new projects.; No native audio or video input — text plus image only. Audio workflows still need Whisper plus a separate text-to-speech model. GPT-5.5 added native audio shortly after.; Long-context surcharge above 272K input tokens (2x input, 1.5x output) creates a pricing cliff that long-document workloads have to design around with retrieval-augmented chunking.; SWE-bench Verified contamination flag — OpenAI itself flagged training-data contamination concerns on this benchmark across all frontier models, which is why the 58.7 percent number should be read alongside the SWE-bench Pro alternative.; Knowledge cutoff August 31, 2025 — anything more recent requires browsing or RAG, which adds latency and cost.; Variant naming friction — standard, Thinking, Pro variants plus older mini and nano endpoints from previous generations make it easy to land on the wrong tier in production routing..

Best Alternatives to GPT-5.4

Ready to try GPT-5.4?

Start your free trial

Try GPT-5.4 Free →