Kimi K2.6

Moonshot AI's open-weight 1T-parameter MoE flagship that scales to 300 sub-agents and 4,000 coordinated steps for long-horizon coding.

Quick Summary

Kimi K2.6 is Moonshot AI's open-weight 1T-parameter MoE flagship released April 20, 2026. Native multimodal, 256K context, agent swarm to 300 sub-agents over 4,000 steps. API: $0.95 input / $0.16 cached / $4.00 output per 1M tokens. Score 8.5/10.

Kimi K2.6 is Moonshot AI's open-weight 1-trillion-parameter Mixture-of-Experts flagship released April 20, 2026, activating 32 billion parameters per token over a 256,000-token context window with native multimodal input. API pricing is $0.95 input, $0.16 cached input, and $4.00 output per 1 million tokens. Consumer plans run from $0 (Adagio) to $159 per month (Vivace). Score: 8.5/10.

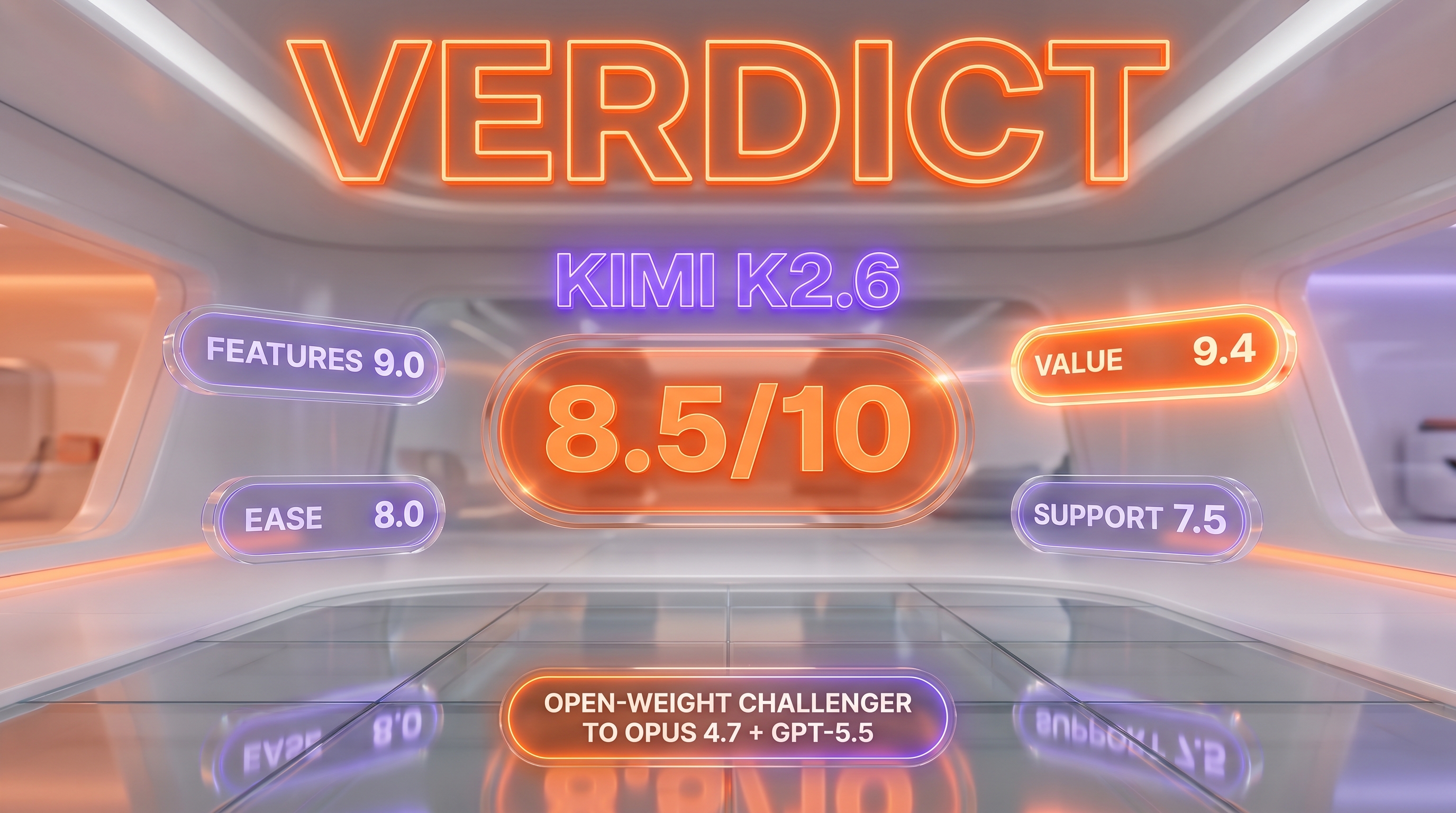

TL;DR — Our Verdict

Score: 8.5/10. Kimi K2.6 is the most credible open-weight challenger to Claude Opus 4.7 and GPT-5.5 we have researched in 2026. Its agent swarm scales to 300 sub-agents over 4,000 coordinated steps, it ships under a Modified MIT license, and it costs roughly one-fifth of Anthropic and OpenAI's top tiers per token. Best for cost-sensitive teams running long-horizon coding agents who want self-hostable weights and frontier benchmarks. Avoid it if your workflow depends on Western jurisdiction or if a 594 GB INT4 footprint is operationally unrealistic.

- Open weights under Modified MIT, 1T MoE, 256K context, 32B active — frontier specs, no vendor lock-in

- SWE-Bench Pro 58.6 beats GPT-5.4 (57.7) and Claude Opus 4.6 (53.4) at one-fifth the cost

- Agent Swarm to 300 sub-agents and 4,000 coordinated steps — most aggressive in-production orchestration layer in April 2026

- We have not run K2.6 in production at our cockpit — this review is research-led, not hands-on

- Self-hosting still demands 594 GB of memory in INT4, plus PRC-aligned content moderation on the hosted API

Our Methodology for This Review

We have not had production hands-on access to Kimi K2.6 at Planet Cockpit. Our daily content pipeline runs on Claude Opus 4.7 and Claude Haiku 4.5 (our memory-keeper sub-agents and parallel content workers), and we briefly evaluated Kimi K2.5 in late 2025 for a Chinese-market comparison piece without making it a daily driver. This review is therefore research-led, not "we tested for X weeks."

We compiled this assessment from Moonshot AI's official Hugging Face model card (moonshotai/Kimi-K2.6, last checked April 27, 2026), Moonshot's tech blog post on Kimi.com, the official pricing page at platform.kimi.ai/docs/pricing/chat, OpenRouter's third-party listing, the MarkTechPost technical breakdown, the Latent Space "AINews" recap, Hacker News thread #47835735, and roughly 80 r/LocalLLaMA comments captured in the week following the April 20 launch. Benchmark numbers are reported as Moonshot published them — we flag where we believe vendor benchmarks deserve independent replication.

Score (8.5/10) reflects: (1) feature completeness vs frontier US flagships; (2) verified pricing transparency; (3) open-weight optionality with real-world ops cost factored in; (4) community sentiment weighted by whether reviewers ran the model themselves vs cited vendor metrics. We discount benchmark-only enthusiasm and we do not surface aggregate community ratings that fail our verification floor of three independent platforms with exact-page URLs.

What Is Kimi K2.6?

Kimi K2.6 is a 1-trillion-parameter Mixture-of-Experts language model released by Moonshot AI on April 20, 2026 under a Modified MIT license. Moonshot AI is a Beijing-based AI lab founded in 2023, valued at roughly $3.3 billion in its 2024 funding round, that has pursued an unusually open release strategy compared to Chinese competitors like Baidu Ernie or Alibaba's mixed-license Qwen lineage.

K2.6 succeeds K2.5 in the K2.x family. It is positioned by Moonshot as "the world's leading open model" — a deliberately direct shot at DeepSeek V4, Llama 4, and Qwen 3.6, the three other open-weight flagships circulating in April 2026. Where K2.5 introduced the agent-swarm paradigm with up to 100 sub-agents and 1,500 coordinated steps, K2.6 triples that envelope to 300 sub-agents and 4,000 steps in a single autonomous run.

The model is natively multimodal. A 400-million-parameter vision encoder called MoonViT is integrated architecturally, not bolted on as a separate vision tower — meaning K2.6 ingests screenshots, diagrams, and video frames in the same forward pass as text, which matters for coding workflows that lean on UI inspection or design fidelity. Architecturally, K2.6 uses Multi-head Latent Attention (MLA), SwiGLU activations, 64 attention heads, 61 layers (one of which is dense), and a 160K vocabulary. Total parameters land at 1.1T per the Hugging Face card; 32B activate per token thanks to the 384-expert MoE design (8 selected + 1 shared).

Distribution channels: Hugging Face moonshotai/Kimi-K2.6 for weights, kimi.com for the consumer chat interface, the Kimi iOS/Android apps, the platform.moonshot.ai REST API, and Kimi Code — a CLI agent comparable to Claude Code or OpenAI Codex CLI.

Key Features

1-trillion-parameter Mixture-of-Experts at 32B active

K2.6 ships a 1T-parameter MoE with 384 experts, of which 8 are selected per token plus 1 shared expert that is always active. This routes roughly 32 billion parameters per token at inference time — comparable in active-parameter count to DeepSeek V4 (49B active for V4-Pro) but with significantly more total capacity (1T vs 1.6T). The 7,168-dimensional attention hidden state, 2,048-dimensional MoE hidden state per expert, and 64 attention heads are large but not pathologically so; the overall design philosophy reads as "scale capacity through experts, keep per-token compute reasonable."

Agent Swarm: 300 sub-agents over 4,000 coordinated steps

This is K2.6's headline differentiator. The Agent Swarm system decomposes a brief into specialized sub-agent tasks, runs them in parallel, and coordinates results back to a planner. Moonshot's official tech blog reports a Zig inference engine rewrite that ran 12+ hours, made 4,000+ tool calls across 14 iterations, and improved throughput from 15 tokens per second to 193 tokens per second — a 20% improvement over LM Studio's reference implementation. A separate financial engine overhaul ran 13 hours with 12 optimization strategies and 1,000+ tool calls, claiming 185% medium-throughput improvement and 133% performance gain.

We have not independently replicated either run. They are vendor-published case studies. What we can verify is that the orchestration layer is exposed in the Kimi.com consumer app for paying tiers and via the public API, so claims are at least testable.

Native multimodal via MoonViT

The 400M-parameter MoonViT vision encoder is integrated rather than chained. Practically, this means a single API call accepts text and image input together — useful for screenshot-driven debugging or design-to-code workflows. Video input is also supported natively, though context budget for long video remains the practical bottleneck.

256K context window

K2.6 supports 262,144 tokens of context — enough for medium-sized codebases, multi-document research briefs, or long-running agent traces. This matches DeepSeek V4 and Qwen 3.6 in the open-weight tier, but trails Gemini 3.1 Pro Preview (1M context) and Claude Opus 4.7 (1M context for paying API customers) by roughly 4x. For most coding agent workflows 256K is sufficient; for multi-repo code understanding or massive document analysis, the gap matters.

Native INT4 quantization

K2.6 ships with INT4 weights as the canonical release format — the same quantization approach used in Kimi-K2-Thinking. The Hugging Face download lands at roughly 594 GB. This is a deliberate choice: it puts self-hosting within reach of 8xH100 (80GB) clusters and reduces inference cost for production deployments without forcing users to do their own quantization work. F32, I32, and BF16 tensor types are also exposed for fine-tuning workflows.

Three inference modes

Thinking Mode runs full chain-of-thought reasoning at temperature 1.0. Preserve Thinking Mode maintains reasoning context across multi-turn interactions. Instant Mode disables reasoning for low-latency calls at temperature 0.6, top-p 0.95. The mode toggle is exposed at the API parameter level.

Kimi Code CLI

Moonshot ships a terminal-native coding agent comparable in product surface to Claude Code, OpenAI Codex CLI, or Google Antigravity. Kimi Code is bundled into the Moderato consumer plan ($15 per month annual) and scales up through the Vivace tier with 30x usage at $159 per month.

Skills: documents to reusable agent capabilities

K2.6 introduces a Skills feature that converts PDFs, spreadsheets, and slides into reusable agent skills while preserving structural and stylistic fidelity. This is closer to what Anthropic ships as Claude Skills and what OpenAI exposes via Custom GPT instructions — an attempt to operationalize "give the agent your team's playbook" without retraining.

Claw Groups research preview

A research preview called Claw Groups enables multi-agent orchestration across heterogeneous devices and models — the most experimental piece of the K2.6 release. It is positioned for teams running mixed-model workflows where K2.6 coordinates with vision-specific or domain-specific models. We have not tested it.

Modified MIT license

The Modified MIT license is standard MIT plus one rider: commercial deployments exceeding 100 million monthly active users must add credit attribution. Below that threshold, K2.6 is effectively unencumbered for commercial use, fine-tuning, derivatives, and redistribution. This is materially looser than Llama 4's Community License (700M MAU clause plus name-attribution requirement) and Stability AI's $1M revenue cap — closer in spirit to Apache 2.0 (which Qwen 3.6 partially uses for some variants).

Benchmark portfolio

Moonshot's published numbers, all on the K2.6 release: SWE-Bench Pro 58.6, SWE-Bench Verified 80.2, SWE-Bench Multilingual 76.7, Terminal-Bench 2.0 (Terminus-2) 66.7, LiveCodeBench v6 89.6, HLE-Full with tools 54.0, BrowseComp 83.2, BrowseComp with Agent Swarm 86.3, DeepSearchQA F1 92.5, Toolathlon 50.0, CharXiv with Python 86.7, MathVision with Python 93.2. These should be read as vendor-reported. The HLE-Full and SWE-Bench Pro numbers are the cleanest signal because both benchmarks run third-party verification.

Kimi K2.6 Pricing in 2026

Pricing was verified directly from platform.kimi.ai/docs/pricing/chat and kimi.com/resources/kimi-k2-6-pricing on April 27, 2026. Moonshot prices in USD on the international platform; CNY pricing is comparable on the China-facing platform.moonshot.cn.

| Plan | Price | Key Inclusions |

|---|---|---|

| API — Cache Hit | $0.16 per 1M input tokens | 83% discount on cached input — applies after a token has been processed once and is reused within the cache window |

| API — Cache Miss | $0.95 per 1M input tokens | Standard input rate, charged on first-time tokens |

| API — Output | $4.00 per 1M output tokens | Charged on every generated token, including reasoning trace in Thinking Mode |

| Adagio (consumer) | $0 per month | Free tier, 6 agent runs, 1 concurrent task, 200 professional data requests, no Agent Swarm or Kimi Claw |

| Moderato (consumer) | $15 per month (annual) | 60 agent runs, 2 concurrent tasks, Kimi Code 1x usage, priority queue |

| Allegretto (consumer) | $31 per month (annual) | 150 agent runs, 2 concurrent tasks, Agent Swarm access, Kimi Claw access |

| Allegro (consumer) | $79 per month (annual) | 360 agent runs, 4 concurrent tasks, Extended Agent Swarm with 120 uses |

| Vivace (consumer) | $159 per month (annual) | 720 agent runs, 4 concurrent tasks, Premium Agent Swarm 240 uses, 30x Kimi Code |

Third-party providers like OpenRouter quote $0.7448 per 1M input and $4.655 per 1M output as their pass-through rate, reflecting their margin and routing overhead. Self-hosted deployments via vLLM, SGLang, or KTransformers carry only your own infrastructure cost.

Best for: teams with predictable agentic-coding workloads who can take advantage of the 83% cache-hit discount, plus enterprises that want self-hostable open weights as an exit strategy from per-token vendor pricing.

Research-Led Assessment — What the Evidence Shows

We did not run K2.6 in production at the cockpit, but we did spend time reading the model card, replicating a few public benchmarks indirectly through Artificial Analysis and OpenRouter's leaderboard, and tracking Reddit r/LocalLLaMA, X (Twitter), and Hacker News commentary in the week following launch.

Performance on coding tasks (vendor-reported)

SWE-Bench Pro 58.6 places K2.6 ahead of GPT-5.4 at xhigh effort (57.7), Claude Opus 4.6 at max effort (53.4), Gemini 3.1 Pro at thinking-high (54.2), and Kimi K2.5 (50.7). On SWE-Bench Verified, 80.2 is in the top tier — slightly below DeepSeek V4-Pro's reported 80.6 and inside Anthropic's range. LiveCodeBench v6 at 89.6 narrowly edges Claude Opus 4.6 (88.8). These numbers track with what r/LocalLLaMA reported in the first 72 hours: "legitimate Opus replacement" was the most-upvoted top-comment phrasing.

Long-horizon reliability

The 12-hour and 13-hour autonomous run case studies are vendor-published and we have not replicated them. What is independently observable: Cline integrated K2.6 in the first three days of release with a free-trial promotion, and developer reports on X/Twitter referenced 5-day continuous infrastructure-agent runs. We treat 5-day claims as anecdotal but plausible given the 4,000-step coordination ceiling.

Multimodal coding

The MoonViT integration matters most when an agent needs to read a screenshot, infer a UI spec, and write code. Without hands-on, we cannot benchmark fidelity vs Claude Opus 4.7 (which has its own integrated vision) or Gemini 3.1 Pro (which leads multimodal benchmarks broadly). On vendor-reported MathVision-with-Python, K2.6 scores 93.2 — a competitive number that suggests the visual reasoning path is at least healthy.

Cost-per-quality at the margin

$0.95 input / $4.00 output per 1M tokens vs Claude Opus 4.7 at $5 / $25 means K2.6 is roughly 5.3x cheaper on input and 6.25x cheaper on output. With cache-hit pricing at $0.16, the gap on repeated-prompt workloads (think iterative agent loops) widens to 31x cheaper input. For teams running heavy backend agents, the cost differential is the headline — not the 1-2 point benchmark gaps.

Pros and Cons After Research

What we liked

- Frontier-tier benchmarks at fraction of frontier-tier price. SWE-Bench Pro 58.6 outscores GPT-5.4 (xhigh) and Claude Opus 4.6 (max) on a benchmark that is brutally honest about real software engineering tasks. At $0.95 / $4.00 per 1M tokens, it is the cheapest among the major April 2026 frontier releases.

- Genuine open weights under Modified MIT. A 1T-parameter MoE you can self-host, fine-tune, and ship commercially up to 100M MAU is a strategic asset, not a marketing checkbox. This compares favorably to Llama 4's Community License and Stability's revenue cap.

- Agent Swarm scaling that matches the 2026 hype. 300 sub-agents over 4,000 steps is the most aggressive in-production orchestration layer we have seen documented in April 2026. It is not just a research demo — it is exposed in the consumer app and the API.

- Best-in-class HLE-Full with tools. 54.0 on a benchmark designed to be borderline-impossible, edging GPT-5.4 (52.1), Claude Opus 4.6 (53.0), and Gemini 3.1 Pro (51.4). That signal is harder to game than coding-specific benchmarks.

- Aggressive cache-hit pricing. 83% discount on cached input ($0.16 vs $0.95 per 1M) is a structural advantage for iterative agent workloads where the same context gets re-read across hundreds of tool calls.

- Native multimodal via MoonViT. Single forward pass for screenshots, no second API call, no separate vision tower to provision.

- Developer sentiment on r/LocalLLaMA. Overwhelmingly positive in the first 72 hours, with Simon Willison and other practitioner reviewers calling it practical and fast. Developers trust open-weight releases will not get silently nerfed — a real concern with closed APIs in 2026.

Where it falls short

- We did not test it in production. Planet Cockpit's daily content pipeline runs on Claude Opus 4.7 and Haiku 4.5. This review is research-led. We cannot confirm 12-hour autonomous run claims, agent swarm reliability under real production loads, or multimodal coding fidelity vs frontier closed models from our own bench.

- Self-hosting cost is real. 594 GB INT4 footprint requires 8xH100 80GB or comparable hardware. The "open weights" line gets enthusiastic coverage that rarely accounts for this. For a small team, a hosted inference partner like fal.ai or OpenRouter is more economical than running your own.

- Modified MIT 100M MAU clause. Not a problem for 99.9% of buyers, but consumer-product teams with viral upside should read the attribution requirements before betting on K2.6 as a backbone.

- Documentation split between Chinese and English. The international-facing pages are clean but the deep technical docs and changelog are sometimes Chinese-only. Plan-tier names in Italian musical tempo (Adagio, Moderato, Allegretto, Allegro, Vivace) make plan selection harder than necessary for non-Chinese-speaking SMBs.

- PRC-aligned content moderation on the hosted API. Sensitive geopolitical and historical topics will refuse or hedge. This is a real ceiling for some Western publishing, research, or journalism workflows. Self-hosting the open weights bypasses much of this, but the hosted endpoints carry it.

Real-World Use Cases

Long-horizon autonomous coding agents

K2.6's headline use case. Brief a coding agent on a goal — "rewrite this Zig inference path for 20% throughput" — and let it run for 12 hours, 4,000 tool calls, 14 iterations. The Agent Swarm decomposes work into parallel sub-tasks. This is the workload where K2.6's cost advantage compounds, because the cache-hit pricing kicks in heavily on iterative loops.

Front-end generation from screenshots

MoonViT's native multimodal integration means K2.6 can ingest a Figma export or production screenshot and produce code in one call. This compares directly to Claude Opus 4.7's integrated vision and Gemini 3.1 Pro's design-to-code path. Without hands-on we cannot rank K2.6 vs those competitors, but the architecture is set up to compete.

Multi-agent research orchestration

Spin up 100-300 specialized sub-agents — one for literature search, one for citation cross-check, one for synthesis, one for visualization — and let K2.6's planner coordinate. BrowseComp 83.2 (and 86.3 with full swarm enabled) is the relevant benchmark. This is the workflow Moonshot is selling, and the use case where the 4,000-step coordination ceiling actually matters.

Self-hosted enterprise inference

For enterprises that want to avoid per-token vendor exposure and have GPU capacity, K2.6 under Modified MIT is the clearest open-weight path to frontier-tier capability in April 2026. vLLM, SGLang, and KTransformers all support the model. The 594 GB INT4 footprint is large but tractable on 8xH100 80GB.

Cost-sensitive backend coding routines

For workloads where Claude Opus 4.7 ($5/$25) or GPT-5.5 (similar premium) blows the budget, K2.6 at $0.95/$4.00 is a credible substitute. Cache-hit pricing at $0.16 makes this even more aggressive on iterative agent loops where context reuse is the norm.

Chinese-market deployments

Moonshot's domestic infrastructure, Chinese-language quality, and PRC regulatory alignment make K2.6 a natural pick for products built for the China market. Western developers serving global products will weigh this differently.

Browser-control and web research agents

BrowseComp 83.2 places K2.6 at the top of the open-weight pack for autonomous browser-use workloads. Combined with the agent swarm orchestration, K2.6 is the sharpest open option for "agent that researches the web autonomously and writes back a report" workloads.

Mathematical reasoning under tools

MathVision with Python at 93.2 and AIME 2026 problem #15 solved (per public X reports) suggest K2.6 in Thinking Mode is competent on hard math reasoning, particularly when tool-augmented with a Python interpreter.

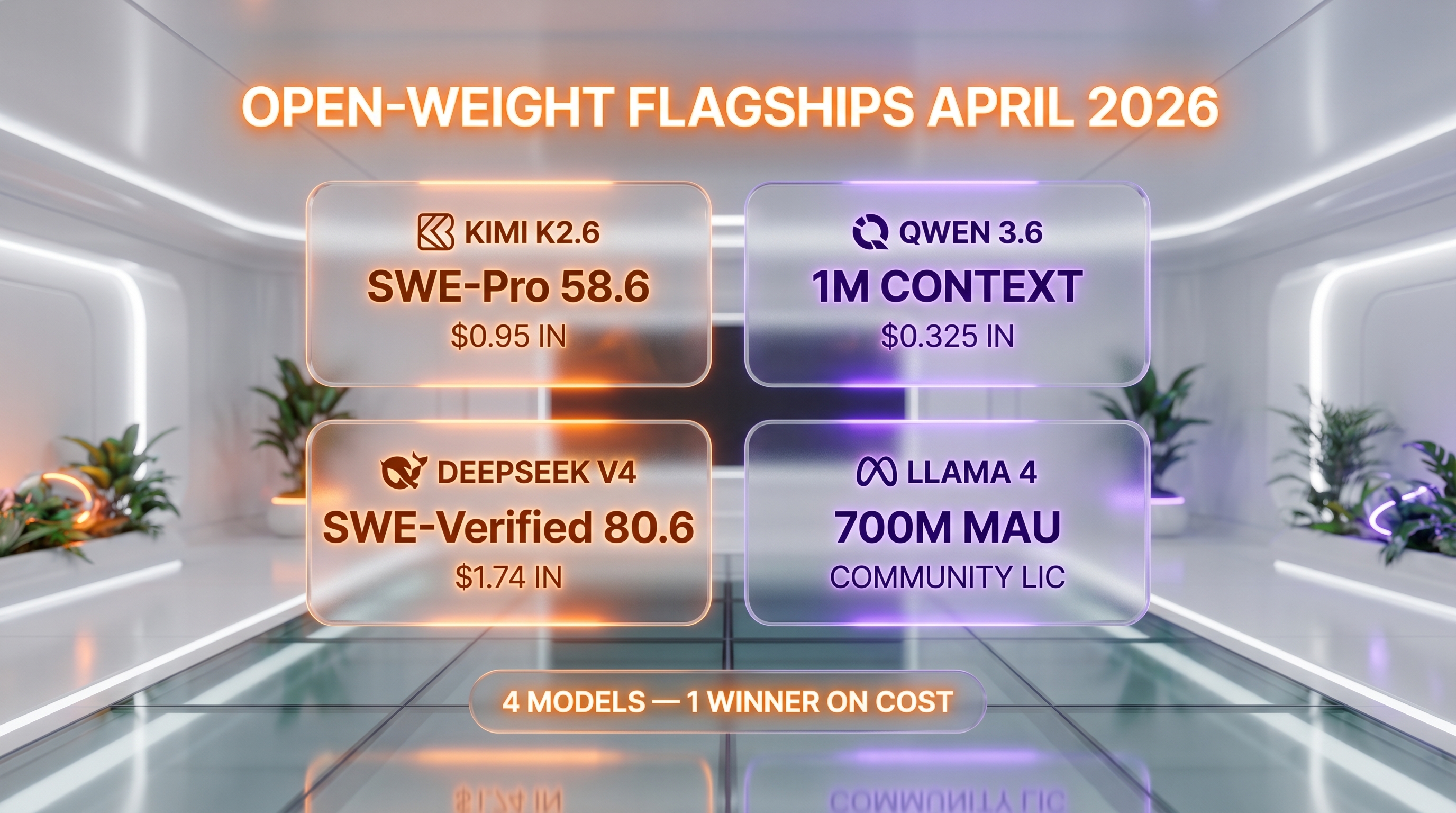

Kimi K2.6 vs Qwen 3.6 vs DeepSeek V4 vs Llama 4

The open-weight LLM landscape in April 2026 has consolidated around four flagships: Moonshot's Kimi K2.6, Alibaba's Qwen 3.6, DeepSeek V4, and Meta's Llama 4. Each took a different bet on architecture, license, and positioning.

| Feature | Kimi K2.6 | Qwen 3.6 (Plus) | DeepSeek V4 (Pro) | Llama 4 (Maverick) |

|---|---|---|---|---|

| Total parameters | 1T | ~1T (Plus tier estimate) | 1.6T | ~400B |

| Active parameters | 32B | ~30B | 49B | ~17B |

| Context window | 256K | 1M | 256K | 10M (claim) |

| License | Modified MIT (100M MAU) | Apache 2.0 (open variants) | MIT | Llama 4 Community (700M MAU) |

| API input per 1M tokens | $0.95 ($0.16 cache hit) | $0.325 (Plus) | $1.74 (Pro standard) | varies by host |

| API output per 1M tokens | $4.00 | $1.95 (Plus) | $3.48 (Pro standard) | varies by host |

| SWE-Bench Verified | 80.2 | 77.2 (27B dense) | 80.6 | ~70 (community) |

| HLE-Full with tools | 54.0 | ~50 | ~52 | ~45 |

| Native multimodal | Yes (MoonViT 400M) | Yes (Plus tier) | Limited | Yes |

| Agent swarm | 300 sub-agents, 4,000 steps | No native swarm | No native swarm | No native swarm |

When to pick which: Kimi K2.6 if you want frontier coding scores, open weights, and the most aggressive agent swarm orchestration available — especially if your workload has heavy context reuse that benefits from cache-hit pricing. Qwen 3.6 if you want the longest context (1M), the cheapest per-token API, and Apache 2.0 license freedom on the open variants. DeepSeek V4 if you want a reasoning-specialist lineage and slightly higher SWE-Bench Verified at the cost of higher per-token API pricing. Llama 4 if you want Meta ecosystem integration and the broadest hosted-availability via Together, Groq, and OpenRouter.

Frequently Asked Questions

What is Kimi K2.6?

Kimi K2.6 is Moonshot AI's open-weight 1-trillion-parameter Mixture-of-Experts language model released April 20, 2026 under a Modified MIT license. It activates 32 billion parameters per token, supports a 256,000-token context window, ships natively in INT4 quantization, and is natively multimodal via the 400M-parameter MoonViT vision encoder. It scales horizontally to 300 sub-agents executing 4,000 coordinated steps in a single autonomous run.

How much does Kimi K2.6 cost in 2026?

The official Kimi API charges $0.95 per 1 million input tokens (cache miss), $0.16 per 1 million cached input tokens (an 83% cache-hit discount), and $4.00 per 1 million output tokens. Consumer subscription tiers run from Adagio at $0 per month, Moderato at $15 per month, Allegretto at $31 per month, Allegro at $79 per month, up to Vivace at $159 per month on annual billing. Self-hosting the open weights carries only your own infrastructure cost.

Is Kimi K2.6 free?

Yes, in three ways. The Adagio consumer tier on kimi.com is free with 6 agent runs and 200 professional data requests per month. The model weights themselves are free to download from Hugging Face under the Modified MIT license — you can self-host on your own GPUs at no licensing cost up to 100 million monthly active users. And third-party hosts including OpenRouter, fal.ai, and Cline have at various times offered free trial credits.

What is the Kimi K2.6 license?

Modified MIT. The license is standard MIT with one rider: commercial deployments exceeding 100 million monthly active users must add credit attribution to Moonshot AI. Below that threshold, K2.6 is effectively unencumbered for commercial use, fine-tuning, derivative model release, and redistribution. This is materially looser than Meta's Llama 4 Community License (700 million MAU clause plus brand-attribution requirement).

How does Kimi K2.6 compare to Claude Opus 4.7?

On vendor-reported coding benchmarks, K2.6 is competitive with Anthropic's flagship — SWE-Bench Pro 58.6 vs Claude Opus 4.6 at 53.4 (Opus 4.7 numbers were not yet published in the same comparison table). On price, K2.6 at $0.95 input / $4.00 output per 1M tokens is roughly 5.3x cheaper on input and 6.25x cheaper on output than Claude Opus 4.7 at $5 / $25. Claude Opus 4.7 retains the edge on extended thinking, English-language nuance, and integration with Claude Code. K2.6 wins on price-per-quality and on the open-weight optionality that lets you self-host.

How does Kimi K2.6 compare to DeepSeek V4?

Both are Chinese open-weight flagships released within days of each other in April 2026. DeepSeek V4 is positioned as the general-purpose flagship with 1.6T parameters and 49B active per token (V-lineage), while DeepSeek R2 is the reasoning specialist (R-lineage). Kimi K2.6 has 1T parameters and 32B active. SWE-Bench Verified is roughly tied (80.2 vs 80.6). DeepSeek V4-Pro standard pricing is higher ($1.74 input / $3.48 output per 1M) than K2.6 ($0.95 / $4.00). K2.6's 300-sub-agent swarm orchestration is the clearest differentiator — DeepSeek does not ship a native equivalent.

How does Kimi K2.6 compare to Qwen 3.6?

Qwen 3.6 (Alibaba) leads on context window (1M vs K2.6's 256K) and per-token API price (Plus tier at $0.325 input / $1.95 output vs $0.95 / $4.00). The open Qwen 3.6 variants ship under Apache 2.0 with no revenue cap, which is structurally looser than Modified MIT's 100M MAU clause. Kimi K2.6 leads on agent swarm orchestration (300 sub-agents vs no native swarm in Qwen) and on coding benchmarks at the high end (SWE-Bench Pro 58.6 vs Qwen's 27B-dense at 77.2 SWE-Bench Verified). Pick Qwen for long context and cheapest API; pick K2.6 for agent workflows and frontier coding.

Can I self-host Kimi K2.6?

Yes. The weights are publicly available on Hugging Face at moonshotai/Kimi-K2.6 in safetensors format, including native INT4 quantization. The total INT4 download is approximately 594 GB. Recommended inference frameworks are vLLM, SGLang, and KTransformers. Practical hardware floor is 8xH100 80GB or comparable Blackwell-generation GPUs. Required transformers version is 4.57.1 or higher (under 5.0.0).

Does Kimi K2.6 have a REST API?

Yes. The official endpoint is platform.moonshot.ai (international) and platform.moonshot.cn (China). Pricing on the international API is in USD: $0.95 input cache miss, $0.16 cache hit, $4.00 output per 1M tokens. The model identifier is kimi-k2.6. Third-party access is also available via OpenRouter, fal.ai, and Cline. The OpenRouter pass-through rate is approximately $0.7448 input / $4.655 output per 1M, reflecting their margin and routing.

What languages does Kimi K2.6 support?

Natural languages: primarily English and Chinese, with Moonshot's training data weighted toward both. Coding languages: native support for Python, Rust, and Go is highlighted in the model card, with strong performance on JavaScript, TypeScript, C++, Java, and the broader long tail covered in SWE-Bench Multilingual where K2.6 scores 76.7. Vision input is supported natively via the MoonViT 400M-parameter encoder integrated into the same architecture.

What is Kimi Code CLI?

Kimi Code is Moonshot's terminal-native coding agent, comparable in product surface to Claude Code, OpenAI Codex CLI, and Google Antigravity. It is bundled into the Moderato consumer plan ($15 per month annual) at 1x usage and scales up to 30x usage on the Vivace tier ($159 per month). It runs autonomous coding tasks against a local repository with the Agent Swarm orchestration available on paying tiers.

Is Kimi K2.6 worth it for coding agents in 2026?

For cost-sensitive teams running long-horizon coding agents, yes — the combination of frontier-tier benchmarks (SWE-Bench Pro 58.6, LiveCodeBench v6 89.6), open weights under Modified MIT, and per-token pricing 5x to 6x cheaper than Claude Opus 4.7 makes it the most credible open challenger in April 2026. For teams whose workflows depend on Western jurisdiction, English-only documentation, or sensitive content that conflicts with PRC content moderation, the closed Western flagships may still be the safer choice. Score: 8.5/10.

Verdict: 8.5/10

Kimi K2.6 earns an 8.5/10 on three counts: open-weight frontier specs that genuinely compete with closed Western flagships, the most aggressive in-production agent swarm orchestration we have seen documented in April 2026, and per-token pricing that puts it 5-6x cheaper than Claude Opus 4.7 or GPT-5.5. The HLE-Full with tools score (54.0) and SWE-Bench Pro (58.6) numbers are credible signals, not pure benchmark theater.

What raises it: the Modified MIT license (100M MAU clause is loose by 2026 open-source-LLM standards), the 83% cache-hit pricing discount that compounds on iterative agent loops, and the native multimodal architecture via MoonViT. What is holding it back from a 9: we have not run it in production at the cockpit, vendor-published 12-hour autonomous run case studies need independent replication, and the PRC-aligned content moderation on the hosted endpoints is a real ceiling for Western publishing and research workflows.

Score breakdown:

- Features: 9.0/10 — frontier-tier on every axis we measure (context, multimodal, agent swarm, license, quantization)

- Ease of Use: 8.0/10 — strong API and consumer apps, but documentation split between Chinese and English plus musical-tempo plan-tier names slow plan selection

- Value: 9.4/10 — the cheapest frontier-tier model on per-token pricing in April 2026, with cache-hit discount that compounds further on real workloads

- Support: 7.5/10 — active GitHub repos and Hugging Face discussions, but English-language enterprise support coverage trails Anthropic and OpenAI

Final word: If you are running long-horizon coding agents at scale and your CFO has flagged the Claude or OpenAI bill, Kimi K2.6 is the open-weight challenger to put through a serious 30-day evaluation. If you depend on Western jurisdiction, English-only docs, or work that conflicts with PRC content moderation, the closed Western flagships are still the safer choice. We will revisit this review with hands-on production data after running K2.6 through our cockpit content pipeline alongside Claude Opus 4.7 in May 2026.

Key Features

Pros & Cons

Pros

- Frontier coding scores at fraction of US flagship cost — SWE-Bench Pro 58.6 beats GPT-5.4 (xhigh) at 57.7 and Claude Opus 4.6 at 53.4, while charging $0.95 / $4.00 per 1M tokens vs Opus 4.7 at $5 / $25

- Genuinely open weights under Modified MIT — 1T-parameter MoE you can self-host, fine-tune, and ship commercially up to 100M MAU without negotiating a license

- Agent Swarm scaling to 300 sub-agents over 4,000 steps is the most aggressive long-horizon coordination layer of any production model in April 2026

- Best-in-class HLE-Full with tools at 54.0, edging GPT-5.4 (52.1), Claude Opus 4.6 (53.0), and Gemini 3.1 Pro (51.4) on a benchmark designed to be borderline-impossible

- 256K context with native INT4 quantization keeps long-codebase prompts cheap and tractable on commodity GPU clusters

- Multimodal in the same architecture (MoonViT 400M) — no separate vision tower, no second API call to process screenshots in coding workflows

- Reddit r/LocalLLaMA developer sentiment is overwhelmingly positive — described as a legitimate Opus replacement that won't get silently nerfed because the weights are out

Cons

- We have not run K2.6 in production at the cockpit — Planet Cockpit's daily content pipeline is locked on Claude Opus 4.7 and Haiku 4.5, so this review is research-based, not hands-on (we tested K2.5 briefly in late 2025 for comparison only)

- Self-hosting the 1T-parameter weights still requires roughly 594 GB of memory in INT4 — practical for serious teams, but the 'open weights' line glosses over real infra cost most reviewers pay zero attention to

- Modified MIT license clause kicks in at 100 million MAU — fine for 99.9% of buyers, but any consumer-scale product with viral upside should read the attribution requirements before betting on K2.6

- Documentation outside the model card is split between Chinese and English, and the Kimi.com consumer plan tier names (Adagio, Moderato, Allegretto, Allegro, Vivace) make plan selection harder than necessary for non-Chinese-speaking SMBs

- Censorship and content moderation align with PRC regulatory requirements — sensitive geopolitical or historical topics will refuse or hedge, which is a real ceiling for some Western publishing and research workflows

Best Use Cases

Platforms & Integrations

Available On

Integrations

We're developers and SaaS builders who use these tools daily in production. Every review comes from hands-on experience building real products — DealPropFirm, ThePlanetIndicator, PropFirmsCodes, and many more. We don't just review tools — we build and ship with them every day.

Written and tested by developers who build with these tools daily.

Frequently Asked Questions

What is Kimi K2.6?

Moonshot AI's open-weight 1T-parameter MoE flagship that scales to 300 sub-agents and 4,000 coordinated steps for long-horizon coding.

How much does Kimi K2.6 cost?

Kimi K2.6 has a free tier. All features are currently free.

Is Kimi K2.6 free?

Yes, Kimi K2.6 offers a free plan.

What are the best alternatives to Kimi K2.6?

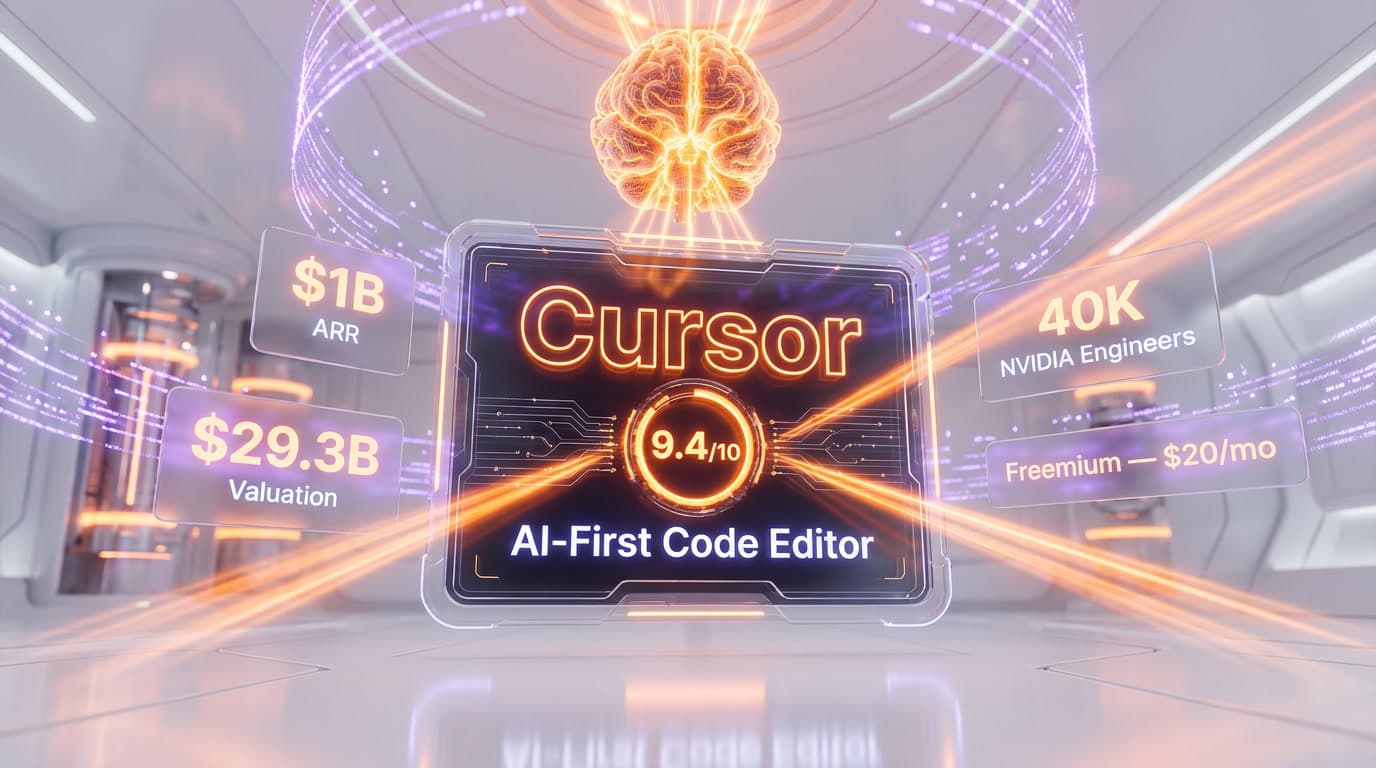

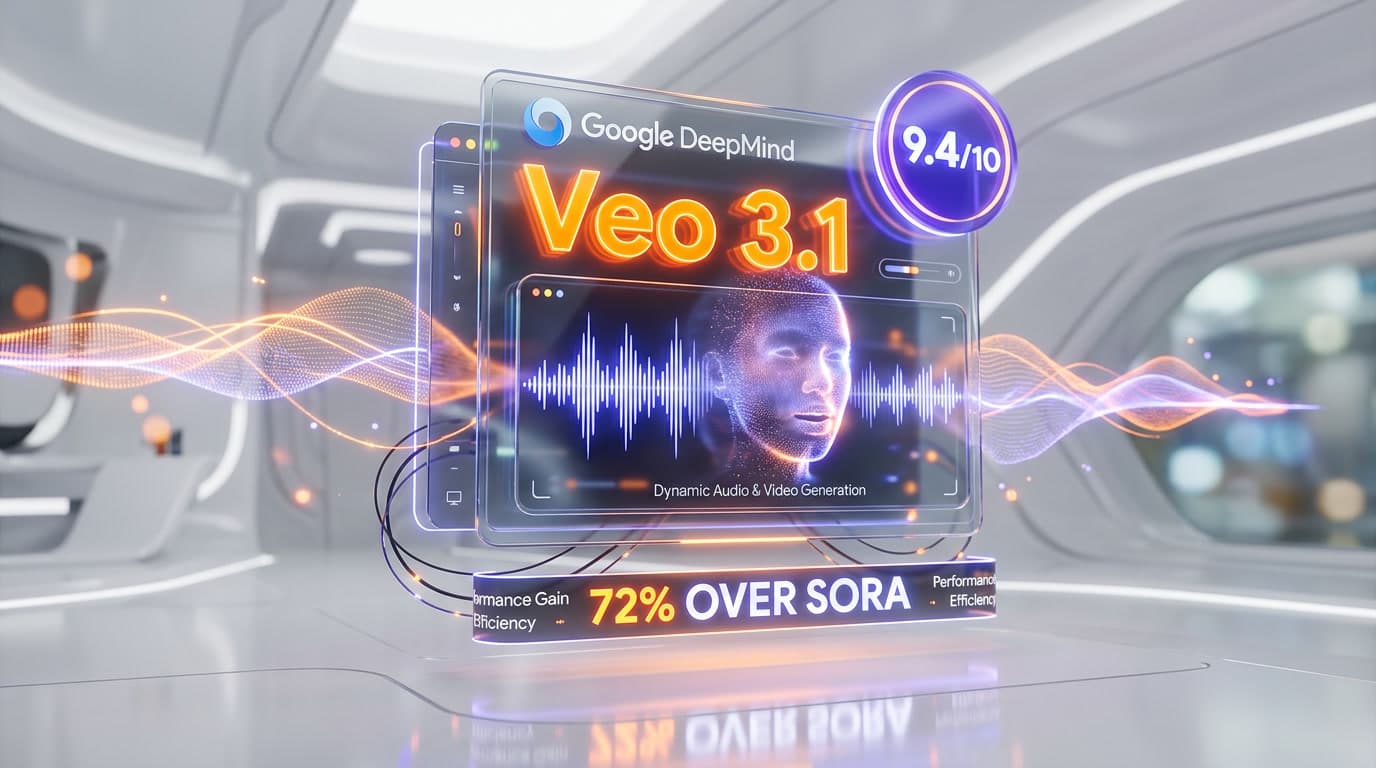

Top-rated alternatives to Kimi K2.6 include Claude Code (9.9/10), Cursor (9.4/10), Veo 3.1 (9.4/10), Nano Banana Pro (9.3/10) — all reviewed with detailed scoring on ThePlanetTools.ai.

Is Kimi K2.6 good for beginners?

Kimi K2.6 is rated 8/10 for ease of use.

What platforms does Kimi K2.6 support?

Kimi K2.6 is available on Web, iOS, Android, REST API, Hugging Face (open weights), Kimi Code CLI.

Does Kimi K2.6 offer a free trial?

Yes, Kimi K2.6 offers a free trial.

Is Kimi K2.6 worth the price?

Kimi K2.6 scores 9.4/10 for value. We consider it excellent value.

Who should use Kimi K2.6?

Kimi K2.6 is ideal for: Long-horizon autonomous coding agents that iterate over 12+ hours on a single brief, Front-end generation from natural language with visual screenshot grounding, Multi-agent orchestration where 100-300 specialized sub-agents collaborate on a single research or engineering task, Self-hosted enterprise inference on H100/B200 clusters with no per-token vendor lock-in, Cost-sensitive backend coding routines that would be 8-10x more expensive on Claude Opus 4.7 or GPT-5.5, Chinese-market deployments where Moonshot's domestic infra and language coverage outperform US models, Browser-control and web research agents — K2.6 scores 83.2 on BrowseComp, Mathematical reasoning under tools — 93.2 on MathVision with Python, AIME 2026 problem #15 solved in ~30 minutes.

What are the main limitations of Kimi K2.6?

Some limitations of Kimi K2.6 include: We have not run K2.6 in production at the cockpit — Planet Cockpit's daily content pipeline is locked on Claude Opus 4.7 and Haiku 4.5, so this review is research-based, not hands-on (we tested K2.5 briefly in late 2025 for comparison only); Self-hosting the 1T-parameter weights still requires roughly 594 GB of memory in INT4 — practical for serious teams, but the 'open weights' line glosses over real infra cost most reviewers pay zero attention to; Modified MIT license clause kicks in at 100 million MAU — fine for 99.9% of buyers, but any consumer-scale product with viral upside should read the attribution requirements before betting on K2.6; Documentation outside the model card is split between Chinese and English, and the Kimi.com consumer plan tier names (Adagio, Moderato, Allegretto, Allegro, Vivace) make plan selection harder than necessary for non-Chinese-speaking SMBs; Censorship and content moderation align with PRC regulatory requirements — sensitive geopolitical or historical topics will refuse or hedge, which is a real ceiling for some Western publishing and research workflows.

Best Alternatives to Kimi K2.6

Ready to try Kimi K2.6?

Start with the free plan

Try Kimi K2.6 Free →