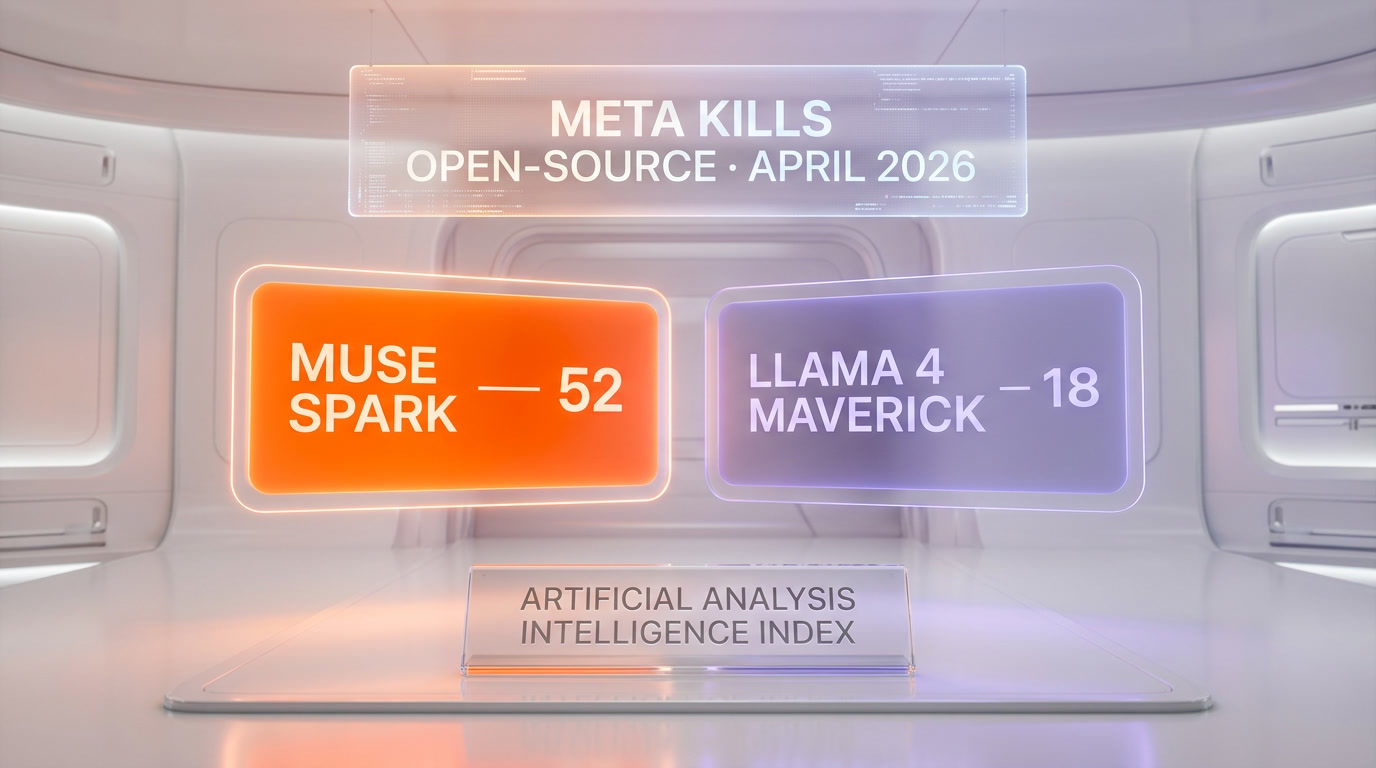

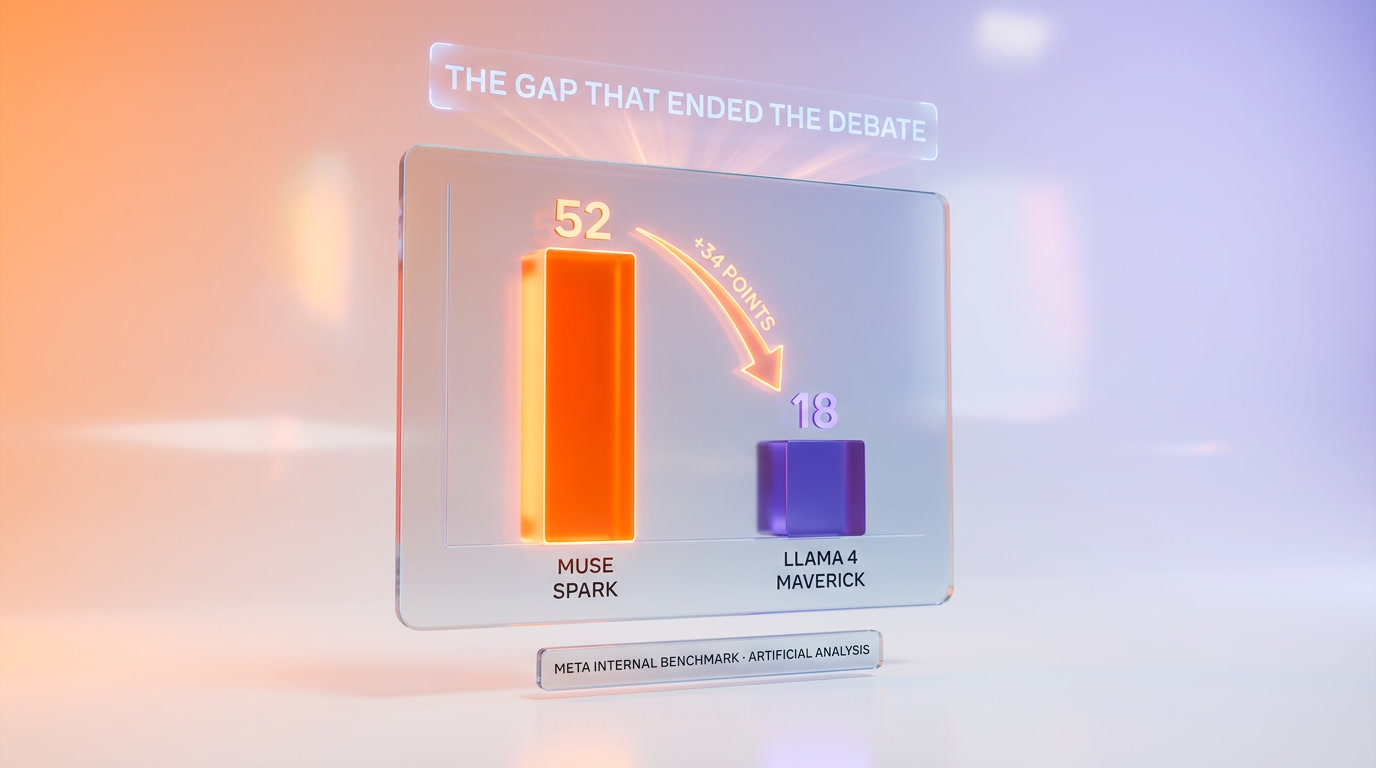

Meta just killed its own open-source playbook. Muse Spark, the first proprietary frontier model from Meta Superintelligence Labs, scored 52 on the Artificial Analysis Intelligence Index. Llama 4 Maverick — Meta's current flagship open-weights model — sits at 18. That is a 34-point gap. Muse Spark is available only on meta.ai. No open weights. No Hugging Face drop. No API at launch. This is the single most dramatic strategic reversal in frontier AI since OpenAI closed GPT-4 in 2023, and it was led by Alexandr Wang — the ex-Scale AI founder who joined Meta in June 2025 as part of the $14.3 billion Scale AI investment and now runs Superintelligence Labs. We covered the launch announcement last week. This article is the post-mortem: what developers lose, what Zuck is trying to steal from Anthropic, and what comes next for open-source AI.

The score that tells everything

On April 8, 2026, Meta shipped Muse Spark on meta.ai. Within 48 hours, Artificial Analysis — the independent benchmarking outfit that has become the de facto scoreboard for frontier models — published its Intelligence Index score. Muse Spark: 52. That number is not just "good for Meta." It puts Muse Spark in the top tier of 2026 frontier models, roughly shoulder to shoulder with the current generation of closed-source leaders and well ahead of every open-weights model on the market.

Llama 4 Maverick, by contrast, scored 18. Llama 4 Maverick is Meta's open-weights flagship, released in 2025, the model that was supposed to prove Meta could compete at the frontier while keeping weights free. The 34-point delta is the story. Meta is not just hedging — Meta has demonstrated, with its own benchmark numbers, that the closed-source path produces a categorically better model than the open-source path it spent years evangelizing.

- Muse Spark (closed, meta.ai only): 52 on Artificial Analysis Intelligence Index.

- Llama 4 Maverick (open weights): 18.

- Delta: 34 points — a chasm, not a gap.

- Distribution: meta.ai web and app only. No weights, no API, no Hugging Face.

- Lead: Alexandr Wang, Chief AI Officer, Meta Superintelligence Labs.

The numbers come from public reporting by VentureBeat and CNBC on April 8, 2026, confirmed by WaveSpeedAI's independent evaluation suite. This is not a leak. Meta is publishing these numbers itself. Zuck wants you to see them. Zuck wants the industry to see them. The message is simple: open weights were holding us back, and we are done holding ourselves back.

Llama 4 Maverick: the last open weights?

Llama 4 was supposed to be the generation where Meta's open-weights strategy caught up with the closed frontier. When Llama 4 Maverick and Llama 4 Scout launched in 2025, the pitch was that open weights plus Meta's compute scale would close the gap with GPT and Claude. The gap did not close. The Artificial Analysis score of 18 is not a rounding error; it is a generation behind.

For a year, Meta's AI leadership tried to frame this as philosophical. "Open-source democratizes AI." "Open weights are good for the ecosystem." "The community will close the gap." Those arguments were sincere and they were also a cover for a harder truth: open-sourcing a frontier model is expensive, slow, and strategically suicidal once your competitors stop matching you.

The rumor inside Meta for most of 2025 was that Llama 5 would be the make-or-break open-weights release. Muse Spark answers that question by not being called Llama 5. The name change is the strategy change. Llama is legacy. Muse is the future. And the future is closed.

Will Meta ship a Llama 5? Publicly, Meta has said nothing to kill the line. Internally, multiple reports suggest the Llama team has been deprioritized, with top researchers reassigned to the Muse family. If Llama 5 ships at all, it will ship as a smaller, cheaper, open-weights model — a community offering, not a frontier bet. The frontier is Muse Spark and whatever comes after it, and it is all closed.

Why Meta abandoned open-source

There are three reasons the open-weights strategy died at Meta, and all three have been visible for 18 months.

1. The competitive math stopped working

When Llama 2 launched in mid-2023, open-sourcing a near-frontier model was a defensive move that worked. It commoditized Meta's competitors' moat and positioned Meta as the benevolent open-source leader. In 2023 and early 2024, the gap between open-weights leaders and closed-frontier leaders was small enough that Meta could credibly claim parity. By mid-2025, that gap had widened — GPT-5, Claude Opus 4, and Gemini 3 were all meaningfully ahead of anything in the open-weights ecosystem. By early 2026, the gap was a chasm. You cannot win a race you are 34 points behind in.

2. The infrastructure bill came due

Training a frontier-class model in 2026 costs a serious fraction of a billion dollars in compute alone. Giving the weights away after spending that much means every competitor and every nation-state gets the full output of your most expensive engineering project for free. That math was tolerable when training cost $20 million. It is not tolerable when training costs $500 million and your competitors are not reciprocating. OpenAI didn't open-source GPT-5. Anthropic didn't open-source Claude Opus. Google didn't open-source Gemini 3. Meta was the last major lab playing the open game, and it was paying the bill alone.

3. Alexandr Wang arrived with a different worldview

Alexandr Wang came to Meta through a $14.3 billion investment that gave Meta a 49% stake in Scale AI and brought Wang in as Chief AI Officer and head of Superintelligence Labs. Wang did not build Scale AI on open-source ideology. Scale was a data-labeling company that lived and died on proprietary data pipelines, enterprise contracts, and tightly controlled intellectual property. He came to Meta with a closed-source operator's mindset, not a Facebook-era open-source mindset. Muse Spark is the first model shipped under his leadership. The fact that it is closed is not an accident.

The Alexandr Wang era

The $14.3 billion Scale AI deal was the largest AI talent acquisition in history when it closed. It was also, in hindsight, an acqui-hire for a single person. Wang brought Scale AI's engineering team, its data operations, and — critically — its philosophy about how to build frontier AI. That philosophy is straightforward: closed weights, enterprise distribution, proprietary data, aggressive IP protection.

Inside Meta, Wang now runs Superintelligence Labs as a separate unit with its own compute budget, its own research roadmap, and its own release cadence. Muse Spark is his first public output. The strategic posture is a near-complete mirror of Anthropic's and OpenAI's — which is not a coincidence. Wang is explicitly trying to out-execute Anthropic and OpenAI at their own game, using Meta's compute and Meta's consumer distribution as the edge.

The next question is whether Meta's consumer distribution is actually an edge. Muse Spark ships on meta.ai, but meta.ai is a fraction of ChatGPT's scale and a fraction of Claude's developer mindshare. Wang's bet is that WhatsApp, Instagram, and Facebook integration will make up the gap. That bet is unproven.

What developers lose

For the open-source AI community, Meta's pivot is a body blow. Llama was the anchor of the open-weights ecosystem. Every major open-source project — from Ollama to vLLM to LM Studio to the long tail of fine-tuned domain models — built on Llama as the default base model. Losing Llama as the frontier-chasing flagship changes the math for thousands of projects.

Three specific losses matter most:

- No fine-tuning on frontier weights. The entire LoRA and QLoRA ecosystem depends on having frontier-quality base models to fine-tune. Muse Spark's 52-point class of capability is now locked behind meta.ai's consumer product. You cannot fine-tune it on your legal corpus, your medical data, your support transcripts. With no API at launch, you are either chatting through meta.ai or building on Llama 4 Maverick at 18.

- No local or air-gapped deployment. Regulated industries (defense, healthcare, finance, government) that chose Llama specifically because the weights could run on internal infrastructure have no Muse Spark story. They are limited to whatever Meta's hosted product supports, and as of launch that includes no enterprise-grade offering.

- No free tier for research. Academic labs, independent researchers, and students who built their careers on open Llama weights have no equivalent access to Muse Spark. The research-to-production pipeline that powered half of the post-2023 AI paper explosion just lost its free base model.
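To make the fine-tuning point concrete, here is a minimal sketch of why LoRA-style tuning needs the base weights in hand. The layer shape and rank below are hypothetical round numbers chosen for illustration, not values from Llama 4 or Muse Spark, and the helper names are ours. The sketch shows two things: a low-rank adapter trains far fewer parameters than full fine-tuning, and its forward pass still multiplies by the frozen base matrix `W`, which is exactly what a weights-free release withholds.

```python
# Illustrative sketch only: shapes, rank, and function names are hypothetical.

def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole d_out x d_in weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a LoRA update B @ A, with B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, scale=1.0):
    """Compute (W + scale * B @ A) @ x.

    W is the frozen base weight. Without downloadable weights there is no W
    to plug in here, so the adapter has nothing to attach to.
    """
    base = matvec(W, x)                 # frozen base-model path
    adapter = matvec(B, matvec(A, x))   # trainable low-rank path
    return [b + scale * a for b, a in zip(base, adapter)]


if __name__ == "__main__":
    d, r = 4096, 8  # hypothetical layer width and adapter rank
    full = full_finetune_params(d, d)
    lora = lora_params(d, d, r)
    print(f"full fine-tune: {full:,} trainable parameters")
    print(f"LoRA rank {r}: {lora:,} trainable parameters ({full // lora}x fewer)")
```

Under these assumed numbers the adapter is a small fraction of the layer, which is why the technique spread so widely on open base models. None of that economy is available when the base matrix cannot be downloaded.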

Beyond those concrete losses, there is a cultural one: the signal Meta is sending to other labs. If Meta — the one lab with a structural, ideological commitment to open-sourcing — is pulling back, every other lab with a frontier model has just had their "stay closed" decision validated. Mistral will face new pressure from its investors to close. DeepSeek will face new pressure from its own government. Expect the open frontier to shrink further in the next 12 months, not grow.

What Zuck is trying to steal from Anthropic

The strategic question is not "why did Meta close Muse Spark." It is "what is Meta trying to become." The answer, when you read Wang's recent interviews and Zuck's all-hands comments, is clear: Meta is trying to build its own Anthropic.

Anthropic has three things Meta wants and does not have. First, a reputation for safety-and-alignment rigor that lets it sell into enterprises and governments at premium prices. Second, a coding-first Claude product line that has captured the developer workflow layer in a way no other lab has matched — read our coverage of Claude for the full picture. Third, an identity as the "serious" frontier lab, the one where you go when you want a thoughtful partner rather than a consumer toy.

Muse Spark's closed-weights positioning is Zuck copying Anthropic's posture. The next moves will tell us how far the copy goes. Watch for: a Meta API that targets coding specifically (Meta does not currently compete with Claude Code or GPT-5 Codex in that workflow layer); a Meta enterprise offering with SOC 2, HIPAA, and FedRAMP certifications; a Meta safety team release schedule that starts looking more like Anthropic's responsible scaling policy and less like Facebook's move-fast-and-break-things posture.

This copy is not dishonorable — it is smart. Anthropic's position is the single most valuable position in frontier AI in 2026, and Meta is the only lab other than Google with the compute, the cash, and the consumer reach to credibly compete for it. The question is whether Wang can ship an "Anthropic-shaped" Meta in 12 to 24 months. Muse Spark is step one. It is not the whole plan.

The open-source vs closed battle in 2026

With Meta out, the open frontier is now carried by three labs of scale: DeepSeek, Mistral, and Google through its Gemma line. The table below places them alongside the closed labs.

| Lab / Model family | Openness | Frontier capability (2026) | Signal |
|---|---|---|---|
| OpenAI (GPT-5) | Closed | Top tier | Never opened anything since 2023 |
| Anthropic (Claude) | Closed | Top tier | Explicit closed-weights philosophy |
| Google (Gemini 3) | Closed (Gemini) / Open (Gemma) | Top tier (Gemini) | Split strategy, Gemma as community offering |
| Meta (Muse Spark) | Closed (as of April 2026) | Top tier (score 52) | Just pivoted |
| Mistral | Mixed (open small, closed large) | Mid to high tier | Under pressure to fully close |
| DeepSeek (R2, V3) | Open weights | High tier (see DeepSeek R2) | Last major open frontier lab |
| Cohere, AI21, others | Mostly closed | Mid tier | Enterprise-only plays |

DeepSeek and Mistral are now carrying the open-source frontier largely alone. Google's Gemma line is open but deliberately positioned as a smaller community offering, not a frontier peer. If DeepSeek R2's next generation does not close the gap with closed frontier models, the open-weights ecosystem will spend 2026 and 2027 being structurally outperformed and slowly outmaneuvered in procurement.

The flip side: open weights still win on four dimensions that closed weights cannot match. Local deployment. Fine-tuning rights. Cost of ownership at scale. And — crucially — longevity, because an open-weights model that you downloaded in 2026 is still yours in 2030, whereas a closed API can be deprecated, price-hiked, or censored at the provider's discretion. That durability argument matters more to enterprises than most AI vendors admit.

Will Mistral or DeepSeek fill the gap?

The honest answer is "partially, and not immediately." Mistral has the European regulatory tailwind, the Le Chat consumer product, and a team that has consistently shipped on budget. But Mistral's frontier models have been closed since 2024 — the open Mistral 7B era is long gone, and the company is increasingly pitched to investors as a European closed-frontier play, not an open-source champion.

DeepSeek is the real wildcard. DeepSeek R2 and DeepSeek V3 are the only open-weights models currently in serious conversation with the closed frontier. DeepSeek's compute budget is smaller than Meta's, but its engineering efficiency has repeatedly punched above its weight. If DeepSeek R3 ships in 2026 at a genuine frontier level while keeping weights open, the open-source ecosystem lives. If it does not, the next 18 months will look like a steady consolidation of capability into the closed labs, with open weights relegated to a permanent second tier.

There is also a long tail — Alibaba's Qwen line, Tencent's Hunyuan, Falcon, Cohere Command, and the various startup frontier attempts — any of which could surprise. None of them are currently positioned to replace Llama as the ecosystem's anchor.

Our verdict

Muse Spark scoring 52 while Llama 4 Maverick scores 18 is the clearest single data point we have seen that closed-weights training is outperforming open-weights training at the frontier, by a margin that can no longer be explained away. Meta's decision to go closed is the correct business decision given those numbers. It is also a serious loss for the open-source AI ecosystem and, if you zoom out, for the long-term competitive health of the AI industry.

Three takeaways you should remember:

- Closed won this round. The 34-point gap is not a benchmark quirk. Every major lab that stayed closed is now ahead of every major lab that stayed open, with DeepSeek as the only serious counterexample.

- Alexandr Wang is the person to watch inside Meta. Muse Spark is step one of an Anthropic-shaped playbook. Expect enterprise offerings, a coding product, and a safety team rebrand within 12 months.

- The open-source dream is not dead — but it is thinner. DeepSeek R2, Google Gemma 4, and Mistral's smaller releases are carrying a load that used to be shared with Meta. If one of them falters, the open frontier disappears.

Antho's one-line take: Zuck just traded idealism for a shot at the top tier, and the numbers say he was right to do it. Whether the industry is better for his choice is a different question, and we will be answering it in real time for the rest of 2026.


Frequently asked questions

What is Meta Muse Spark?

Muse Spark is the first proprietary frontier AI model from Meta Superintelligence Labs, launched on April 8, 2026 and available exclusively on meta.ai. It is the first Meta model since 2023 that is not released as open weights. It scores 52 on the Artificial Analysis Intelligence Index, compared to 18 for Meta's open-weights Llama 4 Maverick.

Why did Meta stop open-sourcing its frontier models?

Three reasons: the competitive gap between closed-frontier labs (OpenAI, Anthropic, Google) and open-weights models widened to a level Meta could no longer close for free; training cost per frontier model reached hundreds of millions of dollars, making weight release economically unsustainable when competitors were not reciprocating; and Alexandr Wang, who joined Meta as Chief AI Officer after the $14.3 billion Scale AI deal in June 2025, brought a closed-source operator's worldview to Meta's AI leadership.

What is the Artificial Analysis Intelligence Index score for Muse Spark?

Muse Spark scored 52 on the Artificial Analysis Intelligence Index after its April 8, 2026 launch. This places it in the top tier of 2026 frontier models, well ahead of the 18 scored by Llama 4 Maverick and roughly competitive with the leading closed-source models from OpenAI, Anthropic, and Google.

Is Muse Spark available on Hugging Face or via API?

No. At launch, Muse Spark is available only through meta.ai — Meta's consumer chat product on web and app. There is no open-weights release, no Hugging Face drop, and no public API. Meta has not published a timeline for an API, and no enterprise or developer-grade offering has been announced as of this writing.

Who is Alexandr Wang and what is his role at Meta?

Alexandr Wang is the founder of Scale AI. In June 2025, Meta invested $14.3 billion in Scale AI for a 49% stake, and as part of that deal Wang joined Meta as Chief AI Officer and head of Meta Superintelligence Labs. Muse Spark is the first frontier model shipped under his leadership and reflects his preference for closed-weights, enterprise-grade distribution over Meta's historical open-source posture.

What does this mean for Llama 5?

Meta has not publicly cancelled the Llama line, but the naming change from Llama to Muse signals that the flagship frontier effort has moved away from open weights. Multiple reports suggest the Llama team has been deprioritized and top researchers reassigned to the Muse family. If a Llama 5 ships, it is likely to be a smaller, cheaper, community-facing model rather than a frontier bet.

What do developers lose with Meta's closed-source pivot?

Three concrete losses: the ability to fine-tune on frontier-quality Meta weights (LoRA, QLoRA, domain-specific tuning); the ability to run Meta's best model locally or in air-gapped environments for regulated industries like defense, healthcare, and finance; and free access to a frontier base model for academic and independent research. The LoRA and fine-tuning ecosystem that was built on Llama has no Muse Spark equivalent.

Can DeepSeek or Mistral replace Meta in the open-source AI ecosystem?

Partially. DeepSeek R2 and V3 are currently the only open-weights models in serious conversation with the closed frontier, and DeepSeek has consistently punched above its weight on compute efficiency. Mistral's frontier models have been closed since 2024, so its role as an open-source leader is limited. Google's Gemma 4 is open but positioned as a smaller community offering rather than a frontier peer. None of them currently matches the ecosystem anchor role Llama played.

Is Meta becoming the next Anthropic?

That appears to be the strategic intent. Alexandr Wang's playbook at Meta mirrors Anthropic's positioning: closed-weights philosophy, enterprise distribution, safety and alignment rigor, and a shift toward the serious-partner brand image that Anthropic owns in 2026. Muse Spark is the first move. Expect a coding-focused API, enterprise certifications like SOC 2 and HIPAA, and a safety team rebrand within the next 12 to 24 months.

What is the difference between Muse Spark and Llama 4 Maverick?

Muse Spark is closed-weights, available only on meta.ai, and scores 52 on the Artificial Analysis Intelligence Index. Llama 4 Maverick is open-weights, freely downloadable, and scores 18 on the same benchmark. The 34-point gap is the clearest signal that closed-source training is currently outperforming open-source training at the frontier. Llama 4 Maverick remains Meta's best option for developers who need fine-tuning rights and local deployment; Muse Spark is Meta's best option for consumer chat quality.