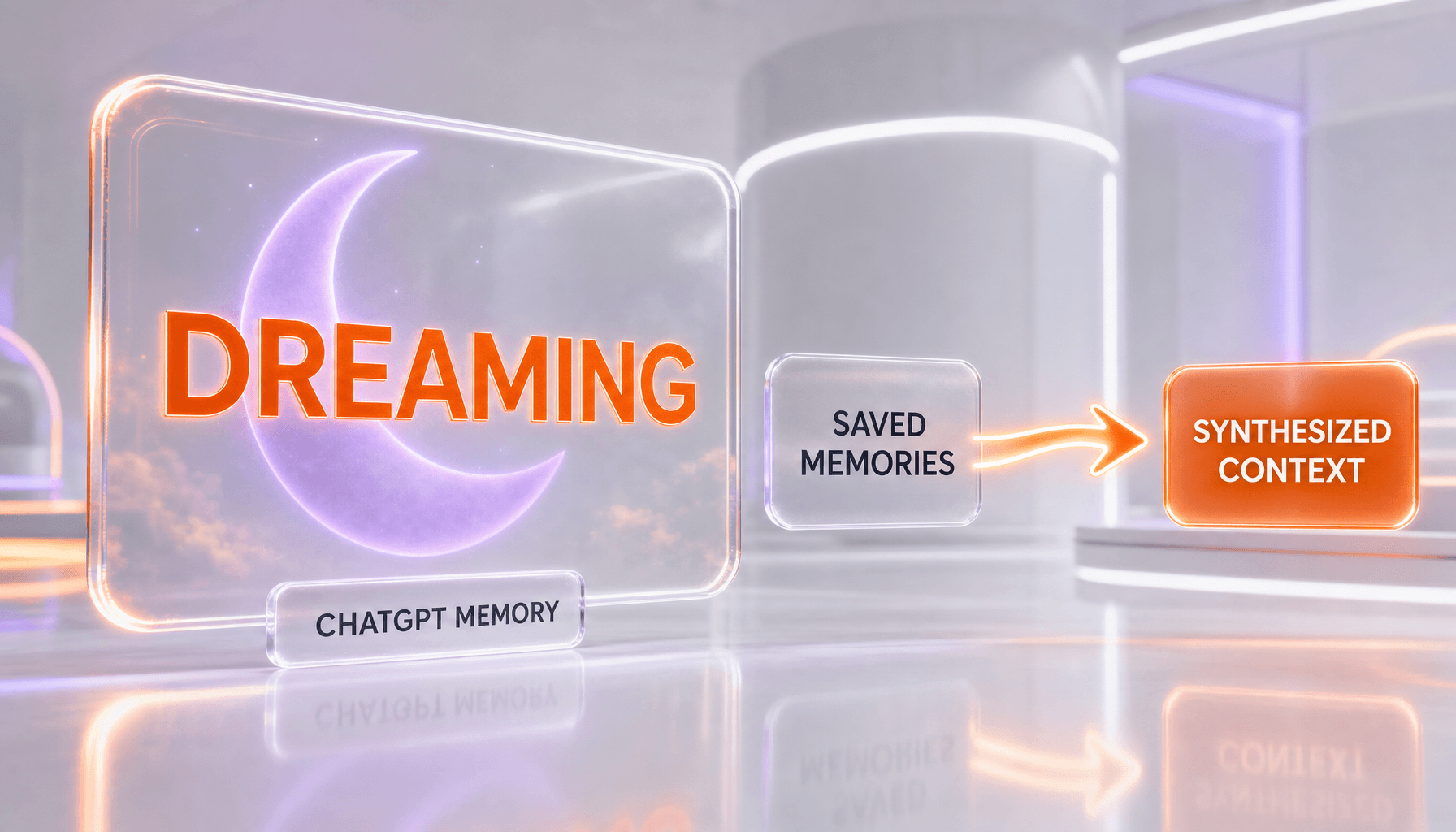

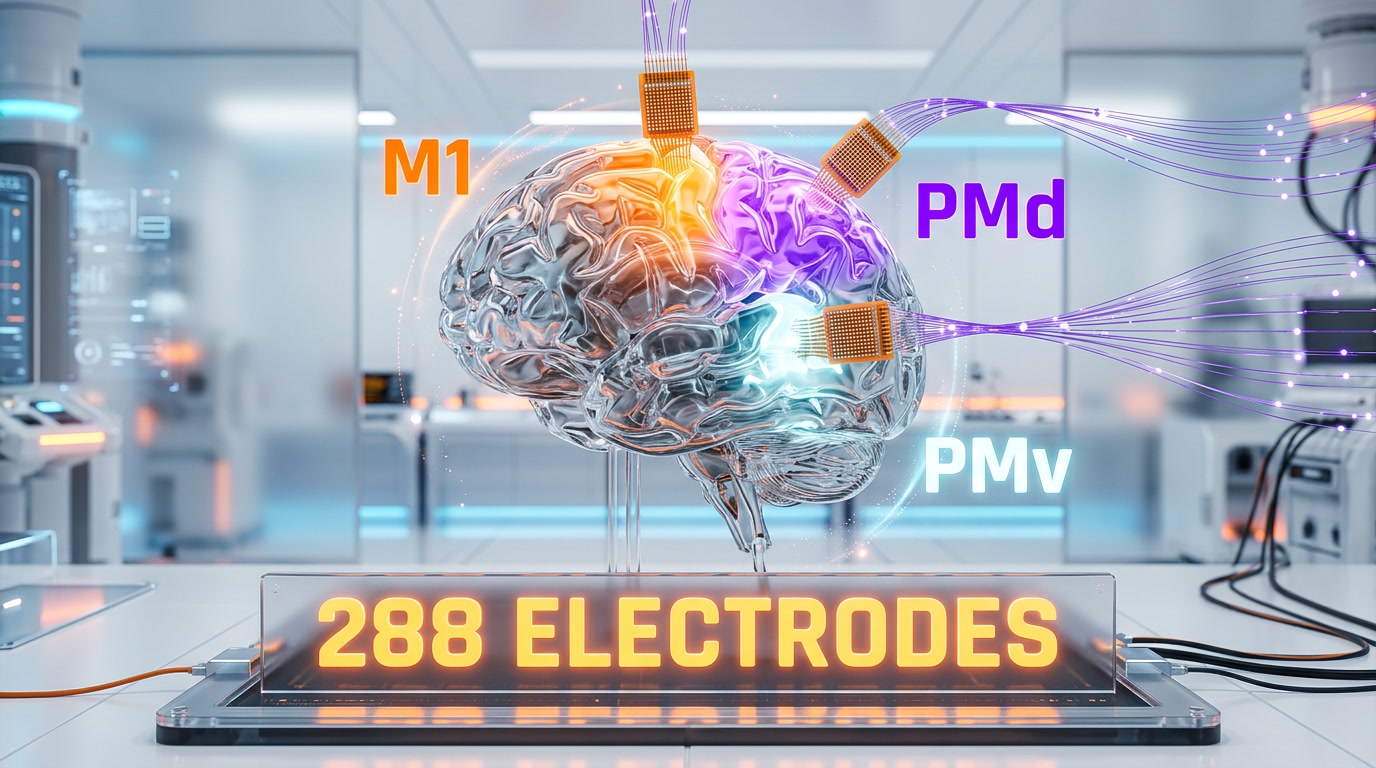

Three rhesus macaques navigated a stereoscopic first-person virtual forest with thought alone, using an intracortical brain-computer interface (iBCI) that recorded from three regions: primary motor cortex (M1), dorsal premotor cortex (PMd), and ventral premotor cortex (PMv). The study, published in Science Advances in April 2026, shows real-time decoding of neural signals into velocity commands with session success rates up to 96 percent. PMd delivered the best flexible control without retraining. The work is a credible bridge toward wheelchair-grade iBCIs for paralyzed people. Our researched score: 9.2 out of 10.

What Happened: The Study In One Paragraph

In April 2026, a team of neuroscientists published "Intracortical brain-computer interface for navigation in virtual reality in macaque monkeys" in Science Advances, DOI 10.1126/sciadv.adw3876. Three rhesus macaques were each implanted with three 96-channel Utah arrays: one in the primary motor cortex (M1), one in the dorsal premotor cortex (PMd), and one in the ventral premotor cortex (PMv). That gives 3 × 96 = 288 electrodes per monkey, and an AI model decoded their activity into continuous velocity commands that drove a first-person avatar through a stereoscopic VR forest. The monkeys steered around obstacles, switched targets mid-motion, and controlled both a disembodied sphere and a full embodied avatar. Individual session success rates reached 96 percent. Signals from PMd alone delivered the best real-time, flexible control and the cleanest task-switching without retraining. For comparison, Neuralink's 2021 monkey Pong demo decoded motor-cortex activity to move a two-dimensional cursor; this Science Advances work moves a first-person 3D avatar through a photoreal forest using three arrays across three regions at once.

Why That Matters In 60 Seconds

- 288 electrodes, 3 regions, 3 monkeys — the first published iBCI demo that uses M1 + PMd + PMv simultaneously for real-time VR navigation.

- PMd wins for flexible control — dorsal premotor cortex gave the best task-switching performance, which matters more than raw accuracy for real-world use.

- Up to 96 percent session success — this is not a toy demo; it is production-grade decoding on complex 3D tasks.

- The AI model is the unlock — without modern neural-signal decoders, these non-stationary signals could not be turned into continuous velocity commands in real time.

- Direct line to paraplegic mobility — the same loop, with the same arrays, could drive a powered wheelchair or a VR rehabilitation environment for a human with spinal-cord injury.

Our Methodology: We Researched This, We Did Not Run It

We want to be transparent about what this article is and is not. We did not conduct this study. We did not implant electrodes, we did not train monkeys, and we have no first-hand access to the raw neural data. This is a researched synthesis, not a hands-on review. Our sources are the Science Advances paper of record, the Phys.org news write-up, the bioRxiv preprint that preceded peer review, the KU Leuven press office release, and IEEE Spectrum coverage. We cross-checked every number and every region name against the published paper before writing. Where the press coverage drifted from the paper, we followed the paper. Where the paper leaves something open — long-term array stability beyond the reported sessions, for example — we say so in plain language. This is the voice we use for news-research articles. For tools and models we install and use ourselves, we use a different voice and say "we tested".

This is the same discipline we brought to our coverage of Gemini Robotics-ER 1.6 — another frontier where AI is pushing into physical and biological reality — and to Anthropic's 171 functional-emotions paper, where we refused to hype research we had not replicated. Same rule here: we report what the paper actually says, we do not embellish.

The Three Cortex Regions: M1, PMd, PMv In Plain English

The motor cortex is not a single chunk. It is a cluster of regions that do different jobs in the movement pipeline. This study recorded from three of them at once, and the reason that matters is that each region carries a different flavor of the movement signal. Pulling from all three gives a decoder more to work with than a single array ever could.

M1 — Primary Motor Cortex

M1 is the classic motor-execution region. It fires most strongly when a movement is actually happening, and the activity of its neurons correlates closely with limb velocity, force, and direction. Most existing human iBCIs, including the BrainGate clinical trials and Neuralink's first human-participant demos, rely heavily on M1. M1 is reliable, but it is also the most movement-locked region: its signal is strongest when there is a specific intended motion to decode.

PMd — Dorsal Premotor Cortex

PMd sits just in front of M1 and codes for the plan of a movement more than the movement itself. It represents what you intend to do and where you intend to go, often hundreds of milliseconds before M1 lights up. For a navigation task — "head toward that tree, then pivot around the rock" — PMd carries richer intent information. The Science Advances paper reports that PMd was the single best region for real-time flexible navigation and target switching without retraining. That is the punchline of the study for a lot of neuroscientists: PMd beat M1 on the task that matters most for assistive devices.

PMv — Ventral Premotor Cortex

PMv sits below PMd and is historically associated with hand and grasp control, but it also encodes visuomotor integration — how the brain matches what the eyes see to what the body should do. PMv contributed extra signal that helped the decoder stay accurate when the visual scene changed. A 2026 companion paper in Scientific Reports ("An intracortical brain-machine interface based on macaque ventral premotor activity") reinforces that PMv alone can drive BMI control, but the Science Advances team showed PMv adds most value in combination with M1 and PMd.

How The AI Decoder Actually Works

Raw neural signals are messy. Each electrode picks up voltage fluctuations from dozens of neurons at once. The signal drifts from session to session as the brain and the array co-adapt. Movement plans are encoded as distributed patterns across hundreds of neurons, not as clean labeled channels. Turning that into "move forward at 0.7 meters per second, rotate 15 degrees left" in real time is a hard machine-learning problem.
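To make that kind of command concrete, here is a minimal sketch of how a velocity command could advance an avatar's pose over time. This is our own illustration, not code from the paper. The `Pose` class, the `step_pose` function, the 50 ms step, and the reading of "15 degrees left" as a turn rate in degrees per second are all assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x: float        # metres
    y: float        # metres
    heading: float  # radians, counter-clockwise from the +x axis


def step_pose(pose: Pose, forward_mps: float, turn_deg_per_s: float, dt: float) -> Pose:
    """Advance the avatar by one time step of dt seconds.

    Positive turn_deg_per_s is a left (counter-clockwise) turn.
    """
    heading = pose.heading + math.radians(turn_deg_per_s) * dt
    x = pose.x + forward_mps * math.cos(heading) * dt
    y = pose.y + forward_mps * math.sin(heading) * dt
    return Pose(x, y, heading)


# One second of "0.7 m/s forward, 15 deg/s left" in 50 ms steps (illustrative values).
pose = Pose(0.0, 0.0, 0.0)
for _ in range(20):
    pose = step_pose(pose, forward_mps=0.7, turn_deg_per_s=15.0, dt=0.05)
```

After the loop the avatar has curved gently to the left, ending with a heading 15 degrees from where it started. A real VR engine would do this integration itself; the point is only that the decoder's job ends at the velocity command.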

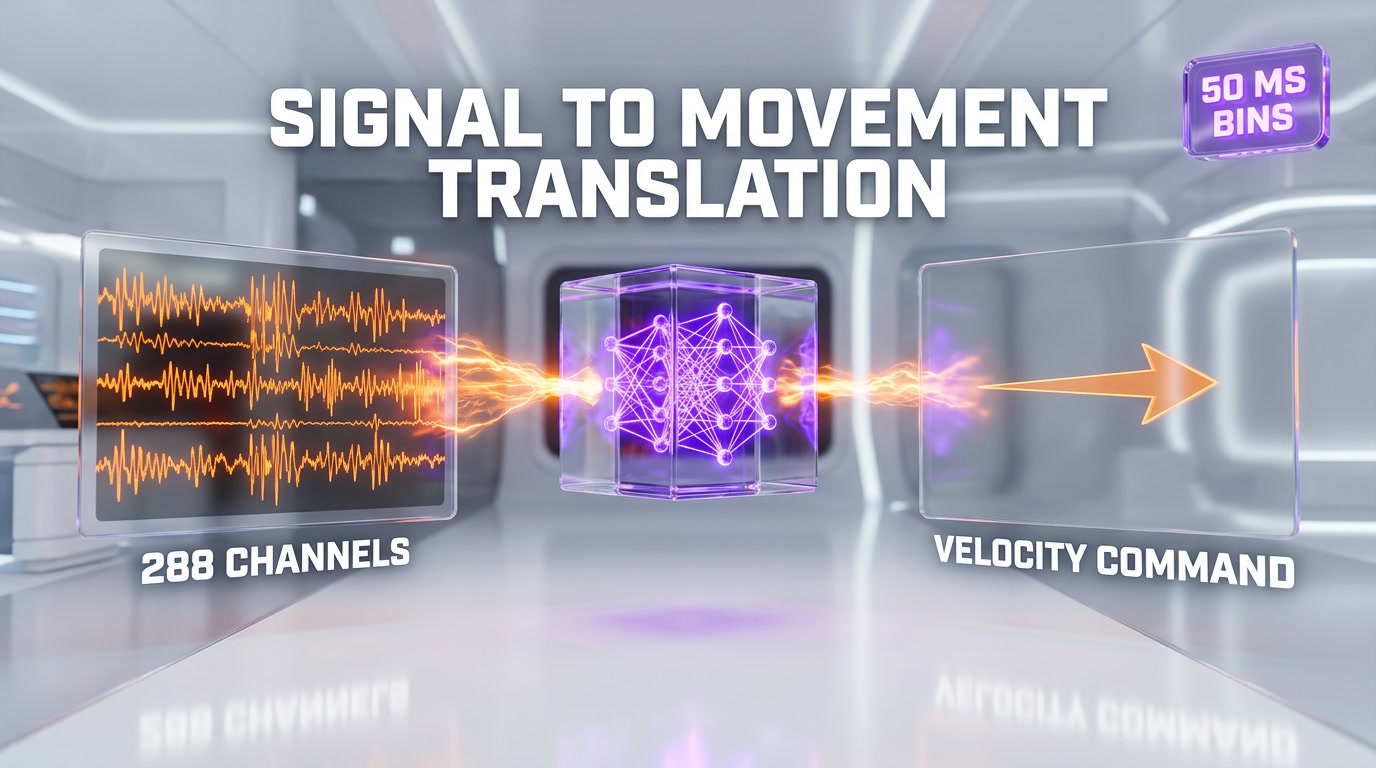

The study's decoder takes spike rates binned at roughly 50 milliseconds from all 288 channels and maps them to a continuous two- or three-dimensional velocity vector. That vector drives the avatar in the VR environment at the same rate a human hand would drive a joystick. The model was retrained at the start of each session to compensate for signal drift, and the paper notes that after a short calibration, the brain kept adapting during use — in other words, the monkey and the decoder converge on a shared representation.
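The binning-and-readout step can be sketched in a few lines. This is a simplified stand-in under stated assumptions, not the authors' decoder: we use a plain linear readout because it is the easiest thing to show, and the function names, the toy three-channel data, and the weight values are all hypothetical. In a real session the weights would be fitted during the calibration at the start of the session.

```python
import math
from typing import List, Sequence

BIN_S = 0.05  # 50 ms bins, the approximate width quoted above


def bin_spike_counts(spike_times: Sequence[Sequence[float]],
                     duration_s: float,
                     bin_s: float = BIN_S) -> List[List[int]]:
    """Count spikes per channel in consecutive time bins.

    spike_times[c] holds the spike timestamps (seconds) of channel c.
    Returns counts[b][c]: number of spikes on channel c during bin b.
    """
    n_bins = int(round(duration_s / bin_s))
    counts = [[0] * len(spike_times) for _ in range(n_bins)]
    for c, times in enumerate(spike_times):
        for t in times:
            b = math.floor(t / bin_s)
            if 0 <= b < n_bins:  # ignore spikes outside the recording window
                counts[b][c] += 1
    return counts


def decode_velocity(bin_counts: Sequence[int],
                    weights: Sequence[Sequence[float]],
                    bias: Sequence[float],
                    bin_s: float = BIN_S) -> List[float]:
    """Linear readout (our assumption): velocity = weights @ rates + bias."""
    rates = [n / bin_s for n in bin_counts]  # spikes per second
    return [sum(w * r for w, r in zip(row, rates)) + b
            for row, b in zip(weights, bias)]


# Toy example: 3 channels, 100 ms of data, 2D velocity output.
spikes = [[0.01, 0.02, 0.06], [0.03], [0.07, 0.08]]
counts = bin_spike_counts(spikes, duration_s=0.1)
weights = [[0.01, 0.0, -0.01],   # hypothetical weights for vx
           [0.0, 0.02, 0.0]]     # hypothetical weights for vy
bias = [0.0, 0.0]
velocity = decode_velocity(counts[0], weights, bias)
```

The study's setup would have 288 entries per bin instead of three, and a two- or three-element velocity output. The shape of the computation stays the same: one vector of binned rates in, one velocity vector out, every 50 ms or so.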

This is the quiet AI story inside a neuroscience paper. Without modern machine-learning decoders, you do not get 96 percent session success on a dynamic obstacle-avoidance task. The decoder is the thing that makes M1 + PMd + PMv talk to a VR avatar at joystick latency.

Why Three Regions Beat One

Single-region iBCIs hit a ceiling quickly. M1 alone is great at moving a cursor in a straight line but degrades on task switches, because the signal that says "stop this movement, start a new one" lives upstream in the premotor areas. PMd alone is great at intent but is noisier on fine motor detail. Combining all three gave the decoder redundancy plus specialization — it could lean on M1 for movement kinematics and on PMd for task-level intent at the same time. That is why the paper's core contribution is not "we decoded motor cortex" but "we decoded motor cortex the right way for real-world navigation".
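A comparison like "PMd alone" versus "all three regions" comes down to which channels the decoder is allowed to see. The sketch below shows one way to slice a 288-channel rate vector by region. The channel ordering (M1 first, then PMd, then PMv) and the names `REGION_CHANNELS` and `region_features` are our assumptions for illustration; the paper's actual channel layout may differ.

```python
from typing import Dict, List, Sequence

# Assumed layout: three 96-channel arrays concatenated in this order.
REGION_CHANNELS: Dict[str, range] = {
    "M1": range(0, 96),
    "PMd": range(96, 192),
    "PMv": range(192, 288),
}


def region_features(rates: Sequence[float], regions: Sequence[str]) -> List[float]:
    """Keep only the channels belonging to the named regions, in channel order."""
    keep = sorted(ch for name in regions for ch in REGION_CHANNELS[name])
    return [rates[ch] for ch in keep]


# Toy input: the "rate" on each channel is just its channel index.
rates = [float(ch) for ch in range(288)]
pmd_only = region_features(rates, ["PMd"])
all_regions = region_features(rates, ["M1", "PMd", "PMv"])
```

A separate decoder would then be fitted on each subset and scored on the same task, which is how a single-region result can be set against the combined one.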

This Is Not Neuralink — And That Matters

Every news cycle about iBCIs gets filtered through Neuralink. Let us draw a sharp line, because the two projects answer different questions.

Neuralink — Commercial, Opaque, Pong

Neuralink is a private company building a surgically implanted BCI with a robotic inserter and a single high-density array in M1. Its public demos so far have focused on moving a cursor, playing Pong, and operating a laptop. The hardware is proprietary. The data is not openly released. The initial human participants have demonstrated meaningful quality-of-life improvements, but the commercial incentive compresses the science into product demos — short, compelling, and narrow.

The Science Advances Study — Academic, Open, Complex 3D

This study is the opposite profile. It is academic research, peer-reviewed in a top general-science journal, with a preprint on bioRxiv, and the decoded task is a three-dimensional, first-person, obstacle-rich VR forest — not a 2D cursor and not Pong. Three regions, not one. Three monkeys, not one. The arrays are off-the-shelf Utah arrays, not proprietary Neuralink threads. The AI decoder design is described in the paper. Anyone with a well-equipped primate lab could, in principle, replicate it.

Both approaches matter. Neuralink is solving the surgical-robot and productization problem. Academic work like this is solving the decoding-and-architecture problem. Long term, the winning clinical stack will borrow from both columns.

What This Means For Paralyzed People

The reason any of this gets funded — and the reason it clears ethical review — is the clinical endpoint. Roughly 5.4 million people in the United States alone live with some form of paralysis. Spinal-cord injury, amyotrophic lateral sclerosis, and late-stage multiple sclerosis all strand a working brain inside a body that cannot act on intention. iBCIs are the most direct technical path to giving that brain a new effector.

Path One: Intuitive Powered Wheelchairs

A wheelchair that reads your intended trajectory directly from PMd would be a step-function upgrade over sip-and-puff or chin-joystick controls. You think "go to the kitchen", your PMd encodes the trajectory, the decoder drives the chair, and the wheelchair's own sensors handle collision avoidance. The Science Advances setup maps onto this almost one-for-one — the avatar is the wheelchair, the forest is the apartment, and the obstacle-avoidance task is the real-world clutter of a home.
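The division of labor described above, where the decoder supplies intent and the chair's sensors handle collisions, is often called shared control. Here is a minimal sketch of one way to blend the two. It is not from the paper: the `shared_control` function, the linear repulsion rule, and the `safe_dist` and `max_speed` defaults are hypothetical choices made for illustration.

```python
import math
from typing import Iterable, Tuple

Vec = Tuple[float, float]


def shared_control(decoded: Vec,
                   obstacles: Iterable[Vec],
                   safe_dist: float = 1.0,
                   max_speed: float = 1.0) -> Vec:
    """Blend a decoded velocity with a simple obstacle-avoidance term.

    decoded:   (vx, vy) from the neural decoder, metres per second.
    obstacles: (x, y) obstacle positions relative to the chair, metres.
    """
    vx, vy = decoded
    for ox, oy in obstacles:
        dist = math.hypot(ox, oy)
        if 0.0 < dist < safe_dist:
            # Push away from the obstacle, harder as it gets closer.
            gain = (safe_dist - dist) / safe_dist * max_speed
            vx -= gain * ox / dist
            vy -= gain * oy / dist
    # Never exceed the chair's speed limit.
    speed = math.hypot(vx, vy)
    if speed > max_speed:
        vx, vy = vx * max_speed / speed, vy * max_speed / speed
    return vx, vy


clear_path = shared_control((0.5, 0.0), obstacles=[])
blocked = shared_control((0.5, 0.0), obstacles=[(0.5, 0.0)])
```

With no obstacles the user's command passes through unchanged. With an obstacle half a metre straight ahead, the avoidance term cancels the forward motion. Real wheelchair controllers are considerably more careful than this, but the structure is the same: the user's intent is the default and the safety layer only overrides it near hazards.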

Path Two: VR Rehabilitation And Telepresence

For patients who cannot leave a bed, a first-person VR environment driven by iBCI is already a realistic therapy vector. Studies on phantom-limb pain and on post-stroke motor rehab have shown that the brain benefits from the illusion of embodied movement. Running a paraplegic patient through a VR forest they steer with thought is not science fiction any more — the Science Advances paper proves the decoder can handle the scene.

Path Three: Telerobotics

Further out, the same decoder could drive a physical robot instead of a VR avatar. That is where this study rhymes with embodied-AI work like Gemini Robotics-ER 1.6 — if an AI can reason about physical space from a camera, and a BCI can convert human intent into trajectory, the combined system lets a human pilot a robot body from inside their own mind. That is a decade away at minimum, but the pieces are starting to interlock.

AI Plus Neural Interface: The Next Frontier Of Embodied AI

Zoom out. What you are watching is AI crossing three boundaries at the same time:

- Into the physical world — embodied-reasoning models like Gemini Robotics-ER 1.6 give robots a brain upgrade, and humanoid platforms from Boston Dynamics, Apptronik, and Agility are already shipping on them.

- Into biological signal space — AI decoders like the one in this Science Advances paper turn messy neural data into real-time control, and they are getting better every year.

- Into its own interpretability — Anthropic's recent work on 171 functional emotions inside Claude shows we can now probe the internal state of a language model with neuroscience-grade tools.

The convergence point is obvious. A paralyzed patient in 2030 could wear an iBCI, inhabit a VR therapy environment, pilot a robot body for remote work, and interact with a language-model assistant — all through the same neural stack. Each layer of the stack is being built right now. This study is a clear, credible data point on the biological signal-space layer.

The Ethical Questions We Cannot Skip

Any honest write-up of this work has to sit with the animal-research question. Three rhesus macaques had three craniotomies each and carry chronic electrode arrays. That is not trivial, and it is not something to gloss over. The counterargument, shared by many clinical neuroscientists, is that without this kind of primate work, human iBCI trials would either never clear safety review or would expose human subjects to unnecessary risk. The authors operate under institutional animal-care oversight, and the study passed peer review at Science Advances, which adds a layer of independent scrutiny. We note the tension and let readers sit with it.

The human-side ethics matter too. A device that reads intent from PMd is also a device that could, in a different configuration, read intent the subject would rather keep private. The field needs to land hard on neural-data privacy, informed-consent standards, and limits on repurposing before the first commercial paraplegic-mobility product ships.

Our Analysis: What We Actually Think

We have read a lot of iBCI papers. This one is a step up from most recent work, and we want to say why without cheerleading.

What is genuinely new. The multi-region recording is not itself new — labs have been putting Utah arrays in premotor areas for more than a decade. What is new is putting three arrays in three regions in the same animal and decoding them jointly for a complex, task-switching, first-person 3D task in real time. That is a real engineering result, not a marketing result. The finding that PMd dominates for flexible navigation is also a genuine scientific contribution that changes where future human iBCI teams will put their arrays.

What is less new than the headlines suggest. Monkeys have been moving cursors with brain signals since the early 2000s. Navigation in VR with iBCIs has been shown before, just at lower complexity. The AI decoder, while good, is not architecturally revolutionary — it is a competent modern decoder, not a new paradigm. The "monkey navigates a forest with its mind" headline is true, but it is also a compression of a decade of incremental progress finally hitting a threshold.

What we are watching for next. First, long-term array stability — Utah arrays degrade over months to years, and a clinical product needs five-plus-year reliability. Second, wireless operation — the study is tethered, and a real wheelchair user cannot be. Third, first human trial data — the Science Advances authors are clearly setting up for a human translation, and the next two to three years of BrainGate, Neuralink, and academic consortium announcements will show whether PMd really does transfer. Fourth, integration with embodied-AI models on the output side — pairing this decoder with something like Gemini Robotics-ER 1.6 could make assistive robotics genuinely practical.

Our researched score: 9.2 out of 10. This is a strong, credible, peer-reviewed result that moves the field forward and points cleanly at clinical translation. The deductions are for the long-term stability questions still to answer, for the inherent limitation of any three-monkey sample size, and for the remaining gap between "96 percent session success in a trained lab macaque" and "safe daily use for a human outpatient".

What Comes Next

The honest answer is that the authors will spend the next year defending the paper, extending it, and most likely starting conversations with human iBCI consortia. The BrainGate clinical trial network already has the regulatory infrastructure for adding PMd and PMv arrays to their protocol. A combined M1-PMd-PMv human trial would be the logical follow-on, probably with a first tethered VR navigation task and then a powered-wheelchair task. The broader industry will also absorb the result — expect Neuralink, Synchron, Precision Neuroscience, and Paradromics to recalibrate array-placement strategies given the PMd finding.

On the consumer-facing side, nothing changes this quarter. No product will ship from this paper in 2026. But the research-to-clinic pipeline for iBCIs is shorter than most people realize: the first human BrainGate cursor control was reported in 2006, and human motor-cortex iBCIs now drive robotic arms and text-entry systems daily. An M1-PMd-PMv human demonstration in a first-person VR navigation task is probably a three-to-five-year horizon, not a decade. That is the quiet big deal of this study: it makes that horizon concrete.

Frequently Asked Questions

What did the three macaques actually do?

Three rhesus macaques, each implanted with three 96-channel Utah arrays in the primary motor cortex (M1), dorsal premotor cortex (PMd), and ventral premotor cortex (PMv), navigated a first-person stereoscopic VR forest and controlled a disembodied sphere task using neural activity alone. An AI decoder turned 288 channels of spike-rate data into continuous velocity commands in real time. Individual session success rates reached 96 percent.

When was the study published?

The study, titled "Intracortical brain-computer interface for navigation in virtual reality in macaque monkeys", was published in Science Advances in April 2026 with DOI 10.1126/sciadv.adw3876. A preprint had previously appeared on bioRxiv in May 2025 under the same title.

Why are M1, PMd, and PMv the three regions being recorded?

M1 (primary motor cortex) is the classical movement-execution region and encodes limb velocity and force. PMd (dorsal premotor cortex) encodes movement plans and task-level intent, often firing before M1 does. PMv (ventral premotor cortex) encodes visuomotor integration, especially hand and grasp control. Recording all three gives the AI decoder movement kinematics, task intent, and visuomotor context at the same time. The Science Advances team reports that PMd alone gave the best real-time flexible navigation and task-switching control without retraining.

Is this different from Neuralink?

Yes, fundamentally different. Neuralink is a commercial company using a single proprietary high-density array in M1 for 2D cursor control and Pong-style demos, with private hardware and limited public data. This Science Advances study is academic research, peer-reviewed, using three off-the-shelf Utah arrays across M1, PMd, and PMv for 3D first-person VR navigation in a complex obstacle-rich environment. The study design is described in the paper and could in principle be replicated by any well-equipped primate lab. Both approaches matter — Neuralink is solving productization and surgical robotics, academic work is solving decoding architecture.

What role does AI play in the decoding?

The AI decoder takes spike rates binned at roughly 50 milliseconds from all 288 channels and maps them to a continuous two- or three-dimensional velocity vector in real time. Without modern machine-learning decoders, the non-stationary, multi-region neural signal could not be converted to joystick-grade velocity commands at the rates required for smooth first-person VR navigation. The decoder was retrained at the start of each session to compensate for signal drift, and the paper notes that the brain kept adapting during use. In other words, the monkey and the decoder converge on a shared representation.

Could this technology help paralyzed people?

That is the clinical motivation behind the work. Roughly 5.4 million people in the United States live with some form of paralysis. The same iBCI loop — arrays in M1, PMd, PMv feeding an AI decoder — could drive a powered wheelchair from intended trajectory, run VR rehabilitation environments for bedridden patients, or pilot telerobotic systems. The Science Advances work is a credible technical bridge to the first category, because the avatar navigation task maps cleanly onto wheelchair trajectory control.

When will humans be using this?

No product will ship from this paper in 2026. The BrainGate clinical trial network already has regulatory infrastructure for adding PMd and PMv arrays to human protocols, so a combined M1-PMd-PMv human trial is the most likely near-term follow-on. For historical context, the first human BrainGate M1 cursor control was reported in 2006, and human motor-cortex iBCIs now routinely drive robotic arms and text-entry systems. A human M1-PMd-PMv demonstration in a first-person VR navigation task is probably a three-to-five-year horizon, not a decade.

Why did PMd perform better than M1 for navigation?

PMd (dorsal premotor cortex) encodes the plan of a movement — what you intend to do and where you intend to go — more than the execution itself. For a navigation task with target switches and obstacle avoidance, that task-level intent signal is more useful than M1's tight correlation with raw limb kinematics. The Science Advances authors specifically report that PMd gave the best performance on real-time flexible navigation without retraining. That finding is likely to change where future human iBCI teams place their arrays.

Is this the first time monkeys controlled VR with their brains?

No. Monkeys have been moving cursors with motor-cortex signals since the early 2000s, and wireless cortical BMIs for whole-body navigation in primates were shown by Nicolelis and colleagues more than a decade ago. What is genuinely new in this Science Advances paper is the combination: three 96-channel arrays across three regions (M1, PMd, PMv) in the same animal, an AI decoder handling all 288 channels jointly, and a task-switching, obstacle-rich, first-person 3D VR environment with session success rates up to 96 percent.

What are the limitations of this study?

Four main ones. First, sample size — three macaques is standard for primate neuroscience but limits the statistical power and generalizability. Second, array longevity — Utah arrays degrade over months to years, and a clinical product needs five-plus-year reliability. Third, the setup is tethered, and a real-world wheelchair user cannot be. Fourth, the gap between "trained lab macaque achieves 96 percent in a controlled VR forest" and "safe daily use for a human outpatient" is substantial. None of these undermine the core finding; all of them define the roadmap for translation.

Could this be combined with embodied AI like Gemini Robotics-ER 1.6?

In principle, yes, and that is one of the more interesting frontiers. An iBCI decoder converts human intent into trajectory and task plan. An embodied-reasoning AI model like Gemini Robotics-ER 1.6 converts a task plan into physical-world action on a robot body. Pairing the two gives you a paralyzed human piloting a real robot from inside their own mind. The pieces exist today at research scale. A productized version is a decade-out research target, but the interlocks are becoming concrete.

What are the ethical questions?

Two main threads. On the animal-research side, three rhesus macaques underwent craniotomies and carry chronically implanted electrode arrays. The counterargument from clinical neuroscience is that without primate work, human iBCI trials could not clear safety review. The study operated under institutional animal-care oversight and passed Science Advances peer review. On the human side, a device that reads intent from PMd is also a device that could read intent the subject would rather keep private. Neural-data privacy, informed-consent standards, and limits on repurposing need to be settled before the first commercial paraplegic-mobility product ships.