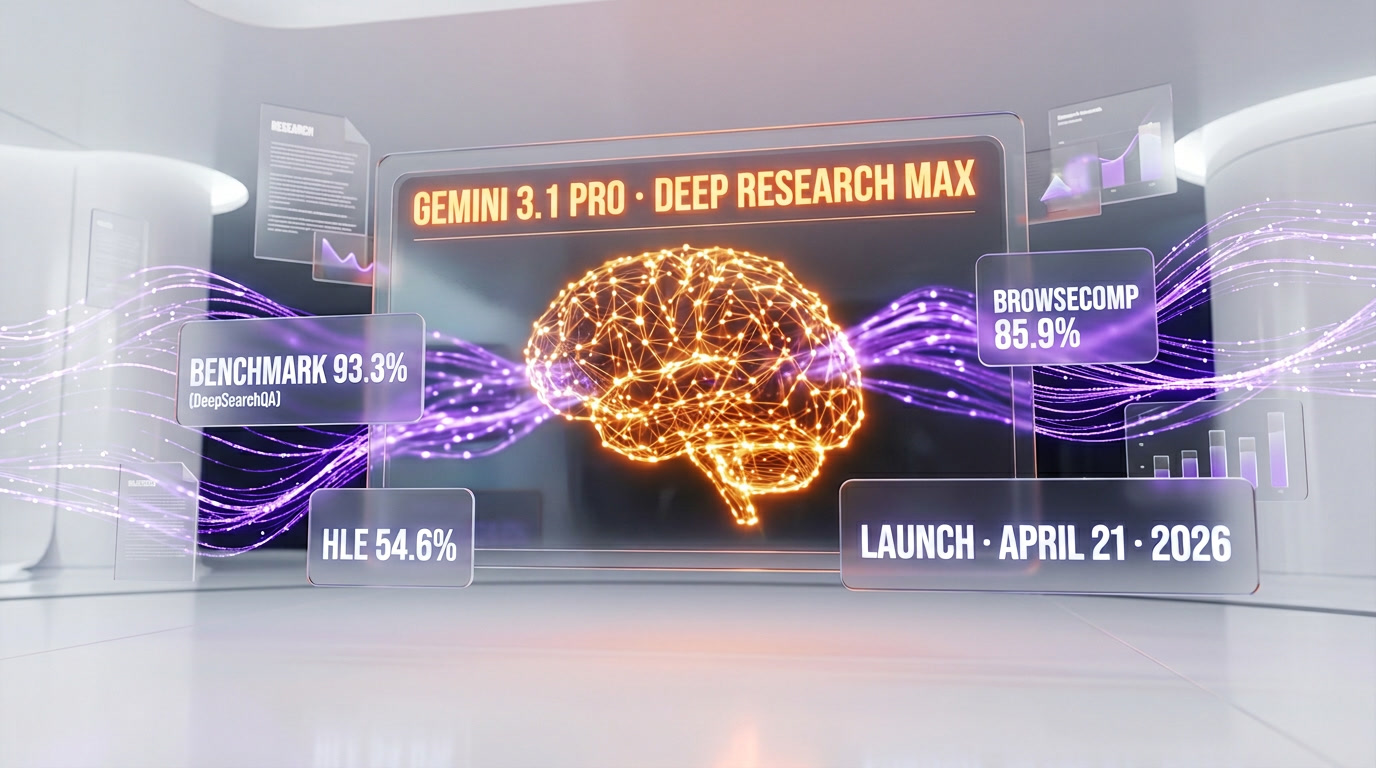

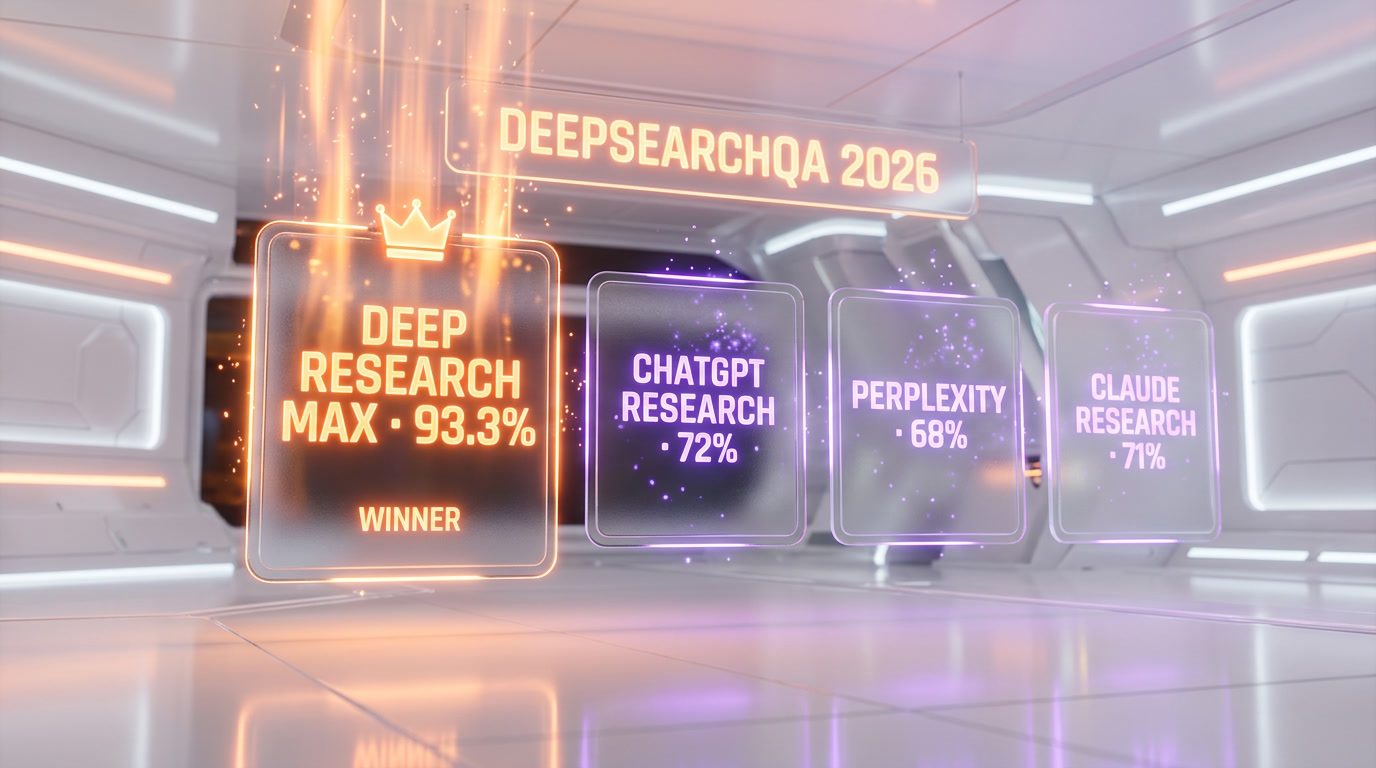

Google launched Deep Research and Deep Research Max on April 21, 2026, two autonomous research agents built on Gemini 3.1 Pro. Deep Research Max scores 93.3% on DeepSearchQA (up from 66.1% in December), 54.6% on Humanity's Last Exam (up from 46.4%), and 85.9% on BrowseComp (+25 points versus Gemini 3 Pro). Both variants are in public preview through paid Gemini API tiers, ship with native Model Context Protocol support, inline chart generation, and can blend open web data with internal company systems in a single call.

Our Methodology: What This Report Is (and Isn't)

We have not had API access to Deep Research Max yet. This report is not a hands-on review — it is a structured compilation of Google's official benchmarks, the Gemini API documentation, early developer tutorials, and our own competitive analysis against the other agentic research products we already use (ChatGPT Deep Research, Perplexity Deep Research, Anthropic Claude with tool use).

Every benchmark figure in this article comes from either Google's official April 21, 2026 announcement or third-party coverage that cites Google's numbers. When we say "93.3% on DeepSearchQA," we mean Google says so — we have not independently replicated that benchmark. When we compare latency, cost, or workflow patterns, we draw on months of production use of competing agents at ThePlanetTools and clearly label those claims as "based on our testing of competitor X."

This matters because the autonomous research agent space is now noisy enough that operator-style reporting is the differentiator. Press releases tell you the score. This piece tells you what that score means for the product you actually build on top of it.

What Happened on April 21, 2026

Google shipped the largest upgrade to its autonomous research stack since the original Deep Research debuted in December 2025 through the Gemini Interactions API. Two variants are now live in public preview on paid tiers of the Gemini API: Deep Research, optimized for speed and reduced cost on interactive surfaces, and Deep Research Max, which uses extended test-time compute to iterate through plan → search → reason → refine cycles for as long as the task needs.

Both run on Gemini 3.1 Pro. Both can read public web results, accept PDFs, CSVs, images, audio, and video as inputs, and — for the first time in a Google research agent — reach into internal company systems through Model Context Protocol (MCP) servers. Google specifically called out planned MCP integrations with FactSet and PitchBook for financial research workflows and flagged healthcare research and financial analysis as launch sectors.

Outputs can be rendered with inline charts and infographics generated either as HTML or through Google's Nano Banana image model. The agent streams its intermediate reasoning as it works, and users can review and refine the research plan before execution — a pattern that mirrors what OpenAI's Deep Research popularized in 2025.

The Benchmark Story: 93.3%, 54.6%, 85.9%

The headline numbers are the reason this launch matters more than an incremental update. Here is what Google published:

| Benchmark | Deep Research Max (Gemini 3.1 Pro) | Previous (Gemini 3 Pro, Dec 2025) | Delta |

|---|---|---|---|

| DeepSearchQA | 93.3% | 66.1% | +27.2 points |

| Humanity's Last Exam (HLE) | 54.6% | 46.4% | +8.2 points |

| BrowseComp (OpenAI) | 85.9% | ~60% | +25 points |

Three things are notable. First, 27 points in four months on DeepSearchQA is unusual — even in a market moving as fast as 2026 AI, that kind of delta on a search-and-retrieval benchmark usually comes from a combination of a stronger base model plus a materially different agent scaffold, not just more compute. Second, BrowseComp is OpenAI's benchmark, which makes the 85.9% score deliberately pointed: Google is scoring on the competition's home turf. Third, HLE at 54.6% is the number to watch — Humanity's Last Exam is designed to be unsaturatable by definition, and a research agent (not a base model) crossing 54% is the kind of figure that shows up in enterprise procurement decks.

The caveat: Google has not yet published the full benchmark methodology for Deep Research Max specifically — how many retries, which tools were enabled, how the plan-refine loop was scored. That disclosure usually lands in a follow-up technical report. Until then, read these numbers as "best configuration, Google-run" rather than "what you'll see out of the box on your first API call."

Deep Research vs Deep Research Max: Which One to Use

The split between the two variants is the most important product decision Google made in this launch, and it's one OpenAI didn't make with ChatGPT Deep Research. You're not picking based on quality — both ship on Gemini 3.1 Pro — you're picking based on workflow shape.

Deep Research — The Interactive Tier

Reduced latency, reduced cost per query, designed to sit behind a chat UI or an interactive research tab where a human is waiting for an answer. Think: a sales engineer researching a prospect mid-call, a journalist fact-checking a draft, a customer support agent pulling product comparisons. Response time matters more than exhaustiveness.

Deep Research Max — The Async Tier

Extended test-time compute. Iterative plan → search → reason → refine. Designed for workloads where the user submits the task and checks back in minutes, hours, or overnight. Google's own framing: "a nightly cron job that produces exhaustive due diligence reports by morning." Use cases: M&A diligence, competitive landscape analyses for quarterly reports, literature reviews in healthcare, investment memos built from 50+ sources.

How to Decide

Rule of thumb from how the market has used these patterns so far: if the user is on the page waiting, use Deep Research. If the output goes into a shared doc, a Slack channel, or an email digest — and no human is blocked on it — use Deep Research Max. The cost differential between the two on a per-task basis has not been published by Google, but the pattern in competing products (OpenAI Deep Research, Anthropic agentic workflows) is that comprehensive-tier calls run five to ten times the price of interactive-tier calls.

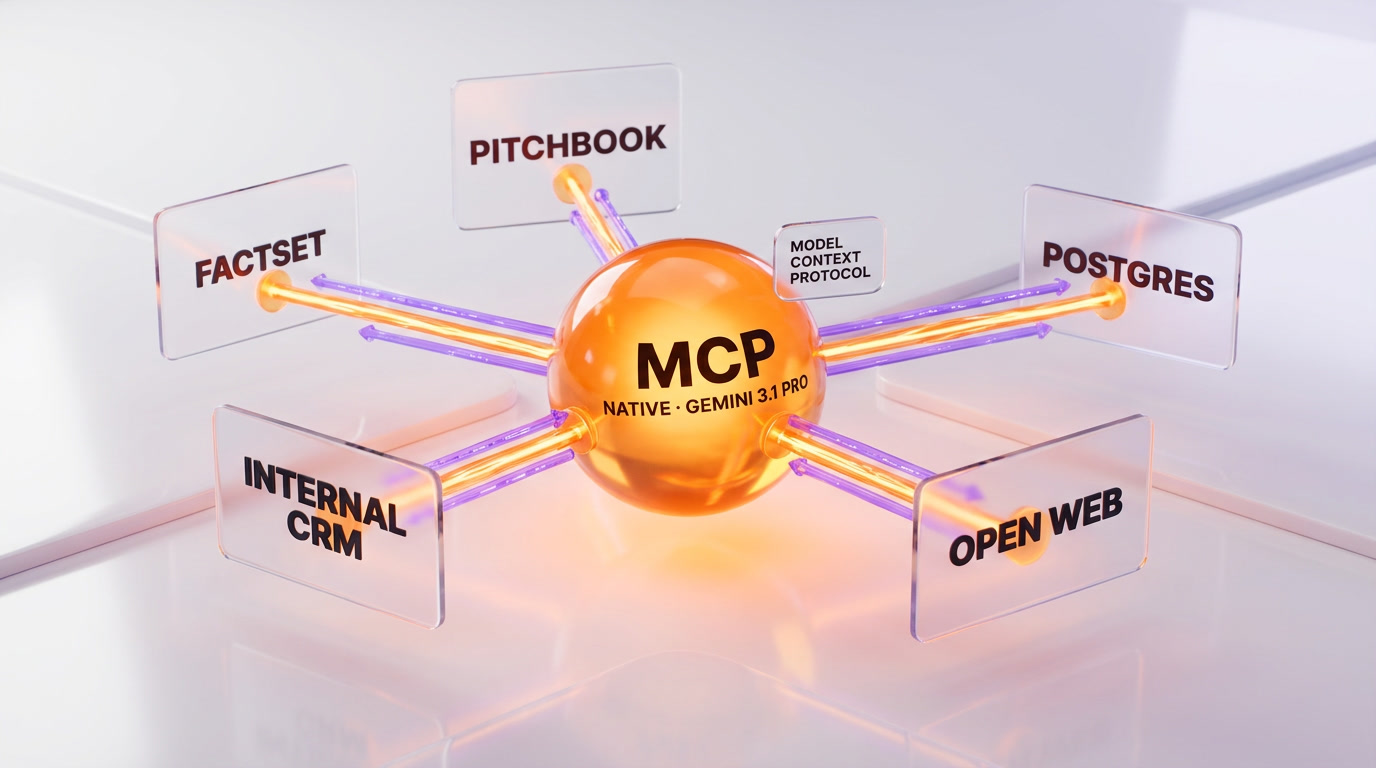

Native MCP Support: Why This Is the Real Headline

The benchmarks will make the news cycle, but the feature that will matter in twelve months is native Model Context Protocol support. This is the first time a first-party research agent from Google can, through a single API call, fuse the open web with a company's proprietary internal data without an intermediate orchestration layer.

MCP is the standard Anthropic open-sourced in late 2024 and that Claude Code helped popularize across the developer ecosystem. It lets any data source — a Postgres database, an S3 bucket, a Jira instance, a Salesforce CRM, a FactSet feed — expose itself as a toolset that a model can call. By making Deep Research a first-class MCP client, Google is doing two things at once:

- Enterprise lock-in play: once a company has plumbed its internal systems through an MCP server, switching research agents becomes trivial. Google is betting that MCP's portability actually pulls usage toward whichever agent scores highest on the benchmarks, which right now is theirs.

- Commoditizing the integration layer: frameworks that stitch together web search + private data for research workflows (LangChain research chains, custom RAG stacks, a dozen YC agent startups) now compete directly with a native Gemini API primitive.

The announced launch integrations — FactSet and PitchBook — are financial data providers, not accidents. Google knows the highest willingness to pay for autonomous research sits in finance, law, and healthcare. FactSet and PitchBook access through MCP is a direct shot at the analyst workflow that currently lives in Bloomberg Terminal sessions and hand-assembled Excel models.

How Deep Research Max Stacks Up Against the Competition

The autonomous research agent category did not exist two years ago. In April 2026, it is a four-player race. Here is how the big names compare based on publicly announced benchmarks and the capability patterns we have observed in our own production use of each competitor:

| Agent | Base Model | Key Benchmark | MCP Native | Internal Data Access | Async Variant |

|---|---|---|---|---|---|

| Deep Research Max (Google) | Gemini 3.1 Pro | 93.3% DeepSearchQA, 54.6% HLE, 85.9% BrowseComp | Yes | Yes (MCP + uploads) | Yes (Max tier) |

| ChatGPT Deep Research (OpenAI) | GPT-5 class | Original BrowseComp authors | Partial (connectors) | Limited (ChatGPT connectors) | Implicit (single tier) |

| Perplexity Deep Research | Mixed (incl. Sonar) | Not published on HLE/DeepSearchQA | No (planned) | Limited (Enterprise tier) | No (single tier) |

| Anthropic Claude (research via MCP) | Claude Opus / Sonnet class | Strong on agentic benchmarks | Yes (originator) | Yes (via MCP servers) | Custom (dev-built) |

The honest read across the four:

- ChatGPT Deep Research defined the consumer-facing UX for this product category in 2025 and still has the widest mindshare. OpenAI hasn't published head-to-head benchmark numbers against Gemini 3.1 Pro yet, and based on how rapidly both labs have historically responded to each other, a counter-launch is likely within weeks.

- Perplexity Deep Research is the fastest-shipping of the four on features that matter to search users — citation UX, source transparency, pro-search routing. It trails on structured MCP integration, which matters less for consumer research and much more for enterprise.

- Anthropic Claude with MCP is where the most sophisticated developer workflows actually live today, because Anthropic invented MCP and Claude's tool use is extremely well-behaved. It's not a single "Deep Research" product — it's a primitive you compose. That's a strength for engineering teams and a weakness for non-technical users.

- Deep Research Max is the first agent from a frontier lab that ships with (a) the highest published research benchmarks, (b) native MCP support, (c) a formal two-tier speed/comprehensiveness split, all at launch. That combination is what makes this more than a spec bump.

API Access, Pricing, and the Public Preview Window

Public preview means two things in Google parlance: available to all developers on paid Gemini API tiers, but subject to change, with full production SLAs not yet guaranteed. Google's announcement confirmed the rollout sequence:

- Now: Gemini API public preview, paid tiers only (no free-tier access at launch).

- Coming soon: Google Cloud Vertex AI for startups and enterprises, with the usual enterprise features — VPC service controls, customer-managed encryption keys, residency options.

- Roadmap: deeper MCP partner integrations (FactSet, PitchBook confirmed; more announced partners expected in the next Google Cloud Next event).

Specific per-token or per-task pricing for the two variants has not been published. Google's language — "a nightly cron job" for Deep Research Max — strongly implies async pricing with per-task billing rather than pure per-token, which is a departure from standard Gemini API pricing and closer to how OpenAI prices its Deep Research product. We'll update this piece when Google publishes the rate card.

If you're building on top of this today, the BuildFastWithAI tutorial on the Gemini Deep Research API is the cleanest walkthrough we've seen so far for wiring up the public preview endpoints in Python.

Use Cases That Actually Change Because of This Launch

The hype cycle around autonomous research agents has been running for eighteen months. Most of the use cases that show up in launch posts are aspirational. Here are the ones that genuinely shift because of what shipped on April 21:

1. Financial Due Diligence That Runs Overnight

With FactSet and PitchBook exposed through MCP, a PE associate can kick off a Deep Research Max task at 7 PM — "build a diligence memo on target company X, including comparables, transaction history, and sector trends over five years" — and have a structured, cited, chart-rendered report by 7 AM. This workflow currently takes a junior analyst one to three days of manual work. The agent does not replace the analyst, but it compresses the first draft by an order of magnitude.

2. Literature Reviews in Healthcare Research

Google called out healthcare explicitly. A clinical research team can point Deep Research Max at PubMed plus internal trial databases through MCP, ask for a synthesis of evidence on a specific endpoint across the last decade, and receive a fully cited document with inline charts. The 54.6% HLE score matters here because medical reasoning on edge cases is exactly what HLE tests.

3. Competitive Intelligence for Product Teams

Mid-size SaaS teams that don't have an analyst relations function can run weekly "what shipped in our category" reports against public data sources, pricing pages, and their internal CRM data through MCP. At the Deep Research (fast) tier, this becomes a Slack bot. At the Deep Research Max tier, it becomes the Monday morning digest that product leadership reads.

4. Internal Knowledge Retrieval at Enterprise Scale

The MCP-native model flips the "search our internal docs" problem. Instead of building a custom RAG stack on top of Elasticsearch and hoping retrieval quality holds, enterprises expose their systems as MCP servers and let Gemini 3.1 Pro handle the research loop. This is the workflow where the 93.3% DeepSearchQA score becomes tangible — it's the benchmark most correlated with "find the right fact across a large, messy corpus."

The Part We're Genuinely Skeptical About

Three concerns worth raising before anyone builds a production workflow on Deep Research Max:

- Benchmark-to-production gap: a 93.3% DeepSearchQA score is a lab result. Production queries are longer, messier, and more ambiguous than benchmark questions. We expect real-world accuracy on enterprise corpora to land 15–25 points below the benchmark, which is still excellent but not magic.

- MCP server reliability: the agent is only as good as the MCP servers it queries. If your FactSet MCP bridge times out, Deep Research Max will either hallucinate around the gap or produce a partial report — either way, worse than your analyst would have done. MCP server SLAs are going to become a procurement line item.

- Public preview pricing surprise: Google's "paid tier" framing with no public rate card is a yellow flag. The pattern from the last two years is that async frontier-model agents quietly price in the tens of dollars per long task. Build cost controls before you build the feature.

Our Take: This Is the New Floor, Not the Ceiling

Deep Research Max resets the expectation for what a first-party research agent should ship with at launch. A two-tier speed/comprehensiveness split, native MCP, inline charts, streaming reasoning, and public benchmark numbers that beat the previous generation by 27 points is the new baseline. Anyone launching an autonomous research product after April 2026 now has to clear that bar or explain why they don't need to.

The bigger move is strategic. By embedding MCP natively and partnering with FactSet and PitchBook at launch, Google is positioning Gemini 3.1 Pro as the research primitive that sits between an enterprise's internal systems and the analyst who reads the output. That's a dominant position if they hold it. OpenAI will respond. Anthropic will keep investing in the MCP ecosystem it started. Perplexity will keep shipping consumer polish.

For operators, the call to action is smaller and more practical: pick one Deep Research Max use case that currently eats more than four hours a week of your team's time, prototype it on the public preview, and measure the delta. The tool is new enough that the first teams to wire it into their daily workflow will pull ahead meaningfully.

This launch is also the best evidence yet of why Google's broader Gemini 3 offensive in 2026 deserves to be taken seriously. Between Gemini Robotics-ER 1.6 for embodied reasoning, the developer tooling in Google AI Studio, and now Deep Research Max as the enterprise-facing research primitive, Google is executing a stack play that competitors will have trouble matching in parallel.

What's Next

Three things we're watching in the weeks after launch:

- OpenAI's response: ChatGPT Deep Research is due for a version bump. A counter-launch with GPT-5-class upgrades and either native MCP or deeper connector support is the obvious move. Timeline: four to six weeks.

- Google's public rate card: per-task pricing for Deep Research Max will determine which companies actually deploy it at scale versus those who run small pilots and wait. Watch for a Google Cloud Next side announcement.

- Third-party benchmark replications: independent research groups will attempt to replicate the 93.3% DeepSearchQA and 54.6% HLE numbers. If those hold within three to five points, the launch numbers get locked in as reference. If they don't, Google will face the same skepticism that follows every self-reported benchmark.

We'll update this piece as each of those lands.

Frequently Asked Questions

What is Google Deep Research Max?

Deep Research Max is Google's autonomous research agent built on Gemini 3.1 Pro, launched on April 21, 2026. It uses extended test-time compute to iteratively plan, search, reason, and refine across the public web plus internal company systems exposed through Model Context Protocol (MCP). It scores 93.3% on DeepSearchQA, 54.6% on Humanity's Last Exam, and 85.9% on BrowseComp, and is designed for asynchronous workflows like overnight due diligence reports.

How is Deep Research Max different from Deep Research?

Both run on Gemini 3.1 Pro. Deep Research is optimized for speed and lower cost on interactive surfaces where a user is waiting for an answer. Deep Research Max invests more time and hardware per task for comprehensive, multi-source synthesis — best for asynchronous workflows like nightly reports, M&A diligence, or literature reviews. Use Deep Research when the user is on the page; use Deep Research Max when the output goes into a shared doc or digest.

What benchmarks did Deep Research Max actually hit?

Google published three headline scores: 93.3% on DeepSearchQA (up from 66.1% in December 2025, a 27.2-point jump), 54.6% on Humanity's Last Exam (up from 46.4%, +8.2 points), and 85.9% on OpenAI's BrowseComp benchmark (+25 points versus Gemini 3 Pro). Full methodology per benchmark has not been published yet — read these as best-configuration, Google-run numbers rather than what you'll see on a cold API call.

Is Deep Research Max better than ChatGPT Deep Research?

On published benchmarks as of April 2026, yes — Deep Research Max's 85.9% on OpenAI's own BrowseComp benchmark is the clearest apples-to-apples indicator. But ChatGPT Deep Research has wider consumer mindshare and a more polished UX. For pure research quality and enterprise workflows with MCP, Deep Research Max leads today. OpenAI is expected to ship a counter-launch within four to six weeks.

Does Deep Research Max support MCP (Model Context Protocol)?

Yes — natively. Deep Research and Deep Research Max are the first first-party Google research agents with native MCP client support. A single API call can fuse public web results with internal company data exposed through MCP servers. Google announced FactSet and PitchBook as launch MCP integrations, with more partners expected. This is the feature that matters most for enterprise use cases.

How much does Deep Research Max cost?

Google has not published a per-token or per-task rate card for either variant as of the April 21, 2026 launch. Both are in public preview on paid tiers of the Gemini API. The framing used by Google — "a nightly cron job for Deep Research Max" — suggests per-task pricing rather than pure per-token, which is standard for async research agents. Expect async-tier calls to run several multiples of interactive-tier calls based on how the rest of the market prices this workflow.

How do I access Deep Research Max right now?

Through the Gemini API on paid tiers, in public preview. Google Cloud Vertex AI availability for startups and enterprises is on the roadmap but not live at launch. If you want the fastest path from zero to a working prototype, the BuildFastWithAI Python tutorial for the Gemini Deep Research API walks through the public preview endpoints step by step.

What can Deep Research Max do that Perplexity Deep Research cannot?

Three things. First, native MCP client support — Perplexity does not yet ship first-class MCP. Second, a formal two-tier speed/comprehensiveness split (Deep Research vs Deep Research Max) — Perplexity Deep Research is single-tier. Third, the 93.3% DeepSearchQA and 54.6% HLE benchmarks specifically — Perplexity has not published comparable figures on these evaluations. Perplexity still leads on consumer-facing citation UX and pro-search routing.

Can Deep Research Max read my company's internal data?

Yes, through Model Context Protocol servers that you or your vendors deploy. An MCP server exposes a data source (a database, a Jira instance, a Salesforce CRM, a document store, a financial data provider like FactSet) as a set of tools Gemini 3.1 Pro can call. Deep Research Max then fuses those internal results with open web data in a single research run. File uploads (PDF, CSV, images, audio, video) are also supported as direct inputs.

How does Deep Research Max compare to Claude with MCP?

Anthropic Claude with MCP is where the most flexible developer workflows live today — MCP is Anthropic's standard, and Claude's tool use is extremely well-behaved. But it's a primitive you compose, not a packaged research product. Deep Research Max ships as a finished two-tier product with published benchmarks, native inline charting, and Google Cloud enterprise integrations on the roadmap. For engineering teams, Claude via MCP is more composable. For finance, legal, and healthcare workflows that need an out-of-the-box research agent, Deep Research Max is faster to deploy.

What are Deep Research Max's biggest limitations right now?

Three issues we're flagging. First, the benchmark-to-production gap — expect real enterprise accuracy 15 to 25 points below the 93.3% lab score on messy real-world queries. Second, MCP server reliability: the agent is only as good as the MCP servers it queries, and partial or timed-out responses will degrade output quality. Third, pricing opacity — paid public preview with no public rate card means cost surprises are possible. Build usage caps before production deployment.

When will Deep Research Max leave public preview?

Google has not committed to a general availability date. The stated rollout sequence is: Gemini API public preview first (live now), Google Cloud Vertex AI for startups and enterprises coming soon, and deeper MCP partner integrations on the roadmap. Based on Google's historical pattern from preview to GA on major Gemini features, a six-to-nine-month preview window is reasonable to expect.