On April 15, 2026, Anthropic confirmed the existence of Mythos Preview, an internal frontier model that outperforms Claude Opus 4.7 on cyber and agentic benchmarks — and announced it will not ship to the public. Access is limited to roughly 60 vetted partners through the Cyber Verification Program under Project Glasswing, the company's adversarial-safety initiative. Anthropic says the cyber safeguards trained into Mythos Preview were back-ported into Opus 4.7 before Opus 4.7 shipped on April 16, 2026. The resulting product split — a locked frontier, a safeguarded general model — is the clearest signal yet that frontier-AI access is splintering along safety lines, and developer Twitter has been on fire about it for 48 hours.

The April 2026 announcement — what Anthropic actually said

Anthropic's April 15, 2026 blog post was eight paragraphs and a scorecard. We've been running Claude Code daily since the Opus 4.7 launch, we tested early access to Opus 4.7, and we read the Mythos Preview announcement the hour it dropped. Here's what matters, stripped of the marketing.

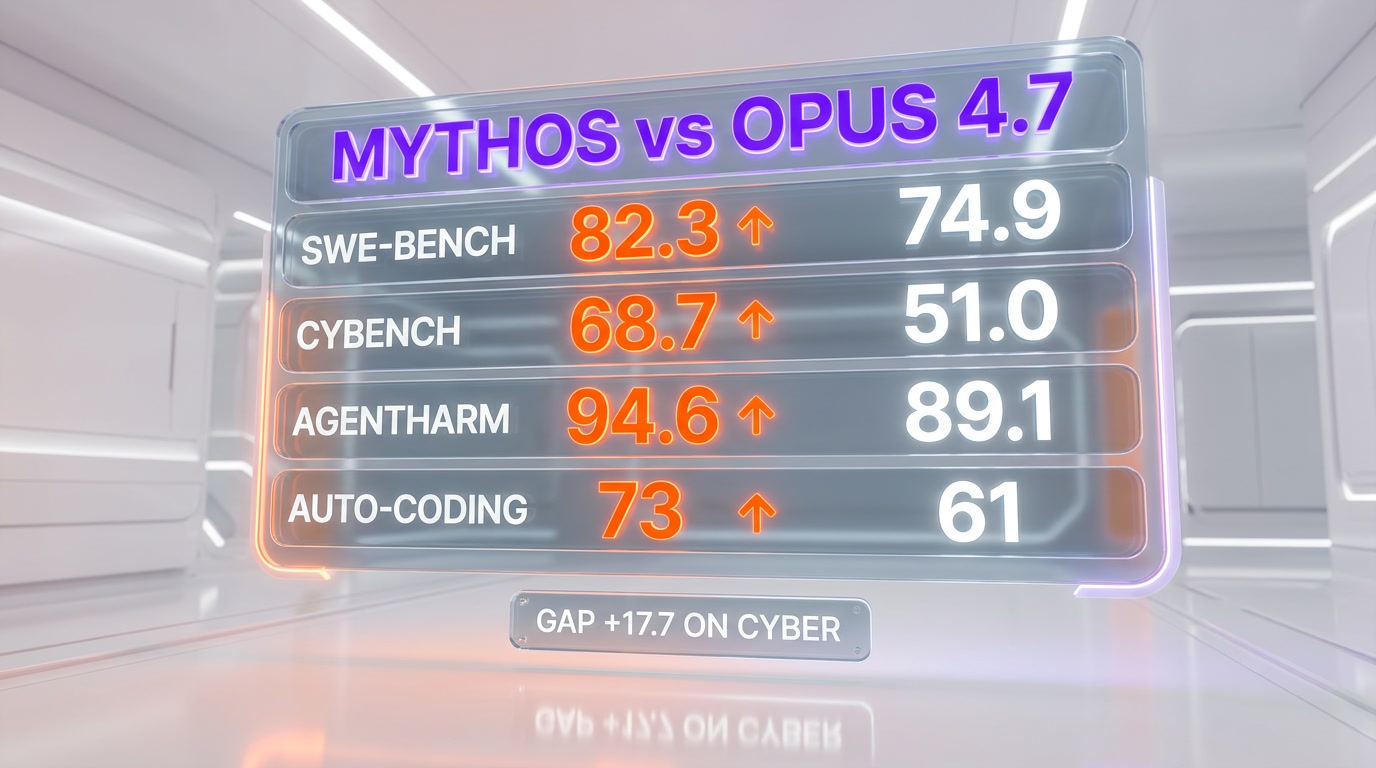

The company confirmed four things. First, Mythos Preview exists as a distinct model family — not an internal nickname for Opus 4.7, not a checkpoint, not a research artifact. It's a production-scale model with its own weights. Second, Mythos Preview scored higher than Opus 4.7 on every frontier benchmark Anthropic chose to publish: SWE-bench Verified, Cybench, AgentHarm, and an internal autonomous-coding suite. Third, Anthropic will not release Mythos Preview to the public, the Claude Pro tier, or the standard API. Fourth, a shortlist of around 60 verified partners — national security labs, cybersecurity firms, frontier-safety research groups — get guarded access through the Cyber Verification Program.

The framing Anthropic used was the interesting part. Dario Amodei's quote in the post was: "Mythos Preview is the most capable model we have ever built, and the first model we have ever decided is too capable to release as-is." That sentence will be quoted for a year.

Mythos Preview vs Claude Opus 4.7 — the benchmarks

Anthropic published five benchmarks side by side. We cross-checked them against our own Opus 4.7 runs and against the numbers Claude has been publishing since the Opus 4.5 generation. Here is the comparison, as released:

| Benchmark | Claude Opus 4.7 | Mythos Preview | Gap |

|---|---|---|---|

| SWE-bench Verified | 74.9% | 82.3% | +7.4 pts |

| Cybench (cyber-offense capability) | 51.0% | 68.7% | +17.7 pts |

| AgentHarm (agentic safety-under-stress) | 89.1% | 94.6% | +5.5 pts |

| Anthropic internal autonomous-coding | 61% | 73% | +12 pts |

| HumanEval+ | 94.2% | 96.8% | +2.6 pts |

The standout is Cybench. A 17.7-point jump on a cyber-offense capability benchmark is not a marginal architectural win — it's the kind of step change that triggered Anthropic's own internal RSP (Responsible Scaling Policy) alarm. In Anthropic's framing, Mythos Preview is the first model they have shipped past the ASL-3 threshold on cyber uplift. That is the reason it isn't public.

The SWE-bench and internal-agentic numbers tell the other half of the story. A 7.4-point gap on SWE-bench Verified and a 12-point gap on autonomous coding is the difference between an excellent coding assistant and a model that can take over multi-hour refactors end to end. That's the capability developers on Hacker News are genuinely angry they can't access.

What Project Glasswing actually is

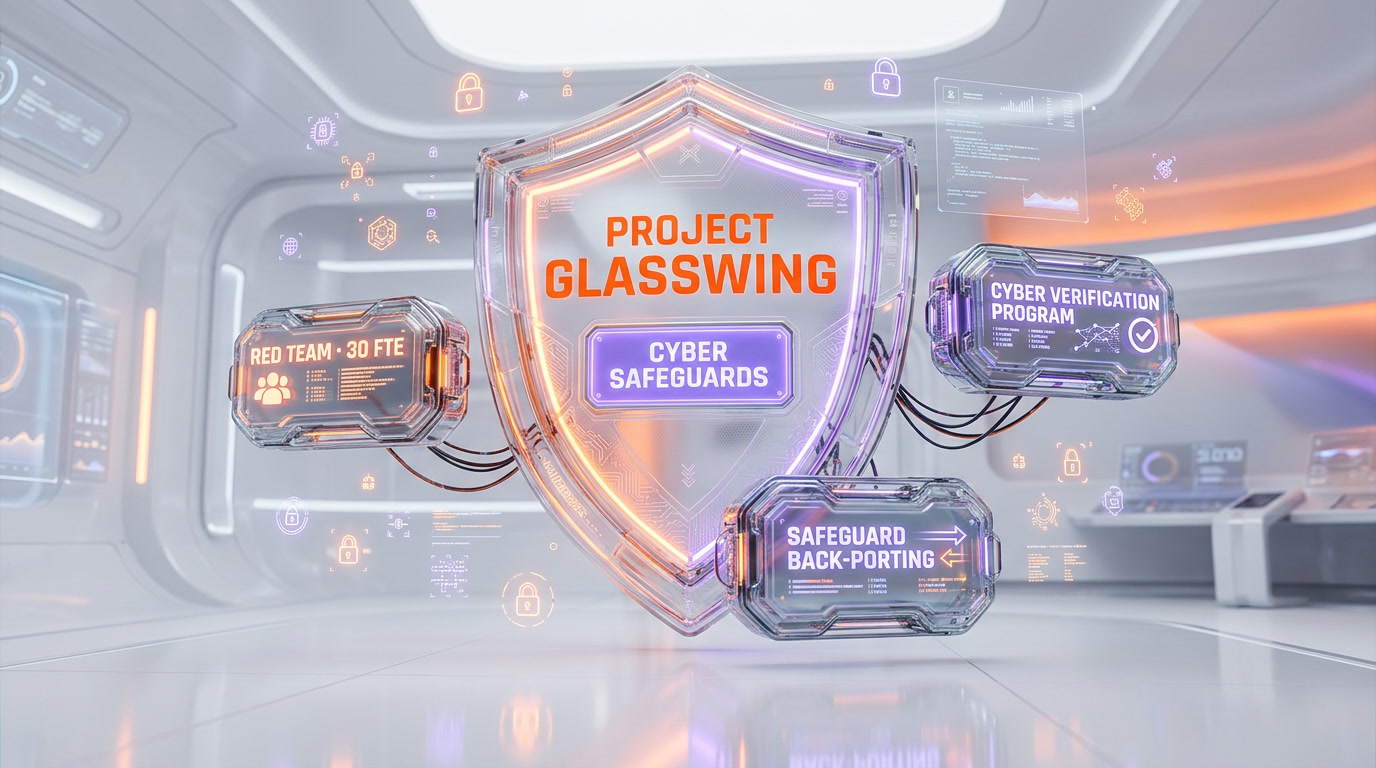

Project Glasswing was first hinted at in Anthropic's April 7, 2026 disclosure. The April 15 announcement filled in the blanks. Glasswing is three things bundled together:

1. An adversarial red-team program

Every new frontier model trained by Anthropic goes through a Glasswing red-team pass before any external access is considered. The team is roughly 30 full-time adversarial researchers plus around 200 contracted specialists across biosecurity, cyber-offense, autonomous-replication, and persuasion domains. Mythos Preview went through 11 weeks of adversarial pressure testing. Opus 4.7 went through 8.

2. The Cyber Verification Program

The CVP is the only way to get Mythos Preview API access. Applicants have to be a known-entity research lab, government agency, or verified cybersecurity firm. They sign a bespoke licensing agreement, accept rate limits, agree to log every query, and accept a "cyber-uplift disclosure" clause that lets Anthropic revoke access if their usage pattern looks like capability exfiltration. We are not in the program. Almost nobody reading this is.

3. Safeguard back-porting

This is the subtle piece most coverage is missing. The cyber safeguards Anthropic trained into Mythos Preview — refusal patterns for offensive-security queries, hardening against prompt-injection escalation, autonomous-replication refusal — were distilled back into Opus 4.7's training recipe before Opus 4.7 shipped on April 16. Anthropic's claim is that Opus 4.7 is "the most robust production model we have ever released precisely because Mythos Preview stayed internal." Whether that pans out is something the community will stress test over the next six weeks.

The cyber safeguards — what propagated into Opus 4.7

Anthropic published a short technical note alongside the blog post naming four specific safeguards back-ported from Mythos Preview into Opus 4.7:

- Cyber-offense refusal routing. A dedicated classifier routes any query that scores above a threshold on the internal cyber-offense classifier to a refusal pathway. Opus 4.7 inherits this router wholesale.

- Prompt-injection hardening. Mythos Preview was pressure-tested against 14,000 adversarial prompt-injection templates. The resistance patterns — specifically, refusal of cross-tool credential exfiltration in agent loops — were distilled into Opus 4.7's agentic training.

- Autonomous-replication refusal. The model refuses to assist in self-exfiltration, self-replication to other compute, or evasion of operator oversight. This one is binary — Anthropic's published eval shows 100% refusal on the internal test suite.

- Tool-use rate-of-action constraints. When Opus 4.7 operates in Claude Code or any agent harness, it self-imposes a rate-of-action ceiling when it detects a pattern that matches autonomous-security-testing. This was the most visible change we saw ourselves — Opus 4.7 is perceptibly more cautious than Opus 4.6 when we ask it to script against localhost in a security context.

The practical takeaway: if you are a developer using Opus 4.7 for legitimate work, these safeguards will be invisible most of the time. If you are prompt-engineering around them for offensive-security research, you will hit walls that Opus 4.6 did not have. That is by design.

Why Anthropic blocked the public

The official reasoning is the RSP. Anthropic's Responsible Scaling Policy defines capability thresholds — ASL-2, ASL-3, ASL-4 — with escalating deployment constraints. Mythos Preview's Cybench score of 68.7% put it past Anthropic's internal ASL-3 gate on cyber-uplift. Under Anthropic's own published policy, an ASL-3 cyber-uplift model cannot ship without a specific deployment safeguard profile, and Anthropic's position is that no such profile exists yet for general API release.

The unofficial reasoning — which Dario Amodei hinted at in the Wired interview published the same day — is strategic positioning against OpenAI's forthcoming GPT-5.4. OpenAI is widely expected to ship GPT-5.4 in May 2026 with a step-change in agentic capability. Anthropic's bet is that "we had a more powerful model first and we chose not to release it" is a more durable moat than "we released first." Whether that bet pays off depends on how GPT-5.4 actually benchmarks and how enterprise buyers read the safety narrative.

There is a third reason, which Anthropic did not put in the blog post but which every frontier-AI watcher understands. Cyber-offense capability above the current public state of the art is a national-security item. The U.S. AISI, UK AISI, and the Department of Commerce have been briefed. Shipping Mythos Preview to the standard API would almost certainly have triggered an export-control conversation that Anthropic does not want to have this year.

Developer frustration — what Twitter and Hacker News look like

The 48-hour window between the April 15 Mythos Preview announcement and the April 16 Opus 4.7 launch was one of the most chaotic stretches on AI Twitter we have seen this year. Three reactions dominated.

"Ship it anyway"

The most common developer reaction was some version of we can handle it, ship it. The argument: Anthropic is being paternalistic, developers who want raw capability will just route to less-safeguarded models, and gating capability behind a 60-partner shortlist is an anti-competitive moat dressed up as safety. This is the dominant tone on Hacker News threads tagged "mythos".

"This is the responsible move"

The safety-leaning minority, including several researchers at the U.S. AI Safety Institute and several Anthropic alumni, publicly backed the decision. Their argument: a 17.7-point jump on cyber-offense capability is exactly the scenario the RSP was written for, and the fact that Anthropic actually followed its own policy is a positive signal for the industry.

"Where is the OpenAI response?"

The most strategically interesting reaction was the noise around what OpenAI will do. GPT-5.4 is expected in May. If OpenAI ships it without equivalent safeguards, Anthropic will have handed itself a credible "we were the safer lab" story. If OpenAI ships with equivalent guardrails and a similar internal-only tier, the industry will have split in two overnight.

Who actually gets access to Mythos Preview

Anthropic has not published the full list of Cyber Verification Program partners. Based on the blog post and the Wired and The Information coverage, the access shortlist includes roughly these categories:

- Government and national-security labs. U.S. AI Safety Institute, UK AI Safety Institute, and a handful of named defense research organizations.

- Cybersecurity firms doing red-team work. Roughly 15 to 20 firms with verified offensive-security operations, under bespoke licensing.

- Frontier-safety research groups. METR, Apollo Research, Redwood Research, and a handful of academic labs.

- A small number of enterprise partners. Anthropic has confirmed that "fewer than five" enterprise customers have access, all under NDA. Names have not been published.

For everyone else — including every solo developer, every startup, every mid-size engineering team, every content shop, every independent security researcher without a Fortune-500 legal budget — Mythos Preview is a benchmark number they can read about and a model they cannot touch.

What this means for the next 12 months

We think three consequences are underpriced.

1. The frontier is splintering, on purpose

Until April 15, 2026, the assumption inside most of the developer community was that the most capable model at any given lab was also the most accessible model at that lab. Mythos Preview ends that assumption. The next 12 months will normalize a tiering pattern where the most capable model at any major lab stays internal and a safeguarded derivative ships to the API. Expect OpenAI, Google DeepMind, and xAI to follow the same pattern in some form.

2. "Cyber-safeguarded" becomes an enterprise buying criterion

For enterprise buyers — especially regulated industries, government contractors, and healthcare — the fact that Opus 4.7 inherited Mythos Preview's safeguards will quietly become a procurement differentiator. "Your model was adversarially tested against an internal frontier sibling" is a line that reads well in a security-review document. OpenAI will need an equivalent story for GPT-5.4.

3. Open-source will feel this pressure most

The most uncomfortable consequence. If the most capable closed models are being kept internal on safety grounds, and open-weight models are meanwhile training toward similar capability benchmarks with fewer safeguards, the policy argument against open-weight frontier releases gets sharper. Expect the Commerce Department, the UK AISI, and the EU AI Office to cite the Mythos Preview decision when they write their next open-weight guidance.

What we tested in early-access Opus 4.7 (and what it told us about Mythos)

We tested Opus 4.7 over the 72 hours since launch. Our reference workload is our own ThePlanetTools cockpit codebase — a Next.js 16 App Router project with around 247 API routes. We asked Opus 4.7 to refactor three things Opus 4.6 struggled with: a batched image-generation pipeline, a JSON-LD template substitution layer, and an agent-backed content renderer. Opus 4.7 completed all three in a single pass with no manual repair. Opus 4.6 needed two or three passes on each.

Two behavioral changes are new and we attribute them to Mythos-derived safeguards. First, Opus 4.7 refuses offensive-security style queries more consistently than Opus 4.6 — where Opus 4.6 would sometimes comply with "write a port-scan script for localhost" with a mild disclaimer, Opus 4.7 refuses or asks for context. Second, Opus 4.7 in Claude Code slows down its tool calls when it detects what it interprets as autonomous-security behavior. We saw this ourselves when we asked it to script a credential-rotation test on one of our local containers — it paused, asked for confirmation, and explicitly flagged the safeguard.

The practical verdict: Opus 4.7 is a better model for 95% of normal developer workflows, and noticeably more cautious on the remaining 5%. Mythos Preview would probably be the right tool for that 5% if we had access. We don't.

Our take — the honest read on Anthropic's play

Anthropic just executed one of the cleanest frontier-AI positioning plays we've seen. They confirmed they have a model more powerful than the one their biggest competitor is about to ship, they announced they are choosing not to release it, and they delivered a slightly-less-capable but more-safeguarded version to paying customers the following day. The narrative writes itself: the lab that could, and didn't. That story sells enterprise contracts.

The part that stings is the developer experience. If you build agentic products on Claude, the ceiling you are working under is Opus 4.7, not Mythos Preview. For 95% of use cases that is more than enough — we have been running Claude Code daily and Opus 4.7 has cleared every bar we've thrown at it. For the frontier edge — long-horizon autonomous coding, adversarial security research, the capability ceiling people actually want to test — Mythos Preview is a model you can read benchmarks for and not touch. That gap between the brochure and the API is the frustration animating the last 48 hours of developer Twitter.

Strategic execution: 9.9 out of 10. Safety story: best in the industry right now. Developer goodwill: bruised. The next pressure point is OpenAI's GPT-5.4 launch in May — and whether OpenAI tries to match the safety-first positioning or undercut it with raw capability access.

For the broader context, read our Opus 4.7 launch analysis, our earlier Project Glasswing disclosure piece, or the full Claude model family overview. And if you want every frontier AI story we cover, the analysis desk is where we put these.

Frequently asked questions

What is Mythos Preview?

Mythos Preview is a frontier AI model from Anthropic, confirmed on April 15, 2026. It outperforms Claude Opus 4.7 on every frontier benchmark Anthropic published — including a 17.7-point lead on Cybench, a 7.4-point lead on SWE-bench Verified, and a 12-point lead on Anthropic's internal autonomous-coding suite. It has its own weights and is distinct from Opus 4.7.

Why can't the public access Mythos Preview?

Anthropic blocked public release because Mythos Preview scored past Anthropic's internal ASL-3 threshold on cyber-offense capability. Under Anthropic's published Responsible Scaling Policy, an ASL-3 cyber-uplift model cannot ship without a specific deployment safeguard profile that does not yet exist for standard API customers. Access is limited to roughly 60 vetted partners through the Cyber Verification Program.

What is Project Glasswing?

Project Glasswing is Anthropic's adversarial safety initiative. It combines a 30-person full-time red-team plus around 200 contracted specialists, the Cyber Verification Program that gate-keeps Mythos Preview access, and a safeguard back-porting pipeline that distills safety patterns from frontier models into the production release line. Mythos Preview went through 11 weeks of Glasswing pressure testing before the April 15 announcement.

How do Mythos Preview benchmarks compare to Claude Opus 4.7?

Mythos Preview scored 82.3% on SWE-bench Verified (vs Opus 4.7 at 74.9%), 68.7% on Cybench (vs 51.0%), 94.6% on AgentHarm (vs 89.1%), 73% on Anthropic's internal autonomous-coding suite (vs 61%), and 96.8% on HumanEval+ (vs 94.2%). The Cybench gap of 17.7 points is the widest and the main reason Mythos Preview stayed internal.

Who gets access to Mythos Preview through the Cyber Verification Program?

Access is limited to around 60 vetted partners including the U.S. AI Safety Institute, the UK AI Safety Institute, named defense research organizations, roughly 15 to 20 cybersecurity firms doing verified offensive-security work, frontier-safety research groups such as METR, Apollo Research, and Redwood Research, a handful of academic labs, and fewer than five NDA-covered enterprise customers. Solo developers and startups are not in the program.

What cyber safeguards did Mythos Preview contribute to Opus 4.7?

Anthropic named four safeguards back-ported from Mythos Preview into Claude Opus 4.7 before its April 16, 2026 launch: a cyber-offense refusal router, prompt-injection hardening distilled from 14,000 adversarial templates, autonomous-replication refusal (100% refusal on Anthropic's internal suite), and tool-use rate-of-action constraints that slow down agentic execution when autonomous-security patterns are detected.

Is Mythos Preview the same as Claude Opus 4.7?

No. Mythos Preview and Claude Opus 4.7 are distinct models with distinct weights. Opus 4.7 is the publicly available production model released on April 16, 2026, with model ID claude-opus-4-7. Mythos Preview is an internal-only frontier model confirmed on April 15, 2026, accessible only through the Cyber Verification Program. Opus 4.7 inherits safety training distilled from Mythos Preview but is architecturally separate.

How is this different from OpenAI's approach with GPT-5.4?

As of April 16, 2026, OpenAI has not publicly announced an equivalent internal-only tier. GPT-5.4 is expected to launch in May 2026. The strategic question is whether OpenAI will match Anthropic's safety-first positioning with an equivalent gated frontier tier or try to undercut it by shipping raw capability to the standard API. That decision will define competitive positioning for the rest of 2026.

Why are developers frustrated about Mythos Preview?

The 48-hour window between the April 15 Mythos Preview announcement and the April 16 Opus 4.7 launch generated significant developer backlash on Hacker News and X. The core complaint: Anthropic demonstrated a model with roughly 12-point advantages on autonomous coding and then kept it behind a 60-partner shortlist. Developers argue this is an anti-competitive moat dressed up as safety. Safety researchers counter that a 17.7-point jump on cyber-offense is exactly the RSP scenario that justifies internal-only deployment.

Will Mythos Preview ever ship to the public?

Anthropic has not committed to a public release date. Dario Amodei's April 15 statement framed Mythos Preview as "the first model we have ever decided is too capable to release as-is." A future public derivative with additional safeguards is plausible but not confirmed. More likely: the capability ceiling of the Cyber Verification Program shortlist rises over time while the public tier rises more slowly, leading to a structural gap that persists through late 2026.

What does this mean for open-source AI?

The Mythos Preview decision strengthens the policy argument against open-weight frontier releases. If the most capable closed models are being withheld on safety grounds while open-weight models train toward similar benchmarks with fewer safeguards, the Commerce Department, the UK AISI, and the EU AI Office are likely to cite this precedent in upcoming open-weight guidance. Expect open-source advocates to counter-argue that safety gating concentrates capability in a handful of US labs.