Claude Opus 4.7 shipped on April 16, 2026 as Anthropic's most capable coding model to date. Model ID claude-opus-4-7, pricing unchanged at $5 per 1M input tokens and $25 per 1M output tokens. CursorBench jumps from 58% to 70% (+12 points), XBOW visual-acuity goes from 54.5% to 98.5% (+44 points), vision accepts images up to 2,576 pixels on the long side (~3.75 megapixels, 3x previous), Claude Code default effort is now xhigh, and Anthropic introduced task budgets in public beta plus a new /ultrareview slash command (3 free for Pro and Max users). Its big sibling, Claude Mythos Preview, is in limited access. After 14 months running Claude 4.x daily, we're literally using Opus 4.7 to write this article — and the shift from 4.6 to 4.7 is unmistakable.

What just changed

Anthropic announced Claude Opus 4.7 this morning, April 16, 2026, as a direct upgrade from Opus 4.6. Same pricing. Same 1M context window in general availability. Same platforms (Claude.ai, API, AWS Bedrock, Google Vertex AI, Microsoft Foundry). What changed is the model itself — rigor, consistency, vision, design taste, file-system memory — plus three new developer-facing controls: the xhigh effort level, task budgets, and the /ultrareview command in Claude Code. If you use Claude Code every day like we do, this is the biggest single-version leap in productivity we've seen since the original Opus 4 launch.

Our immediate hands-on impressions

We built ThePlanetTools.ai, including the internal CMS you're probably hearing about in our content, entirely with Claude Code and Opus 4.x. After 14 months with Opus 4.x daily, 4.7 feels immediately different. We swapped the model string from claude-opus-4-6 to claude-opus-4-7 in our Claude Code config, kicked off the same kinds of tasks we've been running for a year, and here's what hit us in the first hour:

- Fewer infinite loops. Genspark called this out as their #1 differentiator and we can confirm — on agentic tasks that used to stall on Opus 4.6 (file-system memory writes, multi-step refactors), 4.7 pushes through. Loop resistance is real.

- Self-correction during planning. 4.7 caught two logic flaws in our plan before starting to code. PayPal's early-access team reported the same behavior. This is new.

- It pushes back. The Replit and Fin teams flagged this publicly and we saw it within the first session — 4.7 counter-argues instead of just agreeing. You propose a refactor, it tells you why your approach is brittle, suggests a better one, and is usually right. It genuinely feels like pairing with a stronger engineer.

- Instructions are taken literally. This is the one to watch. Prompts that used to work because older Claude models quietly softened edge cases now get followed to the letter. Two of our mega-prompts produced outputs we didn't want on the first run. Fixed by tightening the language. More on this below.

- More rigor on hard code. The Vercel team reported that 4.7 writes proofs on system code before starting work — a behavior they had never seen on a previous Claude. We didn't hit that specific behavior yet in our tests, but we did see 4.7 run self-verification passes on outputs before handing them back, which matches what Anthropic describes.

We're writing this article in Claude Code with Opus 4.7 set to xhigh effort (the new default). The draft-to-ship time is meaningfully faster than the same workflow would have been on 4.6 two weeks ago. Not because 4.7 is faster per token — it isn't, in fact it thinks a bit more — but because the first draft is tighter, the corrections are cleaner, and we stopped wasting loops on obviously-wrong paths.

Benchmarks — the numbers that matter

Anthropic published a wave of partner benchmarks with the release. Here's the comparison across the metrics we actually care about as builders:

| Benchmark / Source | Opus 4.6 | Opus 4.7 | Delta |
|---|---|---|---|
| CursorBench (Cursor) | 58% | 70% | +12 pts |
| XBOW visual-acuity | 54.5% | 98.5% | +44 pts |
| GitHub 93-task coding | baseline | +13% resolution | +13% |
| Rakuten SWE-Bench (prod) | baseline | 3x tasks solved | 3x |
| Finance Agent (General Finance) | 0.767 | 0.813 | +4.6 pts |
| Notion Agent (complex workflows) | baseline | +14% at fewer tokens | +14% |
| Notion tool-call errors | baseline | 1/3 of errors | -66% |
| Databricks OfficeQA Pro | baseline | -21% reasoning errors | -21% |
| Harvey BigLaw Bench (high effort) | — | 90.9% | SOTA |
| Factory Droids (task success) | baseline | +10 to +15% | +10-15% |
| Bolt (app-building sessions) | baseline | up to +10% | +10% |
| CodeRabbit (code-review recall) | baseline | +10% | +10% |

Two numbers in this table are the ones that will get quoted everywhere for the next six months:

- CursorBench 58% to 70%. This is the bench Cursor uses internally to grade autonomous coding performance. A 12-point jump in one version is enormous. It means 4.7 one-shots tasks that 4.6 failed on.

- XBOW 54.5% to 98.5%. That's not an improvement — that's a phase change. Vision workloads (screenshots, diagrams, chemical structures, dense PDFs) that were unreliable on 4.6 are now trivially solved.

Opus 4.7 is also state-of-the-art on GDPval-AA, a third-party evaluation of economically valuable work in finance, law, and adjacent domains.

What's new in the model itself

Advanced software engineering — rigor and consistency

Anthropic describes 4.7 as a notable improvement over 4.6 on hard software engineering, with outsized gains on the hardest tasks. The pattern we see, and that partners confirm, is fewer cut corners — 4.7 finishes. It doesn't hand back half-done implementations with TODOs. It verifies its own output. It recognizes its own logic mistakes during planning and fixes them. It pushes counter-arguments instead of rubber-stamping your request.

Vision — 2,576 pixels on the long side

4.7 accepts images up to 2,576 pixels on the longest side (approximately 3.75 megapixels), roughly 3x the previous Claude models. This is a model-level change, not an API flag — images just get processed at higher fidelity automatically. No beta header, no migration. The unlocks: computer-use agents reading dense screenshots, data extraction from complex diagrams, pixel-perfect reference work, chemical structure parsing (reported by Solve Intelligence). The XBOW 98.5% visual-acuity benchmark is the headline proof point.
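If you would rather control downscaling yourself than leave oversized images to the API, the long-side limit is easy to apply client-side. The sketch below is illustrative only: `fit_long_side` and `MAX_LONG_SIDE` are hypothetical names, and it assumes the only constraint that matters is the 2,576-pixel long side quoted above.

```python
MAX_LONG_SIDE = 2576  # long-side limit quoted in this article


def fit_long_side(width: int, height: int,
                  max_long_side: int = MAX_LONG_SIDE) -> tuple[int, int]:
    """Return (width, height) scaled down so the longer side fits the limit.

    Images already within the limit are returned unchanged, and the
    aspect ratio is preserved (rounding down to whole pixels).
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    long_side = max(width, height)
    if long_side <= max_long_side:
        return width, height
    # Integer arithmetic avoids floating-point rounding surprises.
    new_width = max(1, width * max_long_side // long_side)
    new_height = max(1, height * max_long_side // long_side)
    return new_width, new_height
```

For instance, a 5152 × 2912 screenshot would come back as 2576 × 1456, which is about 3.75 megapixels. The returned size can then be handed to whatever image library you already use for the actual resampling.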

File-system memory

4.7 is meaningfully better at using file-system-based memory. It retains important notes across long multi-session work and reuses them with less context bootstrapping required. For anyone running a perpetual memory system on top of Claude Code (primer files, decision logs, hindsight notes), this is a direct uplift. We noticed it in our own .claude/memory/ system on day one.

Design taste and creativity

Anthropic specifically calls 4.7 more "tasteful" and creative on professional tasks — interfaces, slides, documents. Vercel's v0 team put it bluntly: "the best model in the world for building dashboards and data-dense UIs — the design taste is genuinely surprising." We saw it on a ThePlanetTools.ai dashboard refactor: 4.7 picks color and spacing choices we'd actually ship.

Self-verification before delivery

4.7 invents its own verification steps before handing work back. In practice this looks like: you ask for a function, it implements, runs through edge cases mentally, catches a failure mode, patches, and then hands back. On 4.6 you often had to ask for that second pass explicitly.

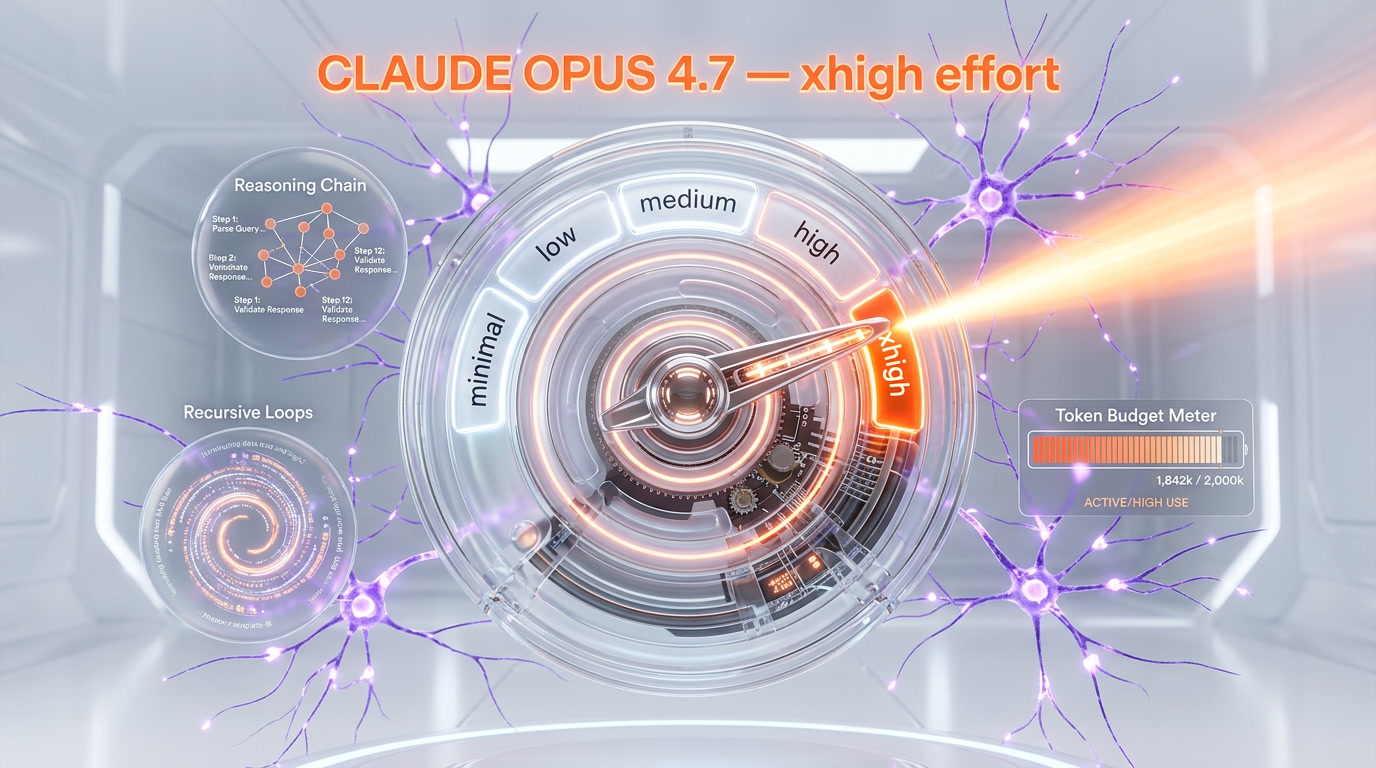

The new xhigh effort level

Opus 4.7 introduces a new effort tier, xhigh ("extra high"), sitting between high and max. The full hierarchy is now:

| Effort | Use case |
|---|---|
| low | Fast, cheap, simple tasks |
| medium | Standard coding and content |
| high | Hard coding, agentic work (old default) |
| xhigh | New. Hard coding with more rigor. Claude Code default on 4.7 |
| max | Ceiling for the hardest problems |

In Claude Code, the default effort level has been raised to xhigh for all plans (Pro, Max, Team, Enterprise). You don't need to configure anything. Anthropic recommends starting with high or xhigh for coding and agentic workloads.
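If your own tooling routes workloads to different effort levels, it helps to encode the hierarchy once so nothing requests a tier above what you intend to pay for. This is a minimal sketch, not Anthropic's API: `EFFORT_LEVELS`, `effort_rank`, and `clamp_effort` are hypothetical helpers, and how the chosen level is actually passed to the model is not shown here.

```python
# Order taken from the table above, lowest to highest.
EFFORT_LEVELS = ("low", "medium", "high", "xhigh", "max")


def effort_rank(level: str) -> int:
    """Position of an effort level in the hierarchy (0 = low)."""
    try:
        return EFFORT_LEVELS.index(level)
    except ValueError:
        raise ValueError(f"unknown effort level: {level!r}") from None


def clamp_effort(requested: str, ceiling: str) -> str:
    """Return `requested`, lowered to `ceiling` if it ranks higher."""
    return EFFORT_LEVELS[min(effort_rank(requested), effort_rank(ceiling))]
```

With this in place, `clamp_effort("xhigh", "medium")` yields `"medium"`, while `clamp_effort("low", "high")` leaves the request at `"low"`.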

Task budgets (public beta)

Task budgets are a new control mechanism launching in public beta alongside 4.7. They let developers guide how Claude spends tokens on long agentic executions — essentially a soft cap that tells the model when to stop reasoning and start executing. This matters because 4.7 thinks more at high effort levels, particularly on late-turn agentic work. Task budgets give you a lever to say "you have X tokens of reasoning room, then ship."

Combined with the effort parameter, you now have two independent knobs on Claude's thinking: quality tier (effort) and total spend (task budget). If you run long agents in production, test this.
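The request shape for task budgets isn't covered in this article, so the sketch below is deliberately not that feature. It is a client-side analog of the same idea, assuming your agent loop can read an output-token count after each turn: keep a running total and stop scheduling further reasoning turns once a soft limit is reached. `SoftTokenBudget` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class SoftTokenBudget:
    """Client-side running total of tokens spent by one agent task.

    Hypothetical helper: it only tracks numbers you feed it and does not
    talk to any API.
    """
    limit: int
    spent: int = 0

    def record(self, output_tokens: int) -> None:
        """Add the output tokens reported for one completed turn."""
        if output_tokens < 0:
            raise ValueError("output_tokens must be non-negative")
        self.spent += output_tokens

    @property
    def remaining(self) -> int:
        """Tokens left before the soft limit, never negative."""
        return max(0, self.limit - self.spent)

    @property
    def exhausted(self) -> bool:
        """True once spending has reached or passed the limit."""
        return self.spent >= self.limit
```

In an agent loop you would call `record(...)` after each turn and switch from "keep investigating" to "wrap up and ship" as soon as `exhausted` becomes true. Because the cap is soft, the turn that crosses the limit is still allowed to finish.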

Tokenizer update — watch your spend

Opus 4.7 uses an updated tokenizer. The tradeoff: the same input may map to more tokens, roughly 1.0x to 1.35x depending on content type. That means some workloads could see up to 35% more token consumption on input alone, before we even talk about the extra reasoning at xhigh effort.
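To put the multiplier in dollar terms, here is a rough sketch of the arithmetic. It assumes you know your current monthly input volume in 4.6 tokens and uses only the figures quoted in this article ($5 per 1M input tokens, 1.0x to 1.35x). `input_cost_range` is a hypothetical helper, and the result covers input tokens only, not output or reasoning.

```python
INPUT_PRICE_PER_MTOK = 5.00     # USD per 1M input tokens, as quoted above
TOKENIZER_RANGE = (1.0, 1.35)   # multiplier range quoted for the new tokenizer


def input_cost_range(old_input_tokens: int) -> tuple[float, float]:
    """Estimate (low, high) input cost in USD on 4.7.

    `old_input_tokens` is the volume measured with the 4.6 tokenizer.
    """
    low_mult, high_mult = TOKENIZER_RANGE
    low = old_input_tokens * low_mult / 1_000_000 * INPUT_PRICE_PER_MTOK
    high = old_input_tokens * high_mult / 1_000_000 * INPUT_PRICE_PER_MTOK
    return round(low, 2), round(high, 2)
```

A workload that sends 200M input tokens a month on 4.6 would land somewhere between $1,000 and $1,350 on 4.7 under these assumptions. Where you fall in that range depends on content type, which is why measuring real traffic comes first.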

Mitigations we're running this week:

- Measure first. Compare 4.6 and 4.7 token usage on a representative sample of real traffic for 48 hours before generalizing.

- Use the effort parameter. Drop to medium on workloads that don't need xhigh.

- Turn on task budgets. Cap reasoning on long agent loops.

- Prompt for concision. Explicit "be concise" instructions work better on 4.7 because it follows instructions literally.

Hex's team reported a useful insight: "Opus 4.7 at low effort is roughly equivalent to Opus 4.6 at medium effort." That's the other side of the token math — you can hit the same quality bar for less budget if you step the effort down.

Claude Code — what's new for developers

/ultrareview — the new slash command

The headline addition to Claude Code is /ultrareview, a dedicated review session that reads through changes and surfaces bugs and design issues that an attentive human reviewer would catch. Anthropic is giving every Claude Code Pro and Max user 3 free ultrareviews at launch. We're burning ours this week on ThePlanetTools.ai and a private Next.js 16 project — we'll report back in a follow-up.

Auto mode extended to Max users

Auto mode, the permissions flag where Claude makes decisions on your behalf instead of prompting you, is now available to Max-plan users (previously more restricted). Long autonomous sessions are the win here — lower risk than "skip all permissions," fewer interruptions than the default.

Default effort raised to xhigh

Across all plans, Claude Code's default effort with 4.7 is now xhigh. No configuration needed.

Partner early-access quotes

Anthropic shipped 4.7 with a wave of partner testimonials. The ones that matter for a stack like ours — dev tools, agents, SaaS:

Michael Truell, CEO of Cursor: "Claude Opus 4.7 is a very impressive coding model, particularly for its autonomy and more creative reasoning. On CursorBench, Opus 4.7 reaches 70% vs 58% for Opus 4.6."

Michele Catasta, President of Replit: "For Replit, Claude Opus 4.7 was an obvious upgrade. Same quality at lower cost. I personally love how it pushes back during technical discussions to help me make better decisions. It really feels like a better colleague."

Sarah Sachs, AI Lead at Notion: "+14% over Opus 4.6 on complex multi-step workflows, at fewer tokens and with a third of the tool-call errors. First model to pass our implicit-need tests. It continues execution through tool failures that used to block Opus."

Joe Haddad, Distinguished SWE at Vercel: "Solid upgrade with no regressions. Phenomenal on one-shot coding tasks. It even writes proofs on system code before starting — behavior we had never seen on a previous Claude."

Scott Wu, CEO of Devin (Cognition): "Claude Opus 4.7 pushes long-horizon autonomy to a new level in Devin. It works coherently for hours, pushes through hard problems instead of giving up, and unlocks a class of deep investigation work we couldn't run reliably before."

Kay Zhu, Co-founder and CTO of Genspark: "For Genspark's Super Agent, Claude Opus 4.7 nails the three prod differentiators that matter most: loop resistance, consistency, graceful error recovery. Loop resistance is the most critical."

Eric Simons, CEO of Bolt: "Measurably better than Opus 4.6 on Bolt's longer app-building work, up to 10% better in the best cases, without the regressions we've learned to expect from highly agentic models."

Hanlin Tang, CTO Neural Networks at Databricks: "On Databricks OfficeQA Pro, Opus 4.7 shows significantly stronger document reasoning, with 21% fewer errors than Opus 4.6 when working with sources. The best Claude for enterprise document analysis."

David Loker, VP AI at CodeRabbit: "For CodeRabbit's code-review workflows, Claude Opus 4.7 is the sharpest model we have tested. Recall improved by over 10%, surfacing some of the hardest-to-detect bugs. A bit faster than GPT-5.4 xhigh on our harness."

Migration Opus 4.6 to 4.7 — what to watch

4.7 is a drop-in upgrade from 4.6, but three changes deserve attention before you flip model strings in production:

1. New tokenizer (up to +35% tokens on some content)

Measure real traffic for 48 hours. Some content types will be near 1.0x, some closer to 1.35x. Budget accordingly.

2. More output tokens at high effort levels on hard problems

Opus 4.7 thinks more at high, xhigh, and max on hard agentic work, especially in late turns. Net effect per Anthropic is positive (better result at equivalent spend), but measure on your actual workload.

3. Literal instruction following — review your prompts

This is the change most likely to bite you. Prompts written for older models, especially long "mega-prompts" with optional sections, can produce unexpected results on 4.7 because it treats suggestions as requirements. Our action item: review any prompt longer than a page. Tighten the language. Make optional sections explicit.

Your levers: the effort parameter, the new task budgets (public beta), and explicit concision prompts.
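The 48-hour measurement can be as simple as logging input token counts for the same requests under both models and aggregating by content type. A minimal sketch, assuming you already collect such counts (for example from the usage figures your API responses report); `tokenizer_ratios` and the content-type labels are hypothetical, and the numbers in the usage note are made up for illustration.

```python
from collections import defaultdict


def tokenizer_ratios(samples):
    """Aggregate the 4.7-to-4.6 input token ratio per content type.

    `samples` is an iterable of (content_type, tokens_on_4_6, tokens_on_4_7)
    tuples, one per input counted under both models.
    """
    totals = defaultdict(lambda: [0, 0])
    for content_type, old_tokens, new_tokens in samples:
        totals[content_type][0] += old_tokens
        totals[content_type][1] += new_tokens
    # Skip content types with no 4.6 tokens to avoid dividing by zero.
    return {
        content_type: round(new / old, 3)
        for content_type, (old, new) in totals.items()
        if old > 0
    }
```

Feeding it `[("code", 1000, 1300), ("code", 1000, 1200), ("prose", 2000, 2100)]` gives `{"code": 1.25, "prose": 1.05}`. Ratios are computed on summed totals rather than averaged per request, so a few very short prompts don't skew the picture.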

Safety and alignment

4.7 shows a similar overall safety profile to 4.6. Honesty and resistance to prompt-injection attacks are up; there's a slight regression on willingness to give detailed harm-reduction advice on controlled substances. Anthropic's one-line verdict: "largely well-aligned and trustworthy, though not fully ideal in its behavior." The best-aligned model Anthropic has trained is still Claude Mythos Preview (limited access).

4.7 is the first post-Project Glasswing model to ship with the new cyber safeguards — automatic detection and blocking of requests indicating prohibited or high-risk cybersecurity use. During training, Anthropic experimented with methods to differentially reduce cyber capability relative to Mythos. Legitimate security professionals (pentest, red-team, vulnerability research) can apply to the new Cyber Verification Program to regain access to blocked capability classes.

The big sibling — Claude Mythos Preview

Alongside 4.7, Anthropic is still running the limited-access preview of Claude Mythos, a larger and more capable model that sits above Opus 4.7. Mythos is Anthropic's most capable and best-aligned model to date. Access is restricted and most builders won't touch it in 2026. We covered it in detail in our earlier piece: Claude Mythos and Project Glasswing — why it's not public and what it means for cybersecurity.

Who should upgrade right now

- Solo devs and founders. Game-changer. The loop resistance and literal-instruction behavior alone save hours per day. Swap to 4.7 today.

- Content teams using Claude Code / Opus for generation. Better quality at equal or lower cost (Hex's "low 4.7 ≈ medium 4.6" finding). Upgrade and re-benchmark effort levels.

- Agentic SaaS products. Loop resistance, graceful error recovery, and long-horizon autonomy are real. Migration is drop-in. Do it.

- Enterprise with document-heavy reasoning. Databricks OfficeQA Pro -21% errors, Harvey 90.9% SOTA, GDPval-AA SOTA. Upgrade.

- Design-heavy teams (dashboards, slides, interfaces). v0's quote is the tell. Upgrade.

- Teams with rigid mega-prompts. Upgrade, but budget two hours to review and tighten your top 5 prompts before going to prod.

Our verdict

Not subtle: this is the biggest single-version leap in Claude Code productivity we've seen since the original Opus 4 launch. The shift from 4.6 to 4.7 is unmistakable after two hours of real use, and every partner quote we've read from Cursor to Replit to Vercel lines up with what we saw in our own workflow — loop resistance, push-back, self-verification, better taste, literal instruction following.

The costs: watch your tokenizer math (up to +35%), review your mega-prompts (literal instruction following will bite you), and budget for a bit more reasoning spend at high effort levels. The levers (effort parameter, task budgets, concision prompts) give you the control you need.

We built ThePlanetTools.ai with Claude Code and 14 months of daily Opus 4.x use. 4.7 changes how we build. If you ship software for a living and you haven't flipped your model string today, you're giving up compounding productivity to whoever has.

Read more: our Claude Code pillar page, our Claude tool review, the Claude Code vs OpenAI Codex comparison, our 14-month ROI breakdown in Is Claude Code Max x20 Worth It — 14-Month Verdict, and the zero-to-first-project beginner guide.

Frequently asked questions

Is Opus 4.7 available now?

Yes. Claude Opus 4.7 was announced on April 16, 2026 and is in general availability today on Claude.ai, the Claude API, AWS Bedrock, Google Vertex AI, and Microsoft Foundry. The model ID is claude-opus-4-7.
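For API users, switching usually comes down to changing the model string in the request. The sketch below builds the keyword arguments for a Messages API call with the model ID given above; `build_request` is a hypothetical helper and the `max_tokens` default is an arbitrary placeholder. It makes no network call itself.

```python
def build_request(prompt: str,
                  model: str = "claude-opus-4-7",
                  max_tokens: int = 1024) -> dict:
    """Build keyword arguments for a Messages API call.

    Only the three basic fields are set: model, max_tokens, and a single
    user message.
    """
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    return {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
```

With the `anthropic` Python package installed and an API key configured, the result can be passed along as `anthropic.Anthropic().messages.create(**build_request("Summarize this diff"))`. Keeping the model ID in one default argument makes a later rollback to claude-opus-4-6 a one-line change.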

How much does Claude Opus 4.7 cost?

Pricing is $5 per 1M input tokens and $25 per 1M output tokens. That is unchanged from Opus 4.6. The 1M-token context window is in general availability at the standard rate, with up to 128k tokens of output on the synchronous API and up to 300k on the Batches API with the output-300k-2026-03-24 header.

Is there a price increase vs Opus 4.6?

No — per-token pricing is identical to 4.6. However, the updated tokenizer can map the same input to roughly 1.0x to 1.35x the token count depending on content type, and 4.7 produces more reasoning tokens at higher effort levels. Net effective cost on your workload can be higher. Measure 48 hours of real traffic before generalizing, and use the effort parameter plus task budgets to control spend.

Should I migrate my existing Opus 4.6 prompts?

Yes, review them. Opus 4.7 follows instructions more literally. Where older Claude models interpreted prompts with some liberty and quietly skipped certain parts, 4.7 takes the instructions at face value. Long "mega-prompts" with optional sections are the highest-risk category. Tighten the language and make optional sections explicit before shipping to production.

What is the xhigh effort level?

xhigh is a new effort tier introduced with Opus 4.7, sitting between high and max in the hierarchy low → medium → high → xhigh → max. It gives finer control over the reasoning-vs-latency tradeoff on hard problems. In Claude Code, xhigh is the new default effort level for all plans when using 4.7. Anthropic recommends high or xhigh for coding and agentic workloads.

What is /ultrareview?

/ultrareview is a new slash command in Claude Code that runs a dedicated review session over your changes, surfacing bugs and design issues that an attentive human reviewer would catch. At launch, Anthropic is giving every Claude Code Pro and Max user 3 free ultrareviews as a trial. It is tuned to catch issues that standard review passes miss.

Does Opus 4.7 have a 1M context window?

Yes. Opus 4.7 keeps the 1M-token context window in general availability, inherited from Opus 4.6. No beta header is required, and it is priced at the standard rate. Output tokens go up to 128k on the synchronous API and up to 300k on the Batches API with the output-300k-2026-03-24 header.

How does Opus 4.7 compare to GPT-5.4?

On CodeRabbit's code-review harness, Opus 4.7 is slightly faster than GPT-5.4 xhigh while delivering the highest recall they have measured. On CursorBench, Opus 4.7 reaches 70%. On GDPval-AA, Opus 4.7 is state-of-the-art. The short answer: for autonomous coding, long-horizon agents, and enterprise document reasoning, Opus 4.7 is currently Anthropic's answer to GPT-5.4 and wins on most coding and agentic benchmarks published with the release.

What is Claude Mythos?

Claude Mythos Preview is Anthropic's larger, more capable sibling model to Opus 4.7. It is in limited access, not generally available. Anthropic describes it as the best-aligned model they have trained. Opus 4.7 is the first post-Project Glasswing model to ship with cyber safeguards developed during Mythos training. We cover Mythos in detail in our separate article on Project Glasswing.

Is Opus 4.7 available on AWS, Vertex, and Foundry?

Yes. Claude Opus 4.7 is available on Claude.ai, the Claude API, AWS Bedrock, Google Vertex AI, and Microsoft Foundry from launch day, April 16, 2026. Same model ID (claude-opus-4-7) and same capability profile across surfaces.

Does the tokenizer change affect my existing bills?

Potentially yes. The same input text may map to roughly 1.0x to 1.35x the token count on 4.7 vs 4.6, depending on content type. Per-token pricing is unchanged, but effective bills can rise. Measure for 48 hours, then use the effort parameter, the new task budgets in public beta, and explicit concision prompts to control spend.

Can I use Auto mode in Claude Code on Opus 4.7?

Yes. Auto mode — the permissions setting where Claude makes decisions on your behalf — is now available to Claude Code Max users with Opus 4.7 (previously more restricted). It is designed for longer autonomous sessions with fewer interruptions and lower risk than fully skipping permissions.