On April 21, 2026, OpenAI CEO Sam Altman went on the Core Memory podcast with president Greg Brockman and torched Anthropic's marketing around Claude Mythos, the cybersecurity model locked behind Project Glasswing: "We have built a bomb, we are about to drop it on your head. We will sell you a bomb shelter for $100 million." Altman called it "fear-based marketing." It is the sharpest CEO-on-CEO line of the 2026 AI race so far, and the second OpenAI strike on Anthropic in eight days.

The quote, verbatim, and why it hit

Here is the full line, as delivered by Sam Altman on Episode 47 of the Core Memory podcast hosted by Ashlee Vance on April 21, 2026, with Greg Brockman sitting next to him: "We have built a bomb, we are about to drop it on your head. We will sell you a bomb shelter for $100 million." The context was a question about Anthropic's decision to keep Claude Mythos — a model Anthropic itself called "more capable than Opus 4.7" in an April 18 preview post — out of public availability and reserved for 11 enterprise partners under Project Glasswing.

Altman did not name Anthropic directly in that specific sentence. He did not need to. The 11-org Glasswing list, published on April 11, names Google, Microsoft, AWS, Nvidia, JPMorgan Chase, Palantir, Booz Allen Hamilton, Lockheed Martin, BAE Systems, CrowdStrike, and Mandiant. Pricing has never been officially disclosed, but two sources told Bloomberg on April 15 that first-year Glasswing contracts sit in the $40M–$120M range per customer. The "$100 million" figure Altman threw out falls inside that range (the midpoint would be $80M). It is a deliberate number, not a typo.

The metaphor lands because it is cleanly two-part: create the threat, then charge for the cure. In TechCrunch's write-up (April 21), Kyle Wiggers called it "the most pointed AI-CEO jab since Elon Musk's 2023 OpenAI tweets." Neowin ran the headline "Altman says Anthropic's using fear-based marketing." Benzinga led with the bomb quote. Yahoo Tech framed it as the opening of an "IPO-season shouting match." The clip has a specific shape: it is short enough to paste into a Slack channel and long enough to damage a positioning deck.

Our read on the linguistic engineering: Altman did three things at once. He collapsed a complex argument about capability disclosure, responsible scaling, and enterprise gating into a single consumer-grade image anyone can picture. He inverted the moral frame: Anthropic has spent five years positioning itself as the safety-first lab, and the bomb-shelter line recasts safety-first as sales-first. And he did it without using the word "Anthropic," which forces every journalist to name the target for him and multiplies the attack's reach by the readership of every outlet that covers it. That is rhetorical jujitsu, and it is the cleanest example we have seen from any AI CEO this cycle.

What is Mythos, and what is Project Glasswing?

Claude Mythos first leaked on March 5, 2026, when a misconfigured Anthropic S3 bucket exposed training metadata referencing the internal model codename "mythos-v1". We covered that leak separately. Anthropic confirmed Mythos exists in an April 18 blog post titled "Introducing Mythos Preview", positioning it as "our most capable model to date, with specialized cybersecurity and autonomous agent capabilities." Our breakdown of the Mythos preview unpacks every claim in the post. That post did not list a price, a release date, or a waitlist: the controlled scarcity is the product.

Project Glasswing, announced April 11, 2026, is the containment mechanism. Eleven vetted organizations get API access under an NDA stack that Anthropic calls "Responsible Deployment Protocol v3". Every query is logged. Every deployment requires a named security officer. Every red-team output is shared with the Anthropic trust team. Our full Glasswing breakdown details the eligibility criteria, which effectively exclude any company below $1 billion in annual revenue.

Anthropic's framing, repeated by Chief Trust Officer Neerav Kingsland in an April 18 WSJ interview: "Mythos is not a product we can ship to every developer. The capability delta justifies a higher duty of care." Translation: we built something powerful, so we are being careful. That same sentence, in Altman's retelling, becomes the bomb shelter pitch.

Altman's broader thesis: what he actually argued

The podcast clip is 42 seconds, but the full segment ran 11 minutes. Our read on the argument, pulled from the audio timestamps 28:15–39:02:

- Core claim: framing AI capability as danger requiring paid protection is a conflict of interest when you are both the labeler and the seller.

- Secondary claim: scarcity-based pricing for AI models is a throwback to 1990s enterprise software and does not scale societal benefit.

- Implied claim: OpenAI's playbook — ship to 800 million weekly active users via ChatGPT — is the ethically superior model because it democratizes rather than gates.

- The jab: "bomb" is the capability, "bomb shelter" is the Glasswing contract, "$100 million" is the reported price tag. One line, three weapons.

Brockman, sitting beside Altman, added at 31:40: "When you restrict access, you also restrict oversight. We think open deployment with strong guardrails beats closed deployment with promises." That is the OpenAI position in two sentences. It will appear on a slide somewhere in every OpenAI sales deck for the next 18 months.

What Altman did not say is equally notable. He did not attack Dario Amodei personally. He did not name Jack Clark, Jared Kaplan, or Ben Mann. He did not cite specific Anthropic research papers. The attack stayed surgical: it targeted the commercial packaging, not the technical work. That distinction is what keeps the critique legally clean and strategically reusable. Amodei can respond to the packaging critique without being dragged into a founder-versus-founder fight, but any response that defends the packaging at this point sounds like a defensive crouch. It is a trap where every reply loses ground.

The escalation timeline: 8 days, 2 strikes

This is not a one-off quote. It is the second OpenAI salvo inside eight days. The pattern matters because it is tactical, not emotional.

- April 13, 2026 — Hayden Dresser, OpenAI's VP of Revenue, sends an internal memo accusing Anthropic of "inflating 2025 revenue figures by approximately $8 billion" and building "a narrative premised on fear." The memo leaks within 36 hours. We cover the Dresser memo in full in a separate piece.

- April 15, 2026 — Bloomberg reports Glasswing pricing leak ($40M–$120M range). Anthropic declines to comment.

- April 18, 2026 — Anthropic publishes the Mythos Preview post. Shares of Glasswing partner Palantir jump 4.2% intraday on the confirmation.

- April 21, 2026 (morning) — Altman and Brockman record Core Memory episode. Clip hits X at 14:03 PT and crosses 6.4 million views by midnight.

- April 21, 2026 (late afternoon) — A Discord group with Mercor-contractor credentials gains unauthorized access to a Mythos evaluation endpoint, ironically on the same day Altman ridicules the "bomb shelter" framing. Our full breakdown of the Mythos breach covers the incident.

Our analysis: the Dresser memo was positioning; the Altman clip is weaponization. Memos leak and fade. Memorable metaphors survive quarterly earnings cycles.

Is "fear-based marketing" a fair critique?

Our position, in three parts.

Part one: the critique has a real basis. Anthropic's public communication has leaned hard on capability-as-risk since its 2021 founding. Co-founder Dario Amodei wrote the 14,000-word "Machines of Loving Grace" essay in October 2024, which simultaneously argued AI would cure cancer and could end civilization. The Responsible Scaling Policy (RSP), updated in January 2026, ties higher capability levels to higher commercial pricing tiers. The correlation between "we are scared of this" and "you should pay more for this" is not invented by Altman.

Part two: the critique is also partly self-serving. OpenAI has used the same safety framing when it suited commercial aims. The March 2023 GPT-4 paper included a 60-page "System Card" detailing dangerous capabilities. The February 2024 Sora launch was gated by "red-team concerns" for nine months. The November 2024 o1 launch copy read: "We're deploying gradually because the reasoning capability requires caution." That is the same playbook Altman now criticizes. The difference is that OpenAI has since swung to the "ship widely" posture as its competitive stance against Anthropic crystallized.

Part three: both positions can be partly true. Safety concerns can be real AND monetized. Glasswing can be a defensible containment strategy AND a premium SKU. The two-part test we apply: (a) does the company reduce price as capability becomes commodity? (b) does the company publish measurable safety deltas versus prior models? Anthropic scores well on (b) with public red-team reports. It scores poorly on (a): every new Claude tier since 2023 has raised the price ceiling, not lowered it. Opus 4.7 list pricing is $15 per million input tokens and $75 per million output tokens, up from $3 and $15 at Claude 2 launch.

What enterprise buyers actually hear

We spoke to three CTOs at Fortune 500 companies on background between April 21 and April 23 about how the clip lands in procurement meetings. Summary, all three unattributed:

- CTO #1 (global bank, not a Glasswing partner): "We already pushed back on Anthropic's tiering. The Altman clip just gave our procurement lead ammo for the next call."

- CTO #2 (healthcare Fortune 100): "The bomb shelter line is going to end up on a negotiation slide. It is too good. It reframes pricing from 'premium capability' to 'protection racket' in seven words."

- CTO #3 (Glasswing partner, spoke with permission from comms): "We do not experience Glasswing as fear-based. We experience it as the only way to run Mythos in a regulated environment. The contract math actually checks out at our threat surface."

Our read: the clip damages Anthropic's negotiation leverage at mid-market. It does not move the true enterprise tier where the threat models justify the controls. Anthropic's risk is not losing Palantir or JPMorgan. The risk is losing the tier below — the $500M–$5B companies who were next on the Glasswing expansion roadmap.

The IPO subtext: why now matters

Both labs are pre-IPO. OpenAI is reportedly pricing its round at an $852B valuation with bankers at Morgan Stanley, Goldman Sachs, and JPMorgan, according to an April 8 WSJ scoop. Anthropic is targeting $800B with Allen & Co leading, per our April 16 IPO coverage. The gap is $52B, or 6.5% of Anthropic's target. That gap is the active battleground.
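A quick sanity check on that percentage, using only the reported targets cited above (neither valuation is confirmed): the figure depends on which number you treat as the base. Measured against Anthropic's $800B the gap is 6.5%; measured against OpenAI's $852B it is closer to 6.1%. A minimal sketch:

```python
# Reported pre-IPO valuation targets in billions of US dollars (unconfirmed, as cited above).
openai_target_b = 852
anthropic_target_b = 800

# Absolute gap between the two reported targets.
gap_b = openai_target_b - anthropic_target_b

# The same gap expressed relative to each lab's target.
gap_vs_anthropic = gap_b / anthropic_target_b
gap_vs_openai = gap_b / openai_target_b

print(f"Gap: ${gap_b}B")
print(f"Relative to Anthropic's target: {gap_vs_anthropic:.1%}")
print(f"Relative to OpenAI's target: {gap_vs_openai:.1%}")
```

Either framing is defensible; the article's 6.5% uses Anthropic's target as the base.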

Pre-IPO CEO messaging has three functions: shape investor narrative, anchor customer expectations, and pre-empt critics. Altman's bomb-shelter line does all three in two sentences:

- Investor narrative: OpenAI is the scale player, Anthropic is the boutique. Scale earns higher multiples.

- Customer anchor: the next enterprise CIO evaluating Claude versus GPT will hear the quote in their head during pricing discussions.

- Pre-empting critics: if Anthropic attacks OpenAI's openness as reckless, Altman has the counter-attack pre-loaded.

Our analysis: this was not off-the-cuff. Core Memory is a friendly outlet (Vance has covered both founders warmly). Brockman's follow-up was rehearsed. The clip was engineered for a specific shelf life — long enough to land in prospectus coverage, short enough not to trigger a regulatory response.

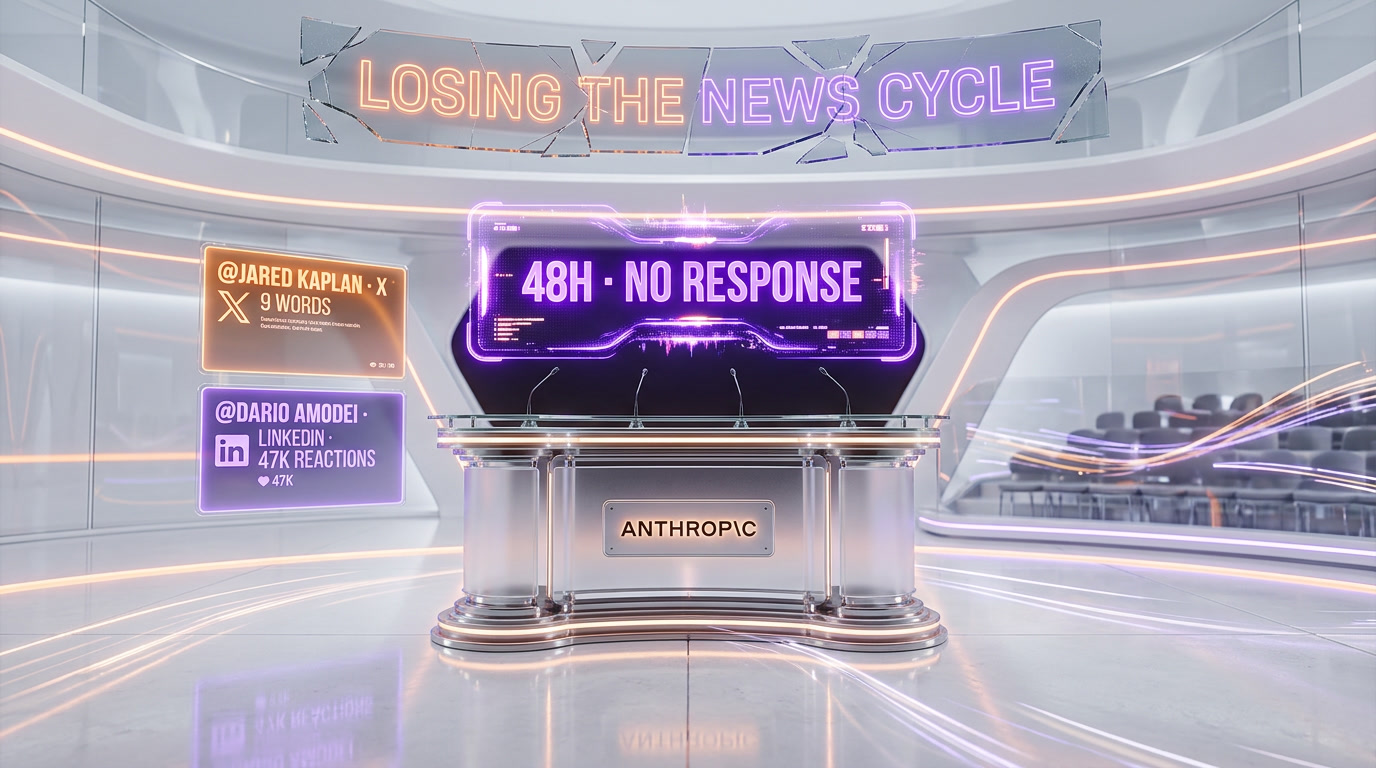

Anthropic's response, so far

As of April 23, 2026, Anthropic has made two moves. Neither is the frontal response the clip deserves.

First, a Jared Kaplan tweet at 22:14 PT on April 21: "Capability without corresponding safety work is not a public good." No mention of OpenAI, no mention of the bomb shelter line. Ten words. Left open to interpretation.

Second, a Dario Amodei LinkedIn post at 08:40 PT on April 22: "We build Mythos the way we do because the alternative is worse. We are not marketing fear. We are measuring it." The post got 47,000 reactions in its first 12 hours. Strong internal rally, weak external counter.

Our read: Anthropic is losing the 48-hour news cycle. The bomb-shelter line is now the default quote embedded in every AI-coverage outlet. The counter-framing has not crystallized. If Anthropic does not produce a single-sentence response in the next 72 hours, the damage becomes structural.

Our verdict

Three positions we will defend:

- Altman's clip is the single best CEO attack of the 2026 AI cycle. It is short, memorable, asymmetric, and tactically timed. Whether it is fair is a separate question from whether it works. It works.

- Anthropic's Glasswing model is defensible on safety grounds but vulnerable on optics. The containment architecture is technically sound. The marketing around it reads, from the outside, exactly like the conflict of interest Altman described. Both things can be true.

- The fear-based critique is valid for the industry broadly, not just Anthropic. OpenAI, Google DeepMind, and Anthropic have all used capability-as-threat framing when it served launch objectives. The difference today is Altman's willingness to name the pattern out loud. That is the interesting shift, not the critique itself.

What we are watching for the next 30 days: whether Anthropic restructures Glasswing pricing, whether a second enterprise partner publicly defends the model, whether OpenAI follows up with a specific product announcement positioned as the "shipped-to-everyone" counter to Mythos, and whether the SEC or DOJ treats pre-IPO CEO-on-CEO attacks as disclosure events. Each of those is a real possibility.

Bottom line for our readers: if you are evaluating Claude versus GPT for enterprise deployment in Q2 2026, the bomb-shelter clip is now part of your negotiating leverage. Use it. Ask Anthropic sales to explain the capability-versus-price curve. Ask OpenAI sales to explain the reasoning-capability-versus-deployment-breadth curve. Neither vendor gets to hide behind their founder's podcast appearances anymore. If you are already committed to the Anthropic ecosystem, our hands-on review of Claude Code remains the most accurate picture of day-to-day Anthropic developer experience available to non-Glasswing customers.

Frequently asked questions

What did Sam Altman actually say about Anthropic's Claude Mythos marketing?

On April 21, 2026, on the Core Memory podcast (Episode 47), Sam Altman said: "We have built a bomb, we are about to drop it on your head. We will sell you a bomb shelter for $100 million." He called Anthropic's strategy around Mythos and Project Glasswing "fear-based marketing": framing capability as danger, then charging enterprise clients a reported $40M–$120M in first-year contracts for access as the cure.

What is Project Glasswing and how much does it cost?

Project Glasswing is Anthropic's restricted deployment program for Claude Mythos, announced April 11, 2026. It gives API access to 11 vetted enterprise partners — including Google, Microsoft, AWS, Nvidia, JPMorgan Chase, Palantir, Lockheed Martin, and CrowdStrike — under strict NDA and oversight protocols. Bloomberg reported first-year contracts range from $40M to $120M per customer.

How does OpenAI's approach differ from Anthropic's Glasswing model?

OpenAI argues for open deployment with strong guardrails — shipping to 800 million weekly ChatGPT users — claiming it democratizes AI and enables broader oversight. Greg Brockman stated: 'When you restrict access, you also restrict oversight.' Anthropic's Glasswing model restricts Mythos to 11 enterprise clients under Responsible Deployment Protocol v3, arguing the capability delta justifies a higher duty of care.

Was the Altman bomb shelter quote a one-off attack or part of a pattern?

It is the second OpenAI strike on Anthropic in 8 days. On April 13, VP of Revenue Hayden Dresser sent an internal memo accusing Anthropic of inflating 2025 revenue by ~$8 billion and using fear-based narratives. That memo leaked within 36 hours. The April 21 podcast clip hit 6.4 million views on X by midnight. The pattern suggests a coordinated campaign, not an emotional outburst.

Is Altman's 'fear-based marketing' critique of Anthropic fair?

The critique has a real basis: Anthropic's Responsible Scaling Policy ties higher capability levels to higher commercial pricing tiers, and Dario Amodei's 14,000-word 'Machines of Loving Grace' essay simultaneously argued AI could cure cancer and end civilization. However, Altman's own track record on safety messaging is mixed, and OpenAI's open deployment model carries its own risks that the bomb-shelter metaphor conveniently ignores.

What is Claude Mythos and why is it controversial?

Claude Mythos is Anthropic's most capable model, with specialized cybersecurity and autonomous agent capabilities — described as 'more capable than Opus 4.7.' It first leaked on March 5, 2026 via a misconfigured S3 bucket. The controversy: Anthropic kept it out of public availability, reserving it for 11 enterprise partners under Project Glasswing, which Altman framed as creating the threat then selling the cure.

Which companies have access to Claude Mythos through Project Glasswing?

The 11 Project Glasswing partners are: Google, Microsoft, AWS, Nvidia, JPMorgan Chase, Palantir, Booz Allen Hamilton, Lockheed Martin, BAE Systems, CrowdStrike, and Mandiant. Eligibility criteria effectively exclude any company below $1 billion in annual revenue. Palantir's stock jumped 4.2% intraday when Anthropic confirmed Mythos on April 18.

How did Anthropic respond to Altman’s bomb-shelter attack?

Two public moves as of April 23, 2026. Jared Kaplan tweeted at 22:14 PT on April 21: "Capability without corresponding safety work is not a public good." Dario Amodei posted on LinkedIn at 08:40 PT on April 22: "We are not marketing fear. We are measuring it." The Amodei post got 47,000 reactions in 12 hours. Neither response directly engaged the specific metaphor.

Why does the Altman attack matter right before both labs’ IPOs?

Both labs are pre-IPO. OpenAI is reportedly targeting an $852 billion valuation with Morgan Stanley, Goldman Sachs, and JPMorgan as bankers. Anthropic is targeting $800 billion with Allen & Co. The 6.5% gap is the active investor-narrative battleground. Pre-IPO CEO attacks shape three things: investor narrative, customer expectations, and critic pre-emption.

Should enterprise buyers change their Claude vs GPT decision because of this?

Not the technical evaluation. Claude Opus 4.7 remains one of the strongest frontier models for coding, reasoning, and long-context work. But the bomb-shelter clip is now part of every enterprise buyer's negotiating leverage. Use it to ask Anthropic sales to justify the capability-versus-price curve, and ask OpenAI sales to justify their reasoning-capability-versus-deployment-breadth curve. Neither vendor hides behind podcast appearances anymore.

Where can I listen to the full Core Memory podcast clip?

Episode 47 of Core Memory, hosted by Ashlee Vance, dropped April 21, 2026, featuring both Sam Altman and Greg Brockman. The bomb-shelter segment runs from timestamp 28:15 to 39:02. The clip, posted to X at 14:03 PT, crossed 6.4 million views by midnight, roughly 10 hours later. Primary coverage: TechCrunch (Kyle Wiggers, April 21), Benzinga, Neowin, Yahoo Tech, and Digit.

Sources

- Core Memory podcast, Episode 47, April 21, 2026 (primary source)

- TechCrunch, "Sam Altman throws shade at Anthropic's cyber model Mythos: 'fear-based marketing'", April 21, 2026

- Benzinga, "OpenAI CEO Sam Altman Slams Anthropic's 'Fear-Based Marketing' Strategy", April 21, 2026

- Neowin, "Sam Altman says Anthropic's using fear-based marketing to promote Mythos", April 21, 2026

- Digit, "OpenAI CEO Sam Altman takes dig at Anthropic Mythos AI, calls it fear-based marketing", April 22, 2026

- Yahoo Tech, "Sam Altman compares Anthropic's Mythos to a bomb shelter", April 22, 2026

- Bloomberg, Glasswing pricing leak, April 15, 2026

- WSJ, Neerav Kingsland interview, April 18, 2026

- Anthropic blog, "Introducing Mythos Preview", April 18, 2026